Model Architecture

A model architecture is recommended because it provides a proven, standardized blueprint that ensures resiliency, reliability, scalability, and agility in production environments. By adhering to this structured approach, users can reduce risks and uncertainties associated with the design and implementation phases. This standardization fosters better consistency and collaboration among stakeholders and team members, enhancing the overall efficiency and effectiveness of both development and deployment processes.

Moreover, leveraging best practices and industry standards embedded within the model architecture enables users to achieve their goals more effectively. It serves as a baseline for future improvements and innovations, facilitating continuous enhancement and adaptation to evolving business requirements. In summary, a model architecture not only supports business continuity but also empowers users to maintain high operational standards while minimizing QA and support costs.

1 - Overview

The Model Architecture for Protegrity Appliance consists of various components aimed at ensuring data security, high availability, fault tolerance, and effective disaster recovery. It includes an Enterprise Security Administrator (ESA), Data Security Gateway (DSG), standard protectors, and cloud protectors.

Purpose

Protegrity’s Model Architecture must support business continuity, agility, and scalability in production. It should be prescriptive but adaptable to user-specific requirements for additional capacity, geo-proximity, and domain isolation by simply extending the deployment architecture in a cookie-cutter manner. Adhering to the principles of such an architecture increases the reliability of solutions while reducing QA and support costs.

Goals

Provide a proven, standardized blueprint for building a Protegrity Appliance.

Help to reduce risk and uncertainty in design and implementation.

Allow for better consistency and collaboration among stakeholders and team members.

Improve the overall efficiency and effectiveness of development and deployment.

Enable users to leverage best practices and industry standards to achieve their goals.

Serve as a baseline for future improvements and enhancements.

What is NOT the goal

It is NOT a complete universal architecture for all user deployments.

Example: A user does not currently have the resources or infrastructure available to achieve the model architecture.

Example: A user cannot implement the changes needed in time for the next change window.

It is NOT a mandatory prerequisite for receiving support from Protegrity experts, which is not limited to Protegrity Support.

When an architecture does not meet a modeled architecture, what to do?

Audience

This document is intended for:

Solution Architects, Solution Engineers, Technical Account Managers, Customer Success Managers, Support Engineers, and Product Managers.

Partners (with corresponding technical roles above).

Scope

The scope of this document includes:

System Requirements: References to respective sections in documentation containing the detailed specifications of hardware and software prerequisites for deployment.

Installation and Upgrade Procedures: References to respective sections in documentation containing the step-by-step instructions for installing the system and performing upgrades.

Configuration Guidelines: Best practices and recommendations for configuring the system to meet operational needs.

Best Practices for Maintaining and Operating the System: Guidelines on routine maintenance tasks and optimal operation strategies.

Disaster Recovery Planning: Strategies and plans to recover and restore functionality in the event of a disaster. This includes failover and failback from Primary site to DR site and vice-versa.

Fault Tolerance Strategies: Methods and techniques to ensure continuous operation and mitigate system failure risks.

Security Measures: Security protocols and measures to protect the system from threats and vulnerabilities.

Key Concepts

The following key concepts are important to understand when reading the Model Architecture documentation.

GTM: Global Traffic Manager (GTM) is made available, configured, and controlled by the user. GTM is at a minimum required to achieve High Availability (HA) and Disaster Recovery (DR).

LTM: Local Traffic Manager (LTM) is made available, configured, and controlled by the user. LTM is required to achieve redundancy of ESAs in case of failover of primary ESA.

Replication Job: A manual replication job is required between the Primary ESA and all the Secondary ESAs.

Recovery Plan: A recovery plan is created and owned by the user.

For example, in the event of a failover (where the Primary ESA is down and a Secondary ESA is up), the load balancer points to the Secondary ESA. It is best practice to freeze policy changes during this period so that the role of the Secondary ESA does not need to change. To recover from this scenario, resolve the reason the Primary ESA is down and let the load balancer switch back to the Primary ESA.

Keystore: It is made available, configured, and controlled by the user. The Keystore is required when regulatory standards such as PCI DSS, HIPAA, or FIPS must be enforced.

Port and Connection: All ports and connection requirements must be met to ensure seamless operation and communication across system components.

2 - Model Architecture


2.1 - Deployment with Default Audit Logging Flow to ESA

The architecture ensures comprehensive management of network resiliency through correctly configured traffic managers (GTM, LTM-1, and LTM-2) across both Primary and DR sites. Communication flows are meticulously routed to ensure high availability and redundancy.

The default logging flow from the protectors to the ESA is shown in the image below.

Legends

| Component | Active Flow | Failover Flow |
|---|---|---|
| Policy download for v9.1.0.0 Protector | ______ | - - - - - - |
| Package download for v10.0.0 Standard Protector | ______ | - - - - - - |
| Forwarding of Audit Events to ESA | ______ | - - - - - - |

2.2 - Deployment with Audit Logging Flow to External SIEM

The architecture also supports forwarding logs from protectors to the External SIEM.

The logging flow from protectors to the ESA and the External SIEM is shown in the image below.

Legends

| Component | Active Flow | Failover Flow |
|---|---|---|
| Policy download for v9.1.0.0 Protector | ______ | - - - - - - |
| Package download for v10.0.0 Standard Protector | ______ | - - - - - - |
| Forwarding of Audit Events to External SIEM via ESA | ______ | - - - - - - |

2.3 - Network Architecture Overview

Table 1. Sites and Components

| Sites | Components | Description |
|---|---|---|
| Primary Site | ESA | ESA P1, ESA S1, ESA S2 |
| Primary Site | LTM | LTM-1: Manages resiliency within the Primary Site |
| DR Site | ESA | ESA S3, ESA S4, ESA S5 |
| DR Site | LTM | LTM-2: Manages resiliency within the DR Site |
| GTM | GTM | GTM: Manages resiliency between the Primary and DR Sites |

Table 2. ESA Compatibility

| ESA Version | Supported Protectors |
|---|---|
| 10.x.x | - v9.1.0.0 Protectors (Backward Compatibility Mode)<br>- v10.0.0 Standard Protectors<br>- DSG 3.3.0.0 (Backward Compatibility Mode) |

Communication Flows

The table below describes the communication flows depicted in the diagrams in Deployment with Default Audit Logging Flow to ESA and Deployment with Audit Logging Flow to External SIEM.

Table 3. Communication Flows

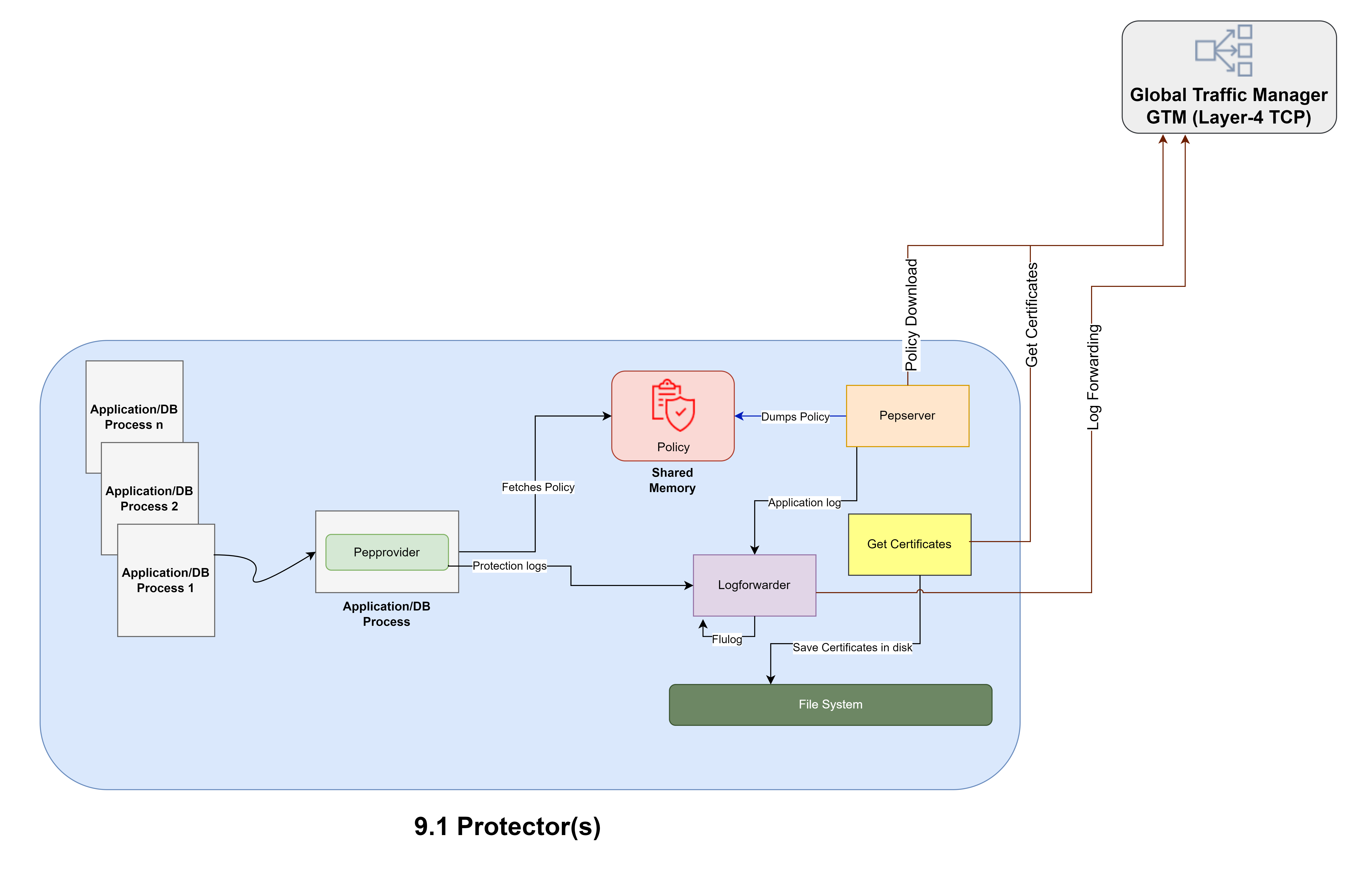

| Flow | Request Initiator | Destination | Port | Protocol | Flow Sequence | LTM Configuration |

Policy Download for v9.1.0.0

Protector | Pepserver in the Protector node | Service Dispatcher in ESA | 8443 | TLS | - Through GTM.

- Through LTM-1 for active flow and LTM-2 for failover

flow to Service Dispatcher in ESA.

| Primary Active Flow: Active connection to ESA P1 and

standby connection to other ESAs Protector 9.1 -> GTM

->LTM-1->ESA P1 DR Flow: Active connection to ESA S3

and standby connections to other ESAs Protector 9.1->

GTM ->LTM-2->ESA S3 |

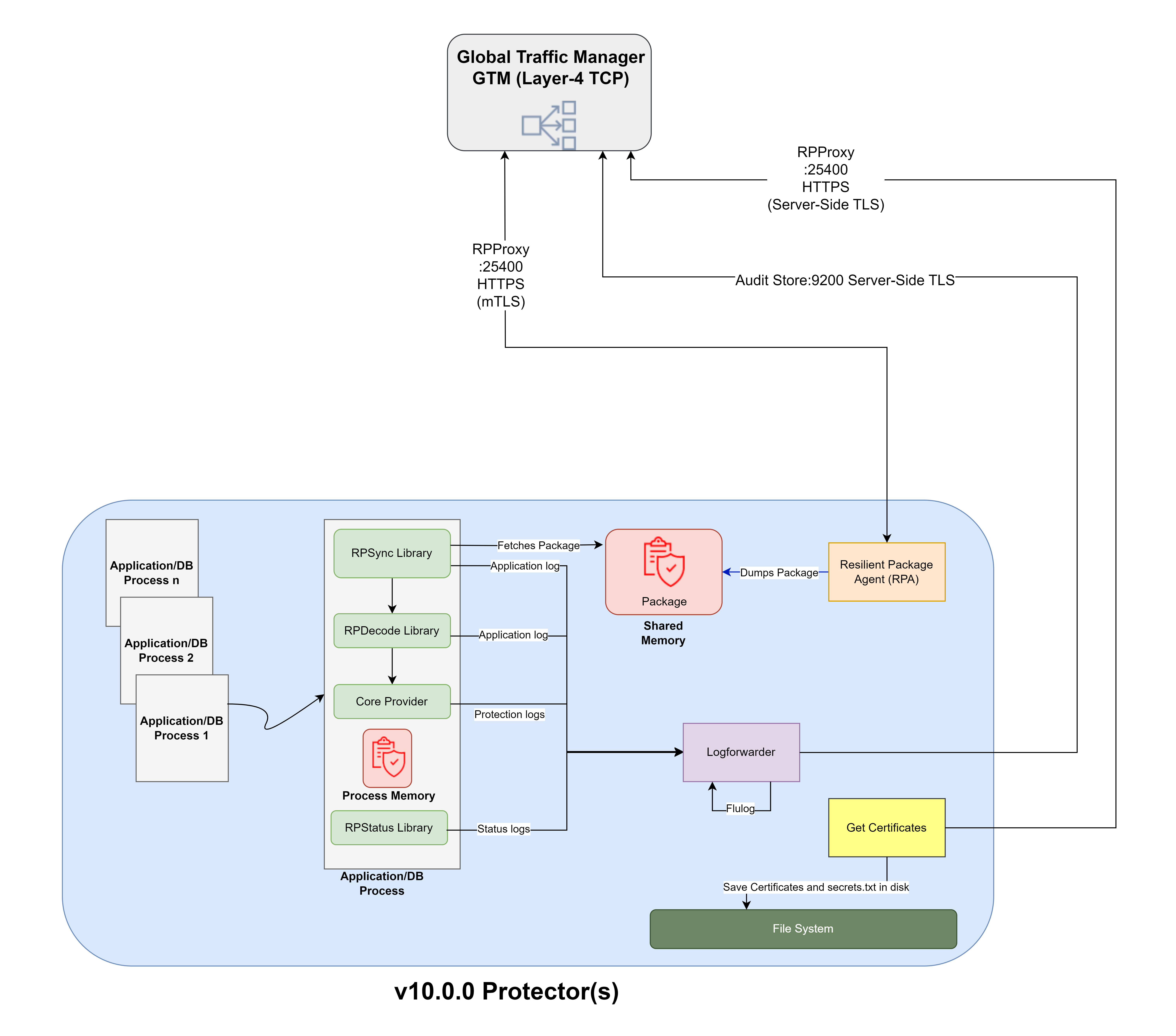

Package Download for v10.0.0 Standard

Protector | RPAgent in the Protector node | RPP in ESA | 25400 | TLS | - Through GTM.

- Through LTM-1 for active flow and LTM-2 for failover

flow to RPP in ESA

| Primary Active Flow: Active connection to ESA P1 and

standby connection to other ESAs Protector 10.0.0->GTM

->LTM-1->ESA P1 DR Flow: Active connection to ESA S3

and standby connection other ESAs Protector 10.0.0->GTM

->LTM-2->ESA S3 |

Forwarding of Audit Events to

ESA | Log Forwarder in the Protector node | Insight in ESA | 9200 | TLS | - Through GTM.

- Through LTM-1 for active flow and LTM-2 for failover

flow to Insight in ESA.

| Primary Active Flow: Routed to all ESAs in the Primary

Site Protector 9.1.0.0/10.0.0->GTM ->LTM-1->ESA P1,

S1,S2 DR Flow: Routed to all ESAs in the DR

Site Protector 9.1.0.0/10.0.0->GTM ->LTM-2->ESA S3,

S4,S5 |

Forwarding of Audit Events to External SIEM via

ESA | Log Forwarder in the Protector node | TD-Agent in ESA | 24224/ 24284 | Non-TLS/TLS | - Through GTM.

- Through LTM-1 for active flow and LTM-2 for failover

flow to Insight in ESA.

| Primary Active Flow: Routed to all ESAs in the Primary

Site Protector 9.1.0.0/10.0.0->GTM ->LTM-1->ESA P1,

S1,S2-> External SIEM DR Flow: Routed to all ESAs in

the DR Site Protector 9.1.0.0/10.0.0->GTM ->LTM-2->ESA

S3, S4,S5 -> External SIEM |

The table below summarizes the key measurements for the recommended model architecture across various dimensions.

Table 4. Key measurements for the recommended model architecture across various dimensions

| Measurement | Policy | Insight | Criteria summary |
|---|---|---|---|
| Extensibility | √ | √ | The current architecture allows easy addition of new features, capabilities, or functionalities without requiring significant changes to the existing architecture. |
| Vertical Scalability | √ | √ | The current architecture allows a node to expand its capacity by adding resources such as processing power, memory, or storage. |
| Horizontal Scalability | X | √ | The current architecture can distribute the load among multiple machines to improve the system's reliability and performance through static consistent routing. For Policy, however, it is always recommended to perform authoring and modification only from the Primary ESA. Hence, Policy does not support horizontal scalability. |
| High Availability | X | √ | For Policy, HA is not supported because policy changes are not replicated in real time from the Primary ESA to the other ESAs; replication depends on the TAC replication job. For Insight, audit logs are replicated to all the ESAs in a round-robin fashion, and replicas handled by OpenSearch are available in each of the ESAs. |
| Disaster Recovery | √ | √ | The architecture meets the necessary criteria for disaster recovery, but it is important that an appropriate DR plan is prepared and tested by the user. The solution relies on the external SIEM for complete log retention. |
| Federation | √ | √ | The current architecture can manage policy (monitor nodes), analyse events, and access logs to monitor performance and troubleshoot potential issues at the enterprise level (single pane of glass). This criterion is met due to the use of an external SIEM. |

These measurements underscore the importance and effectiveness of adhering to a well-defined model architecture, which ensures resiliency, fault tolerance, scalability, security, maintainability, and adaptability to change.

2.4 - Enterprise Security Administrator (ESA)

ESA handles the management of policies, keys, monitoring, auditing, and reporting of protected systems in the enterprise. ESA appliances have Insight and the td-agent service installed and are capable of hosting Docker containers.

The detailed Architecture diagram for ESA v10.0.1 is shown below.

2.4.1 - Infrastructure Requirements

2.4.2 - Installing and Configuring ESA

The ESA can be installed on-premise or a cloud platform such as AWS, GCP, or Azure. When upgrading from a previous version, the ESA installer is available as a patch.

Assumptions

This section assumes that there is no prior installation of any Protegrity product and that the installation is performed from scratch.

GTM and LTM are provisioned and installed.

For more information about the prescribed configurations, refer Recommended Traffic Manager.

This section explains the installation and configuration of ESAs as per the architecture diagram in Deployment with Default Audit Logging Flow to ESA.

For more information about installing ESA on on-premise or cloud platforms, refer Installation.

Prerequisites

Before proceeding with the installation, ensure the following prerequisites are met.

Sites: Ensure that two sites are available — one designated for the Primary Site and another for the Disaster Recovery (DR) Site.

Network Connectivity: Ensure there is reliable network connectivity between the Primary and DR sites.

Installing and Configuring ESA

Install ESA (ESA P1) in the Primary Site.

For more information about detailed installation instructions for on-premise and cloud installation, refer Installing ESA.

Initialize PIM in ESA P1.

For more information about initializing PIM in ESA, refer Initializing the Policy Management.

Initialize Analytics in the Insight in ESA P1.

For more information about initializing Analytics, refer Creating the Audit Store Cluster on the ESA.

Upload and apply Custom Certificates. Apply custom certificates for the following components:

- Management Server/Client

- Consul Server

- Audit Store Server/Client

- Audit Store REST Client

- Insight Analytics Client

- PLUG Client

For more information about recommendations related to certificates for various components, refer Certificate Requirements.

For more information about steps to upload certificates in ESA, refer Uploading Certificates.

For more information about steps to apply certificates in ESA, refer Changing Certificates.

Define policies for Data Security. On ESA P1, define the necessary data elements, policies and configure external member source.

Configure Proxy Authentication and External LDAP. On ESA P1, configure proxy authentication for configuration with External LDAP for managing ESA Administration. Refer below sections:

Configuring ESA with External Keystore.

For more information about setting ESA to External Keystore, refer Section Switching HSM Modules from HSM Integration Guide for ESA.

Configure Rollover Index Insight Scheduler Parameters. Set the rollover index scheduler parameters according to specific requirements.

For more information about recommended configurations, refer Index Rollover.

Configure Information Lifecycle Management (ILM) Export Insight Scheduler Parameters. Adjust the ILM export scheduler settings based on the requirements.

For more information about recommended configurations for ILM Export, refer ILM Export.

Configure ILM Multi Delete Insight Scheduler Parameters. Set the ILM Multi Delete insight scheduler parameters according to specific requirements.

For more information about recommended configurations for ILM Multi Delete, refer [ILM Delete](/docs/model_arch/model_arch/esa/insight/#ilm-delete).

- Configure Alerts. Set up the required alerts to monitor system health and events.

For more information about recommendations related to configuration of alerts, refer [Alerting](/docs/model_arch/model_arch/esa/insight/#alerting).

Install additional ESAs.

In the Primary Site, install two additional ESAs: ESA S1 and ESA S2.

In the DR Site, install three ESAs: ESA S3, ESA S4, and ESA S5.

Create Trusted Appliances Cluster (TAC) between all ESAs in Primary and DR site. Create a TAC between ESAs P1, S1,S2 in Primary and ESAs S3,S4,S5 in DR Site.

For more information about creating TAC, refer [Creating a TAC using the Web UI](/docs/aog/trusted_appliances_cluster/aog_creating_tac_ui/).

- Form an Insight Cluster: Join ESA S1 and ESA S2 to ESA P1 to form a robust and redundant Insight Cluster. Form Insight cluster in DR site between ESA S3,S4 and S5.

For more information about creating Insight cluster, refer [Creating an Audit Store cluster](/docs/installation/upg_creating_audit_store_cluster/).

Create Replication Tasks. Establish a replication task on ESA P1 to replicate all ESA data—excluding SSH settings—to ESA S1, S2, S3, S4, and S5.

For recommendations, refer point 1 ESA Primary to Secondary Replication Job in the Scheduler Tasks.

For more information about configuring scheduled task for cluster export, refer Scheduling Configuration Export to Cluster Tasks.

Enable ILM Multi Export Scheduler task. By default, ILM Multi Export Scheduled task is disabled. So, it is necessary to enable this task and configure as per requirements.

For more information about ILM Multi Export, refer Exporting logs.

Create Scheduled Tasks for copying ILM Exported Files from Primary Site to DR Site. Create a scheduled task on each ESA in the Primary Site, that is, ESA P1, ESA S1, and ESA S2, to copy its ILM exported files to the ESAs in the DR Site, that is, ESA S3, ESA S4, and ESA S5.

For recommendations, refer point 2 ESA Exported Indexes Move in Scheduler Tasks.

For more information, refer Creating a scheduled task.

Create Scheduled task for taking backup of ESA P1 to a file.

For recommendations, refer point 3 Back up Primary ESA data to file in Scheduler Tasks.

Keep a detailed log and documentation of each step performed for future reference and troubleshooting.

Meticulously following these steps helps establish a resilient and secure ESA infrastructure that aligns with the specified model architecture diagram.

2.4.3 - Upgrading ESA

This section describes:

2.4.4 - External Keystore Configuration

To enhance security, Protegrity products can be integrated with external Key Stores. For more information about configuring ESA with an External Key Store, refer section Configuring ESA Appliance or DSG Appliance to Communicate with Safenet HSM in the HSM Integration Guide on the My.Protegrity portal.

2.4.5 - Scheduler Tasks

To maintain the resiliency, efficiency, and reliability of the ESA, it is essential to set up scheduled tasks.

The recommended scheduled tasks to configure on the ESA are provided here.

Task Summary and Description

1. ESA Primary to Secondary Replication Job

Description: This task must be configured on the Primary ESA P1. It replicates data from the Primary ESA P1 to all the Secondary ESAs, that is, ESA S1, ESA S2, ESA S3, ESA S4, and ESA S5.

Frequency: Recommended to run daily at midnight. In addition, this task must be executed immediately after a change in policy or rulesets, or after changes to any of the keys under the Key Management menu in the ESA Web UI.

Important: Ensure only the following items are selected as part of this Cluster Export task.

Appliance OS Configuration: Export OS configuration

- Web Settings

- Firewall Settings

- Appliance Authentication Settings

- Appliance JWT Configuration

- Appliance AZURE AD Configuration (If Azure AD is being used)

- Time-zone and NTP settings

- OS Services Status

- Appliance FIM Policies and Settings

- User custom list of files (If custom files are present)

Directory Server And Settings: Export directory services and related settings

Export Consul Configuration and Data

- Import Consul Certificates and keys

Cloud Utility AWS: Cloud Utility AWS CloudWatch configuration files

- Import Cloud Utility AWS CloudWatch configuration files

Backup Policy-Management for Trusted Appliances Cluster: Backup All Policy-Management Configs, Keys, Certs and Data for Trusted Appliance Cluster

- Import All Policy-Management Configs and Data for Trusted Appliance Cluster

Policy Manager Web UI Settings: Export policy manager web ui settings

- Import policy manager web ui settings

Export Gateway Configuration Files: Gateway Config Files Export

- Gateway Config Files Import

Do not select the below options as part of this cluster export task

- SSH settings

- Management and WebServices Certificates

- Certificates

- Import All Policy-Management Configs, Keys, Certs, Data but without Key Store files for Trusted Appliance Cluster

Important: Scheduled tasks are not replicated as part of cluster export.

2. ESA Exported Indexes Move

Description: This task must be configured on all the ESAs in the Primary Site, that is, ESA P1, ESA S1, and ESA S2. It moves the ILM exported index files from the respective ESA to ESA S5 in the DR Site.

Frequency: Recommended to run weekly.

3. Back up Primary ESA data to file.

Description: This task must be configured in primary ESA P1. It backs up data from Primary ESA to a file and moves it to a remote machine.

Frequency: Recommended to run weekly.

Important: Ensure only the following items are selected as part of this File Export task.

Appliance OS Configuration

Directory Server And Settings

Export Consul Configuration and Data

Cloud Utility AWS (if AWS Cloud Utility is configured and being used)

Backup Policy-Management

Policy Manager Web UI Settings

Export Gateway Configuration Files

Do not select the below option as part of this file export task

- Backup Policy-Management for Trusted Appliances Cluster without Key Store.

Important: Scheduled tasks are not backed up as part of file backup.

2.4.6 - Backup and Restore

Effective backup and restore procedures are crucial for ensuring data integrity and system reliability.

Below are the key backup and restore strategies to implement in the ESA environment:

Full OS

Description: Backup of the complete operating system including all configurations, applications, and user data in ESA. This is applicable only for on-prem environment.

Frequency: Recommended weekly or before and after significant changes such as upgrade.

Restore Steps: Boot into a System Restore Mode to restore to last backed up state.

For more information about performing a full OS backup and restore, refer Working with OS Full Backup and Restore.

Cloud

Description: Create snapshots of ESA instances for disaster recovery. This is applicable only for cloud environment.

Frequency: Recommended weekly or before and after significant changes such as upgrade or changes to policy in ESA.

Restore Steps: Restore the snapshot by following the cloud provider-specific restore procedure.

File

Description: Ensure that ESA data is regularly backed up and stored securely. Automating the process using scheduled task in ESA will help maintain consistency and reduce manual intervention. The restore steps allow easy recovery of data, ensuring minimal disruption in case of failure.

Frequency: Recommended weekly or before and after significant changes such as upgrade or changes to policy in ESA or in case of changes made to any of the keys under Key Management menu in ESA Web UI.

Tools: Configure a scheduled task in ESA that uses tools such as rsync or scp to copy exported backup files from the ESA to an external machine. Ensure that each backup file name includes a timestamp. Refer point 3 in Scheduler Tasks for achieving this.

Restore Steps: Upload the backup file that matches the required timestamp to the ESA and initiate the import. For more information about steps to import the backed up file, refer Importing Data/Configurations from a File.

Important: Scheduled tasks are not backed up as part of file backup.
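
The timestamped copy described under Tools could be sketched as follows. This is a minimal illustration, not a Protegrity-provided script: the function names, the source path, the remote account, and the remote directory are all hypothetical, and it assumes `scp` is available on the machine that runs the scheduled task.

```python
import subprocess
from datetime import datetime, timezone
from pathlib import Path


def timestamped_name(source: Path, now: datetime) -> str:
    """Insert a UTC timestamp between the file stem and its extension."""
    stamp = now.strftime("%Y%m%dT%H%M%SZ")
    return f"{source.stem}_{stamp}{source.suffix}"


def build_scp_command(source: Path, remote: str, remote_dir: str,
                      now: datetime) -> list:
    """Build the scp argument list without executing it."""
    target_dir = remote_dir.rstrip("/")
    target = f"{remote}:{target_dir}/{timestamped_name(source, now)}"
    # -p preserves the modification time and mode of the source file.
    return ["scp", "-p", str(source), target]


def copy_backup(source: Path, remote: str, remote_dir: str) -> None:
    """Copy one exported backup file to the remote machine."""
    now = datetime.now(timezone.utc)
    # check=True raises CalledProcessError if scp exits with an error.
    subprocess.run(build_scp_command(source, remote, remote_dir, now),
                   check=True)
```

Keeping the command construction separate from its execution makes the naming scheme easy to verify before the task is scheduled, for example by printing the result of `build_scp_command` for a sample file.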

Custom Files

The following custom files should be backed up and included as part of customer.custom to ensure they are replicated to all ESAs in the cluster during replication jobs:

Any custom files for td-agent.

Any custom files related to External HSM configurations, shared library files.

Any changes to rsyslog.

For more information about adding the required custom files as mentioned above, refer Working with the custom files.

2.4.7 - Insight

Insight Analytics and Audit (Insight) leverages OpenSearch and OpenSearch Dashboards to perform analytics on audit events and log messages aggregated in the Audit Store. Both are distributed as Docker containers that can be hosted on ESAs.

ILM Export

This section outlines the ILM export configuration for various log and metric indices. The objective is to manage the lifecycle of these indices efficiently, ensuring data is archived or deleted as required.

| Index | ILM Export Configuration |
|---|---|
| Audit Log Index | Maximum size: 50 GB<br>Maximum doc count: 50 million<br>Maximum index age: 30 days |
| Protector Status Logs Index | Maximum size: 150 GB<br>Maximum doc count: 1 billion<br>Maximum index age: 365 days |
| Troubleshooting Logs Index | Maximum size: 150 GB<br>Maximum doc count: 1 billion<br>Maximum index age: 365 days |
| Policy Logs Index | Maximum size: 150 GB<br>Maximum doc count: 1 billion<br>Maximum index age: 365 days |
| Miscellaneous Index | Maximum size: 200 MB<br>Maximum doc count: 3.5 million<br>Maximum index age: 7 days |
| DSG Transaction Metrics Index | Maximum size: 1 GB<br>Maximum doc count: 10 million<br>Maximum index age: 1 day |
| DSG Error Metrics Index | Maximum size: 1 GB<br>Maximum doc count: 3.5 million<br>Maximum index age: 1 day |
| DSG Usage Metrics Index | Maximum size: 1 GB<br>Maximum doc count: 3.5 million<br>Maximum index age: 1 day |

ILM Export Configuration Considerations

Maximum size: Defines the maximum size an index can reach before getting exported.

Maximum doc count: Defines the maximum doc count an index can reach before getting exported.

Maximum index age: Defines the maximum age an index can reach before getting exported.

Conditions: ILM Export occurs when any one of the above limits is reached. The index entries are then removed from the index and archived to a file.
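
The export condition can be illustrated with a minimal Python sketch that checks an index against the Audit Log Index limits from the table. It is not how ESA implements ILM. The names `IlmLimits`, `limits_reached`, and `should_export` are hypothetical, and two details are assumptions: 1 GB is treated as 1024^3 bytes, and a limit counts as reached when the value is equal to or greater than it.

```python
from dataclasses import dataclass

GB = 1024 ** 3  # assumption: binary gigabytes


@dataclass(frozen=True)
class IlmLimits:
    """Export limits for a single index."""
    max_size_bytes: int
    max_doc_count: int
    max_age_days: int


# Audit Log Index values from the ILM Export table.
AUDIT_LOG_LIMITS = IlmLimits(max_size_bytes=50 * GB,
                             max_doc_count=50_000_000,
                             max_age_days=30)


def limits_reached(size_bytes, doc_count, age_days, limits):
    """Return the name of every limit the index has reached."""
    reached = []
    if size_bytes >= limits.max_size_bytes:
        reached.append("size")
    if doc_count >= limits.max_doc_count:
        reached.append("doc_count")
    if age_days >= limits.max_age_days:
        reached.append("age")
    return reached


def should_export(size_bytes, doc_count, age_days, limits):
    """An index qualifies for export as soon as any one limit is reached."""
    return bool(limits_reached(size_bytes, doc_count, age_days, limits))
```

For example, an Audit Log index that holds 10 GB and a few thousand documents still qualifies for export once it is 30 days old, because the age limit alone is sufficient.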

For more information about configuring ILM Export, refer ILM Multi Export.

ILM Delete

This section outlines the ILM delete configuration for various log and metric indices. The objective is to manage the lifecycle of these indices efficiently, ensuring data is archived or deleted as required.

| Index | ILM Delete Configuration |
|---|---|
| Miscellaneous Index | Maximum size: 1 GB |
| DSG Transaction Metrics Index | Maximum size: 14 GB<br>Maximum doc count: 100 million<br>Maximum index age: 30 days |
| DSG Error Metrics Index | Maximum size: 6 GB<br>Maximum doc count: 50 million<br>Maximum index age: 30 days |
| DSG Usage Metrics Index | Maximum size: 10 GB<br>Maximum doc count: 75 million<br>Maximum index age: 30 days |

ILM Delete Configuration Considerations

Maximum size: Defines the maximum size an index can reach before getting deleted.

Maximum doc count: Defines the maximum doc count an index can reach before getting deleted.

Maximum age: Defines the maximum age an index can reach before getting deleted.

Conditions: Deletion occurs when any one of the above limits is reached.

For more information about configuring ILM Delete, refer ILM Multi Export.

Index Rollover

This section details the index rollover settings for various log and metric indices. Efficient rollover policies ensure high performance and manageability of the indices.

| Index | Index Rollover Configuration |
|---|---|
| Audit Log Index | Maximum size: 50 GB<br>Maximum doc count: 50 million<br>Maximum index age: 1 day |
| Protector Status Logs Index | Maximum size: 5 GB<br>Maximum doc count: 200 million<br>Maximum index age: 30 days |
| Troubleshooting Logs Index | Maximum size: 5 GB<br>Maximum doc count: 200 million<br>Maximum index age: 30 days |
| Policy Logs Index | Maximum size: 5 GB<br>Maximum doc count: 200 million<br>Maximum index age: 30 days |
| Miscellaneous Index | Maximum size: 200 MB<br>Maximum doc count: 3.5 million<br>Maximum index age: 7 days |
| DSG Transaction Metrics Index | Maximum size: 1 GB<br>Maximum doc count: 10 million<br>Maximum index age: 1 day |
| DSG Error Metrics Index | Maximum size: 1 GB<br>Maximum doc count: 3.5 million<br>Maximum index age: 1 day |
| DSG Usage Metrics Index | Maximum size: 1 GB<br>Maximum doc count: 3.5 million<br>Maximum index age: 1 day |

Index Rollover Configuration Considerations

Maximum size: Defines the maximum size an index can reach before rolling over.

Maximum doc count: Defines the maximum doc count an index can reach before rolling over.

Maximum age: Defines the maximum age an index can reach before rolling over.

Conditions: Index Rollover occurs when any one of the limits is reached.

For more information about configuring Audit Index Rollover, refer Audit Index Rollover.

Alerting

Create a scheduled task in all the ESAs to monitor and log spikes in CPU, memory, or disk usage that exceed configured thresholds. These logs must be systematically forwarded to the Insight within the ESA.

For more information about configuring alerts, refer Working with alerts.

Requirements

Monitoring Metrics: The task should observe the following system metrics:

- CPU Usage

- Memory Usage

- Disk Usage

Threshold Configuration

- Define specific thresholds for CPU, memory, and disk usage.

- Ensure these thresholds can be adjusted as needed.

Log Generation

Generate detailed logs whenever a spike in CPU, memory, or disk usage exceeds the configured threshold.

Log Forwarding

Implement mechanisms to forward these logs to the Insight within the ESA.

Implementation Steps

Script Development

- Develop a script to monitor CPU, memory, and disk usage.

- Incorporate threshold parameters into the script.

Schedule the task using Task Scheduler.

For more information about creating scheduled task using task scheduler, refer Creating a scheduled task.

Logging Mechanism

Use logger library to write logs to syslog.

Test and Validate

By implementing this scheduled task, the ability to monitor system health and respond proactively to potential issues is enhanced, thereby improving overall system stability and security compliance.
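
The implementation steps above could be sketched in Python as follows. This is a minimal sketch under stated assumptions, not a Protegrity-provided script: the threshold values and the logger name are hypothetical, CPU load is approximated from the 1-minute load average per core, memory usage is read from `/proc/meminfo` (Linux only), and syslog is assumed to listen on the `/dev/log` socket.

```python
import logging
import logging.handlers
import os
import shutil

# Hypothetical thresholds in percent; adjust them as needed.
THRESHOLDS = {"cpu": 90.0, "memory": 85.0, "disk": 80.0}


def cpu_usage_percent():
    """Approximate CPU load as the 1-minute load average per core."""
    return 100.0 * os.getloadavg()[0] / (os.cpu_count() or 1)


def memory_usage_percent(meminfo_path="/proc/meminfo"):
    """Linux only: derive used memory from MemTotal and MemAvailable."""
    values = {}
    with open(meminfo_path) as handle:
        for line in handle:
            key, _, rest = line.partition(":")
            parts = rest.split()
            if parts and parts[0].isdigit():
                values[key] = int(parts[0])
    return 100.0 * (1 - values["MemAvailable"] / values["MemTotal"])


def disk_usage_percent(path="/"):
    """Percentage of the file system containing `path` that is in use."""
    usage = shutil.disk_usage(path)
    return 100.0 * usage.used / usage.total


def find_breaches(metrics, thresholds):
    """Return only the metrics whose value exceeds its threshold."""
    return {name: value for name, value in metrics.items()
            if value > thresholds[name]}


def log_breaches(breaches, thresholds, logger):
    """Write one warning per breached metric."""
    for name, value in breaches.items():
        logger.warning("%s usage %.1f%% exceeds threshold %.1f%%",
                       name, value, thresholds[name])


def main():
    """Collect the metrics once and log any breach to syslog."""
    logger = logging.getLogger("esa-resource-monitor")
    logger.setLevel(logging.WARNING)
    logger.addHandler(logging.handlers.SysLogHandler(address="/dev/log"))
    metrics = {
        "cpu": cpu_usage_percent(),
        "memory": memory_usage_percent(),
        "disk": disk_usage_percent("/"),
    }
    log_breaches(find_breaches(metrics, THRESHOLDS), THRESHOLDS, logger)
```

A scheduled task would call `main()` at the chosen interval. Forwarding the resulting syslog entries to Insight depends on the log forwarding configuration of the ESA and is outside the scope of this sketch.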

Audit Store Dashboards

It is recommended that default dashboards in ESAs are not modified or deleted.

For more information on Protegrity provided dashboards, refer Working with Protegrity dashboards.

2.5 - Recommended Traffic Manager

The Global and Local Traffic Manager should be a Layer-4 Proxy/Load Balancer. Alternatively, it could also be a DNS Switch.

Layer-4 Proxy/Load Balancer

A Layer-4 proxy/load balancer operates at the transport layer, which means it handles traffic based on IP address and TCP/UDP ports. This type of load balancer is efficient for distributing traffic evenly across servers without inspecting the actual application data.

Examples

HAProxy: A reliable, high-performance TCP/HTTP load balancer.

Nginx: Can be configured to operate as a Layer-4 load balancer.

Configuration Example (HAProxy)

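    frontend tcp_in
        bind *:8443
        mode tcp
        default_backend esa_servers

    backend esa_servers
        mode tcp
        # Use the first server that has available connection slots
        balance first
        server esa1 192.168.1.2:8443 check
        # Backup servers are used only when esa1 is unavailable
        server esa2 192.168.1.3:8443 check backup
        server esa3 192.168.1.4:8443 check backup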
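
The active and standby behaviour that the traffic managers are expected to provide can be modelled with a short Python sketch. It is a simplified model for reasoning about the flows, not HAProxy's implementation or a Protegrity tool: the function names are hypothetical, and it assumes that the GTM prefers the Primary Site whenever at least one ESA behind LTM-1 is healthy.

```python
from typing import Optional, Sequence, Set, Tuple

PRIMARY_SITE = ["ESA P1", "ESA S1", "ESA S2"]  # served by LTM-1
DR_SITE = ["ESA S3", "ESA S4", "ESA S5"]       # served by LTM-2


def select_server(servers: Sequence[str], healthy: Set[str]) -> Optional[str]:
    """Return the first server, in configured order, that is healthy."""
    for name in servers:
        if name in healthy:
            return name
    return None


def route(healthy: Set[str]) -> Optional[Tuple[str, str]]:
    """Pick the LTM and ESA that would receive a new connection."""
    for ltm, pool in (("LTM-1", PRIMARY_SITE), ("LTM-2", DR_SITE)):
        target = select_server(pool, healthy)
        if target is not None:
            return ltm, target
    return None  # no ESA is reachable in either site
```

With every ESA healthy, `route` returns LTM-1 and ESA P1, which matches the active flow. If all three ESAs in the Primary Site are removed from the healthy set, it returns LTM-2 and ESA S3, which matches the DR flow.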

DNS Switch

A DNS switch changes the DNS records to direct traffic to different servers based on predefined rules. It can be used for simple load balancing or failover scenarios.

Examples

- Amazon Route 53: AWS’s scalable DNS and domain name registration service.

Configuration Considerations for DNS Switch

TTL Settings: Keep TTL low for quicker propagation of changes.

Health Checks: Ensure the DNS provider supports health checks and automatic failover.

Geo-routing: Use geographical routing to minimize latency for users.

Ports Eligible for Load Balancer/Proxy with Active and DR Flows

| Port | Service in ESA | Purpose | Active Flow | DR Flow |
|---|---|---|---|---|
| 8443 | Service Dispatcher | For v9.1.0.0 Protectors to download policy from ESA | Protector -> GTM -> LTM-1 -> ESA P1 | Protector -> GTM -> LTM-2 -> ESA S3 |
| 443 | Service Dispatcher | For Web UI | Protector -> GTM -> LTM-1 -> ESA P1 | Protector -> GTM -> LTM-2 -> ESA S3 |
| 25400 | RPP | For v10.0.0 Protectors to download package from ESA | Protector -> GTM -> LTM-1 -> ESA P1 | Protector -> GTM -> LTM-2 -> ESA S3 |
| 9200 | Insight | For protectors to forward logs to Insight in all 3 ESAs directly in the site in round robin fashion without External SIEM | Protector -> GTM -> LTM-1 -> ESA P1, ESA S1, ESA S2 | Protector -> GTM -> LTM-2 -> ESA S3, ESA S4, ESA S5 |
| 24224/24284 | TD-Agent | For protectors to forward logs to TD-Agent in all 3 ESAs in the site in round robin fashion with External SIEM | Protector -> GTM -> LTM-1 -> ESA P1, ESA S1, ESA S2 | Protector -> GTM -> LTM-2 -> ESA S3, ESA S4, ESA S5 |
| 389 | LDAP | For LDAP and REST API Basic Authentication for DSG(s) | Protector -> GTM -> LTM-1 -> ESA P1 | Protector -> GTM -> LTM-2 -> ESA S3 |
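A quick way to confirm that the traffic manager forwards each of these ports is to attempt a TCP connection to every port through the GTM address. The following is a minimal sketch. The port list is taken from the table above, while `check_ports`, `tcp_probe`, and the host name in the usage note are hypothetical.

```python
import socket
from typing import Callable, Dict

# Ports and services from the table above.
ESA_PORTS: Dict[int, str] = {
    8443: "Service Dispatcher (policy download)",
    443: "Service Dispatcher (Web UI)",
    25400: "RPP (package download)",
    9200: "Insight (log forwarding)",
    24224: "TD-Agent (log forwarding, External SIEM)",
    24284: "TD-Agent (log forwarding, External SIEM)",
    389: "LDAP",
}


def tcp_probe(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_ports(
    host: str,
    ports: Dict[int, str] = ESA_PORTS,
    probe: Callable[[str, int], bool] = tcp_probe,
) -> Dict[int, bool]:
    """Probe every port on the given host and report which ones are reachable."""
    return {port: probe(host, port) for port in ports}
```

For example, `check_ports("gtm.example.com")` returns a dictionary such as `{8443: True, 443: True, ...}`, where `gtm.example.com` stands in for the GTM address of the deployment. A successful connection only shows that the Layer-4 path is open. It does not validate the service behind it.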

2.6 - Disaster Recovery

For Disaster Recovery (DR), multiple ESA instances are organized into a TAC that spans two sites, that is, the Primary site and the DR site. The following details outline how this system is configured and operates:

Cluster Configuration

Primary-Secondary Replication: Changes made on the Primary ESA P1 to files, configurations, and other data are replicated over a trusted channel to the Secondary ESAs, that is, ESA S1 and S2 in the primary site and ESA S3, S4, and S5 in the DR site.

This is configured using a scheduled task mentioned in point 1 ESA Primary to Secondary Replication Job under section Scheduler Tasks.

Failover Mechanism: If the Primary becomes unavailable, a Secondary can be promoted to Primary to maintain operational continuity.

For more information about promoting Secondary ESA to Primary ESA and its associated changes, refer Promoting Secondary ESA to Primary ESA.

Back up Primary ESA P1 data to a file

Alternatively, a backup file taken from Primary ESA P1 can also be used for disaster recovery. This is configured using the scheduled task mentioned in Back up Primary ESA data to file.

2.6.1 - Promoting Secondary ESA to Primary ESA

If there is a failover from Primary ESA P1 to Secondary ESA S3 in the DR site (refer this Architecture Diagram) and Primary ESA P1 is expected to be down for more than an hour, it is recommended to perform the following steps:

Make Secondary ESA S3 the Primary. Create a replication task to replicate the Policy Management and other required data from Secondary ESA S3 to Primary ESA P1.

As soon as the Primary ESA P1 is online, ensure to disable the replication task that is already created.

Perform the following steps to fail over from the Primary site to the DR site and to fail back from the DR site to the Primary site:

Failover to DR site

Because ESA P1 in the primary site is down, traffic fails over to the ESA in the DR site, that is, ESA S3.

Promote Secondary ESA to Primary in DR Site

Secondary ESA S3 is now promoted to Primary by enabling the TAC replication job to replicate from ESA S3 to all other ESAs.

Bring up ESA P1 in Site A as Secondary

Once ESA P1 in primary site is ready to be brought up, bring it up as secondary ESA.

As ESA S3 is already elevated to Primary, ESA P1 would start getting the latest updates from ESA S3 through replication.

This can be confirmed through the following steps:

a. Log in to ESA P1 Web UI.

b. Navigate to System > Task Scheduler.

c. Select the replication task.

d. Scroll down the page to view the details of the scheduled task.

e. Ensure that Exportimport command in the Command line shows the updated command as per Step 2.

Allow the replication task from ESA S3 to ESA P1 to run for at least 2 cycles.

Failback to Primary Site

After the original primary ESA (ESA P1) has replicated from the current primary ESA, that is, ESA S3, perform the following steps to disable the replication task from ESA S3 to the other ESAs.

a. Log in to web UI of ESA S3.

b. Disable the replication task to replicate data from ESA S3 to other ESAs.

Perform the following steps to enable replication task in ESA P1:

a. Log in to web UI of original primary ESA (ESA P1).

b. Enable the replication task to replicate data from ESA P1 to ESA S3.

c. Restore GTM to point to primary site.

d. Validate that all the pepserver node entries in ESA P1 are GREEN.
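The failover and failback steps above keep one invariant: at any point, exactly one ESA acts as the replication source. The following toy model makes that ordering explicit. It is a sketch for reasoning about the procedure and does not call any ESA interface. The class and method names are hypothetical, and it assumes that the replication task on ESA P1 stays disabled while ESA S3 is the Primary.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ReplicationState:
    """Toy model: which ESA has its outbound replication task enabled."""

    enabled: Dict[str, bool] = field(
        default_factory=lambda: {"ESA P1": True, "ESA S3": False}
    )
    gtm_site: str = "primary"

    def source(self) -> str:
        """Return the single ESA acting as replication source, or raise."""
        sources = [name for name, on in self.enabled.items() if on]
        if len(sources) != 1:
            raise RuntimeError(f"expected exactly one source, found {sources}")
        return sources[0]

    def promote_dr(self) -> None:
        """Failover: ESA S3 becomes the source and GTM points to the DR site."""
        self.enabled["ESA P1"] = False
        self.enabled["ESA S3"] = True
        self.gtm_site = "dr"

    def fail_back(self) -> None:
        """Failback: disable the task on S3, enable it on P1, restore GTM."""
        self.enabled["ESA S3"] = False
        self.enabled["ESA P1"] = True
        self.gtm_site = "primary"
```

If both tasks were enabled at the same time, `source()` would raise, which mirrors the reason the procedure disables the task on ESA S3 before enabling it on ESA P1.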

2.7 - Fault Tolerance

The Fault Tolerance strategy encompasses measures to ensure that the ESA infrastructure remains robust against failures and continues to operate optimally under various failure conditions. The key aspects include the following.

ESA Redundancy

Achieve network redundancy by utilizing multiple network paths to prevent single points of failure in the network infrastructure for ESA, that is, having GTM/LTM architecture.

Load Balancing

Deploying load balancers not only aids in disaster recovery but also ensures balanced distribution of traffic, especially for forwarding logs, to prevent any single ESA from becoming a bottleneck.

Regular Testing

Periodically test failover mechanisms to ensure that they work correctly when needed.

Conduct regular DR drills to verify that the transition from primary to DR site occurs smoothly without service disruption.

Proactive Monitoring

Continuously monitor ESA performance and health metrics to detect issues early and take corrective actions before they escalate into major problems. This can be done by configuring alerts on the system monitoring metrics as described in section Alerting.

2.8 - Security

These guidelines are intended to enhance the overall security posture, safeguard sensitive information, and mitigate potential risks. By adhering to these recommendations, organizations can ensure a more secure and resilient environment for their operations.

2.8.1 - Open Ports

For information about the list of ports that need to be configured to access the features and services on the Protegrity Products, refer Open listening ports.

2.8.2 - Certificate Requirements

The following table outlines the certificate requirements for various components within the ESA infrastructure:

| S.No. | Certificate | CN | SAN | Cert Type | Comments |
|---|---|---|---|---|---|
| 1 | CA | As per industry standards | NA | CA | NA |
| 2 | ESA Management – Server | FQDN of ESA where it is applied | Hostname and FQDN of ESA where it is applied | Server | Each ESA would have its own unique server certificate. |
| 3 | ESA Management – Client | Protegrity Client | NA | Client | Each ESA would have its own unique client certificate. |
| 4 | Consul Server | server.<datacenter name>.<domain> | 127.0.0.1, Hostname and FQDN of ESA where it is applied | Server | Each ESA would have its own unique server certificate. The domain and datacenter name must be equal to the value mentioned in the config.json file. For example, server.ptydatacenter.protegrity. Skip this certificate if Consul is uninstalled and traditional TAC is being used. |
| 5 | Audit Store – Server | insights_cluster | Hostname and FQDN of all the ESAs in the Audit Store Cluster | Server | All the ESAs in the Audit Store Cluster should share the same certificate. |
| 6 | Audit Store – Client | es_security_admin | NA | Client | All the ESAs in the Audit Store Cluster should share the same certificate. |
| 7 | Audit Store REST – Server | Use same certificate created in entry 5 | Use same certificate created in entry 5 | Server | All the ESAs in the Audit Store Cluster should share the same certificate. |
| 8 | Audit Store REST – Client | es_admin | NA | Client | All the ESAs in the Audit Store Cluster should share the same certificate. |
| 9 | Audit Store PLUG – Client | plug | NA | Client | All the ESAs in the Audit Store Cluster should share the same certificate. |
| 10 | Audit Store Analytics – Client | insight_analytics | NA | Client | All the ESAs in the Audit Store Cluster should share the same certificate. |
| 11 | DSG Management-Server | FQDN of DSG where it is applied | Hostname and FQDN of DSG where it is applied | Server | Each DSG would have its own unique server certificate. |
| 12 | DSG Admin Tunnel – Server Certificate | FQDN of DSG where it is applied | Hostname and FQDN of DSG where it is applied | Server | Each DSG would have its own unique server certificate. |
| 13 | DSG Tunnel – Client Certificate | ProtegrityClient | NA | Client | CN value is configurable in gateway.json |

The following table provides an example based on the recommended deployment architecture mentioned in the section Model Architecture.

| S.No. | Certificate | ESA | CN | SAN | Cert Type |
|---|---|---|---|---|---|
| 1 | CA | - | As per industry standards | NA | CA |
| 2 | ESA Management – Server | ESA P1 | ESAP1.protegrity.com | ESAP1.protegrity.com | Server |
| 2 | ESA Management – Server | ESA S1 | ESAS1.protegrity.com | ESAS1.protegrity.com | Server |
| 2 | ESA Management – Server | ESA S2 | ESAS2.protegrity.com | ESAS2.protegrity.com | Server |
| 2 | ESA Management – Server | ESA S3 | ESAS3.protegrity.com | ESAS3.protegrity.com | Server |
| 2 | ESA Management – Server | ESA S4 | ESAS4.protegrity.com | ESAS4.protegrity.com | Server |
| 2 | ESA Management – Server | ESA S5 | ESAS5.protegrity.com | ESAS5.protegrity.com | Server |
| 3 | ESA Management – Client | - | Protegrity Client | NA | Client |
| 4 | Consul Server | ESA P1 | server.ptydatacenter.protegrity | ESAP1.protegrity.com | Server |
| 4 | Consul Server | ESA S1 | server.ptydatacenter.protegrity | ESAS1.protegrity.com | Server |
| 4 | Consul Server | ESA S2 | server.ptydatacenter.protegrity | ESAS2.protegrity.com | Server |
| 4 | Consul Server | ESA S3 | server.ptydatacenter.protegrity | ESAS3.protegrity.com | Server |
| 4 | Consul Server | ESA S4 | server.ptydatacenter.protegrity | ESAS4.protegrity.com | Server |
| 4 | Consul Server | ESA S5 | server.ptydatacenter.protegrity | ESAS5.protegrity.com | Server |
| 5 | Audit Store – Server | ESA P1 (Audit Store Cluster - Primary Site) | insights_cluster | ESAP1.protegrity.com ESAS1.protegrity.com ESAS2.protegrity.com | Server |
| 5 | Audit Store – Server | ESA S1 (Audit Store Cluster - Primary Site) | insights_cluster | ESAP1.protegrity.com ESAS1.protegrity.com ESAS2.protegrity.com | Server |
| 5 | Audit Store – Server | ESA S2 (Audit Store Cluster - Primary Site) | insights_cluster | ESAP1.protegrity.com ESAS1.protegrity.com ESAS2.protegrity.com | Server |
| 5 | Audit Store – Server | ESA S3 (Audit Store Cluster - DR Site) | insights_cluster | ESAS3.protegrity.com ESAS4.protegrity.com ESAS5.protegrity.com | Server |
| 5 | Audit Store – Server | ESA S4 (Audit Store Cluster - DR Site) | insights_cluster | ESAS3.protegrity.com ESAS4.protegrity.com ESAS5.protegrity.com | Server |
| 5 | Audit Store – Server | ESA S5 (Audit Store Cluster - DR Site) | insights_cluster | ESAS3.protegrity.com ESAS4.protegrity.com ESAS5.protegrity.com | Server |
| 6 | Audit Store – Client | - | es_security_admin | NA | Client |
| 7 | Audit Store REST – Server | - | Use same certificate created in entry 5 | Use same certificate created in entry 5 | Server |
| 8 | Audit Store REST – Client | - | es_admin | NA | Client |
| 9 | Audit Store PLUG – Client | - | plug | NA | Client |
| 10 | Audit Store Analytics – Client | - | insight_analytics | NA | Client |
| 11 | DSG Management-Server | - | FQDN of DSG where it is applied | Hostname and FQDN of DSG where it is applied | Server |
| 12 | DSG Admin Tunnel – Server Certificate | - | FQDN of DSG where it is applied | Hostname and FQDN of DSG where it is applied | Server |
| 13 | DSG Tunnel – Client Certificate | - | ProtegrityClient | NA | Client |
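When certificate signing requests are prepared with OpenSSL, the CN and SAN values from the tables above can be written into a request configuration file. The helper below is a sketch that only builds the configuration text. The function name `openssl_req_config` is hypothetical, and the short hostname `ESAP1` is an assumed example that should be replaced with the actual hostname of the ESA.

```python
from typing import Sequence


def openssl_req_config(
    cn: str, dns_names: Sequence[str], ip_addrs: Sequence[str] = ()
) -> str:
    """Build an OpenSSL request config with the given CN and SAN entries."""
    lines = [
        "[req]",
        "prompt = no",
        "distinguished_name = dn",
        "req_extensions = v3_req",
        "",
        "[dn]",
        f"CN = {cn}",
        "",
        "[v3_req]",
        "subjectAltName = @alt_names",
        "",
        "[alt_names]",
    ]
    # SAN entries are numbered per type: DNS.1, DNS.2, ... and IP.1, IP.2, ...
    lines += [f"DNS.{i} = {name}" for i, name in enumerate(dns_names, start=1)]
    lines += [f"IP.{i} = {addr}" for i, addr in enumerate(ip_addrs, start=1)]
    return "\n".join(lines) + "\n"


# ESA Management server certificate for ESA P1 (hostname ESAP1 is assumed).
print(openssl_req_config("ESAP1.protegrity.com", ["ESAP1", "ESAP1.protegrity.com"]))
```

The generated text can be saved to a file and passed to `openssl req -new -key <key file> -out <csr file> -config <config file>`. For the Consul Server certificate, 127.0.0.1 would be supplied through `ip_addrs`.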

2.8.3 - FIPS Compliance Requirements

It is recommended to enable FIPS mode in all the ESAs.

For more information about configuring FIPS mode in ESA, refer FIPS Mode.

2.8.4 - Users and Password Policy

A robust users and password policy is essential to ensure the security of the system by controlling access and maintaining the integrity of user accounts.

The following guidelines outline the key requirements for managing users and passwords within the system:

Password Creation

Enforce strong password creation policies.

- Minimum length: 8 characters

- Complexity: Must include uppercase letters, lowercase letters, numbers, and special characters.

Prohibit the use of common passwords and passwords from known data breaches.

Employ mechanisms to prevent the reuse of previous passwords, for example, by keeping a history of the 5 previous passwords.
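The password creation rules above can be expressed as a small validation routine. This is a minimal sketch of the checks and not the ESA implementation. The function name and the problem labels are hypothetical. A production system would compare password hashes for the history check instead of the plain-text values used here for brevity.

```python
import string
from typing import Iterable, List, Sequence

MIN_LENGTH = 8      # minimum length from the guidelines above
HISTORY_DEPTH = 5   # number of previous passwords that may not be reused


def password_problems(
    candidate: str,
    previous: Sequence[str] = (),
    banned: Iterable[str] = (),
) -> List[str]:
    """Return the list of policy rules that the candidate password violates."""
    problems = []
    if len(candidate) < MIN_LENGTH:
        problems.append("too short")
    if not any(c.isupper() for c in candidate):
        problems.append("no uppercase letter")
    if not any(c.islower() for c in candidate):
        problems.append("no lowercase letter")
    if not any(c.isdigit() for c in candidate):
        problems.append("no number")
    if not any(c in string.punctuation for c in candidate):
        problems.append("no special character")
    if candidate.lower() in {b.lower() for b in banned}:
        problems.append("common or breached password")
    # Only the most recent HISTORY_DEPTH passwords are considered.
    if candidate in list(previous)[-HISTORY_DEPTH:]:
        problems.append("reused password")
    return problems
```

An empty list means that the candidate satisfies every rule. Returning all violations at once allows a user interface to show the complete list in a single message.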

Password Protection

Use multi-factor authentication (MFA) to provide an additional layer of security.

Password Change Requirements

Require users to change their passwords at regular intervals, for example, every 90 days.

Force password changes immediately if a compromise or suspicion of compromise is detected.

Provide mechanisms for users to securely reset their passwords.

Account Lockout Policies

ESA is configured with account lockout after 3 unsuccessful login attempts.

If an external account manager is used:

Implement account lockout after a specified number of failed login attempts, for example, locking out after 3 unsuccessful attempts.

Define a lockout duration or require administrative intervention to unlock accounts.
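For an external account manager, the lockout rules above amount to a failure counter per user with an optional unlock timer. The sketch below illustrates that logic under stated assumptions. The class name `LockoutTracker` is hypothetical, time is passed in explicitly as seconds, and a `lockout_seconds` value of `None` models the case where an administrator must unlock the account.

```python
from typing import Dict, Optional


class LockoutTracker:
    """Lock an account after a number of consecutive failed logins."""

    def __init__(self, max_attempts: int = 3, lockout_seconds: Optional[float] = None):
        self.max_attempts = max_attempts
        self.lockout_seconds = lockout_seconds  # None: administrator must unlock
        self._failures: Dict[str, int] = {}
        self._locked_at: Dict[str, float] = {}

    def is_locked(self, user: str, now: float) -> bool:
        locked_at = self._locked_at.get(user)
        if locked_at is None:
            return False
        # Timed lockouts expire on their own once the duration has passed.
        if self.lockout_seconds is not None and now - locked_at >= self.lockout_seconds:
            self.unlock(user)
            return False
        return True

    def record_failure(self, user: str, now: float) -> None:
        if self.is_locked(user, now):
            return
        self._failures[user] = self._failures.get(user, 0) + 1
        if self._failures[user] >= self.max_attempts:
            self._locked_at[user] = now

    def record_success(self, user: str) -> None:
        # A successful login resets the consecutive-failure counter.
        self._failures.pop(user, None)

    def unlock(self, user: str) -> None:
        self._failures.pop(user, None)
        self._locked_at.pop(user, None)
```

With `LockoutTracker(max_attempts=3, lockout_seconds=600)`, the third consecutive failure locks the account for ten minutes. The ten-minute value is illustrative only.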

For more information about password policy for appliance users, refer Password Policy for all appliance users.

2.8.5 - File Integrity Monitoring

Ensure File Integrity Monitoring scheduled task is enabled in ESA.

For more information about the file integrity monitoring, refer Working with File Integrity.

2.8.6 - Delete Default System Certificates

To enhance the security of your system, it is strongly recommended to delete all default system certificates after the custom certificates are applied, as outlined in the Certificate Requirements. Implementing the custom certificates ensures that the encryption credentials are unique to your organization and align with your specific security protocols.

2.8.7 - Uninstall Consul

For enhanced security, it is advisable to uninstall Consul from the ESA. Consul necessitates the inclusion of ’localhost’ in the Subject Alternative Name (SAN) field of certificates, which can introduce potential vulnerabilities.

To maintain a secure environment and adhere to best practices, TAC can be configured without integrating with Consul.

For more information about configuring TAC without consul, refer Configuring a Trusted Appliance Cluster (TAC) without Consul Integration.

2.9 - Data Security Gateway (DSG)

The DSG is a flexible platform that applies security operations on the network to protect sensitive data in various environments, including on-premises, virtualized, and cloud. It safeguards data across SaaS applications, web interfaces, APIs, and file transfers using Configuration over Programming (CoP) profiles.

Architecture diagram for DSG v3.3.0.0

Architecture diagram for ESA v10.0.1 with v3.3.0.0

Architecture diagram for DSG v3.3.0.0 in TAC

| Component | Active Flow | Failover Flow |
|---|---|---|
| Deployment of Rulesets from ESA | _____ | - - - - - - |
| Policy Download | _____ | - - - - - - |
| Forwarding of Audit Events to ESA | _____ | - - - - - - |

Communication Flow

DSG-1: DSG node configured during DSG patch installation in ESA.

DSG-2 to DSG-n: Other DSGs in TAC

The following table describes the communication flows depicted in the diagrams above.

Communication Flow

| Flow | Request Initiator | Destination | Port | Protocol | Flow Description | Configuration |
|---|---|---|---|---|---|---|
| Deployment of Rulesets from ESA | ESA P1 | DSG-1 | 443 | TLS | Step-1: ESA P1 initiates an HTTPS request to DSG-1 directly, without GTM/LTM, to send the command for DSGs to pull rulesets from ESA P1. If DSG-1 is down, then ESA P1 connects to any of the other DSGs, that is, DSG-2 to DSG-n. | Primary Active Flow: Sticky to ESA P1 with other ESAs as standby. ESA P1 -> DSG-1. DR Flow: Sticky to ESA S3 with other ESAs as standby. ESA S3 -> DSG-1. |
| Deployment of Rulesets from ESA | DSG node configured during DSG patch installation in ESA | All other DSGs in TAC | 8300 | TLS | Step-2: DSG forwards the command to pull rulesets to all other DSGs in TAC. | Not Applicable |
| Deployment of Rulesets from ESA | All DSGs in TAC | ESA P1 | 443 | TLS | Step-3: All DSGs in TAC pull rulesets from ESA P1 in parallel. | Primary Active Flow: Sticky to ESA P1 with other ESAs as standby. All DSGs in TAC -> ESA P1. DR Flow: Sticky to ESA S3 with other ESAs as standby. All DSGs in TAC -> ESA S3. |
| Policy Download | Pepserver in the Protector node | Service Dispatcher in ESA | 8443 | TLS | Through GTM. Through LTM-1 for active flow and LTM-2 for failover flow to Service Dispatcher in ESA. | Primary Active Flow: Sticky to ESA P1 with other ESAs as standby. Protector 9.1 -> GTM -> LTM-1 -> ESA P1. DR Flow: Sticky to ESA S3 with other ESAs as standby. Protector 9.1 -> GTM -> LTM-2 -> ESA S3. |
| Forwarding of Audit Events to ESA | Log Forwarder in the protector node | Insight in ESA | 9200 | TLS | Through GTM. Through LTM-1 for active flow and LTM-2 for failover flow to Insight in ESA. | Primary Active Flow: Routed to all ESAs in the Primary Site. Protector 9.1/10.0 -> GTM -> LTM-1 -> ESA P1, S1, S2. DR Flow: Routed to all ESAs in the DR Site. Protector 9.1/10.0 -> GTM -> LTM-2 -> ESA S3, S4, S5. |
| Forwarding of Audit Events to External SIEM using the ESA | Log Forwarder in the protector node | TD-Agent in ESA | 24224/24284 | Non-TLS/TLS | Through GTM. Through LTM-1 for active flow and LTM-2 for failover flow to TD-Agent in ESA. | Primary Active Flow: Routed to all ESAs in the Primary Site. Protector 9.1/10.0 -> GTM -> LTM-1 -> ESA P1, S1, S2 -> External SIEM. DR Flow: Routed to all ESAs in the DR Site. Protector 9.1/10.0 -> GTM -> LTM-2 -> ESA S3, S4, S5 -> External SIEM. |

2.9.1 - Installing and Configuring DSG

Assumptions

This section assumes that DSG has not been installed previously and that the installation is performed from scratch.

GTM and LTM are provisioned and installed. For information about prescribed configurations for GTM or LTM, refer Recommended Traffic Manager.

Pre-requisites

Ensure there is good network connectivity between the machine where DSG is going to be installed and all the ESAs, and they can communicate with each other.

Ensure that the ESAs in both the Primary site (ESA P1, S1, S2) and the DR site (ESA S3, S4, S5) are up and running.

Ensure that ESAs in both sites are in TAC.

Ensure that PIM is initialized on all the ESAs.

Ensure that ESAs in Primary site are in Audit Store Cluster and ESAs in DR site are in a separate Audit Store Cluster.

Ensure that all the ESAs in the cluster can reach each other, all the DSGs in the cluster can reach each other, and the ESAs and DSGs can reach one another using hostname or FQDN.
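The name-resolution part of these pre-requisites can be checked from any node before installation begins. The following sketch reports which names fail to resolve. The function name `unresolved_hosts` is hypothetical, and the FQDNs in the usage note follow the example naming used in the Certificate Requirements section.

```python
import socket
from typing import Callable, Iterable, List


def unresolved_hosts(
    names: Iterable[str],
    resolve: Callable[..., object] = socket.getaddrinfo,
) -> List[str]:
    """Return the hostnames or FQDNs that cannot be resolved from this node."""
    missing = []
    for name in names:
        try:
            resolve(name, None)
        except socket.gaierror:
            missing.append(name)
    return missing
```

For example, `unresolved_hosts(["ESAP1.protegrity.com", "ESAS3.protegrity.com"])` returns an empty list when both names resolve. Resolution alone does not prove connectivity, so it complements a port-level check and does not replace it.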

1. Installing and Configuring the DSGs

Install DSGs of version 3.3.0.0.

For more information about installing DSG 3.3.0.0, refer Installing the DSG.

Create TAC. Create TAC in one of the DSGs installed in the previous step.

Join DSGs to TAC. Join the rest of the DSGs to the TAC created in the previous step.

Upload and Install DSG Management Server Certificates. Upload and install DSG Management Server certificates in each of the DSGs individually. Ensure the SAN field in each of the certificates has the hostname and FQDN of the DSG node it is going to be installed in.

2. Perform ESA Communication from all the DSGs

For all the options in ESA communication except for Update host settings for DSG, provide GTM IP, hostname, or FQDN as applicable.

For more information about performing set ESA communication, refer Setting up ESA communication.

2.1 Update Host Settings for DSG

For Update host settings for DSG in ESA communication, provide Primary ESA P1’s FQDN/hostname as applicable.

For more information about performing set ESA communication, refer Setting up ESA communication.

3. Install DSG Patch on all the ESAs in the Primary and DR site

Install DSG 3.3.0.0 patch on all ESAs in both sites, that is, ESA P1, S1, S2 in the primary site and ESA S3, S4, S5 in the DR site.

3.1 Provide DSG Details During Patch Installation

When prompted for DSG details during patch installation, provide the FQDN or hostname of any one DSG in the TAC. Ensure that the same DSG FQDN or hostname is provided during patch installation on all the other ESAs.

4. Perform Post Installation Steps in all ESAs

For information to perform post installation steps, refer Post installation/upgrade steps.

5. Upload and Apply DSG Admin Tunnel Certificates

Upload and apply DSG Admin tunnel certificates from Web UI in ESA P1.

For more information regarding uploading and applying DSG Admin tunnel certificates, refer Upload Certificate/Keys.

6. Create and Deploy DSG Tunnels and Ruleset

6.1 Create Tunnels and Ruleset

Create tunnels and rulesets from the Web UI in ESA P1.

For more information related to creating tunnels, refer Tunnels.

For more information related to creating rulesets, refer Ruleset Reference.

6.2 Deploy Rulesets

Click on the Deploy button from the DSG’s Cluster page in ESA P1 to deploy rulesets in all the DSGs present in the TAC.

For more information related to deploying rulesets, refer Deploying configurations to the cluster.

7. Check Health Status of DSGs under Cluster Page

After the deployment of rulesets is successful, check the health status of DSGs in TAC from the DSG’s Cluster page in ESA P1. All the DSGs should show health status as green.

8. Ensure TAC Replication Job Includes DSG Configuration

Ensure TAC replication job also includes DSG’s configuration to be replicated to all the ESAs in TAC, that is, from Primary ESA P1 to all the Secondary ESAs S1, S2, S3, S4, S5.

Make sure to follow these steps meticulously to ensure a seamless installation and configuration process.

2.9.2 - Upgrading ESA with DSG

Pre-requisites

All the ESAs must be on v9.2.0.0.

All the DSGs must be on v3.2.0.0 HF-1.

ESAs and DSGs must be in a single TAC.

Ensure there is good network connectivity between the machine where DSG is going to be installed and all the ESAs, and they can communicate with each other.

Ensure that the ESAs in both the Primary site (ESA P1, S1, S2) and the DR site (ESA S3, S4, S5) are up and running.

Ensure that all the ESAs in the cluster can reach each other, all the DSGs in the cluster can reach each other, and the ESAs and DSGs can reach one another using hostname or FQDN.

Important: ESA v10 only supports protectors with PEP server version 1.2.2+42 or later. Hence, before proceeding with the ESA upgrade, check the installed protector version. If the protector version is below 1.2.2+42, the ESA upgrade fails. In that case, remove the registered protectors from Policy Dashboard before upgrading. For more information on identifying the installed protector version, refer documentation section Identifying the protector version.
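When many protectors are registered, the version gate above can be checked in bulk. The sketch below assumes that PEP server versions follow the `major.minor.patch+build` pattern seen in 1.2.2+42 and that the fields compare numerically from left to right. Both points are assumptions, because the source shows only this one version string. The function names are hypothetical.

```python
import re
from typing import Tuple

MIN_SUPPORTED = (1, 2, 2, 42)  # PEP server version 1.2.2+42

_VERSION_RE = re.compile(r"(\d+)\.(\d+)\.(\d+)\+(\d+)")


def parse_pep_version(text: str) -> Tuple[int, ...]:
    """Parse 'major.minor.patch+build' into a tuple of integers."""
    match = _VERSION_RE.fullmatch(text.strip())
    if match is None:
        raise ValueError(f"unrecognized PEP server version: {text!r}")
    return tuple(int(part) for part in match.groups())


def is_supported(text: str) -> bool:
    """Return True if the version is 1.2.2+42 or later."""
    return parse_pep_version(text) >= MIN_SUPPORTED
```

Comparing integer tuples avoids the pitfall of string comparison, where a version such as 1.10.0+1 would sort before 1.2.2+42.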

Canary Upgrade

The Canary upgrade involves re-imaging the existing DSG instances to the newer version one by one using ISO or cloud image as applicable. This could be performed by re-using the same instance or spawning a new instance for DSG and terminating the older version DSGs.

Important: There will be downtime of DSGs during upgrade. However, the downtime can be minimized by spawning the fresh DSGs of version 3.3.0.0 in parallel to upgrading ESAs.

This section explains the upgrade flow of ESAs and DSGs only. It does not consider the presence of 9.1.0.0 protectors apart from DSG.

If DSGs are installed along with other 9.1 protectors, then refer Upgrading ESA with DSGs and 9.1 Protectors.

Canary upgrade is the prescribed way of upgrading DSGs. For alternative ways of upgrading DSGs, refer Upgrade process.

Perform the following steps to upgrade DSGs with ESAs.

1. Pre-Upgrade Steps

Backup all ESAs.

- On-Premise: Perform a full OS backup of all ESAs at both sites.

- Cloud Premises: Take snapshots of each instance to ensure a restore point is available should any issues arise during the upgrade process.

For more information about backup, refer Backup the appliance OS for on-premise ESAs and section 9.1.3 Backing Up on Cloud Platforms.

Delete TAC replication job from Primary ESA P1.

Perform the following steps to remove the TAC replication scheduled task.

On the Primary ESA P1’s Web UI, navigate to System > Task Scheduler.

Click on the TAC replication scheduled task.

Click on Remove.

Click on “Apply” button to apply the changes.

2. Upgrading the ESAs in DR Site

Remove all the ESAs at the DR site from the TAC.

It is required to remove all the ESAs at the DR site from the TAC before proceeding with upgrading them.

Upgrade ESAs at the DR Site sequentially. Commence the upgrade by focusing on the ESAs located at the DR site.

Follow the below sequence:

Upgrade ESA S3

Upgrade ESA S4

Upgrade ESA S5

For more information, refer the following:

Prerequisites to understand the pre-requisites.

Upgrade Paths to ESA v10.0.1 to understand upgrade paths to ESA v10.0.1.

Upgrading from v9.2.0.1 for steps to upgrade from ESA v9.2.0.1 to ESA v10.0.1.

Post Upgrade steps for the steps to perform after upgrading the ESA.

Ensure each ESA is fully upgraded before proceeding to the next ESA.
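If the upgrade is driven by automation, the rule of finishing one ESA before starting the next can be enforced in code. The following is a minimal sketch. The `upgrade` and `validate` callables are hypothetical placeholders for site-specific tooling and are not Protegrity APIs.

```python
from typing import Callable, List, Sequence


def upgrade_in_order(
    esas: Sequence[str],
    upgrade: Callable[[str], None],
    validate: Callable[[str], bool],
) -> List[str]:
    """Upgrade ESAs one at a time and stop at the first failed validation."""
    completed = []
    for esa in esas:
        upgrade(esa)
        if not validate(esa):
            raise RuntimeError(f"{esa} failed validation; stopping before the next ESA")
        completed.append(esa)
    return completed


# DR site order from the steps above.
DR_SITE_ORDER = ["ESA S3", "ESA S4", "ESA S5"]
```

Stopping at the first failure leaves the remaining ESAs on the previous version, so that the issue can be investigated before more nodes are changed.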

3. Post Upgrade Validation of ESAs in DR Site

Conduct thorough validation of the upgraded ESAs at the DR site to confirm operational integrity and successful upgrade. Perform the following validations in all the ESAs.

Log in to the ESA Web UI.

Check for correctness of the version under About.

Navigate to Key Management > Key Stores in ESA Web UI and ensure that External Keystore configurations are intact.

Navigate to Settings > Users and check that External Groups settings are intact.

Navigate to Audit Store > Cluster Management and check if ESA S3, ESA S4 and ESA S5 are visible under Nodes tab and Cluster Status is shown as GREEN.

4. Pre-Upgrade Steps for DSG

Remove existing DSGs from TAC. It is required to remove all the DSGs from the TAC before proceeding with further upgrade steps.

As mentioned at the start of this section, it is expected to have downtime of DSGs. Hence, at this step, stop all the existing DSGs.

5. Upgrading the ESAs in Primary Site

Remove all the ESAs at the Primary site from the TAC before upgrading them.

Upgrade ESAs at the Primary Site sequentially.

Follow the below sequence for upgrading all the ESAs in the primary site:

Upgrade ESA P1

Upgrade ESA S1

Upgrade ESA S2

For more information, refer the following:

Prerequisites to understand the pre-requisites.

Upgrade Paths to ESA v10.0.1 to understand upgrade paths to ESA v10.0.1.

Upgrading from v9.2.0.1 for steps to upgrade from ESA v9.2.0.1 to ESA v10.0.1.

Post Upgrade steps for the steps to perform after upgrading the ESA.

Ensure each ESA is fully upgraded before proceeding to the next ESA.

6. Post Upgrade Validation of ESAs in Primary Site

Validate Primary Site ESAs Post Upgrade.

Conduct thorough validation of the upgraded ESAs at the Primary site to confirm operational integrity and successful upgrade.

Perform the following validations in all the ESAs.

Log in to the ESA Web UI.

Check for correctness of the version under About.

Navigate to Key Management > Key Stores in ESA Web UI and ensure that External Keystore configurations are intact.

Navigate to Settings > Users and check that External Groups settings are intact.

Navigate to Audit Store > Cluster Management and check if ESA P1, ESA S1 and ESA S2 are visible under Nodes tab and Cluster Status is shown as GREEN.

7. Installing and Configuring the DSGs

Create fresh DSGs of version 3.3.0.0. Perform this step in parallel to Upgrading the ESAs in Primary Site. This is to minimize the DSG downtime. Create DSGs v3.3.0.0 using ISO or cloud image as applicable.

For more information about installing DSG 3.3.0.0, refer Installing the DSG.

Create a new TAC with re-imaged DSGs. Starting with DSG v3.3.0.0, ESAs and DSGs should be in separate TACs. Hence, create a new TAC with the DSGs re-imaged in the previous step.

Upload and install DSG Management Server certificates in each of the DSGs individually. Ensure the SAN field in each of the certificates has the hostname and FQDN of the DSG node it is going to be installed in.

8. Creating TAC of all ESAs in Primary and DR sites

Create TAC in Primary ESA P1. It is required to create TAC in Primary ESA P1 first before joining other ESAs in TAC.

Join all the Secondary ESAs to the TAC from both sites. Join all the secondary ESAs that is, ESA S1, ESA S2 in Primary site and all the ESAs in DR site, that is, ESA S3, ESA S4 and ESA S5 to the existing TAC created in the above step 1 with Primary ESA P1.

9. Perform ESA Communication from all the DSGs

For all the options in ESA communication except for Update host settings for DSG, provide GTM IP, hostname or FQDN as applicable. For more information about performing set ESA communication, refer Setting up ESA communication.

9.1 Update Host Settings for DSG

For Update host settings for DSG in ESA communication, provide Primary ESA P1’s FQDN or hostname as applicable. For more information about performing set ESA communication, refer Setting up ESA communication.

10. Install DSG Patch on all the ESAs in the Primary and DR site

Install DSG 3.3.0.0 patch on all ESAs in the Primary and DR site, that is, ESA P1, S1, S2, S3, S4, S5.

10.1 Provide DSG Details During Patch Installation

When prompted for DSG details during patch installation, provide the FQDN or hostname of any one running DSG in the TAC. Ensure that the same DSG FQDN or hostname is provided during DSG patch installation on all the other ESAs.

11. Perform Post Installation Steps in All ESAs in the Primary and DR site

For information about performing post installation steps in all the ESAs, refer Post installation/upgrade steps.

12. Check DSG’s Cluster Page in ESA

Check if all the installed DSGs are listed under the Cloud Gateway > Cluster page in ESA.

13. Deploy Rulesets

Click on the Deploy button from the DSG’s Cluster page in ESA P1 to deploy rulesets in all the DSGs present in the TAC. For more information related to deploying rulesets, refer Deploying configurations to the cluster.

14. Check Health Status of DSGs from Cluster Page

After the deployment of rulesets is successful, check the health status of DSGs in TAC from the DSG’s Cluster page in ESA P1. All the DSGs should show health status as green.

15. Check for DSG nodes status in Policy Management Dashboard

Log in to the ESA P1 Web UI.

Navigate to Policy Management in ESA P1 Web UI and check if Datastores shows all the DSG nodes registrations as GREEN or Ok and Policy Deploy Status as GREEN or Ok.

16. Validate Protector Operations

Confirm that DSGs can perform data security operations post-upgrade of the ESAs.

Verify that audit events are being forwarded successfully to the ESAs.

Create or Enable Scheduler tasks in Primary site ESAs. Create or enable all the scheduler tasks in Primary site ESAs as mentioned in section Scheduler Tasks.

17. Terminate the older version DSGs

After the DSGs are upgraded successfully and their operation is confirmed in Validate Protector Operations, terminate all the older version DSGs that were stopped at step 2 in Pre-Upgrade Steps for DSG to free up resources.

Additional Considerations

Documentation: Maintain detailed records of the upgrade procedure for future reference.

Troubleshooting: Have contingency plans in place to address potential issues arising during the upgrade.

Support: Utilize Protegrity support services for guidance or troubleshooting assistance as needed.

Make sure to follow these steps meticulously to ensure a seamless upgrade and configuration process.

2.10 - Upgrading ESA with 9.1.0.0 Protectors

This section describes steps to upgrade ESAs with 9.1.0.0 protectors already installed. This section does not consider DSGs being installed.

To ensure compatibility and leverage new features, security fixes and enhancements, it is necessary to upgrade the ESA to the latest version. This section outlines the required steps for upgrading from a previous version, applicable to both on-premise and cloud platforms.

If DSGs are installed along with other 9.1.0.0 protectors, then refer Upgrading ESA with DSGs and 9.1.0.0 Protectors.

If only DSGs are installed and other 9.1.0.0 protectors are not installed, then refer Upgrading DSG.

Important: The steps mentioned in this section will ensure zero downtime of 9.1.0.0 Protectors during ESA upgrade.

Important Points for ESA Upgrade

Ensure that the following important points are adhered to.

Freeze Policy and Ruleset Changes

Before upgrading the ESA, ensure all policy and ruleset changes are frozen.

No changes to the policies and rulesets should be made until the completion of the ESA upgrade.

Freeze Configurations in ESA

Prior to upgrading the ESA, freeze all configurations within the ESA. Ensure no configuration changes are made to any components in any of the ESAs until the upgrade is complete.

If DSGs are being used, then upgrade the DSGs first. Following that, proceed with upgrading the ESAs.

Refer to the section Upgrading DSG for steps and recommendations related to upgrading DSGs.

This section elaborates on the upgrade and configuration process for ESAs as per the Deployment with Default Audit logging flow to ESA Architecture diagram.

For more information about upgrading ESA, refer to Upgrading ESA to v10.

Important: ESA v10 supports only protectors with PEP server version 1.2.2+42 or later. Before proceeding with the ESA upgrade, check the installed protector version. If the protector version is below 1.2.2+42, the ESA upgrade fails. In that case, remove the registered protectors from the Policy Dashboard.

For instructions on identifying the installed protector version, refer to Identifying the protector version.
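The version gate described above can be sketched as a small comparison helper. This is only an illustration: it assumes that PEP server versions always follow the MAJOR.MINOR.PATCH+BUILD shape of the stated minimum (1.2.2+42) and that the fields compare numerically from left to right. The function names and the inventory dictionary are hypothetical and are not part of any Protegrity tool.

```python
import re

# Minimum PEP server version stated in this section.
MIN_SUPPORTED = "1.2.2+42"


def parse_pep_version(version: str) -> tuple:
    """Split 'MAJOR.MINOR.PATCH+BUILD' into a tuple of four integers.

    Assumption: every version string has exactly this shape.
    """
    match = re.fullmatch(r"(\d+)\.(\d+)\.(\d+)\+(\d+)", version.strip())
    if match is None:
        raise ValueError(f"unrecognized version format: {version!r}")
    return tuple(int(part) for part in match.groups())


def is_supported(version: str, minimum: str = MIN_SUPPORTED) -> bool:
    """Return True when the version is at or above the minimum."""
    return parse_pep_version(version) >= parse_pep_version(minimum)


def unsupported_protectors(inventory: dict) -> list:
    """Return the names of protectors whose version is below the minimum.

    `inventory` maps a protector name to its version string (hypothetical
    input that you would collect manually before the upgrade).
    """
    return sorted(
        name for name, version in inventory.items() if not is_supported(version)
    )
```

A helper like this could be run against a manually collected list of protector versions to decide which registrations need to be removed before the ESA upgrade starts.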

Upgrade Steps

- Back up all ESAs.

On-Premise: Perform a full OS backup of all ESAs at both sites.

Cloud Premises: Take snapshots of each instance to ensure a restore point is available should any issues arise during the upgrade process.

Delete TAC replication job from Primary ESA P1.

To remove the TAC replication scheduled task, follow these steps:

- On the Primary ESA P1’s Web UI, navigate to System > Task Scheduler.

- Click the TAC replication scheduled task.

- Click Remove.

- Click the Apply button to apply the changes.

Ensure all the prerequisites are met before proceeding with the upgrade of each ESA.

For more information about the prerequisites, refer to Prerequisites.

Remove all the ESAs at the DR site from the TAC. This removal is required before upgrading them.

Upgrade ESAs at the DR Site sequentially. Commence the upgrade by focusing on the ESAs located at the DR site. Follow the sequence mentioned below.

Upgrade ESA S3.

Upgrade ESA S4.

Upgrade ESA S5.

For more information, refer to the following:

Prerequisites to understand the prerequisites.

Upgrade Paths to ESA v10.0.1 to understand the upgrade paths to ESA v10.0.1.

Upgrading from v9.2.0.1 for the steps to upgrade from ESA v9.2.0.1 to ESA v10.0.1.

Post Upgrade steps for the steps to perform after upgrading the ESA.

Ensure each ESA is fully upgraded before proceeding to the next ESA.
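The "one ESA at a time" rule above can be pictured as a loop that refuses to move on until the current ESA passes its check. The sketch below is illustrative only: `upgrade` and `is_fully_upgraded` are hypothetical callables standing in for the manual upgrade and post-upgrade verification, not functions provided by the product.

```python
from typing import Callable, Iterable, List

# DR site upgrade sequence as listed in this section.
DR_SITE_ORDER = ["ESA S3", "ESA S4", "ESA S5"]


def upgrade_in_order(
    esas: Iterable[str],
    upgrade: Callable[[str], None],
    is_fully_upgraded: Callable[[str], bool],
) -> List[str]:
    """Upgrade each ESA in sequence, stopping at the first incomplete one.

    Both callables are placeholders (assumptions) for whatever manual or
    scripted procedure performs and verifies the upgrade.
    """
    completed: List[str] = []
    for esa in esas:
        upgrade(esa)
        if not is_fully_upgraded(esa):
            # Do not touch the next ESA until this one is confirmed.
            raise RuntimeError(f"{esa} is not fully upgraded; stopping the sequence")
        completed.append(esa)
    return completed
```

The design choice is that a failed check raises instead of being skipped, so a half-upgraded ESA can never be followed by an upgrade of the next one.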

Validate DR Site ESAs Post Upgrade.

- Redirect GTM to LTM2. Adjust configurations to redirect the GTM so that it points to LTM2. This ensures that protectors communicate with the upgraded ESAs at the DR site.

Important: At this stage, do not add any new protectors. Perform the validations in the following steps using only the existing protectors.

Check the status of 9.1.0.0 protectors in the Policy Management Dashboard.

- Log in to ESA S3 Web UI.

- Navigate to Policy Management in ESA S3 Web UI and check that Datastores shows all the protector registrations as GREEN or OK and the Policy Deploy Status as GREEN or OK.

Validate Protector Operations.

Confirm that protectors can perform data security operations after upgrading the ESAs.

Verify that audit events are being forwarded successfully to the ESAs.

Remove all the ESAs at the Primary site from the TAC. This removal is required before upgrading them.

Upgrade ESAs at the Primary Site sequentially.

a. Follow the sequence below to upgrade all the ESAs at the primary site:

- Upgrade ESA P1.

- Upgrade ESA S1.

- Upgrade ESA S2.

b. Ensure each ESA is fully upgraded before proceeding to the next ESA.

For more information, refer to the following:

Prerequisites to understand the prerequisites.

Upgrade Paths to ESA v10.0.1 to understand the upgrade paths to ESA v10.0.1.

Upgrading from v9.2.0.1 for the steps to upgrade from ESA v9.2.0.1 to ESA v10.0.1.

Post Upgrade steps for the steps to perform after upgrading the ESA.

Validate Primary Site ESAs post upgrade.

a. Conduct thorough validation of the upgraded ESAs at the primary site to confirm operational integrity and successful upgrade.

b. Perform the following validations on all the ESAs.

Log in to ESA Web UI.

Navigate to Key Management > Key Stores in ESA Web UI and ensure that External Keystore configurations are intact.

Navigate to Settings > Users and check that External Groups settings are intact.

Navigate to Audit Store > Cluster Management and check that ESA P1, ESA S1, and ESA S2 are visible under the Nodes tab and that the Cluster Status is GREEN.

Redirect GTM to LTM1. Reconfigure the GTM to point back to LTM1, allowing protectors to resume communication with the ESAs at the primary site.

At this point, the Nodes Connectivity Status of some or all the nodes is shown as red (Error) or yellow (Warning) under Policy Management > Data Stores in ESA P1 Web UI.

Perform the following steps to reset the node status to green (OK):

Log in to ESA P1 Web UI.

Navigate to Policy Management > Data Stores.

Select the nodes showing status as red (Error) or yellow (Warning) and click the delete button to remove the entries.

Important: If many pepserver nodes are registered, delete the nodes in batches of 200.

After the registered nodes are deleted as described above, the pepserver nodes re-register with the ESA and their status becomes green (OK).
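The batching guidance above amounts to splitting the list of affected nodes into groups of at most 200 and handling one group at a time. A minimal sketch follows; the node names are made up for illustration, and the deletion itself is still performed in the ESA Web UI rather than by this code.

```python
from typing import Iterator, List, Sequence

# Batch size recommended in this section for deleting pepserver nodes.
BATCH_SIZE = 200


def batches(nodes: Sequence[str], size: int = BATCH_SIZE) -> Iterator[List[str]]:
    """Yield consecutive groups of at most `size` nodes, preserving order."""
    if size <= 0:
        raise ValueError("batch size must be positive")
    for start in range(0, len(nodes), size):
        yield list(nodes[start:start + size])


if __name__ == "__main__":
    # Hypothetical node names; a real list would be taken from the Data Stores page.
    affected = [f"pepserver-node-{i}" for i in range(450)]
    for number, group in enumerate(batches(affected), start=1):
        print(f"batch {number}: {len(group)} nodes")
```

With 450 affected nodes, the loop produces two full batches of 200 and a final batch of 50.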

Validate Protector Operations.

Confirm that protectors can perform data security operations after upgrading the ESAs.

Verify that audit events are being forwarded successfully to the ESAs.

Form a TAC including all the ESAs in both the Primary and DR sites. The TAC must include all the ESAs in the Primary site (ESA P1, ESA S1, and ESA S2) and all the ESAs in the DR site (ESA S3, ESA S4, and ESA S5).

Create Scheduler tasks in Primary site ESAs. Create all the scheduler tasks in the Primary site ESAs as described in the section Scheduler Tasks.

Migrate Audit logs from DR site ESAs to Primary site ESAs. While the traffic from protectors was redirected to the DR site ESAs as part of step 8 above, audit logs were generated on those ESAs. These audit logs must be migrated to the Primary site ESAs.

Before executing the steps, note the following:

Note the time frame, in hours or days, during which the protectors were pointed to the DR site ESAs.

Note the indexes that were created during the time frame noted in the previous step. They are listed on the Indices tab of the Audit Store > Cluster Management page.

Note the ILM exported indexes that were created under the directory /opt/protegrity/insight/archive on each of the ESAs in the DR site during the time frame noted in step 1 above.
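One way to shortlist the exported files for a given time frame is to filter the archive directory by file modification time. The sketch below is an assumption-laden illustration: it treats the modification time as a proxy for when an export was created, makes no assumption about file names, and the function name is hypothetical. Verify the resulting list against the Indices tab before copying anything.

```python
from datetime import datetime
from pathlib import Path
from typing import List

# Archive directory named in this section.
ARCHIVE_DIR = Path("/opt/protegrity/insight/archive")


def exported_files_in_window(
    archive_dir: Path, start: datetime, end: datetime
) -> List[Path]:
    """Return regular files whose modification time lies in [start, end].

    Assumption: the modification time approximates the export time.
    `start` and `end` are naive datetimes in the local time zone.
    """
    selected: List[Path] = []
    for path in sorted(archive_dir.iterdir()):
        if not path.is_file():
            continue  # skip subdirectories and other non-file entries
        modified = datetime.fromtimestamp(path.stat().st_mtime)
        if start <= modified <= end:
            selected.append(path)
    return selected
```

Calling `exported_files_in_window(ARCHIVE_DIR, start, end)` on a DR site ESA, with `start` and `end` set to the noted time frame, would return the candidate files in name order.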

To migrate Audit logs from DR site ESAs to Primary site ESAs, perform the following steps:

Log in to the web UI of ESA S3 in the DR site.

Perform ILM Export of all the indexes noted at step b above.

For more information about performing ILM Export, refer to Exporting logs.

Log in to OS console of ESA S3. Navigate to the directory /opt/protegrity/insight/archive.

Copy all the exported index files generated by the ILM Export operation at step 2 and transfer them to ESA S2 in the primary site under the directory /opt/protegrity/insight/archive.

Additionally, log in to all the ESAs containing the ILM exported index files noted at step 3 above and copy those files to ESA S2 under the directory /opt/protegrity/insight/archive.

Finally, perform ILM Import of all the index files copied from ESAs in DR site as per step 4 and step 5.

For more information related to ILM Import, refer to Importing logs.

Additional Considerations

Documentation: Maintain detailed records of the upgrade procedure for future reference.

Troubleshooting: Have contingency plans in place to address potential issues arising during the upgrade.

Support: Utilize Protegrity support services for guidance or troubleshooting assistance as needed.

By following these structured steps, the upgrade and configuration of ESAs will be executed effectively, ensuring minimal downtime, and maintaining system integrity.

2.11 - Upgrading ESA with DSGs and 9.1.0.0 Protectors

This section describes the steps to upgrade ESAs and DSGs while 9.1.0.0 protectors continue running in backward compatibility mode.

Important Notes

The steps mentioned in this section ensure zero downtime of 9.1.0.0 Protectors during the ESA upgrade.

The DSGs experience downtime during the upgrade. However, this downtime can be minimized by spawning fresh DSGs of version 3.3.0.0 in parallel with the ESA upgrade.

Important Points for ESA Upgrade

Ensure that the following important points are adhered to:

Freeze Policy and Ruleset Changes

Before upgrading the ESA, ensure all policy and ruleset changes are frozen.

No changes to the policies and rulesets should be made until the completion of the ESA upgrade.

Freeze Configurations in ESA

Prior to upgrading the ESA, freeze all configurations within the ESA. Ensure no configuration changes are made to any components in any of the ESAs until the upgrade is complete.

If DSGs are being used, then upgrade the DSGs first. Following that, proceed with upgrading the ESAs. Refer to the section Upgrading DSG for steps and recommendations related to upgrading DSGs.

This section elaborates on the upgrade and configuration process for ESAs as per the Deployment with Default Audit logging flow to ESA Architecture diagram. For more information about upgrading ESA, refer to Upgrading ESA to v10.

Important: ESA v10 supports only protectors with PEP server version 1.2.2+42 or later. Before proceeding with the ESA upgrade, check the installed protector version. If the protector version is below 1.2.2+42, the ESA upgrade fails. In that case, remove the registered protectors from the Policy Dashboard. For instructions on identifying the installed protector version, refer to Identifying the protector version.

Perform the following steps to upgrade ESAs and DSGs with running 9.1.0.0 protectors in backward compatibility mode.

1. Pre-Upgrade Steps

Back up all ESAs.

On-Premise: Perform a full OS backup of all ESAs at both sites.

Cloud Premises: Take snapshots of each instance to ensure a restore point is available should any issues arise during the upgrade process.

Refer to the section Backup the appliance OS for on-premise ESAs and to section 9.1.3 Backing Up on Cloud Platforms for ESAs on cloud platforms.

Delete TAC replication job from Primary ESA P1.

Follow the steps below to remove the TAC replication scheduled task:

- On the Primary ESA P1’s Web UI, navigate to System > Task Scheduler.

- Click the TAC replication scheduled task.

- Click Remove.

- Click the Apply button to apply the changes.

2. Upgrading the ESAs in DR Site

Remove all the ESAs at the DR site from the TAC. This removal is required before upgrading them.

Upgrade ESAs at the DR Site sequentially. Commence the upgrade by focusing on the ESAs located at the DR site. Follow the sequence below:

- Upgrade ESA S3.

- Upgrade ESA S4.

- Upgrade ESA S5.

For more information, refer to the following:

Prerequisites to understand the prerequisites.

Upgrade Paths to ESA v10.0.1 to understand the upgrade paths to ESA v10.0.1.

Upgrading from v9.2.0.1 for the steps to upgrade from ESA v9.2.0.1 to ESA v10.0.1.

Post Upgrade steps for the steps to perform after upgrading the ESA.