Protegrity AI Team Edition

- 1: Introduction to Protegrity AI Team Edition

- 2: Overview of Protegrity AI Team Edition

- 3: Infrastructure

- 3.1: Preparing for Protegrity AI Team Edition

- 3.2: Configuring Authentication for Protegrity AI Team Edition

- 3.3: Protegrity Provisioned Cluster

- 3.3.1: Installing PPC

- 3.3.1.1: Prerequisites

- 3.3.1.2: Preparing for PPC deployment

- 3.3.1.3: Deploying PPC

- 3.3.2: Accessing PPC using a Linux machine

- 3.3.3: Installing Features and Protectors

- 3.3.4: Login to PPC

- 3.3.4.1: Prerequisites

- 3.3.4.2: Log in to PPC

- 3.3.5: Accessing the PPC CLI

- 3.3.5.1: Prerequisites

- 3.3.5.2: Accessing the PPC CLI

- 3.3.6: Deleting PPC

- 3.3.7: Restoring the PPC

- 3.4: Working with Insight

- 3.4.1: Overview of the dashboards

- 3.4.2: Working with Discover

- 3.4.2.1: Understanding the Insight indexes

- 3.4.2.2: Understanding the index field values

- 3.4.2.3: Index entries

- 3.4.2.4: Log return codes

- 3.4.2.5: Protectors security log codes

- 3.4.2.6: Additional log information

- 3.4.3: Viewing the dashboards

- 3.4.4: Viewing visualizations

- 3.4.5: Index State Management (ISM)

- 3.4.6: Backing up and restoring indexes

- 3.4.7: Working with alerts

- 3.5: Protegrity REST APIs

- 3.5.1: Accessing the Protegrity REST APIs

- 3.5.2: View the Protegrity REST API Specification Document

- 3.5.3: Using the Common REST API Endpoints

- 3.5.4: Using the Authentication and Token Management REST APIs

- 3.5.5: Using the Policy Management REST APIs

- 3.5.6: Using the Encrypted Resilient Package REST APIs

- 3.5.7: Roles and Permissions

- 3.6: Protegrity Command Line Interface (CLI) Reference

- 3.6.1: Administrator Command Line Interface (CLI) Reference

- 3.6.1.1: Configuring SAML SSO

- 3.6.2: Using the Insight Command Line Interface (CLI)

- 3.6.3: Policy Management Command Line Interface (CLI) Reference

- 3.7: Troubleshooting

- 3.8: Replacing the default Certificate Authority (CA) with a Custom CA in PPC

- 4: Governance and Policy

- 4.1: Protegrity Policy Manager

- 4.1.1: Prerequisites for Installing the Policy Workbench

- 4.1.2: Installing Policy Workbench

- 4.1.3: Uninstalling the Protegrity Policy Manager

- 4.1.4: Backing up the Policy Workbench

- 4.1.5: Restoring the Policy Workbench

- 4.1.6: Workbench Roles and Permissions

- 4.1.7: Troubleshooting the Protegrity Policy Manager

- 4.2: Protegrity Agent

- 4.2.1: Prerequisites

- 4.2.2: Roles and Permissions

- 4.2.2.1: Required Roles and Permissions

- 4.2.2.2: Working with Roles

- 4.2.3: Installing Protegrity Agent

- 4.2.3.1: Installing Protegrity Agent

- 4.2.4: Configuring Protegrity Agent

- 4.2.5: Using Protegrity Agent

- 4.2.5.1: Accessing Protegrity Agent UI

- 4.2.5.2: Working with Protegrity Agent

- 4.2.5.3: Samples for using Protegrity Agent

- 4.2.6: Uninstalling Protegrity Agent

- 4.2.7: Appendix - Features and Capabilities and Limitations

- 4.2.8: Appendix - Backup and Restore

- 4.3: Sample Protection Workflows

- 4.3.1: Policy Workflow

- 4.3.1.1: Initialize Policy Management

- 4.3.1.2: Prepare Data Element

- 4.3.1.3: Create Member Source

- 4.3.1.4: Create Role

- 4.3.1.5: Assign Member Source to Role

- 4.3.1.6: Create Policy Shell

- 4.3.1.7: Define Rule with Data Element and Role

- 4.3.1.8: Create Datastore

- 4.3.1.9: Deploy Policy to a Datastore

- 4.3.1.10: Confirm Deployment

- 4.3.2: Create a policy to protect Credit Card Number (CCN)

- 4.3.2.1: Initialize Policy Management

- 4.3.2.2: Prepare Data Element

- 4.3.2.2.1: Create Mask

- 4.3.2.3: Create Member Source

- 4.3.2.3.1: Test New Member Source

- 4.3.2.4: Create Role

- 4.3.2.5: Assign Member Source to Role

- 4.3.2.5.1: Synchronize Member Source

- 4.3.2.6: Create Policy Shell

- 4.3.2.7: Define Rule with Data Element and Role

- 4.3.2.8: Create Datastore

- 4.3.2.9: Deploy Policy to a Datastore

- 4.3.2.10: Confirm Deployment

- 4.3.3: Create a policy to protect Date of Birth (DOB)

- 4.3.3.1: Initialize Policy Management

- 4.3.3.2: Prepare Data Element

- 4.3.3.3: Create Member Source

- 4.3.3.3.1: Test the Member Source

- 4.3.3.4: Create Role

- 4.3.3.5: Assign Member Source to Role

- 4.3.3.5.1: Synchronize Member Source

- 4.3.3.6: Create Policy Shell

- 4.3.3.7: Define Rule with Data Element and Role

- 4.3.3.8: Create Datastore

- 4.3.3.9: Deploy Policy to Datastore

- 4.3.3.10: Confirm Deployment

- 4.3.4: Full Script Examples

- 5: Data Discovery

- 5.1: Prerequisites

- 5.2: Installing Data Discovery

- 5.3: Configuring Data Discovery

- 5.4: Uninstalling Data Discovery

- 5.5: Troubleshooting

- 5.6: Logging usage metrics

- 6: AI Security

- 6.1: Semantic Guardrails

- 7: Data Privacy

- 7.1: Protegrity Anonymization

- 7.1.1: Prerequisites

- 7.1.2: Installing Protegrity Anonymization

- 7.1.3: Configuring Protegrity Anonymization

- 7.1.4: Protegrity Anonymization Python SDK Installation

- 7.1.5: Uninstalling and Cleanup Protegrity Anonymization

- 7.2: Protegrity Synthetic Data

- 8: Protectors

- 8.1: Cloud Protector

- 8.2: Application Protector

- 8.2.1: Application Protector

- 8.2.1.1: Application Protector Java

- 8.2.1.1.1: Installing the Application Protector Java

- 8.2.1.1.2: Uninstalling the Application Protector Java

- 8.2.1.2: Application Protector Python

- 8.2.1.2.1: Installing the Application Protector Python

- 8.2.1.2.2: Uninstalling the Application Protector Python

- 8.2.1.3: Application Protector .Net

- 8.2.1.3.1: Installing the Application Protector .Net

- 8.2.1.3.2: Uninstalling the Application Protector .Net

- 8.2.2: Application Protector Java Container

- 8.2.3: REST Container

- 8.2.3.1: Installing the REST Container

- 8.3: Repository Protector

- 9: AI Team Edition for Microsoft Azure

- 9.1: Protegrity Provisioned Cluster

- 9.1.1: Prerequisites

- 9.1.2: Preparing for PPC deployment

- 9.1.3: Deploying PPC

- 9.1.4: Login to PPC

- 9.1.5: Accessing the PPC CLI

- 9.1.6: Deleting PPC

- 9.1.7: Installing Features

- 9.1.8: Troubleshooting

- 9.2: Protectors

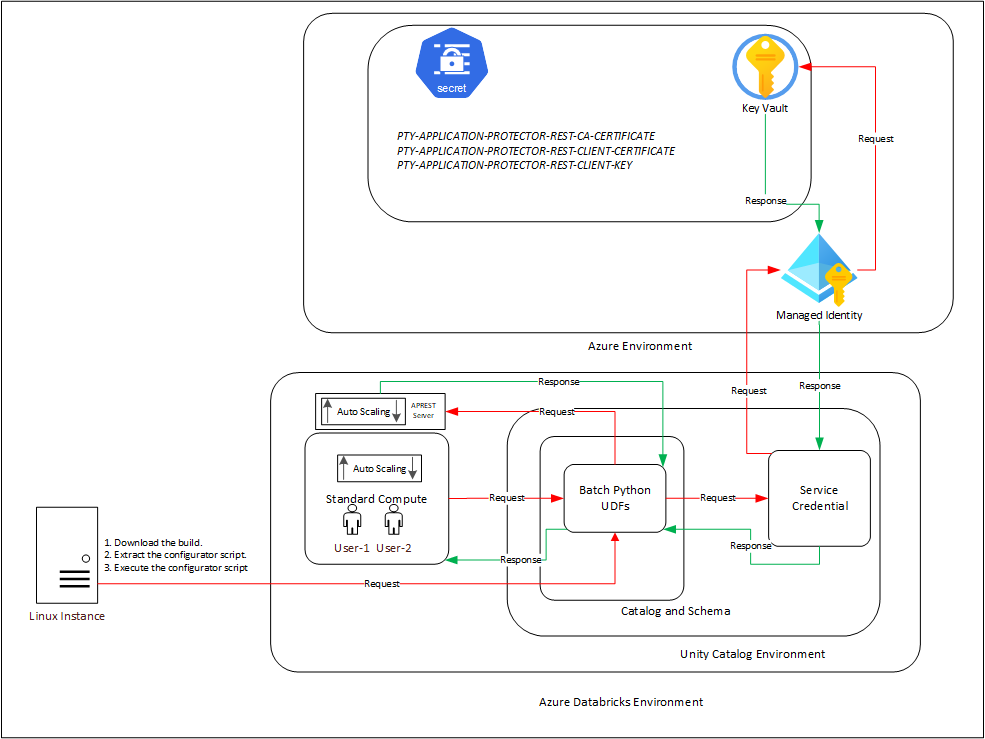

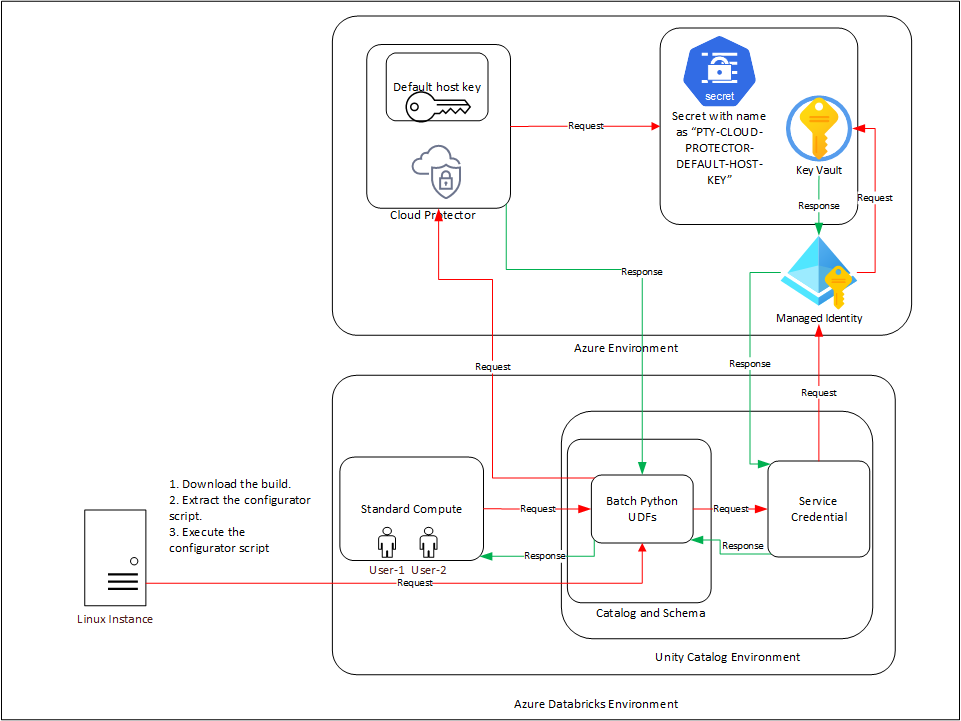

- 9.2.1: Azure Databricks

- 9.2.1.1: Understanding the architecture

- 9.2.1.1.1: For the Application Protector REST Approach

- 9.2.1.1.2: For the Cloud Protector Approach

- 9.2.1.2: System Requirements

- 9.2.1.2.1: For the Application Protector REST Approach

- 9.2.1.2.2: For the Cloud Protector Approach

- 9.2.1.3: Preparing the Environment

- 9.2.1.3.1: Extracting the Installation Package

- 9.2.1.3.2: Working with the Configurator Script

- 9.2.1.3.3: Retrieving the IP Address

- 9.2.1.3.4: Uploading the Secrets to the Azure Key Vault

- 9.2.1.4: Installing the Protector

- 9.2.1.4.1: Creating the User Defined Functions

- 9.2.1.5: Configuring the Protector

- 9.2.1.5.1: Editing the Cluster Configuration

- 9.2.1.6: Uninstalling the Protector

- 9.2.1.6.1: Dropping the User Defined Functions

- 9.2.1.7: User Defined Functions and APIs

- 9.2.1.7.1: Unity Catalog Batch Python UDFs

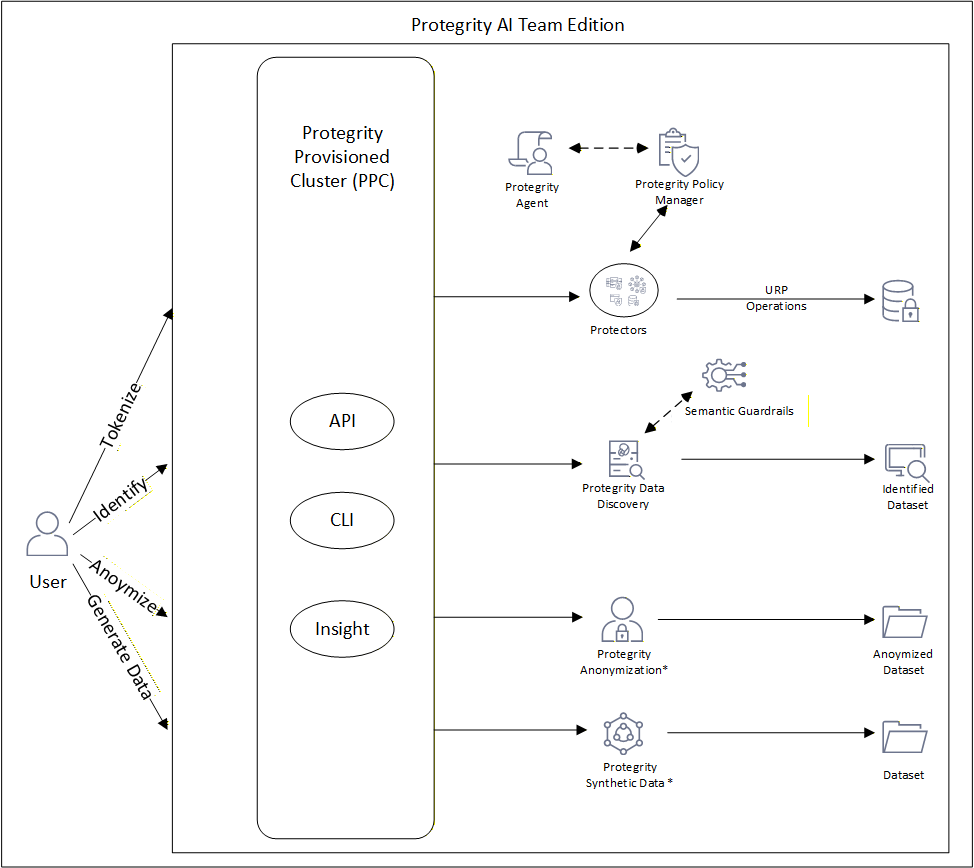

1 - Introduction to Protegrity AI Team Edition

Protegrity AI Team Edition is a container-based data protection solution designed for teams and mid-enterprise organizations that need to safeguard sensitive data across AI, GenAI, and analytics workloads.

It delivers core Protegrity capabilities, including governance, discovery, protection, and privacy, in a lightweight, containerized form factor that emphasizes fast deployment, simplified operations, and consistent enforcement of data security policies across environments.

Built on a modular, microservices architecture, it moves away from the legacy appliance model to align with modern DevOps practices. The result is a deployment that scales easily, integrates natively with existing CI/CD pipelines, and supports governing agents and securing departmental data.

Purpose and Audience

Protegrity AI Team Edition is intended for:

- Organizations seeking to protect data used in AI or analytics pipelines.

- Teams that require fast deployment cycles and simplified upgrades.

- Customers who need enterprise-grade data protection in a form that can start small, operate independently, and later scale into Protegrity AI Enterprise Edition.

2 - Overview of Protegrity AI Team Edition

Protegrity AI Team Edition introduces a modern, container-based approach to data protection built on a microservices architecture. It enables organizations to evaluate how Protegrity’s methods, such as policy management, anonymization, discovery, and semantic controls, integrate into AI and analytics pipelines.

2.1 - Architecture and Design Principles

Protegrity AI Team Edition delivers core Protegrity capabilities, including governance, discovery, protection, privacy, and semantic controls, in a lightweight, containerized form factor that emphasizes fast deployment, simplified operations, and consistent enforcement of data security policies across environments. It is designed around five engineering goals: ease of deployment, high availability, scalability, extensibility, and maintainability.

| Goal | Implementation Details |
|---|---|
| Ease of Deployment | OpenTofu templates provision a Kubernetes environment (EKS) with minimal manual intervention. Helm charts deploy and configure all components for consistent, reproducible setups. Because each component runs as a container image, upgrades and patches follow standard CI/CD workflows. |
| High Availability | Kubernetes manages service health and redundancy automatically. No Trusted Appliance Cluster (TAC) is required. No external load balancers are required. No manual replication is required. |
| Scalability | The system scales horizontally and vertically through Kubernetes-native scale-up and scale-down mechanisms. Administrators can adjust resources dynamically as workloads grow or shrink without redeployment. |
| Extensibility | New capabilities are introduced by adding new container images and Helm configurations. This allows incremental feature expansion without redesign. |
| Maintainability | Kubernetes simplifies lifecycle management. Updating a container image replaces an older version automatically, avoiding downtime and manual patching. |

2.2 - Protegrity Common Services

All deployments include a standardized set of common services delivered by a microservices architecture. These services provide routing, security, and audit capabilities for all features and protectors.

| Service | Description |
|---|---|
| Authentication and Authorization | Provides user and service credential validation with role-based access enforcement. |
| Backup and Restore | Creates periodic backups of the cluster and indexes for restoration during disaster recovery. |
| Certificate Management | Manages and validates TLS certificates for inbound and inter-service communication. |
| Common Ingress Controller | The main entry point for all API and service traffic to the cluster. |
| Insight | Provides logging and auditing capabilities using OpenSearch for event storage and Insight Dashboard for visualization and reporting. |

2.3 - Compatible Features

The features compatible with Protegrity AI Team Edition are listed here.

* - Available for purchase as an add-on. Can be installed as an individual product.

| Feature | Description |
|---|---|
| Anonymization | Apply statistical privacy models such as k-anonymity, l-diversity, and t-closeness to sensitive datasets. |
| Data Discovery | Automatically identify structured and unstructured sensitive data through pattern matching and machine learning classification. |
| Policy Manager | Define and manage data protection policies that govern tokenization, masking, and anonymization. |
| Protegrity Agent | Intelligent assistant for automated policy creation, data classification recommendations, and guided configuration of protection workflows. |
| Semantic Guardrails | Apply contextual and runtime safeguards to AI and analytics workflows to prevent data leakage or misuse. |
| Synthetic Data | Generate tabular synthetic datasets for development, testing, and AI model validation without exposing real sensitive data. |

Protegrity Protectors

Protegrity AI Team Edition protectors enable organizations to embed data protection directly where data is processed: inside applications, analytics engines, or cloud-native data systems. The protectors obtain the policy they enforce from the Workbench. The Protegrity Agent is available for creating and working with policies in the Workbench.

Application Protectors

Application protectors provide data protection directly within applications or runtime containers. They are suitable for teams developing secure APIs or microservices that handle sensitive data in languages such as Java, Python, or .NET.

| Name | Description | Part Number |
|---|---|---|
| Application Protector – Java Container | Protects data within Java-based containers running on platforms such as OpenShift and EKS. | ApplicationProtector_RHUBI-9-64_x86-64_Generic.K8S.JRE-1.8_10.1 |
| Application Protector – REST Container | Provides REST-based protection services for containerized workloads. | REST_RHUBI-9-64_x86-64_K8S_10.1 |
| Application Protector – Python (Linux) | Protegrity Application Protector for Python environments. | ApplicationProtector_Linux-ALL-64_x86-64_PY-3.11_10.0 |
| Application Protector – Java (Linux) | Standard Java runtime protector. | ApplicationProtector_Linux-ALL-64_x86-64_JRE-1.8-64_10.0 |
| Application Protector – .NET | Protegrity Application Protector for Microsoft .NET applications. | ApplicationProtector_WIN-ALL-64_x86-64_NET-STD-2.0-64_10.0 |

Repository Protectors

Repository protectors allow you to apply data protection directly within persistent data stores, enabling sensitive data to remain protected at rest while still being used for analytics and AI workloads.

These protectors consist of Big Data Protectors for Amazon EMR, Databricks, and CDP Data Hub, and Cloud Native Data Warehouse protectors for analytics environments such as Snowflake, Redshift, and Athena.

| Name | Description | Part Number |
|---|---|---|
| Big Data Protector – Amazon EMR | Provides data protection within Amazon EMR clusters. | BigDataProtector_Linux-ALL-64_x86-64_EMR-7.9-64_10.0 |
| Big Data Protector – Databricks | Enables tokenization and masking for Databricks on AWS. | BigDataProtector_Linux-ALL-64_x86-64_AWS.Databricks-17.3-64_10.0.1 |
| Big Data Protector – CDP Data Hub | Supports Cloudera Data Platform (CDP) deployments on AWS. | BigDataProtector_Linux-ALL-64_x86-64_AWS.Generic.CDP-Datahub-7.3-64_10.0 |
| Cloud Native Data Warehouse Protector – Snowflake | Integrates with Snowflake for secure, compliant analytics on AWS. | CP_SVRL-ALL-64_x86-64_AWS.Snowflake_4.0 |
| Cloud Native Data Warehouse Protector – Redshift | Provides protection for Amazon Redshift queries and transformations. | CP_SVRL-ALL-64_x86-64_AWS.Redshift_4.0 |
| Cloud Native Data Warehouse Protector – Athena | Applies protection to Amazon Athena query execution. | CP_SVRL-ALL-64_x86-64_AWS.Athena_4.0 |
| Cloud Storage Protector – Amazon S3 | Applies protection for Amazon S3. | CSP-S3_SVRL-ALL-64_x86-64_AWS.S3_2.0 |

Cloud API

The Cloud API protector extends Protegrity protection to AWS serverless and API-based workloads. It is typically used for securing transient data handled by AWS Lambda or similar function-based architectures.

| Name | Description | Part Number |
|---|---|---|
| CloudProtect – Cloud API – AWS | Protegrity CloudProtect using AWS Serverless Functions. | CP_SVRL-ALL-64_x86-64_AWS.API_4.0 |

3 - Infrastructure

3.1 - Preparing for Protegrity AI Team Edition

Ensure that the following prerequisites are met. If a feature is not required, skip the requirements in that section.

Infrastructure

Prerequisites for Protegrity Provisioned Cluster (PPC)

For more information, refer to Prerequisites.

Governance and Policy

Prerequisites for Protegrity Policy Manager

For more information, refer to Prerequisites.

Prerequisites for Protegrity Agent

For more information, refer to Prerequisites.

Prerequisites for Data Discovery

For more information, refer to Prerequisites.

AI Security

Prerequisites for Semantic Guardrails

For more information, refer to Prerequisites.

Data Privacy

Prerequisites for Protegrity Anonymization

For more information, refer to Prerequisites.

Prerequisites for Protegrity Synthetic Data

For more information, refer to Prerequisites.

3.2 - Configuring Authentication for Protegrity AI Team Edition

Log in to My.Protegrity and obtain the necessary credentials and certificates. This portal hosts all products and features included in your Protegrity contract.

Deploy Using PCR

Use the steps provided here for deploying PPC and the features directly from the PCR.

Log in to the My.Protegrity portal.

Navigate to Product Management > Explore Products > AI Team Edition.

Create an access token to obtain the Username and Secret. Store these credentials carefully; they are required for connecting to https://registry.protegrity.com:9443 and performing registry operations.

Click Access Tokens.

Click Create Access Token.

Click Export To File to save the credentials.

Click I Understand That I Cannot See This Again.

Deploy to Own Registry

Use the steps provided here for pulling the artifacts from PCR and deploying PPC and the features to the organization-hosted registry using standard authentication.

Prerequisites:

For ECR: Ensure that the required AWS credentials are available and set.

Ensure that the jumpbox has connectivity to the Protegrity Container Registry (PCR) and your container registry.

Ensure that the user logged in to the jumpbox is the root user or has sudoer access.

Ensure that the following tools are installed:

- docker or podman: Must be installed and running. If podman is used, identify the podman directory and create a symbolic link to docker using the following commands:

which podman

ln -s /bin/podman /bin/docker

- helm: Kubernetes package manager used to pull and manage Helm charts required for deploying Protegrity AI Team Edition components from an OCI‑compliant registry. Helm v3+ must be installed.

- curl: Command‑line HTTP client used by the pull scripts to interact with OCI Distribution APIs, including making authenticated requests to the Protegrity Container Registry.

- jq: Lightweight JSON processor used to parse and extract information from the artifacts.json file that defines the set of artifacts to be pulled and pushed.

- oras: OCI Registry As Storage (ORAS) client used to pull non‑container, generic OCI artifacts from the registry that are not handled by standard container tooling.

Run the following command to confirm readiness before proceeding:

docker --version && helm version && oras version && jq --version && curl --version
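
The chained command above stops at the first failure, so it does not show which other tools are also missing. As a minimal sketch, assuming a bash-compatible shell on the jumpbox, the helper below lists every tool that is not on the PATH. The name missing_tools is hypothetical and is not part of the Protegrity scripts.

```shell
#!/usr/bin/env bash
# Hypothetical helper, not part of the Protegrity scripts.
# Prints the name of every listed tool that is not on the PATH.
# Returns 0 when all tools are found and 1 otherwise.
missing_tools() {
  local tool
  local missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      printf '%s\n' "$tool"
      missing=1
    fi
  done
  return "$missing"
}

# Example, using the tool names from the prerequisites:
#   missing_tools docker helm oras jq curl
```

When every tool is installed, the call prints nothing and returns 0.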

Steps to configure the certificates:

Log in to the My.Protegrity portal.

Navigate to Product Management > Explore Products > AI Team Edition.

Create an access token to obtain the Username and Secret.

Note: Store these credentials carefully; they are required for performing registry operations.

Click Access Tokens.

Click Create Access Token.

Click Export To File to save the credentials.

Click I Understand That I Cannot See This Again.

Obtain the artifacts for setting up the AI Team Edition.

From the Product Management > Explore Products > AI Team Edition page of the My.Protegrity portal, click Download Pull Script. A compressed file is downloaded.

Copy the compressed file to an empty directory on the jumpbox.

Extract the compressed file.

The following files are available:

- artifacts.json: The list of artifacts that are obtained.

- pull_all_artifacts.sh: The script to pull the artifacts from the PCR.

- tag_push_artifacts.sh: The script to tag and push the artifacts to your container registry.

Navigate to the extracted directory. Do not update the contents of the artifacts.json file.

Run the pull script to pull the artifacts to your jumpbox using the following command:

./pull_all_artifacts.sh --url https://registry.protegrity.com:9443 --user <username_from_portal> --password <access_key_from_portal> --json artifacts.json

Ensure that single quotes are used to specify the username and password in the command.

Run the following command to tag and push the artifacts to your container registry.

Sample command for ECR:

./tag_push_artifacts.sh --ecr-uri 123456789012.dkr.ecr.us-east-1.amazonaws.com --region us-east-1 --json artifacts.json

Sample command for Harbor:

./tag_push_artifacts.sh --url https://harbor.example.com --user <your_harbor_username> --password <your_harbor_password> --json artifacts.json

Ensure that single quotes are used to specify the username and password in the command.

Validate that all the artifacts are successfully pushed to your registry.
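
The single-quote requirement matters because generated secrets can contain characters that the shell interprets, such as $ or &. The following minimal sketch shows the difference. It uses a made-up value instead of a real access key, and show_arg is a hypothetical helper.

```shell
# Hypothetical helper that prints its first argument exactly as received.
show_arg() {
  printf '%s\n' "$1"
}

# Single quotes: every character reaches the command unchanged.
show_arg 'p@$$w0rd&x'

# Double quotes: the shell replaces $$ with its own process ID first,
# so the command receives a different string.
show_arg "p@$$w0rd&x"
```

The same applies to the --user and --password values passed to the pull and push scripts.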

Deploy to Own Registry Using mTLS

This section explains how to set up mTLS authentication when using your own container registry. Perform these steps to establish secure, certificate‑based trust and prevent unauthorized access during image pulls and service communication.

Prerequisites:

For ECR: Ensure that the required AWS credentials are available and set.

Ensure that the jumpbox has connectivity to the Protegrity Container Registry (PCR) and your container registry.

Ensure that the user logged in to the jumpbox is the root user or has sudoer access.

Ensure that the following tools are installed:

- docker or podman: Must be installed and running. If podman is used, identify the podman directory and create a symbolic link to docker using the following commands:

which podman

ln -s /bin/podman /bin/docker

- helm: Kubernetes package manager used to pull and manage Helm charts required for deploying Protegrity AI Team Edition components from an OCI‑compliant registry. Helm v3+ must be installed.

- curl: Command‑line HTTP client used by the pull scripts to interact with OCI Distribution APIs, including making authenticated requests to the Protegrity Container Registry.

- jq: Lightweight JSON processor used to parse and extract information from the artifacts.json file that defines the set of artifacts to be pulled and pushed.

- oras: OCI Registry As Storage (ORAS) client used to pull non‑container, generic OCI artifacts from the registry that are not handled by standard container tooling.

Run the following command to confirm readiness before proceeding:

docker --version && helm version && oras version && jq --version && curl --version

Steps to configure the certificates:

Log in to the My.Protegrity portal.

Navigate to Product Management > Explore Products > AI Team Edition.

Create an access token to obtain the Username and Secret.

Note: Store these credentials carefully; they are required for performing registry operations.

Click Access Tokens.

Click Create Access Token.

Click Export To File to save the credentials.

Click I Understand That I Cannot See This Again.

Generate a CSR file for registering the jumpbox with the Protegrity Container Registry.

Open a terminal or command prompt.

Generate a private key.

openssl genrsa -out private.key 2048

Create the CSR using the private key.

openssl req -new -key private.key -out request.csr

Specify the following details for the certificate:

- Country (C): Two-letter code (for example, US)

- State/Province (ST)

- City/Locality (L)

- Organization (O): Legal company name

- Organizational Unit (OU): Department (optional)

- Common Name (CN): Domain (for example, www.example.com)

- Email Address: Email address

View the CSR file.

cat request.csr
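
The CSR can also be created without the interactive prompts by passing the subject on the command line. The sketch below assumes OpenSSL is installed. make_csr is a hypothetical helper, and the subject values are placeholders to replace with your organization's details. It writes private.key and request.csr to the given directory, then checks the CSR's self-signature.

```shell
# Hypothetical helper: create private.key and request.csr in directory $1
# using the subject string $2, then verify the CSR's self-signature.
make_csr() {
  openssl genrsa -out "$1/private.key" 2048 &&
  openssl req -new -key "$1/private.key" -out "$1/request.csr" -subj "$2" &&
  openssl req -in "$1/request.csr" -noout -verify
}

# Example subject with placeholder values (not real organization data):
#   make_csr . "/C=US/ST=Example State/L=Example City/O=Example Inc/OU=IT/CN=www.example.com"
```

The fields in the subject string correspond to the details listed above. The optional email address is omitted here.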

Create the client certificate to connect to the registry. This step is required only when your security policy mandates mutual TLS (mTLS) for two-way certificate verification between your environment and the Protegrity Container Registry.

Click Client Certificates.

Click Create Client Certificate.

Click Browse to upload your CSR. Refer to the previous step if you do not have a CSR.

Click Create Client Certificate to generate the client certificate.

From the Client Certificate tab, click Download Client Certificate from the Actions column to download a compressed file with the certificates.

Copy or upload the certificates to the jumpbox.

Warning: Ensure that the filenames and extensions match those provided in the following steps.

Log in to the jumpbox as the root user.

Navigate to the /etc/docker/ directory. For podman, navigate to /etc/containers/.

Create the certs.d directory.

Open the certs.d directory.

Create the registry.protegrity.com directory.

Copy the compressed file with the certificate to the /etc/docker/certs.d/registry.protegrity.com directory. For podman, copy it to /etc/containers/certs.d/registry.protegrity.com.

Extract the compressed file.

The extracted file contains the following certificates:

- protegrityteameditioncontainerregistry_protegrity-usa-inc.crt

- TrustedRoot.crt

- DigiCertCA.crt

Navigate to the extracted directory.

Concatenate the contents of TrustedRoot.crt and DigiCertCA.crt to a new file called ca.crt.

```
cat TrustedRoot.crt DigiCertCA.crt > ca.crt
```

Rename the client certificate file.

```
mv protegrityteameditioncontainerregistry_protegrity-usa-inc.crt client.cert
```

Copy the client and CA certificates to /etc/docker/certs.d/registry.protegrity.com. For podman, copy the certificates to /etc/containers/certs.d/registry.protegrity.com.

Copy the private key that was generated for the CSR (private.key in the earlier step) to the /etc/docker/certs.d/registry.protegrity.com directory as client.key. If the /certs.d/registry.protegrity.com directory does not exist, create the directories. For podman, use the /etc/containers/certs.d/registry.protegrity.com directory.

Copy the Docker registry’s CA certificate to the system’s trusted CA store to establish SSL/TLS trust for that registry. A sample command for RHEL 10.1 is provided here:

For docker:

```
sudo cp /etc/docker/certs.d/registry.protegrity.com/ca.crt /etc/pki/ca-trust/source/anchors/
```

For podman:

```
sudo cp /etc/containers/certs.d/registry.protegrity.com/ca.crt /etc/pki/ca-trust/source/anchors/
```

Rebuild the system’s trusted CA bundle. A sample command for RHEL 10.1 is provided here:

```
update-ca-trust
```

Restart the container service.

For docker:

```
service docker restart
```

For podman:

```
service podman restart
```
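
Before pulling artifacts, it can help to confirm that the renamed client certificate and the private key belong together, because a mismatched pair fails the mTLS handshake. The following minimal sketch assumes OpenSSL is installed and compares the public key embedded in each file. cert_matches_key is a hypothetical helper and is not part of the Protegrity scripts.

```shell
# Hypothetical helper: succeeds when certificate $1 and private key $2
# contain the same public key.
cert_matches_key() {
  local cert_pub key_pub
  cert_pub="$(openssl x509 -in "$1" -noout -pubkey)" || return 1
  key_pub="$(openssl pkey -in "$2" -pubout)" || return 1
  [ "$cert_pub" = "$key_pub" ]
}

# Example, using the docker paths from the steps above:
#   cert_matches_key /etc/docker/certs.d/registry.protegrity.com/client.cert \
#                    /etc/docker/certs.d/registry.protegrity.com/client.key
```

A non-zero return status indicates that the key in the directory is not the one used to create the CSR.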

Obtain the artifacts for setting up the AI Team Edition.

From the Product Management > Explore Products > AI Team Edition page of the My.Protegrity portal, click Download Pull Script. A compressed file is downloaded.

Copy the compressed file to an empty directory on the jumpbox.

Extract the compressed file.

The following files are available:

- artifacts.json: The list of artifacts that are obtained.

- pull_all_artifacts.sh: The script to pull the artifacts from the PCR.

- tag_push_artifacts.sh: The script to tag and push the artifacts to your container registry.

Navigate to the extracted directory. Do not update the contents of the artifacts.json file.

Run the pull script to pull the artifacts to your jumpbox using the following command:

For docker:

./pull_all_artifacts.sh --url https://registry.protegrity.com --user <username_from_portal> --password <access_key_from_portal> --json artifacts.json --cert-file /etc/docker/certs.d/registry.protegrity.com/client.cert --key-file /etc/docker/certs.d/registry.protegrity.com/client.key

For podman:

./pull_all_artifacts.sh --url https://registry.protegrity.com --user <username_from_portal> --password <access_key_from_portal> --json artifacts.json --cert-file /etc/containers/certs.d/registry.protegrity.com/client.cert --key-file /etc/containers/certs.d/registry.protegrity.com/client.key

mTLS uses a client certificate and port 443 to connect to the Protegrity Container Registry. Ensure that the certificate files are named ca.crt, client.cert, and client.key. Ensure that single quotes are used to specify the username and password in the command.

Run the following command to tag and push the artifacts to your container registry.

Sample command for ECR:

./tag_push_artifacts.sh --ecr-uri 123456789012.dkr.ecr.us-east-1.amazonaws.com --region us-east-1 --json artifacts.json

Sample command for Harbor:

./tag_push_artifacts.sh --url https://harbor.example.com --user <your_harbor_username> --password <your_harbor_password> --json artifacts.json

Ensure that single quotes are used to specify the username and password in the command.

Validate that all the artifacts are successfully pushed to your registry.

3.3 - Protegrity Provisioned Cluster

Beyond infrastructure, Protegrity Provisioned Cluster (PPC) introduces a suite of Protegrity Common Services (PCS) that act as the backbone for Protegrity AI Team Edition features. These include ingress control for secure traffic routing, certificate management for request validation, and authentication and authorization services. PPC also integrates Insight for audit logging and analytics, using OpenSearch and OpenSearch Dashboards for visualization and compliance reporting. Along with this foundation, AI Team Edition delivers capabilities such as policy management, anonymization, data discovery, semantic guardrails, and synthetic data generation, all orchestrated within the PPC cluster. This modular approach supports scalability, security, and flexibility for organizations adopting cloud-first and containerized environments.

3.3.1 - Installing PPC

The Protegrity Provisioned Cluster (PPC) is the core framework of AI Team Edition. It is designed to deliver a modern, cloud-native experience for data security and governance. Built on Kubernetes, PPC uses a containerized architecture that simplifies deployment and scaling. Using OpenTofu scripts and Helm charts, administrators can stand up clusters with minimal manual intervention, ensuring consistency and reducing operational overhead.

Perform the following steps to set up and deploy the PPC:

3.3.1.1 - Prerequisites

Updating the Roles and Permissions using JSON

Roles and permissions are updated using JSON policy documents.

From the AWS Console, navigate to IAM > Policies > Create policy > JSON, and create the following policies.

Note: Before using the provided JSON, replace the AWS_ACCOUNT_ID and REGION values with those of the account and region where the resources are being deployed.
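
Replacing the placeholders by hand in every policy is error-prone. As a minimal sketch, assuming each policy below is saved to a file with the <AWS_ACCOUNT_ID> and <REGION> placeholders intact, the hypothetical render_policy helper substitutes both values and prints the result. The file names and the account ID in the example are illustrative only.

```shell
# Hypothetical helper: print policy file $1 with <AWS_ACCOUNT_ID> replaced
# by $2 and <REGION> replaced by $3. The input file is left unchanged.
render_policy() {
  sed -e "s/<AWS_ACCOUNT_ID>/$2/g" -e "s/<REGION>/$3/g" "$1"
}

# Example with placeholder values (file names are illustrative):
#   render_policy eks-policy.json 123456789012 us-east-1 > eks-policy.rendered.json
```

The rendered output can then be pasted into the JSON editor in the AWS Console.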

- Creating KMS key and S3 bucket

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "ReadOnlyAccess",

"Effect": "Allow",

"Action": [

"eks:DescribeClusterVersions",

"ec2:DescribeInstances",

"ec2:DescribeVolumes",

"s3:ListAllMyBuckets",

"iam:ListUsers",

"ec2:RunInstances",

"ec2:DescribeInstances",

"ec2:DescribeVolumes",

"ec2:CreateKeyPair",

"ec2:DescribeImages"

],

"Resource": "*"

},

{

"Sid": "ScopedS3AndKMS",

"Effect": "Allow",

"Action": [

"s3:ListBucket",

"s3:PutEncryptionConfiguration",

"s3:GetEncryptionConfiguration",

"kms:CreateKey",

"kms:PutKeyPolicy",

"kms:GetKeyPolicy"

],

"Resource": [

"arn:aws:s3:::*",

"arn:aws:kms:*:<AWS_ACCOUNT_ID>:key/*"

]

},

{

"Sid": "SelfServiceIAM",

"Effect": "Allow",

"Action": [

"iam:ListSSHPublicKeys",

"iam:ListServiceSpecificCredentials",

"iam:GetLoginProfile",

"iam:ListAccessKeys",

"iam:CreateAccessKey"

],

"Resource": "arn:aws:iam::<AWS_ACCOUNT_ID>:user/${aws:username}"

},

{

"Sid": "EC2KeyPairPermission",

"Effect": "Allow",

"Action": [

"ec2:CreateKeyPair",

"ec2:DescribeKeyPairs"

],

"Resource": [

"*"

]

}

]

}

- EC2 Service Policy

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "DenyEC2Instances",

"Effect": "Deny",

"Action": "ec2:RunInstances",

"Resource": "arn:aws:ec2:*:*:instance/*",

"Condition": {

"StringLike": {

"ec2:InstanceType": [

"p*",

"g*",

"inf*",

"trn*",

"x*",

"u-*",

"z*",

"mac*"

]

}

}

},

{

"Sid": "ReadOnlyDescribeListEC2RegionRestricted",

"Effect": "Allow",

"Action": [

"ec2:DescribeVpcs",

"ec2:DescribeSubnets",

"ec2:DescribeVpcAttribute",

"ec2:DescribeTags",

"ec2:DescribeSecurityGroups",

"ec2:DescribeSecurityGroupRules",

"ec2:DescribeLaunchTemplates",

"ec2:DescribeLaunchTemplateVersions",

"ec2:DescribeNetworkInterfaces",

"ec2:DescribeAccountAttributes"

],

"Resource": "*",

"Condition": {

"StringEquals": {

"aws:RequestedRegion": [

"<REGION>"

]

}

}

},

{

"Sid": "EC2LifecycleAndSecurity",

"Effect": "Allow",

"Action": [

"ec2:CreateSecurityGroup",

"ec2:DeleteSecurityGroup",

"ec2:AuthorizeSecurityGroupIngress",

"ec2:AuthorizeSecurityGroupEgress",

"ec2:RevokeSecurityGroupIngress",

"ec2:RevokeSecurityGroupEgress",

"ec2:CreateLaunchTemplate",

"ec2:DeleteLaunchTemplate",

"ec2:CreateTags",

"ec2:DeleteTags"

],

"Resource": [

"arn:aws:ec2:*:*:security-group/*",

"arn:aws:ec2:*:*:launch-template/*",

"arn:aws:ec2:*:*:instance/*",

"arn:aws:ec2:*:*:network-interface/*",

"arn:aws:ec2:*:*:subnet/*",

"arn:aws:ec2:*:*:vpc/*",

"arn:aws:ec2:*:*:image/*",

"arn:aws:ec2:*:*:volume/*",

"arn:aws:ec2:*:*:snapshot/*"

]

}

]

}

- EKS Service Policy

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "ReadOnlyDescribeListEKSVersionsRegionRestricted",

"Effect": "Allow",

"Action": [

"eks:DescribeAddonVersions"

],

"Resource": "*",

"Condition": {

"StringEquals": {

"aws:RequestedRegion": [

"<REGION>"

]

}

}

},

{

"Sid": "ReadOnlyDescribeListEKS",

"Effect": "Allow",

"Action": [

"eks:DescribeCluster",

"eks:DescribeAddon",

"eks:DescribePodIdentityAssociation",

"eks:DescribeNodegroup",

"eks:ListAddons",

"eks:ListPodIdentityAssociations"

],

"Resource": [

"arn:aws:eks:*:<AWS_ACCOUNT_ID>:cluster/*",

"arn:aws:eks:*:<AWS_ACCOUNT_ID>:nodegroup/*",

"arn:aws:eks:*:<AWS_ACCOUNT_ID>:addon/*",

"arn:aws:eks:*:<AWS_ACCOUNT_ID>:podidentityassociation/*"

]

},

{

"Sid": "EKSLifecycleAndTag",

"Effect": "Allow",

"Action": [

"eks:CreateCluster",

"eks:UpdateClusterVersion",

"eks:UpdateClusterConfig",

"eks:CreateNodegroup",

"eks:UpdateNodegroupConfig",

"eks:UpdateNodegroupVersion",

"eks:DeleteNodegroup",

"eks:CreateAddon",

"eks:UpdateAddon",

"eks:DeleteAddon",

"eks:CreatePodIdentityAssociation",

"eks:DeletePodIdentityAssociation",

"eks:TagResource",

"eks:ListClusters"

],

"Resource": [

"arn:aws:eks:*:<AWS_ACCOUNT_ID>:cluster/*",

"arn:aws:eks:*:<AWS_ACCOUNT_ID>:nodegroup/*",

"arn:aws:eks:*:<AWS_ACCOUNT_ID>:addon/*",

"arn:aws:eks:*:<AWS_ACCOUNT_ID>:podidentityassociation/*"

]

},

{

"Sid": "AllowEKSNodegroupSLR",

"Effect": "Allow",

"Action": [

"iam:GetRole",

"iam:CreateServiceLinkedRole"

],

"Resource": "arn:aws:iam::<AWS_ACCOUNT_ID>:role/aws-service-role/eks-nodegroup.amazonaws.com/AWSServiceRoleForAmazonEKSNodegroup"

},

{

"Sid": "EKSDeleteClusterV6",

"Effect": "Allow",

"Action": "eks:DeleteCluster",

"Resource": "arn:aws:eks:*:<AWS_ACCOUNT_ID>:cluster/*"

}

]

}

- IAM Service Policy

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "DenyAdminPolicyAttachment",

"Effect": "Deny",

"Action": [

"iam:AttachRolePolicy",

"iam:PutRolePolicy"

],

"Resource": "arn:aws:iam::<AWS_ACCOUNT_ID>:role/eks-*",

"Condition": {

"ArnLike": {

"iam:PolicyARN": [

"arn:aws:iam::aws:policy/AdministratorAccess",

"arn:aws:iam::aws:policy/PowerUserAccess",

"arn:aws:iam::aws:policy/*FullAccess"

]

}

}

},

{

"Sid": "DenyInlinePolicyEscalation",

"Effect": "Deny",

"Action": [

"iam:PutRolePolicy",

"iam:PutUserPolicy",

"iam:PutGroupPolicy"

],

"Resource": "*"

},

{

"Sid": "ReadOnlyDescribeListIAMScoped",

"Effect": "Allow",

"Action": [

"iam:GetRole",

"iam:ListRolePolicies",

"iam:ListAttachedRolePolicies",

"iam:ListInstanceProfilesForRole",

"iam:GetInstanceProfile",

"iam:GetPolicy",

"iam:GetPolicyVersion",

"iam:ListPolicyVersions",

"iam:ListAccessKeys"

],

"Resource": [

"arn:aws:iam::<AWS_ACCOUNT_ID>:role/eks-*",

"arn:aws:iam::<AWS_ACCOUNT_ID>:instance-profile/eks-*",

"arn:aws:iam::<AWS_ACCOUNT_ID>:policy/eks-*"

]

},

{

"Sid": "ReadOnlyDescribeListUnavoidableStar",

"Effect": "Allow",

"Action": "iam:ListRoles",

"Resource": "*"

},

{

"Sid": "IAMLifecycleRolesPoliciesInstanceProfiles",

"Effect": "Allow",

"Action": [

"iam:CreateRole",

"iam:TagRole",

"iam:CreatePolicy",

"iam:DeletePolicy",

"iam:DeletePolicyVersion",

"iam:TagPolicy",

"iam:AttachRolePolicy",

"iam:DetachRolePolicy",

"iam:CreateInstanceProfile",

"iam:TagInstanceProfile",

"iam:AddRoleToInstanceProfile",

"iam:RemoveRoleFromInstanceProfile",

"iam:DeleteInstanceProfile"

],

"Resource": [

"arn:aws:iam::<AWS_ACCOUNT_ID>:role/eks-*",

"arn:aws:iam::<AWS_ACCOUNT_ID>:policy/eks-*",

"arn:aws:iam::<AWS_ACCOUNT_ID>:instance-profile/eks-*"

]

},

{

"Sid": "EKSDeleteRoles",

"Effect": "Allow",

"Action": "iam:DeleteRole",

"Resource": "arn:aws:iam::<AWS_ACCOUNT_ID>:role/eks*"

},

{

"Sid": "PassRoleOnlyToEKS",

"Effect": "Allow",

"Action": "iam:PassRole",

"Resource": "arn:aws:iam::<AWS_ACCOUNT_ID>:role/eks-*",

"Condition": {

"StringEquals": {

"iam:PassedToService": [

"eks.amazonaws.com",

"ec2.amazonaws.com",

"eks-pods.amazonaws.com",

"pods.eks.amazonaws.com"

]

}

}

},

{

"Sid": "PassRoleForEKSPodIdentityRoles",

"Effect": "Allow",

"Action": "iam:PassRole",

"Resource": [

"arn:aws:iam::<AWS_ACCOUNT_ID>:role/eks-*-karpenter-role",

"arn:aws:iam::<AWS_ACCOUNT_ID>:role/eks-*-backup-recovery-utility-role"

]

}

]

}

- KMS Service Policy

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "KMSCreateAndList",

"Effect": "Allow",

"Action": [

"kms:CreateKey",

"kms:ListAliases"

],

"Resource": "*"

},

{

"Sid": "KMSKeyManagementScoped",

"Effect": "Allow",

"Action": [

"kms:PutKeyPolicy",

"kms:GetKeyPolicy",

"kms:DescribeKey",

"kms:GenerateDataKey",

"kms:Decrypt",

"kms:TagResource",

"kms:UntagResource",

"kms:EnableKeyRotation",

"kms:GetKeyRotationStatus",

"kms:ListResourceTags",

"kms:ScheduleKeyDeletion",

"kms:CreateAlias",

"kms:DeleteAlias"

],

"Resource": [

"arn:aws:kms:*:<AWS_ACCOUNT_ID>:key/*",

"arn:aws:kms:*:<AWS_ACCOUNT_ID>:alias/*"

]

}

]

}

- S3 Service Policy

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "S3EncryptionConfigAndStateScoped",

"Effect": "Allow",

"Action": [

"s3:ListBucket",

"s3:GetEncryptionConfiguration",

"s3:PutEncryptionConfiguration",

"s3:GetObject",

"s3:PutObject",

"s3:DeleteObject",

"s3:CreateBucket",

"s3:GetBucketTagging",

"s3:GetBucketPolicy",

"s3:GetBucketAcl",

"s3:GetBucketCORS",

"s3:PutBucketTagging",

"s3:GetBucketWebsite",

"s3:GetBucketVersioning",

"s3:GetAccelerateConfiguration",

"s3:GetBucketRequestPayment",

"s3:GetBucketLogging",

"s3:GetLifecycleConfiguration",

"s3:GetReplicationConfiguration",

"s3:GetBucketObjectLockConfiguration",

"s3:DeleteBucket"

],

"Resource": "arn:aws:s3:::*",

"Condition": {

"StringEquals": {

"aws:RequestedRegion": [

"<REGION>"

],

"aws:PrincipalAccount": "<AWS_ACCOUNT_ID>"

}

}

}

]

}

Description for the JSON components

This section describes the permissions listed in the JSON policies.

IAM Roles

Contact your IT team to create the necessary IAM roles with the following permissions to create and manage AWS EKS resources.

| IAM Role | Required Policies |

|---|---|

| Amazon EKS cluster IAM Role: manages the Kubernetes cluster. | AmazonEKSBlockStoragePolicy, AmazonEKSClusterPolicy, AmazonEKSComputePolicy, AmazonEKSLoadBalancingPolicy, AmazonEKSNetworkingPolicy, AmazonEKSVPCResourceController, AmazonEKSServicePolicy, AmazonEBSCSIDriverPolicy |

| Amazon EKS node IAM Role: communicates with the node. | AmazonEBSCSIDriverPolicy, AmazonEC2ContainerRegistryReadOnly, AmazonEKS_CNI_Policy, AmazonEKSWorkerNodePolicy, AmazonSSMManagedInstanceCore |

These policies are managed by AWS. For more information about AWS managed policies, refer to AWS managed policies for Amazon Elastic Kubernetes Service in the AWS documentation.

AWS IAM Permissions

The AWS IAM user or role used to install PPC must have permissions to create and manage Amazon EKS clusters and the required supporting AWS resources.

EC2 Permissions

| Category | Required Permissions |

|---|---|

| Networking & VPC | ec2:DescribeVpcs, ec2:DescribeSubnets, ec2:DescribeVpcAttribute, ec2:DescribeTags, ec2:DescribeNetworkInterfaces |

| Security Groups | ec2:DescribeSecurityGroups, ec2:DescribeSecurityGroupRules, ec2:CreateSecurityGroup, ec2:DeleteSecurityGroup, ec2:AuthorizeSecurityGroupIngress, ec2:AuthorizeSecurityGroupEgress, ec2:RevokeSecurityGroupIngress, ec2:RevokeSecurityGroupEgress |

| Launch Templates | ec2:DescribeLaunchTemplates, ec2:DescribeLaunchTemplateVersions, ec2:CreateLaunchTemplate, ec2:DeleteLaunchTemplate |

| Instances | ec2:RunInstances |

| Tagging | ec2:CreateTags, ec2:DeleteTags |

EKS Permissions

| Category | Required Permissions |

|---|---|

| Cluster Management | eks:CreateCluster, eks:DescribeCluster |

| Node Groups | eks:CreateNodegroup, eks:DescribeNodegroup |

| Add-ons | eks:CreateAddon, eks:DescribeAddon, eks:DescribeAddonVersions, eks:DeleteAddon, eks:ListAddons |

| Pod Identity Associations | eks:CreatePodIdentityAssociation, eks:DescribePodIdentityAssociation, eks:DeletePodIdentityAssociation, eks:ListPodIdentityAssociations |

| Tagging | eks:TagResource |

IAM Permissions

| Category | Required Permissions |

|---|---|

| Roles & Policies | iam:CreateRole, iam:DeleteRole, iam:TagRole, iam:GetRole, iam:ListRoles, iam:AttachRolePolicy, iam:DetachRolePolicy, iam:ListRolePolicies, iam:ListAttachedRolePolicies |

| Policies | iam:CreatePolicy, iam:DeletePolicy, iam:TagPolicy, iam:GetPolicy, iam:GetPolicyVersion, iam:ListPolicyVersions |

| Instance Profiles | iam:CreateInstanceProfile, iam:DeleteInstanceProfile, iam:TagInstanceProfile, iam:GetInstanceProfile, iam:AddRoleToInstanceProfile, iam:RemoveRoleFromInstanceProfile, iam:ListInstanceProfilesForRole |

| Service-linked Role | iam:CreateServiceLinkedRole |

S3 Permissions

| Required Permissions |

|---|

| s3:ListBucket |

| s3:PutEncryptionConfiguration |

| s3:GetEncryptionConfiguration |

KMS Permissions

| Required Permissions |

|---|

| kms:CreateKey |

| kms:PutKeyPolicy |

| kms:GetKeyPolicy |

Jump box or local machine

A dedicated EC2 instance (RHEL 10, Debian 12/13) for deployment.

AWS Account Details

A valid AWS account where Amazon EKS will be deployed. The AWS account ID and AWS region must be identified in advance, as all resources will be provisioned in the selected region.

Service Quotas

Verify that the AWS account has sufficient service quotas to support the deployment. At a minimum, ensure adequate limits for the following:

- EC2 instances based on node group size and instance types.

- VPC and networking limits, including subnets, route tables, and security groups.

- Elastic IP addresses and Load balancers.

If required, request quota increases through the AWS Service Quotas console before proceeding.

Service Control Policies (SCPs)

The AWS account must not have SCPs that restrict required permissions. In particular, SCPs must not block the following actions:

- eks:*

- ec2:*

- iam:PassRole

Restrictive SCPs may prevent successful cluster creation and resource provisioning.

Virtual Private Cloud (VPC)

- An existing VPC must be available in the target AWS region.

- The VPC should be configured to support Amazon EKS workloads.

Subnet Requirements

- At least two private subnets must be available.

- Subnets must be distributed across two or more Availability Zones (AZs).
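The subnet requirements can be checked before running the installer. The following is a minimal sketch, assuming the AWS CLI is configured on the jump box. The function name check_subnet_spread is illustrative. It only counts subnets and Availability Zones; it does not verify that the subnets are private.

```bash
# Hypothetical helper: read "<subnet-id> <availability-zone>" pairs from
# standard input and succeed only if there are at least two subnets spread
# over at least two Availability Zones.
check_subnet_spread() {
  awk '
    NF >= 2 { subnets++; if (!($2 in zones)) { zones[$2] = 1; distinct++ } }
    END {
      printf "subnets=%d zones=%d\n", subnets, distinct
      exit !(subnets >= 2 && distinct >= 2)
    }'
}

# Example (not executed here): list the subnets of a VPC and check them.
# aws ec2 describe-subnets --filters "Name=vpc-id,Values=<vpc_id>" \
#   --query 'Subnets[].[SubnetId,AvailabilityZone]' --output text | check_subnet_spread
```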

Specify an AWS Region other than us-east-1

By default, the installation deploys resources in the us-east-1 AWS Region. The AWS Region is currently hardcoded in the Terraform configuration and must be manually updated to deploy to a different region.

Note: The AWS Region is defined in the iac_setup/scripts/iac/variables.tf file.

To update the AWS Region, perform the following steps:

Open the variables.tf file in a text editor.

Locate the text default = "us-east-1".

Replace us-east-1 with the required AWS Region. For example, "us-west-1".

Save the file.

Additional Step for Regions Outside North America

If you are deploying in an AWS Region outside North America, the OS image configuration must also be updated.

In the same variables.tf file, locate the text default = "BOTTLEROCKET_x86_64_FIPS".

Update the value to default = "BOTTLEROCKET_x86_64".

Save the file.
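Both manual edits to variables.tf can also be made from the command line. The following is a minimal sketch, assuming the file contains the default values exactly as quoted above. The function name set_tf_default is illustrative. If several variables share the same default value, all of them are changed, so review the file afterwards.

```bash
# Hypothetical helper: replace one quoted default value in a variables file.
# Usage: set_tf_default <file> <old_value> <new_value>
set_tf_default() {
  file="$1"; old="$2"; new="$3"
  # Stop early if the expected default is not present.
  grep -q "default *= *\"$old\"" "$file" || {
    echo "default = \"$old\" not found in $file" >&2
    return 1
  }
  tmp="$(mktemp)" || return 1
  # Write through a temporary file, then copy back to keep the file's permissions.
  sed "s/\(default *= *\)\"$old\"/\1\"$new\"/" "$file" > "$tmp" && cat "$tmp" > "$file"
  status=$?
  rm -f "$tmp"
  return "$status"
}

# Example (not executed here):
# set_tf_default iac_setup/scripts/iac/variables.tf us-east-1 us-west-1
# set_tf_default iac_setup/scripts/iac/variables.tf BOTTLEROCKET_x86_64_FIPS BOTTLEROCKET_x86_64
```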

Creating AWS KMS Key and S3 Bucket

Amazon S3 Bucket: An Amazon S3 bucket is required to store critical data such as backups, configuration artifacts, and restore metadata used during installation and recovery workflows. Using a dedicated S3 bucket helps ensure data durability, isolation, and controlled access during cluster operations.

AWS KMS Key: An AWS KMS customer‑managed key is required to encrypt data stored in the S3 bucket. This ensures that sensitive data is protected at rest and allows customers to manage encryption policies, key rotation, and access control in accordance with their security requirements.

Note: The KMS key must allow access to the IAM roles used by the EKS cluster and related services.

The following section explains how to create the AWS KMS key and S3 bucket, either from the AWS Web UI or by using the script.

- Create a KMS key for backup bucket

The KMS key created is referenced during installation and restore using its KMS ARN, and is validated by the installer.

Before you begin, ensure the following:

- You have access to the AWS account where the KMS key is created. The KMS key can be in the same AWS account as the S3 bucket or in a different AWS account (cross‑account).

- The user running the installer has the kms:DescribeKey permission to describe the KMS key. Without this permission, installation and restore fail.

The steps to create a KMS key are available at https://docs.aws.amazon.com/. Follow the KMS key creation steps, and select the following configurations.

On the Key configuration page:

Select Key type as Symmetric.

Select Key usage as Encrypt and decrypt.

These settings are required for encrypting and decrypting S3 objects used by backup and restore operations.

On the Key Administrative Permissions page, select the users or roles that can manage the key. The key administrators do not automatically get permission to encrypt or decrypt data, unless these permissions are explicitly granted.

On the Define key usage permissions page, grant permissions to the principals that will use the key.

The user or role running the installation and restore must have the permission kms:DescribeKey to describe the key. This permission is mandatory because the installer validates the KMS key before proceeding. Without this, the installation or restore procedure fails, especially in cross‑account KMS scenarios.

On the Edit key policy - optional page, click Edit.

The KMS key policy controls the access to the encryption key and must be applied before creating the S3 bucket.

Note: If you are using AWS SSO (IAM Identity Center), ensure that the IAM role ARN specified in the KMS key policy includes the full SSO path prefix: aws-reserved/sso.amazonaws.com/.

For example: arn:aws:iam::<ACCOUNT_ID>:role/aws-reserved/sso.amazonaws.com/<SSO_ROLE_NAME>

Omitting this path results in KMS key policy creation failures with an InvalidArnException.

The following example shows a key policy that:

- Allows the PPC bootstrap user to verify the KMS key.

- Allows the IAM role to encrypt and decrypt EKS backups.

cat > kms-key-policy.json << 'EOF'

{

"Version": "2012-10-17",

"Id": "key-resource-policy-0",

"Statement": [

{

"Sid": "Allow KMS administrative actions only, no key usage permissions.",

"Effect": "Allow",

"Principal": {

"AWS": "arn:aws:iam::<<ADMIN_AWS_ACCOUNT>>:root"

},

"Action": [

"kms:Create*",

"kms:Describe*",

"kms:Enable*",

"kms:List*",

"kms:Put*",

"kms:Update*",

"kms:Revoke*",

"kms:Disable*",

"kms:Get*",

"kms:Delete*",

"kms:ScheduleKeyDeletion",

"kms:CancelKeyDeletion"

],

"Resource": "*"

},

{

"Sid": "Allow user running bootstrap.sh script of the PPC to verify the KMS key.",

"Effect": "Allow",

"Principal": {

"AWS": "<<SSO_OR_IAM_USER_ACCOUNT_ARN>>"

},

"Action": "kms:DescribeKey",

"Resource": "*"

},

{

"Sid": "Allow backup recovery utility and EKS Node roles KMS key usage permissions, Replace <<<<CLUSTER_NAME>>>> with the name of your EKS cluster.",

"Effect": "Allow",

"Principal": {

"AWS": "arn:aws:iam::<<DEPLOYMENT_AWS_ACCOUNT>>:root"

},

"Action": [

"kms:Decrypt",

"kms:Encrypt",

"kms:ReEncryptFrom",

"kms:ReEncryptTo",

"kms:GenerateDataKey",

"kms:GenerateDataKeyWithoutPlaintext",

"kms:GenerateDataKeyPair",

"kms:GenerateDataKeyPairWithoutPlaintext",

"kms:DescribeKey"

],

"Resource": "*",

"Condition": {

"ArnLike": {

"aws:PrincipalArn": [

"arn:aws:iam::<<DEPLOYMENT_AWS_ACCOUNT>>:role/eks-<<CLUSTER_NAME>>-backup-recovery-utility-role",

"arn:aws:iam::<<DEPLOYMENT_AWS_ACCOUNT>>:role/eks-<<CLUSTER_NAME>>-node-role"

]

}

}

}

]

}

EOF

Update the values of the following based on the environment:

- DEPLOYMENT_AWS_ACCOUNT - AWS account ID.

- CLUSTER_NAME - EKS cluster name.

- SSO_OR_IAM_USER_ACCOUNT_ARN - ARN of the IAM role used to run the bootstrap script. The ARN format depends on your authentication method:

  - IAM role: Use the ARN returned by aws sts get-caller-identity.

  - AWS SSO (IAM Identity Center): Convert the session ARN returned by aws sts get-caller-identity to a full IAM role ARN before using it in the KMS key policy.

Note: If you are using AWS SSO (IAM Identity Center), the ARN returned by aws sts get-caller-identity is a session ARN and cannot be used directly in an AWS KMS key policy. AWS KMS requires the full IAM role ARN, including the aws-reserved/sso.amazonaws.com/ path. Without this, KMS key policy creation fails with InvalidArnException.

Retrieving the IAM role ARN for KMS key policy

To identify the role used to run the bootstrap script, run the following command:

aws sts get-caller-identity --query Arn --output text

IAM role: Use the returned ARN directly.

arn:aws:iam::<<DEPLOYMENT_AWS_ACCOUNT>>:role/your-role-name

AWS SSO (IAM Identity Center): The command returns a session ARN, which must be converted.

Do not use the session ARN:

arn:aws:sts::<<DEPLOYMENT_AWS_ACCOUNT>>:assumed-role/AWSReservedSSO_PermissionSetName_abc123/john.doe@company.com

Use this converted IAM role ARN in the KMS key policy:

arn:aws:iam::<<DEPLOYMENT_AWS_ACCOUNT>>:role/aws-reserved/sso.amazonaws.com/AWSReservedSSO_PermissionSetName_abc123

To convert:

- Replace arn:aws:sts:: with arn:aws:iam::.

- Replace assumed-role/ with role/aws-reserved/sso.amazonaws.com/.

- Remove the session suffix (everything after the last /).
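The three conversion rules can be applied in one step. The following is a minimal sketch, assuming the session ARN has the shape shown in the example above. The function name sso_session_arn_to_role_arn is illustrative, and it deliberately rejects ARNs whose role name does not start with AWSReservedSSO_.

```bash
# Hypothetical helper: convert an AWS SSO session ARN into the IAM role ARN
# that a KMS key policy accepts.
# Usage: sso_session_arn_to_role_arn <session_arn>
sso_session_arn_to_role_arn() {
  case "$1" in
    arn:aws:sts::*:assumed-role/AWSReservedSSO_*/*)
      # sts -> iam, assumed-role/ -> role/aws-reserved/sso.amazonaws.com/,
      # and drop the session name after the last slash.
      printf '%s\n' "$1" | sed \
        's#^arn:aws:sts::\([0-9]*\):assumed-role/\([^/]*\)/.*$#arn:aws:iam::\1:role/aws-reserved/sso.amazonaws.com/\2#'
      ;;
    *)
      echo "not an AWS SSO session ARN: $1" >&2
      return 1
      ;;
  esac
}

# Example (not executed here):
# sso_session_arn_to_role_arn "$(aws sts get-caller-identity --query Arn --output text)"
```

If the function reports that the value is not an AWS SSO session ARN, the caller is most likely an IAM role or user, and the returned ARN can be used as described for the IAM role case.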

Important: Before initiating restore, review and update the KMS key policy to reflect the restore CLUSTER_NAME. Even if the policy was already configured for the source cluster, it must be updated for the new restore cluster. If the policy continues to reference the source cluster name, the IAM role created during restore cannot decrypt the backup data, causing the restore to fail.

After the KMS key is created, note the KMS key ARN. This KMS key ARN is required while creating the S3 backup bucket.
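Because the installer validates the key with kms:DescribeKey, it can be useful to run the same check manually first. The following is a minimal sketch, assuming the AWS CLI is configured with the credentials that will run the installer. The function name can_describe_key is illustrative.

```bash
# Hypothetical pre-flight check: confirm that the current credentials can
# describe the KMS key. Prints the key state (for example, Enabled) on success.
# Usage: can_describe_key <kms_key_arn>
can_describe_key() {
  if aws kms describe-key --key-id "$1" \
       --query 'KeyMetadata.KeyState' --output text; then
    return 0
  fi
  echo "kms:DescribeKey failed for $1; check the key policy and IAM permissions" >&2
  return 1
}
```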

- Create an AWS S3 Bucket encrypted with SSE‑KMS

The S3 bucket encrypted with SSE‑KMS is used as a backup bucket during installation and restore.

Before you begin, ensure the following:

- You have access to the AWS account where the S3 bucket will be created.

- You have permission to create S3 buckets.

- The user running the installer has permission to describe the KMS key. Without this permission, installation and restore fail.

The steps to create an AWS S3 bucket are available at https://docs.aws.amazon.com/. Follow the S3 bucket creation steps, and set the following configurations.

In the Default Encryption section:

Select Encryption type as Server-side encryption with AWS Key Management Service keys (SSE-KMS).

Select the AWS KMS key ARN.

If the KMS key is in a different AWS account than the S3 bucket, then the key will not appear in the AWS console dropdown. In this case, enter the KMS key ARN manually.

Enable Bucket Key.
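The same default encryption settings can be applied to an existing bucket with the AWS CLI. The following is a minimal sketch, assuming the bucket already exists and the KMS key ARN is known. The function name sse_kms_config is illustrative; it only builds the configuration document, and the commands that apply and verify it are shown as comments.

```bash
# Hypothetical helper: print a default-encryption configuration that uses
# SSE-KMS with the given key and enables the S3 Bucket Key.
# Usage: sse_kms_config <kms_key_arn>
sse_kms_config() {
  printf '{"Rules":[{"ApplyServerSideEncryptionByDefault":{"SSEAlgorithm":"aws:kms","KMSMasterKeyID":"%s"},"BucketKeyEnabled":true}]}\n' "$1"
}

# Example (not executed here):
# aws s3api put-bucket-encryption --bucket <backup_bucket_name> \
#   --server-side-encryption-configuration "$(sse_kms_config <kms_key_arn>)"
# aws s3api get-bucket-encryption --bucket <backup_bucket_name>
```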

Automating AWS KMS Key and S3 Bucket Creation

This section describes how to use the optional resiliency initialization script to automatically create an AWS KMS key and an encrypted S3 bucket. This script can be used only after downloading and extracting the PCT.

The S3 bucket and KMS key will be created in the same AWS account using this script. Cross-account KMS configurations are not supported with this script. For cross account KMS configurations, follow the steps mentioned in the tab Using AWS Web UI.

This automated approach is an alternative to the manual creation of the S3 bucket and KMS key using the AWS Web UI. Running this script is optional and not required for standard setup.

Before running the script, ensure the following:

- You have permissions to:

- Create S3 buckets.

- Create AWS KMS keys.

- Modify KMS key policies.

- AWS credentials can be configured during script execution.

If required permissions are missing, the script fails during readiness checks.

The resiliency initialization script automates the following tasks:

- Creates an AWS KMS key.

- Creates an S3 bucket.

- Associates the S3 bucket with the KMS key.

- Enables encryption on the S3 bucket.

- Outputs the S3 bucket ARN and KMS key ARN for future reference.

The script is available in the extracted build under the bootstrap-scripts directory. Run the script from the bootstrap-scripts directory to view a list of available parameters and options.

```bash

cd <extracted_folder>/bootstrap-scripts

./init-resiliency.sh --help

```

The following parameters are mandatory when running the resiliency script:

- AWS region

- EKS cluster name

The EKS cluster name is required because:

- It identifies and authorizes an IAM role.

- The IAM role is referenced in the KMS key policy.

- The same cluster name must also be provided in the bootstrap script. If the cluster name differs between this script and the bootstrap script, backup operations fail.

Note: Before running the bootstrap or resiliency scripts as the root user on RHEL, ensure that /usr/local/bin (and the AWS CLI binary path, if applicable) is included in the $PATH. Alternatively, run the script using a non-root user (such as ec2-user) where /usr/local/bin is already part of the default PATH.
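The PATH requirement in the note can be handled in the shell session before running the scripts. The following is a minimal sketch. The function name ensure_in_path is illustrative, and the change applies only to the current shell session.

```bash
# Hypothetical helper: prepend a directory to PATH unless it is already present.
# Usage: ensure_in_path <directory>
ensure_in_path() {
  case ":$PATH:" in
    *":$1:"*) ;;                          # already present, nothing to do
    *) PATH="$1:$PATH"; export PATH ;;    # prepend and export for child processes
  esac
}

# Example (not executed here):
# ensure_in_path /usr/local/bin
```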

Run the following command to initiate AWS KMS Key and S3 bucket creation:

./bootstrap-scripts/init-resiliency.sh --aws-region <AWS_region> --bucket-name <backup_bucket_name> --cluster-name <EKS_cluster_name>

The script prompts for AWS access key, secret key, and session token.

After running the script, the following confirmation message appears.

Do you want to proceed with creating the S3 bucket and KMS key? (yes/no) :

Type yes to proceed with creating the S3 bucket and AWS KMS key.

After the setup is complete, the output displays details of the generated S3 bucket ARN and the KMS key ARN. Note these values for future reference.

3.3.1.2 - Preparing for PPC deployment

This section describes the steps to download and extract the recipe for deploying the PPC.

Note: If you have set up the jump box previously, then from the /deployment/iac_setup/ directory, run the make clean command. This ensures that the local repository on the jump box and the clusters are cleaned up before proceeding with a new installation.

Warning: Do not install or manage multiple clusters from the same working directory. Each cluster deployment maintains its own Terraform/OpenTofu state, and reusing a directory can overwrite state files, causing loss of cluster tracking and unintended cleanup behavior.

Use a dedicated directory and, where possible, a dedicated jump box per cluster, and always verify the active kubectl context before running cleanup commands such as make clean.

Log in to the My.Protegrity portal.

Navigate to Product Management > Explore Products > AI Team Edition.

From the Release list, select a release version.

From Platform and Feature Installation, click the Download Product icon.

Create a deployment directory on the jump box.

mkdir deployment && cd deployment

Copy the archive to the deployment directory on the jump box.

Extract the archive.

tar -xvf PPC-K8S-64_x86-64_AWS-EKS_1.0.0.x

3.3.1.3 - Deploying PPC

Before you begin

Before running the bootstrap or resiliency scripts as the root user on RHEL, ensure that /usr/local/bin (and the AWS CLI binary path, if applicable) is included in the $PATH. Alternatively, run the script using a non-root user (such as ec2-user) where /usr/local/bin is already part of the default PATH.

By default, the installation is configured to use the us-east-1 AWS region. If you plan to install the product in a different region, update the region value in the iac_setup/scripts/iac/variables.tf file before starting the installation.

For more information on updating the AWS region, refer to Specify an AWS Region other than us-east-1.

The repository provides a bootstrap script that automatically installs or updates the following software on the jump box:

- AWS CLI - Required to communicate with your AWS account.

- OpenTofu - Required to manage infrastructure as code.

- kubectl - Required to communicate with the Kubernetes cluster.

- Helm - Required to manage Kubernetes packages.

- Make - Required to run the OpenTofu automation scripts.

- jq - Required to parse JSON.

The bootstrap script also checks if you have the required permissions on AWS. It then sets up the EKS cluster and installs the microservices required for deploying the PPC.

The bootstrap script asks for variables to be set to complete your deployment. Follow the instructions on the screen:

./bootstrap.sh

The script prompts for the following variables.

Enter Cluster Name

The following characters are allowed:

- Lowercase letters: a-z

- Numbers: 0-9

- Hyphens: -

The following are not allowed:

- Uppercase letters: A-Z

- Underscores: _

- Spaces

- Any special characters such as: / ? * + % ! @ # $ ^ & ( ) = [ ] { } : ; , .

- Leading or trailing hyphens

- More than 31 characters

Note: Ensure that the cluster name does not exceed 31 characters. Cluster names longer than this limit can cause the bootstrap script to fail in subsequent installation steps.

If the installation fails because the cluster name exceeds the 31-character limit, correct the name and re-run the script.

- Correction: Choose a cluster name with 31 characters or fewer.

- Retry: Execute the installation command again with the updated name. The script will automatically handle the update and proceed with the bootstrap process.

Enter a VPC ID from the table

The script automatically retrieves the available VPCs. Enter the VPC ID where the cluster must be created.

Querying for subnets in VPC…

The script queries for the available VPC subnets and prompts to enter two private subnet IDs. Specify two private subnet IDs from different availability zones.

The script then automatically updates the VPC CIDR block based on the VPC details.

Enter FQDN

This is the Fully Qualified Domain Name for the ingress.

Warning: Ensure that the FQDN does not exceed 50 characters and only the following characters are used:

- Lowercase letters: a-z

- Numbers: 0-9

- Special characters: - .

Enter S3 Backup Bucket Name

An AWS S3 bucket encrypted with SSE‑KMS for storing backup data for disaster recovery.

Use a dedicated S3 bucket per cluster for backup and restore operations to ensure data and encryption isolation. Sharing a bucket across clusters increases the risk of cross-cluster data access or decryption due to IAM misconfiguration. Dedicated buckets with unique IAM policies eliminate this risk.

During disaster management, OpenSearch restores only those snapshots that are created using the daily-insight-snapshots policy. For more information, refer to Backing up and restoring indexes.

Enter Image Registry Endpoint

The image repository from where the container images are retrieved. Use registry.protegrity.com:9443 for the Protegrity Container Registry (PCR); otherwise, use the endpoint of the local repository.

Expected format: [:port]. Do not include ‘https://’.

Note: The container registry endpoint must be a FQDN (Fully Qualified Domain Name). Sub-paths, such as my-registry.com/v2/path, are not supported by the OCI distribution specification.

Enter Registry Username []

Enter the username for the registry mentioned in the previous step. Leave this entry blank if the registry does not require authentication.

Enter Registry Password or Access Token

Enter the password or access token for the registry. Input is masked with * characters. Press Enter to keep the current value. Leave this entry blank if the registry does not require authentication.

After providing all information, the following confirmation message appears.

Configuration updated successfully.

Would you like to proceed with the setup now?

Proceed? (yes/no):

Type yes to initiate the setup.

Note: The cluster creation process can take 10-15 minutes.
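The rules for the cluster name, FQDN, and image registry endpoint prompts can be checked before running the bootstrap script. The following is a minimal sketch based only on the rules stated above. The function names are illustrative, and the bootstrap script may apply additional checks that are not reproduced here.

```bash
# Hypothetical validators for the bootstrap prompts. Allowed characters are
# spelled out in full to avoid locale-dependent ranges such as a-z.

# Cluster name: lowercase letters, digits, hyphens; no leading or trailing
# hyphen; at most 31 characters.
is_valid_cluster_name() {
  [ -n "$1" ] || return 1
  [ "${#1}" -le 31 ] || return 1
  case "$1" in
    -*|*-) return 1 ;;
    *[!abcdefghijklmnopqrstuvwxyz0123456789-]*) return 1 ;;
  esac
}

# FQDN: lowercase letters, digits, hyphens, periods; at most 50 characters.
is_valid_fqdn() {
  [ -n "$1" ] || return 1
  [ "${#1}" -le 50 ] || return 1
  case "$1" in
    *[!abcdefghijklmnopqrstuvwxyz0123456789.-]*) return 1 ;;
  esac
}

# Registry endpoint: no scheme such as https:// and no sub-path.
is_valid_registry_endpoint() {
  case "$1" in
    ""|*://*|*/*) return 1 ;;
  esac
}

# Example (not executed here):
# is_valid_cluster_name "<cluster_name>" || echo "invalid cluster name" >&2
```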

If the session is terminated during installation due to network issues, power outage, and so on, then the installation stops. To restart the installation, run the following commands:

# Navigate to setup directory

cd iac_setup

# Clean up all resources

make clean

# Run the bootstrap script again

./bootstrap.sh

Warning: Do not install or manage multiple clusters from the same working directory. Each cluster deployment maintains its own Terraform/OpenTofu state, and reusing a directory can overwrite state files, causing loss of cluster tracking and unintended cleanup behavior.

Use a dedicated directory and, where possible, a dedicated jump box per cluster, and always verify the active kubectl context before running cleanup commands such as make clean.

To check the active kubectl context, run the following command:

kubectl config current-context
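The context check can be turned into a guard that runs before cleanup. The following is a minimal sketch, assuming the kubeconfig entry was created by aws eks update-kubeconfig, which normally names the context after the cluster ARN. The function name context_matches_cluster is illustrative.

```bash
# Hypothetical guard: succeed only if the active kubectl context belongs to
# the expected cluster. The context is accepted if it equals the cluster name
# or is a cluster ARN ending in "cluster/<name>".
# Usage: context_matches_cluster <cluster_name>
context_matches_cluster() {
  ctx="$(kubectl config current-context)" || return 1
  case "$ctx" in
    "$1"|*":cluster/$1") return 0 ;;
  esac
  echo "active context '$ctx' does not match cluster '$1'" >&2
  return 1
}

# Example (not executed here):
# context_matches_cluster <cluster_name> && make clean
```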

3.3.2 - Accessing PPC using a Linux machine

Before you begin

Ensure that the following prerequisites are met.

- A Linux machine is available and running.

- AWS CLI is installed and configured.

- Kubernetes command-line tool is installed.

Perform the following steps to access PPC using a separate Linux machine.

Log in to the Linux machine with root credentials.

Configure AWS credentials, using the following command.

aws configure

Verify that AWS credentials are working, using the following command.

aws sts get-caller-identity

If the Kubernetes command-line tool is not available, then install the Kubernetes command-line tool, using the following command.

kubectl version --client 2>/dev/null || {
  echo "Installing kubectl..."
  curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/amd64/kubectl"
  chmod +x kubectl
  sudo mv kubectl /usr/local/bin/
  kubectl version --client
}

Set up the Kubernetes command-line tool and access the cluster, using the following command.

aws eks update-kubeconfig --region <region_name> --name <cluster_name>

Verify the access to the cluster, using the following command.

kubectl get nodes

3.3.3 - Installing Features and Protectors

Before you begin

Ensure that PPC is successfully installed before installing the features or protectors.

Installing Features

The following table lists the available features.

| Feature | Description |

|---|---|

| Data Discovery | Installing Data Discovery |

| Semantic Guardrails | Installing Semantic Guardrails |

| Protegrity Agent | Installing Protegrity Agent |

| Anonymization | Installing Anonymization |

| Synthetic Data | Installing Synthetic Data |

Installing Protectors

The following table lists the available protectors.

| Protector | Description |
|---|---|
| Application Protector | Installing Application Protector |
| Repository Protector | Installing Repository Protector |
| Application Protector Java Container | Installing Application Protector Java Container |
| Rest Container | Installing Rest Container |
| Cloud Protector | Installing Cloud Protector |

3.3.4 - Login to PPC

3.3.4.1 - Prerequisites

Use Route 53 configuration on AWS to resolve the PPC FQDN specified during the installation to the internal load balancer.

- Ensure that the instance uses the AWS-provided DNS server, which is located at the base of the VPC CIDR range plus two. For example, 10.0.0.2 for a VPC with the CIDR 10.0.0.0/16.

- Verify that enableDnsHostnames and enableDnsSupport are set to true in the VPC settings.

- Verify the Security Group of the load balancer. Ensure that inbound traffic is allowed on the required ports, such as 80 and 443, from the client instance’s IP or Security Group.

- Keep the following information ready:

- VPC ID: The ID of the VPC for the client instances and the Load Balancer. For example, vpc-0123456789.

- Internal ELB DNS Name: The DNS name of the load balancer. For example, internal-abcdefghi123456-123456789.us-east-1.elb.amazonaws.com.

- Target FQDN: The FQDN for PPC. For example, mysite.aws.com.
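The DNS-related prerequisites above can be checked from the command line. The following is a minimal sketch. The function names are hypothetical, and `vpc_dns_ip` assumes that the CIDR is written with the base address of the VPC range. The `aws ec2 describe-vpc-attribute` command reports the current value of one VPC attribute per call.

```shell
# Hypothetical helper: derive the AWS-provided DNS server address
# (base of the VPC CIDR range plus two) from a CIDR such as 10.0.0.0/16.
vpc_dns_ip() {
  base=${1%/*}        # drop the prefix length, for example /16
  last=${base##*.}    # last octet of the base address
  prefix=${base%.*}   # first three octets
  echo "${prefix}.$((last + 2))"
}

# Hypothetical helper: show both DNS attributes of a VPC.
# $1 = VPC ID
show_vpc_dns_attributes() {
  for attr in enableDnsHostnames enableDnsSupport; do
    aws ec2 describe-vpc-attribute --vpc-id "$1" --attribute "$attr"
  done
}
```

For a VPC with the CIDR 10.0.0.0/16, `vpc_dns_ip 10.0.0.0/16` prints 10.0.0.2, which can be compared with the nameserver configured on the client instance.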

Find the AWS Load Balancer address.

kubectl get gateway -A

The output appears similar to the following:

NAMESPACE     NAME       CLASS   ADDRESS                                                          PROGRAMMED   AGE
api-gateway   pty-main   envoy   internal-abcdefghi123456-123456789.us-east-1.elb.amazonaws.com   True

Map the PPC FQDN to the load balancer using Route 53.

For more information about configuring Route 53, refer to the AWS documentation.
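The mapping can also be created with the AWS CLI. The following is a minimal sketch under stated assumptions, not the documented procedure. The function names are hypothetical, the CNAME record type and the 300-second TTL are assumptions (a Route 53 alias record is an alternative), and the gateway name and namespace are taken from the sample output above and may differ in a given deployment. The hosted zone ID must belong to a hosted zone that the client VPC can resolve.

```shell
# Hypothetical helper: read the load balancer address from the gateway status.
# The names pty-main and api-gateway come from the sample output.
ppc_lb_address() {
  kubectl get gateway pty-main -n api-gateway \
    -o jsonpath='{.status.addresses[0].value}'
}

# Hypothetical helper: print a Route 53 change batch that maps the PPC FQDN
# to the load balancer DNS name. CNAME and TTL 300 are assumptions.
# $1 = PPC FQDN, $2 = load balancer DNS name
route53_change_batch() {
  cat <<EOF
{
  "Changes": [
    {
      "Action": "UPSERT",
      "ResourceRecordSet": {
        "Name": "$1",
        "Type": "CNAME",
        "TTL": 300,
        "ResourceRecords": [{ "Value": "$2" }]
      }
    }
  ]
}
EOF
}

# Hypothetical helper: apply the change batch to a hosted zone.
# $1 = hosted zone ID, $2 = PPC FQDN, $3 = load balancer DNS name
map_ppc_fqdn() {
  aws route53 change-resource-record-sets \
    --hosted-zone-id "$1" \
    --change-batch "$(route53_change_batch "$2" "$3")"
}
```

Reviewing the output of `route53_change_batch` before calling `map_ppc_fqdn` helps confirm that the record name and value are the intended ones.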

3.3.4.2 - Log in to PPC

Access the PPC using the FQDN provided during the installation process.

Enter the username and password for the admin user to log in and view the Insight Dashboard.

If Protegrity Agent is installed, then the Protegrity Agent dashboard appears. Click Insight to open the Insight Dashboard. For more information about Protegrity Agent, refer to Using Protegrity Agent.

3.3.5 - Accessing the PPC CLI

3.3.5.1 - Prerequisites

To access the PPC CLI, ensure that the following prerequisites are met.

SSH Keys: The SSH private key that corresponds to the public key configured in the pty-cli pod is required.

Network Access: Ensure that network connectivity to the cluster is available.

Resolve FQDN: Use Route 53 configuration on AWS to resolve the PPC FQDN specified during the installation to the internal load balancer. For more information, refer to Prerequisites.

For Linux/macOS Users

The private key used to access the CLI pod is in the /deployment/keys directory. The key file is named authorized_keys.

From the /deployment/keys directory:

ssh -i authorized_keys -p 22 ptyitusr@<user-provided-fqdn>

With options to skip host key checking:

ssh -i authorized_keys -o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null -p 22 ptyitusr@<user-provided-fqdn>
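OpenSSH refuses a private key file whose permissions allow access by other users. The following is a minimal sketch, with hypothetical function names, that restricts the key file to its owner before opening the session.

```shell
# Hypothetical helper: make the key file readable and writable by its owner only.
# $1 = path to the private key file
secure_key_file() {
  chmod 600 "$1"
}

# Hypothetical helper: restrict the key file, then connect to the CLI pod.
# $1 = path to the private key file, $2 = PPC FQDN
connect_ppc_cli() {
  secure_key_file "$1" &&
  ssh -i "$1" -p 22 "ptyitusr@$2"
}
```

For example, `connect_ppc_cli /deployment/keys/authorized_keys <user-provided-fqdn>` runs the same ssh command as above after tightening the file permissions.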

For Windows Users

The private key to access the CLI pod will be in the /deployment/keys directory. The key file is authorized_keys. Copy the key file to a directory on the local Windows machine.

Using Windows SSH Client (Windows 10/11 with OpenSSH):

ssh -i C:\path\to\copied\file\authorized_keys -p 22 ptyitusr@<user-provided-fqdn>

Using PuTTY:

- Host Name: <user-provided-fqdn>

- Port: 22

- Connection Type: SSH

- Under Connection > SSH > Auth, browse and select your private key file (.ppk format)

- Username: ptyitusr

3.3.5.2 - Accessing the PPC CLI

Once connected, the Protegrity CLI welcome banner displays. Enter the following parameters when prompted:

- Username: Application username

- Password: Application password

For more information about the default credentials, refer to the Release Notes.

The CLI supports two main command categories:

- pim: Policy Information Management commands for data protection policies

- admin: User, Role, Permission, and Group management commands

Note: Ensure that at least one additional backup administrator user is configured with the same administrative privileges as the primary admin user.

If the primary admin account is locked or its credentials are lost, restoring the system from a backup may be the only recovery option.

3.3.6 - Deleting PPC

Uninstalling Features and Protectors

To uninstall features and protectors, refer to the relevant documentation.

Cleaning up the EKS Resources

To destroy all created resources, including the EKS cluster and related components, run the following commands.

# Navigate to the setup directory

cd iac_setup

# Clean up all resources

make clean

Executing this command destroys the PPC and all related components.

3.3.7 - Restoring the PPC

Before you begin

Before starting a restore, ensure the following conditions are met:

An existing backup is available. Backups are taken automatically as part of the default installation using scheduled backup mechanisms. These backups are stored in an AWS S3 bucket configured during the original installation.

Access to the original backup AWS S3 bucket. During restore, the same S3 bucket that was used during the original installation must be specified.

Before initiating the restore, review and update the KMS key policy to reflect the restore cluster name. Even if the policy was already configured for the source cluster, it must be updated for the new restore cluster. If the policy continues to reference the source cluster name, the IAM role created during restore cannot decrypt the backup data, causing the restore to fail.

Permissions to read from the S3 bucket. The user performing the restore must have sufficient permissions to access the backup data stored in the bucket.

A new Kubernetes cluster is created. Restore is performed as part of creating a new cluster, not on an existing one. Restore is only supported during a fresh installation flow.

While the backup is taken from the source cluster, do not perform Create, Read, Update, or Delete (CRUD) operations on the source cluster. This ensures backup consistency and prevents data corruption during restore.

Before restoring to a new cluster, if the source cluster is accessible, disable the backup operations on the source cluster by setting the backup storage location to read‑only. This ensures that no additional backup data is written during the restore process.

To disable the backup operation on the source cluster, run the following command:

kubectl patch backupstoragelocation default -n pty-backup-recovery --type merge -p '{"spec":{"accessMode":"ReadOnly"}}'

If the source cluster is not accessible, this step can be skipped.
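After the patch is applied, the access mode can be read back to confirm the change. The following is a minimal sketch with hypothetical function names. It reads the same spec.accessMode field that the patch command sets.

```shell
# Hypothetical helper: print the access mode of the default backup storage location.
backup_access_mode() {
  kubectl get backupstoragelocation default -n pty-backup-recovery \
    -o jsonpath='{.spec.accessMode}'
}

# Hypothetical helper: succeed only when the storage location is read-only.
backup_is_read_only() {
  [ "$(backup_access_mode)" = "ReadOnly" ]
}
```

For example, `backup_is_read_only && echo "Backups are disabled on the source cluster"` prints the message only when the patch has taken effect.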

During disaster management, the backup data is used to restore the cluster and the OpenSearch indexes using snapshots. However, Insight restores OpenSearch data only from the most recent snapshot created by the daily-insight-snapshots policy.

For more information, refer to Backing up and restoring indexes.

Warning: Do not install or manage multiple clusters from the same working directory. Each cluster deployment maintains its own Terraform/OpenTofu state, and reusing a directory can overwrite state files, causing loss of cluster tracking and unintended cleanup behavior.

Use a dedicated directory and, where possible, a dedicated jump box per cluster, and always verify the active kubectl context before running cleanup commands such as make clean.
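The context check can be placed in front of the cleanup command. The following is a minimal sketch with hypothetical function names, assuming that cleanup is run from the directory that contains iac_setup. Context names created by `aws eks update-kubeconfig` are typically the cluster ARN unless an alias was specified, so the expected value must be passed in that form.

```shell
# Hypothetical helper: succeed only when the active kubectl context
# matches the expected one.
# $1 = expected context name
context_matches() {
  current=$(kubectl config current-context) || return 1
  [ "$current" = "$1" ]
}

# Hypothetical wrapper: run the cleanup only after the context check passes.
# $1 = expected context name
safe_clean() {
  if context_matches "$1"; then
    (cd iac_setup && make clean)
  else
    echo "Active kubectl context does not match '$1'; cleanup skipped." >&2
    return 1
  fi
}
```

With this wrapper, `safe_clean <expected_context>` leaves all resources untouched when the active context belongs to a different cluster.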

The repository provides a bootstrap script that automatically installs or updates the following software on the jump box:

- AWS CLI - Required to communicate with your AWS account.

- OpenTofu - Required to manage infrastructure as code.

- kubectl - Required to communicate with the Kubernetes cluster.

- Helm - Required to manage Kubernetes packages.

- Make - Required to run the OpenTofu automation scripts.

- jq - Required to parse JSON.

The bootstrap script also checks if you have the required permissions on AWS. It then sets up the EKS cluster and installs the microservices required for deploying the PCS on a PPC.

Note: Before running the bootstrap or resiliency scripts as the root user on RHEL, ensure that /usr/local/bin (and the AWS CLI binary path, if applicable) is included in the $PATH. Alternatively, run the script using a non-root user (such as ec2-user) where /usr/local/bin is already part of the default PATH.
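The PATH requirement in the note can be checked before starting the script. The following is a minimal sketch with hypothetical function names. It only changes the PATH of the current shell session.

```shell
# Hypothetical helper: succeed when the directory $1 is an entry in PATH.
path_contains() {
  case ":$PATH:" in
    *":$1:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Hypothetical helper: append $1 to PATH for the current shell if it is missing.
ensure_in_path() {
  path_contains "$1" || PATH="$PATH:$1"
  export PATH
}
```

For example, calling `ensure_in_path /usr/local/bin` in the root shell before `./bootstrap.sh --restore` makes the binaries in that directory resolvable for the script.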

Run the following command to initiate restore using an existing backup:

./bootstrap.sh --restore

The bootstrap script asks for variables to be set to complete the deployment. Follow the instructions on the screen.

The --restore option enables the restore mode for the installation. It initiates restoration of data from the configured backup bucket. This process must be followed on a fresh installation.

The script prompts for the following variables.

Enter Cluster Name

- Ensure that the cluster name does not match the name of the source cluster. Reusing an existing cluster name during restore can lead to discrepancies during cluster installation.

- This same cluster name must already be updated in the KMS key policy. If this update is not performed, the restore process fails because the new cluster cannot decrypt the backup data.

- Ensure that the cluster name does not exceed 31 characters. Cluster names longer than this limit can cause the bootstrap script to fail in subsequent installation steps.

If the installation fails because the cluster name exceeds the 31-character limit, correct the name and re-run the script.

- Correction: Choose a cluster name with 31 characters or fewer.

- Retry: Execute the installation command again with the updated name. The script will automatically handle the update and proceed with the bootstrap process.

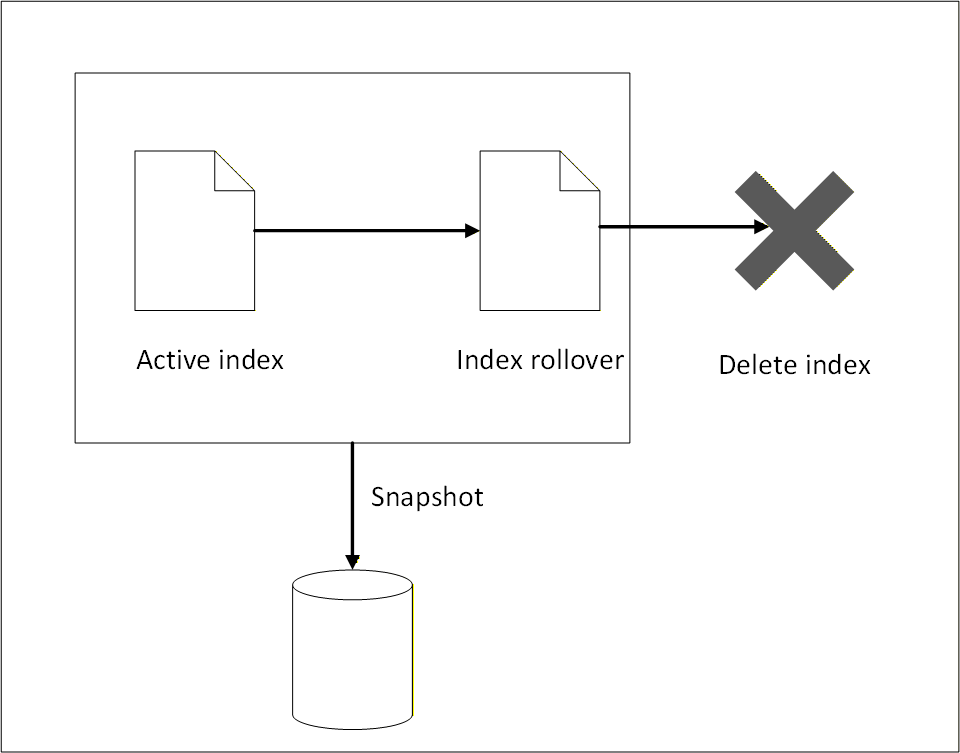
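The naming rules above can be checked before the name is entered at the prompt. The following is a minimal sketch. The function name `validate_cluster_name` is hypothetical, and the check covers only the length limit and the difference from the source cluster name, not the KMS key policy update.

```shell
# Hypothetical helper: check a restore cluster name against the rules above.
# $1 = new cluster name, $2 = source cluster name
validate_cluster_name() {
  if [ "${#1}" -gt 31 ]; then
    echo "Cluster name exceeds 31 characters (${#1})." >&2
    return 1
  fi
  if [ "$1" = "$2" ]; then
    echo "Cluster name must differ from the source cluster name." >&2
    return 1
  fi
  return 0
}
```

For example, `validate_cluster_name ppc-restore-01 ppc-prod-01` succeeds, while a name of 32 or more characters, or a name equal to the source cluster name, is rejected with a message on standard error.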
