
Protegrity AI Team Edition

- 1: Introduction to Protegrity AI Team Edition

- 2: Overview

- 3: Features

- 3.1: NFA

- 3.1.1: Installing NFA

- 3.1.1.1: Prerequisites

- 3.1.1.2: Setting up and Deploying the NFA

- 3.1.2: Protegrity REST APIs

- 3.1.2.1: Accessing the Protegrity REST APIs

- 3.1.2.2: View the Protegrity REST API Specification Document

- 3.1.2.3: Using the Common REST API Endpoints

- 3.1.2.4: Using the Policy Management REST APIs

- 3.1.2.5: Using the Encrypted Resilient Package REST APIs

- 3.1.2.6: Using the Authentication and Token Management REST APIs

- 3.1.3: Protegrity Command Line Interface (CLI) Reference

- 3.2: Data Discovery

- 3.2.1: Installing Data-Discovery

- 3.3: Gen AI

- 3.3.1: Semantic Guardrails

- 3.3.1.1: Prerequisites

- 3.3.1.2: Installing Semantic Guardrails

- 3.3.1.3: Testing the Semantic Guardrails deployment with NFA

- 3.3.1.4: Configuring Semantic Guardrails with NFA

- 3.3.1.5: Uninstalling Semantic Guardrails

- 3.3.2: Protegrity Agent

- 3.3.2.1: Prerequisites

- 3.3.2.2: Installing Protegrity Agent

- 3.3.2.2.1: Installing Protegrity Agent

- 3.3.2.2.2: Post deployment configurations

- 3.3.2.2.3: Accessing Protegrity Agent

- 3.3.2.3: Configuring Protegrity Agent with NFA

- 3.3.2.4: Using Protegrity Agent

- 3.3.2.5: Appendix - Features and Capabilities

- 3.4: Protectors

- 3.4.1: Application Protector

- 3.4.1.1: Application Protector Java

- 3.4.1.1.1: Installing the Application Protector Java

- 3.4.1.2: Application Protector Python

- 3.4.1.2.1: Installing the Application Protector Python

- 3.4.1.3: Application Protector .Net

- 3.4.1.3.1: Installing the Application Protector .Net

- 3.4.2: Big Data Protector

- 3.4.2.1: Amazon EMR Protector

- 3.4.2.2: CDP-AWS-DataHub Protector

- 3.4.2.2.1: Installing the CDP-AWS-DataHub Protector

- 3.4.3: Application Protector Java Container

- 3.4.4: REST Container

- 3.4.4.1: Installing the REST Container

- 3.4.5: Cloud Protector

- 3.5: Gen AI Add-ons

- 3.5.1: Anonymization

- 3.5.1.1: Installing Anonymization

- 3.5.1.2: Uninstalling and Cleanup Anonymization

- 3.5.2: Synthetic Data

- 3.5.2.1: Installing Synthetic Data

- 3.5.2.2: Uninstalling and Cleanup Synthetic Data

1 - Introduction to Protegrity AI Team Edition

Protegrity AI Team Edition is a container-based data protection solution designed for teams and mid-enterprise organizations that need to safeguard sensitive data across AI, GenAI, and analytics workloads.

It delivers core Protegrity capabilities, including governance, discovery, protection, and privacy, in a lightweight, containerized form factor that emphasizes fast deployment, simplified operations, and consistent enforcement of data security policies across environments.

Built on a modular, microservices architecture, it moves away from the legacy appliance model to align with modern DevOps practices. The result is a deployment that scales easily, integrates natively with existing CI/CD pipelines, and supports governing agents and securing departmental data.

Purpose and Audience

Protegrity AI Team Edition is intended for:

- Organizations seeking to protect data used in AI or analytics pipelines.

- Teams that require fast deployment cycles and simplified upgrades.

- Customers who need enterprise-grade data protection in a form that can start small, operate independently, and later scale into Protegrity AI Enterprise Edition.

Tech Preview Release Model

A Tech Preview is a controlled early release that allows customers to test new capabilities in real environments before general availability. It lets selected customers peek behind the curtain to try emerging features, validate real-world fit, and provide input on product development before the final, fully supported release. It is not feature-complete, may change based on input, and typically comes without SLA-grade support.

- Release Date: November 17, 2025

- Intended Use: Testing, training, demonstrations, and feedback.

- Known Limitations:
  - No Graphical User Interface (GUI).
  - Policy management limited to create/delete operations.
  - No backup/restore.
  - No audit store policy management.
  - Not upgradable to GA.
  - No license enforcement.

- Restrictions:
  - Not for production use.
  - Does not include all capabilities planned for version 1.0.
  - Setup may require direct assistance from Protegrity.

2 - Overview

The Protegrity AI Team Edition Tech Preview introduces a modern, container-based approach to data protection built on a microservices architecture. It enables organizations to evaluate how Protegrity’s methods, such as policy management, anonymization, discovery, and semantic controls, integrate into AI and analytics pipelines.

Architecture and Design Principles

Protegrity AI Team Edition delivers core Protegrity capabilities. This includes governance, discovery, protection, privacy, and semantic controls. It is provided in a lightweight, containerized form factor that emphasizes fast deployment, simplified operations, and consistent enforcement of data security policies across environments. It is designed around five engineering goals: ease of deployment, high availability, scalability, extensibility, and maintainability.

| Goal | Implementation Details |
|---|---|
| Ease of Deployment | OpenTofu templates provision the deployment environment (EKS, ECS, or Docker Compose) with minimal manual intervention. Helm charts deploy and configure all components for consistent, reproducible setups. Because each component runs as a container image, upgrades and patches follow standard CI/CD workflows. |
| High Availability | Kubernetes manages service health and redundancy automatically. No Trusted Appliance Cluster (TAC), external load balancers, or manual replication is required. |
| Scalability | The system scales horizontally and vertically through Kubernetes-native scale-up and scale-down mechanisms. Administrators can adjust resources dynamically as workloads grow or shrink, without redeployment. |
| Extensibility | New capabilities are introduced by adding new container images and Helm configurations, which allows incremental feature expansion without redesign. |
| Maintainability | Kubernetes simplifies lifecycle management. Updating a container image replaces the older version automatically, avoiding downtime and manual patching. |

Core Services

All deployments include a standardized set of common services, delivered as microservices, that provide routing, security, and audit capabilities for all features and protectors.

| Service | Description |
|---|---|
| Common Ingress Controller | The main entry point for all API and service traffic to the cluster. |
| Certificate Management | Manages and validates TLS certificates for inbound and inter-service communication. |
| Authentication and Authorization | Provides user and service credential validation with role-based access enforcement. |
| Routing to Feature Endpoints | Directs traffic to the appropriate running service or feature container. |
| Insight | Provides logging and auditing capabilities, using OpenSearch for event storage and OpenSearch Dashboards for visualization and reporting. |

Feature Set

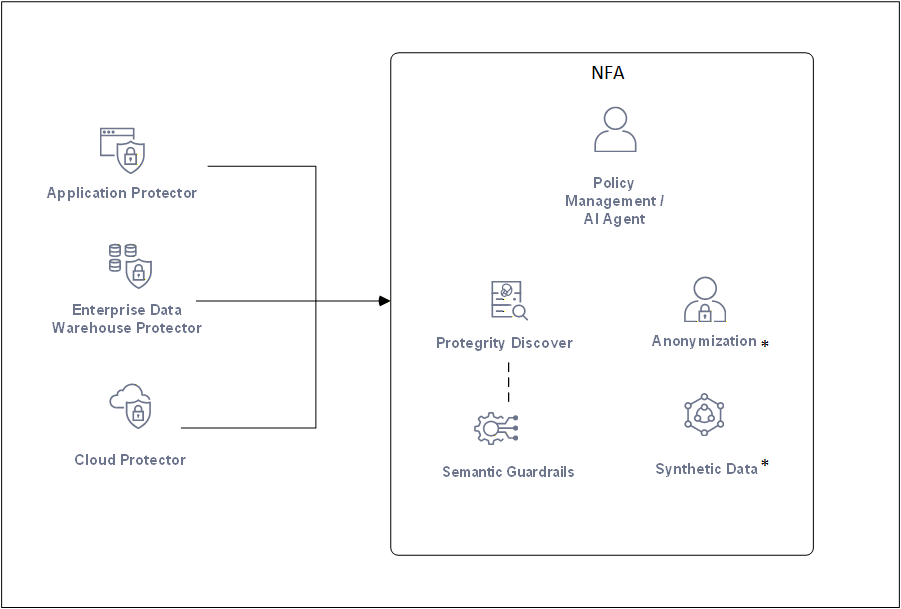

The Tech Preview release of Protegrity AI Team Edition includes a limited but functional subset of capabilities for evaluation.

The following table lists the features compatible with Protegrity AI Team Edition. Features marked with an asterisk (*) are available for purchase as add-ons.

| Feature | Description |
|---|---|
| Policy Management | Define and manage data protection policies that govern tokenization, masking, and anonymization. |
| Data Discovery | Automatically identify structured and unstructured sensitive data through pattern matching and machine learning classification. |
| Semantic Guardrails | Apply contextual and runtime safeguards to AI and analytics workflows to prevent data leakage or misuse. |
| Anonymization* | Apply statistical privacy models such as k-anonymity, l-diversity, and t-closeness to sensitive datasets. |
| Synthetic Data* | Generate tabular synthetic datasets for development, testing, and AI model validation without exposing real sensitive data. |

Protegrity Protectors

Protegrity AI Team Edition protectors enable organizations to embed data protection directly where data is processed: inside applications, analytics engines, or cloud-native data systems.

Application Protectors

Application protectors provide data protection directly within applications or runtime containers. They are suitable for teams developing secure APIs or microservices that handle sensitive data in languages such as Java, Python, or .NET.

| Name | Description | Part Number |
|---|---|---|
| Immutable Application Protector – Java Container | Protects data within Java-based containers running on platforms such as OpenShift and EKS. | ApplicationProtector_Java_RHUBI_K8S |
| Immutable Application Protector – REST Container | Provides REST-based protection services for containerized workloads. | REST_RHUBI-9-64_x86-64_K8S |
| Application Protector – Python | Protegrity Application Protector for Python environments. | ApplicationProtector_Linux-ALL-64_x86-64_PY-3.11 |
| Application Protector – Java | Standard Java runtime protector. | ApplicationProtector_Linux-ALL-64_x86-64_JRE-1.8-64 |
| Application Protector – .NET | Protegrity Application Protector for Microsoft .NET applications. | ApplicationProtector_WIN-ALL-64_x86-64_NET-STD-2.0-64 |

Cloud API Protector

The Cloud API protector extends Protegrity protection to AWS serverless and API-based workloads. It is typically used for securing transient data handled by AWS Lambda or similar function-based architectures.

| Name | Description | Part Number |
|---|---|---|
| CloudProtect – Cloud API – AWS | Protegrity CloudProtect using AWS Serverless Functions. | CP_SVRL-ALL-64_x86-64_AWS.API |

Cloud-Native Data Warehouse Protectors

Cloud-Native Data Warehouse protectors apply field-level protection inside analytics environments such as Snowflake, Redshift, and Athena. These protectors preserve query usability and analytical fidelity while maintaining data confidentiality.

| Name | Description | Part Number |
|---|---|---|
| Cloud Native Data Warehouse Protector – Snowflake | Integrates with Snowflake for secure, compliant analytics across clouds. | CP_SVRL-ALL-64_x86-64_AWS.Snowflake |
| Cloud Native Data Warehouse Protector – Redshift | Provides protection for Amazon Redshift queries and transformations. | CP_SVRL-ALL-64_x86-64_AWS.Redshift |
| Cloud Native Data Warehouse Protector – Athena | Applies protection to Amazon Athena query execution. | CP_SVRL-ALL-64_x86-64_AWS.Athena |

Big Data Protectors

Big Data protectors integrate with large-scale analytics and data lake environments to secure data during ETL, batch, or stream processing operations.

| Name | Description | Part Number |
|---|---|---|
| Big Data Protector – Amazon EMR | Provides data protection within Amazon EMR clusters. | BigDataProtector_Linux-ALL-64_x86-64_EMR-7.x-64 |
| Big Data Protector – Databricks | Enables tokenization and masking for Databricks across AWS, Azure, and GCP. | BigDataProtector_Linux-ALL-64_x86-64_AWS.Databricks-15.4-64 |
| Big Data Protector – CDP Data Hub | Supports Cloudera Data Platform (CDP) deployments across AWS, Azure, and GCP. | BigDataProtector_Linux-ALL-64_x86-64_AWS.Generic.CDP-Datahub-7.3-64 |

Features Included in the AI Team Edition

The following features are available with the AI Team Edition:

- Policy Management using AI Agent

- Data Discovery

- Semantic Guardrails

- Any one protector from each of the following families:
  - Application protector
  - Cloud protector
  - Big Data protector

The following features are additional add-ons that must be purchased separately:

- Anonymization

- Synthetic Data

3 - Features

Installing NFA

Before using the AI Team Edition features, install the NFA.

Installing the Gen AI features

Install the Gen AI features using either the provided automated installer or the manual steps. Use consistent namespaces; otherwise, features installed using the manual steps cannot be removed using the automated installer.

- Log in to the NFA CLI.
- Navigate to the deployment/iac-setup directory.
- Run the following command.

./install_features.sh

- Enter the number for the feature that must be installed.

Bootstrapping Policy Management

Note: This step is optional. Run it if you need to initialize the PIM and create sample data elements, datastores, policies, and so on for testing. The script does not deploy these resources; you decide what gets deployed.

Generating Access Token for Authentication

Navigate back to the root directory (deployment/) to run the bootstrap script:

# From iac-setup directory, go back to root
cd ..

# Generate access token
TOKEN=$(curl -s -i -X 'POST' 'https://eclipse.aws.protegrity.com/pty/v1/auth/login/token' \
  -H 'Content-Type: application/x-www-form-urlencoded' \
  -d 'loginname=admin' \
  -d 'password=Admin123!' \
  -k \
  | grep -i 'pty_access_jwt_token:' \
  | awk '{print $2}' \
  | tr -d '\r')

Note: Subsequent commands use the $TOKEN variable. Run the preceding block to initialize $TOKEN before you run the commands below.
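If the login fails, the token command above leaves $TOKEN empty without reporting an error. Later requests then fail with less obvious errors. The following minimal sketch wraps the same grep, awk, and tr pipeline and stops when no token was returned. It assumes a POSIX-style shell such as bash. The helper names extract_pty_token and require_token are hypothetical and are not part of the product.

```shell
# Hypothetical helper: reads raw HTTP response headers (as printed by
# curl -i) on stdin and prints the value of the pty_access_jwt_token header.
extract_pty_token() {
  grep -i 'pty_access_jwt_token:' | awk '{print $2}' | tr -d '\r'
}

# Hypothetical helper: returns non-zero when the token is empty.
require_token() {
  if [ -z "$1" ]; then
    echo "error: no pty_access_jwt_token header in the login response" >&2
    return 1
  fi
}

# Usage (same login request as above):
# TOKEN=$(curl -s -i -X 'POST' 'https://eclipse.aws.protegrity.com/pty/v1/auth/login/token' \
#   -H 'Content-Type: application/x-www-form-urlencoded' \
#   -d 'loginname=admin' -d 'password=Admin123!' -k | extract_pty_token)
# require_token "$TOKEN" || exit 1
```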

Running the Bootstrap Script

./bootstrap-pim.sh --host eclipse.aws.protegrity.com --token $TOKEN

Expected output:

Configured host: eclipse.aws.protegrity.com
Wait for devops to come alive
Devops is alive
Bootstrap environment...
Bootstrap done
Wait for hubcontroller to come alive
Hubcontroller is alive
Creating datastores...
Creating roles...
Adding members...
Creating custom alphabet...
Creating masks...
Creating data elements...
Creating policies...
Creating rules...
Creating trusted applications...
Finished!

Note: This example assumes that /etc/hosts contains an entry for eclipse.aws.protegrity.com that points to the IP address of the ingress load balancer, as described in Accessing the NFA UI.

Deploying Policies

After the PIM has been initialized and the resources have been created, you can deploy datastores, applications, and policies.

List available resources:

# List datastores

curl -k -H "Authorization: Bearer $TOKEN" -X GET https://eclipse.aws.protegrity.com/pty/v2/pim/datastores

# List applications

curl -k -H "Authorization: Bearer $TOKEN" -X GET https://eclipse.aws.protegrity.com/pty/v2/pim/applications

# List policies

curl -k -H "Authorization: Bearer $TOKEN" -X GET https://eclipse.aws.protegrity.com/pty/v2/pim/policies

Deploy a combination:

curl -k -H "Authorization: Bearer $TOKEN" -H "content-type: application/json" -X POST https://eclipse.aws.protegrity.com/pty/v2/pim/datastores/1/deploy -d '{"policies": ["1"], "applications": ["1"]}'

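In this request, 1 is the UID returned by the list requests above. It is the datastore UID in the URL path and the policy and application UID in the request body.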
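When you deploy several policies or applications, you can generate the JSON body instead of typing it by hand. This sketch assumes that the deploy endpoint accepts more than one UID in each array, which the array syntax suggests but this section does not confirm. deploy_payload and _quote_uids are hypothetical helpers.

```shell
# Hypothetical helper: wraps each comma-separated UID in double quotes and
# adds a space after each comma, for example 1,2 -> "1", "2".
_quote_uids() {
  printf '%s' "$1" | sed 's/[^,][^,]*/"&"/g; s/,/, /g'
}

# Hypothetical helper: builds the request body for
# POST /pty/v2/pim/datastores/<UID>/deploy from comma-separated UID lists.
# Example: deploy_payload 1,2 1 -> {"policies": ["1", "2"], "applications": ["1"]}
deploy_payload() {
  printf '{"policies": [%s], "applications": [%s]}\n' \
    "$(_quote_uids "$1")" "$(_quote_uids "$2")"
}

# Usage:
# curl -k -H "Authorization: Bearer $TOKEN" -H "content-type: application/json" \
#   -X POST https://eclipse.aws.protegrity.com/pty/v2/pim/datastores/1/deploy \
#   -d "$(deploy_payload 1 1)"
```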

Compatibility list

The following containers and versions are compatible with the AI Team Edition.

| Feature | Version |
|---|---|
| NFA | 0.9.0 |
| Data Discovery | ver |
| Gen AI | |
| Semantic Guardrails | ver |
| AI Agent | ver |
| Protectors | |
| Application Protectors | |
| Java | ver |
| Python | ver |
| .Net | ver |
| Big Data Protector | |
| Amazon EMR | ver |
| CDP-AWS-DataHub | ver |
| Containers | |
| Application Protector Java Container | 10.0 |
| REST Container | 10.0 |
| Cloud | |
| Product | ver |
| Gen AI Add-ons | |
| Anonymization | ver |
| Synthetic Data | ver |

3.1 - NFA

Beyond infrastructure, NFA introduces a suite of common microservices that act as the backbone for AI Team Edition features. These include ingress control for secure traffic routing, certificate management for request validation, and robust authentication and authorization services. NFA also integrates Insight for audit logging and analytics, leveraging OpenSearch and OpenSearch Dashboards for visualization and compliance reporting. On top of this foundation, AI Team Edition delivers advanced capabilities such as policy management, anonymization, data discovery, semantic guardrails, and synthetic data generation. All these features are orchestrated within the NFA cluster. This modular approach ensures scalability, security, and flexibility, making NFA a strategic enabler for organizations adopting cloud-first and containerized environments.

3.1.1 - Installing NFA

The New Foundational Architecture (NFA) is the core framework that forms the AI Team Edition. It is designed to deliver a modern, cloud-native experience for data security and governance. Built on Kubernetes, NFA uses a containerized architecture that simplifies deployment and scaling. Using OpenTofu scripts and Helm charts, administrators can stand up clusters with minimal manual intervention, ensuring consistency and reducing operational overhead.

3.1.1.1 - Prerequisites

Ensure that you have completed the following prerequisites before deploying the NFA.

Baseline EKS Cluster Setup

This section provides instructions for deploying the NFA baseline environment on an AWS EKS cluster using OpenTofu and the Make utility.

Software Prerequisites

Before you begin installing the NFA, ensure you have the following prerequisites met:

- IAM Roles: Contact your IT team to create the necessary IAM roles with the following permissions to create and manage AWS EKS resources:
  - Amazon EKS cluster IAM role: Required to manage the Kubernetes cluster. This role requires the following AWS managed policies:
    - AmazonEKSBlockStoragePolicy
    - AmazonEKSClusterPolicy
    - AmazonEKSComputePolicy
    - AmazonEKSLoadBalancingPolicy
    - AmazonEKSNetworkingPolicy
    - AmazonEKSVPCResourceController
    - AmazonEKSServicePolicy
    - AmazonEBSCSIDriverPolicy
  - Amazon EKS node IAM role: Required by the EKS worker nodes. This role requires the following AWS managed policies:
    - AmazonEBSCSIDriverPolicy
    - AmazonEC2ContainerRegistryReadOnly
    - AmazonEKS_CNI_Policy
    - AmazonEKSWorkerNodePolicy
    - AmazonSSMManagedInstanceCore


For more information about AWS managed policies, refer to the section AWS managed policies for Amazon Elastic Kubernetes Service in the AWS documentation.

- Jump box or local machine: Use a dedicated EC2 instance running RHEL 10, Debian 13, Debian 12, or Ubuntu 24.04 for deployment.
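To confirm that a jump box runs one of the listed distributions, you can read the standard /etc/os-release file. This is a minimal sketch. supported_jumpbox_os is a hypothetical helper, and it assumes the usual os-release ID values (rhel, debian, ubuntu).

```shell
# Hypothetical helper: succeeds if the os-release file describes RHEL 10,
# Debian 12 or 13, or Ubuntu 24.04, the jump box systems listed above.
supported_jumpbox_os() {
  local os_file os
  os_file=${1:-/etc/os-release}
  # os-release uses shell-compatible KEY=value lines, so source it in a
  # subshell to keep ID and VERSION_ID out of the caller's environment.
  os=$( . "$os_file" && printf '%s:%s' "$ID" "$VERSION_ID" ) || return 1
  case $os in
    rhel:10|rhel:10.*|debian:12|debian:13|ubuntu:24.04) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage:
# supported_jumpbox_os && echo "supported" || echo "not in the supported list"
```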

3.1.1.2 - Setting up and Deploying the NFA

Perform the following steps to set up and deploy the NFA:

Downloading and Extracting the Recipe for Deploying NFA

This section describes the steps to download and extract the recipe for deploying the NFA.

Note: If you have set up the jump box previously, run the make clean command first. This ensures that the local repository on the jump box and the clusters are cleaned up before you proceed with a new installation.

Download the Team-Edition-All-64_x86-64_0.9.0.12.tar archive from the repository and extract it:

# Create deployment directory

mkdir deployment && cd deployment

# Download the archive

wget https://artifactory.protegrity.com/artifactory/eclipse/eclipse-init/latest/Team-Edition-All-64_x86-64_0.9.0.12.tar

# Extract the archive

tar -xvf Team-Edition-All-64_x86-64_0.9.0.12.tar

Installing the NFA

The repository provides a bootstrap script that automatically installs or updates the following software on the jump box:

- AWS CLI - Required to communicate with your AWS account.

- OpenTofu - Required to manage infrastructure as code.

- kubectl - Required to communicate with the Kubernetes cluster.

- Helm - Required to manage Kubernetes packages.

- Make - Required to run the OpenTofu automation scripts.

- jq - Required to parse JSON.

The bootstrap script also checks if you have the required permissions on AWS. It then sets up the EKS cluster and installs the microservices required for deploying the NFA.

The bootstrap script asks for variables to be set to complete your deployment. Follow the instructions on the screen:

./bootstrap.sh

The script will prompt for the following variables. Press ENTER to accept the default value.

eks_cluster_name: Name of the EKS cluster. The default value is opencluster-v1. The following characters are allowed:

- Lowercase letters: a-z
- Numbers: 0-9
- Hyphens: -

The following are not allowed:

- Uppercase letters: A-Z
- Underscores: _
- Spaces
- Any special characters, such as / ? * + % ! @ # $ ^ & ( ) = [ ] { } : ; , .
- Leading or trailing hyphens
- More than 50 characters

For guidelines on creating an Amazon EKS cluster, refer to the section Create an Amazon EKS cluster in the Amazon EKS User Guide.

vpc_id: The script automatically queries for the available VPCs. Enter the ID of the VPC that you want to use.

private subnet ID: The script automatically queries for the available VPC subnets. You are prompted to enter two private subnet IDs. Specify two private subnet IDs that are in different Availability Zones. The script then automatically updates the VPC CIDR block based on your VPC details.

ingress_fqdn: Fully qualified domain name for your ingress. The default value is eclipse.aws.protegrity.com.

global_image_reg: Global image repository from which the container images are retrieved. The default value is artifactory.protegrity.com:443.

Registry user name: Enter the registry user name. If you are using Artifactory as the global image repository, leave this value blank.

Registry password: Enter the registry password. If you are using Artifactory as the global image repository, leave this value blank.

A variables.tf.bak backup file is created that saves a copy of all the values that you have specified for the variables. After you enter all the values, you are prompted to apply the changes to the variables.tf file. Type yes to apply the changes.

Note: The cluster creation process can take 10-15 minutes.

If the session is terminated during installation due to network issues, power outage, and so on, then the installation stops. To restart the installation, run the following commands:

make clean

./bootstrap.sh

Accessing the NFA UI

To access the Web UI, map the ingress hostname to the load balancer's IP address in your local hosts file.

Get Ingress Details: Find the hostname and the AWS load balancer address.

kubectl get ingress -A

The output looks similar to the following:

NAME      CLASS    HOSTS                        ADDRESS                                        PORTS   AGE
eclipse   <none>   eclipse.aws.protegrity.com   internal-a1412...us-east-1.elb.amazonaws.com   80      20m

Find the Load Balancer IP: Use ping on the ADDRESS from the output above to resolve its IP address.

ping internal-a1412824c35d942aa9373733bd3b8cca-391878848.us-east-1.elb.amazonaws.com

Copy the IP address from the ping output (for example, 192.0.2.1).

Update Your Hosts File: Add an entry to your local hosts file to map the hostname to the IP address.

- Linux/macOS: /etc/hosts
- Windows: C:\Windows\System32\drivers\etc\hosts

Example entry:

192.0.2.1 eclipse.aws.protegrity.com

Access the UI: You can now access the Web UI in your browser.

- URL: https://eclipse.aws.protegrity.com
- Default user credentials: user: admin, password: Admin123!
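You can script the hosts-file step so that running it again does not add duplicate lines. This is a minimal sketch with the following assumptions:

- add_hosts_entry is a hypothetical helper.
- getent is available. It is part of glibc on Linux but not available on macOS.
- Writing to /etc/hosts requires root privileges.

AWS load balancer IP addresses can change over time. For this reason, the sketch reports an existing entry for the hostname instead of overwriting it, so that you can review that entry manually.

```shell
# Hypothetical helper: appends "<ip> <fqdn>" to a hosts file unless a
# non-comment line already maps that hostname.
add_hosts_entry() {
  local ip=$1 fqdn=$2 hosts_file=${3:-/etc/hosts}
  # Look for the hostname as a whole field after the address field.
  if [ -f "$hosts_file" ] && awk -v h="$fqdn" '
      !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
      END { exit !found }' "$hosts_file"; then
    echo "$fqdn is already mapped in $hosts_file; review that entry" >&2
    return 0
  fi
  printf '%s %s\n' "$ip" "$fqdn" >> "$hosts_file"
}

# Usage (as root), resolving the ADDRESS from kubectl get ingress -A:
# LB_IP=$(getent hosts internal-a1412824c35d942aa9373733bd3b8cca-391878848.us-east-1.elb.amazonaws.com | awk '{print $1; exit}')
# add_hosts_entry "$LB_IP" eclipse.aws.protegrity.com
```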

Accessing the NFA CLI

Prerequisites

- SSH Keys: You need the SSH private key that corresponds to the public key configured in the pty-cli pod.
- Network Access: Ensure that you have network connectivity to the cluster.
- Hosts File Update: As for Web UI access, update your hosts file to map eclipse.aws.protegrity.com to the load balancer IP.

The private key for accessing the CLI pod is the ssh_host_rsa_key file in the iac-setup directory.

For Linux/macOS Users

From the iac-setup directory:

ssh -i ssh_host_rsa_key -p 22 ptyitusr@eclipse.aws.protegrity.com

With options to skip host key checking:

ssh -i ssh_host_rsa_key -o StrictHostKeyChecking=no -o UserKnownHostsFile=/dev/null -p 22 ptyitusr@eclipse.aws.protegrity.com

For Windows Users

Using the Windows SSH client (Windows 10/11 with OpenSSH):

ssh -i C:\path\to\iac-setup\ssh_host_rsa_key -p 22 ptyitusr@eclipse.aws.protegrity.com

Using PuTTY:

- Host Name: eclipse.aws.protegrity.com
- Port: 22
- Connection Type: SSH
- Under Connection > SSH > Auth, browse and select your private key file (.ppk format).
- Username: ptyitusr

CLI Usage

Once connected, you see the Protegrity CLI welcome banner. You are prompted for:

- Username: Your Protegrity application username (default: admin)
- Password: Your Protegrity application password (default: Admin123!)

The CLI supports two main command categories:

- pim: Policy Information Management commands for data protection policies
- admin: User, role, permission, and group management commands

Getting Started with the CLI

For more information about the CLI, refer to the section Protegrity Command Line Interface (CLI) Reference.

Installing Protegrity AI Team Edition Features

This section describes how to install the Protegrity AI Team Edition features on the NFA.

- Run the following command to navigate to the iac-setup directory.

cd deployment/iac-setup

- Run the following command to install the Protegrity AI Team Edition features.

# From iac-setup directory
./install_features.sh

The Protegrity AI Team Edition Features installer for Tech Preview appears. It shows the following options:

--- Install ---
1. Install Data Discovery
2. Install Semantic Guardrails
3. Install Anonymization
4. Install Synthetic Data
--- Uninstall ---
5. Uninstall Data Discovery
6. Uninstall Semantic Guardrails
7. Uninstall Anonymization
8. Uninstall Synthetic Data
--- Other ---
9. List Installed Products
10. Exit

Type a value from 1 to 4 to install the corresponding Protegrity AI Team Edition feature. For example, if you type 1, the installer installs Data Discovery on the NFA. After the command is executed, press ENTER to continue.

Note: You must install Data Discovery before Semantic Guardrails. If you try to install Semantic Guardrails without Data Discovery, you are first prompted to install Data Discovery.

Type a value from 5 to 8 to uninstall a feature that you have already installed. A prompt appears asking you to confirm the uninstallation. Type y to uninstall the feature. After the command is executed, press ENTER to continue.

Type 9 to list the installed features. The installer checks the status of all the products and then displays the installation status for each product.

Type 10 to exit the installer.

The installation logs are saved in the /tmp/protegrity_installer.log file.


Troubleshooting

- Permission denied (publickey): Ensure you’re using the correct private key that matches the authorized_keys in the pod

- Connection refused: Verify the load balancer IP and hosts file configuration

- Key format issues: Ensure your private key is in the correct format (OpenSSH format for Linux/macOS, .ppk for PuTTY)
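A private key file that other users can read is a common cause of the Permission denied (publickey) error. OpenSSH ignores such keys and warns that the key file is unprotected. The following sketch checks for this before you connect. check_key_perms is a hypothetical helper. It assumes GNU stat; on macOS, stat -f %Lp prints the same octal mode.

```shell
# Hypothetical helper: fails if the key file grants any group or other
# permissions, which OpenSSH rejects for private keys.
check_key_perms() {
  local key=$1 mode
  # GNU stat prints the permission bits in octal, for example 600 or 644.
  mode=$(stat -c %a "$key") || return 1
  # A leading 0 makes shell arithmetic treat the mode as octal.
  if [ $(( 0$mode & 077 )) -ne 0 ]; then
    echo "$key has mode $mode; run: chmod 600 $key" >&2
    return 1
  fi
}

# Usage, from the iac-setup directory:
# check_key_perms ssh_host_rsa_key && ssh -i ssh_host_rsa_key -p 22 ptyitusr@eclipse.aws.protegrity.com
```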

Management commands

The following section lists the commands to manage the Kubernetes cluster.

Available Makefile Targets

From the iac-setup directory:

- make or make run: Deploys the entire stack.
- make clean: Destroys all resources created by the setup and cleans up Helm and Kubernetes components.
- make check-tools: Verifies that all required CLI tools are installed and available in your PATH.

Cleaning up the EKS Resources

To destroy all created resources, including the EKS cluster and related components, run the following commands.

# Navigate to the setup directory

cd iac-setup

# Clean up all resources

make clean

3.1.2 - Protegrity REST APIs

The Protegrity REST APIs include the following APIs:

- Policy Management REST APIs: The Policy Management REST APIs are used to create or manage policies.
- Encrypted Resilient Package REST APIs: The Encrypted Resilient Package REST APIs include the REST API that is used to encrypt and export a resilient package, which is used by the resilient protectors.

For more information on how the REST API is used to export the encrypted resilient package in an immutable policy deployment, refer to the section DevOps Approach for Application Protector.

3.1.2.1 - Accessing the Protegrity REST APIs

The following section lists the requirements for accessing the Protegrity REST APIs.

Available endpoints - Protegrity has enabled the following endpoints to access the REST APIs.

- Base URL
  - https://<NFA IP address or Hostname>/pty/<Version>/<API>

Where:

- NFA IP address or Hostname: Specifies the IP address or hostname of the NFA ingress, for example, eclipse.aws.protegrity.com.
- Version: Specifies the version of the API, for example, v1 or v2.
- API: Specifies the endpoint of the REST API, for example, pim or auth.
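Every endpoint used in this document follows this pattern. Examples include https://eclipse.aws.protegrity.com/pty/v2/pim/datastores and https://eclipse.aws.protegrity.com/pty/v1/auth/login/token. A small helper can assemble such URLs consistently in scripts. pty_url is a hypothetical name used only for illustration.

```shell
# Hypothetical helper: builds https://<host>/pty/<version>/<api>[/<path>].
pty_url() {
  local host=$1 version=$2 api=$3 path=${4:-}
  local url="https://${host}/pty/${version}/${api}"
  # Append the optional resource path without doubling the slash.
  if [ -n "$path" ]; then
    url="${url}/${path#/}"
  fi
  printf '%s\n' "$url"
}

# Usage:
# curl -k -H "Authorization: Bearer $TOKEN" "$(pty_url eclipse.aws.protegrity.com v2 pim datastores)"
```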

Authentication - You can access the REST APIs using basic authentication, client certificates, or tokens. The authentication depends on the type of REST API that you are using. For more information about accessing the REST APIs using these authentication mechanisms, refer to the section Accessing REST API Resources.

Authorization - The roles assigned to the user must have the required permissions for accessing the REST APIs. For more information about the roles and permissions required, refer to the section Managing Roles.

3.1.2.2 - View the Protegrity REST API Specification Document

The steps in this section use Docker to download the Swagger Editor image and launch it within a Docker container.

For more information about Docker, refer to the Docker documentation.

The following example uses Swagger Editor to view the REST API specification document. In this example, JSON Web Token (JWT) is used to authenticate the REST API.

Install and start the Swagger Editor.

Download the Swagger Editor image using the following command.

docker pull swaggerapi/swagger-editor

Launch the Docker container using the following command.

docker run -d -p 8888:8080 swaggerapi/swagger-editor

Open the following address in a browser window to access the Swagger Editor on the specified host port.

http://localhost:8888/

Download the REST API specification document using the following command.

curl -H "Authorization: Bearer ${TOKEN}" "https://<NFA IP address or Hostname>/pty/<Version>/<API>/doc" -H "accept: application/x-yaml" --output api-doc.yaml

In this command:

- TOKEN is the environment variable that contains the JWT token used to authenticate the REST API.

- <Version> is the version number of the API. For example, v1 or v2.

- <API> is the API for which you want to download the OpenAPI specification document. For example, specify the value as pim to download the OpenAPI specification for the Policy Management REST API. Similarly, specify the value as auth to download the OpenAPI specification for the Authentication and Token Management API.

For more information about the Policy Management REST APIs, refer to the section Using the Policy Management REST APIs.

For more information about the Authentication and Token Management REST APIs, refer to the section Using the Authentication and Token Management REST APIs.

Drag and drop the downloaded api-doc.yaml file into the browser window of the Swagger Editor.

Generating the REST API Samples Using the Swagger Editor

Perform the following steps to generate samples using the Swagger Editor.

Open the api-doc.yaml file in the Swagger Editor.

On the Swagger Editor UI, click the required API request.

Click Try it out.

Enter the parameters for the API request.

Click Execute.

The generated Curl command and the URL for the request appear in the Responses section.

3.1.2.3 - Using the Common REST API Endpoints

The following section specifies the common operations that are applicable to all the Protegrity REST APIs.

The Base URL for each API will change depending on the version of the API being used. The following table specifies the version that you must use when executing the common operations for each API.

| REST API | Version in the Base URL <Version> |
|---|---|
| Policy Management | v2 |
| Encrypted Resilient Package | v1 |
| Authentication and Token Management | v1 |
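When scripting the common operations, the version can be looked up from the API name. The following sketch encodes the table above. The function name `api_version` is hypothetical, and the API names `pim`, `rps`, and `auth` are the `<API>` values used elsewhere in this section.

```shell
#!/bin/sh
# Map an API name to the version used in its Base URL, per the table above.
api_version() {
    case $1 in
        pim)  echo v2 ;;   # Policy Management
        rps)  echo v1 ;;   # Encrypted Resilient Package
        auth) echo v1 ;;   # Authentication and Token Management
        *)    echo "unknown API: $1" >&2; return 1 ;;
    esac
}

# Example (ESA_HOST is a hypothetical variable):
#   curl -H "Authorization: Bearer ${TOKEN}" \
#     "https://${ESA_HOST}/pty/$(api_version pim)/pim/health"
```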

Common REST API Endpoints

The following table lists the common operations for the Protegrity REST APIs.

| Endpoint | Method | Description |
|---|---|---|
| /version | GET | Retrieves the service versions that are supported by the Protegrity REST APIs on the ESA. |
| /health | GET | Retrieves the health information for the Protegrity REST APIs and identifies whether the corresponding service is running. |
| /doc | GET | Retrieves the API specification document. |
| /log | GET | Retrieves the current log level set for the corresponding REST API logs. |
| /log | POST | Changes the log level for the REST API service during run-time. The level set through this resource persists until the corresponding service is restarted. This log level overrides the log level defined in the configuration. |

Retrieving the Supported Service Versions

This API retrieves the service versions that are supported by the corresponding REST API service on the ESA.

- Base URL

- https://{ESA IP address}/pty/<Version>/<API>

- Path

- /version

- Method

- GET

CURL request syntax

curl -H "Authorization: Bearer <TOKEN>" -X GET "https://{ESA IP address}/pty/<Version>/<API>/version"

In this command, <TOKEN> indicates the JWT token used for authenticating the API.

Alternatively, you can store the JWT token in an environment variable named TOKEN, as shown in the following command.

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://{ESA IP address}/pty/<Version>/<API>/version"

Authentication credentials

TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

Sample CURL request

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://10.10.101.43/pty/v1/rps/version"

This sample request uses the JWT token authentication.

Sample CURL response

{"version":"1.8.1","buildVersion":"1.8.1-alpha+605.gfe6b1d.master"}
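To use the version in a script, the `version` field can be extracted from the response without extra tools. This sketch relies on `sed` text matching and assumes the compact, single-line format of the sample response. A JSON tool such as `jq` is more robust if it is available. `service_version` and `ESA_HOST` are hypothetical names.

```shell
#!/bin/sh
# Print the "version" field from a /version response such as
#   {"version":"1.8.1","buildVersion":"1.8.1-alpha+605.gfe6b1d.master"}
# Plain text matching; "buildVersion" does not match because the key
# must be exactly "version" (lowercase, in quotes).
service_version() {
    sed -n 's/.*"version"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}

# Hypothetical usage against a live service:
#   curl -s -H "Authorization: Bearer ${TOKEN}" \
#     "https://${ESA_HOST}/pty/v1/rps/version" | service_version
```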

Retrieving the Service Health Information

This API request retrieves the health information of the REST API service and identifies whether the service is running.

- Base URL

- https://{ESA IP address}/pty/<Version>/<API>

- Path

- /health

- Method

- GET

CURL request syntax

curl -H "Authorization: Bearer <TOKEN>" -X GET "https://{ESA IP address}/pty/<Version>/<API>/health"

In this command, <TOKEN> indicates the JWT token used for authenticating the API.

Alternatively, you can store the JWT token in an environment variable named TOKEN, as shown in the following command.

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://{ESA IP address}/pty/<Version>/<API>/health"

Authentication credentials

TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

Sample CURL request

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://10.10.101.43/pty/v2/pim/health"

This sample request uses the JWT token authentication.

Sample CURL response

{
"isHealthy" : true
}

Where,

- isHealthy: true - Indicates that the service is up and running.

- isHealthy: false - Indicates that the service is down.
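A common use of `/health` is to wait for a service to come up before sending other requests. The following sketch polls the endpoint and checks for `"isHealthy": true`. The function names, the `ESA_HOST` variable, the 30-attempt limit, and the 5-second delay are all illustrative assumptions.

```shell
#!/bin/sh
# Return success if a /health response reports "isHealthy": true.
is_healthy() {
    printf '%s' "$1" | grep -Eq '"isHealthy"[[:space:]]*:[[:space:]]*true'
}

# Poll <Version>/<API>/health until it reports healthy or attempts run out.
# ESA_HOST and TOKEN are assumed to be set in the environment.
wait_until_healthy() {   # wait_until_healthy <Version> <API> [attempts]
    url="https://${ESA_HOST}/pty/$1/$2/health"
    attempts=${3:-30}
    while [ "$attempts" -gt 0 ]; do
        body=$(curl -s -H "Authorization: Bearer ${TOKEN}" "$url")
        if is_healthy "$body"; then
            return 0
        fi
        attempts=$((attempts - 1))
        sleep 5
    done
    return 1
}

# Example: wait_until_healthy v2 pim && echo "Policy Management is up"
```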

Retrieving the API Specification Document

This API request retrieves the API specification document.

- Base URL

- https://{ESA IP address}/pty/<Version>/<API>

- Path

- /doc

- Method

- GET

CURL request syntax

curl -H "Authorization: Bearer <TOKEN>" -X GET "https://{ESA IP address}/pty/<Version>/<API>/doc"

In this command, <TOKEN> indicates the JWT token used for authenticating the API.

Alternatively, you can store the JWT token in an environment variable named TOKEN, as shown in the following command.

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://{ESA IP address}/pty/<Version>/<API>/doc"

Authentication credentials

TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

Sample CURL requests

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://10.10.101.43/pty/v1/rps/doc"

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://10.10.101.43/pty/v1/rps/doc" -o "rps.yaml"

These sample requests use the JWT token authentication.

Sample CURL responses

The Encrypted Resilient Package API specification document is displayed as a response. If you specify the “-o” parameter in the CURL request, then the API specification is saved to the file specified in the command, such as rps.yaml in the second sample request. You can use the Swagger UI to view the API specification document.

Retrieving the Log Level

This API request retrieves the current log level set for the REST API service logs.

- Base URL

- https://{ESA IP address}/pty/<Version>/<API>

- Path

- /log

- Method

- GET

CURL request syntax

curl -H "Authorization: Bearer <TOKEN>" -X GET "https://{ESA IP address}/pty/<Version>/<API>/log"

In this command, <TOKEN> indicates the JWT token used for authenticating the API.

Alternatively, you can store the JWT token in an environment variable named TOKEN, as shown in the following command.

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://{ESA IP address}/pty/<Version>/<API>/log"

Authentication credentials

TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

Sample CURL request

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://10.10.101.43/pty/v2/pim/log"

This sample request uses the JWT token authentication.

Sample CURL response

{
"level": "INFO"
}

Setting Log Level for the REST API Service Log

This API request changes the REST API service log level during run-time. The level set through this resource persists until the corresponding service is restarted. This log level overrides the log level defined in the configuration.

- Base URL

- https://{ESA IP address}/pty/<Version>/<API>

- Path

- /log

- Method

- POST

CURL request syntax

curl -X POST "https://{ESA IP Address}/pty/<Version>/<API>/log" -H "Authorization: Bearer <TOKEN>" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"level\":\"log level\"}"

In this command, <TOKEN> indicates the JWT token used for authenticating the API.

Alternatively, you can store the JWT token in an environment variable named TOKEN, as shown in the following command.

curl -X POST "https://{ESA IP Address}/pty/<Version>/<API>/log" -H "Authorization: Bearer ${TOKEN}" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"level\":\"log level\"}"

Authentication credentials

TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

Request body elements

level

Specifies the log level to set. The log level can be set to SEVERE, WARNING, INFO, CONFIG, FINE, FINER, or FINEST.

Sample CURL request

curl -X POST "https://{ESA IP Address}/pty/v1/rps/log" -H "Authorization: Bearer ${TOKEN}" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"level\":\"SEVERE\"}"

This sample request uses the JWT token authentication.

Sample response

The log level is set successfully.
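Because only the values listed above are accepted, a script can validate the level before it sends the request. In this sketch, `log_level_body` and `set_log_level` are hypothetical helper names, and `ESA_HOST` and `TOKEN` are assumed to be set in the environment.

```shell
#!/bin/sh
# Build the JSON body for POST /log after checking the level against the
# documented values. Returns non-zero for any other value.
log_level_body() {
    case $1 in
        SEVERE|WARNING|INFO|CONFIG|FINE|FINER|FINEST)
            printf '{"level":"%s"}\n' "$1" ;;
        *)
            echo "invalid log level: $1" >&2
            return 1 ;;
    esac
}

# Hypothetical wrapper: set_log_level <Version> <API> <LEVEL>
set_log_level() {
    body=$(log_level_body "$3") || return 1
    curl -X POST "https://${ESA_HOST}/pty/$1/$2/log" \
        -H "Authorization: Bearer ${TOKEN}" \
        -H "accept: application/json" \
        -H "Content-Type: application/json" \
        -d "$body"
}

# Example: set_log_level v1 rps FINE
```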

3.1.2.4 - Using the Policy Management REST APIs

The user accessing these APIs must have the Security Officer permission for write access and the Security Viewer permission for read-only access.

For more information about the roles and permissions required, refer to the section Managing Roles.

The Policy Management API uses the v2 version.

If you want to perform common operations using the Policy Management REST API, then refer to the section Using the Common REST API Endpoints.

The following table provides section references that explain usage of some of the Policy Management REST APIs. It includes an example workflow to work with the Policy Management functions. If you want to view all the Policy Management APIs, then use the /doc API to retrieve the API specification.

| REST API | Section Reference |
|---|---|
| Initialize Policy Management | Initializing the Policy Management |
| Create an empty manual role that accepts all users | Creating a Manual Role |
| Create data elements | Creating Data Elements |
| Create a policy | Creating Policy |
| Add roles and data elements to the policy | Adding Roles and Data Elements to a Policy |
| Create a default data store | Creating a Default Data Store |
| Deploy the data store | Deploying the Data Store |
| Get the deployment information | Getting the Deployment Information |

Initializing the Policy Management

This section explains how you can initialize Policy Management to create the key-related data and the policy repository.

- Base URL

- https://{ESA IP address or Hostname}/pty/v2

- Authentication credentials

- TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path

- /pim/init

- Method

- POST

Sample Request

curl -H "Authorization: Bearer ${TOKEN}" -X POST "https://{ESA IP address or Hostname}:443/pty/v2/pim/init" -H "accept: application/json"

This sample request uses the JWT token authentication.

Creating a Manual Role

This section explains how you can create a manual role that accepts all the users.

For more information about working with roles, refer to the section Working with Roles.

- Base URL

- https://{ESA IP address or Hostname}/pty/v2

- Authentication credentials

- TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path

- /pim/roles

- Method

- POST

Sample Request

curl -H "Authorization: Bearer ${TOKEN}" -X POST "https://{ESA IP address or Hostname}:443/pty/v2/pim/roles" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"name\":\"ROLE\",\"mode\":\"MANUAL\",\"allowAll\": true}"

This sample request uses the JWT token authentication.

Creating Data Elements

This section explains how you can create data elements.

For more information about working with data elements, refer to the section Working with Data Elements.

- Base URL

- https://{ESA IP address or Hostname}/pty/v2

- Authentication credentials

- TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path

- /pim/dataelements

- Method

- POST

Sample Request

curl -H "Authorization: Bearer ${TOKEN}" -X POST "https://{ESA IP address or Hostname}:443/pty/v2/pim/dataelements" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"name\": \"DE_ALPHANUM\",\"description\": \"DE_ALPHANUM\",\"alphaNumericToken\":{\"tokenizer\":\"SLT_1_3\",\"fromLeft\": 0,\"fromRight\": 0,\"lengthPreserving\": true, \"allowShort\": \"YES\"}}"

This sample request uses the JWT token authentication.

Creating Policy

This section explains how you can create a policy.

For more information about working with policies, refer to the section Creating Policies.

- Base URL

- https://{ESA IP address or Hostname}/pty/v2

- Authentication credentials

- TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path

- /pim/policies

- Method

- POST

Sample Request

curl -H "Authorization: Bearer ${TOKEN}" -X POST "https://{ESA IP address or Hostname}:443/pty/v2/pim/policies" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"name\":\"POLICY\",\"description\": \"POLICY\", \"template\":{\"access\":{\"protect\":true,\"reProtect\":true,\"unProtect\":true},\"audit\":{\"success\":{\"protect\":false,\"reProtect\":false,\"unProtect\":false},\"failed\":{\"protect\":false,\"reProtect\":false,\"unProtect\":false}}}}"

This sample request uses the JWT token authentication.

Adding Roles and Data Elements to a Policy

This section explains how you can add roles and data elements to a policy.

For more information about adding roles and data elements to a policy, refer to the sections Adding Data Elements to Policy and Adding Roles to Policy respectively.

- Base URL

- https://{ESA IP address or Hostname}/pty/v2

- Authentication credentials

- TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path

- /pim/policies/1/rules

- Method

- POST

Sample Request

curl -H "Authorization: Bearer ${TOKEN}" -X POST "https://{ESA IP address or Hostname}:443/pty/v2/pim/policies/1/rules" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"role\":\"1\",\"dataElement\":\"1\",\"noAccessOperation\":\"EXCEPTION\",\"permission\":{\"access\":{\"protect\":true,\"reProtect\":true,\"unProtect\":true},\"audit\":{\"success\":{\"protect\":false,\"reProtect\":false,\"unProtect\":false},\"failed\":{\"protect\":false,\"reProtect\":false,\"unProtect\":false}}}}"

This sample request uses the JWT token authentication.

Creating a Default Data Store

This section explains how you can create a default data store.

For more information about working with data stores, refer to the section Creating a Data Store.

- Base URL

- https://{ESA IP address or Hostname}/pty/v2

- Authentication credentials

- TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path

- /pim/datastores

- Method

- POST

Sample Request

curl -H "Authorization: Bearer ${TOKEN}" -X POST "https://{ESA IP address or Hostname}:443/pty/v2/pim/datastores" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"name\":\"DS\",\"description\": \"DS\", \"default\":true}"

This sample request uses the JWT token authentication.

Deploying the Data Store

This section explains how you can deploy policies or trusted applications linked to a specific data store or multiple data stores.

For more information about deploying the Data Store, refer to the section Deploying Data Stores to Protectors.

Deploying a Specific Data Store

This section explains how you can deploy policies and trusted applications linked to a specific data store. The specifications provided for the specific data store are applied and become the end result.

Note: If you deploy an array with empty policies or trusted applications, or both, then the connected protectors contain empty definitions for these respective items.

- Base URL

- https://{ESA IP address or Hostname}/pty/v2

- Authentication credentials

- TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path

- /pim/datastores/{dataStoreUid}/deploy

- Method

- POST

Sample Request

curl -H "Authorization: Bearer ${TOKEN}" -X POST "https://{ESA IP address or Hostname}:443/pty/v2/pim/datastores/{dataStoreUid}/deploy" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"policies\":[\"1\"],\"applications\":[\"1\"]}"

This sample request uses the JWT token authentication.

Deploying Data Stores

This section explains how you can deploy multiple data stores in a single request. Each data store in the request can link policies, trusted applications, or both for the deployment.

Note: If you deploy a data store containing an array with empty policies or trusted applications, or both, then the connected protectors contain empty definitions for these respective items.

- Base URL

- https://{ESA IP address or Hostname}/pty/v2

- Authentication credentials

- TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path

- /pim/deploy

- Method

- POST

Sample Request

curl -H "Authorization: Bearer ${TOKEN}" -X POST "https://{ESA IP address}:443/pty/v2/pim/deploy" -H "accept: application/json" -H "Content-Type: application/json" -d "{\"dataStores\":[{\"uid\":\"1\",\"policies\":[\"1\"],\"applications\":[\"1\"]},{\"uid\":\"2\",\"policies\":[\"2\"],\"applications\":[\"2\"]}]}"

This sample request uses the JWT token authentication.
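When many data stores are deployed together, writing the request body by hand is error-prone. The following sketch generates the body from `uid:policy:application` triples. The function name and the triple format are assumptions. Each data store here gets exactly one policy and one trusted application, whereas real requests can list several or none.

```shell
#!/bin/sh
# Build the /pim/deploy request body from uid:policy:application triples.
# Example: deploy_body 1:1:1 2:2:2
deploy_body() {
    out='{"dataStores":['
    sep=''
    for spec in "$@"; do
        uid=${spec%%:*}       # text before the first colon
        rest=${spec#*:}       # text after the first colon
        policy=${rest%%:*}
        app=${rest#*:}
        out="${out}${sep}{\"uid\":\"${uid}\",\"policies\":[\"${policy}\"],\"applications\":[\"${app}\"]}"
        sep=','
    done
    printf '%s]}\n' "$out"
}

# Hypothetical usage (ESA_HOST and TOKEN assumed set):
#   curl -H "Authorization: Bearer ${TOKEN}" -X POST "https://${ESA_HOST}:443/pty/v2/pim/deploy" \
#     -H "accept: application/json" -H "Content-Type: application/json" -d "$(deploy_body 1:1:1 2:2:2)"
```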

Getting the Deployment Information

This section explains how you can check the complete deployment information. This service returns the list of the data stores with the connected policies and trusted applications.

Note: The result might contain data store information that is pending deployment after combining the Policy Management operations performed through the ESA Web UI and PIM API.

- Base URL

- https://{ESA IP address or Hostname}/pty/v2

- Authentication credentials

- TOKEN - Environment variable containing the JWT token.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path

- /pim/deploy

- Method

- GET

Sample Request

curl -H "Authorization: Bearer ${TOKEN}" -X GET "https://{ESA IP address or Hostname}:443/pty/v2/pim/deploy" -H "accept: application/json"

This sample request uses the JWT token authentication.
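The requests in this section can be chained into one script that follows the example workflow from initialization to checking the deployment. This sketch reuses the request bodies from the samples above. The IDs (`1`) and `DS_UID` are placeholders that, in practice, come from the responses of the earlier calls. `pim_call`, `run_workflow`, `ESA_HOST`, and `DRY_RUN` are hypothetical names. With `DRY_RUN=1`, the script only prints each request.

```shell
#!/bin/sh
# Sketch of the Policy Management example workflow. ESA_HOST and TOKEN are
# assumed to be set. IDs are placeholders taken from the samples.

pim_call() {   # pim_call <METHOD> <path> [json-body]
    url="https://${ESA_HOST}/pty/v2/pim$2"
    if [ "${DRY_RUN:-0}" = 1 ]; then
        printf '%s %s %s\n' "$1" "$url" "${3-}"   # print instead of sending
        return 0
    fi
    if [ -n "${3-}" ]; then
        curl -sS --fail -X "$1" "$url" \
            -H "Authorization: Bearer ${TOKEN}" \
            -H "accept: application/json" \
            -H "Content-Type: application/json" -d "$3"
    else
        curl -sS --fail -X "$1" "$url" \
            -H "Authorization: Bearer ${TOKEN}" \
            -H "accept: application/json"
    fi
}

# Permission block shared by the policy template and the rule in the samples.
ACCESS='{"protect":true,"reProtect":true,"unProtect":true}'
NO_AUDIT='{"protect":false,"reProtect":false,"unProtect":false}'
PERMS="{\"access\":${ACCESS},\"audit\":{\"success\":${NO_AUDIT},\"failed\":${NO_AUDIT}}}"

run_workflow() {
    pim_call POST /init &&
    pim_call POST /roles '{"name":"ROLE","mode":"MANUAL","allowAll":true}' &&
    pim_call POST /dataelements '{"name":"DE_ALPHANUM","description":"DE_ALPHANUM","alphaNumericToken":{"tokenizer":"SLT_1_3","fromLeft":0,"fromRight":0,"lengthPreserving":true,"allowShort":"YES"}}' &&
    pim_call POST /policies "{\"name\":\"POLICY\",\"description\":\"POLICY\",\"template\":${PERMS}}" &&
    pim_call POST /policies/1/rules "{\"role\":\"1\",\"dataElement\":\"1\",\"noAccessOperation\":\"EXCEPTION\",\"permission\":${PERMS}}" &&
    pim_call POST /datastores '{"name":"DS","description":"DS","default":true}' &&
    pim_call POST "/datastores/${DS_UID:-1}/deploy" '{"policies":["1"],"applications":["1"]}' &&
    pim_call GET /deploy
}

# Example: DRY_RUN=1 ESA_HOST=esa.example.com run_workflow
```

Stopping at the first failed call (`curl --fail` combined with `&&`) keeps later steps from running against a partially created policy.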

3.1.2.5 - Using the Encrypted Resilient Package REST APIs

The Encrypted Resilient Package API is only used by the Immutable Resilient protectors.

Before you begin:

- Ensure that you understand the concept of resilient protectors and why a resilient package is necessary.

For more information on how the REST API is used to export the encrypted resilient package in an immutable policy deployment, refer to the section DevOps Approach for Application Protector.

- Ensure that the RPS service is running on the ESA.

The user accessing this API must have the Export Resilient Package permission.

For more information about the roles and permissions required, refer to the section Managing Roles.

The Encrypted Resilient Package API uses the v1 version.

If you want to perform common operations using the Encrypted Resilient Package API, then refer to the section Using the Common REST API Endpoints.

The following table provides a section reference to the Encrypted Resilient Package API.

| REST API | Section Reference |
|---|---|
| Exporting the resilient package | Exporting Resilient Package Using GET Method |

Exporting Resilient Package Using GET Method

This API request exports the resilient package that can be used with resilient protectors. You can use Basic authentication, certificate authentication, or JWT authentication to export the encrypted resilient package.

Warning: Do not modify the package that has been exported using the RPS Service API. If you modify the exported package, then the package will get corrupted.

The resilient package that has been exported using the Encrypted Resilient Package API is not FIPS-compliant.

- Base URL

- https://<ESA IP address or Hostname>/pty/v1/rps

- Path

- /export

- Method

- GET

- CURL request syntax

- Export API

curl -H "Authorization: Bearer <TOKEN>" -X GET https://<ESA IP address or Hostname>/pty/v1/rps/export/<fingerprint>?version=1\&coreVersion=1 -H "Content-Type: application/json" -o rps.json

In this command, <TOKEN> indicates the JWT token used for authenticating the API.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

- Path and query parameters

- fingerprint

- Specify the fingerprint of the Data Store Export Key in the request path. The fingerprint is used to identify which Data Store to export and which export key to use for protecting the resilient package. The user with the Security Officer permissions must share the fingerprint of the Export Key with the user who is executing this API.

version

- Set the schema version of the exported resilient package that is supported by the specific protector.

coreVersion

- Set the Core policy schema version that is supported by the specific protector.

- Sample CURL request

- Export API

curl -H "Authorization: Bearer ${TOKEN}" -X GET https://<ESA IP address or Hostname>/pty/v1/rps/export/a7fdbc0cccc954e00920a4520787f0a08488db8e0f77f95aa534c5f80477c03a?version=1\&coreVersion=1 -H "Content-Type: application/json" -o rps.json

This sample request uses the JWT token authentication.

- Sample response

- The rps.json file is exported using the public key associated with the specified fingerprint.

Protect the encrypted resilient package with standard file permissions to ensure that only the dedicated protectors can access the package.
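The export can be scripted so that the downloaded file is protected as soon as it is written. In this sketch, `rps_export_url`, `export_rps`, `ESA_HOST`, and `FINGERPRINT` are hypothetical names, and mode 600 (owner-only access) is one example of standard file permissions; adjust it to the account that runs your protector. Quoting the URL avoids the need to escape `&` as in the sample request.

```shell
#!/bin/sh
# Build the GET /export URL. version=1 and coreVersion=1 follow the sample
# request; use the schema versions that your protector supports.
rps_export_url() {   # rps_export_url <host> <fingerprint> [version] [coreVersion]
    printf 'https://%s/pty/v1/rps/export/%s?version=%s&coreVersion=%s\n' \
        "$1" "$2" "${3:-1}" "${4:-1}"
}

# Download the package and make it readable only by the current user.
# ESA_HOST, TOKEN, and FINGERPRINT are assumed to be set. The subshell
# body keeps the umask change local to this function.
export_rps() (
    umask 077
    url=$(rps_export_url "$ESA_HOST" "$FINGERPRINT") || exit 1
    curl -sS --fail -H "Authorization: Bearer ${TOKEN}" \
        -H "Content-Type: application/json" -o rps.json "$url" &&
    chmod 600 rps.json
)
```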

Exporting Resilient Package Using POST Method (Deprecated)

Note: The POST method of the Export API has been deprecated. A DevOps user can use this API with any public-private key pair of their choosing. Instead of the POST method, it is recommended to use the GET method for exporting a protected resilient package.

If you want to disable this API, contact Protegrity Support.

This API request exports the resilient package that can be used with resilient protectors. You can use Basic authentication, certificate authentication, or JWT authentication to export the encrypted resilient package.

Warning: Do not modify the package that has been exported using the RPS Service API. If you modify the exported package, then the package will get corrupted.

The resilient package that has been exported using the Encrypted Resilient Package API is not FIPS-compliant.

- Base URL

- https://<ESA IP address or Hostname>/pty/v1/rps

- Path

- /export

- Method

- POST

- CURL request syntax

- Export API - KEK

curl -H "Authorization: Bearer <TOKEN>" -X POST https://<ESA IP address or Hostname>/pty/v1/rps/export\?version=1\&coreVersion=1 -H "Content-Type: application/json" --data '{ "kek":{ "publicKey":{ "label": "<Key_name>", "algorithm": "<RSA_Algorithm>", "value": "<Value of publickey>" } } }' -o rps.json

In this command, <TOKEN> indicates the JWT token used for authenticating the API.

For more information about creating a JWT token, refer to the section Generating JWT for REST APIs.

Note: You can download the resilient package only from the IP address that is part of the allowed servers list connected to a Data Store. This is only applicable for the 10.0.x and 10.1.0 protectors.

- Query parameters

- version

- Set the schema version of the exported resilient package that is supported by the specific protector.

coreVersion

- Set the Core policy schema version that is supported by the specific protector.

- Request body elements

- Encryption Method

- The kek encryption can be used to protect the exported file.

| Encryption Method | Sub-elements | Description |
|---|---|---|
| kek\publicKey | label | Name of the publicKey. |
| | algorithm | The RPS API supports the following algorithms: |
| | value | Specify the value of the publicKey. |

- Sample CURL request

- Export API - KEK

curl -H "Authorization: Bearer ${TOKEN}" -X POST https://<ESA IP address or Hostname>/pty/v1/rps/export\?version=1\&coreVersion=1 -H "Content-Type: application/json" --data '{ "kek":{ "publicKey":{ "label": "key_name", "algorithm": "RSA_OAEP_SHA256", "value": "-----BEGIN PUBLIC KEY-----MIICIjANBgkqhkiG9w0BAQEFAAOCAg8AMIICCgKCAgEA1eq9vH5Dq8pwPqOSqB0YdY6ehBRNWCgYhh9z1X093id+42eTRDHMOpLXRLhOMdgOeeyEsue1s5ZEOKY9j2TcaVTRwLhSMfacjugfiknnUESziUi9mt+XFnSgk7n4t5EF7fjvriOQvHCp24xCbtwKQlOT3x4zUs/REyJ8FXSrFEvrzbb/mEFfYhp2J6c90CKYqbDX6SFW8WjphDb/kgqg/KfT8AlsllAnci4CZ+7u0Iw7GsRvEvrVUCbBsXfB7InTst3hTc4A7iiY36kSEn78mXtfLjWiMpzEBxOteohmXKgSAynI7nI8c0ZhHSoZLUSJ2IQUi25ho8uxd/v3fedTTD91zRTxMJKw8XDrwjXllH7FGgsWBUenkO2lRlfIYBDctjv1MB+QJlNo+gOTGg8sJ1czBm20VQHHcyHpCKNu2gKzqWqSU6iGcwGXPCKY8/yEpNyPVFS/i7GAp10jO+QdOBskPviiLFN5kMh05ZGBpyNvfAQantwGv15Ip0RJ3LTQbKE62DAGNcdP6rizwm9SSt0WcG58OenBX5eB0gWBRrZI5s3EkhThYXyxbvFWObMWb/3jMsE+O22NvqAxWSasPR1zS1WBf25ush3v6BGBO4Frl5kBRrTCSSfAZBDha5VqXOqR1XIdQKf8wKn5DSScpMRuyf3ymRGQf915CC7zwp0CAwEAAQ==-----END PUBLIC KEY-----"} } }' -o rps.json

This sample request uses the JWT token authentication.

- Sample response

- The rps.json file is exported.

Protect the encrypted resilient package with standard file permissions to ensure that only the dedicated protectors can access the package.
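For the deprecated POST method, the request body must embed the public key. The following sketch builds the body from a PEM file by joining its lines, which mirrors the single-line key in the sample request. Whether the service accepts other encodings is not covered here. `kek_body` is a hypothetical name, and the OpenSSL commands in the comments are one way to create an RSA key pair, not a Protegrity requirement.

```shell
#!/bin/sh
# Build the request body for the deprecated POST /export call from a PEM
# public key file. Line breaks are removed so the key fits in one JSON string.
kek_body() {   # kek_body <label> <algorithm> <public-key.pem>
    value=$(tr -d '\r\n' < "$3") || return 1
    printf '{"kek":{"publicKey":{"label":"%s","algorithm":"%s","value":"%s"}}}\n' \
        "$1" "$2" "$value"
}

# One way to create a key pair with OpenSSL (illustrative):
#   openssl genpkey -algorithm RSA -pkeyopt rsa_keygen_bits:4096 -out private.pem
#   openssl pkey -in private.pem -pubout -out public.pem
# Usage sketch (ESA_HOST and TOKEN are assumed to be set):
#   curl -sS --fail -X POST "https://${ESA_HOST}/pty/v1/rps/export?version=1&coreVersion=1" \
#     -H "Authorization: Bearer ${TOKEN}" -H "Content-Type: application/json" \
#     --data "$(kek_body key_name RSA_OAEP_SHA256 public.pem)" -o rps.json
```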

3.1.2.6 - Using the Authentication and Token Management REST APIs

The Authentication and Token Management API uses the v1 version.

If you want to perform common operations using the Authentication and Token Management REST API, then refer to the section Using the Common REST API Endpoints.

The following table lists some of the Authentication and Token Management REST APIs, grouped by category. The following sections include examples for working with the commonly used Authentication and Token functions. If you want to view all the Authentication and Token Management APIs, then use the /doc API to retrieve the API specification.

| Category | REST API |
|---|---|
| Token Management | Generate token |
| | Refresh token |
| Roles and Permissions Management | List all permissions |
| | List all roles |
| | Update roles |
| | Create new role |
| | Delete roles |
| User Management | Create user endpoint |
| | Fetch users |
| | Update user endpoint |
| | Fetch user by ID |
| | Delete user endpoint |
| | Update user password endpoint |
| Group Management | Fetch groups |
| | Create group endpoint |
| | Update group endpoint |
| | Delete group endpoint |
| SAML SSO Configuration | List SAML providers |
| | Create SAML provider endpoint |
| | Get SAML provider |
| | Update SAML provider endpoint |
| | Delete SAML provider endpoint |

Token Management

The following section lists the commonly used APIs to manage tokens.

Generate token

This API generates an access token for authenticating the APIs.

- Base URL

- https://{NFA IP address or Hostname}/pty/v1

- Path

- /auth/login/token

- Method

- POST

Request Body

- loginname: User name for authentication.

- password: Password for authentication.

Result

This API returns the JWT access token in the pty_access_jwt_token response header and the refresh token in the response body. You can use the refresh token with the Refresh token API to obtain new access tokens without logging in again.

Sample Request

curl -X 'POST' \
  'https://nfa.aws.protegrity.com/pty/v1/auth/login/token' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/x-www-form-urlencoded' \
  -d 'loginname=<User name>&password=<Password>!'

The login name and password are the default credentials for the MVP.

Sample Response

The following response appears for the status code 200, if the API is invoked successfully.

Response body

{
  "status": 0,
  "data": {
    "refreshToken": "eyJhbGciOiJIUzUxMiIsInR5cCcZQ",
    "expiresIn": 1800,
    "refreshExpiresIn": 1800
  },
  "messages": []
}

Response header

content-length: 832
content-type: application/json
date: Thu,16 Oct 2025 10:30:53 GMT
pty_access_jwt_token: eyJhbGciOiJSUzI1NiIsInR4YRUw
strict-transport-security: max-age=31536000; includeSubDomains
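Most requests in this section expect the access token in the `TOKEN` environment variable. The following sketch logs in and reads the token from the `pty_access_jwt_token` response header. `get_token`, `extract_access_token`, and `NFA_HOST` are hypothetical names. `--data-urlencode` is used so that special characters in the password, such as `&` or `!`, are encoded correctly.

```shell
#!/bin/sh
# Print the value of the pty_access_jwt_token header from an HTTP header
# dump read on stdin. Header names are compared case-insensitively.
extract_access_token() {
    tr -d '\r' | awk -F': *' 'tolower($1) == "pty_access_jwt_token" { print $2 }'
}

# Log in and export TOKEN for use by the other requests in this section.
# curl writes the response headers to stdout (-D -) and discards the body,
# so this sketch does not keep the refresh token from the body.
get_token() {   # get_token <login name> <password>
    TOKEN=$(curl -sS --fail -D - -o /dev/null -X POST \
        "https://${NFA_HOST}/pty/v1/auth/login/token" \
        -H 'accept: application/json' \
        -H 'Content-Type: application/x-www-form-urlencoded' \
        --data-urlencode "loginname=$1" \
        --data-urlencode "password=$2" | extract_access_token)
    [ -n "$TOKEN" ] && export TOKEN
}

# Example: get_token "<User name>" "<Password>" && echo "token stored in TOKEN"
```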

Refresh token

This API refreshes an access token using the refresh token.

- Base URL

- https://{NFA IP address or Hostname}/pty/v1

- Path

- /auth/login/refresh

- Method

- POST

Request Body

- refreshToken: Refresh token for getting a new access token.

Result

This API returns a new JWT access token in the pty_access_jwt_token response header and a new refresh token in the response body. You can use the new refresh token to obtain further access tokens without logging in again.

Sample Request

curl -X 'POST' \
  'https://nfa.aws.protegrity.com/pty/v1/auth/login/token/refresh' \
  -H 'accept: application/json' \
  -H 'Content-Type: application/json' \
  -d '{
  "refreshToken": "eyJhbGciOiJIUzUxMiIsInR5cCINGFeZEf8hw"
}'

Sample Response

The following response appears for the status code 200, if the API is invoked successfully.

Response body

{
  "status": 0,
  "data": {
    "refreshToken": "MSkVFZiRl3Jkwi0uJQ",
    "expiresIn": 1800,
    "refreshExpiresIn": 1799
  },
  "messages": []
}

Response header

content-length: 832
content-type: application/json
date: Thu,16 Oct 2025 10:36:28 GMT
pty_access_jwt_token: eyJhbGciOiJSUzI1Nim95VHqh00vHfr8ip9RhyO-4FcxQ
strict-transport-security: max-age=31536000; includeSubDomains
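To keep a session alive, a script can store the refresh token from the response body and exchange it for new tokens. This sketch posts to the path used in the sample request (`/auth/login/token/refresh`); the Path field above lists `/auth/login/refresh`, so confirm the path for your deployment, for example through the `/doc` endpoint. `refresh_tokens`, `extract_refresh_token`, `NFA_HOST`, and `REFRESH_TOKEN` are hypothetical names.

```shell
#!/bin/sh
# Print the refreshToken value from a login or refresh response body.
# Plain text matching (no jq), assuming the key and value share a line.
extract_refresh_token() {
    sed -n 's/.*"refreshToken"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}

# Exchange a refresh token for a new access token (TOKEN) and a new
# refresh token (REFRESH_TOKEN). Headers and body go to temporary files.
refresh_tokens() {   # refresh_tokens <refresh token>
    hdr=$(mktemp) || return 1
    body=$(mktemp) || { rm -f "$hdr"; return 1; }
    curl -sS --fail -D "$hdr" -o "$body" -X POST \
        "https://${NFA_HOST}/pty/v1/auth/login/token/refresh" \
        -H 'accept: application/json' \
        -H 'Content-Type: application/json' \
        -d "{\"refreshToken\":\"$1\"}" &&
    TOKEN=$(tr -d '\r' < "$hdr" |
        awk -F': *' 'tolower($1) == "pty_access_jwt_token" { print $2 }') &&
    REFRESH_TOKEN=$(extract_refresh_token < "$body") &&
    export TOKEN REFRESH_TOKEN
    status=$?
    rm -f "$hdr" "$body"
    return "$status"
}
```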

Roles and Permissions Management

The following section lists the commonly used APIs for managing user roles and permissions.

List all permissions

This API lists all the permissions that are applicable to the user.

- Base URL

- https://{NFA IP address or Hostname}/pty/v1

- Path

- /auth/permissions

- Method

- GET

Request Body

No parameters.

Result

This API returns a list of all the permissions available for the logged-in user.

Sample Request

curl -X 'GET' \
  'https://nfa.aws.protegrity.com/pty/v1/auth/permissions' \
  -H 'accept: application/json' \
  -H 'Authorization: Bearer <Access_token>'

This sample request uses the access token for authentication.

For more information about generating the access token, refer to the section Generate token.
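To reduce a response such as the one under Sample Response to a plain list of permission names, a script can extract the `name` values with standard text tools. This is a sketch based on text matching rather than a JSON parser. `permission_names` and `NFA_HOST` are hypothetical names.

```shell
#!/bin/sh
# Print the "name" of each permission in a /auth/permissions response,
# one per line. Works for pretty-printed and compact JSON alike.
permission_names() {
    grep -o '"name"[[:space:]]*:[[:space:]]*"[^"]*"' |
    sed 's/.*"\([^"]*\)"$/\1/'   # keep only the quoted value
}

# Hypothetical usage (NFA_HOST and TOKEN assumed set):
#   curl -sS --fail "https://${NFA_HOST}/pty/v1/auth/permissions" \
#     -H 'accept: application/json' -H "Authorization: Bearer ${TOKEN}" |
#   permission_names
```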

Sample Response

The following response appears for the status code 200, if the API is invoked successfully.

Response body

[
  {
    "id": "faf70fd8-1c6d-4209-b64a-e9db37bbf58b",
    "name": "keycloak_viewer",
    "description": "Keycloak Viewer",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "b0ae18f9-fb85-4b1e-86fe-51b9f0fd8e53",
    "name": "cli_admin",
    "description": "Command Line Admin",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "cfb4d416-85f2-4b5b-a7d9-95bedfb5c57f",
    "name": "can_access_ap",
    "description": "Permission to access application",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "f03fcfcd-0aa9-4fcb-862f-01227f093516",
    "name": "can_create_token",
    "description": "Can Create JWT Token",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "ab434af4-8a49-430b-8306-9089744cd37c",
    "name": "keycloak_manager",
    "description": "Keycloak Manager",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "46142cfb-be47-4936-b399-7afcb4eea2cc",
    "name": "web_viewer",
    "description": "Appliance web viewer",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "1ced685a-7a02-4820-84bd-1dafa6273e13",
    "name": "uma_protection",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "f0a84500-e19a-4be2-a712-b01cc94b448d",
    "name": "can_proxy_ap",
    "description": "Proxy for a an application",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "efe391ed-032e-494e-ac62-20368cb4e41e",
    "name": "can_export_certificates",
    "description": "Can download protector certificates",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "43e7648d-49a8-4813-bf86-3d5bf31bfe7c",
    "name": "Shell_Accounts",
    "description": "Accounts that have cli access",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "0bb2cd71-01c4-4634-b77f-2d65dcdb5e8e",
    "name": "can_login_web",
    "description": "Web Login Permission",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "ce93275f-5cd2-4905-8237-89f4dbf2cc48",
    "name": "web_admin",
    "description": "Appliance web manager",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "e70d3988-4dfe-4411-b6df-c2181eb16b03",
    "name": "security_officer",
    "description": "Security Officer",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "aee1f91e-08fa-4c14-94ad-1d3b1cf08d4b",
    "name": "nfa_insight",
    "description": "Access to Insights and Insight Dashboard",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  },
  {
    "id": "28f7ad8b-1d7c-49e0-95c8-6bde2a4bfba5",
    "name": "can_fetch_package",
    "description": "Can download resilient packages",
    "composite": false,
    "clientRole": true,
    "containerId": "a09679da-b559-4856-8d29-6d39aa6485a1"
  }
]
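To check in a script whether the logged-in user holds a given permission, the response can be filtered on its `name` fields. A minimal shell sketch, assuming `curl` and a `grep` that supports `-o` (GNU and BSD `grep` both do); `NFA_HOST` and `ACCESS_TOKEN` are hypothetical variables that the caller sets.

```shell
# Fetch the permissions of the logged-in user (NFA_HOST and ACCESS_TOKEN set by the caller).
list_permissions() {
  curl -sS "https://${NFA_HOST}/pty/v1/auth/permissions" \
    -H 'accept: application/json' \
    -H "Authorization: Bearer ${ACCESS_TOKEN}"
}

# Print one permission name per line from a permissions response on stdin.
permission_names() {
  grep -o '"name": *"[^"]*"' | sed 's/^"name": *"\(.*\)"$/\1/'
}

# Exit with status 0 if the response on stdin contains permission $1 (exact match).
has_permission() {
  permission_names | grep -qxF -- "$1"
}

# Example:
#   list_permissions | has_permission can_fetch_package && echo "can download packages"
```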

List all roles

This API lists all the roles applicable to the user.

- Base URL

- https://{NFA IP address or Hostname}/pty/v1

- Path

- /auth/roles

- Method

- GET

Request Body

No parameters.

Result

This API returns a list of all the roles available to the logged-in user.

Sample Request

curl -X 'GET' \
  'https://nfa.aws.protegrity.com/pty/v1/auth/roles' \
  -H 'accept: application/json' \
  -H 'Authorization: Bearer <Access_token>'

This sample request uses the access token for authentication.

For more information about generating the access token, refer to the section Generate token.

Sample Response

The following response is returned with status code 200 when the API is invoked successfully.

Response body

[
  {
    "name": "directory_admin",
    "description": "Directory Administrator",
    "composite": true,
    "permissions": [
      "keycloak_manager"
    ]
  },
  {
    "name": "policy_proxy_user",
    "description": "Policy Proxy User",
    "composite": true,
    "permissions": [
      "can_proxy_ap"
    ]
  },
  {
    "name": "security_viewer",
    "description": "Security Administrator Viewer",
    "composite": true,
    "permissions": [
      "keycloak_viewer",
      "can_login_web"
    ]
  },
  {
    "name": "policy_user",
    "description": "Policy User",
    "composite": true,
    "permissions": [
      "can_access_ap"
    ]
  },
  {
    "name": "security_admin",
    "description": "Security Administrator",
    "composite": true,
    "permissions": [
      "cli_admin",
      "can_create_token",
      "web_admin",
      "keycloak_manager",
      "security_officer",
      "eclipse_insight",
      "can_fetch_package",
      "can_export_certificates"
    ]
  }
]
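Each role in this response maps to the permissions it grants, so a script can look up what a single role allows. The following is a minimal shell sketch, assuming `curl` and `awk` are installed, the hypothetical variables `NFA_HOST` and `ACCESS_TOKEN` are set, and the response is pretty-printed with one JSON value per line as in the sample above. For compact JSON, a real JSON parser such as `jq` is the more robust choice.

```shell
# Fetch all roles (NFA_HOST and ACCESS_TOKEN are set by the caller).
list_roles() {
  curl -sS "https://${NFA_HOST}/pty/v1/auth/roles" \
    -H 'accept: application/json' \
    -H "Authorization: Bearer ${ACCESS_TOKEN}"
}

# Print the permissions of role $1, one per line, from a roles response on
# stdin. Assumes one JSON value per line, as in the sample response.
role_permissions() {
  awk -v role="$1" '
    # A "name" line starts a new role; remember whether it is the requested one.
    /"name":/ { in_role = (index($0, "\"" role "\"") > 0) }
    # Enter the permissions array of the matching role (skip an inline "[]").
    in_role && /"permissions":/ { if ($0 !~ /\]/) in_perms = 1; next }
    # A closing bracket ends the permissions array.
    in_perms && /\]/ { in_perms = 0; in_role = 0; next }
    # Strip quotes, commas and whitespace, then print the permission name.
    in_perms { gsub(/[", \t]/, ""); if ($0 != "") print }
  '
}

# Example:
#   list_roles | role_permissions security_viewer
```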

Update role

This API enables you to update an existing role and its permissions.

- Base URL

- https://{NFA IP address or Hostname}/pty/v1

- Path

- /auth/roles

- Method

- PUT

Request Body

- name: Role name.

- description: Description of the role.

- permissions: List of permissions that need to be updated for the existing role.

Result

This API updates the existing role and its permissions.

Sample Request

curl -X 'PUT' \
  'https://nfa.aws.protegrity.com/pty/v1/auth/roles' \
  -H 'accept: application/json' \
  -H 'Authorization: Bearer <Access_token>' \
  -H 'Content-Type: application/json' \
  -d '{
  "name": "admin",
  "description": "Administrator role",
  "permissions": [
    "perm1",
    "perm2"
  ]
}'

This sample request uses the access token for authentication.

For more information about generating the access token, refer to the section Generate token.

Sample Response

The following response is returned with status code 200 when the API is invoked successfully.

Response body

{
  "role_name": "admin",
  "status": "updated"
}
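Building the request body by hand is error-prone when role names, descriptions, or permissions contain quotes. The following is a minimal shell sketch that assembles the `name`, `description`, and `permissions` fields described above and sends the PUT request. It assumes `curl` and `sed` are installed and that the hypothetical variables `NFA_HOST` and `ACCESS_TOKEN` are set. The escaping covers only backslashes and double quotes, not control characters.

```shell
# Escape backslashes and double quotes so a value can sit inside a JSON string.
json_escape() {
  printf '%s' "$1" | sed 's/\\/\\\\/g; s/"/\\"/g'
}

# Build a role payload: role_payload NAME DESCRIPTION [PERMISSION...]
role_payload() {
  name=$(json_escape "$1"); desc=$(json_escape "$2"); shift 2
  perms=""
  for p in "$@"; do
    # Append each permission as a quoted JSON string, comma-separated.
    perms="${perms:+$perms, }\"$(json_escape "$p")\""
  done
  printf '{"name": "%s", "description": "%s", "permissions": [%s]}\n' \
    "$name" "$desc" "$perms"
}

# Update an existing role (NFA_HOST and ACCESS_TOKEN are set by the caller).
update_role() {
  curl -sS -X PUT "https://${NFA_HOST}/pty/v1/auth/roles" \
    -H 'accept: application/json' \
    -H "Authorization: Bearer ${ACCESS_TOKEN}" \
    -H 'Content-Type: application/json' \
    -d "$(role_payload "$@")"
}

# Example:
#   update_role admin "Administrator role" perm1 perm2
```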

Create role

This API enables you to create a role.

- Base URL

- https://{NFA IP address or Hostname}/pty/v1

- Path

- /auth/roles

- Method

- POST

Request Body

- name: Role name.

- description: Description of the role.

- permissions: List of permissions to assign to the new role.

Result

This API creates a role with the requested permissions.

Sample Request

curl -X 'POST' \
  'https://nfa.aws.protegrity.com/pty/v1/auth/roles' \
  -H 'accept: application/json' \
  -H 'Authorization: Bearer <Access_token>' \
  -H 'Content-Type: application/json' \
  -d '{
  "name": "admin",
  "description": "Administrator role",
  "permissions": [
    "perm1",
    "perm2"
  ]
}'

This sample request uses the access token for authentication.

For more information about generating the access token, refer to the section Generate token.

Sample Response

The following response is returned with status code 200 when the API is invoked successfully.

Response body

{
  "role_name": "admin",
  "status": "created"
}
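In automation, it helps to confirm that the response actually reports `"status": "created"` before continuing. The following is a minimal shell sketch, assuming `curl` and `sed` are installed, the hypothetical variables `NFA_HOST` and `ACCESS_TOKEN` are set, and the request body has already been saved to a JSON file. It treats any other status value as a failure; the set of status values the API can return is not listed here, so adjust the check as needed.

```shell
# Print the "status" field from a role API response on stdin.
response_status() {
  sed -n 's/.*"status": *"\([^"]*\)".*/\1/p'
}

# Create a role from a JSON payload file ($1) and fail unless the response
# reports "created" (NFA_HOST and ACCESS_TOKEN are set by the caller).
create_role() {
  result=$(curl -sS -X POST "https://${NFA_HOST}/pty/v1/auth/roles" \
    -H 'accept: application/json' \
    -H "Authorization: Bearer ${ACCESS_TOKEN}" \
    -H 'Content-Type: application/json' \
    -d @"$1" | response_status)
  [ "$result" = "created" ] || { echo "role creation failed: ${result:-no status}" >&2; return 1; }
}

# Example (role.json holds a body like the sample request):
#   create_role role.json && echo "role created"
```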

Delete role

This API enables you to delete a role.

- Base URL

- https://{NFA IP address or Hostname}/pty/v1

- Path

- /auth/roles

- Method

- DELETE

Request Body

- name: Role name.

Result

This API deletes the specified role.

Sample Request

curl -X 'DELETE' \
  'https://nfa.aws.protegrity.com/pty/v1/auth/roles' \
  -H 'accept: application/json' \
  -H 'Authorization: Bearer <Access_token>' \
  -H 'Content-Type: application/json' \
  -d '{
  "name": "admin"
}'

This sample request uses the access token for authentication.

For more information about generating the access token, refer to the section Generate token.

Sample Response

The following response is returned with status code 200 when the API is invoked successfully.

Response body

{
  "role_name": "admin",
  "status": "deleted"
}
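This endpoint uses the DELETE method with the role name in the JSON request body, which is easy to get wrong when adapting a POST sample. The following is a minimal shell wrapper, assuming `curl` and `sed` are installed and that the hypothetical variables `NFA_HOST` and `ACCESS_TOKEN` are set.

```shell
# Delete role $1 (NFA_HOST and ACCESS_TOKEN are set by the caller).
# The role name is sent in the JSON request body, as in the sample request.
delete_role() {
  # Escape backslashes and double quotes in the role name for JSON.
  name=$(printf '%s' "$1" | sed 's/\\/\\\\/g; s/"/\\"/g')
  curl -sS -X DELETE "https://${NFA_HOST}/pty/v1/auth/roles" \
    -H 'accept: application/json' \
    -H "Authorization: Bearer ${ACCESS_TOKEN}" \
    -H 'Content-Type: application/json' \
    -d "{\"name\": \"${name}\"}"
}

# Example:
#   delete_role admin
```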

User Management

The following section lists the commonly used APIs for managing users.

Create user

This API enables you to create a user.

- Base URL

- https://{NFA IP address or Hostname}/pty/v1

- Path

- /auth/users

- Method

- POST

Request Body

- username: Name of the user. This field is mandatory.

- email: Email of the user.

- firstName: First name of the user.

- lastName: Last name of the user.

- enabled: Whether the user is enabled (true or false).

- password: Password for the user.

- roles: Roles to be assigned to the user.

- groups: Groups in which the user is included.

Result

This API creates a user with a unique user ID.

Sample Request

curl -X 'POST' \
  'https://nfa.aws.protegrity.com/pty/v1/auth/users' \
  -H 'accept: application/json' \
  -H 'Authorization: Bearer <Access_token>' \
  -H 'Content-Type: application/json' \
  -d '{
  "username": "alpha",
  "email": "alpha@example.com",
  "firstName": "Alpha",
  "lastName": "User",
  "enabled": true,
  "password": "StrongPassword123!",
  "roles": [
    "directory_admin"
  ]
}'

This sample request uses the access token for authentication.

For more information about generating the access token, refer to the section Generate token.
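The sample request places the password directly on the `curl` command line, where other local users may see it in the process list. The following is a minimal shell sketch that builds the same request body and pipes it to `curl` on standard input (`-d @-`). It assumes `curl` and `sed` are installed and that the hypothetical variables `NFA_HOST`, `ACCESS_TOKEN`, and `NEW_USER_PASSWORD` are set by the caller. The sketch assigns a single role, always sets `enabled` to true, and omits `groups`.

```shell
# Escape backslashes and double quotes so a value can sit inside a JSON string.
json_escape() {
  printf '%s' "$1" | sed 's/\\/\\\\/g; s/"/\\"/g'
}

# Build a user payload: user_payload USERNAME EMAIL FIRST_NAME LAST_NAME ROLE
# The password is read from the NEW_USER_PASSWORD environment variable.
user_payload() {
  printf '{"username": "%s", "email": "%s", "firstName": "%s", "lastName": "%s", "enabled": true, "password": "%s", "roles": ["%s"]}\n' \
    "$(json_escape "$1")" "$(json_escape "$2")" "$(json_escape "$3")" \
    "$(json_escape "$4")" "$(json_escape "$NEW_USER_PASSWORD")" "$(json_escape "$5")"
}

# Create the user; the body goes to curl on stdin, not in its argument list.
create_user() {
  user_payload "$@" | curl -sS -X POST "https://${NFA_HOST}/pty/v1/auth/users" \
    -H 'accept: application/json' \
    -H "Authorization: Bearer ${ACCESS_TOKEN}" \
    -H 'Content-Type: application/json' \
    -d @-
}

# Example:
#   create_user alpha alpha@example.com Alpha User directory_admin
```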

Sample Response