Introduction

Learn about data privacy.

Organizations today collect vast amounts of personal data, providing valuable insights into individuals’ habits, purchasing trends, health, and preferences. This information helps businesses refine their strategies, develop products, and drive success. However, much of this data is highly sensitive and private, requiring organizations to implement robust protection measures that align with compliance requirements and business needs.

To safeguard personal data, pseudonymization can be used to replace direct identifiers with encrypted or tokenized values, allowing data to be processed while minimizing direct exposure to sensitive attributes. Because pseudonymized data can be re-identified with authorized access to the decryption or tokenization mechanism, it enables controlled data usage while maintaining privacy. However, as more fields—particularly quasi-identifiers—are pseudonymized to prevent re-identification, the overall utility of the data may decrease. Attributes like ZIP codes, birthdates, or demographic details may not be personally identifiable on their own, but when combined, they can reveal an individual’s identity. Protecting these fields strengthens privacy but may also limit their analytical value. Striking the right balance between security and usability is essential for compliance while preserving meaningful insights.

For scenarios requiring a higher level of privacy protection, anonymization provides an additional layer of security by ensuring that not only PII but also quasi-identifiers are generalized, redacted, or transformed. This prevents re-identification even when multiple data points are analyzed together. Anonymization techniques include removing or obfuscating key attributes and generalizing data to broader categories (e.g., replacing an exact address with just the city or state). By implementing anonymization, organizations can retain the analytical value of data while eliminating the risk of re-identification, ensuring compliance with privacy regulations and ethical data practices.

1 - Business cases

A few business cases to understand more about the importance of data privacy.

Consider the following business cases:

- Case 1: A hospital wants to share patient data with a third-party research lab. The privacy of the patient, however, must be preserved.

- Case 2: An organization requires customer data from several credit unions to create training data. The data will be used to train machine learning models looking for new insights. The customers, however, have not consented to this use of their data.

- Case 3: An organization that must comply with GDPR, CCPA, or other privacy regulations needs to keep some information beyond the retention period that the regulations allow.

- Case 4: An organization requires raw data to train their software for machine learning.

In all these cases, data is integral to continuing the business process or analysis. In each case, only what was done is required; who did it adds no value to the data. The personal information about the individual users can therefore be removed from the dataset. This removes the personal factor from the data while retaining the value of the data from the business point of view. Because the resulting data does not contain any private information, it also falls outside the legal requirements governing personal data.

Thus, revisiting the business cases, the data in each case can be valuable after processing it in the following ways:

- In case 1, all private information can be removed from the data and sent to the research lab for analysis.

- In case 2, all private information must be scrubbed from the data before the data can be used. After scrubbing, the data will be generalized in such a way that the data can be used for machine learning, since no one will be able to identify individuals in the anonymized dataset.

- In case 3, by anonymizing the data, the Data Subject is removed, and the data is no longer in scope for privacy compliance.

- In case 4, a generalized form of the data can be obtained.

Manually removing private information would take a lot of time and effort, especially if the dataset consists of millions of records with file sizes of several GBs. Running a find and replace or simply deleting columns might remove important fields and make the dataset useless for further analysis. Additionally, a combination of the remaining attributes (such as date of birth, postcode, and gender) may be enough to re-identify the data subject.

Protegrity Anonymization applies various privacy models to the data, removing direct identifiers and applying generalization to the remaining indirect identifiers, to ensure that no single data subject can be identified.

2 - Data security and data privacy

Understand the difference between data security and data privacy.

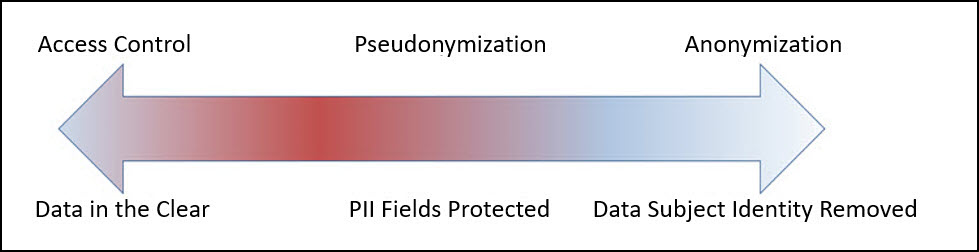

Most organizations understand the need to secure access to personally identifiable information. Sensitive values in records are often protected at rest (storage), in transit (network), and in use (fine-grained access control) through a process known as de-identification. De-identification is a spectrum, where data security and data privacy issues must be balanced with data usability.

Pseudonymization

Pseudonymization is the process of de-identification by substituting sensitive values with a consistent, non-sensitive value. This is most often accomplished through encryption, tokenization, or dynamic data masking. Access to the process for re-identification (decryption, detokenization, unmasking) is controlled, so that only users with a business requirement will see the sensitive values.
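The idea of consistent, reversible substitution can be sketched in a few lines of Python. This is a toy illustration only, not how Protegrity implements tokenization: the `TokenVault` class, its in-memory lookup table, the `tok_` prefix, and the `authorized` flag are all hypothetical names chosen for the example.

```python
import hashlib
import hmac
import secrets


class TokenVault:
    """Toy tokenizer (hypothetical): consistent tokens, reversible only via the vault."""

    def __init__(self, key: bytes):
        self._key = key
        self._token_to_value = {}  # lookup table used for detokenization

    def tokenize(self, value: str) -> str:
        # A keyed hash yields the same token for the same input, so joins
        # and group-bys on the tokenized column keep working.
        digest = hmac.new(self._key, value.encode("utf-8"), hashlib.sha256)
        token = "tok_" + digest.hexdigest()[:16]
        self._token_to_value[token] = value
        return token

    def detokenize(self, token: str, authorized: bool) -> str:
        # Re-identification is gated by an access check.
        if not authorized:
            raise PermissionError("caller is not allowed to detokenize")
        return self._token_to_value[token]


vault = TokenVault(key=secrets.token_bytes(32))
t1 = vault.tokenize("123-45-6789")
t2 = vault.tokenize("123-45-6789")  # same input, same token
original = vault.detokenize(t1, authorized=True)
```

The sketch shows why both the key and the lookup table need protection: anyone holding them can reverse the substitution.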

Advantages:

- The original data can be obtained again.

- Only authorized users can view the original data from protected data.

- It processes each record and cell (intersection of a record and column) individually.

- This process is faster than anonymization.

Disadvantages:

- Access-Control Dependency: Pseudonymized data remains linkable to its original form if authorized users have access to the decryption or tokenization mechanism, which requires strict security controls.

- Regulatory Considerations: Since pseudonymization allows re-identification under controlled access, it may not meet the same compliance exemptions as anonymization under certain privacy regulations.

- Increased Security Overhead: Additional security measures are needed to protect the data and the tokenization keys and to manage access controls, ensuring only authorized users can reverse the process.

- Limited Protection for Quasi-Identifiers: While direct identifiers are typically tokenized, quasi-identifiers (e.g., birthdates, ZIP codes) may still pose a re-identification risk if not generalized or redacted.

- Using tokenized data might make analysis incorrect or less useful (e.g., when time-related attributes are changed).

- The tokenized data is still private from the user’s perspective.

- Further processing is required to retrieve the original data.

Anonymization

Anonymization is the process of de-identification which irreversibly redacts, aggregates, and generalizes identifiable information on all data subjects in a dataset. This method ensures that while the data retains value for use cases such as analytics, data democratization, and sharing with third parties, the individual data subject can no longer be identified in the dataset.
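As a minimal sketch of redaction and generalization, the following Python example drops direct identifiers and coarsens two quasi-identifiers. The record layout, the field names, the 10-year age bands, and the 3-digit ZIP prefix are assumptions made for illustration; a real anonymization run would choose generalization levels based on the privacy model being applied.

```python
def generalize_age(age: int, width: int = 10) -> str:
    """Replace an exact age with a band such as '30-39'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def generalize_zip(zip_code: str, keep: int = 3) -> str:
    """Keep the leading digits of a ZIP code and mask the rest."""
    return zip_code[:keep] + "*" * (len(zip_code) - keep)


def anonymize_record(record: dict) -> dict:
    # Direct identifiers (name, ssn) are simply not copied to the output.
    return {
        "age": generalize_age(record["age"]),
        "zip": generalize_zip(record["zip"]),
        "balance": record["balance"],  # data being analyzed is kept as-is
    }


# Hypothetical input record.
raw = {"name": "Jane Doe", "ssn": "123-45-6789", "age": 37,
       "zip": "06902", "balance": 1520.75}
anonymized = anonymize_record(raw)
# anonymized == {"age": "30-39", "zip": "069**", "balance": 1520.75}
```

Because the exact age and ZIP code are discarded rather than stored elsewhere, the transformation cannot be reversed from the output alone.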

Advantages:

- Anonymized datasets can be used for analysis with typically low information loss.

- An individual user cannot be identified from the anonymized dataset.

- Enables compliance with privacy regulation.

Disadvantages:

- Being an irreversible process, the original data cannot be obtained again, even though some use cases require the original data.

- This process is slower than pseudonymization because multiple passes must be made over the dataset to anonymize it.

3 - Importance and types of data

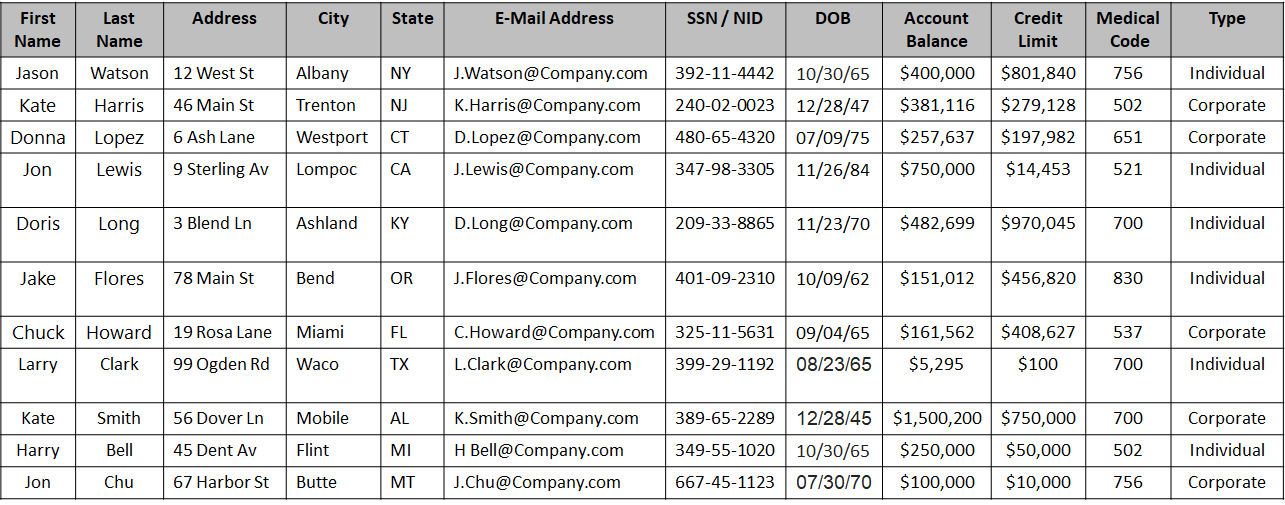

A record consists of all the information pertaining to a user. This record consists of different fields of information, such as first name, last name, address, telephone number, age, and so on.

These records might be linked with other records, such as income statements or medical records, to provide valuable information. Taken as a whole, the fields of a record are private and user-centric. However, the individual fields may or may not be personal. Accordingly, based on the privacy level, the following data classifications are available:

- Direct Identifier: Identity attributes can identify an individual by their value alone. These attributes are unique to an individual in a dataset and at times even in the world. They are personal and private to the user. For example, name, passport number, Social Security Number (SSN), mobile number, and so on.

- Quasi-Identifier or Indirect Identifier: Quasi-identifying attributes are identifying characteristics of a data subject. However, you cannot identify an individual with a quasi-identifier alone. For example, date of birth or an address. Moreover, the individual pieces of data in a quasi-identifier might not be enough to identify a single individual. Take date of birth as an example: the year might be common to many individuals, making it difficult to narrow down to a single individual. However, if the dataset is small, then it might be easy to identify an individual using this information.

- Data about data subject: Data about the data subject is typically the data that is being analyzed. This data might exist in the same table or a different related table of the dataset. It provides valuable information about the dataset and is very helpful for analysis. This data may or may not be private to an individual. For example, salary, account balance, or credit limit. However, like quasi-identifiers, in a small dataset, this data might be unique to an individual. Additionally, this data can be classified as follows:

  - Sensitive Attributes: This data may disclose something, such as a health condition, which in a small result set may identify a single individual.

  - Insensitive Attributes: This data is not associated with a privacy risk and is common information, such as the type of bank account (individual or business).
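The way quasi-identifiers become identifying in combination can be illustrated with a short Python sketch. The records and field names (`birth_year`, `zip`, `gender`) are invented for this example and do not come from any real dataset.

```python
from collections import Counter

# Hypothetical records containing only quasi-identifiers.
records = [
    {"birth_year": 1985, "zip": "06902", "gender": "F"},
    {"birth_year": 1985, "zip": "06902", "gender": "M"},
    {"birth_year": 1985, "zip": "10001", "gender": "F"},
    {"birth_year": 1990, "zip": "06902", "gender": "F"},
    {"birth_year": 1990, "zip": "10001", "gender": "M"},
    {"birth_year": 1990, "zip": "10001", "gender": "M"},
]


def unique_count(rows, fields):
    """Count records whose combination of values in `fields` occurs exactly once."""
    counts = Counter(tuple(row[f] for f in fields) for row in rows)
    return sum(1 for c in counts.values() if c == 1)


# No single field singles anyone out ...
singles = {f: unique_count(records, [f]) for f in ("birth_year", "zip", "gender")}
# ... but the combination of all three isolates four of the six records.
combined = unique_count(records, ["birth_year", "zip", "gender"])
```

Here `singles` is zero for every field, while `combined` is 4, which is why quasi-identifiers need to be assessed together and not column by column.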

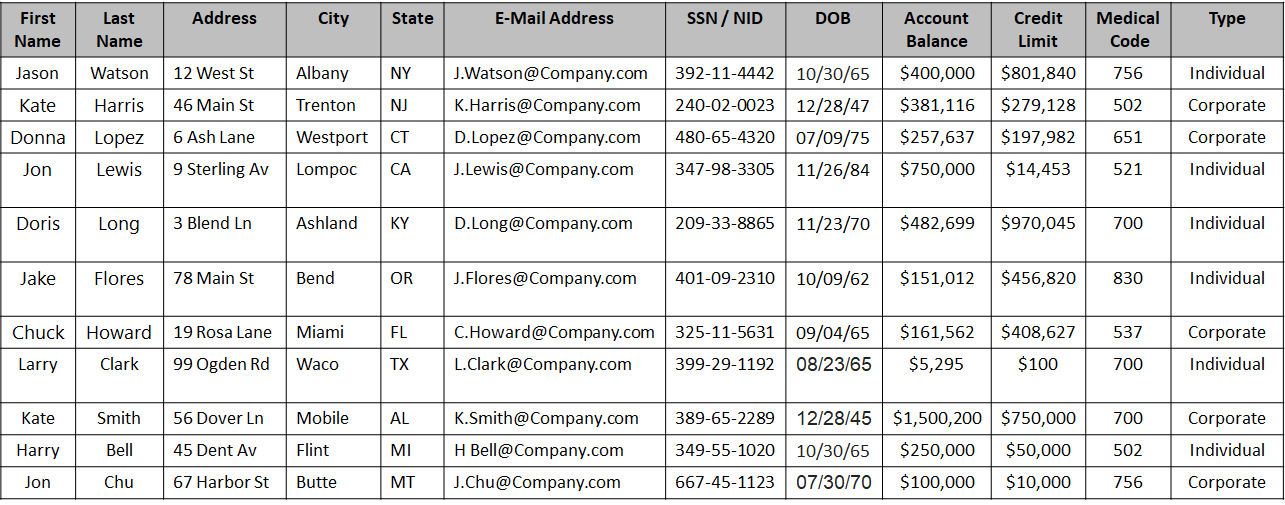

A sample dataset is shown in the following figure:

Based on the type of data, the columns in the above table can be classified as follows:

| Type | Field Names | Description |
|---|---|---|
| Direct Identifier | First Name, Last Name, Address with city and state, E-Mail Address, SSN / NID | The data in these fields is enough to identify an individual. |
| Quasi-Identifier | City, State, Date of Birth | The data in these fields could be the same for more than one individual. Note: Address could be a direct identifier if a single individual is present from a particular state. |
| Sensitive Attribute | Account Balance, Credit Limit, Medical Code | The data is important for analysis; however, in a small dataset it is easy to re-identify an individual. |
| Insensitive Attribute | Type | The data is general information, making it difficult to re-identify an individual. |

4 - Data anonymization techniques

The privacy models are techniques for anonymizing data.

Important terminology

- De-identification:

General term for any process of removing the association between a set of identifying data and the data subject.

- Pseudonymization:

Particular type of data de-identification that both removes the association with a data subject and adds an association between a particular set of characteristics relating to the data subject and one or more pseudonyms.

- Anonymization:

Process that removes the association between the identifying dataset and the data subject.

Anonymization is another subcategory of de-identification. Unlike pseudonymization, it does not provide a means by which the information may be linked to the same person across multiple data records or information systems.

Hence, re-identification of anonymized data is not possible.

Note: As defined in ISO/TS 25237:2008.

Anonymization models

k-anonymity:

k-anonymity can be described as “hiding in the crowd”. In a dataset with k-anonymity, each quasi-identifier tuple (each combination of quasi-identifier values) occurs in at least k records.

Definition: if each individual is part of a larger group, then any of the records in this group could correspond to a single person.
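A minimal Python sketch of a k-anonymity check follows. It groups records by their quasi-identifier tuple and reports the size of the smallest group. The function names and the sample rows are assumptions made for illustration and are not part of the Protegrity Anonymization API.

```python
from collections import Counter


def smallest_group_size(rows, quasi_identifiers):
    """Return the size of the smallest group sharing one quasi-identifier tuple."""
    if not rows:
        return 0
    counts = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return min(counts.values())


def is_k_anonymous(rows, quasi_identifiers, k):
    """A dataset is k-anonymous if every quasi-identifier tuple occurs at least k times."""
    return smallest_group_size(rows, quasi_identifiers) >= k


# Hypothetical, already generalized rows.
rows = [
    {"age": "30-39", "zip": "069**", "diagnosis": "flu"},
    {"age": "30-39", "zip": "069**", "diagnosis": "asthma"},
    {"age": "30-39", "zip": "069**", "diagnosis": "flu"},
    {"age": "40-49", "zip": "100**", "diagnosis": "diabetes"},
    {"age": "40-49", "zip": "100**", "diagnosis": "diabetes"},
]

k = smallest_group_size(rows, ["age", "zip"])  # 2
```

With these rows the dataset is 2-anonymous but not 3-anonymous, because the smaller of the two groups contains only two records.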

l-diversity:

The l-diversity model is an extension of k-anonymity that promotes intra-group diversity of sensitive values in the anonymization mechanism.

The l-diversity model handles a weakness of the k-anonymity model: protecting identities to the level of k individuals is not equivalent to protecting the corresponding sensitive values that were generalized or suppressed, especially when the sensitive values within a group exhibit homogeneity. For example, if every record in a group has the same diagnosis, an attacker learns that diagnosis without needing to single out a record.
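The homogeneity problem can be shown with a short Python sketch. It implements the simplest variant, often called distinct l-diversity, which counts the distinct sensitive values in each group. Other variants exist; the function name and sample rows here are illustrative assumptions.

```python
from collections import defaultdict


def distinct_l(rows, quasi_identifiers, sensitive):
    """Return the smallest number of distinct sensitive values found in any group."""
    groups = defaultdict(set)
    for row in rows:
        key = tuple(row[q] for q in quasi_identifiers)
        groups[key].add(row[sensitive])
    return min(len(values) for values in groups.values())


# Hypothetical rows: every group has at least 2 records (2-anonymous),
# but the second group contains a single diagnosis.
rows = [
    {"age": "30-39", "zip": "069**", "diagnosis": "flu"},
    {"age": "30-39", "zip": "069**", "diagnosis": "asthma"},
    {"age": "30-39", "zip": "069**", "diagnosis": "flu"},
    {"age": "40-49", "zip": "100**", "diagnosis": "diabetes"},
    {"age": "40-49", "zip": "100**", "diagnosis": "diabetes"},
]

l_value = distinct_l(rows, ["age", "zip"], "diagnosis")  # 1
```

The result of 1 means that anyone known to fall in the 40-49 / 100** group is revealed to have diabetes, even though the group satisfies 2-anonymity.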

t-closeness:

t-closeness is a further refinement of l-diversity. The t-closeness model extends the l-diversity model by taking into account the distribution of values of a sensitive attribute: the distribution within each group must be within a distance t of the distribution of that attribute in the whole dataset.
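The following Python sketch measures how far each group's distribution of a sensitive attribute lies from the overall distribution. As a simplifying assumption it uses total variation distance, which is suitable for categorical values where all categories are treated as equally far apart; t-closeness is usually defined with the Earth Mover's Distance, and a production implementation may differ. All names and rows are hypothetical.

```python
from collections import Counter, defaultdict


def distribution(values):
    """Relative frequency of each value in a list."""
    counts = Counter(values)
    total = len(values)
    return {value: count / total for value, count in counts.items()}


def total_variation(p, q):
    """Half the sum of absolute differences between two distributions."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


def max_group_distance(rows, quasi_identifiers, sensitive):
    """Largest distance between any group's distribution and the overall one."""
    overall = distribution([row[sensitive] for row in rows])
    groups = defaultdict(list)
    for row in rows:
        groups[tuple(row[q] for q in quasi_identifiers)].append(row[sensitive])
    return max(total_variation(distribution(v), overall) for v in groups.values())


# Hypothetical rows: overall, 75% flu and 25% diabetes.
rows = [
    {"age": "30-39", "diagnosis": "flu"},
    {"age": "30-39", "diagnosis": "diabetes"},
    {"age": "40-49", "diagnosis": "flu"},
    {"age": "40-49", "diagnosis": "flu"},
]

distance = max_group_distance(rows, ["age"], "diagnosis")  # 0.25
```

Under this simplified measure, the sample satisfies t-closeness for any t of 0.25 or more.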

5 - How Protegrity Anonymization Works

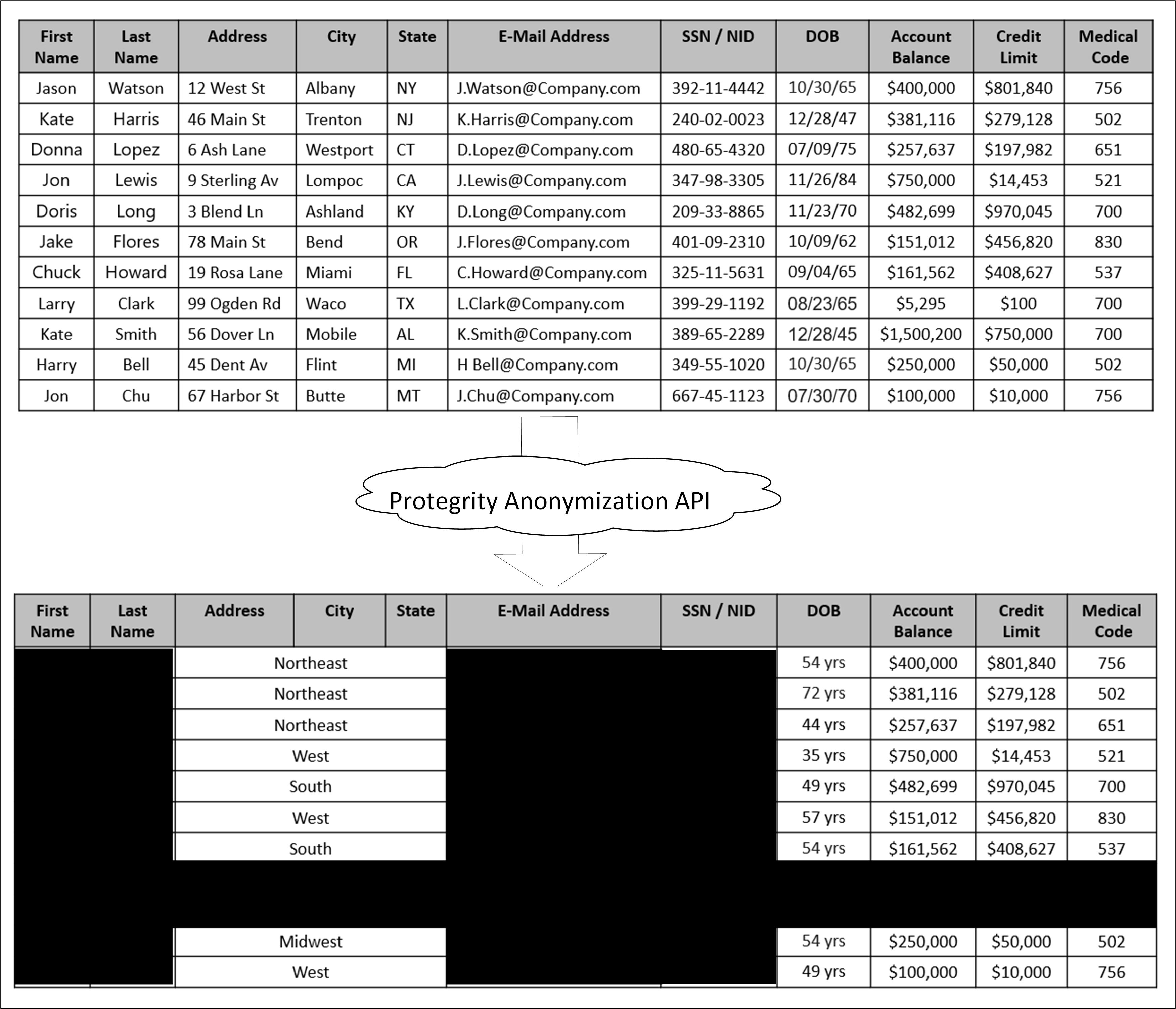

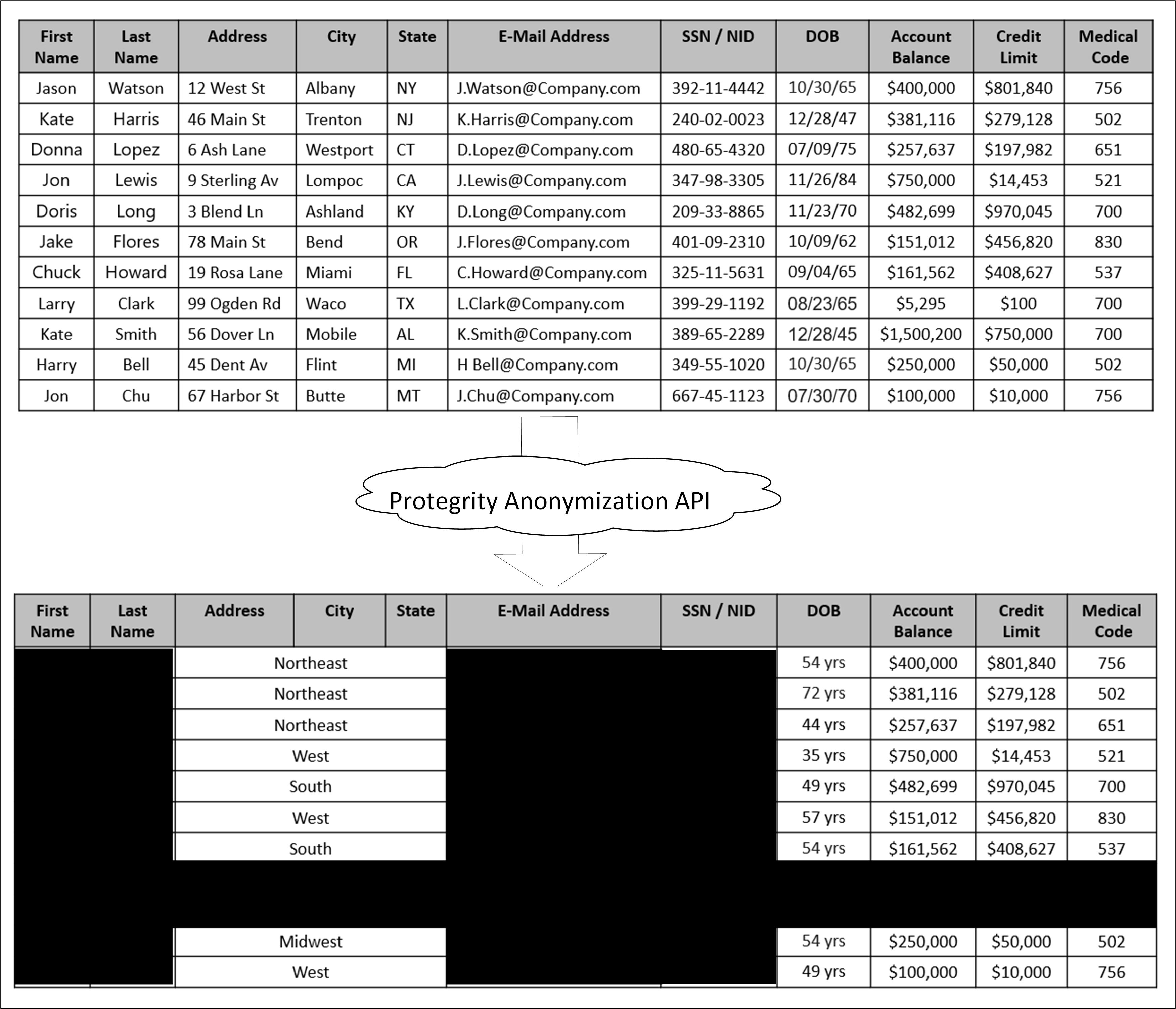

Protegrity Anonymization takes a dataset as input, removes direct identifiers, transforms quasi-identifiers, applies privacy models, and outputs an anonymized dataset. Additionally, the three privacy models are used to calculate the risk of re-identification. They also generalize and remove direct identifiers.

Protegrity Anonymization is a software solution that processes data by removing personal information and transforming the remaining details to protect privacy.

In simple terms, it takes raw data as input, applies techniques like generalization and summarization, and outputs anonymized data that can still be used for analysis without revealing individual identities. The following figure illustrates this process.

As shown in the above image, a sample table is fed as input into Protegrity Anonymization. The private data that can be used to identify a particular individual is removed from the table. The final table with anonymized information is provided as output. The output table shows data loss due to column and row removals during Anonymization. This data loss is necessary to mitigate the risk of re-identification.

The anonymized data is used for analytics and data sharing. A standard set of attacks is defined to assess the effectiveness of Anonymization against different attack vectors. The re-identification attacks can come from a prosecutor, journalist, or marketer. The prosecutor attack is known as the worst-case attack since the target individual is known.

- In a prosecutor attack, the attacker has prior knowledge about a specific person whose information is present in the dataset. The attacker matches this pre-existing information with the information in the dataset and identifies the individual.

- In a journalist attack, the attacker uses the prior information that is available. However, this information might not be enough to identify a person in the dataset. Here, the attacker might find additional information about the person using public records and narrow down the records to re-identify the individual.

- In a marketer attack, the attacker tries to re-identify as many people as possible from the dataset. This is a hit-or-miss strategy, and many of the identifications might be incorrect. However, it is an issue if even a few individuals are correctly identified.
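As a rough illustration of how the prosecutor scenario can be quantified, the sketch below uses a formulation that is common in the re-identification risk literature: a record's risk is 1 divided by the number of records that share its quasi-identifier tuple. This is an assumption made for the example; the source does not state how Protegrity Anonymization computes its risk metrics, and the function name and rows are hypothetical.

```python
from collections import Counter


def prosecutor_risk(rows, quasi_identifiers):
    """Return (maximum, average) per-record risk, where risk = 1 / group size."""
    keys = [tuple(row[q] for q in quasi_identifiers) for row in rows]
    sizes = Counter(keys)
    per_record = [1 / sizes[key] for key in keys]
    return max(per_record), sum(per_record) / len(per_record)


# Hypothetical generalized rows: one group of three records, one group of two.
rows = [
    {"age": "30-39", "zip": "069**"},
    {"age": "30-39", "zip": "069**"},
    {"age": "30-39", "zip": "069**"},
    {"age": "40-49", "zip": "100**"},
    {"age": "40-49", "zip": "100**"},
]

max_risk, avg_risk = prosecutor_risk(rows, ["age", "zip"])
# max_risk is 0.5 (the group of two); avg_risk is 0.4
```

The maximum reflects the worst-placed individual, which is why smaller groups drive up the risk an attacker with prior knowledge poses.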

For more information about risk metrics, refer to Protegrity Anonymization Risk Metrics.