How Protegrity Anonymization Works

Protegrity Anonymization is a software solution that processes data by removing personal information and transforming the remaining details to protect privacy.

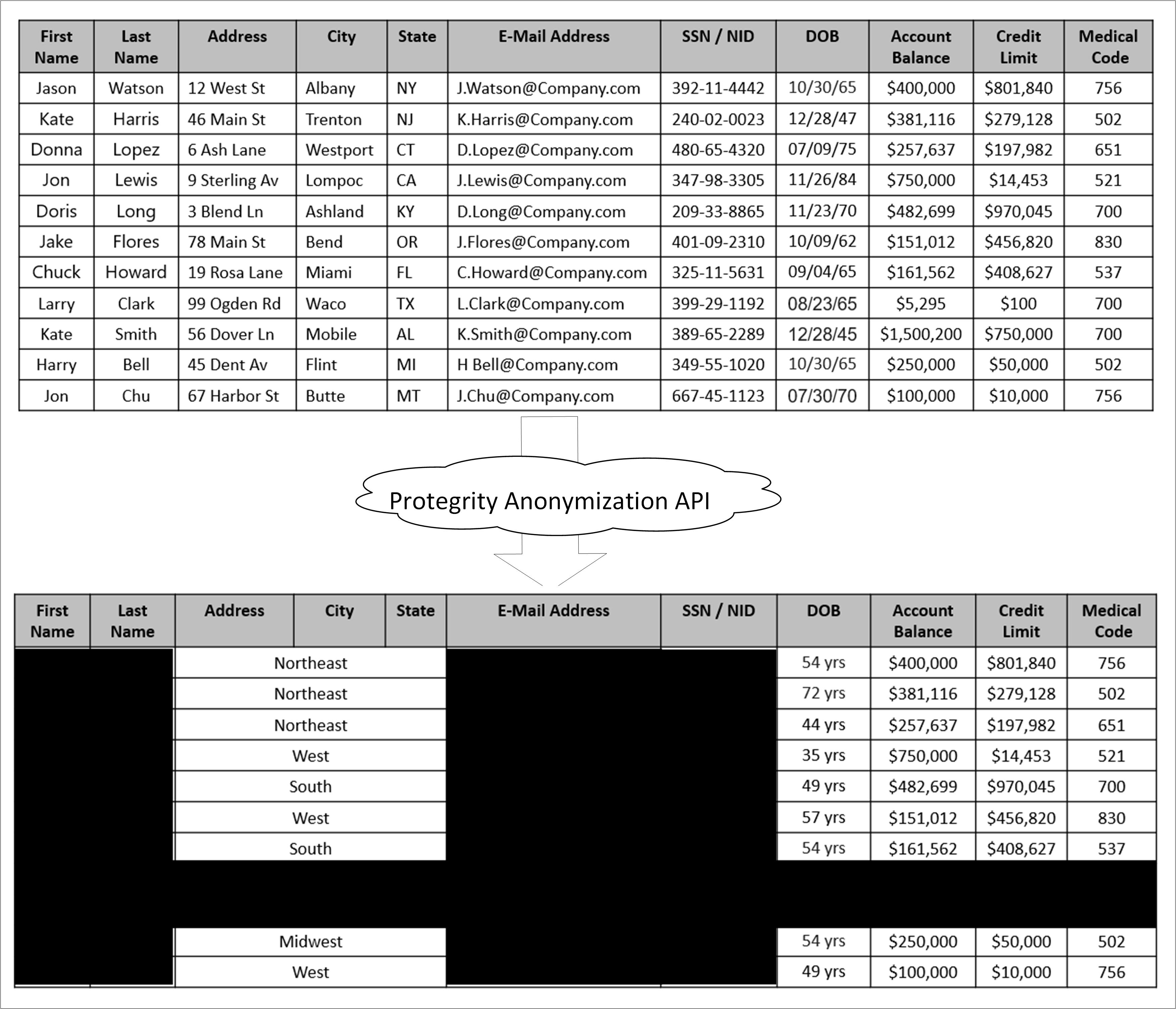

In simple terms, it takes raw data as input, applies techniques such as generalization and summarization, and outputs anonymized data that can still be used for analysis without revealing individual identities. The preceding figure illustrates this process.

As shown in the figure, a sample table is provided as input to Protegrity Anonymization. Data that can be used to identify a particular individual is removed from the table, and the resulting table with anonymized information is provided as output. The output table shows some data loss because columns and rows are removed during anonymization. This data loss is necessary to mitigate the risk of re-identification.
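The column and row removals described above can be illustrated with a minimal sketch. This is not the Protegrity Anonymization API. The table, the column names, the `generalize` and `anonymize` helpers, and the group-size threshold `k` are all hypothetical and chosen only for illustration.

```python
from collections import Counter

# Hypothetical sample table; names and values are illustrative only.
rows = [
    {"name": "Alice", "age": 34, "zip": "10115", "diagnosis": "flu"},
    {"name": "Bob", "age": 36, "zip": "10117", "diagnosis": "asthma"},
    {"name": "Carol", "age": 52, "zip": "20095", "diagnosis": "flu"},
    {"name": "Dave", "age": 38, "zip": "10119", "diagnosis": "diabetes"},
]

def generalize(row):
    """Drop the direct identifier (name) and coarsen age and zip."""
    decade = (row["age"] // 10) * 10
    return {
        "age": f"{decade}-{decade + 9}",   # exact age becomes a ten-year band
        "zip": row["zip"][:3] + "**",      # keep only the first three digits
        "diagnosis": row["diagnosis"],
    }

def anonymize(rows, k=2):
    """Generalize every row, then remove rows whose (age, zip) group has fewer than k members."""
    generalized = [generalize(r) for r in rows]
    sizes = Counter((r["age"], r["zip"]) for r in generalized)
    return [r for r in generalized if sizes[(r["age"], r["zip"])] >= k]

anonymized = anonymize(rows)
for row in anonymized:
    print(row)
```

In this sketch the `name` column is removed entirely, and the one row that remains unique after generalization is dropped, which mirrors the column and row loss visible in the output table.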

The anonymized data is used for analytics and data sharing. To assess how effective Protegrity Anonymization is against different attack vectors, a standard set of attacks is defined. A re-identification attack can follow the prosecutor, journalist, or marketer model. The prosecutor attack is considered the worst case because the attacker already knows that the target individual is in the dataset.

- In a prosecutor attack, the attacker has prior knowledge about a specific person whose information is present in the dataset. The attacker matches this pre-existing information with the information in the dataset and identifies the individual.

- In a journalist attack, the attacker uses whatever prior information is available. However, this information might not be enough to identify a person in the dataset. In this case, the attacker might find additional information about the person in public records and narrow down the records to re-identify the individual.

- In a marketer attack, the attacker tries to re-identify as many people as possible from the dataset. This is a hit-or-miss strategy, and many of the identifications might be incorrect. However, it is still an issue if even a few individuals are correctly identified.
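To make the difference between the attack models concrete, the following sketch computes two commonly used risk estimates from the sizes of the groups of records that share the same quasi-identifier values. These formulas are a textbook formulation, not necessarily the metrics that Protegrity Anonymization reports. The function names, the sample records, and the assumption that the attacker's data covers the same people as the dataset are all illustrative. Journalist risk is omitted because it requires a separate population table.

```python
from collections import Counter

def equivalence_class_sizes(records, quasi_identifiers):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)

def prosecutor_risk(records, quasi_identifiers):
    """Worst-case chance of picking the right record: 1 / size of the smallest group."""
    sizes = equivalence_class_sizes(records, quasi_identifiers)
    return 1 / min(sizes.values())

def marketer_risk(records, quasi_identifiers):
    """Expected share of records matched correctly: number of groups / number of records."""
    sizes = equivalence_class_sizes(records, quasi_identifiers)
    return len(sizes) / len(records)

# Hypothetical anonymized records: one group of three and one group of two.
sample = [
    {"age": "30-39", "zip": "101**"},
    {"age": "30-39", "zip": "101**"},
    {"age": "30-39", "zip": "101**"},
    {"age": "50-59", "zip": "200**"},
    {"age": "50-59", "zip": "200**"},
]
quasi_identifiers = ["age", "zip"]

print(prosecutor_risk(sample, quasi_identifiers))
print(marketer_risk(sample, quasi_identifiers))
```

Under these assumptions, the prosecutor estimate is driven by the smallest group, while the marketer estimate averages over the whole dataset, which is why the prosecutor attack is treated as the worst case.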

For more information about risk metrics, refer to Protegrity Anonymization Risk Metrics.
