AWS

- 1: Cloud API

- 1.1: Overview

- 1.2: Architecture

- 1.3: Installation

- 1.3.1: Prerequisites

- 1.3.2: Pre-Configuration

- 1.3.3: Protect Service Installation

- 1.3.4: Policy Agent Installation

- 1.3.5: Audit Log Forwarder Installation

- 1.4: REST API

- 1.4.1: Authorization

- 1.4.2: HTTP Status Codes

- 1.4.3: Payload Encoding

- 1.4.4: TLS Mutual Authentication

- 1.4.5: v1 Specification

- 1.4.6: Legacy Specification

- 1.5: Performance

- 1.6: Audit Logging

- 1.7: No Access Behavior

- 1.8: Known Limitations

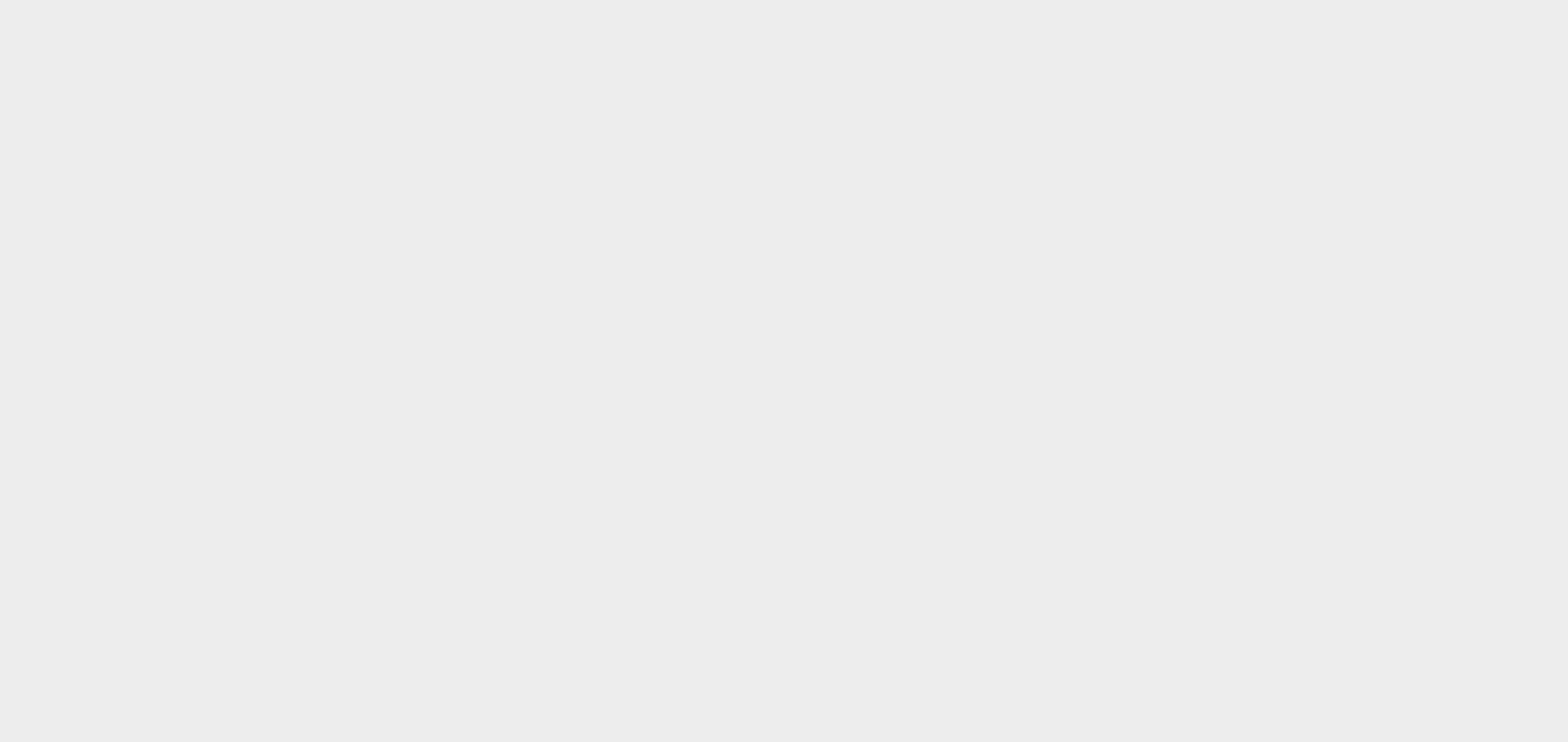

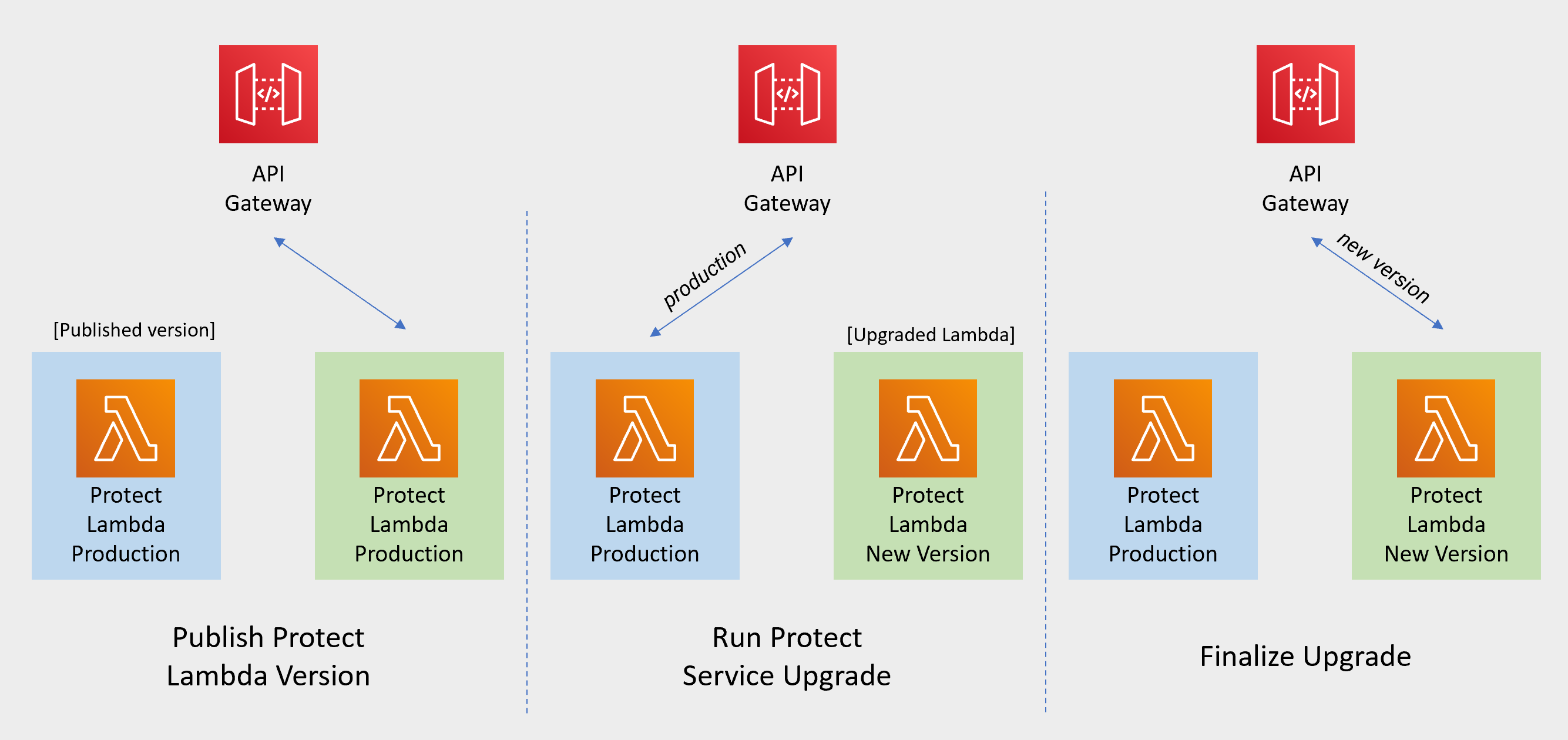

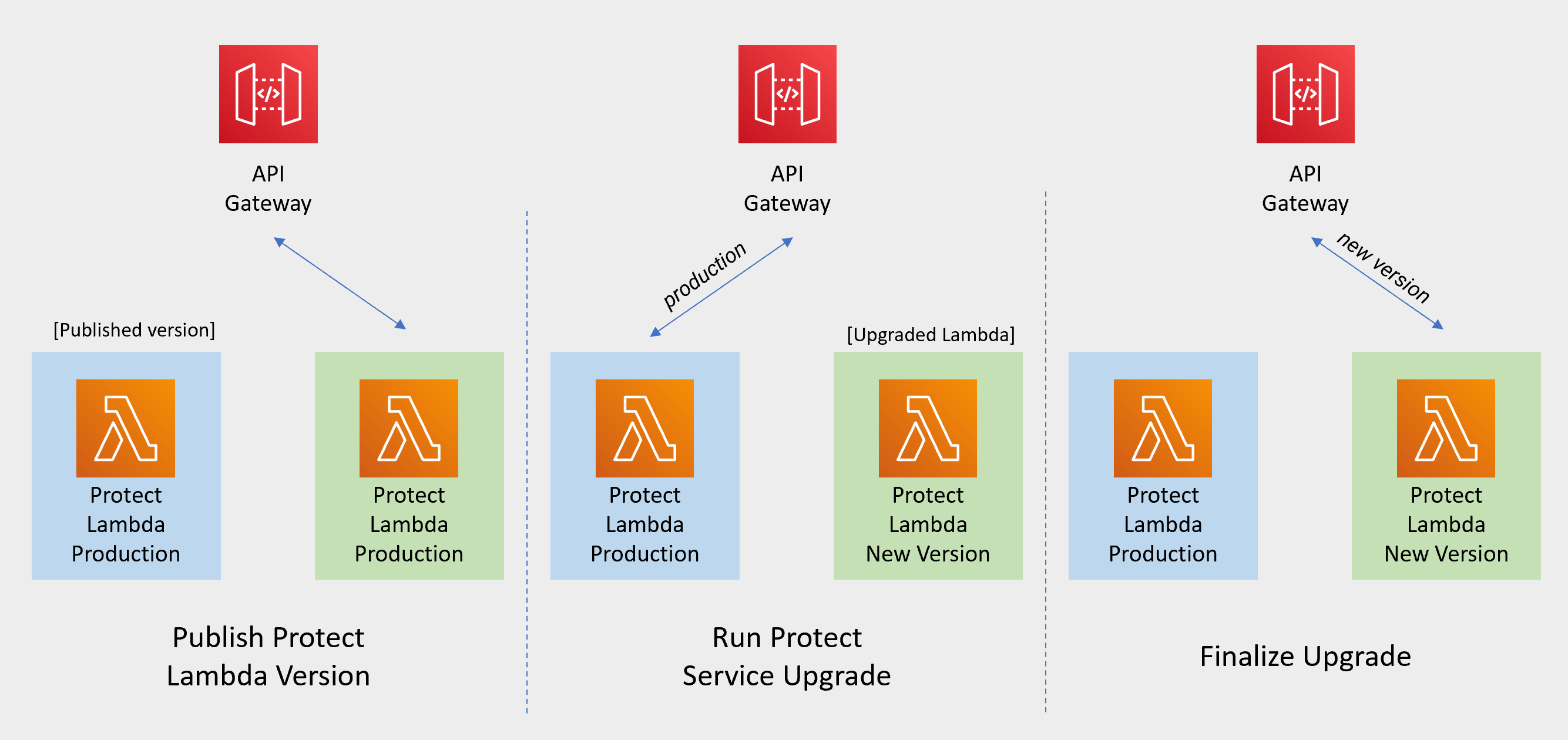

- 1.9: Upgrading To The Latest Version

- 1.10: Appendices

- 1.10.1: ADFS Federation using AWS Cognito User Pools

- 1.10.2: Installing the Policy Agent and Protector in Different AWS Accounts

- 1.10.3: Integrating Cloud Protect with PPC (Protegrity Provisioned Cluster)

- 1.10.4: Invoke Lambda Directly

- 1.10.5: Policy Agent - Custom VPC Endpoint Hostname Configuration

- 1.10.6: Protection Methods

- 1.10.7: Configuring Regular Expression to Extract Policy Username

- 1.10.8: Associating ESA Data Store With Cloud Protect Agent

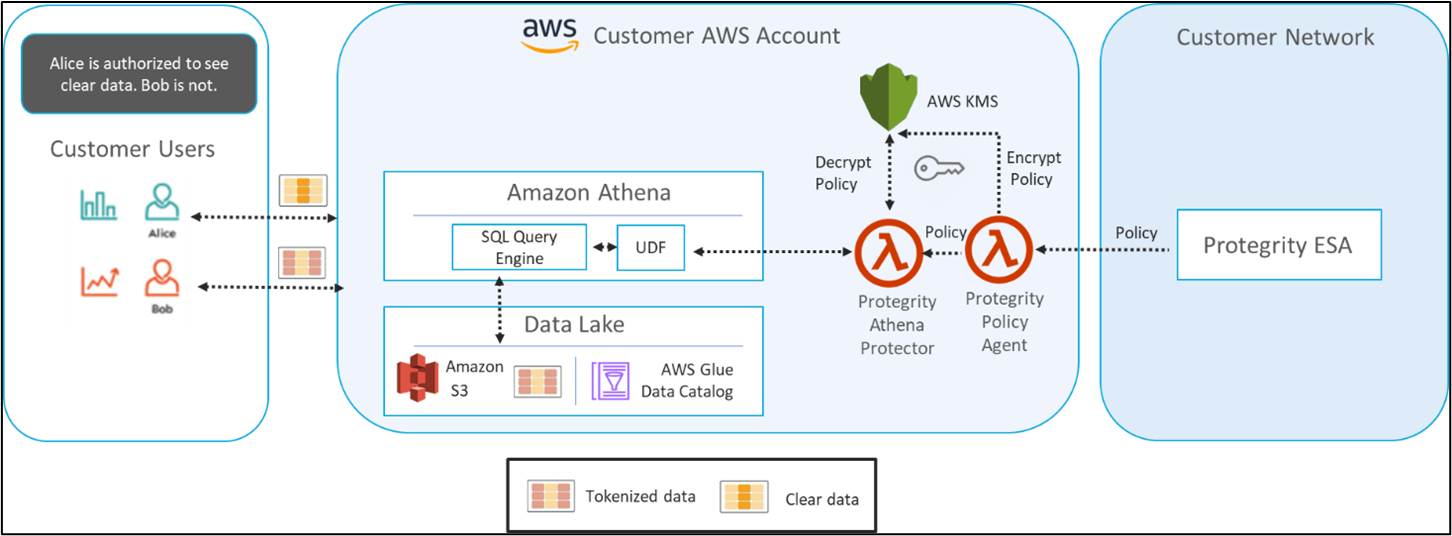

- 2: Athena

- 2.1: Overview

- 2.2: Architecture

- 2.3: Installation

- 2.3.1: Prerequisites

- 2.3.2: Pre-Configuration

- 2.3.3: Protect Service Installation

- 2.3.4: Policy Agent Installation

- 2.3.5: Audit Log Forwarder Installation

- 2.4: Understanding Athena Objects

- 2.5: Performance

- 2.6: Audit Logging

- 2.7: No Access Behavior

- 2.8: Known Limitations

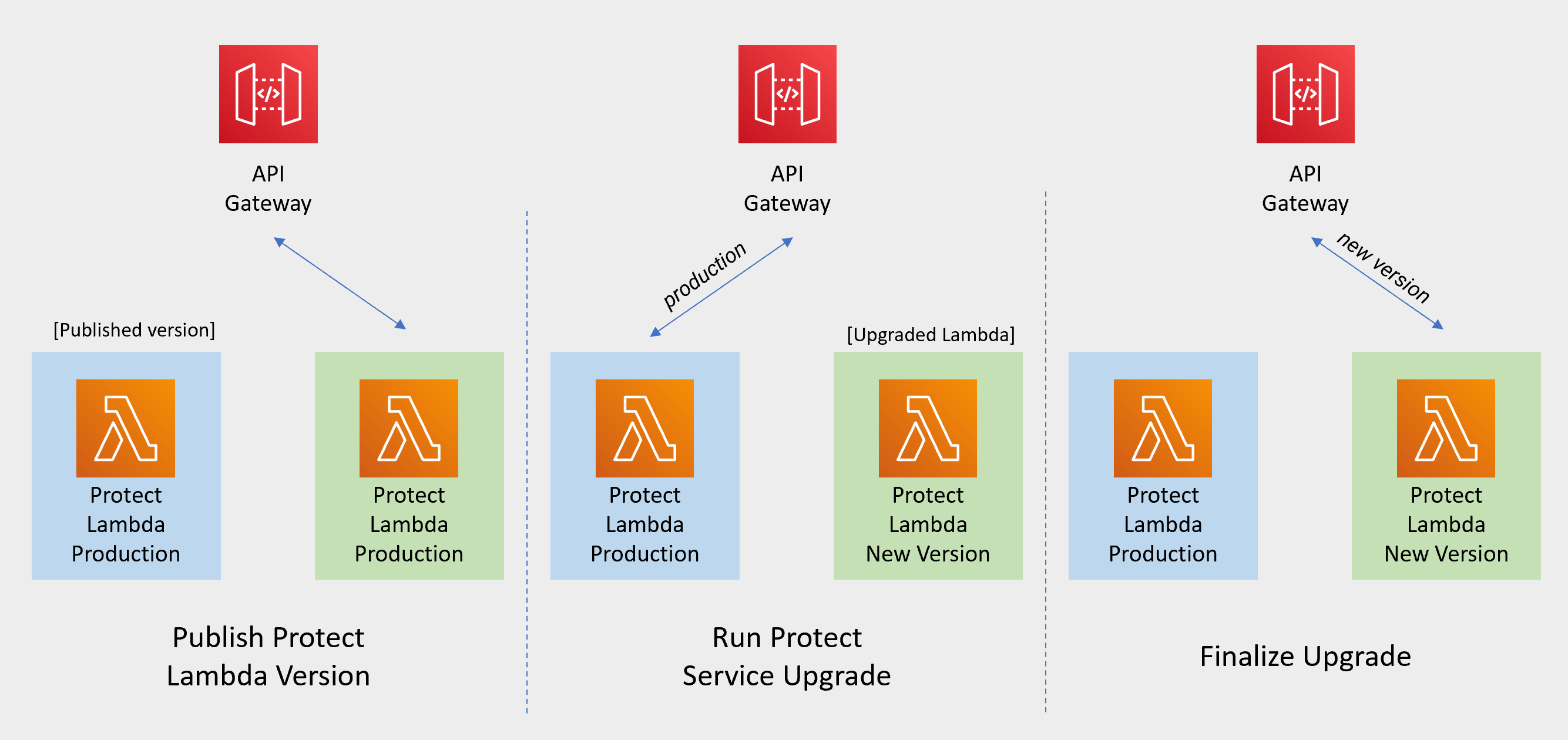

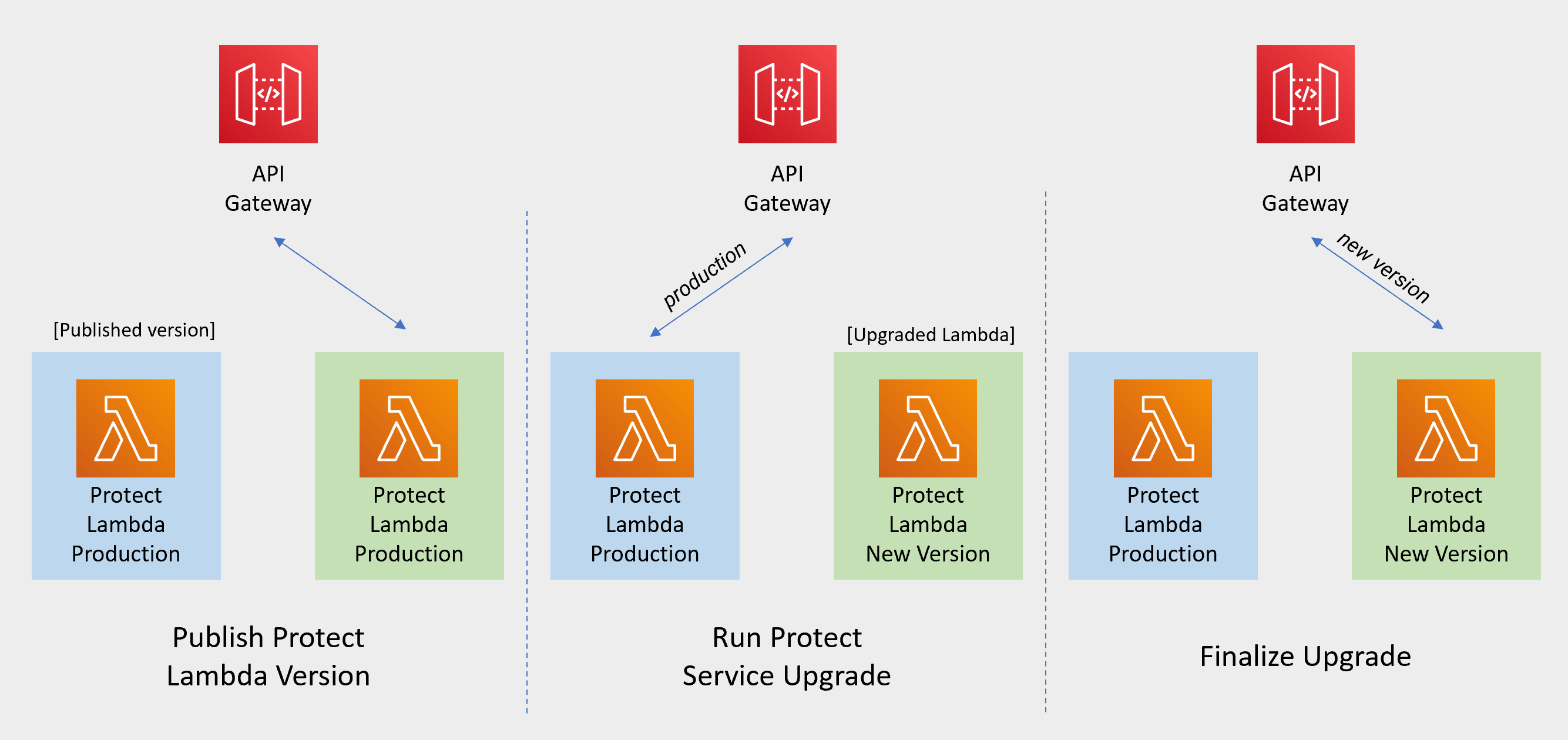

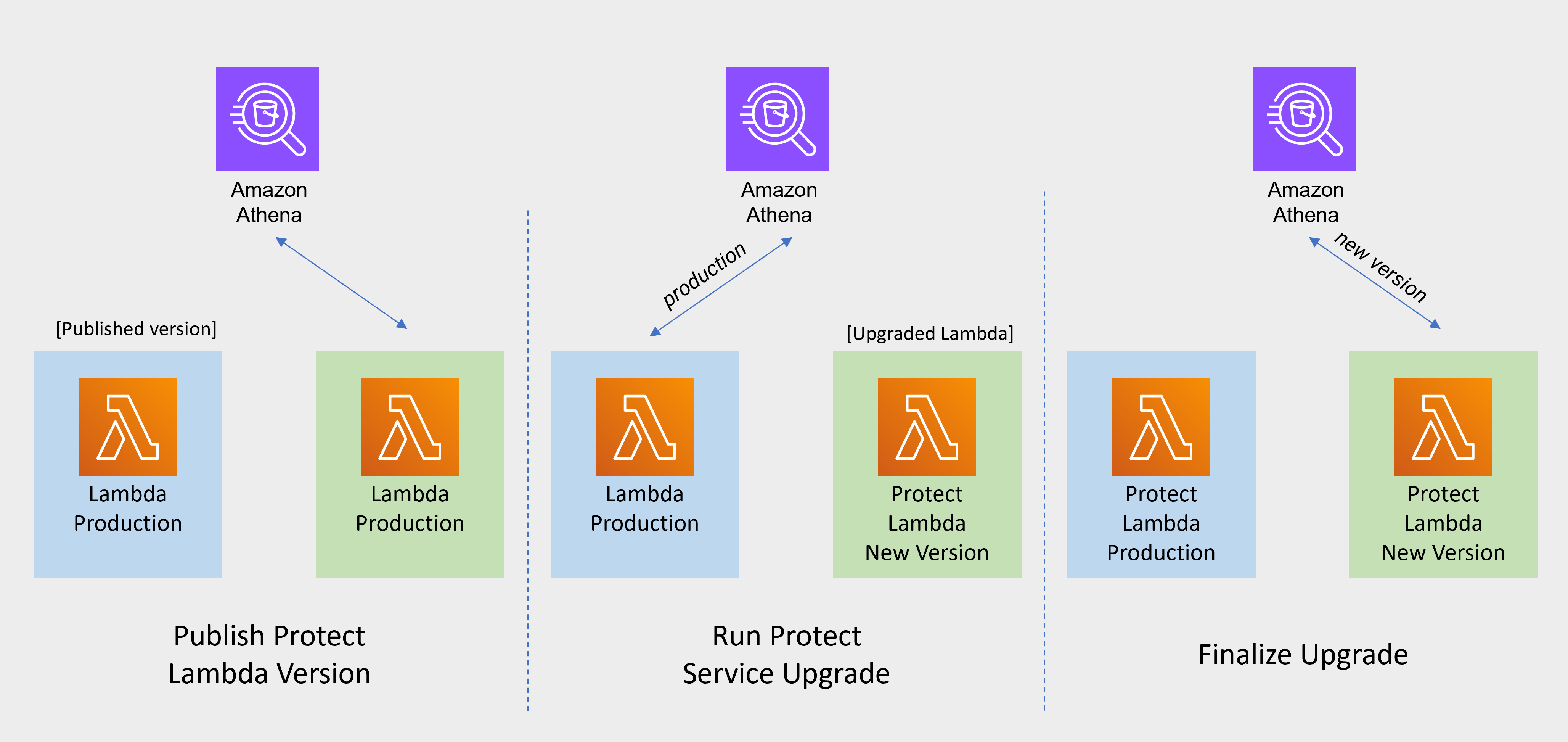

- 2.9: Upgrading To The Latest Version

- 2.10: Appendices

- 2.10.1: Sample Athena External Functions

- 2.10.2: Installing the Policy Agent and Protector in Different AWS Accounts

- 2.10.3: Integrating Cloud Protect with PPC (Protegrity Provisioned Cluster)

- 2.10.4: Security Recommendations

- 2.10.5: Policy Agent - Custom VPC Endpoint Hostname Configuration

- 2.10.6: Protection Methods

- 2.10.7: Configuring Regular Expression to Extract Policy Username

- 2.10.8: Associating ESA Data Store With Cloud Protect Agent

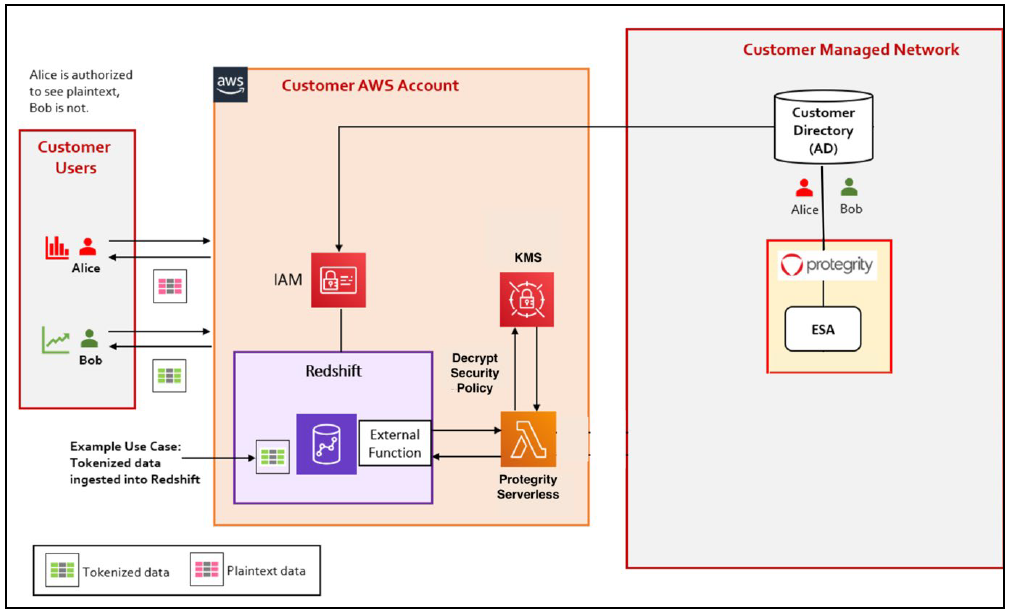

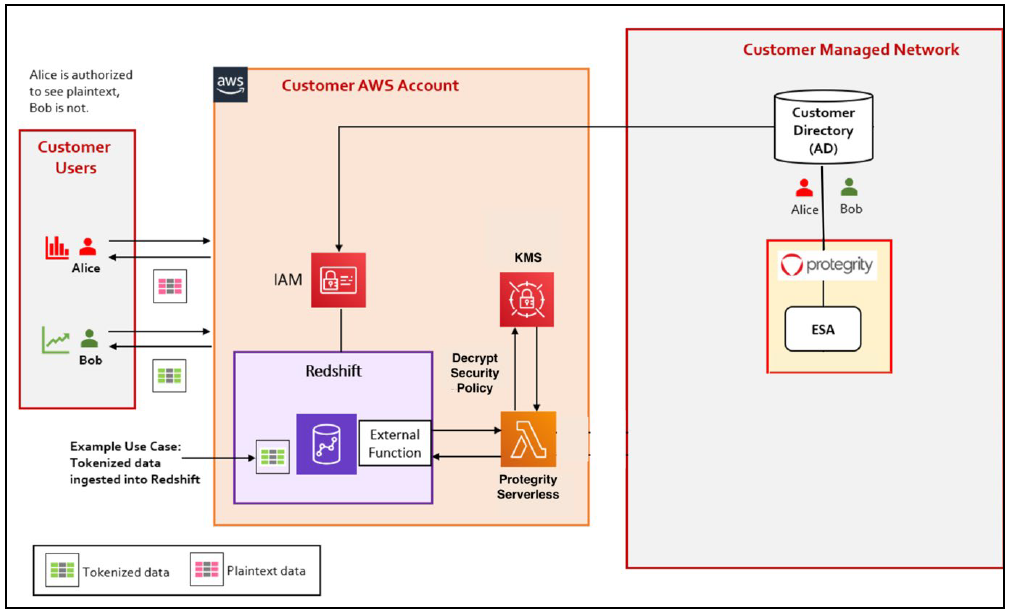

- 3: Redshift

- 3.1: Overview

- 3.2: Architecture

- 3.3: Installation

- 3.3.1: Prerequisites

- 3.3.2: Pre-Configuration

- 3.3.3: Protect Service Installation

- 3.3.4: Policy Agent Installation

- 3.3.5: Audit Log Forwarder Installation

- 3.4: Understanding Redshift Objects

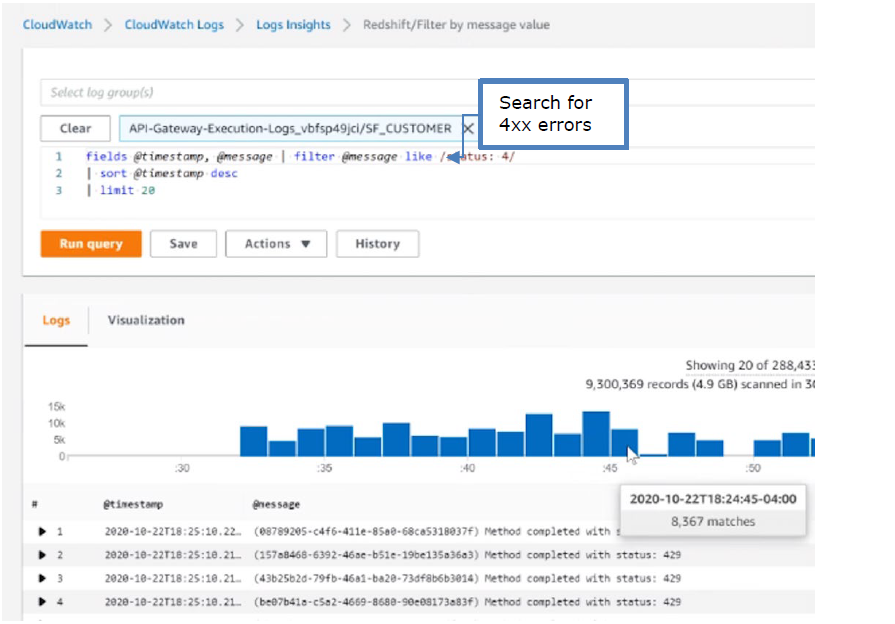

- 3.5: Performance

- 3.6: Audit Logging

- 3.7: No Access Behavior

- 3.8: Known Limitations

- 3.9: Upgrading To The Latest Version

- 3.10: Appendices

- 3.10.1: Installing the Policy Agent and Protector in Different AWS Accounts

- 3.10.2: Integrating Cloud Protect with PPC (Protegrity Provisioned Cluster)

- 3.10.3: Policy Agent - Custom VPC Endpoint Hostname Configuration

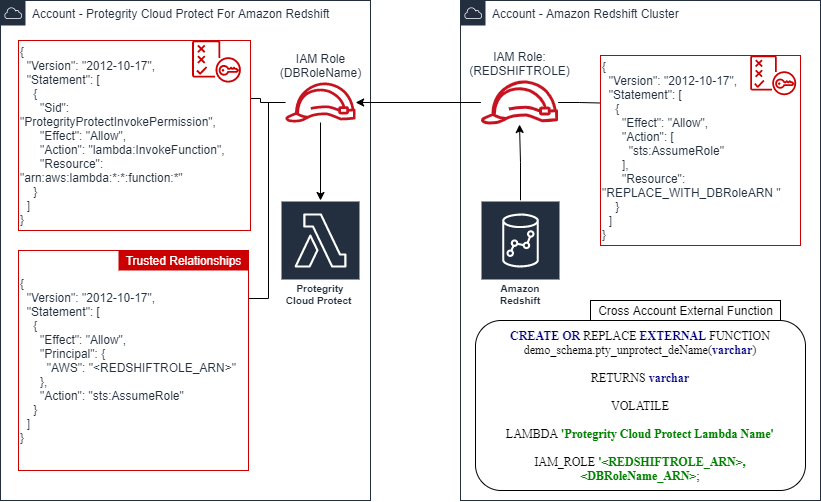

- 3.10.4: Redshift Cross-Account Configuration

- 3.10.5: Sample Redshift External Function

- 3.10.6: Configuring Regular Expression to Extract Policy Username

- 3.10.7: Associating ESA Data Store With Cloud Protect Agent

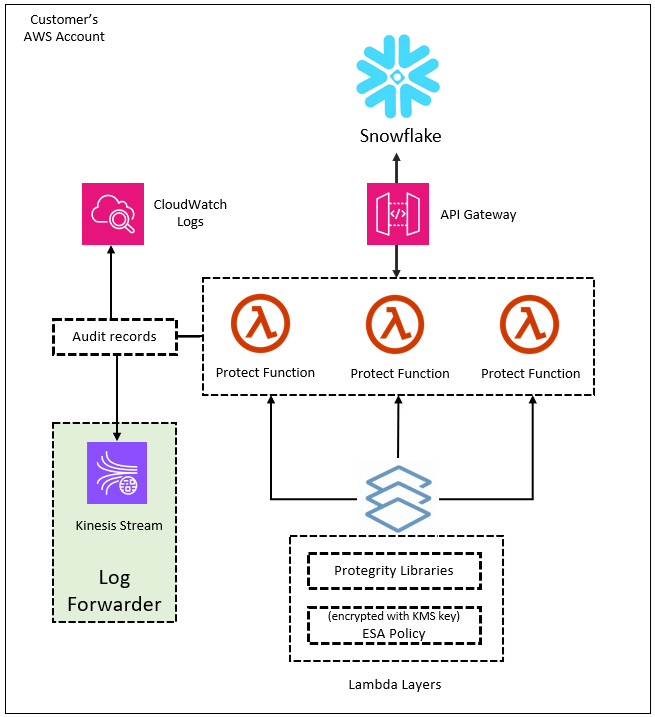

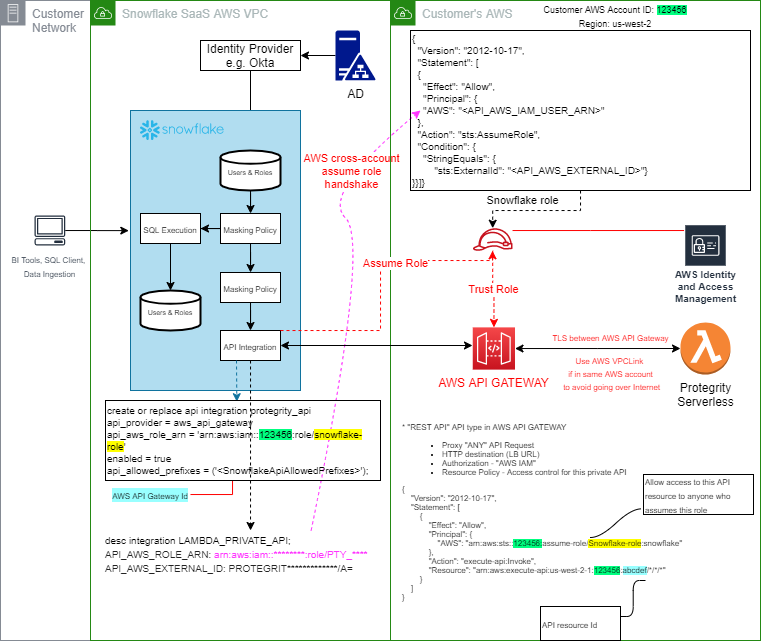

- 4: Snowflake

- 4.1: Overview

- 4.2: Installation

- 4.2.1: Pre-Configuration

- 4.2.2: Prerequisites

- 4.2.3: Protect Service Installation

- 4.2.4: Policy Agent Installation

- 4.2.5: Audit Log Forwarder Installation

- 4.2.6:

- 4.2.7:

- 4.2.8:

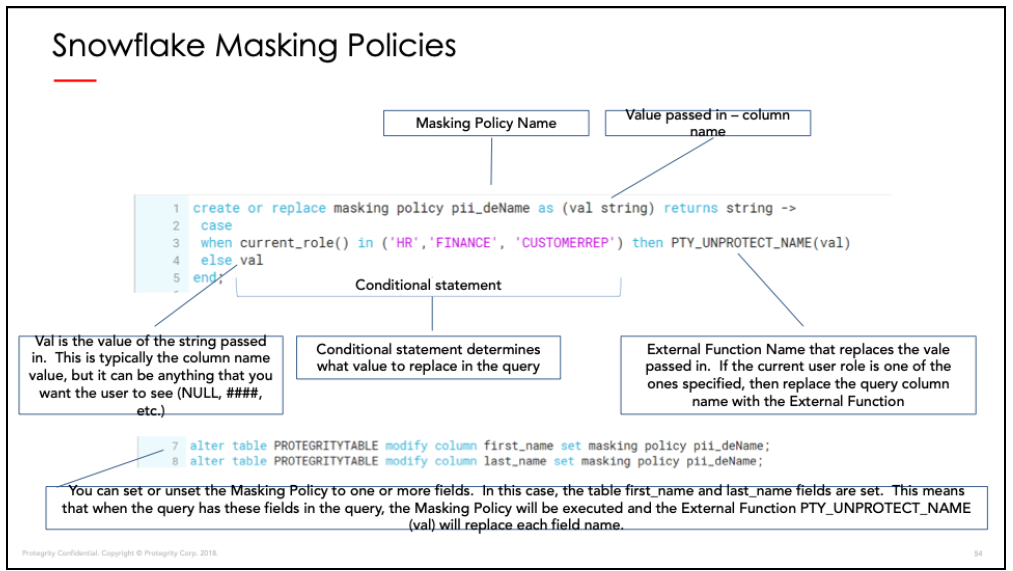

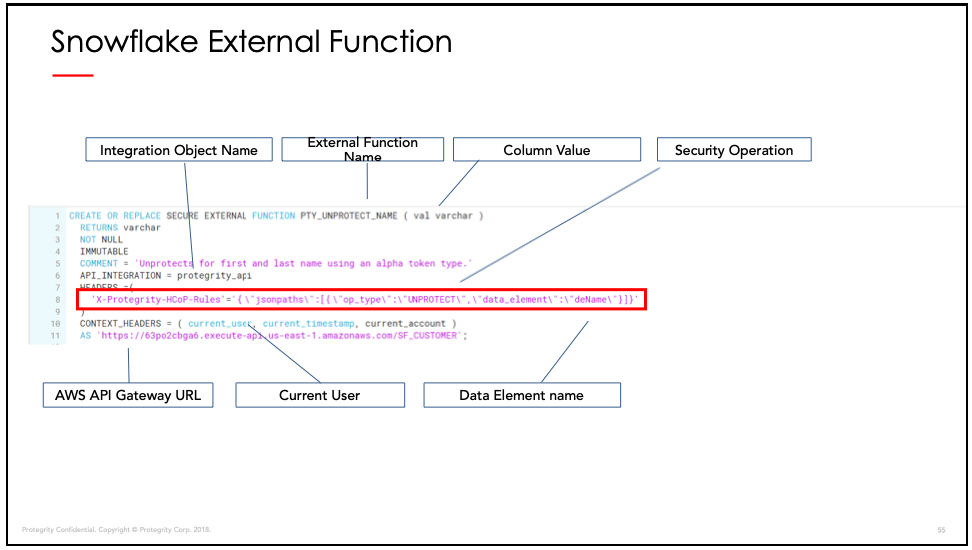

- 4.3: Understanding Snowflake Objects

- 4.3.1: External Functions

- 4.3.2: Snowflake Masking Policies

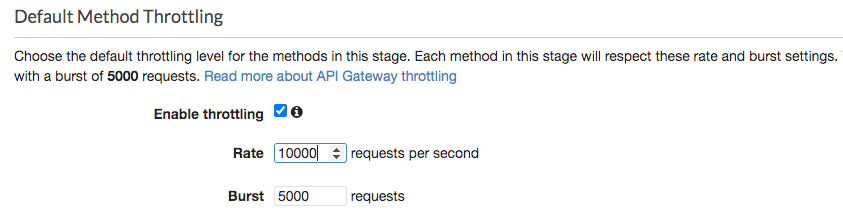

- 4.4: Performance

- 4.4.1: Performance Considerations

- 4.4.2: Sample Benchmarks

- 4.4.3: Concurrency

- 4.4.4: Concurrency Tuning

- 4.4.5: Log Forwarder Performance Tuning

- 4.5: Audit Logging

- 4.6: No Access Behavior

- 4.7: Upgrading To The Latest Version

- 4.8: Known Limitations

- 4.9: Appendices

- 4.9.1: Sample Snowflake External Function

- 4.9.2: Installing the Policy Agent and Protector in Different AWS Accounts

- 4.9.3: Integrating Cloud Protect with PPC (Protegrity Provisioned Cluster)

- 4.9.4: Policy Agent - Custom VPC Endpoint Hostname Configuration

- 4.9.5: Protection Methods

- 4.9.6: Configuring Regular Expression to Extract Policy Username

- 4.9.7: Associating ESA Data Store With Cloud Protect Agent

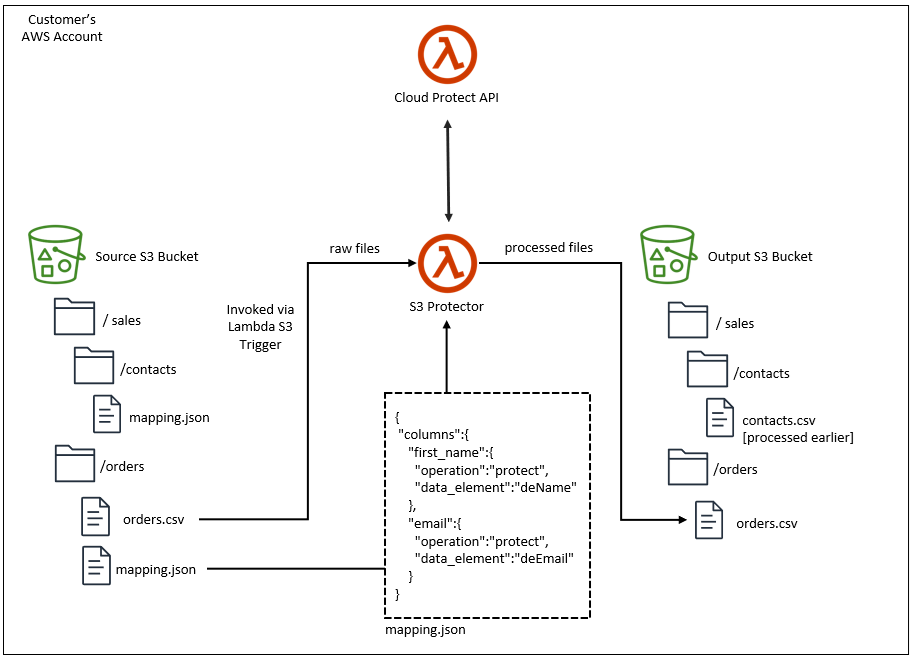

- 5: Amazon S3

- 5.1: Overview

- 5.2: Architecture

- 5.3: Installation

- 5.3.1: Prerequisites

- 5.3.2: Pre-Configuration

- 5.3.3: S3 Protector Service Installation

- 5.4: Mapping File Configuration

- 5.4.1: Mapping File

- 5.4.2: Column Mapping Rules

- 5.5: Performance

- 5.6: Known Limitations

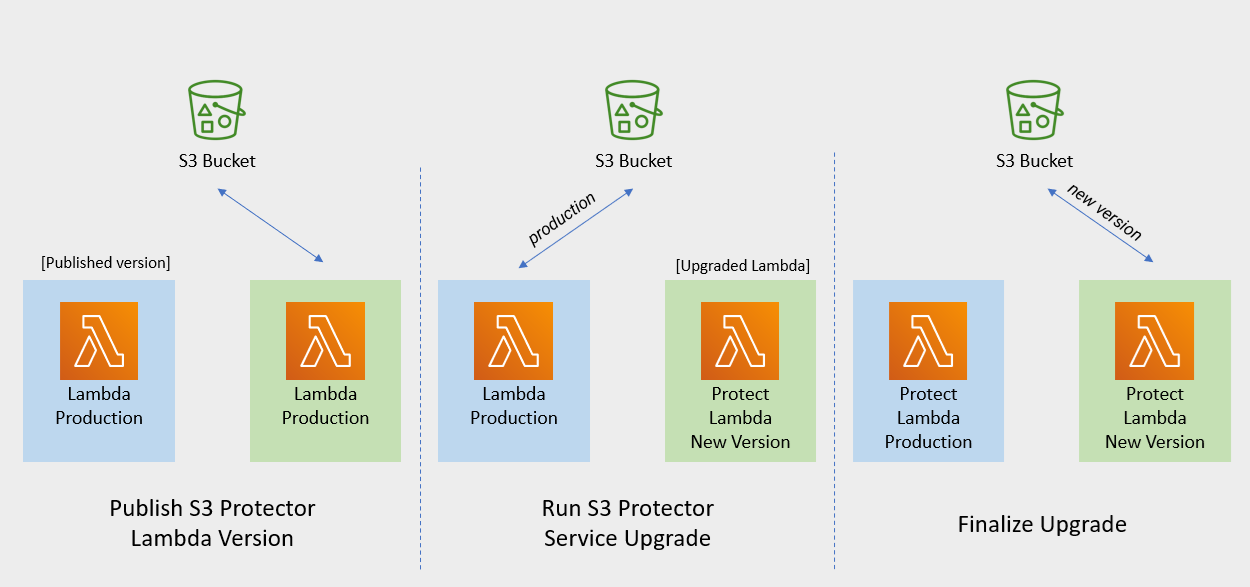

- 5.7: Upgrading To The Latest Version

- 5.8: Appendices

1 - Cloud API

This section describes the high-level architecture of Protegrity Cloud API, installation procedures, and performance guidance. It focuses on Protegrity-specific aspects and should be read in conjunction with the corresponding AWS documentation.

This guide may also be used with the Protegrity Enterprise Security Administrator Guide, which explains the mechanism for managing the data security policy.

1.1 - Overview

Solution Overview

Cloud API Protector on AWS is a cloud-native, serverless product for fine-grained data protection. This enables the invocation of Protegrity data protection cryptographic methods in cloud-native serverless technology. The benefits of serverless include rapid auto-scaling, performance, low administrative overhead, and reduced infrastructure costs compared to a server-based solution.

This product provides a data protection API end-point for clients. The product is designed to scale elastically and yield reliable query performance under extremely high concurrent loads. During idle use, the serverless product will scale completely down, providing significant savings in Cloud compute fees.

Protegrity utilizes a data security policy maintained by an Enterprise Security Administrator (ESA), similar to other Protegrity products. Using a simple REST API interface, authorized users can perform both de-identification (protect) and re-identification (unprotect) operations on data. A user’s individual capabilities are subject to privileges and policies defined by the Enterprise Security Administrator.

Analytics on Protected Data

Protegrity’s format- and length-preserving tokenization scheme makes it possible to perform analytics directly on protected data. Tokens are join-preserving, so protected data can be joined across datasets. Statistical analytics and machine learning training can often be performed without re-identifying protected data. However, a user or service account with authorized security policy privileges may re-identify subsets of data using the Cloud API Protector on AWS service.

Features

Cloud API Protector on AWS incorporates Protegrity’s patent-pending vaultless tokenization capabilities into cloud-native serverless technology. Combined with an ESA security policy, the protector provides the following features:

- Role-based access control (RBAC) to protect and unprotect (re-identify) data depending on the user privileges.

- Policy enforcement features of other Protegrity application protectors.

For more information about the available protection options, such as data types, tokenization or encryption types, and length-preserving and non-preserving tokens, refer to Protection Methods Reference.

1.2 - Architecture

Protector Deployment Architecture

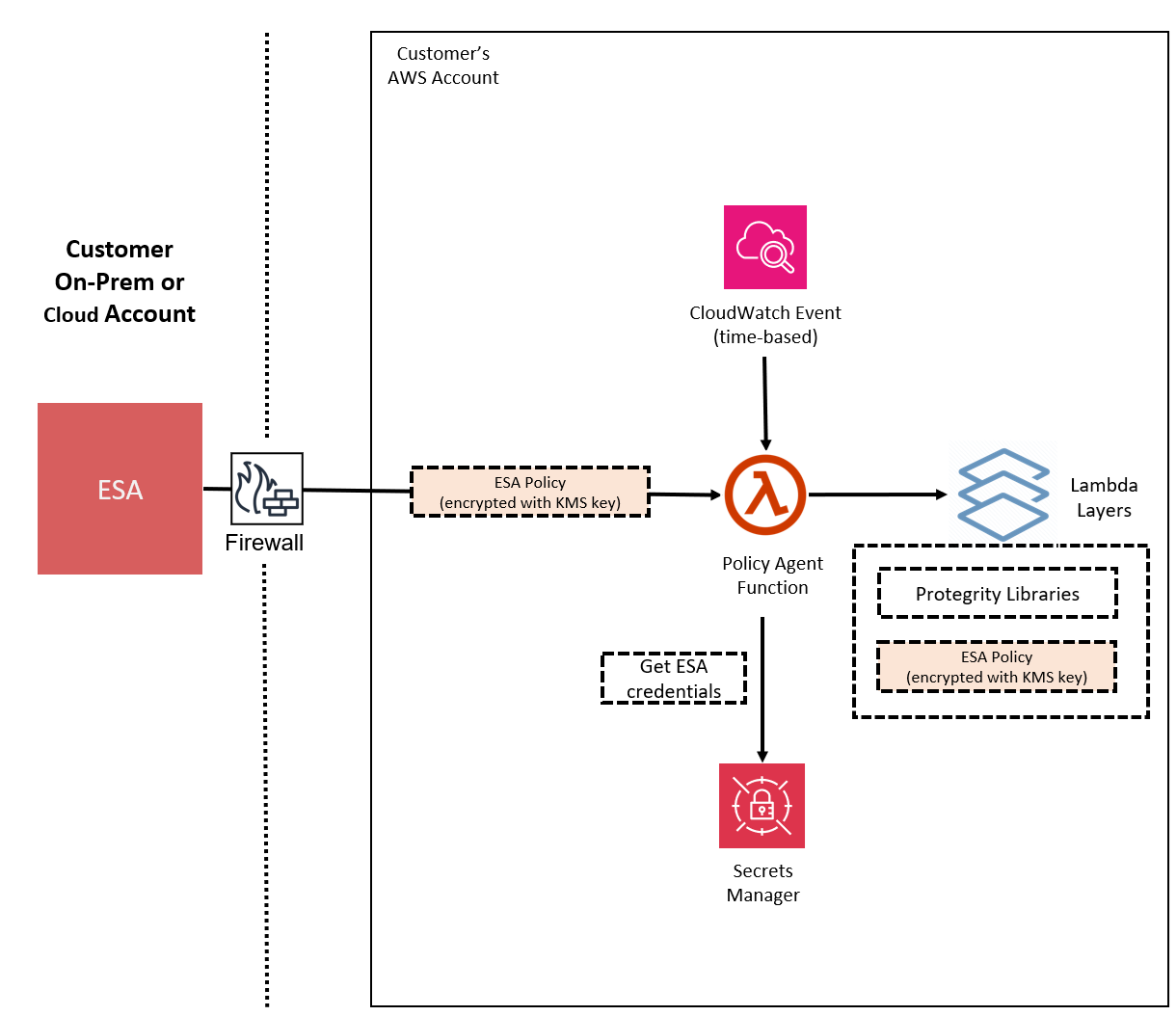

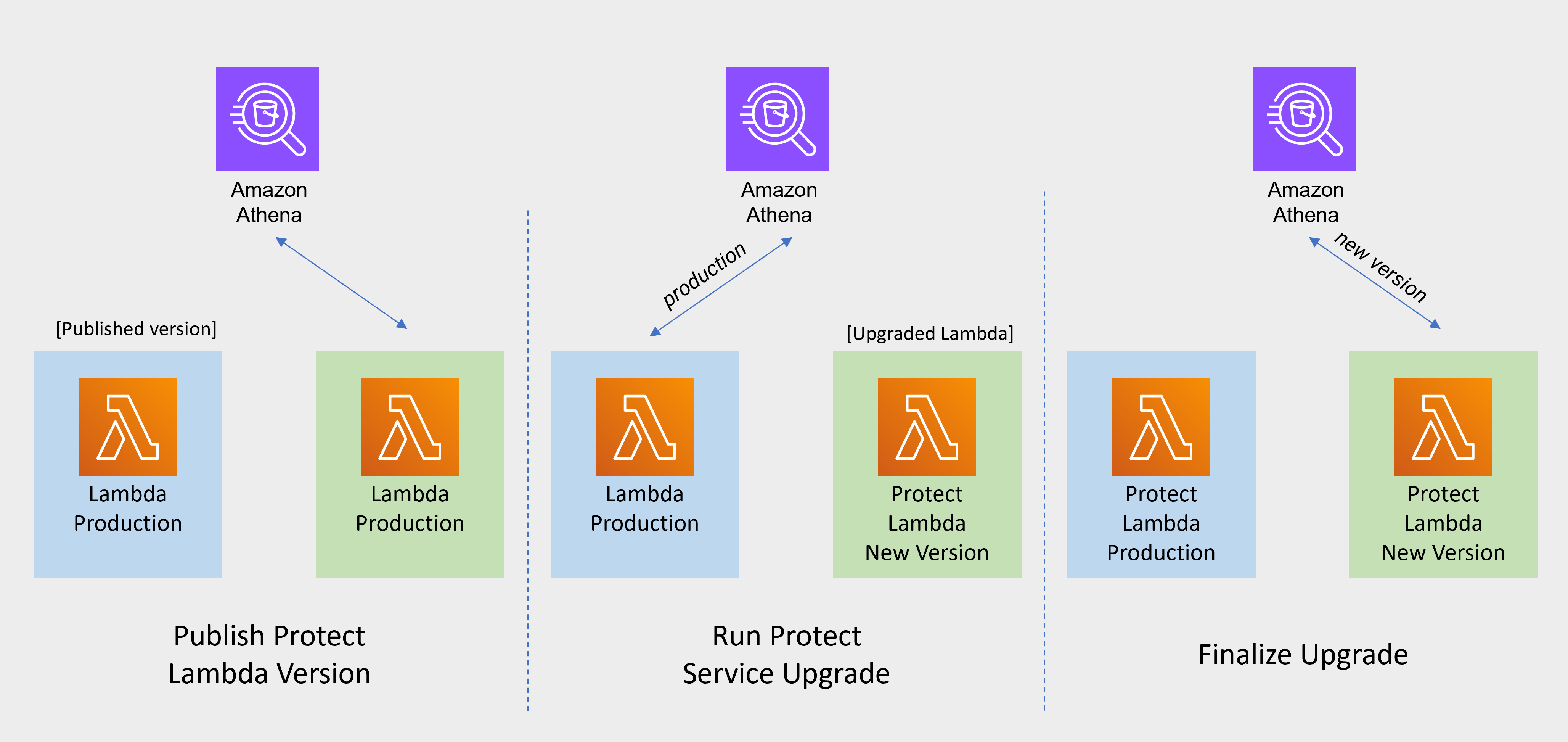

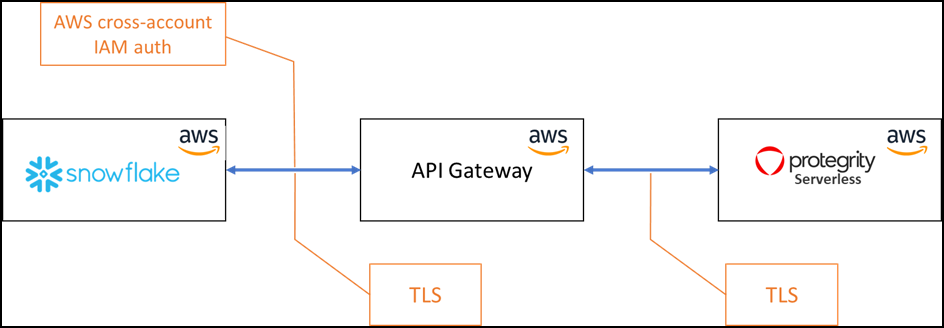

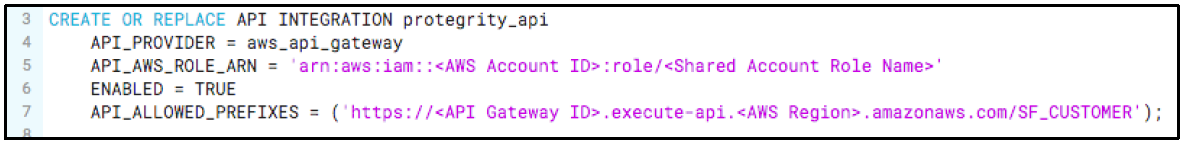

The product will be deployed in the Customer’s AWS account. The product incorporates Protegrity’s vaultless tokenization engine within an AWS Lambda Function. The encrypted data security policy from an ESA is deployed as a static resource through an Amazon Lambda Layer. The policy is decrypted in memory at runtime within the Lambda. This architecture allows Protegrity Serverless to be highly available and scale very quickly without direct dependency on any other Protegrity services.

The product exposes a remote data protection service. Each REST request includes a micro-batch of data to process and the data element type. The function applies the data security policy including user authorization and returns a corresponding response.
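As a minimal sketch of what one micro-batch request body might look like, the following Python builds the JSON payload. The field names `user`, `data_element`, and `data` are taken from the connectivity test later in this guide; the helper name `build_request_body` is hypothetical and not part of the product.

```python
import json


def build_request_body(user, data_element, values):
    """Serialize one micro-batch into the JSON body shape used in this guide.

    The helper itself is illustrative; only the field names come from the guide.
    """
    if not values:
        raise ValueError("a micro-batch needs at least one value")
    return json.dumps({
        "user": user,                  # policy user the request runs as
        "data_element": data_element,  # data element name from the security policy
        "data": list(values),          # the micro-batch of values to process
    })


body = build_request_body("test_user", "alpha", ["hello world!"])
print(body)
```

The same body shape would be sent to either the protect or the unprotect resource; how the request is signed or authorized depends on the access control options described in this section.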

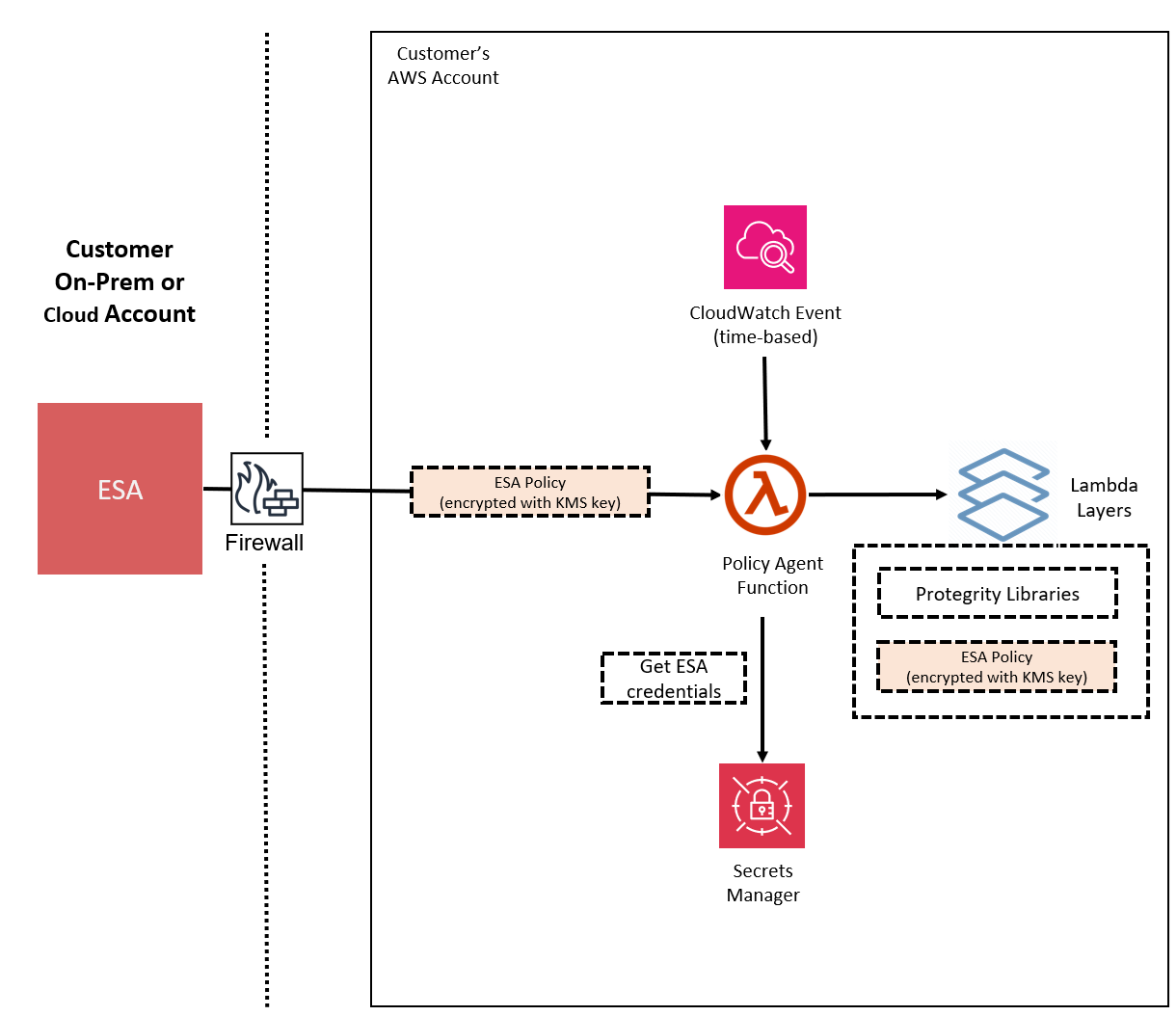

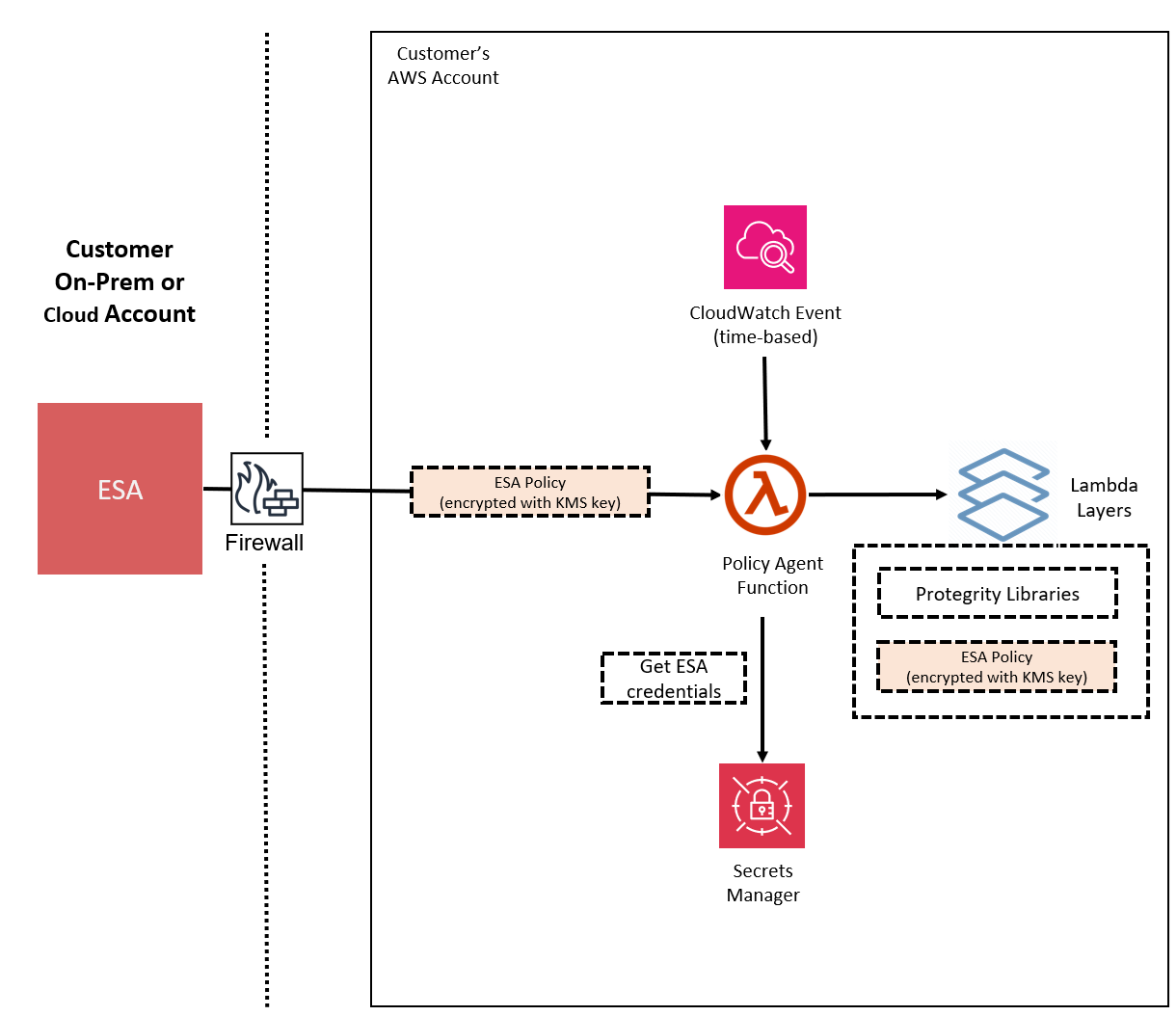

When used with an Enterprise Security Administrator (ESA) application, the security policy is synchronized through another serverless component called the Protegrity Policy Agent. The agent operates on a configurable schedule, fetches the policy from the ESA, performs additional envelope encryption using Amazon KMS, and deploys the policy into the Lambda Layer used by the serverless product. This solution can be configured to automatically provision the static policy or the final step can be performed on-demand by an administrator. The policy takes effect immediately for all new requests. There is no downtime for users during this process.

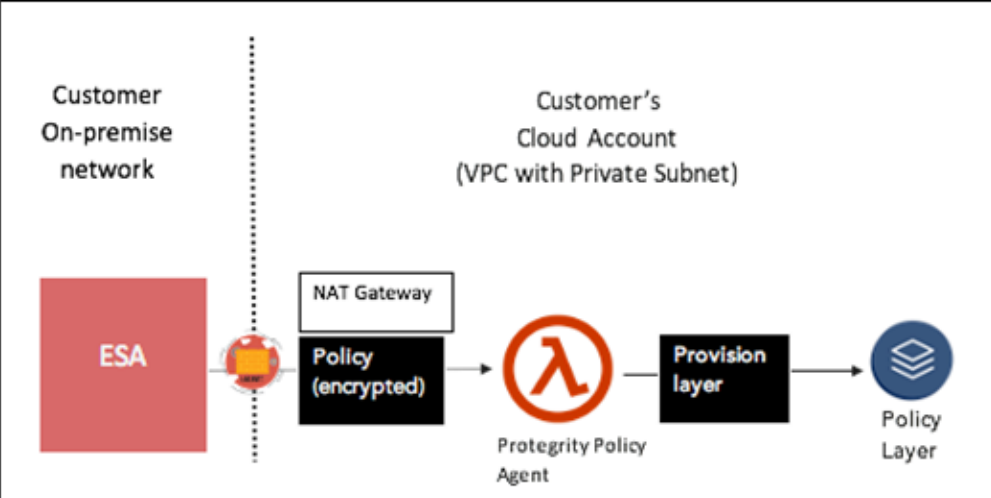

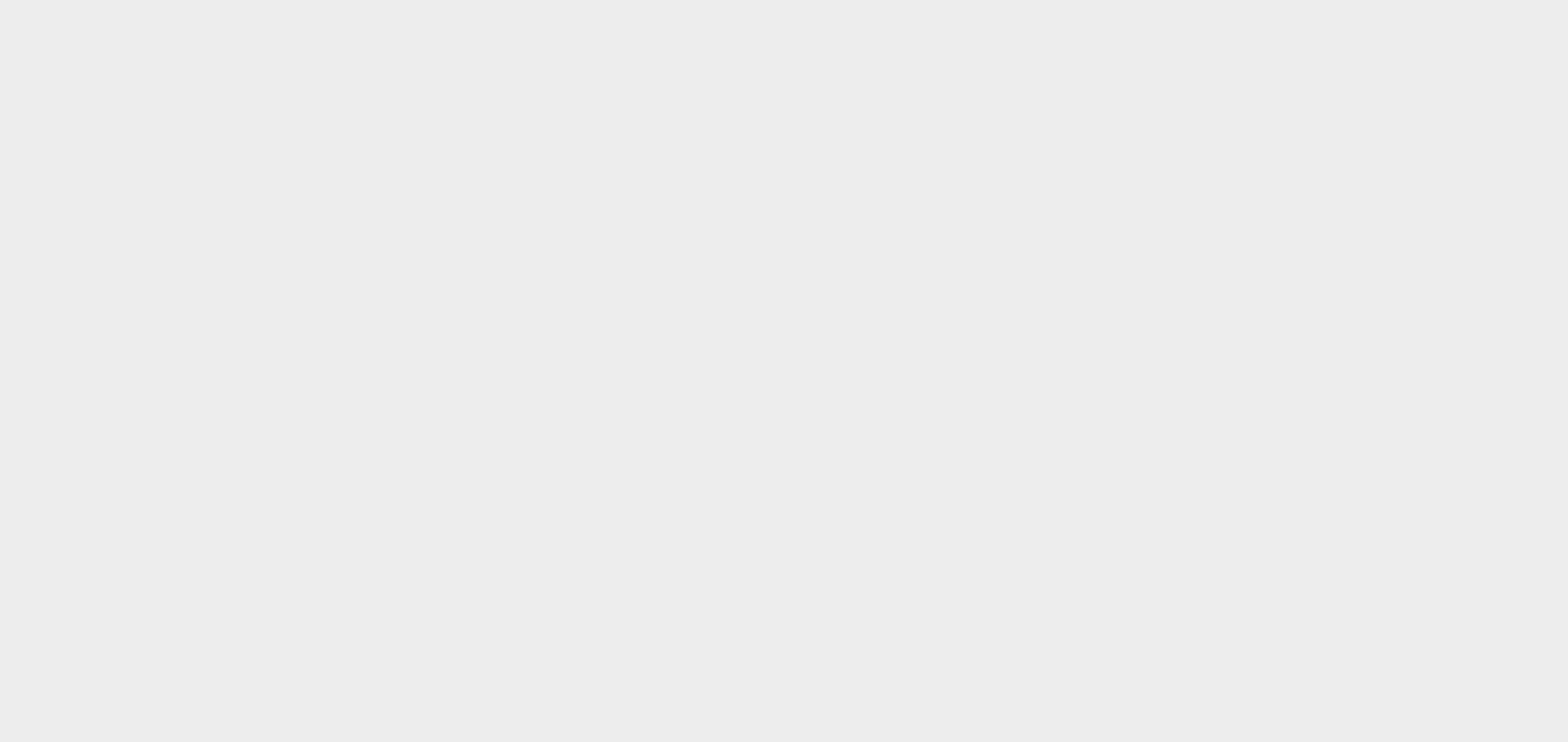

The following diagram shows the high-level architecture described above.

The following diagram shows a reference architecture for synchronizing the security policy from ESA.

The Protegrity Policy Agent requires network access to an Enterprise Security Administrator (ESA). Most organizations install the ESA on-premises. Therefore, it is recommended that the Policy Agent be installed in a private subnet within a Cloud VPC, using a NAT Gateway to enable this communication through a corporate firewall.

The ESA is a soft appliance that must be pre-installed on a separate server. It is used to create and manage security policies.

For more information about installing the ESA, and creating and managing policies, refer to the Policy Management Guide.

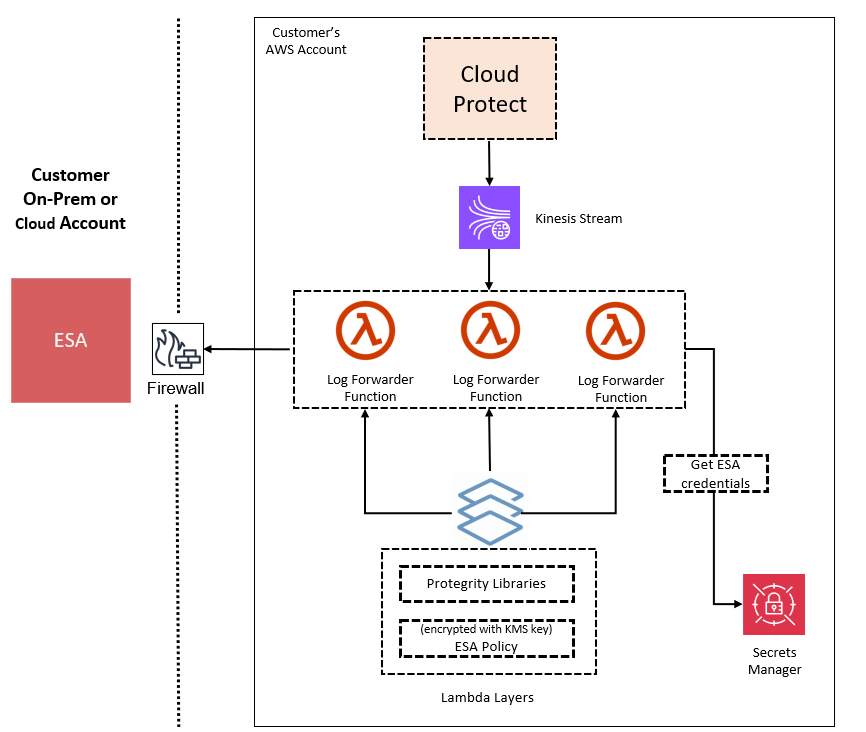

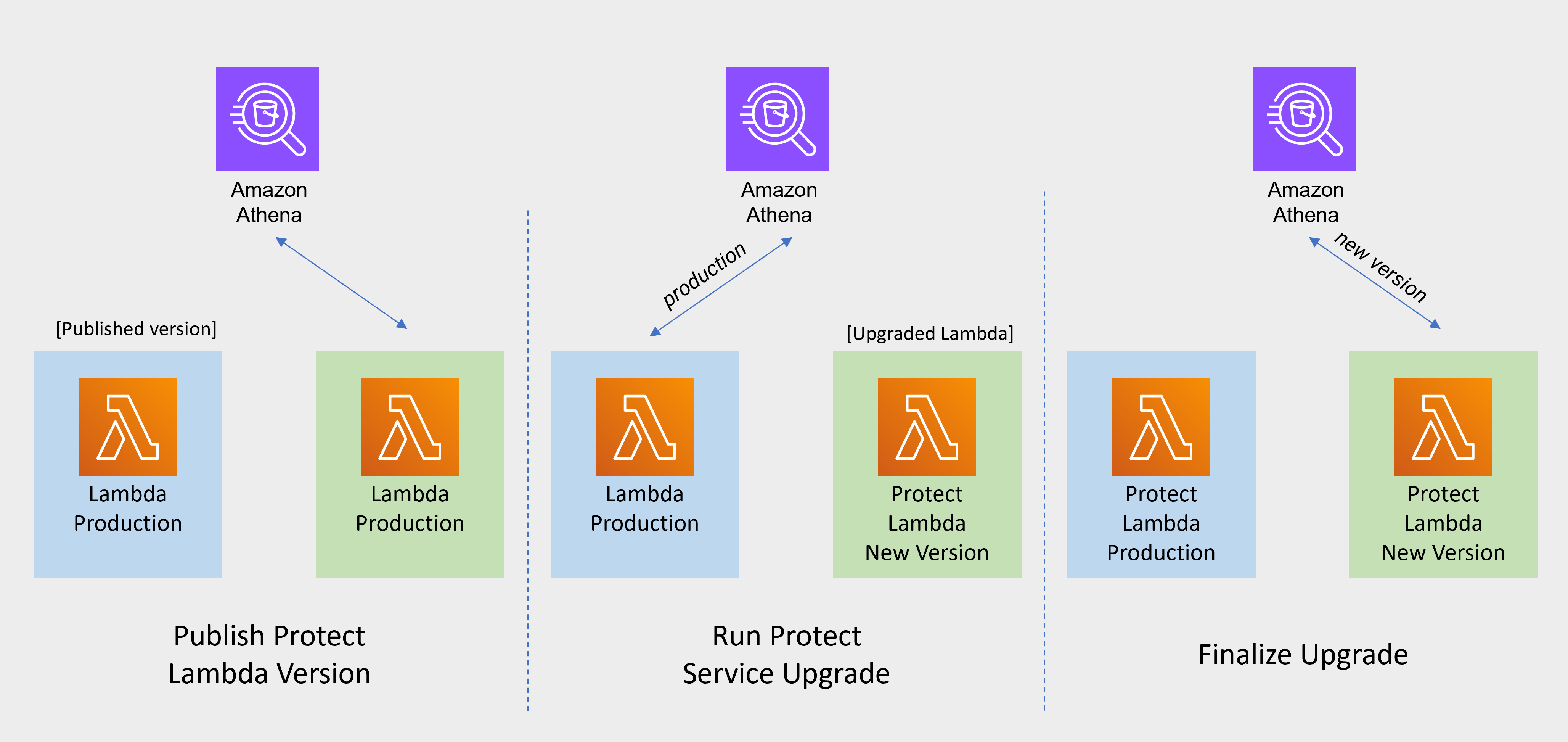

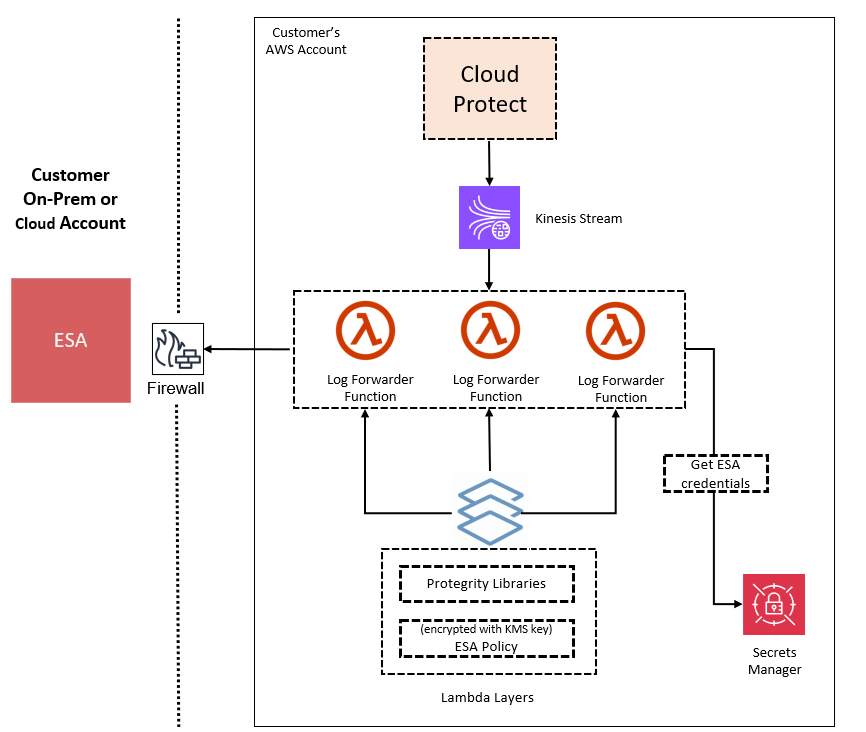

Log Forwarding Architecture

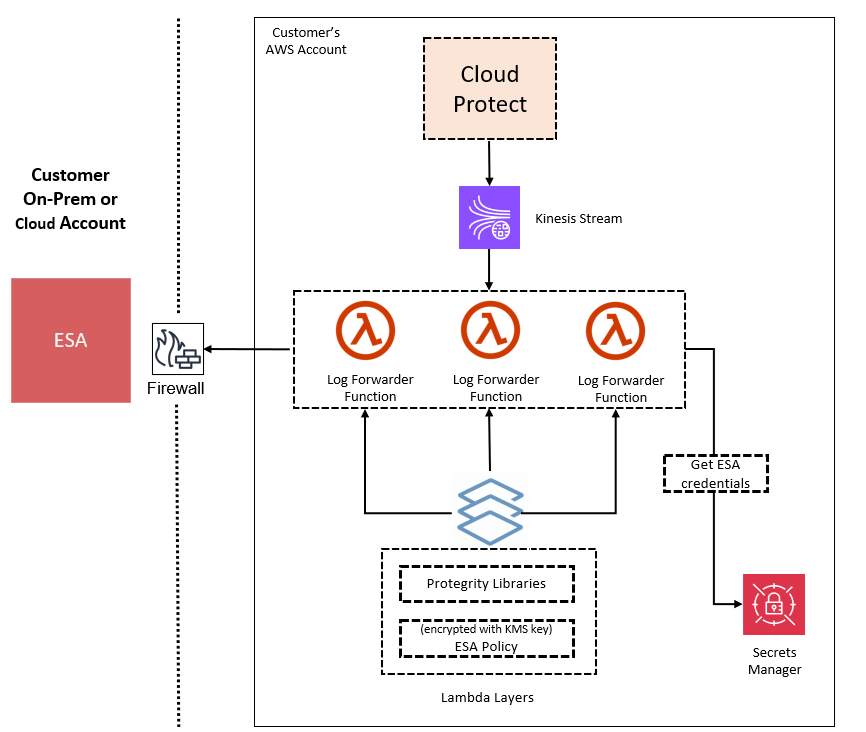

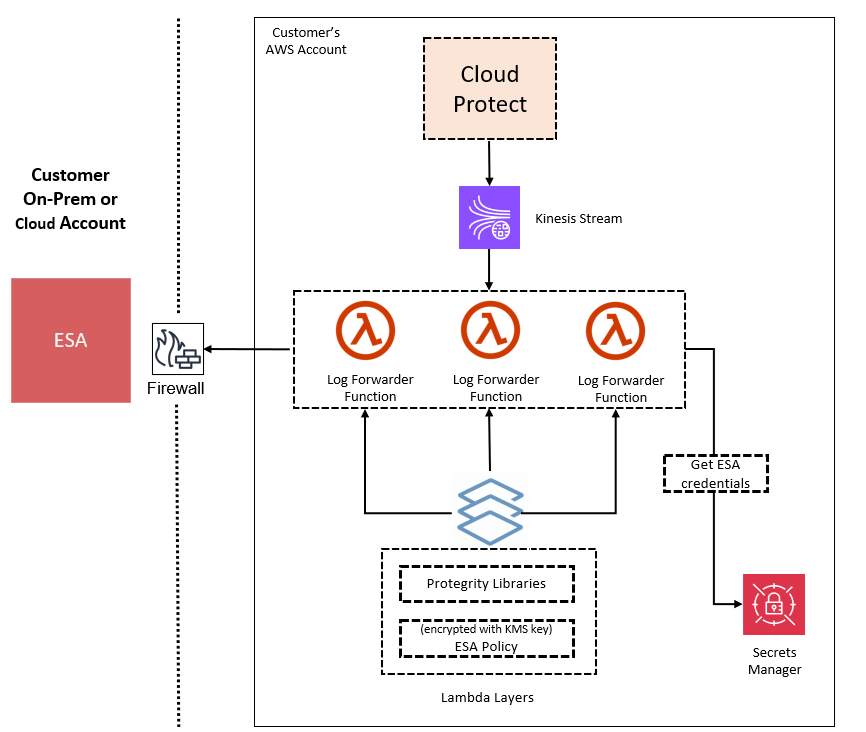

By default, audit logs are sent to CloudWatch as long as the function’s execution role has the necessary permissions. The Protegrity product can also be configured to send audit logs to the ESA. This configuration requires deploying the Log Forwarder component, which is available as part of the Protegrity product deployment bundle. The diagram below shows the additional resources deployed with the Log Forwarder component.

The log forwarder component includes an Amazon Kinesis data stream and the forwarder Lambda function. The Amazon Kinesis stream is used to batch audit records before sending them to the forwarder function, where similar audit logs are aggregated before being sent to the ESA. Aggregation rules are described in the Protegrity Log Forwarding guide. When the protector function is configured to send audit logs to the log forwarder, audit logs are aggregated on the protector function before being sent to Amazon Kinesis. Due to specifics of the Lambda runtime lifecycle, audit logs may take up to 15 minutes before being sent to Amazon Kinesis. The protector function exposes a configuration option to minimize this time, which is described in the protector function installation section.
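To illustrate the general idea of aggregating similar audit records, here is a small Python sketch. It is not the product's aggregation logic: the record fields (`user`, `data_element`, `operation`, `success`) and the grouping key are assumptions made for this example, and the actual rules are those in the Protegrity Log Forwarding guide.

```python
from collections import Counter


def aggregate_audit_logs(records):
    """Collapse records that share the same key fields into one record with a count.

    Field names and the grouping key are hypothetical, chosen only for illustration.
    """
    counts = Counter(
        (r["user"], r["data_element"], r["operation"], r["success"])
        for r in records
    )
    return [
        {"user": u, "data_element": d, "operation": o, "success": s, "count": n}
        for (u, d, o, s), n in counts.items()
    ]


batch = [
    {"user": "alice", "data_element": "alpha", "operation": "protect", "success": True},
    {"user": "alice", "data_element": "alpha", "operation": "protect", "success": True},
    {"user": "bob", "data_element": "alpha", "operation": "unprotect", "success": False},
]
for entry in aggregate_audit_logs(batch):
    print(entry)
```

Aggregating before forwarding reduces the number of records sent downstream, at the cost of losing the individual timestamps unless they are carried separately.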

The security of audit logs is ensured by using an HTTPS connection on each link of the communication between the protector function and the ESA. The integrity and authenticity of audit logs are additionally checked on the log forwarder, which verifies the signature of each individual log. Signature verification is done upon arrival from Amazon Kinesis, before aggregation is applied. If a signature cannot be verified, the log is forwarded as is to the ESA, where additional signature verification can be configured. The log forwarder function uses a client certificate to authenticate calls to the ESA.

To learn more about the structure of individual audit log entries and the purpose of audit logs, refer to the Audit Logging section in this document. Installation instructions can be found in Audit Log Forwarder Installation.

Audit log forwarding requires network access from the cloud to the ESA. Most organizations install the ESA on-premises. Therefore, it is recommended that the Log Forwarder function be installed in a private subnet within a Cloud VPC, using a NAT Gateway to enable this communication through a corporate firewall.

Access Control

The following mechanisms are available for controlling and restricting access to the endpoint:

- IAM policy: The IAM resource policy controls which IAM users or services may invoke Protect operations. IAM policies can be applied to the API Gateway and/or the Lambda directly depending on allowable access patterns and the client.

- JWT tokens: The Lambda can be configured to use JSON Web Tokens (JWT) with optional verification. JSON Web Tokens (JWT) are an open, industry-standard RFC 7519 method for representing claims securely between two parties. JWT provides a mechanism for implementing custom authentication or integrating with AWS Cognito.
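As a rough illustration of how a username claim can be read from a JWT, the following Python sketch decodes the payload segment and looks up a claim. It deliberately does not verify the signature, so it is only suitable for inspection or debugging; the helper names are hypothetical, and the `cognito:username` claim is used because it is the default claim named later in this guide.

```python
import base64
import json


def decode_jwt_claims(token):
    """Return the claims of a JWT WITHOUT verifying its signature."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("expected header.payload.signature")
    payload = parts[1]
    payload += "=" * (-len(payload) % 4)  # restore stripped base64 padding
    return json.loads(base64.urlsafe_b64decode(payload))


def pick_username(claims, claim_names=("cognito:username",)):
    """Return the first claim present, trying claim names in order."""
    for name in claim_names:
        if name in claims:
            return claims[name]
    return None


def _b64url(obj):
    """Encode a dict as an unpadded base64url JSON segment (demo helper)."""
    raw = json.dumps(obj).encode()
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


# Unsigned demo token built locally; a real token comes from the identity provider.
demo_token = ".".join([
    _b64url({"alg": "none"}),
    _b64url({"cognito:username": "test_user"}),
    "signature-placeholder",
])
print(pick_username(decode_jwt_claims(demo_token)))
```

In a real deployment the token signature should be verified, either by the API Gateway or by the Lambda function as described in this guide, before any claim is trusted.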

AWS Resources

Access and authorization between various AWS services involved in this architecture are achieved through IAM resource policies. For instance, the Amazon Lambda resource-based policy can restrict requests to the Amazon API Gateway or optionally allow direct invocation to the Lambda function itself. The installation steps provide a default recommended configuration. Alternative IAM role configurations are shown in the appendices in this document.

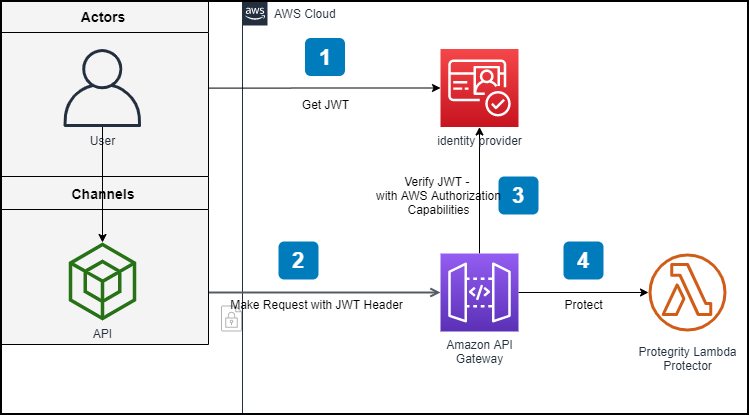

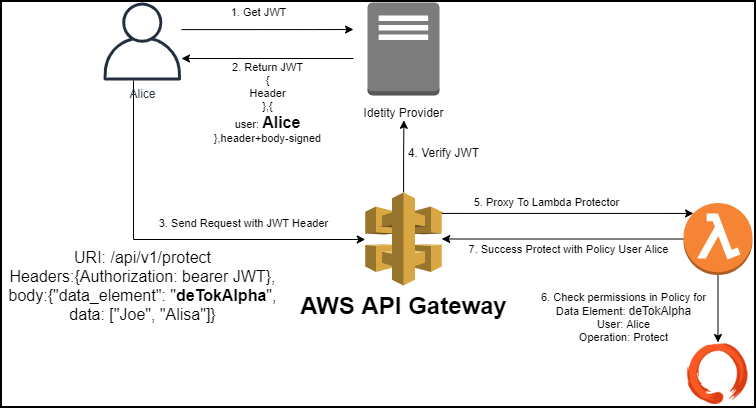

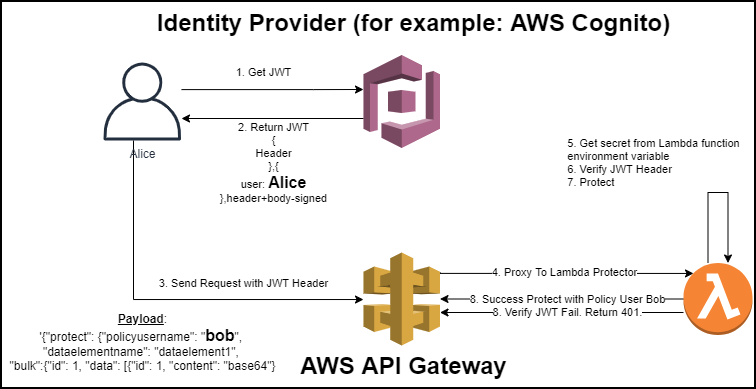

REST API Authentication

AWS API Gateway supports multiple mechanisms for controlling and managing access to the product.

In standard solutions, AWS API Gateway authorizes access tokens generated by the identity provider. When setting up an AWS API Gateway method to require authorization, customers can leverage AWS Signature Version 4 or Lambda authorizers to support their organization’s bearer token authentication strategy.

https://docs.aws.amazon.com/apigateway/latest/developerguide/apigateway-control-access-to-api.html

REST API Authorization

Once the request is authenticated and authorized in the API Gateway, the Protegrity Lambda Protector validates the user received in the JWT Authorization header, together with the data element and security operation (protect or unprotect) from the payload, against the Protegrity Security Policy.

If the API Gateway is not used, or is not configured to verify JWT tokens, the product can be configured to perform the JWT verification in the Lambda function itself.

1.3 - Installation

1.3.1 - Prerequisites

AWS Services

The following table describes the AWS services that may be a part of your Protegrity installation.

| Service | Description |
|---|---|
| Lambda | Provides serverless compute for Protegrity protection operations and the ESA integration to fetch policy updates or deliver audit logs. |
| API Gateway | Provides the endpoint and access control. |
| KMS | Provides secrets for envelope policy encryption/decryption for Protegrity. |
| Secrets Manager | Provides secrets management for the ESA credentials. |
| S3 | Intermediate storage location for the encrypted ESA policy layer. |
| Kinesis | Required if Log Forwarder is to be deployed. Amazon Kinesis is used to batch audit logs sent from protector function to ESA. |
| VPC & NAT Gateway | Optional. Provides a private subnet to communicate with an on-prem ESA. |
| CloudWatch | Application and audit logs, performance monitoring, and alerts. Scheduling for the policy agent. |

ESA Version Requirements

The Protector and Log Forwarder functions require a security policy from a compatible ESA version.

The table below shows compatibility between different Protector and ESA versions.

Note

For the latest information, refer to the Protegrity Compatibility Matrix.

| Protector Version | ESA 8.x | ESA 9.0 | ESA 9.1 & 9.2 | ESA 10.0 |
|---|---|---|---|---|
| 2.x | No | Yes | * | No |
| 3.0.x & 3.1.x | No | No | Yes | No |
| 3.2.x | No | No | Yes | * |
| 4.0.x | No | No | No | Yes |

| Legend | Meaning |
|---|---|
| Yes | Protector was designed to work with this ESA version |
| No | Protector will not work with this ESA version |
| * | Backward compatible policy download supported |

Prerequisites

| Requirement | Detail |
|---|---|
| Protegrity distribution and installation scripts | These artifacts are provided by Protegrity |
| Protegrity ESA 10.0+ | The Cloud VPC must be able to obtain network access to the ESA |
| AWS Account | Recommend creating a new sub-account for Protegrity Serverless |

Required Skills and Abilities

| Role / Skillset | Description |
|---|---|
| AWS Account Administrator | To run CloudFormation (or perform steps manually), create/configure a VPC and IAM permissions. |
| Protegrity Administrator | The ESA credentials required to extract the policy for the Policy Agent |
| Network Administrator | To open firewall to access ESA and evaluate AWS network setup |

Cheat Sheet Recommendation

Tip

During the installation you will need the output of earlier steps, such as resource names and IDs. We recommend copying the following cheat sheet into a text editor and filling in the information as you progress with the installation.

AWS Account ID: ___________________

AWS Region (AwsRegion): ___________________

S3 Bucket name (ArtifactS3Bucket): ___________________

KMS Key ARN (AWS_KMS_KEY_ID): ___________________

ProtectLambdaPolicyName: __________________

Role ARN (LambdaExecutionRoleArn): ___________________

ApiGatewayId: ________________________________

ProtectFunctionName: __________________________

ProtectLayerName: _____________________________

ESA IP address: ___________________

VPC name: ___________________

Subnet name: ___________________

Policy Agent Security Group Id: ___________________

ESA Credentials Secret Name: ___________________

Policy Name: ___________________

Agent Lambda IAM Execution Role Name: ___________________

1.3.2 - Pre-Configuration

Provide AWS sub-account

Identify or create an AWS account where the Protegrity solution will be installed. It is recommended that a new AWS sub-account be created. This can provide greater security controls and help avoid conflicts with other applications that might impact regional account limits. An individual with the Cloud Administrator role will be required for some subsequent installation steps.

AWS Account ID: ___________________

AWS Region (AwsRegion): ___________________

Create S3 bucket for Installing Artifacts

This S3 bucket will be used for the artifacts required by the CloudFormation installation steps. This S3 bucket must be created in the region that is defined in Provide AWS sub-account.

Sign in to the AWS Management Console and open the Amazon S3 console.

Change the region to the one determined in Provide AWS sub-account.

Click Create Bucket.

Enter a unique bucket name:

For example, protegrity-install.us-west-2.example.com

Upload the installation artifacts to this bucket. Protegrity will provide the following artifacts:

- protegrity-protect-<version>.zip

- protegrity-agent-<version>.zip

- protegrity-external-extension-<version>.zip

- protegrity-sample-policy-<version>.zip

Important

The deployment package you receive from Protegrity must be extracted to reveal the Protegrity artifacts. CloudFormation requires them in the provided .zip format. Do not extract the individual Protegrity artifacts. Upload these artifacts to the S3 bucket created.
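A quick way to confirm that all artifacts were uploaded is to compare the expected file names against the object keys in the bucket. The following Python sketch does only the comparison; the version string `4.0.0` and the helper name `missing_artifacts` are assumptions for illustration, and the list of uploaded keys would come from the S3 console or a bucket listing.

```python
# Artifact name prefixes as listed in this section.
ARTIFACT_PREFIXES = (
    "protegrity-protect",
    "protegrity-agent",
    "protegrity-external-extension",
    "protegrity-sample-policy",
)


def missing_artifacts(version, uploaded_keys):
    """Return the expected artifact file names that are not among uploaded_keys."""
    expected = [f"{prefix}-{version}.zip" for prefix in ARTIFACT_PREFIXES]
    present = set(uploaded_keys)
    return [name for name in expected if name not in present]


# Hypothetical version and bucket contents, for illustration only.
uploaded = ["protegrity-protect-4.0.0.zip", "protegrity-agent-4.0.0.zip"]
print(missing_artifacts("4.0.0", uploaded))
```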

S3 Bucket name (ArtifactS3Bucket): ___________________

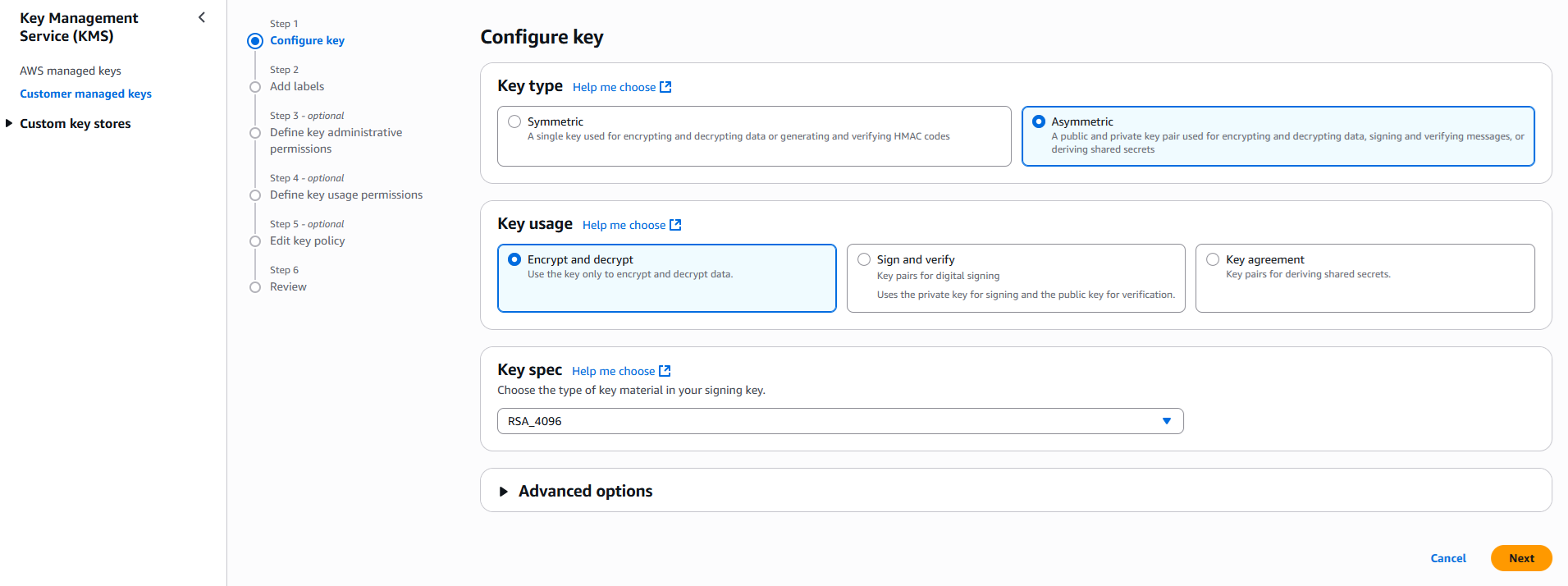

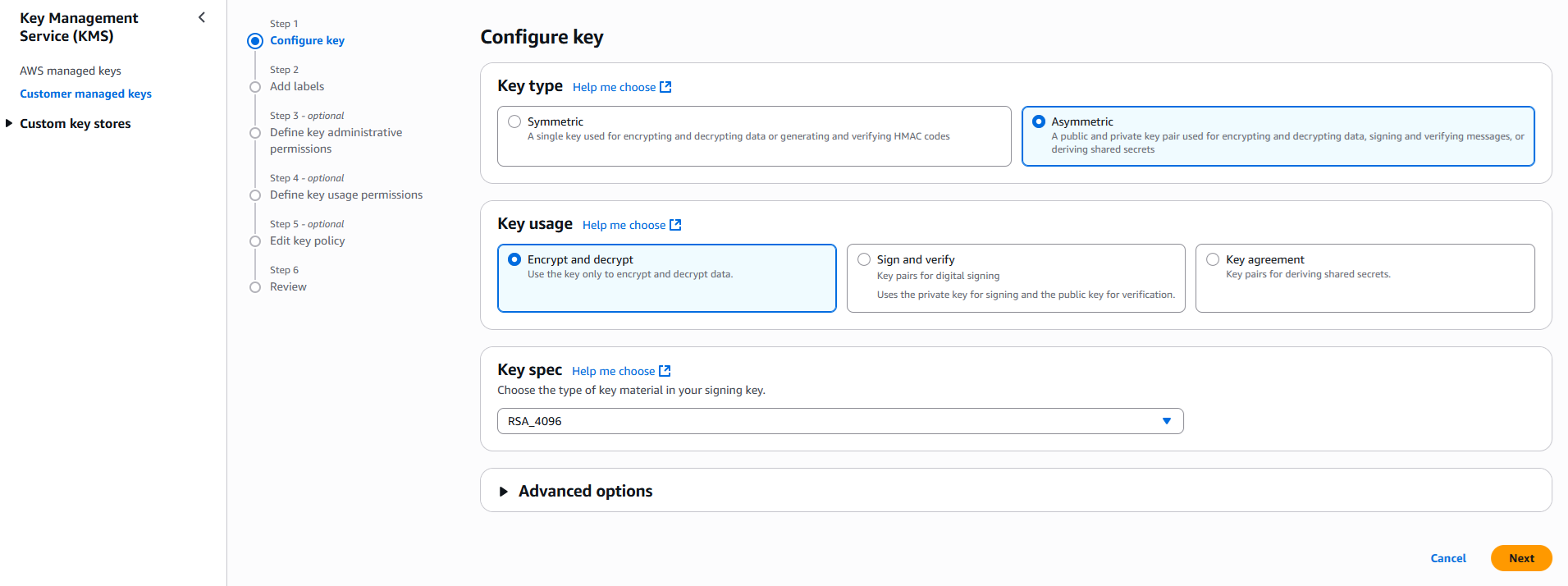

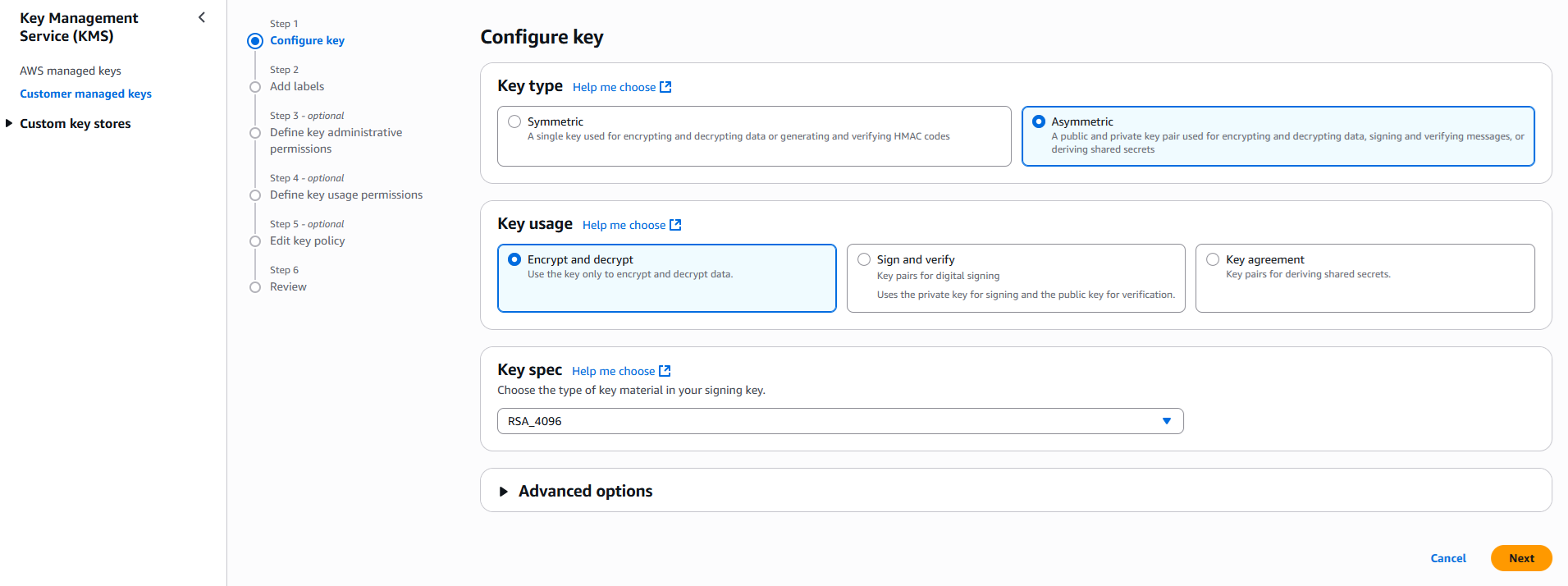

Create KMS Key

The Amazon Key Management Service (KMS) provides the ability for the Protegrity Serverless solution to encrypt and decrypt the Protegrity Security Policy.

Note

It is recommended to host the KMS key in a separate AWS sub-account. This allows dual control, separating the responsibility between the key administrator and the Protegrity Serverless account administrator.

To create the KMS key:

In the AWS sub-account where the KMS key will reside, select the region.

Navigate to Key Management Service > Create Key.

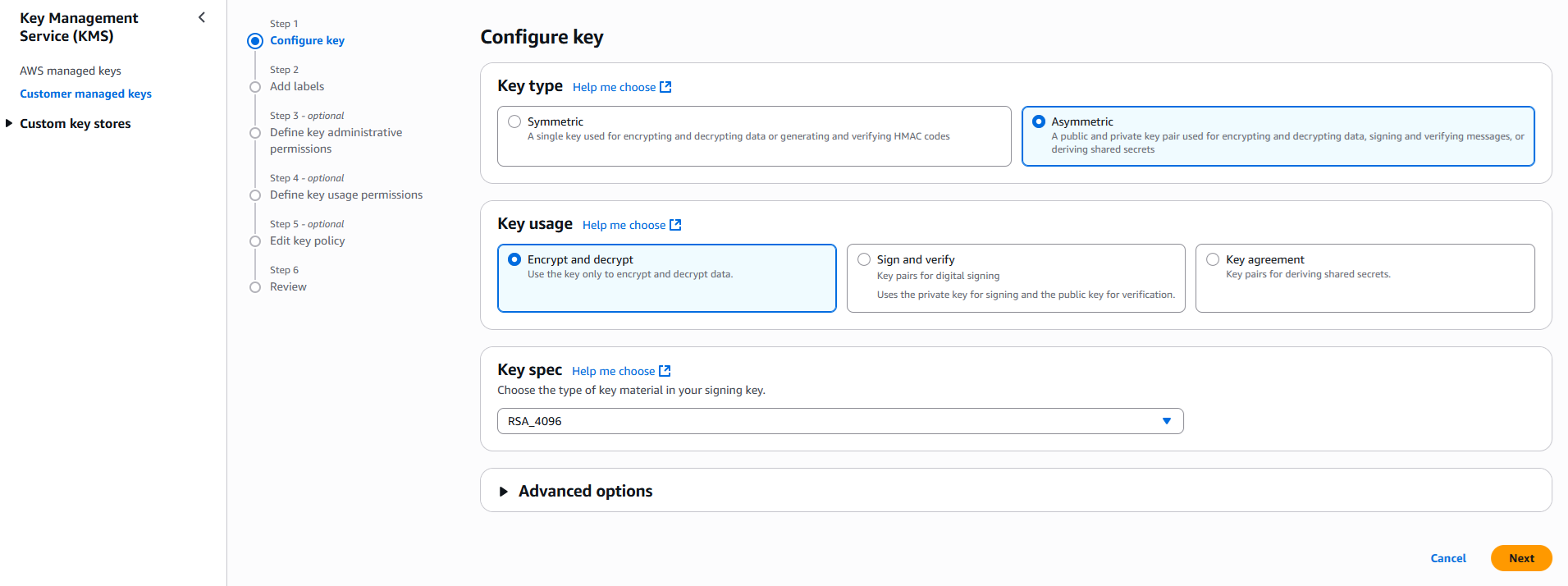

Configure the key settings:

- Key type: Asymmetric

- Key usage: Encrypt and decrypt

- Key spec: RSA_4096

- Click Next

Create an alias and an optional description, such as Protegrity-Serverless, and click Next.

Define the key administrative permissions by selecting the IAM user who will administer the key.

Note

It is recommended that the key administrator be different from the administrator of the Protegrity Serverless account.

Click Next.

Define the key usage permissions.

In Other AWS accounts, enter the AWS account id used for the Protegrity Serverless installation.

Continue on to create the key. If there is a concern that this permission is overly broad, you can return later to restrict access to the roles of the two Protegrity Serverless Lambda functions as principals. Click the key in the list to open it and record the ARN.

KMS Key ARN (AWS_KMS_KEY_ID): ___________________

Download the public key from the KMS key. Navigate to the key in KMS console, select the Public key tab, and click Download. Save the PEM file. This public key will be added to the ESA data store as an export key. Refer to Exporting Keys to Datastore for instructions on adding the public key to the data store.

Note

This step is not applicable for ESA versions lower than 10.2.

KMS Public Key PEM file: ___________________
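For teams that script key creation instead of using the console, the key settings above map onto the KMS `CreateKey` and `CreateAlias` calls. The following Python sketch assumes a boto3-style KMS client is passed in (for example, `boto3.client("kms")`); the function name, the alias value, and the description are illustrative. It does not configure the cross-account key usage permissions described in the console steps.

```python
def create_policy_key(kms_client, alias="alias/Protegrity-Serverless"):
    """Create an RSA_4096 encrypt/decrypt key plus an alias and return the key ARN.

    kms_client is expected to behave like a boto3 KMS client.
    """
    response = kms_client.create_key(
        KeySpec="RSA_4096",          # asymmetric key spec from the steps above
        KeyUsage="ENCRYPT_DECRYPT",  # "Encrypt and decrypt" in the console
        Description="Protegrity Serverless policy encryption key",
    )
    metadata = response["KeyMetadata"]
    kms_client.create_alias(AliasName=alias, TargetKeyId=metadata["KeyId"])
    return metadata["Arn"]
```

The returned ARN is the value to record as the KMS Key ARN (AWS_KMS_KEY_ID) in the cheat sheet.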

1.3.3 - Protect Service Installation

Protect Service Installation

The following sections install the Cloud Protect serverless Lambda function.

Preparation

Ensure that all the steps in Pre-Configuration are performed.

Log in to the AWS sub-account console where Protegrity will be installed.

Ensure that the required CloudFormation templates provided by Protegrity are available on your local computer.

Create Protect Lambda IAM Execution Policy

This task defines a policy used by the Protegrity Lambda function to write CloudWatch logs and access the KMS encryption key to decrypt the policy.

Perform the following steps to create the Lambda execution role and required policies:

From the AWS IAM console, select Policies > Create Policy.

Select the JSON tab and copy the following sample policy.

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Sid": "CloudWatchWriteLogs",
          "Effect": "Allow",
          "Action": [
            "logs:CreateLogGroup",
            "logs:CreateLogStream",
            "logs:PutLogEvents"
          ],
          "Resource": "*"
        },
        {
          "Sid": "KmsDecrypt",
          "Effect": "Allow",
          "Action": [
            "kms:Decrypt"
          ],
          "Resource": [
            "arn:aws:kms:*:*:key/*"
          ]
        }
      ]
    }

For the KMS policy, replace the Resource with the ARN of the KMS key created in a previous step.

Select Next, enter a policy name, for example, ProtegrityProtectLambdaPolicy, and click Create Policy. Record the policy name:
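As a small sketch of the substitution described here, the following Python generates the same policy document with the wildcard KMS resource replaced by a specific key ARN. The function name and the example ARN are placeholders, not values from a real account.

```python
import json


def build_protect_lambda_policy(kms_key_arn):
    """Return the sample execution policy with kms:Decrypt scoped to one key."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "CloudWatchWriteLogs",
                "Effect": "Allow",
                "Action": [
                    "logs:CreateLogGroup",
                    "logs:CreateLogStream",
                    "logs:PutLogEvents",
                ],
                "Resource": "*",
            },
            {
                "Sid": "KmsDecrypt",
                "Effect": "Allow",
                "Action": ["kms:Decrypt"],
                "Resource": [kms_key_arn],  # the recorded KMS Key ARN
            },
        ],
    }


# Placeholder ARN; use the ARN recorded during pre-configuration.
example_arn = "arn:aws:kms:us-west-2:111122223333:key/EXAMPLE-KEY-ID"
print(json.dumps(build_protect_lambda_policy(example_arn), indent=2))
```

The printed JSON can be pasted into the JSON tab of the IAM policy editor.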

ProtectLambdaPolicyName:__________________

Create Protect Lambda IAM Role

The following steps create the role to utilize the policy defined in Create Protect Lambda IAM Execution Policy.

To create the Protect Lambda IAM execution role:

From the AWS IAM console, select Roles > Create Role.

Select AWS Service > Lambda > Next.

In the list, search and select the policy created in Create Protect Lambda IAM Execution Policy.

Click Next.

Type the role name, for example, ProtegrityProtectRole.

Click Create role.

Record the role ARN.

Role ARN (LambdaExecutionRoleArn): ___________________

Install through CloudFormation

The following steps describe the deployment of the Lambda function.

Access CloudFormation and select the target AWS Region in the console.

Click Create Stack and choose With new resources.

Specify the template.

Select Upload a template file.

Upload the Protegrity-provided CloudFormation template called pty_protect_api_cf.json and click Next.

Specify the stack details. Enter a stack name.

Note

The stack name will be appended to all the services created by the template.

Enter the required parameters. All the values were generated in the pre-configuration steps.

| Parameter | Description | Default Value |
|---|---|---|
| ArtifactS3Bucket | The name of the S3 bucket created during the pre-configuration steps | |
| LambdaExecutionRoleArn | The ARN of the Lambda role created in the prior step | |
| MinLogLevel | The minimum log level for the protect function. Supported values: [off, severe, warning, info, config, all] | severe |
| AllowAssumeUser (Optional) | If 0 is set, the user in the request body will be ignored and the REST API authenticated user will be the acting user. Supported values: [0, 1] | 0 |
| LambdaAuthorization (Optional) | If "jwt" is set, then the Authorization header for JWT will be required in the AWS Protect Lambda request. Any other value is ignored and the effective policy user is taken from the request payload. Supported values: ["jwt", ""] | "" |
| jwtUsernameClaim (Optional) | The JWT claim with the username. Common claims: name, preferred_username, cognito:username. Also accepts an ordered list of claim names in JSON array format, e.g. ["username", "email"] | cognito:username |
| jwtVerify (Optional) | If 1 is set, then jwtSecretBase64 is required. Only applicable when LambdaAuthorization is set to "jwt". Supported JWT algorithms are: RS256, RS384, RS512. While algorithms HS256, HS384, HS512 are supported, they are not recommended for use. | 0 |
| jwtSecretBase64 (Optional) | Required when jwtVerify is set to 1 and Authorization is set to "jwt". The secret must be provided in base64 encoding. It is recommended to only use a public key (asymmetric algorithm). | "" |

The log forwarder parameters can be provided later, after the log forwarder is deployed. If you are not planning to deploy the log forwarder, you can skip this step.

| Parameter | Description |
|---|---|
| KinesisLogStreamArn | The ARN of the AWS Kinesis stream where audit logs will be sent for aggregation |
| AuditLogFlushInterval | Time interval in seconds used to accumulate audit logs before sending to Kinesis. Default value: 30. See Log Forwarder Performance section for more details. |

Click Next with defaults to complete CloudFormation.

After CloudFormation is completed, select the Outputs tab in the stack.
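If the stack is created from a script rather than the console, the parameters can be assembled in the `ParameterKey`/`ParameterValue` list form that the CloudFormation `CreateStack` API expects. The sketch below only builds and sanity-checks that list; the function name is hypothetical, and the single check shown (jwtSecretBase64 required when jwtVerify is 1) mirrors the parameter descriptions in this section.

```python
def build_stack_parameters(artifact_bucket, role_arn, **optional):
    """Build a CloudFormation-style parameter list for the protect stack."""
    params = {
        "ArtifactS3Bucket": artifact_bucket,
        "LambdaExecutionRoleArn": role_arn,
        "MinLogLevel": "severe",  # documented default
        **optional,               # any optional parameters override or extend
    }
    if str(params.get("jwtVerify", "0")) == "1" and not params.get("jwtSecretBase64"):
        raise ValueError("jwtSecretBase64 is required when jwtVerify is 1")
    return [
        {"ParameterKey": key, "ParameterValue": str(value)}
        for key, value in params.items()
    ]


# Placeholder bucket and role values, for illustration only.
parameters = build_stack_parameters(
    "protegrity-install.us-west-2.example.com",
    "arn:aws:iam::111122223333:role/ProtegrityProtectRole",
    LambdaAuthorization="jwt",
)
for item in parameters:
    print(item)
```

The resulting list could be passed as the `Parameters` argument when creating the stack with an AWS SDK, together with the Protegrity-provided template.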

Record the following values:

ApiGatewayId: ________________________________

ProtectFunctionName: __________________________

ProtectFunctionProductionAlias: __________________________

ProtectLayerName: _____________________________

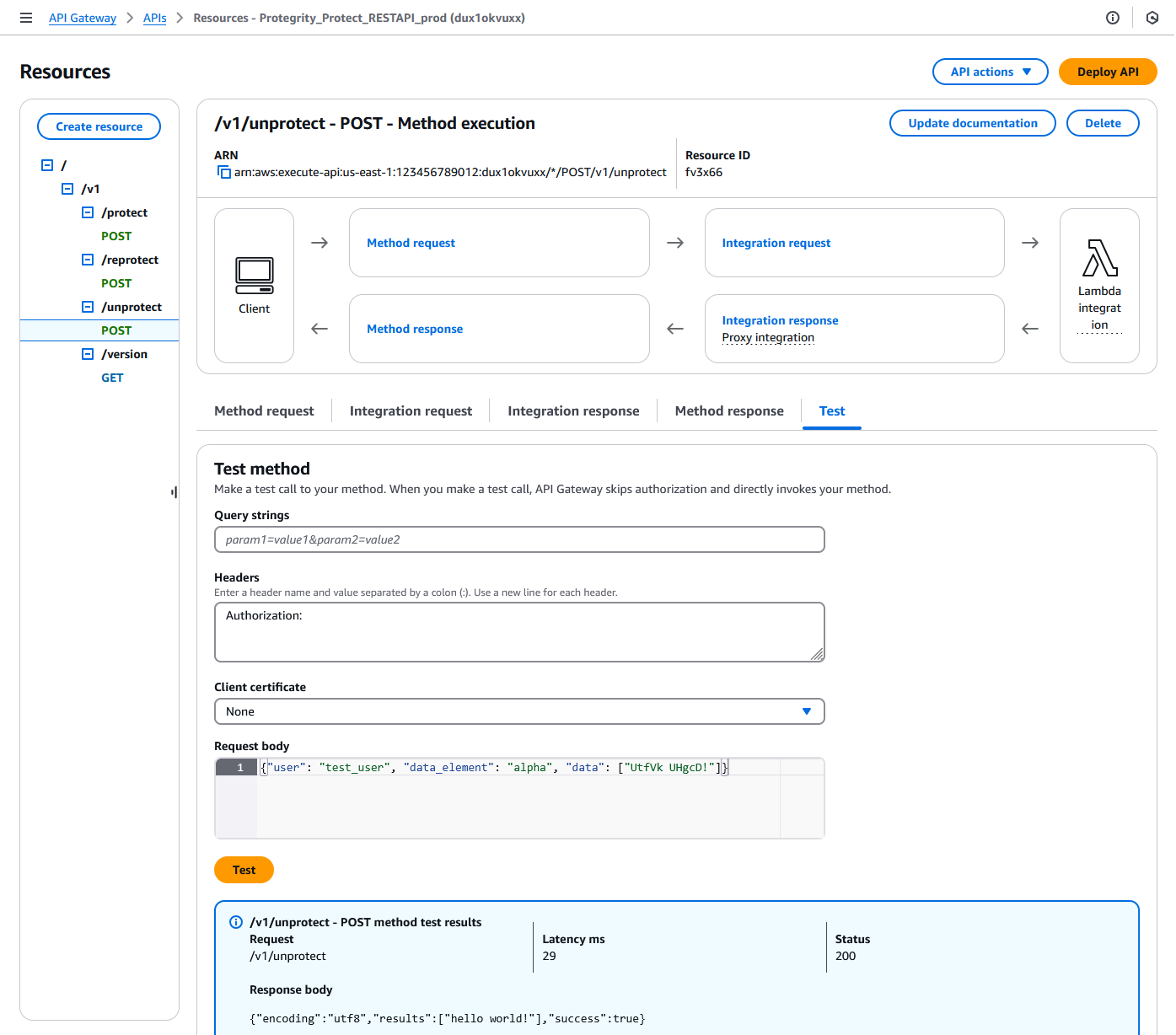

Test Connectivity

Perform the following steps to verify if the API Gateway is working correctly with the Protegrity product.

In the AWS console, access API Gateway.

Search for the API (CloudFormation output ApiGatewayId value).

Select Resources > /v1/unprotect POST.

Click the Test tab.

Provide the following input in Headers.

Authorization:

Provide the following input in Request body.

    {"user": "test_user", "data_element": "alpha", "data": ["UtfVk UHgcD!"]}

Click Test.

Verify the response Status is 200 and the Response Body is as follows:

    {"encoding": "utf8", "results": ["hello world!"], "success": true}

For example:

Troubleshooting

| Error | Action |
|---|---|
| 5xx error | If this step fails, check the console for a meaningful error. |

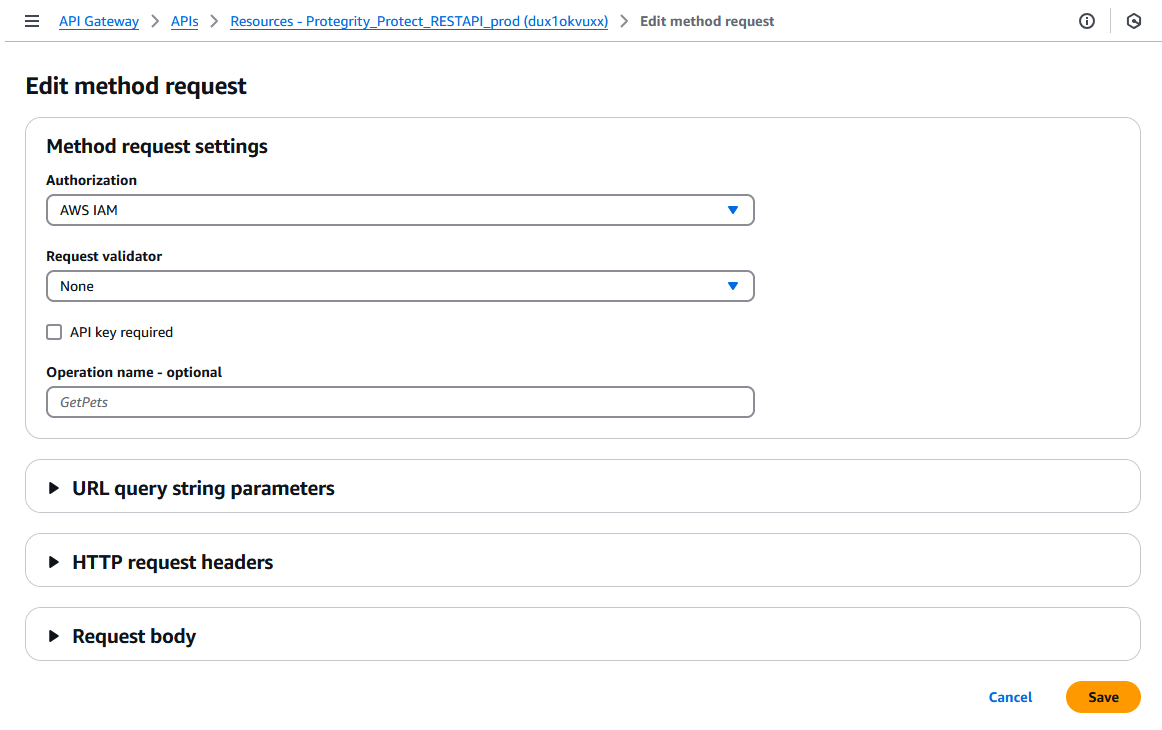
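The connectivity check above can also be expressed as a small assertion on the response. The following Python sketch validates a status code and response body of the shape shown in the test; the function name is hypothetical, and obtaining the actual response (through the console test or an HTTP client) is outside the sketch.

```python
import json


def check_unprotect_response(status_code, body_text, expected_results):
    """Return True when the response matches the successful shape shown above."""
    if status_code != 200:
        return False
    body = json.loads(body_text)
    return body.get("success") is True and body.get("results") == expected_results


# Response body copied from the connectivity test in this section.
sample_body = '{"encoding": "utf8", "results": ["hello world!"], "success": true}'
print(check_unprotect_response(200, sample_body, ["hello world!"]))
```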

Setting up Authentication

This step describes how to set up AWS API Gateway authorization.

By default, the API Gateway is configured to use AWS_IAM Authorization.

- From AWS console access API Gateway.

- If you are using AWS API Gateway Authorizer, ensure that the Authorizer is configured in Authorizers.

- Go to Resources > /v1/protect POST > Method Request tab and click the Edit button.

- Select the authorization type from the Authorization dropdown.

- Click the Save button.

- Click the Deploy API button to deploy to the pty stage.

Protect Lambda Configuration

After the CloudFormation stack is deployed, the Protector Lambda can be reconfigured based on the authentication selected in the previous step. From your AWS console, navigate to Lambda and select the following function: Protegrity_Protect_RESTAPI_<STACK_NAME>. Scroll down to the Environment variables section, select Edit, and replace the entries based on the following information.

| Environment Variable | Description | Notes |
|---|---|---|
| authorization | If "jwt" is set, the Authorization header with JWT will be required in the AWS Protect Lambda request. Any other value is ignored and the effective policy user is taken from the request payload. Supported Values: ["jwt", ""] | If "jwt" is set, any request without a valid JWT in the Authorization header will result in an error from API Gateway: 502 Bad Gateway. Default Value: "" |
| allow_assume_user | If 0 is set, API Gateway user will not be used, and the effective user is the JWT user. Supported Values: [0, 1] | Applicable when authorization is set to "jwt". Default Value: 0 |
| jwt_user_claim | The JWT claim with the username. Common claims: name, preferred_username, cognito:username | Applicable when authorization is set to "jwt". Default Value: cognito:username |
| jwt_verify | If 1 is set, jwt_secret_base64 is required. Only applicable when authorization is set to "jwt". Supported JWT algorithms: RS256, RS384, RS512. While algorithms HS256, HS384, HS512 are supported, they are not recommended for use. | Applicable when authorization is set to "jwt" |
| jwt_secret_base64 | Required when jwt_verify is set to 1 and Authorization is set to "jwt". The secret must be provided in base64 encoding. It is recommended to only use a public key (asymmetric algorithm). | Applicable when authorization is set to "jwt" and jwt_verify is set to 1 |
| service_user | If set, it will be used as the effective policy user. | service_user should be used when the Cloud API should always run as a single service user or account no matter what user is in the request. service_user will always take priority over users in the payload and in the JWT header. |
| USERNAME_REGEX | If set, the effective policy user will be extracted from the user in the request using the supplied regular expression. | See Configuring Regular Expression to Extract Policy Username to learn how to extract the username from the request |
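The precedence between these settings can be summarized in a short Python sketch. This is an illustration of the rules as described in the table, not the product's implementation: the function name is hypothetical, USERNAME_REGEX handling is omitted, and the branch for `allow_assume_user=1` (preferring the payload user when one is supplied) is an assumption.

```python
def effective_policy_user(payload_user, jwt_user, *, authorization="",
                          allow_assume_user=0, service_user=None):
    """Resolve which user the security policy is evaluated for (illustrative)."""
    if service_user:
        # service_user always takes priority over payload and JWT users.
        return service_user
    if authorization != "jwt":
        # Without JWT authorization, the user comes from the request payload.
        return payload_user
    if allow_assume_user == 1 and payload_user:
        # Assumption: with allow_assume_user=1 the payload user may be assumed.
        return payload_user
    # allow_assume_user=0: the payload user is ignored and the JWT user is used.
    return jwt_user


print(effective_policy_user("test_user", "jwt_user", authorization="jwt"))
```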

1.3.4 - Policy Agent Installation

The following sections describe how to install the Policy Agent. The Policy Agent polls the ESA and deploys the policy to Protegrity Serverless as a static resource. Some of the installation steps are not required for the operation of the software but are recommended for establishing a secure environment. Contact Protegrity Professional Services for further guidance on configuration alternatives in the Cloud.

Important

If you are deploying Policy Agent with Protegrity Provisioned Cluster (PPC), refer to the PPC Appendix: Policy Agent Certificate and Key Guidance for specific instructions on obtaining and using the CA certificate and datastore key fingerprint. The steps in this section are specific to ESA and may differ for PPC. Be sure to follow the PPC documentation for the most accurate and up-to-date setup guidance.

ESA Server

The Policy Agent Lambda requires the ESA server to be running and accessible on TCP port 443.

Note down ESA IP address:

ESA IP Address (EsaIpAddress): ___________________

Certificates on ESA

Note

If you are deploying Policy Agent with Protegrity Provisioned Cluster (PPC), see PPC Appendix: Policy Agent Certificate Guidance for specific instructions on obtaining and using the CA certificate. The steps in this section are specific to ESA and may differ for PPC.

Whether your ESA is configured with the default self-signed certificate or your corporate CA certificate, the Policy Agent can validate the authenticity of the ESA connection using the CA certificate. The process for both scenarios is the same:

- Obtain CA certificate

- Convert CA certificate to a value accepted by Policy Agent

- Provide converted CA certificate value to Policy Agent

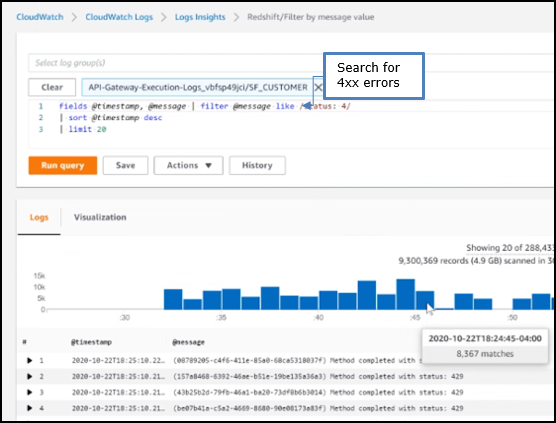

To obtain self-signed CA certificate from ESA:

Log in to ESA Web UI.

Select Settings > Network > Manage Certificates.

Hover over Server Certificate and click on download icon to download the CA certificate.

To convert downloaded CA certificate to a value accepted by Policy Agent, open the downloaded PEM file in text editor and replace all new lines with escaped new line: \n.

To escape new lines from command line, use one of the following commands depending on your operating system:

Linux Bash:

awk 'NF {printf "%s\\n",$0;}' ProtegrityCA.pem > output.txt

Windows PowerShell:

(Get-Content '.\ProtegrityCA.pem') -join '\n' | Set-Content 'output.txt'

Record the certificate content with new lines escaped.

ESA CA Server Certificate (EsaCaCert): ___________________

This value will be used to set PTY_ESA_CA_SERVER_CERT or PTY_ESA_CA_SERVER_CERT_SECRET Lambda variable in section Policy Agent Lambda Configuration

For more information about ESA certificate management refer to Certificate Management Guide in ESA documentation.
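The newline escaping described above can also be done in Python. The following is a minimal sketch that mirrors the awk command (blank lines are skipped and every remaining line is followed by a literal `\n`); the function name `escape_pem_newlines` is hypothetical and not part of any Protegrity tooling.

```python
def escape_pem_newlines(pem_text: str) -> str:
    """Return the PEM content on a single line, with line breaks written as a literal backslash-n."""
    # Skip blank lines, as the awk command does with its NF condition.
    lines = [line for line in pem_text.splitlines() if line.strip()]
    # Append a backslash followed by 'n' after every remaining line.
    return "".join(line + "\\n" for line in lines)


if __name__ == "__main__":
    # Placeholder certificate body, for illustration only.
    sample = "-----BEGIN CERTIFICATE-----\nMIIB...\n-----END CERTIFICATE-----\n"
    print(escape_pem_newlines(sample))
```

The printed value is what would be recorded as EsaCaCert.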

Identify or Create a new VPC

Establish a VPC where the Policy Agent will be hosted. This VPC will need connectivity to the ESA. The VPC should be in the same account and region established in Pre-Configuration.

VPC name: ___________________

VPC Subnet Configuration

Identify or create a subnet in the VPC to which the Lambda function will be connected. It is recommended to use a private subnet.

Subnet name: ___________________

NAT Gateway For ESA Hosted Outside AWS Network

If ESA server is hosted outside of the AWS Cloud network, the VPC configured for Lambda function must ensure additional network configuration is available to allow connectivity with ESA. For instance if ESA has a public IP, the Lambda function VPC must have public subnet with a NAT server to allow routing traffic outside of the AWS network. A Routing Table and Network ACL may need to be configured for outbound access to the ESA as well.

VPC Endpoints Configuration

If an internal VPC was created, then add VPC Endpoints, which will be used by the Policy Agent to access AWS services. Policy Agent needs access to the following AWS services:

| Type | Service name |
|---|---|
| Interface | com.amazonaws.{REGION}.secretsmanager |
| Interface | com.amazonaws.{REGION}.kms |
| Gateway | com.amazonaws.{REGION}.s3 |
| Interface | com.amazonaws.{REGION}.lambda |
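To avoid typing mistakes when creating the endpoints, the service names in the table can be generated for a given region. This is a small sketch; the helper name `endpoint_service_names` is hypothetical, and the region value in the usage line is only an example.

```python
# Endpoint types and service suffixes taken from the table above.
POLICY_AGENT_ENDPOINTS = [
    ("Interface", "secretsmanager"),
    ("Interface", "kms"),
    ("Gateway", "s3"),
    ("Interface", "lambda"),
]


def endpoint_service_names(region: str) -> list[tuple[str, str]]:
    """Return (endpoint type, service name) pairs for the given AWS region."""
    return [
        (kind, f"com.amazonaws.{region}.{service}")
        for kind, service in POLICY_AGENT_ENDPOINTS
    ]


if __name__ == "__main__":
    for kind, name in endpoint_service_names("us-east-1"):
        print(f"{kind:<10} {name}")
```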

Identify or Create Security Groups

Policy Agent and cloud-based ESA appliance use AWS security groups to control traffic that is allowed to leave and reach them. Policy Agent runs on schedule and is mostly concerned with allowing traffic out of itself to ESA and AWS services it depends on. ESA runs most of the time and it must allow Policy Agent to connect to it.

Policy Agent security group must allow outbound traffic using the rules described in the table below. To edit the security group, navigate to VPC > Security Groups > Policy Agent Security Group configuration.

| Type | Protocol | Port Range | Destination | Reason |

|---|---|---|---|---|

| Custom TCP | TCP | 443 | Policy Agent Lambda SG | ESA Communication |

| HTTPS | TCP | 443 | Any | AWS Services |

Record Policy Agent security group ID:

Policy Agent Security Group Id: ___________________

Policy Agent will reach out to ESA on port 443. Create following inbound security group rule for cloud-based ESA appliance to allow connections from Policy Agent:

| Type | Protocol | Port Range | Source |

|---|---|---|---|

| Custom TCP | TCP | 443 | Policy Agent Lambda SG |
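The inbound rule above can be expressed as the `IpPermissions` structure that the boto3 EC2 client accepts in `authorize_security_group_ingress`. The sketch below only builds that structure; the function name and the security group ID in the usage line are hypothetical, and applying the rule requires boto3 and suitable credentials.

```python
def esa_ingress_permissions(policy_agent_sg_id: str) -> list[dict]:
    """Build an ingress rule allowing TCP 443 from the Policy Agent security group."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": 443,
            "ToPort": 443,
            # Source is the Policy Agent Lambda security group, as in the table.
            "UserIdGroupPairs": [{"GroupId": policy_agent_sg_id}],
        }
    ]


if __name__ == "__main__":
    # "sg-0123456789abcdef0" is a placeholder, not a real security group.
    print(esa_ingress_permissions("sg-0123456789abcdef0"))
```

The result would be passed as `IpPermissions` together with the `GroupId` of the ESA security group.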

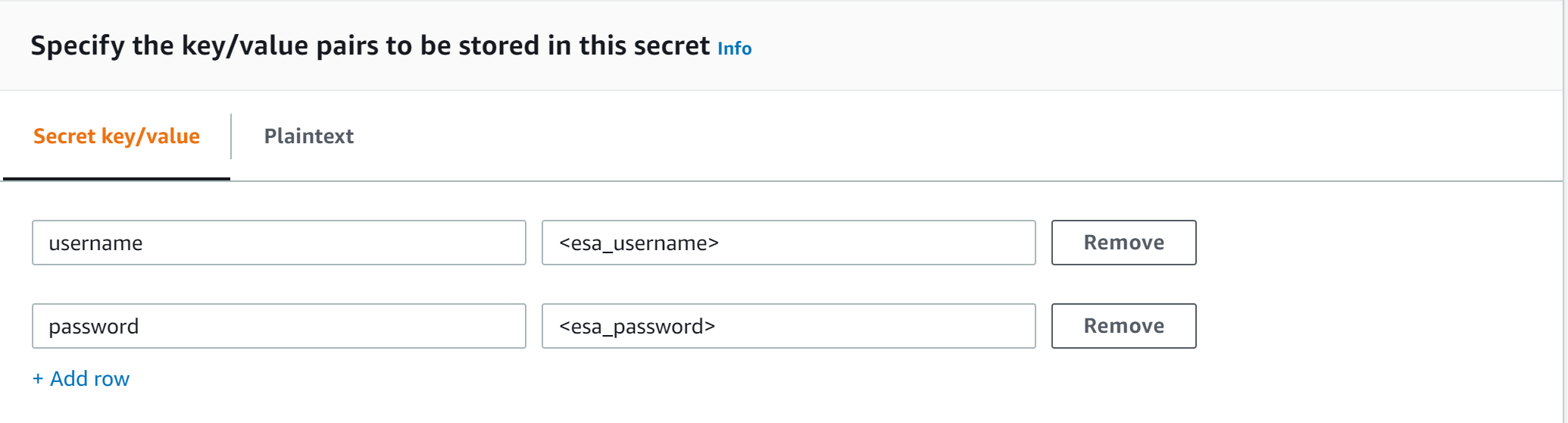

Creating ESA Credentials

Policy Agent Lambda requires ESA credentials to be provided as one of the three options.

Note

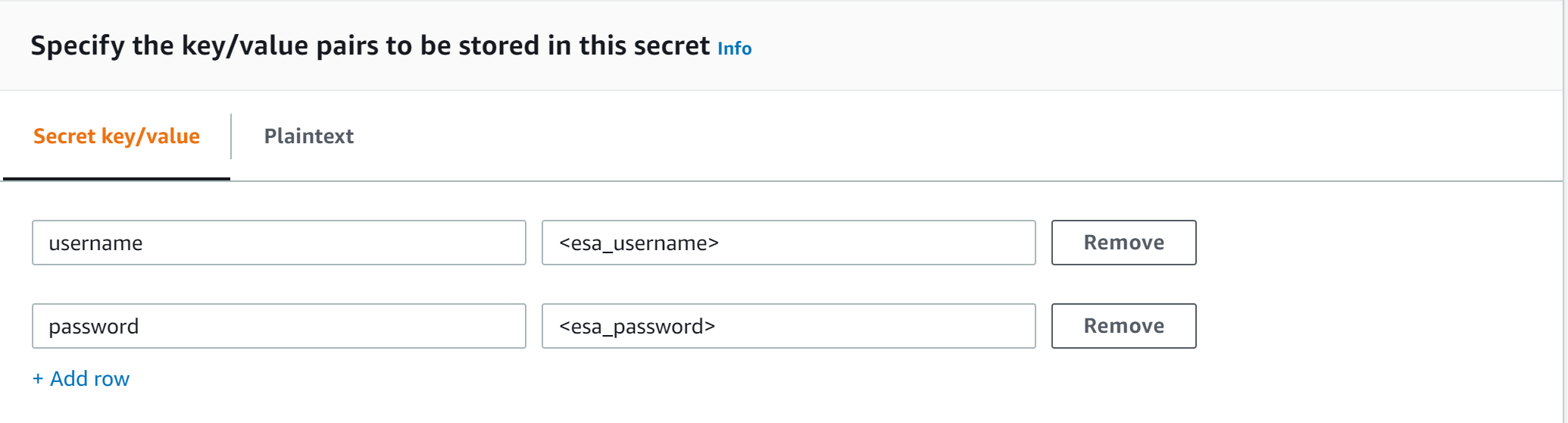

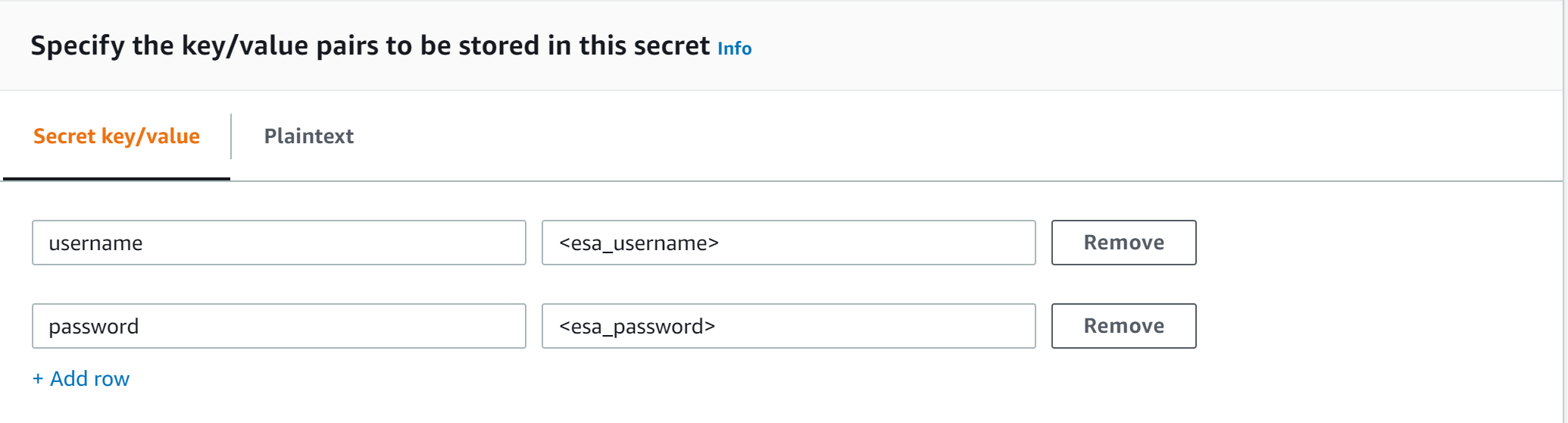

The ESA user requires a role with the Export Resilient Package and Can Create JWT Token permissions. Security Administrator is one of the predefined roles that contains these permissions; however, for separation of duties, it is recommended to create a custom role.

Option 1: Secrets Manager

Create a Secrets Manager secret with the ESA username and password:

From the AWS Secrets Manager Console, select Store New Secret.

Select Other Type of Secrets.

Specify the username and password key value pair.

Select the encryption key or leave default AWS managed key.

Specify the Secret Name and record it.

ESA Credentials Secret Name: __________________
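When the secret is created programmatically instead of through the console, the same key/value pair is supplied as a JSON string. This is a minimal sketch assuming the keys are named `username` and `password`, as in the console steps above; the function name is hypothetical.

```python
import json


def esa_credentials_secret(username: str, password: str) -> str:
    """Return the JSON secret string holding the ESA username and password."""
    return json.dumps({"username": username, "password": password})


if __name__ == "__main__":
    # Placeholder values for illustration; do not hardcode real credentials.
    print(esa_credentials_secret("esa_user", "example-password"))
```

The returned string can be used as the `SecretString` argument of the boto3 Secrets Manager `create_secret` call.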

Option 2: KMS Encrypted Password

ESA password is encrypted with AWS KMS symmetric key.

Create AWS KMS symmetric key which will be used to encrypt ESA password. See Create KMS Key for instructions on how to create KMS symmetric key using AWS console.

Record KMS Key ARN.

ESA PASSWORD KMS KEY ARN: __________________

Run AWS CLI command to encrypt ESA password. Below you can find sample Linux aws cli command. Replace <key_arn> with KMS symmetric key ARN.

aws kms encrypt --key-id <key_arn> --plaintext $(echo -n '<esa_password>' | base64)

Sample output:

{ "CiphertextBlob": "esa_encrypted_password", "KeyId": "arn:aws:kms:region:aws_account:key/key_id", "EncryptionAlgorithm": "SYMMETRIC_DEFAULT" }

Record ESA username and encrypted password.

ESA USERNAME: __________________

ESA ENCRYPTED PASSWORD: __________________
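The encrypted password to record is the `CiphertextBlob` field of the command output. The sketch below extracts it from the JSON printed by the AWS CLI and checks that it is valid base64; the function name is hypothetical.

```python
import base64
import json


def ciphertext_from_cli_output(cli_output: str) -> str:
    """Extract the base64 CiphertextBlob from `aws kms encrypt` JSON output."""
    blob = json.loads(cli_output)["CiphertextBlob"]
    # Raises binascii.Error if the value is not valid base64.
    base64.b64decode(blob, validate=True)
    return blob


if __name__ == "__main__":
    # Fabricated sample output for illustration; not a real ciphertext.
    sample = json.dumps(
        {
            "CiphertextBlob": base64.b64encode(b"example").decode("ascii"),
            "KeyId": "arn:aws:kms:region:aws_account:key/key_id",
            "EncryptionAlgorithm": "SYMMETRIC_DEFAULT",
        }
    )
    print(ciphertext_from_cli_output(sample))
```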

Option 3: Custom AWS Lambda function

With this option ESA username and password are returned by a custom AWS Lambda function. This method may be used to get the username and password from external vaults.

Create AWS Lambda in any AWS supported runtime.

There is no input needed.

The Lambda function must return the following response schema.

response:
  type: object
  properties:
    username: string
    password: string

For example,

example output: {"username": "admin", "password": "Password1234"}

Sample AWS Lambda function in Python:

import json

def lambda_handler(event, context):
    return {"username": "admin", "password": "password1234"}

Warning

Protegrity does not recommend hardcoding ESA username and password in the clear.

Record the Lambda name:

Custom AWS lambda for ESA credentials: _______________
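Before wiring a custom credentials Lambda into the Policy Agent, its response can be checked against the schema above. This is a hedged sketch: the function name is hypothetical, and rejecting empty strings is an assumption that the schema itself does not state.

```python
def validate_credentials_response(response) -> dict:
    """Check that a response has string 'username' and 'password' fields."""
    if not isinstance(response, dict):
        raise ValueError("response must be a JSON object")
    for key in ("username", "password"):
        value = response.get(key)
        # Assumption: an empty value is treated as invalid.
        if not isinstance(value, str) or not value:
            raise ValueError(f"'{key}' must be a non-empty string")
    return response


if __name__ == "__main__":
    print(validate_credentials_response({"username": "admin", "password": "example"}))
```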

Create Agent Lambda IAM Policy

Follow the steps below to create Lambda execution policies.

Create Agent Lambda IAM policy

From AWS IAM console, select Policies > Create Policy.

Select JSON tab and copy the following snippet.

{
  "Version": "2012-10-17",
  "Statement": [
    { "Sid": "EC2ModifyNetworkInterfaces", "Effect": "Allow", "Action": [ "ec2:CreateNetworkInterface", "ec2:DescribeNetworkInterfaces", "ec2:DeleteNetworkInterface" ], "Resource": "*" },
    { "Sid": "CloudWatchWriteLogs", "Effect": "Allow", "Action": [ "logs:CreateLogGroup", "logs:CreateLogStream", "logs:PutLogEvents" ], "Resource": "*" },
    { "Sid": "LambdaUpdateFunction", "Effect": "Allow", "Action": [ "lambda:UpdateFunctionConfiguration" ], "Resource": [ "arn:aws:lambda:*:*:function:*" ] },
    { "Sid": "LambdaReadLayerVersion", "Effect": "Allow", "Action": [ "lambda:GetLayerVersion", "lambda:ListLayerVersions" ], "Resource": "*" },
    { "Sid": "LambdaDeleteLayerVersion", "Effect": "Allow", "Action": "lambda:DeleteLayerVersion", "Resource": "arn:aws:lambda:*:*:layer:*:*" },
    { "Sid": "LambdaPublishLayerVersion", "Effect": "Allow", "Action": "lambda:PublishLayerVersion", "Resource": "arn:aws:lambda:*:*:layer:*" },
    { "Sid": "S3GetObject", "Effect": "Allow", "Action": [ "s3:GetObject" ], "Resource": "arn:aws:s3:::*/*" },
    { "Sid": "S3PutObject", "Effect": "Allow", "Action": [ "s3:PutObject" ], "Resource": "arn:aws:s3:::*/*" },
    { "Sid": "KmsEncrypt", "Effect": "Allow", "Action": [ "kms:GetPublicKey" ], "Resource": [ "arn:aws:kms:*:*:key/*" ] },
    { "Sid": "SecretsManagerGetSecret", "Effect": "Allow", "Action": [ "secretsmanager:GetSecretValue" ], "Resource": [ "arn:aws:secretsmanager:*:*:secret:*" ] },
    { "Sid": "LambdaGetConfiguration", "Effect": "Allow", "Action": [ "lambda:GetFunctionConfiguration" ], "Resource": [ "arn:aws:lambda:*:*:function:*" ] }
  ]
}

Replace wildcard * with the region, account, and resource name information where required.

This step is required if KMS is used to encrypt ESA password.

Add policy entry below. Replace ESA PASSWORD KMS KEY ARN with the value recorded in Option 2: KMS Encrypted Password.

{ "Sid": "KmsDecryptEsaPassword", "Effect": "Allow", "Action": [ "kms:Decrypt" ], "Resource": [ "ESA PASSWORD KMS KEY ARN" ] }

Select Next, type in the policy name, and select Create Policy. Record the policy name:

Policy Name: ___________________
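When replacing wildcards in the policy, it can help to list the statements whose resources still contain `*`. The following is an illustrative sketch; the function name is hypothetical. Some statements may reasonably keep a wildcard, so the output is a review list rather than a list of errors.

```python
def wildcard_resources(policy: dict) -> list[tuple[str, str]]:
    """Return (Sid, resource) pairs for every resource that still contains '*'."""
    findings = []
    for statement in policy.get("Statement", []):
        resources = statement.get("Resource", [])
        # "Resource" may be a single string or a list of strings.
        if isinstance(resources, str):
            resources = [resources]
        for resource in resources:
            if "*" in resource:
                findings.append((statement.get("Sid", ""), resource))
    return findings


if __name__ == "__main__":
    sample_policy = {
        "Statement": [
            {"Sid": "LambdaUpdateFunction", "Resource": ["arn:aws:lambda:*:*:function:*"]},
            {"Sid": "Scoped", "Resource": "arn:aws:lambda:us-east-1:account:function:function_name"},
        ]
    }
    for sid, resource in wildcard_resources(sample_policy):
        print(f"{sid}: {resource}")
```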

Create Agent Lambda IAM Role

Perform the following steps to create Agent Lambda execution IAM role.

To create agent Lambda IAM role:

From AWS IAM console, select Roles > Create Role.

Select AWS Service > Lambda > Next.

Select the policy created in Create Agent Lambda IAM policy.

Proceed to Name, Review and Create.

Type the role name, for example, ProtegrityAgentRole and click Confirm.

Select Create role.

Record the role ARN.

Agent Lambda IAM Execution Role Name: ___________________

Corporate Firewall Configuration

If an on-premise firewall is used, then the firewall must allow access from the NAT Gateway IP to the ESA via port 443.

CloudFormation Installation

Create the Policy Agent in the VPC using the CloudFormation script provided by Protegrity.

Access the CloudFormation service.

Select the target installation region.

Create a stack with new resources.

Upload the Policy Agent CloudFormation template (file name: pty_agent_cf.json).

Specify the following parameters for Cloud Formation:

| Parameter | Description | Note |
|---|---|---|
| VPC | VPC where the Policy Agent will be hosted | Identify or Create a new VPC |
| Subnet | Subnet where the Policy Agent will be hosted | VPC Subnet Configuration |
| PolicyAgentSecurityGroupId | Security Group Id, which allows communication between the Policy Agent and the ESA | Identify or Create Security Groups |
| LambdaExecutionRoleArn | Agent Lambda IAM execution role ARN allowing access to the S3 bucket, KMS encryption Key, Lambda and Lambda Layer | Create Agent Lambda IAM Role |
| ArtifactS3Bucket | S3 bucket name with deployment package for the Policy Agent | Use S3 Bucket name recorded in Create S3 bucket for Installing Artifacts |
| CreateCRONJob | Set to True to create a CloudWatch schedule for the agent to run. | Default: False |
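If the stack is created through the API rather than the console, the values recorded in earlier steps can be assembled into the `Parameters` list that the boto3 CloudFormation `create_stack` call expects. This is a sketch under assumptions: the helper name is hypothetical, and treating every parameter except CreateCRONJob as mandatory is an assumption, since the table does not mark required fields.

```python
# Parameter names taken from the table above.
REQUIRED_PARAMETERS = (
    "VPC",
    "Subnet",
    "PolicyAgentSecurityGroupId",
    "LambdaExecutionRoleArn",
    "ArtifactS3Bucket",
)


def stack_parameters(values: dict, create_cron_job: bool = False) -> list[dict]:
    """Build the CloudFormation Parameters list from recorded values."""
    missing = [name for name in REQUIRED_PARAMETERS if not values.get(name)]
    if missing:
        raise ValueError("Missing values for: " + ", ".join(missing))
    parameters = [
        {"ParameterKey": name, "ParameterValue": values[name]}
        for name in REQUIRED_PARAMETERS
    ]
    parameters.append(
        {"ParameterKey": "CreateCRONJob", "ParameterValue": "True" if create_cron_job else "False"}
    )
    return parameters
```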

Policy Agent Lambda Configuration

After the CloudFormation stack is deployed, the Policy Agent Lambda must be configured with parameters recorded in earlier steps. From your AWS Console, navigate to lambda and select the following Lambda.

Protegrity_Agent_<STACK_NAME>

Select the Configuration tab and scroll down to the Environment variables section. Select Edit and replace all entries with the actual values.

Parameter | Description | Notes |

|---|---|---|

PTY_ESA_IP | ESA IP address or hostname | |

PTY_ESA_CA_SERVER_CERT | ESA self-signed CA certificate or your corporate CA certificate used by Policy Agent Lambda to ensure ESA is the trusted server. | Recorded in step Certificates on ESA. Note: For PPC deployments, see PPC Appendix: Policy Agent Certificate Guidance for details on obtaining and using the CA certificate. In case ESA is configured with publicly signed certificates, the PTY_ESA_CA_SERVER_CERT configuration will be ignored. |

PTY_ESA_CA_SERVER_CERT_SECRET | This configuration option fulfills the same function as PTY_ESA_CA_SERVER_CERT but supports larger configuration values, making it the recommended choice. The value should specify the name of the AWS Secrets Manager secret containing the ESA self-signed CA certificate. The secret value should be set to JSON with a "PTY_ESA_CA_SERVER_CERT" key whose value is the PEM-formatted CA certificate content. | Recorded in step Certificates on ESA. Note: For PPC deployments, see PPC Appendix: Policy Agent Certificate Guidance for details on obtaining and using the CA certificate. In case ESA is configured with publicly signed certificates, the PTY_ESA_CA_SERVER_CERT_SECRET configuration will be ignored. When both PTY_ESA_CA_SERVER_CERT and PTY_ESA_CA_SERVER_CERT_SECRET are configured, PTY_ESA_CA_SERVER_CERT_SECRET takes precedence. |

PTY_ESA_CREDENTIALS_SECRET | ESA username and password (encrypted value by AWS Secrets Manager) | |

PTY_DATASTORE_KEY | ESA policy datastore public key fingerprint (64 char long) e.g. 123bff642f621123d845f006c6bfff27737b21299e8a2ef6380aa642e76e89e5. | Note: This configuration is not applicable for ESA versions lower than 10.2. The export key is the public part of an asymmetric key pair created in Create KMS Key. A user with Security Officer permissions adds the public key to the data store in ESA via Policy Management > Data Stores > Export Keys. The fingerprint can then be copied using the Copy Fingerprint icon next to the key. Refer to Exporting Keys to Datastore for details. Note: For PPC deployments, see PPC Appendix: Policy Agent Certificate and Key Guidance for details on obtaining and using the datastore key fingerprint. |

AWS_KMS_KEY_ID | KMS key id or full ARN e.g. arn:aws:kms:us-west-2:112233445566:key/bfb6c4fb-509a-43ac-b0aa-82f1ca0b52d3 | |

AWS_POLICY_S3_BUCKET | S3 bucket where the encrypted policy will be written | S3 bucket of your choice |

AWS_POLICY_S3_FILENAME | Filename of the encrypted policy stored in S3 bucket | Default: protegrity-policy.zip |

AWS_PROTECT_FN_NAME | Comma separated list of Protect function names or ARNs | ProtectFunctionName(s), recorded in CloudFormation Installation |

DISABLE_DEPLOY | This flag can be either 1 or 0. If set to 1, then the agent will not update PTY_PROTECT lambda with the newest policy. Else, the policy will be saved in the S3 bucket and deployed to the Lambda Layer | Default: 0 |

AWS_POLICY_LAYER_NAME | Lambda layer used to store the Protegrity policy used by the PTY_PROTECT function | |

POLICY_LAYER_RETAIN | Number of policy versions to retain as backup. (e.g. 2 will retain the latest 2 policies and remove older ones). -1 retains all. | Default: 2 |

POLICY_PULL_TIMEOUT | Time in seconds to wait for the ESA to send the full policy | Default: 20s |

ESA_CONNECTION_TIMEOUT | Time in seconds to wait for the ESA response | Default: 5s |

LOG_LEVEL | Application and audit log verbosity level | Default: INFO. Allowed values: DEBUG (the most verbose), INFO, WARNING, ERROR (the least verbose) |

PTY_CORE_EMPTYSTRING | Overrides the default handling of empty strings. When set to null, empty string response values are returned as null values: (un)protect('') -> null. When set to empty, they are returned as empty strings: (un)protect('') -> '' | Default: empty. Allowed values: null, empty |

PTY_CORE_CASESENSITIVE | Specifies whether policy usernames should be case sensitive | Default: no Allowed values: yes no |

PTY_ADDIPADDRESSHEADER | When enabled, agent will send its source IP address in the request header. This configuration works in conjunction with ESA hubcontroller configuration ASSIGN_DATASTORE_USING_NODE_IP (default=false). See Associating ESA Data Store With Cloud Protect Agent for more information. | Default: yes Allowed values: yes no |

PTY_ESA_USERNAME | Plaintext ESA username which is used together with PTY_ESA_ENCRYPTED_PASSWORD as an optional ESA credentials | Option 2: KMS Encrypted Password Presence of this parameter will cause PTY_ESA_CREDENTIALS_SECRET to be ignored |

PTY_ESA_ENCRYPTED_PASSWORD | ESA password encrypted with KMS symmetric key. For an example AWS CLI command that generates the value, see Option 2: KMS Encrypted Password. | Option 2: KMS Encrypted Password. Presence of this parameter will cause PTY_ESA_CREDENTIALS_SECRET to be ignored. Value must be base64 encoded. |

EMPTY_POLICY_S3 | This flag can be either 1 or 0. If set to 1, then the agent will remove the content of the policy file in S3 bucket, but will keep the checksum in the metadata. Else, the policy will be saved in the S3 bucket and not removed. | Default: 0 |

PTY_ESA_CREDENTIALS_LAMBDA | Lambda function to return ESA credentials | Recorded in step Option 3: Custom AWS Lambda function LAMBDA FOR ESA CREDENTIALS. Presence of PTY_ESA_USERNAME, or PTY_ESA_CREDENTIALS_SECRET will cause this value to be ignored. The Policy Agent Lambda must have network access and IAM permissions to invoke the custom ESA Credentials Lambda you have created in Option 3: Custom AWS Lambda function. |
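The notes in the table imply an order of precedence among the three credential options. The sketch below encodes that order as read from the table; it is not the Policy Agent's actual implementation. The function name is hypothetical, and treating an empty value as "not present" is an assumption.

```python
def credentials_source(env: dict) -> str:
    """Return which ESA credential option would take effect for the given variables."""
    # PTY_ESA_USERNAME / PTY_ESA_ENCRYPTED_PASSWORD cause the secret and the Lambda to be ignored.
    if env.get("PTY_ESA_USERNAME") or env.get("PTY_ESA_ENCRYPTED_PASSWORD"):
        return "kms-encrypted-password"
    # PTY_ESA_CREDENTIALS_SECRET causes the custom Lambda to be ignored.
    if env.get("PTY_ESA_CREDENTIALS_SECRET"):
        return "secrets-manager"
    if env.get("PTY_ESA_CREDENTIALS_LAMBDA"):
        return "custom-lambda"
    raise ValueError("no ESA credential option is configured")


if __name__ == "__main__":
    # Placeholder names for illustration only.
    print(credentials_source({"PTY_ESA_CREDENTIALS_SECRET": "esa-secret",
                              "PTY_ESA_CREDENTIALS_LAMBDA": "esa-credentials-fn"}))
```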

Test Installation

Open the Lambda and configure Test to execute the lambda and specify the default test event. Wait for around 20 seconds for the Lambda to complete. If policy is downloaded successfully, then a success message appears.

Navigate to the AWS_POLICY_S3_BUCKET bucket and verify that the AWS_POLICY_S3_FILENAME file was created.

Troubleshooting

Lambda Error | Example Error | Action |
|---|---|---|
Task timed out after x seconds | | |
ESA connection error. Failed to download certificates | | |
Policy Pull takes a long time | | |
ESA connection error. Failed to download certificates. HTTP response code: 401 | | Ensure that the PTY_ESA_CREDENTIALS_SECRET has correct ESA username and password |
An error occurred (AccessDeniedException) when calling xyz operation | | Ensure that the Lambda execution role has permission to call the xyz operation |
Access Denied to Secret Manager. | | |
Master Key xyz unable to generate data key | | Ensure that the Lambda can access xyz CMK key |
The S3 bucket server-side encryption is enabled, the encryption key type is SSE-KMS but the Policy Agent execution IAM role doesn’t have permissions to encrypt using the KMS key. | | Add the following permissions to the Policy Agent execution role. Note: When the KMS key and the Policy Agent Lambda are in separate accounts, update both the AWS KMS key and the Policy Agent execution role. |
The S3 bucket has bucket policy to only allow access from within the VPC. | | The Policy Agent publishes a new Lambda Layer version, and the Lambda Layer service uploads the policy file from the S3 bucket. The upload request originates from the AWS service outside the Policy Agent Lambda VPC. Update the S3 bucket resource policy to allow access from AWS Service. Sample security policy to lock down access to the VPC: |

Additional Configuration

Strengthen the KMS IAM policy by granting access only to the required Lambda function(s).

Finalize the IAM policy for the Lambda Execution Role. Ensure to replace wildcard * with the region, account, and resource name information where required.

For example,

"arn:aws:lambda:*:*:function:*" -> "arn:aws:lambda:us-east-1:account:function:function_name"

Policy Agent Schedule

If specified in CloudFormation Installation, the agent installation created a CloudWatch event rule, which checks for policy update on an hourly schedule. This schedule can be altered to the required frequency.

Under CloudWatch > Events > Rules, find Protegrity_Agent_{stack_name}. Click Action > Edit and set the cron expression. A cron expression can easily be defined using CronMaker, a free online tool. Refer to http://www.cronmaker.com.
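AWS schedule rules use a six-field cron format: minutes, hours, day of month, month, day of week, and year. The sketch below builds an expression that runs every N hours in that format; the function name and its parameters are hypothetical, and the resulting expression should be checked in the console before saving the rule.

```python
def policy_agent_cron(every_hours: int = 1, minute: int = 0) -> str:
    """Build an AWS cron expression that fires every `every_hours` hours at `minute`."""
    if not 1 <= every_hours <= 23:
        raise ValueError("every_hours must be between 1 and 23")
    if not 0 <= minute <= 59:
        raise ValueError("minute must be between 0 and 59")
    # "*" means every hour; "0/N" means every N hours starting at hour 0.
    hours = "*" if every_hours == 1 else f"0/{every_hours}"
    # Day of week is "?" because day of month is already specified as "*".
    return f"cron({minute} {hours} * * ? *)"


if __name__ == "__main__":
    print(policy_agent_cron())       # hourly, matching the default schedule
    print(policy_agent_cron(6, 15))  # every six hours at minute 15
```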


1.3.5 - Audit Log Forwarder Installation

The following sections describe how to install the Audit Log Forwarder component in the AWS Cloud. The Log Forwarder deployment allows the audit logs generated by the Protector to be delivered to ESA for auditing and governance purposes. The Log Forwarder component is optional and is not required for the Protector Service to work properly. See the Log Forwarding Architecture section in this document for more information. Some of the installation steps are not required for the operation of the software but are recommended for establishing a secure environment. Contact Protegrity for further guidance on configuration alternatives in the Cloud.

Note

The installation steps below assume that the Log Forwarder is going to be installed in the same AWS account as the corresponding Protect Lambda service. For instructions on how to install the Log Forwarder in an AWS account separate from the Protect Lambda, please contact Protegrity.

ESA Audit Store Configuration

ESA server is required as the recipient of audit logs. Verify the information below to ensure ESA is accessible and configured properly.

ESA server running and accessible on TCP port 9200 (Audit Store) or 24284 (td-agent).

Audit Store service is configured and running on ESA. Applies when audit logs are output to Audit Store directly or through td-agent. For information related to ESA Audit Store configuration, refer to Audit Store Guide.

(Optional) td-agent is configured for external input. For more information related to td-agent configuration, refer to ESA guide Sending logs to an external security information and event management (SIEM).
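The port requirement above can be checked from a host inside the same VPC and subnet as the Log Forwarder. This is a minimal sketch using only the Python standard library; the function names are hypothetical, and the output names `audit-store` and `td-agent` follow the AuditLogOutput values used during the CloudFormation installation.

```python
import socket

# Ports listed above for each audit log destination on ESA.
ESA_PORTS = {"audit-store": 9200, "td-agent": 24284}


def esa_port(audit_log_output: str) -> int:
    """Return the ESA TCP port used by the given audit log output."""
    if audit_log_output not in ESA_PORTS:
        raise ValueError(f"unknown audit log output: {audit_log_output}")
    return ESA_PORTS[audit_log_output]


def is_reachable(host: str, port: int, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

For example, `is_reachable(esa_ip, esa_port("audit-store"))` reports whether the Audit Store port accepts connections, where `esa_ip` is the ESA address recorded earlier.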

Certificates on ESA

Note

This section is optional. If CA certificate is not provided, the Log Forwarder will skip server certificate validation and will connect to ESA without verifying that it is a trusted server.

If you are deploying Log Forwarder with Protegrity Provisioned Cluster (PPC), certificate authorization and CA validation are not supported. Configuration steps related to certificates in this section do not apply to PPC. See Integrating Cloud Protect with PPC (Protegrity Provisioned Cluster): Log Forwarder Setup with PPC for details.

By default, ESA is configured with self-signed certificates, which can optionally be validated using a self-signed CA certificate supplied in the Log Forwarder configuration. If no CA certificate is provided, the Log Forwarder will skip server certificate validation.

Note

Certificate validation can be bypassed for testing purposes; see section Install through CloudFormation.

If ESA is configured with publicly signed certificates, this section can be skipped since the forwarder Lambda will use the public CA to validate ESA certificates.

To obtain the self-signed CA certificate from ESA:

Download ESA CA certificate from the /etc/ksa/certificates/plug directory of the ESA

After certificate is downloaded, open the PEM file in text editor and replace all new lines with escaped new line: \n.

To escape new lines from command line, use one of the following commands depending on your operating system:

Linux Bash:

awk 'NF {printf "%s\\n",$0;}' ProtegrityCA.pem > output.txt

Windows PowerShell:

(Get-Content '.\ProtegrityCA.pem') -join '\n' | Set-Content 'output.txt'

Record the certificate content with new lines escaped.

ESA CA Server Certificate (EsaCaCert): ___________________

This value will be used to set PtyEsaCaServerCert cloudformation parameter in section Install through CloudFormation

For more information about ESA certificate management refer to Certificate Management Guide in ESA documentation.

AWS VPC Configuration

Log forwarder Lambda function requires network connectivity to ESA, similar to Policy Agent Lambda function. Therefore, it can be hosted in the same VPC as Policy Agent.

Separate VPC can be used, as long as it provides network connectivity to ESA.

Note

AWS Lambda service uses permissions in log forwarder function execution role to create and manage network interfaces. Lambda creates a Hyperplane ENI and reuses it for other VPC-enabled functions in your account that use the same subnet and security group combination. Each Hyperplane ENI can handle thousands of connections/ports as the Lambda function scales up. If more connections are needed AWS Lambda service creates additional Hyperplane ENIs. There’s no additional charge for using a VPC or a Hyperplane ENI. Refer to AWS official Lambda Hyperplane ENIs docs for more information.

VPC Name: ___________________

VPC Subnet Configuration

Log Forwarder can be connected to the same subnet as Policy Agent or separate one as long as it provides connectivity to ESA.

Subnet Name: ___________________

NAT Gateway For ESA Hosted Outside AWS Network

If ESA server is hosted outside of the AWS Cloud network, the VPC configured for Lambda function must ensure additional network configuration is available to allow connectivity with ESA. For instance if ESA has a public IP, the Lambda function VPC must have public subnet with a NAT server to allow routing traffic outside of the AWS network. A Routing Table and Network ACL may need to be configured for outbound access to the ESA as well.

VPC Endpoint Configuration

Log Forwarder Lambda function requires connectivity to AWS services, including Secrets Manager. If the VPC identified in the previous steps has no connectivity to the public internet through a NAT Gateway, then the following service endpoints must be configured:

- com.amazonaws.{REGION}.cloudwatch

- com.amazonaws.{REGION}.secretsmanager

- com.amazonaws.{REGION}.kms

Security Group Configuration

Security groups restrict communication between Log Forwarder Lambda function and the ESA appliance. The following rules must be in place for ESA and Log Forwarder Lambda function.

From VPC > Security Groups > Log Forwarder Security Group configuration.

| Type | Protocol | Port Range | Destination | Reason |

|---|---|---|---|---|

| Custom TCP | TCP | 9200 | Log Forwarder Lambda SG | ESA Communication |

Record the Log Forwarder security group ID.

Log Forwarder Security Group Id: ___________________

The following port must be open for the ESA. If the ESA is running in the Cloud, then create the following security group rule.

Note

If an on-premise firewall is used, then the firewall must allow access from the NAT Gateway IP to the ESA via port 9200.

ESA Security Group configuration

| Type | Protocol | Port Range | Source |

|---|---|---|---|

| Custom TCP | TCP | 9200 | Log Forwarder Lambda SG |

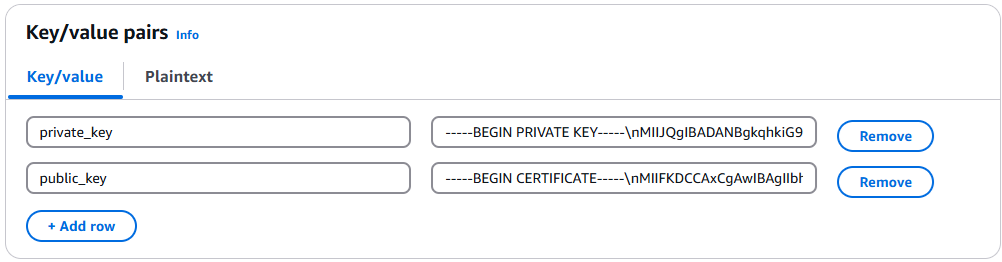

Configure ESA Audit Store Credentials

Note

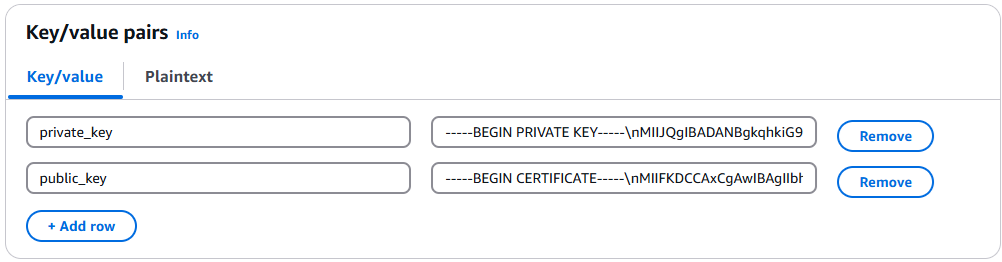

This section is optional. If client certificate authentication is not set up, the Log Forwarder will connect to ESA without authentication credentials.

Audit Log Forwarder can optionally authenticate with ESA using certificate-based authentication with a client certificate and certificate key. If used, both the certificate and certificate key will be stored in AWS Secrets Manager.

Download the following certificates from the /etc/ksa/certificates/plug directory of the ESA:

- client.key

- client.pem

After certificates are downloaded, open each PEM file in text editor and replace all new lines with escaped new line: \n. To escape new lines from command line, use one of the commands below depending on your operating system.

Linux Bash:

awk 'NF {printf "%s\\n",$0;}' client.key > private_key.txt

awk 'NF {printf "%s\\n",$0;}' client.pem > public_key.txt

Windows PowerShell:

(Get-Content '.\client.key') -join '\n' | Set-Content 'private_key.pem'

(Get-Content '.\client.pem') -join '\n' | Set-Content 'public_key.pem'

For more information on how to configure client certificate authentication for Audit Store on ESA refer to Audit Store Guide.

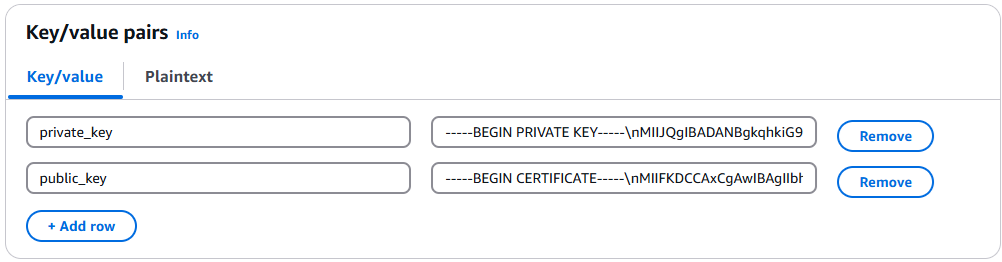

To create secret with ESA client certificate/key pair in AWS Secrets Manager.

From the AWS Secrets Manager Console, select Store New Secret.

Select Other Type of Secrets.

Specify the private_key and public_key value pair.

Select the encryption key or leave default AWS managed key.

Specify the Secret Name and record it below.

ESA Client Certificate/Key Pair Secret Name: ___________________

This value will be used to set PtyEsaClientCertificatesSecretId cloudformation parameter in section Install through CloudFormation

Note

If you are deploying Log Forwarder with PPC, do not configure client certificate authentication. See PPC Appendix: Log Forwarder Certificate Guidance for details.

Create Audit Log Forwarder IAM Execution Policy

This task defines a policy used by the Protegrity Log Forwarder Lambda function to write CloudWatch logs, access the KMS encryption key to decrypt the policy and access Secrets Manager for log forwarder user credentials.

Perform the following steps to create the Lambda execution role and required policies:

From the AWS IAM console, select Policies > Create Policy.

Select the JSON tab and copy the following sample policy.

{
  "Version": "2012-10-17",
  "Statement": [
    { "Sid": "EC2ModifyNetworkInterfaces", "Effect": "Allow", "Action": [ "ec2:CreateNetworkInterface", "ec2:DescribeNetworkInterfaces", "ec2:DeleteNetworkInterface" ], "Resource": "*" },
    { "Sid": "CloudWatchWriteLogs", "Effect": "Allow", "Action": [ "logs:CreateLogGroup", "logs:CreateLogStream", "logs:PutLogEvents" ], "Resource": "*" },
    { "Sid": "KmsDecrypt", "Effect": "Allow", "Action": [ "kms:Decrypt" ], "Resource": [ "arn:aws:kms:*:*:key/*" ] },
    { "Sid": "KinesisStreamRead", "Effect": "Allow", "Action": [ "kinesis:GetRecords", "kinesis:GetShardIterator", "kinesis:DescribeStream", "kinesis:DescribeStreamSummary", "kinesis:ListShards", "kinesis:ListStreams" ], "Resource": "*" },
    { "Sid": "SecretsManagerGetSecret", "Effect": "Allow", "Action": [ "secretsmanager:GetSecretValue" ], "Resource": [ "arn:aws:secretsmanager:*:*:secret:*" ] }
  ]
}

For the KMS policy, replace the Resource with the ARN for the KMS key created in a previous step.

Select Review policy, type in a policy name, for example, ProtegrityLogForwarderLambdaPolicy and Confirm. Record the policy name:

LogForwarderLambdaPolicyName:__________________

Create Log Forwarder IAM Role

Perform the following steps to create Log Forwarder execution IAM role.

To create Log Forwarder IAM role:

From AWS IAM console, select Roles > Create Role.

Select AWS Service > Lambda > Next.

Select the policy created in Create Audit Log Forwarder IAM Execution Policy.

Proceed to Name, Review and Create.

Type the role name, for example, ProtegrityForwarderRole and click Confirm.

Record the role ARN.

Log Forwarder IAM Execution Role Name: ___________________

Installation Artifacts

Audit Log Forwarder installation artifacts are part of the same deployment package as the one used for protect and policy agent services. Follow the steps below to ensure the right artifacts are available for log forwarder installation.

Verify that the Protegrity deployment package is available on your local system, if not, you can download it from the Protegrity portal.

Note

If you maintain multiple Protegrity Cloud Protectors, make sure that the deployment package downloaded for Audit Log Forwarder is the same as the one used for Protect service installation.

Extract the pty_log_forwarder_cf.json cloud formation file from the deployment package.

Check the S3 deployment bucket identified in section Create S3 bucket for Installing Artifacts. Make sure that all Protegrity deployment zip files are uploaded to the S3 bucket.

Install through CloudFormation

The following steps describe the deployment of the Audit Log Forwarder AWS cloud components.

Access CloudFormation and select the target AWS Region in the console.

Click Create Stack and choose With new resources.

Specify the template.

Select Upload a template file.

Upload the Protegrity-provided CloudFormation template called pty_log_forwarder_cf.json and click Next.

Specify the stack details. Enter a stack name.

Note

The stack name will be appended to all the services created by the template.

Enter the required parameters. All the values were generated in the pre-configuration steps.

Parameter | Description | Default Value | Required |

|---|---|---|---|

LogForwarderSubnets | Subnets where the Log Forwarder will be hosted. |

|

|

LogForwarderSecurityGroups | Security Groups, which allow communication between the Log Forwarder and ESA. |

| X |

LambdaExecutionRoleArn | The ARN of Lambda role created in the prior step. |

| X |

ArtifactS3Bucket | Name of S3 bucket created in the pre-configuration step. |

| X |

LogDestinationEsaIp | IP or FQDN of the ESA instance or cluster. |

| X |

AuditLogOutput | Audit log processor to target on ESA. Allowed values: audit-store, td-agent | audit-store | X |

PtyEsaClientCertificatesSecretId | AWS Secrets Manager secret id containing client certificates used for authentication with ESA Audit Store. It is expected that the public key will be stored in a field public_key and the private key in a field named private_key. This parameter is optional. If not provided, Log Forwarder will connect to ESA without client certificate authentication. | ||

EsaTlsDisableCertVerify | Disable certificate verification when connecting to ESA if set to 1. This is only for dev purposes, do not disable in production environment. | 0 | X |

PtyEsaCaServerCert | ESA self-signed CA certificate used by log forwarder Lambda to ensure ESA is the trusted server. Recorded in step Certificates on ESA In case ESA is configured with publicly signed certificates, the PtyEsaCaServerCert configuration will be ignored. |

| |

EsaConnectTimeout | Time in seconds to wait for the ESA response. Minimum value: 1. | 5 | X |

EsaVirtualHost | ESA virtual hostname. This configuration is optional and it can be used when proxy server is present and supports TLS SNI extension. |

|

|

KinesisLogStreamRetentionPeriodHours | The number of hours for the log records to be stored in Kinesis Stream in case log destination server is not available. Minimum value: 24. See Log Forwarder Performance section for more details. | 24 | X |

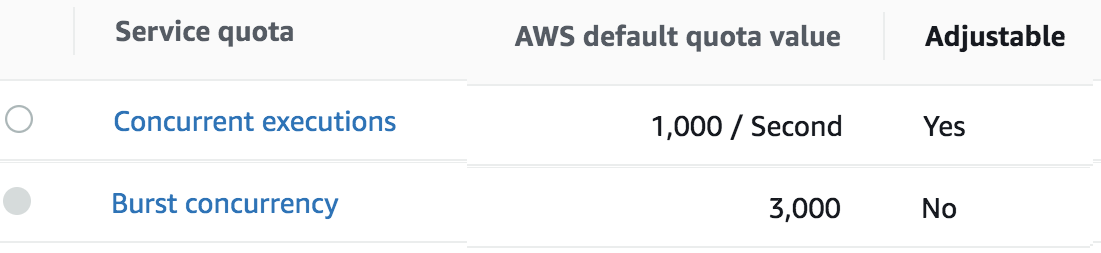

KinesisLogStreamShardCount | The number of shards that the Kinesis log stream uses. For greater provisioned throughput, increase the number of shards. Minimum value: 1. See Log Forwarder Performance section for more details. | 10 | X |

MinLogLevel | Minimum log level for protect function. Allowed Values: off, severe, warning, info, config, all | severe | X |

Click Next with defaults to complete CloudFormation.

After CloudFormation is completed, select the Outputs tab in the stack.

Record the following values:

KinesisLogStreamArn: ________________________________
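Before submitting the stack, it can help to check the parameter values against the constraints listed in the table above. The following is a minimal sketch of such a check, not part of the Protegrity tooling: the function name and the shape of the input dictionary are assumptions, while the allowed values, minimums, and defaults are copied from the table.

```python
# Constraints copied from the parameter table; all names below are hypothetical.
ALLOWED_AUDIT_OUTPUTS = {"audit-store", "td-agent"}
ALLOWED_LOG_LEVELS = {"off", "severe", "warning", "info", "config", "all"}


def validate_log_forwarder_params(params):
    """Return a list of problems found; an empty list means no issue was detected."""
    problems = []
    if params.get("AuditLogOutput", "audit-store") not in ALLOWED_AUDIT_OUTPUTS:
        problems.append("AuditLogOutput must be audit-store or td-agent")
    if params.get("MinLogLevel", "severe") not in ALLOWED_LOG_LEVELS:
        problems.append("MinLogLevel is not one of the allowed values")
    if int(params.get("EsaConnectTimeout", 5)) < 1:
        problems.append("EsaConnectTimeout must be at least 1 second")
    if int(params.get("KinesisLogStreamRetentionPeriodHours", 24)) < 24:
        problems.append("KinesisLogStreamRetentionPeriodHours must be at least 24")
    if int(params.get("KinesisLogStreamShardCount", 10)) < 1:
        problems.append("KinesisLogStreamShardCount must be at least 1")
    if str(params.get("EsaTlsDisableCertVerify", 0)) == "1":
        problems.append("EsaTlsDisableCertVerify=1 is intended for dev use only")
    return problems


if __name__ == "__main__":
    # Example values only; replace with the values from your own environment.
    print(validate_log_forwarder_params({"KinesisLogStreamShardCount": 0}))
```

The check only covers constraints stated in the table. It does not confirm that subnets, security groups, or the role ARN exist in the target account.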

Add Kinesis Put Record permission to the Protect Function IAM Role

Login to the AWS account that hosts the Protect Lambda Function.

Search for Protect Lambda Function IAM Execution Role Name created in Create Protect Lambda IAM role.

Under Permissions policies, select Add Permissions > Create inline policy.

In Specify permissions view, switch to JSON.

Copy the policy JSON below, replacing the placeholder <Audit Log Kinesis Stream ARN> with the value recorded in the previous step.

{ "Version": "2012-10-17", "Statement": [ { "Sid": "KinesisPutRecords", "Effect": "Allow", "Action": "kinesis:PutRecords", "Resource": "<Audit Log Kinesis Stream ARN>" } ] }

When you are finished, choose Next.

On the Review and create page, type a Name, then choose Create policy.
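If you prefer to generate the inline policy rather than edit the placeholder by hand, the substitution can be sketched as below. This is an illustration only: the function name is hypothetical, and the ARN shape check is an assumption added to catch a placeholder that was left unreplaced.

```python
import json


def build_kinesis_put_policy(stream_arn):
    """Return the inline policy document from the step above for the given stream ARN."""
    parts = stream_arn.split(":")
    # A Kinesis stream ARN has the form arn:<partition>:kinesis:<region>:<account>:stream/<name>.
    if len(parts) < 6 or parts[0] != "arn" or parts[2] != "kinesis":
        raise ValueError("expected a Kinesis stream ARN, got: " + stream_arn)
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "KinesisPutRecords",
                "Effect": "Allow",
                "Action": "kinesis:PutRecords",
                "Resource": stream_arn,
            }
        ],
    }


if __name__ == "__main__":
    # Example ARN taken from the sample test event in this guide; use your recorded value.
    arn = "arn:aws:kinesis:us-east-1:555555555555:stream/CloudProtectEventStream"
    print(json.dumps(build_kinesis_put_policy(arn), indent=2))
```

The printed JSON can be pasted into the JSON editor of the Create inline policy view.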

Test Log Forwarder Installation

Testing in this section validates the connectivity between Log Forwarder and ESA. The sample policy included with the initial installation and test event below are not based on your ESA policy. Any logs forwarded to ESA which are not signed with a policy generated by your ESA will not be added to the audit store.

Install Log Forwarder and configure according to previous sections. Log Forwarder configuration MinLogLevel must be at least info level.

Navigate to the log forwarder lambda function.

Select the Test tab.

Copy the json test event into the Event JSON pane.

{ "Records": [ { "kinesis": { "kinesisSchemaVersion": "1.0", "partitionKey": "041e96d78c778677ce43f50076a8ae3e", "sequenceNumber": "49620336010289430959432297775520367512250709822916263938", "data": "eyJpbmdlc3RfdGltZV91dGMiOiIyMDI2LTAzLTI3VDEzOjIzOjEyLjkwNFoiLCJhZGRpdGlvbmFsX2luZm8iOnsiZGVzY3JpcHRpb24iOiJEYXRhIHVucHJvdGVjdCBvcGVyYXRpb24gd2FzIHN1Y2Nlc3NmdWwuIn0sImNsaWVudCI6e30sImNudCI6NCwiY29ycmVsYXRpb25pZCI6InJzLXF1ZXJ5LWlkOjEyMzQiLCJsZXZlbCI6IlNVQ0NFU1MiLCJsb2d0eXBlIjoiUHJvdGVjdGlvbiIsIm9yaWdpbiI6eyJob3N0bmFtZSI6IlBSTy1VUy1QRjNSSEdGOSIsImlwIjoiMTAuMTAuMTAuMTAiLCJ0aW1lX3V0YyI6MTcxMTU1OTEwMH0sInByb2Nlc3MiOnsiaWQiOjJ9LCJwcm90ZWN0aW9uIjp7ImF1ZGl0X2NvZGUiOjgsImRhdGFlbGVtZW50IjoiYWxwaGEiLCJkYXRhc3RvcmUiOiJTQU1QTEVfUE9MSUNZIiwibWFza19zZXR0aW5nIjoiIiwib2xkX2RhdGFlbGVtZW50IjpudWxsLCJvcGVyYXRpb24iOiJVbnByb3RlY3QiLCJwb2xpY3lfdXNlciI6IlBUWURFViJ9LCJwcm90ZWN0b3IiOnsiY29yZV92ZXJzaW9uIjoiMS4yLjErNTUuZzU5MGZlLkhFQUQiLCJmYW1pbHkiOiJjcCIsInBjY192ZXJzaW9uIjoiMy40LjAuMTQiLCJ2ZW5kb3IiOiJyZWRzaGlmdCIsInZlcnNpb24iOiIwLjAuMS1kZXYifSwic2lnbmF0dXJlIjp7ImNoZWNrc3VtIjoiN0VCMkEzN0FDNzQ5MDM1NjQwMzBBNUUxNENCMTA5QzE0OEJGODYwRjE3NEVCMjMxMTAyMEI3RkE1QTY4MjdGQyIsImtleV9pZCI6ImM0MjQ0MzZhLTExMmYtNGMzZi1iMmRiLTEwYmFhOGI1NjJhNyJ9fQ==", "approximateArrivalTimestamp": 1626878559.213 }, "eventSource": "aws:kinesis", "eventVersion": "1.0", "eventID": "shardId-000000000000:49620336010289430959432297775520367512250709822916261234", "eventName": "aws:kinesis:record", "invokeIdentityArn": "arn:aws:iam::555555555555:role/service-role/TestRole", "awsRegion": "us-east-1", "eventSourceARN": "arn:aws:kinesis:us-east-1:555555555555:stream/CloudProtectEventStream" } ] }

Note

The data payload is the base64 encoded audit log. See Audit Logging for detail on audit log contents.

Select Test to execute the test event.

Test is successful if the Log Output of test results contains the following log:

[INFO] [kinesis-log-aggregation-format.cpp:77] Aggregated 1 records into 0 aggregated, 1 forwarded and 0 failed records

If the log is not present, please consult the Troubleshooting section for common errors and solutions.
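Because the data field of each Kinesis record is base64 encoded, it is not readable in the test event as written. A minimal sketch for inspecting it locally is shown below. The function name is hypothetical, and the sample audit log is a shortened, made-up record used only to demonstrate the round trip; it is not the payload from the test event above.

```python
import base64
import json


def decode_kinesis_records(event):
    """Decode the base64 `data` field of every record in a Kinesis-style Lambda event."""
    logs = []
    for record in event.get("Records", []):
        raw = base64.b64decode(record["kinesis"]["data"])
        logs.append(json.loads(raw))
    return logs


# Hypothetical, shortened audit log used only for demonstration.
sample_log = {"logtype": "Protection", "level": "SUCCESS", "cnt": 4}
sample_event = {
    "Records": [
        {
            "kinesis": {
                "data": base64.b64encode(
                    json.dumps(sample_log).encode("utf-8")
                ).decode("ascii")
            }
        }
    ]
}

if __name__ == "__main__":
    for log in decode_kinesis_records(sample_event):
        print(json.dumps(log, indent=2))
```

To inspect the test event from this section, load its JSON into a dictionary and pass it to the same function.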

Update Protector With Kinesis Log Stream

In this section, Kinesis log stream ARN will be provided to the Protect Function installation.

Note

If the Protector has not been installed, you may provide the KinesisLogStreamArn during protector installation and skip the remainder of this section.

Navigate to the Protector CloudFormation stack created in the protector installation section.

Select Update.

Choose Use existing template > Next.

Set parameter KinesisLogStreamArn to the output value recorded in Install through CloudFormation.

Proceed with Next and Submit the changes.

Continue to the next section once stack status indicates UPDATE_COMPLETE.
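The console steps above keep the existing template and change a single parameter. If you script the same update, the parameter list could be built as sketched below. This is an assumption-laden illustration: the function name is hypothetical, and the existing parameter keys in the example are placeholders for whatever your Protector stack actually defines.

```python
def build_update_parameters(existing_keys, kinesis_stream_arn):
    """Keep every existing parameter value and set only KinesisLogStreamArn."""
    params = [
        {"ParameterKey": key, "UsePreviousValue": True}
        for key in existing_keys
        if key != "KinesisLogStreamArn"
    ]
    params.append(
        {"ParameterKey": "KinesisLogStreamArn", "ParameterValue": kinesis_stream_arn}
    )
    return params


if __name__ == "__main__":
    # Placeholder keys and ARN; read the real keys from your Protector stack.
    keys = ["ArtifactS3Bucket", "LambdaExecutionRoleArn", "KinesisLogStreamArn"]
    arn = "arn:aws:kinesis:us-east-1:555555555555:stream/CloudProtectEventStream"
    parameters = build_update_parameters(keys, arn)
    print(parameters)
    # With boto3 installed, the list could then be passed to CloudFormation, for example:
    #   boto3.client("cloudformation").update_stack(
    #       StackName="<protector stack name>",
    #       UsePreviousTemplate=True,
    #       Parameters=parameters,
    #   )
    # Whether a Capabilities argument is also needed depends on the template (not confirmed here).
```

Marking the other parameters with UsePreviousValue avoids retyping values that were set during the original installation.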

Update Policy Agent With Log Forwarder Function Target

The Log Forwarder Lambda function requires a policy layer that is in sync with the Protegrity Protector. This section describes how to update the Policy Agent so that it also updates the Log Forwarder Lambda function.

Note

If the policy agent has not been installed, follow the steps in Policy Agent Installation. Set AWS_PROTECT_FN_NAME to include both protector and log forwarder lambda functions.

Navigate to the Policy Agent Function created in Policy Agent Installation.

Select Configuration > Environment variables > Edit

Edit the value for environment variable AWS_PROTECT_FN_NAME to include the log forwarder function name/arn in the comma separated list of Lambda functions.

Save the changes and continue when update completes

Navigate to Test tab

Add an event {} and select Test to run the Policy Agent function

Verify Log forwarder function was updated to use the policy layer by inspecting the log output. Logs should include the following:

[INFO] 2024-07-09 18:58:04,793.793Z 622d374b-1f73-4123-9a38-abc61973adef iap_agent.policy_deployer:Updating lambda [Protegrity_LogForwarder_<stack ID>] to use layer version [arn:aws:lambda:<aws region>:<aws account number>:layer:Protegrity_Layer_<layer name>:<layer version>]
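The AWS_PROTECT_FN_NAME edit described above amounts to appending one entry to a comma-separated list. A minimal sketch of that edit follows. The helper name is hypothetical, and the function names in the example use a made-up stack suffix.

```python
def add_function_target(current_value, function_name):
    """Append a function name or ARN to a comma-separated list, skipping duplicates."""
    names = [name.strip() for name in current_value.split(",") if name.strip()]
    if function_name not in names:
        names.append(function_name)
    return ",".join(names)


if __name__ == "__main__":
    # Example values with a hypothetical "demo" stack suffix.
    current = "Protegrity_Protect_RESTAPI_demo"
    print(add_function_target(current, "Protegrity_LogForwarder_demo"))
```

Running the helper twice with the same function name leaves the value unchanged, so it is safe to reuse when re-applying the configuration.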

Test Full Log Forwarder Installation

Install and configure Protegrity Agent, Protector, and Log Forwarder components.

Send a protect operation to the protector using a data element or user which will result in audit log generation

Navigate to the CloudWatch log group for the Protect function

Select the log stream for the test operation and scroll to the latest logs

Expect to see a log similar to the below:

[2024-07-09T19:28:23.158] [INFO] [kinesis-external-sink.cpp:51] Sending 2 logs to Kinesis
...
[2024-07-09T19:28:23.218] [INFO] [aws-utils.cpp:206] Kinesis send time: 0.060s

Navigate to the CloudWatch log group for the Log Forwarder function

Expect to see a new log stream - it may take several minutes for the stream to start

Select the new stream and scroll to the most recent logs in the stream

Expect to see a log similar to the below:

[2024-07-09T19:32:31.648] [INFO] [kinesis-log-aggregation-format.cpp:77] Aggregated 1 records into 0 aggregated, 1 forwarded and 0 failed records
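When checking many log lines, the forwarded and failed counts can be extracted from the aggregation message shown above. The sketch below assumes the message keeps exactly the wording of that sample line; the pattern and function name are illustrative and not part of the product.

```python
import re

# Pattern derived from the sample log line in this section (assumed stable wording).
AGGREGATION_PATTERN = re.compile(
    r"Aggregated (?P<total>\d+) records into (?P<aggregated>\d+) aggregated, "
    r"(?P<forwarded>\d+) forwarded and (?P<failed>\d+) failed records"
)


def parse_aggregation_line(line):
    """Return the record counts from an aggregation log line, or None if it does not match."""
    match = AGGREGATION_PATTERN.search(line)
    if match is None:
        return None
    return {name: int(value) for name, value in match.groupdict().items()}


if __name__ == "__main__":
    sample = (
        "[2024-07-09T19:32:31.648] [INFO] [kinesis-log-aggregation-format.cpp:77] "
        "Aggregated 1 records into 0 aggregated, 1 forwarded and 0 failed records"
    )
    print(parse_aggregation_line(sample))
```

A non-zero failed count in the result is a reasonable trigger for consulting the Troubleshooting section.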

Troubleshooting

| Error | Action |
|---|---|
| Log forwarder log contains severe level secrets permissions error: | |
| When testing log forwarder as described in Test Log Forwarder Installation, response contains policy decryption error: | |
| Cloudformation stack creation fails with error: | |
| Severe level kinesis permissions log message in protector function: | |
| TLS errors reported in log forwarder function logs: | |

1.3.6 -

Cheat Sheet Recommendation

Tip

During the installation you will need the output of earlier steps, such as resource names and IDs. We recommend copying the following cheat sheet into a notepad and filling in the information as you progress with the installation.

AWS Account ID: ___________________

AWS Region (AwsRegion): ___________________

S3 Bucket name (ArtifactS3Bucket): ___________________

KMS Key ARN (AWS_KMS_KEY_ID): ___________________

ProtectLambdaPolicyName: __________________

Role ARN (LambdaExecutionRoleArn): ___________________

ApiGatewayId: ________________________________

ProtectFunctionName: __________________________

ProtectLayerName: _____________________________

ESA IP address: ___________________

VPC name: ___________________

Subnet name: ___________________

Policy Agent Security Group Id: ___________________

ESA Credentials Secret Name: ___________________

Policy Name: ___________________

Agent Lambda IAM Execution Role Name: ___________________

1.3.7 -

Prerequisites

Requirement | Detail |

|---|---|

Protegrity distribution and installation scripts | These artifacts are provided by Protegrity |

Protegrity ESA 10.0+ | The Cloud VPC must be able to obtain network access to the ESA |

AWS Account | Recommend creating a new sub-account for Protegrity Serverless |

1.3.8 -

AWS Services

The following table describes the AWS services that may be a part of your Protegrity installation.

Service | Description |

|---|---|

Lambda | Provides serverless compute for Protegrity protection operations and the ESA integration to fetch policy updates or deliver audit logs. |

API Gateway | Provides the endpoint and access control. |

KMS | Provides secrets for envelope policy encryption/decryption for Protegrity. |

Secrets Manager | Provides secrets management for the ESA credentials. |

S3 | Intermediate storage location for the encrypted ESA policy layer. |

Kinesis | Required if Log Forwarder is to be deployed. Amazon Kinesis is used to batch audit logs sent from protector function to ESA. |

VPC & NAT Gateway | Optional. Provides a private subnet to communicate with an on-prem ESA. |

CloudWatch | Application and audit logs, performance monitoring, and alerts. Scheduling for the policy agent. |

1.3.9 -

Required Skills and Abilities

Role / Skillset | Description |

|---|---|

AWS Account Administrator | To run CloudFormation (or perform steps manually), create/configure a VPC and IAM permissions. |

Protegrity Administrator | The ESA credentials required to extract the policy for the Policy Agent |

Network Administrator | To open firewall to access ESA and evaluate AWS network setup |

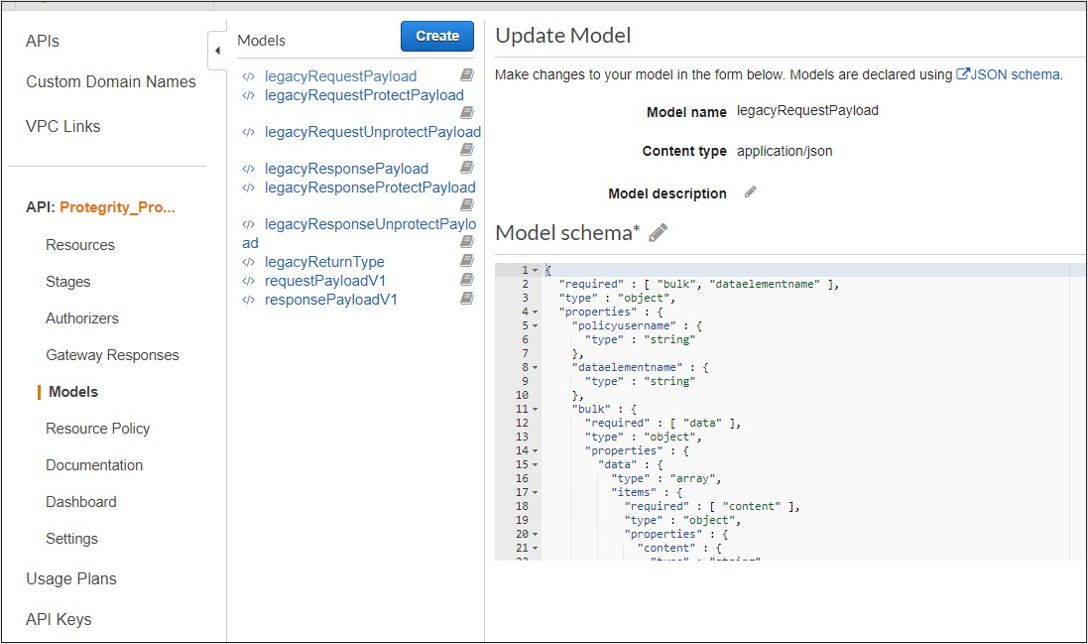

1.4 - REST API

The following sections describe the key concepts in understanding the REST API.

Base URL:

https://{ApiGatewayId}.execute-api.{Region}.amazonaws.com/pty/v1

Once Protegrity Serverless REST API is installed, you can export the OpenAPI documentation file from API Gateway Export API, located in the AWS API Gateway Control Service.

https://docs.aws.amazon.com/apigateway/latest/developerguide/api-gateway-export-api.html

For testing the REST API, we recommend using a tool such as Postman.
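The base URL above is assembled from the API Gateway ID and the AWS Region recorded during installation. A small sketch of that substitution, with a hypothetical function name and made-up example values:

```python
def protect_base_url(api_gateway_id, region):
    """Fill the base URL template from this section with the recorded values."""
    if not api_gateway_id or not region:
        raise ValueError("both the API Gateway ID and the Region are required")
    return f"https://{api_gateway_id}.execute-api.{region}.amazonaws.com/pty/v1"


if __name__ == "__main__":
    # "abc123" is a placeholder; use the ApiGatewayId from your cheat sheet.
    print(protect_base_url("abc123", "us-east-1"))
```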

1.4.1 - Authorization

Policy Users

Protegrity Policy roles define the unique data access privileges for every member. The Protegrity Lambda protects the data with the username sent in either the JWT-formatted authorization header or the request body.

The Lambda behavior can be set in the Lambda environment variables as described in Protect Lambda Configuration.

| Authorization/allow_assume_user | 0 | 1 |

|---|---|---|

| Empty | User from the request body. / (Throw an error). | User from the request body. |

| JWT | User from JWT payload | User from the request body. If not found, user from the JWT payload. |

JWT Verification

To ensure the integrity of the user, the Protect Lambda can verify the JWT.

- From your AWS console, navigate to lambda and select the following Lambda: Protegrity_Protect_RESTAPI_<STACK_NAME>

- Scroll down to the Environment variables section, select Edit to replace the entries.

Parameter | Value | Notes |

|---|---|---|

authorization | JWT | |

jwt_verify | 1 | |

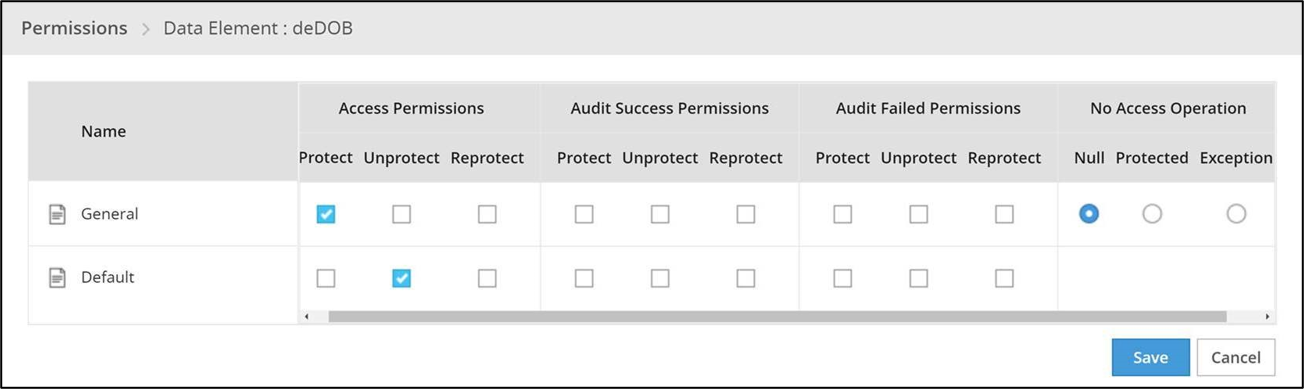

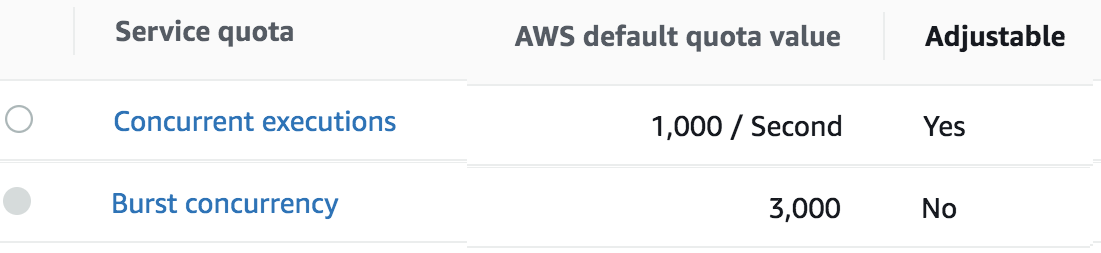

jwt_secret_base64 | Secret in base64 encoding. For example, the value of the public key is as follows. This public key will be stored as follows. | The secret must be in base64. We recommend using RSA public certificates; keeping hash (symmetric) secrets in the clear is not recommended. |