Performance

- 1: Performance Considerations

- 2: Sample Benchmarks

- 3: Concurrency

- 4: Concurrency Tuning

- 5: Log Forwarder Performance Tuning

1 - Performance Considerations

Performance Considerations

The following factors may cause variation in real performance versus benchmarks:

- Cold startup: The Lambda spends additional time on the initial invocation to decrypt and load the policy into memory. This time can vary between 400 ms and 1200 ms depending on the policy size. Once the Lambda is initialized, subsequent “warm executions” should process quickly.

- Size of policy: The size of the policy impacts cold start performance. Larger policies take more time to initialize.

- Noisy neighbors: There are many multi-tenant components in the Cloud. The same request may differ by 50% between runs regardless of Protegrity. A single execution may not be the best predictor of average performance.

- Lambda memory: AWS provides more virtual cores based on the memory configuration. The initial configuration of 1728 MB provides a good tradeoff between performance and cost with the benchmarked policy. Memory can be increased to optimize for your individual cases.

- Cluster size: Cluster size may make a significant difference depending on the workload.

- Number of operations: The number of protect, unprotect, and reprotect security operations.

- Lambda concurrency and burst quotas: AWS limits the number of concurrent executions and how quickly Lambda can scale to meet demand. This is discussed in the Concurrency Tuning section.

- Size of data element: Operations on larger text values take more time.

2 - Sample Benchmarks

Sample Benchmarks

The following benchmarks were performed against different cluster sizes. These are median times of approximately five runs each. The query unprotected six columns per row (first_name, last_name, email, postal_code, ssn, iban):

| Rows x Cols | # Ops | Small | Medium | Large | XL | 2XL |
|---|---|---|---|---|---|---|
| 100K x 6 cols | 600K | 5.1 | 4 | 4.2 | 4.3 | 4.3 |
| 1M x 6 cols | 6M | 11 | 10 | 11 | 10 | 11 |
| 10M x 6 cols | 60M | 21.5 | 21.5 | 20.5 | 24.5 | 29 |
| 100M x 6 cols | 600M | - | - | 49.5 | 56.5 | 87 |
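Because a single run can be skewed by noisy neighbors, the benchmarks report a median over several runs. A minimal sketch of that approach is shown below. The helper name `median_runtime` and the sample timings are hypothetical and are not taken from the benchmark above.

```python
import statistics
import time


def median_runtime(run, repeats=5):
    """Call `run` several times and return the median wall-clock duration in seconds."""
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        run()  # in practice, this would execute the query under test
        timings.append(time.perf_counter() - start)
    return statistics.median(timings)


# Hypothetical timings: one slow outlier barely moves the median,
# while it pulls the mean up noticeably.
sample = [11.0, 10.0, 30.0, 10.5, 11.5]
print(statistics.median(sample))
print(statistics.mean(sample))
```

The median is preferred here because an occasional slow run, for example one that includes a cold start, does not distort the reported figure.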

3 - Concurrency

Concurrency

Snowflake provides guidance on the maximum number of concurrent requests to the Protegrity API. However, reaching this maximum depends on additional factors, such as cluster use and available resources. In addition, depending on the query plan, individual batches may be processed serially across different UDFs.

The formula for theoretical maximum Snowflake concurrency is N * C * M * E * P:

- N - # of servers in the cluster (e.g. 2XL = 32, XL = 16)

- C - # of CPUs per server. This is typically 8, but depends on the hardware.

- M - parallelism multiplier (fixed at 8)

- E - # of external functions invoked

- P - # of queries running in parallel
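The formula above can be sketched as a small helper. The function name `max_concurrency` and its parameter names are illustrative assumptions. The defaults of 8 CPUs and a multiplier of 8 come from the list above.

```python
def max_concurrency(servers, cpus=8, multiplier=8,
                    external_functions=1, parallel_queries=1):
    """Theoretical maximum concurrent requests: N * C * M * E * P."""
    return servers * cpus * multiplier * external_functions * parallel_queries


# Server counts per cluster size, as stated in this section.
SERVERS = {"Medium": 4, "X-Large": 16, "2X-Large": 32}

for size, n in SERVERS.items():
    per_udf = [max_concurrency(n, external_functions=e) for e in (1, 2, 5, 10)]
    print(size, per_udf)
```

This is a theoretical ceiling only. Actual concurrency depends on cluster use, available resources, and the query plan.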

The following table shows this calculation for a single query.

| Cluster size | Predicted concurrent requests per query * | 1 UDF | 2 UDF | 5 UDF | 10 UDF |
|---|---|---|---|---|---|
| Medium | 4 servers x 8 CPU x 8 = 256 | 256 | 512 | 1,280 | 2,560 |
| X-Large | 16 servers x 8 CPU x 8 = 1,024 | 1,024 | 2,048 | 5,120 | 10,240 |
| 2X-Large | 32 servers x 8 CPU x 8 = 2,048 | 2,048 | 4,096 | 10,240 | 20,480 |

Note

* Theoretical maximum concurrent requests based on engineering guidance from Snowflake.

4 - Concurrency Tuning

Lambda Tuning

AWS maintains quotas for Lambda concurrent execution. Two of these quotas impact concurrency and compete with other Lambdas in the same account and region:

The concurrent executions quota is the maximum number of Lambda instances that can serve requests for an account and region. The default AWS quota may be inadequate for the peak concurrency shown in the table in the previous section. This quota can be increased with an AWS support ticket.

The burst concurrency quota limits the rate at which Lambda will scale to accommodate demand. This quota is also per account and region. The burst quota cannot be adjusted. AWS will quickly scale until the burst limit is reached. After the burst limit is reached, functions will scale at a reduced rate per minute (e.g. 500). If no Lambda instances can serve a request, the request will fail with a 429 Too Many Requests response. Snowflake will generally retry until all requests succeed but may abort if a high percentage of responses fail.

The burst limit is a fixed value and varies significantly by AWS region. The highest burst (3,000) is currently available in the following regions: US West (Oregon), US East (N. Virginia), and Europe (Ireland). Other regions can burst between 500 and 1,000. It is recommended to select a Snowflake AWS region with the highest burst limits.
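The scaling behavior described above can be approximated with a rough estimate of how long Lambda needs to reach a target concurrency. This is a simplified sketch that assumes the initial burst is available immediately and that scaling then continues at a fixed rate per minute. The function name `minutes_to_reach` is hypothetical, and the default rate of 500 is the example figure quoted above.

```python
import math


def minutes_to_reach(target, burst_limit, rate_per_minute=500):
    """Estimate the minutes needed to scale from the burst limit up to `target` instances."""
    if target <= burst_limit:
        return 0  # the initial burst already covers the demand
    return math.ceil((target - burst_limit) / rate_per_minute)


# A query needing 20,480 concurrent requests in a region with a 3,000 burst limit
print(minutes_to_reach(20_480, burst_limit=3_000))
# The same demand in a region with a 500 burst limit
print(minutes_to_reach(20_480, burst_limit=500))
```

The estimate ignores the concurrent executions quota, which caps the result regardless of how long scaling continues.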

API Gateway Tuning

AWS maintains a Throttle quota for the API Gateway. By default, API Gateway limits concurrent requests to 10,000 requests per second and throttles subsequent traffic with a 429 Too Many Requests error response. This quota applies across all APIs in an account and region.

The API Gateway default quota may need to be increased based on the Concurrency table in Lambda Tuning. Keep in mind that hitting quota limits effectively throttles any other API services in the region.

The API Gateway also limits burst. Burst is the maximum number of concurrent requests that API Gateway can fulfill at any instant without returning 429 Too Many Requests error responses. This limit can be increased by AWS, but is not normally adjustable.

Enable CloudWatch metrics within the API Gateway to monitor max concurrency and investigate throttling errors. See the Concurrency Troubleshooting section on interpreting CloudWatch metrics.

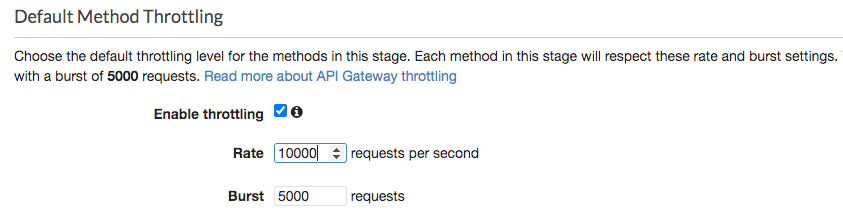

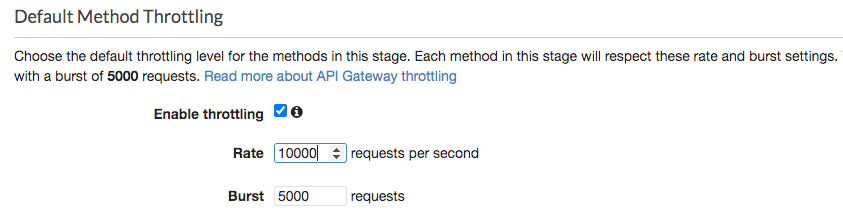

Quota adjustments are applied per account and region. Throttling is also enabled by default in the API Gateway stage used by the Protegrity Lambda function. The stage throttling configuration must be adjusted if the quota is modified. Stage throttling is shown in the following image.

For example, a test query was executed against a 274 million record table on a 2X-Large Snowflake cluster using a query with 10 UDF columns. According to the table in the Concurrency section, the cluster would theoretically generate over 20,000 requests/sec to execute the given query. With API Gateway’s default settings, requests exceeding 10,000 requests/sec will be throttled. Therefore, this query may fail intermittently due to a high number of throttling errors.

After a quota increase request, AWS raised the account’s API Gateway throttling quota from 10,000 to 24,000, and the same query succeeded without throttling. In addition, 8 concurrent queries also succeeded. Eight concurrent queries did not generate 8x the concurrent load because of the cluster’s own resource limitations. This indicates that the cluster had reached its peak.
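The arithmetic behind this example can be checked with a short sketch. It follows the document's simplification of comparing the predicted concurrent requests directly against the requests-per-second throttle quota. The function name `excess_over_quota` is hypothetical.

```python
def excess_over_quota(predicted_rps, throttle_quota_rps=10_000):
    """Return how many requests per second exceed the API Gateway throttle quota."""
    return max(0, predicted_rps - throttle_quota_rps)


# 2X-Large cluster: 32 servers x 8 CPUs x 8 multiplier x 10 UDFs
predicted = 32 * 8 * 8 * 10

print(predicted)                                             # theoretical peak
print(excess_over_quota(predicted))                          # default quota
print(excess_over_quota(predicted, throttle_quota_rps=24_000))  # raised quota
```

A non-zero result suggests that a quota increase, together with a matching stage throttling setting, may be needed before running the query.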

Concurrency Troubleshooting

Reaching quota limits may indicate that quota adjustments are required. Exceeding quota limits may cause a Snowflake query to fail or may reduce performance. In the worst case, significant throttling can impact the performance of all your API Gateway or Lambda services in the region.

Snowflake tolerates a certain ratio of failed requests and retries them automatically. If a high percentage of requests fail, the query may abort and the last error code, for example 429 Too Many Requests, is displayed in the console.

CloudWatch Metrics can be manually enabled on the API Gateway to reveal whether quotas are being reached. Metrics aggregate errors into two buckets: 4xx and 5xx. CloudWatch logs can be used to access the actual error code. The following table describes how to interpret the CloudWatch metrics.

| Error type | Possible issue | Remedy |
|---|---|---|
| 4xx errors | API Gateway burst or throttle quota exceeded. | Request an increase to the API Gateway throttle quota. |
| 5xx errors | Lambda concurrent requests or burst quota exceeded. Verify 4xx errors in Lambda Metrics. | Request an increase to the Lambda concurrent request quota. |
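The table above can be expressed as a small triage helper that groups HTTP status codes into the same two buckets and attaches the suggested remedy. This is an illustrative sketch only. The names `REMEDIES`, `bucket`, and `summarize` are hypothetical, and the status codes are assumed to have been extracted from CloudWatch logs beforehand.

```python
from collections import Counter

# Remedies taken from the table above.
REMEDIES = {
    "4xx": "Request an increase to the API Gateway throttle quota.",
    "5xx": "Request an increase to the Lambda concurrent request quota.",
}


def bucket(status):
    """Map an HTTP status code to the CloudWatch error bucket, or None if not an error."""
    if 400 <= status < 500:
        return "4xx"
    if 500 <= status < 600:
        return "5xx"
    return None


def summarize(statuses):
    """Count errors per bucket and pair each count with its suggested remedy."""
    counts = Counter()
    for status in statuses:
        name = bucket(status)
        if name is not None:
            counts[name] += 1
    return {name: (count, REMEDIES[name]) for name, count in counts.items()}


print(summarize([200, 429, 429, 500, 200]))
```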

Note

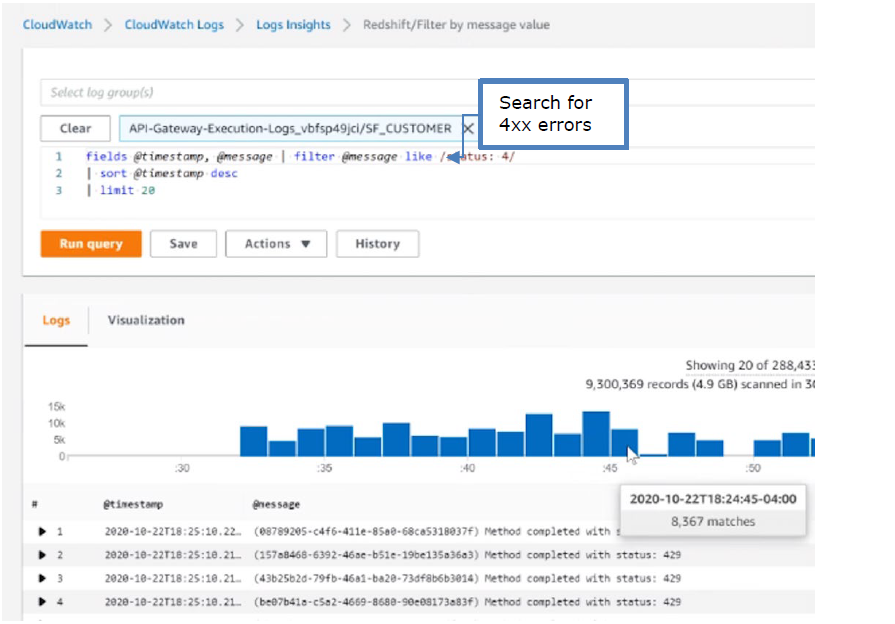

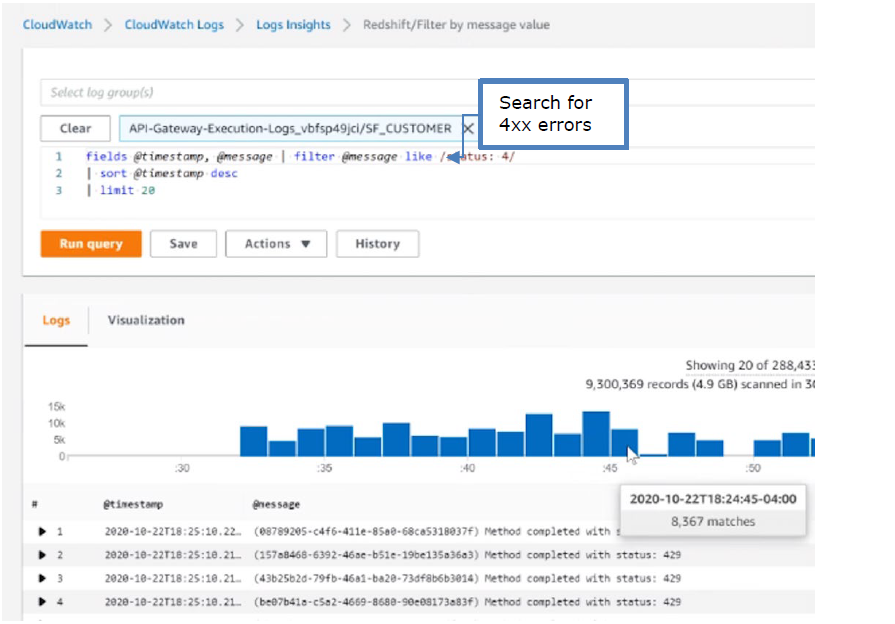

The API Gateway Lambda proxy maps 429 errors from the Lambda service to 500 errors.

The following screenshot shows an example of searching CloudWatch Logs using Logs Insights:

Cold-Start Performance

Cold start versus warm execution refers to the state of the Lambda when a request is received. A cold start performs additional initialization, such as loading the security policy. Warm execution applies to all subsequent requests served by the Lambda. The following table shows an example of how these states impact latency and performance.

| Execution state | Avg. Execution Duration | Avg. Total (w/ network latency) |
|---|---|---|
| Cold execution | 438 ms | 522 ms |
| Warm execution | < 2 ms | 84 ms |

Note

- Cold execution time will vary based on the physical size of the security policy. A large security policy will result in longer cold startup times.
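To see how much cold starts affect the average request, the two totals from the table above can be combined into a weighted average. This is a rough sketch that assumes the example figures (522 ms cold, 84 ms warm) apply to your policy. The function name `expected_latency_ms` is hypothetical.

```python
# Example totals (including network latency) from the table above.
COLD_TOTAL_MS = 522
WARM_TOTAL_MS = 84


def expected_latency_ms(cold_fraction):
    """Average latency when `cold_fraction` of requests hit a cold Lambda."""
    if not 0.0 <= cold_fraction <= 1.0:
        raise ValueError("cold_fraction must be between 0 and 1")
    return cold_fraction * COLD_TOTAL_MS + (1.0 - cold_fraction) * WARM_TOTAL_MS


for fraction in (0.0, 0.01, 0.10, 1.0):
    print(fraction, round(expected_latency_ms(fraction), 2))
```

Even a small share of cold starts raises the average noticeably, which is one reason the first run of a query can be slower than later runs.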

5 - Log Forwarder Performance Tuning

Log Forwarder Performance

The log forwarder architecture is optimized to minimize the number of connections and reduce the overall network bandwidth required to send audit logs to ESA. This is achieved with batching and aggregation on two levels. The first level is in the protect function instances, where audit logs from consecutive requests to an instance are batched and aggregated. The second level is in the Amazon Kinesis stream, where log records from different protect function instances are batched again and sent to the log forwarder function, where they are aggregated. This section shows how to configure the deployment to accommodate different patterns of anticipated audit log traffic. It also shows how to monitor deployment resources to detect problems before audit records are lost.

Protector Cloud Formation Parameters

AuditLogFlushInterval: Determines the minimum amount of time before the audit log is sent to Amazon Kinesis. Changing the flush interval may affect the level of aggregation, which in turn may result in a different number of connections and different data rates to Amazon Kinesis. The default value is 30 seconds.

Increasing the flush interval may result in higher aggregation of audit logs, fewer connections to Amazon Kinesis, higher latency of audit logs arriving at ESA, and higher data throughput.

Lowering the flush interval may result in lower aggregation of audit logs, more connections to Amazon Kinesis, lower latency of audit logs arriving at ESA, and lower data throughput.

It is not recommended to reduce the flush interval below the default value in a production environment, as it may overload the Amazon Kinesis service. However, it may be beneficial to reduce the flush interval during testing to make audit records appear on ESA faster.

Log Forwarder Cloud Formation Parameters

Amazon KinesisLogStreamShardCount: The number of shards represents the number of parallel streams in Amazon Kinesis and is proportional to the throughput capacity of the stream. If the number of shards is too low and the volume of audit logs is too high, the Amazon Kinesis service may be overloaded and some audit records sent from the protect function may be lost.

The default value is 10; however, you are advised to test with a production-like load to determine whether this is sufficient.
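As a starting point before load testing, the shard count can be roughly estimated from the expected audit record rate. The sketch below assumes the commonly published per-shard write limits for Kinesis Data Streams of about 1,000 records per second and about 1 MB per second. Verify these against the current AWS documentation. The function name `min_shards` and the sample rates are hypothetical.

```python
import math

# Assumed per-shard write limits; confirm against current AWS quotas.
SHARD_MAX_RECORDS_PER_SEC = 1_000
SHARD_MAX_BYTES_PER_SEC = 1_000_000


def min_shards(records_per_sec, avg_record_bytes):
    """Estimate the minimum shard count from record rate and average record size."""
    by_count = records_per_sec / SHARD_MAX_RECORDS_PER_SEC
    by_bytes = records_per_sec * avg_record_bytes / SHARD_MAX_BYTES_PER_SEC
    # Whichever limit is hit first determines the shard count.
    return max(1, math.ceil(max(by_count, by_bytes)))


print(min_shards(5_000, 100))    # limited by record count
print(min_shards(2_000, 2_000))  # limited by byte throughput
```

The estimate does not account for bursts or uneven partition key distribution, so it is a lower bound rather than a replacement for testing with a production-like load.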

Amazon KinesisLogStreamRetentionPeriodHours: The time for which audit records are retained in the Amazon Kinesis log stream when the log forwarder function is unable to read records from the Kinesis stream or send records to ESA, for example due to a connectivity outage. Amazon Kinesis will retain failed audit records and retry periodically until connectivity with ESA is restored or the retention period expires.

The default value is 24 hours; however, you are advised to review this value to align it with your Recovery Time Objective and Recovery Point Objective SLAs.

Monitoring Log Forwarder Resources

Amazon Kinesis Stream Metrics: Any positive value in the Amazon Kinesis PutRecords throttled records metric indicates that the rate of audit logs from the protect function is too high. The recommended action is to increase the Amazon KinesisLogStreamShardCount or, optionally, increase the AuditLogFlushInterval.

Log Forwarder Function CloudWatch Logs: If the log forwarder function is unable to send logs to ESA, it logs the following message:

[SEVERE] Dropped records: x.

Note

When the error message above occurs, the dropped audit records are preserved in the Amazon Kinesis data stream and retried according to the Amazon Kinesis retry schedule. Records are retried until Amazon KinesisLogStreamRetentionPeriodHours expires.

Protect Function CloudWatch Logs: If the protect function is unable to send logs to Amazon Kinesis, it logs the following message:

[SEVERE] Amazon Kinesis error, retrying in x ms (retry: y/z) ...

Any dropped audit log records are reported with the following log message:

[SEVERE] Failed to send x/y audit logs to Amazon Kinesis.
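The log messages above can be scanned for automatically, for example over log lines exported from CloudWatch. The sketch below assumes that `x` and `y` in the messages are plain integers and that the exact wording matches the examples shown. The regular expressions and the function name `count_lost` are illustrative and should be adjusted to the messages your deployment actually emits.

```python
import re

# Patterns modeled on the example messages; "Amazon " is treated as optional.
DROPPED_RE = re.compile(r"\[SEVERE\] Dropped records: (\d+)")
FAILED_RE = re.compile(
    r"\[SEVERE\] Failed to send (\d+)/(\d+) audit logs to (?:Amazon )?Kinesis"
)


def count_lost(lines):
    """Return (records dropped by the log forwarder, audit logs the protect function failed to send)."""
    dropped = 0
    failed = 0
    for line in lines:
        match = DROPPED_RE.search(line)
        if match:
            dropped += int(match.group(1))
            continue
        match = FAILED_RE.search(line)
        if match:
            failed += int(match.group(1))
    return dropped, failed


sample_lines = [
    "[SEVERE] Dropped records: 12.",
    "[INFO] unrelated message",
    "[SEVERE] Failed to send 3/50 audit logs to Amazon Kinesis.",
]
print(count_lost(sample_lines))
```

Records counted in the first value may still be delivered later, because they remain in the stream until the retention period expires.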
