This is the multi-page printable view of this section. Click here to print.

Protectors

- 1: Configuration Parameters for Protector

- 2: Installing Resilient Package (RP) Agent on Linux or Unix and Windows

- 3: Installing Resilient Package (RP) Proxy on Linux or Unix and Windows

- 4: Configuration Parameters for RPP outside of the ESA

- 5: Installing Log Forwarder on Linux and Windows

- 6: Memory Utilization in Resilient Protectors

- 7: Verification of Signed Protector Build

- 8: Protection Method Reference

- 8.1: Protegrity Tokenization

- 8.1.1: Tokenization Support by Protegrity Products

- 8.1.2: Delimiters

- 8.1.3: Tokenization Properties

- 8.1.3.1: Data Type and Alphabet

- 8.1.3.2: Static Lookup Table (SLT) Tokenizers

- 8.1.3.3: From Left and From Right Settings

- 8.1.3.4: Internal Initialization Vector (IV)

- 8.1.3.5: Minimum and Maximum Input Length

- 8.1.3.5.1: Calculating Token Length

- 8.1.3.6: Length Preserving

- 8.1.3.7: Short Data Tokenization

- 8.1.3.8: Case-Preserving and Position-Preserving Tokenization

- 8.1.3.8.1: Case-Preserving Tokenization

- 8.1.3.8.2: Position-Preserving Tokenization

- 8.1.3.9: External Initialization Vector (EIV)

- 8.1.3.9.1: Tokenization Model with External IV

- 8.1.3.9.2: External IV Tokenization Properties

- 8.1.3.10: Truncating Whitespaces

- 8.1.4: Tokenization Types

- 8.1.4.1: Numeric (0-9)

- 8.1.4.2: Integer (0-9)

- 8.1.4.3: Credit Card

- 8.1.4.4: Alpha (A-Z)

- 8.1.4.5: Upper-Case Alpha (A-Z)

- 8.1.4.6: Alpha-Numeric (0-9, a-z, A-Z)

- 8.1.4.7: Upper-Case Alpha-Numeric (0-9, A-Z)

- 8.1.4.8: Lower ASCII

- 8.1.4.9: Datetime (YYYY-MM-DD HH:MM:SS)

- 8.1.4.10: Decimal

- 8.1.4.11: Unicode Gen2

- 8.1.4.12: Binary

- 8.1.4.13: Email

- 8.1.4.14: Printable

- 8.1.4.15: Date (YYYY-MM-DD, DD/MM/YYYY, MM.DD.YYYY)

- 8.1.4.16: Unicode

- 8.1.4.17: Unicode Base64

- 8.1.5:

- 8.1.6:

- 8.2: Protegrity Format Preserving Encryption

- 8.2.1: FPE Properties

- 8.2.2: Code Points

- 8.2.3: Tweak Input

- 8.2.4: Left and Right Settings

- 8.2.5: Handling Special Numeric Credit Card Data

- 8.3: Protegrity Encryption

- 8.3.1: Encryption Algorithms

- 8.3.1.1: AES-128 and AES-256

- 8.3.1.2: CUSP

- 8.3.1.3: 3DES

- 8.3.2: Encryption Properties - IV, CRC, Key ID

- 8.3.3: Data Length and Padding in Encryption

- 8.3.4:

- 8.3.5:

- 8.3.6:

- 8.4: No Encryption

- 8.5: Monitoring

- 8.6: Masking

- 8.7: Hashing

- 8.8: ASCII Character Codes

- 8.9: Examples of Column Sized Calculation for AES and 3DES Encryption

- 8.10: Empty String Handling by Protectors

- 8.11: Hashing Functions and Examples

- 8.11.1: Hash Data column size

- 8.11.2: Using Hashing Triggers and View

- 8.12: Codebook Re-shuffling in the Data Security Gateway

- 8.13:

- 8.14:

- 8.15:

- 8.16:

- 9: Application Protector

- 9.1: Application Protector Java

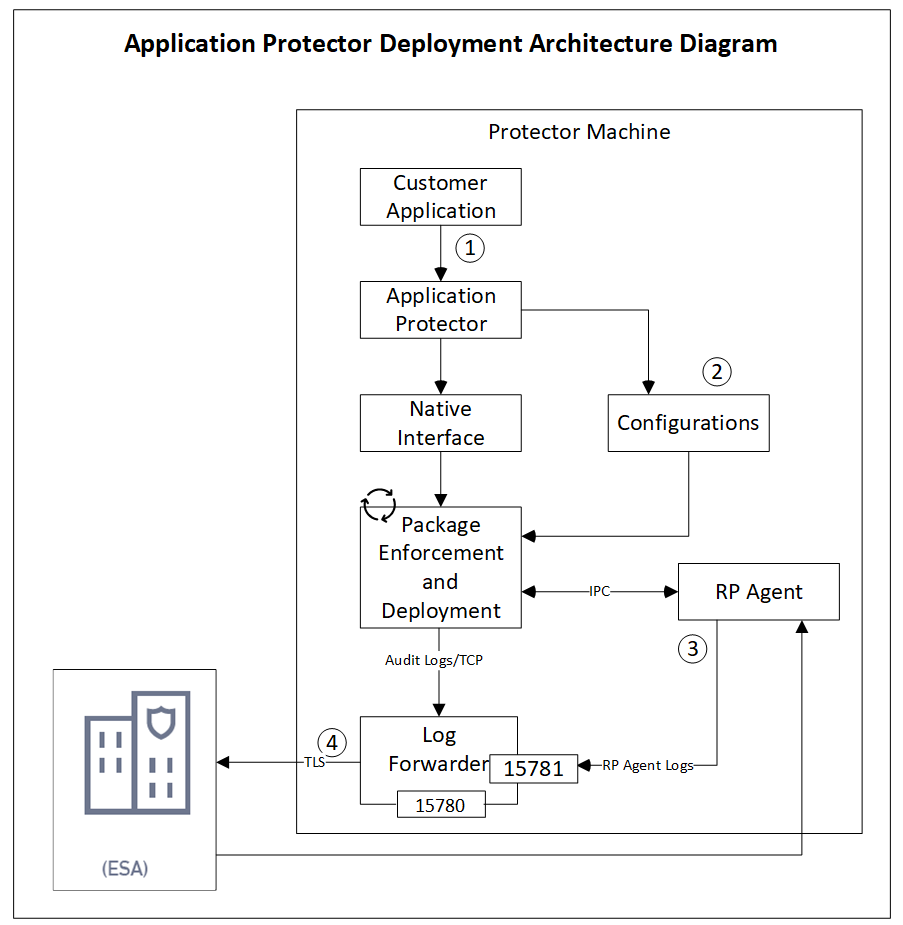

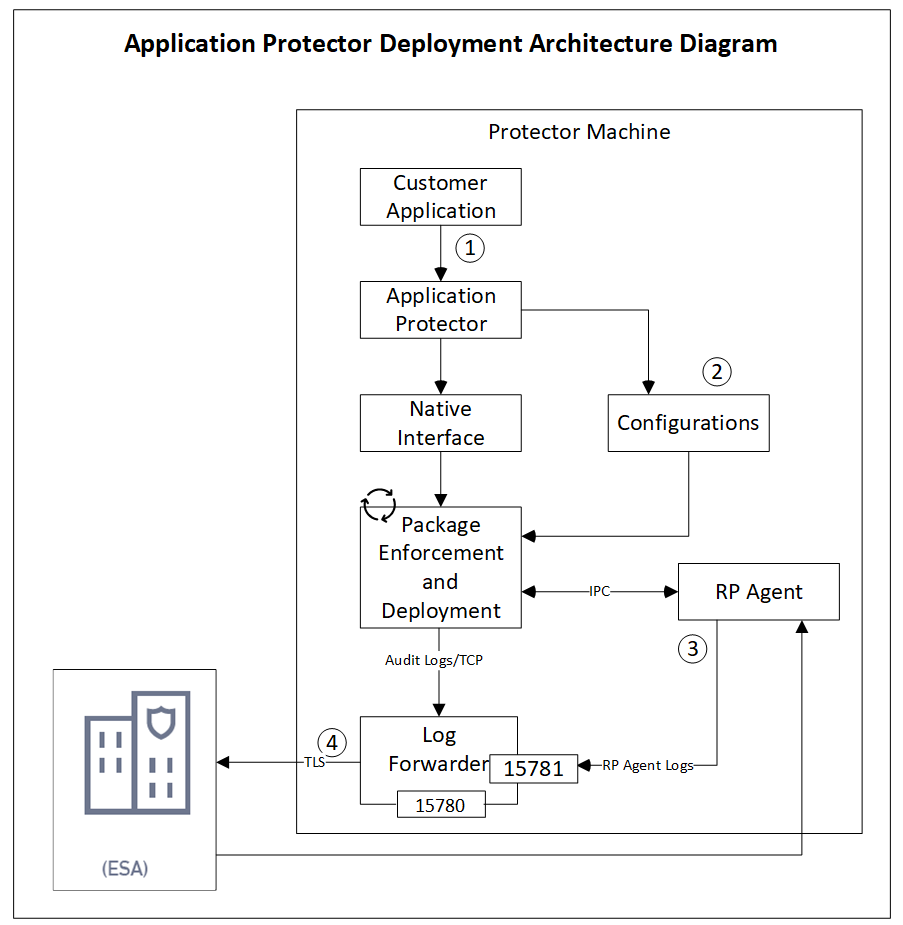

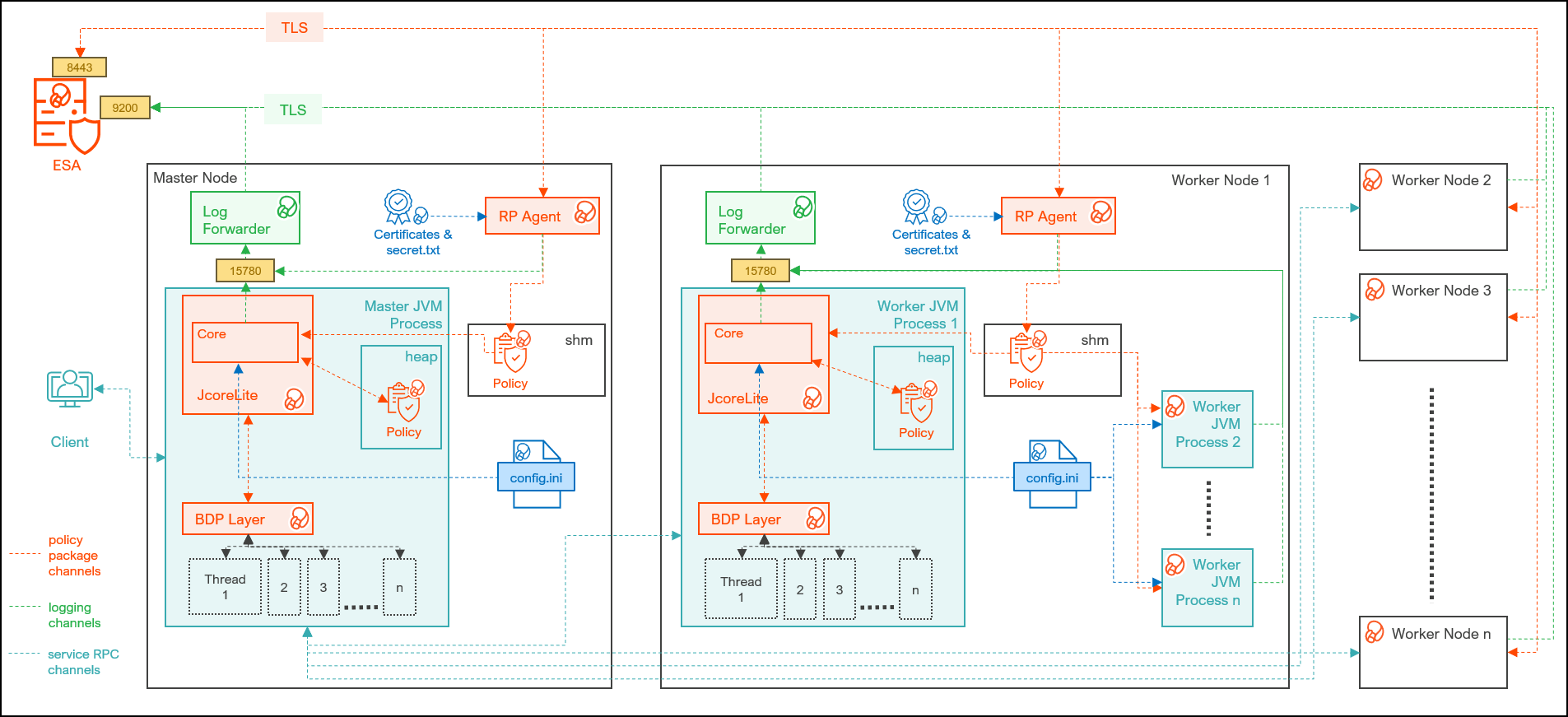

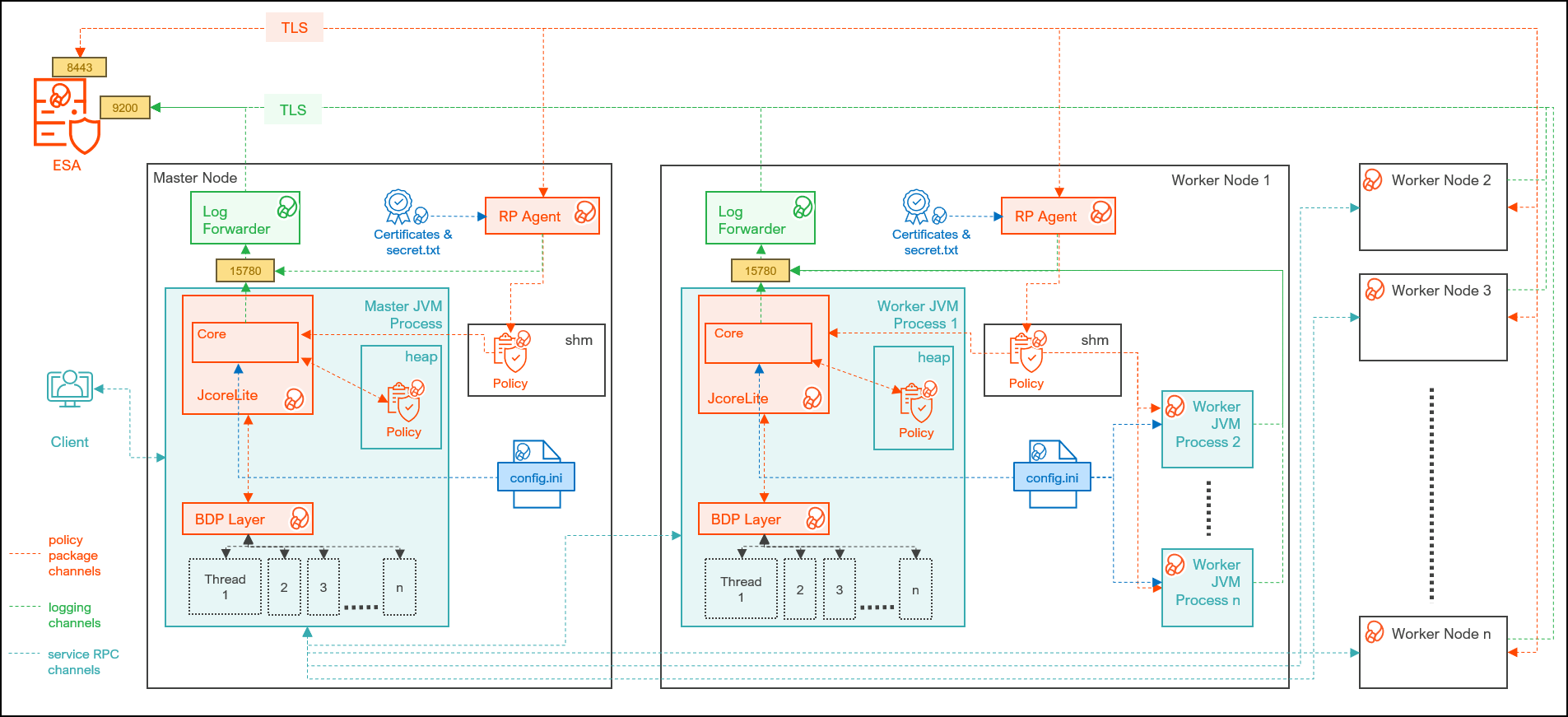

- 9.1.1: Understanding the Architecture

- 9.1.2: System Requirements

- 9.1.3: Preparing the Environment

- 9.1.4: Installing the AP Java Protector

- 9.1.5: Configuring the Protector

- 9.1.6: Application Protector Java APIs

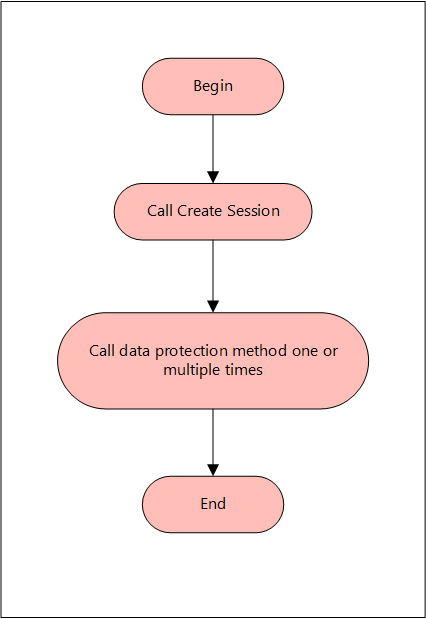

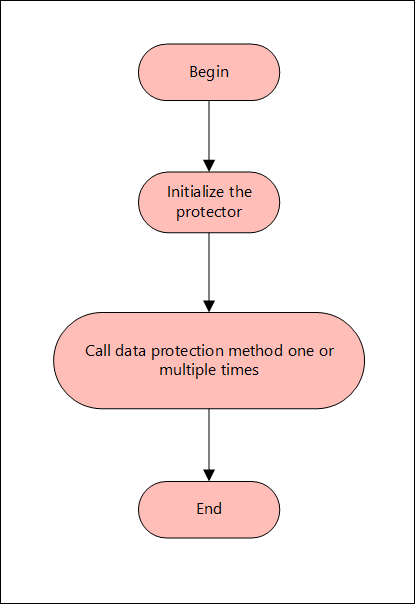

- 9.1.6.1: Using the AP Java APIs

- 9.1.7: Additional Topics

- 9.2: Application Protector Python

- 9.2.1: Understanding the Architecture

- 9.2.2: System Requirements

- 9.2.3: Preparing the Environment

- 9.2.4: Installing the AP Python Protector

- 9.2.5: Configuring the Protector

- 9.2.6: Application Protector Python APIs

- 9.2.6.1: Using the AP Python APIs

- 9.2.7: Additional Topics

- 9.2.7.1: Uninstalling the Application Protector

- 9.2.7.2: Memory Usage of the AP Python

- 9.2.7.3: Setting Up AP Python on Linux in a Development Environment

- 9.2.7.4: DevOps Approach for Application Protector Python

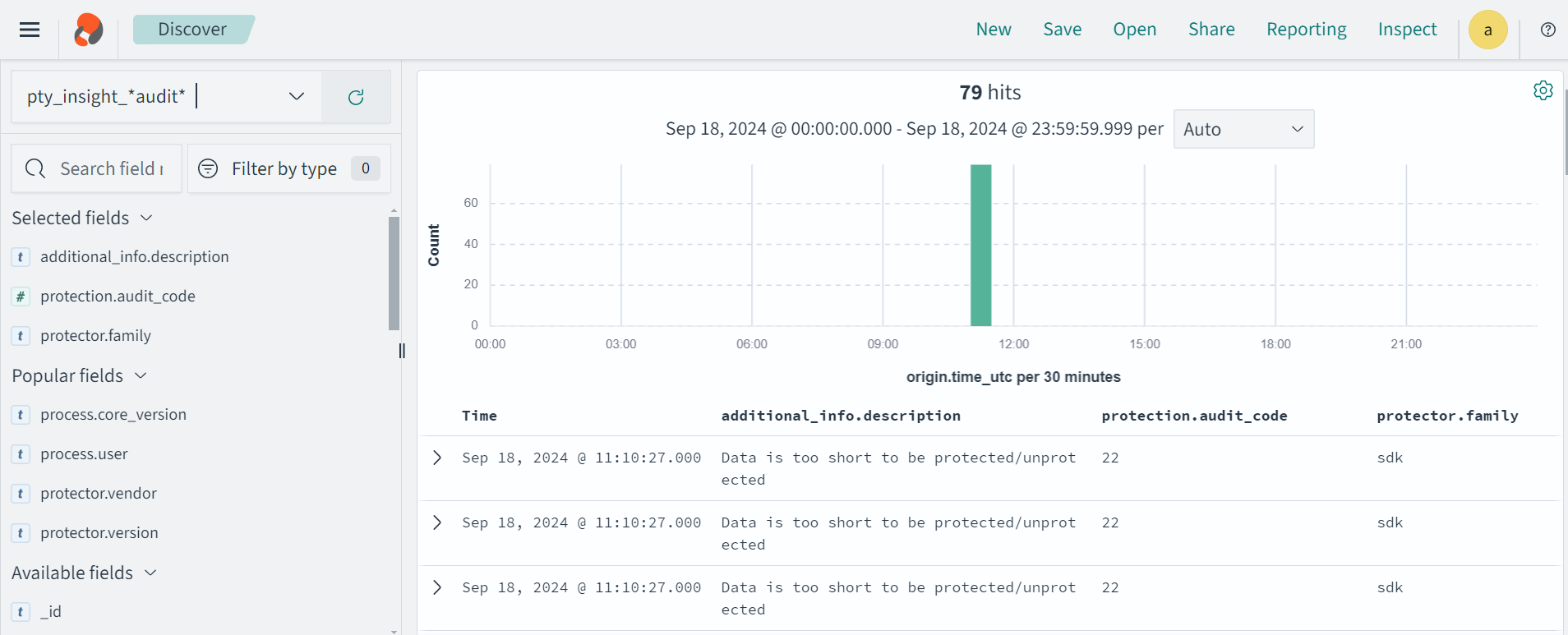

- 9.2.7.5: Application Protector API Return Codes

- 9.2.7.6: Config.ini file for Application Protector

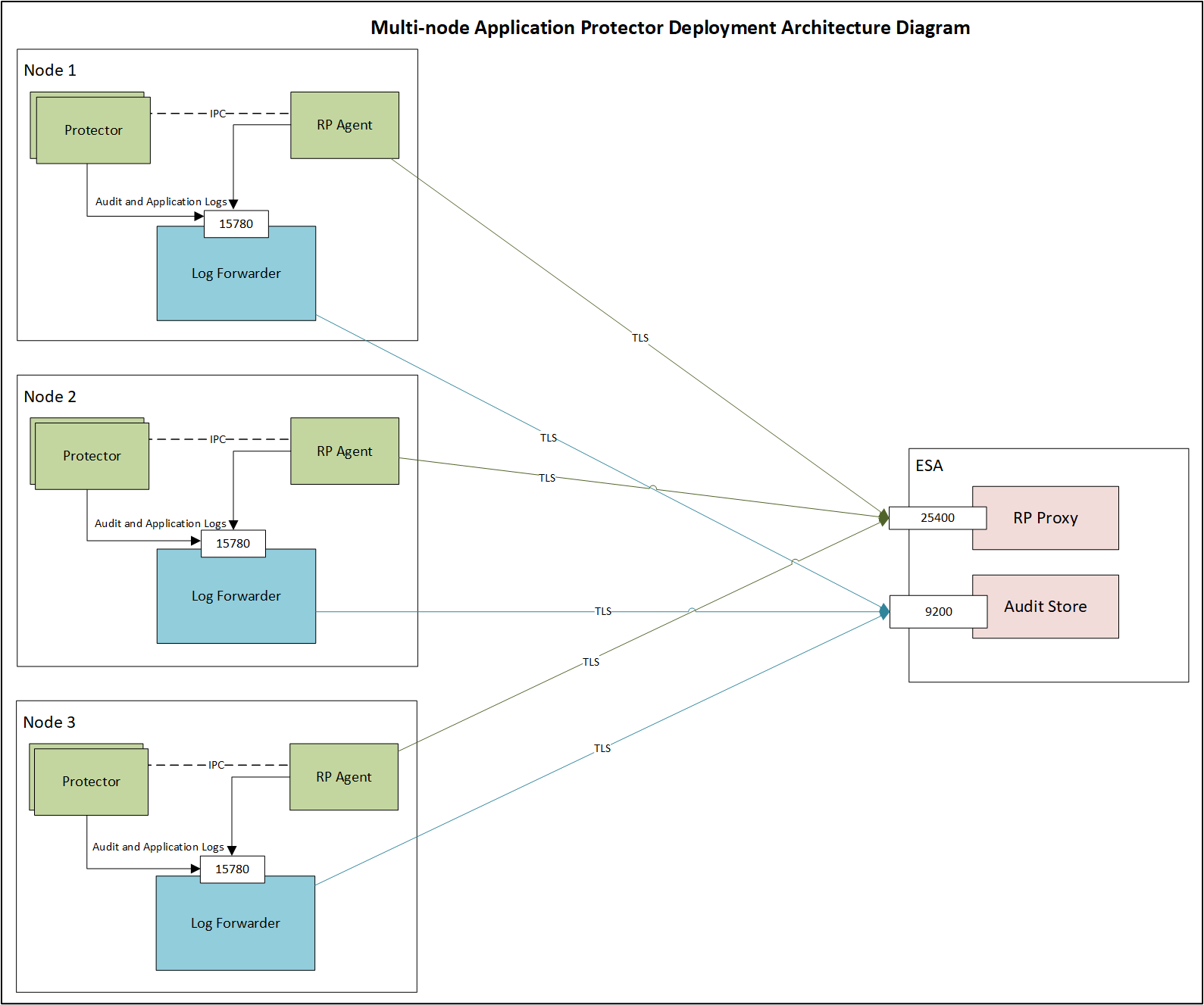

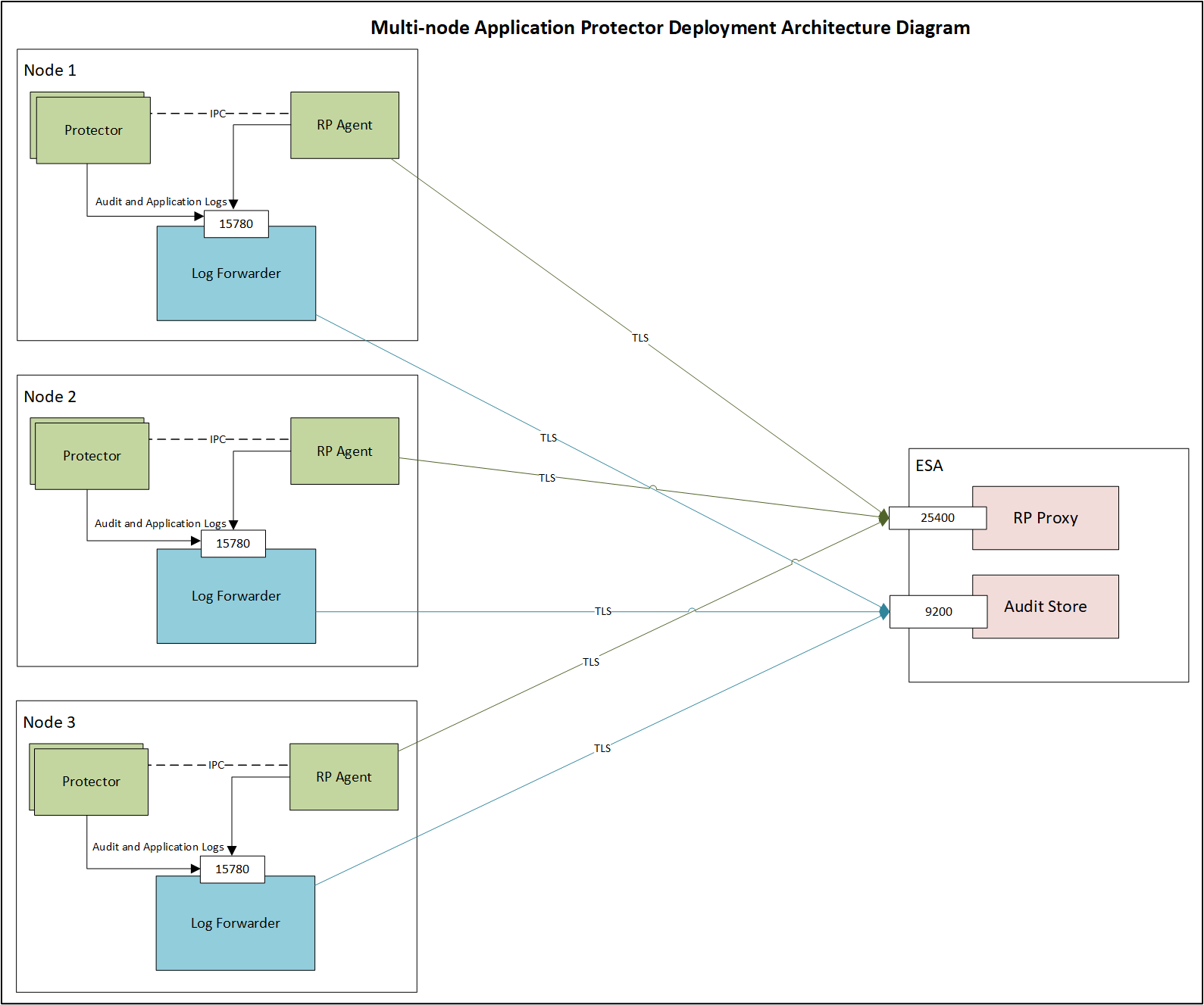

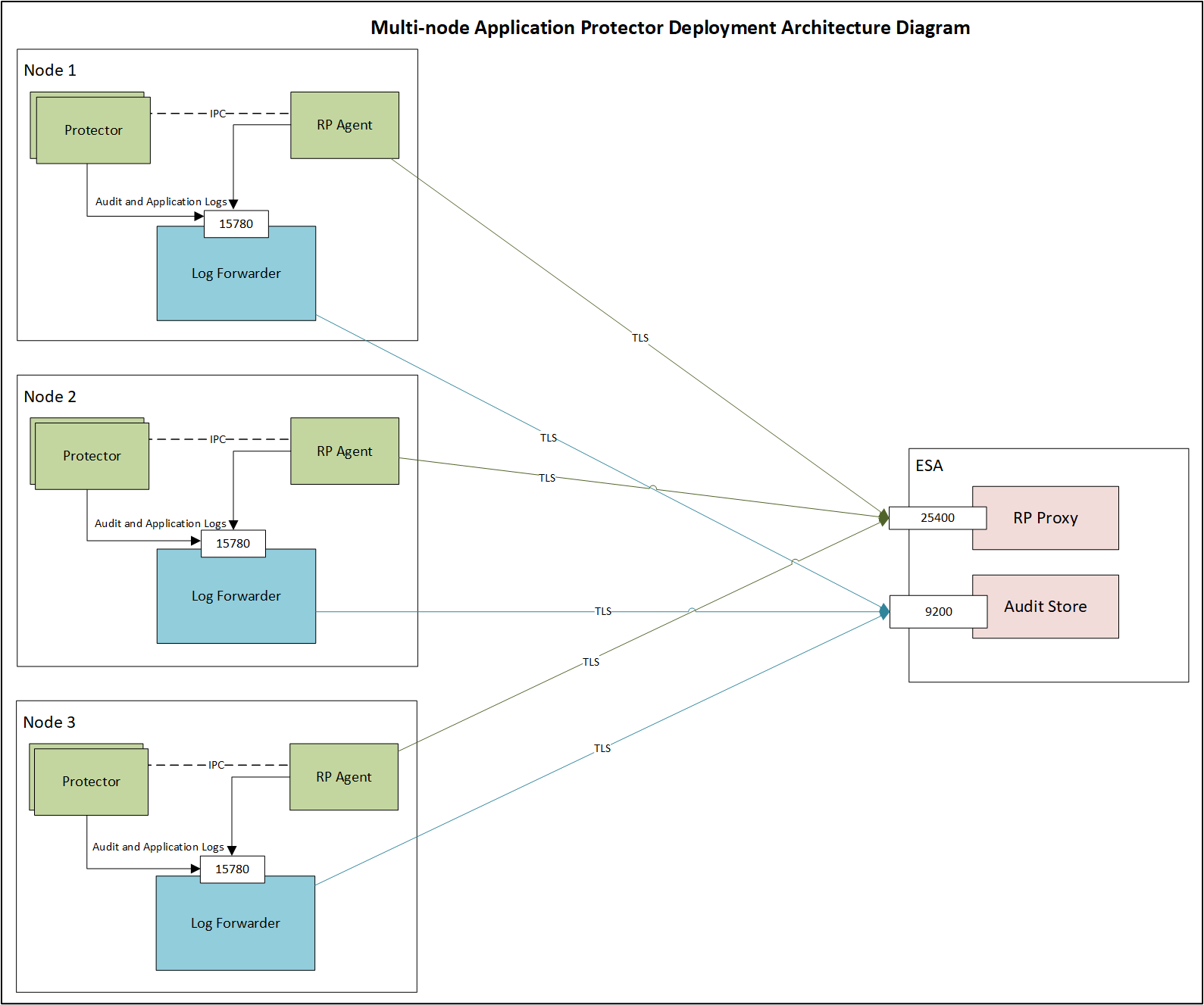

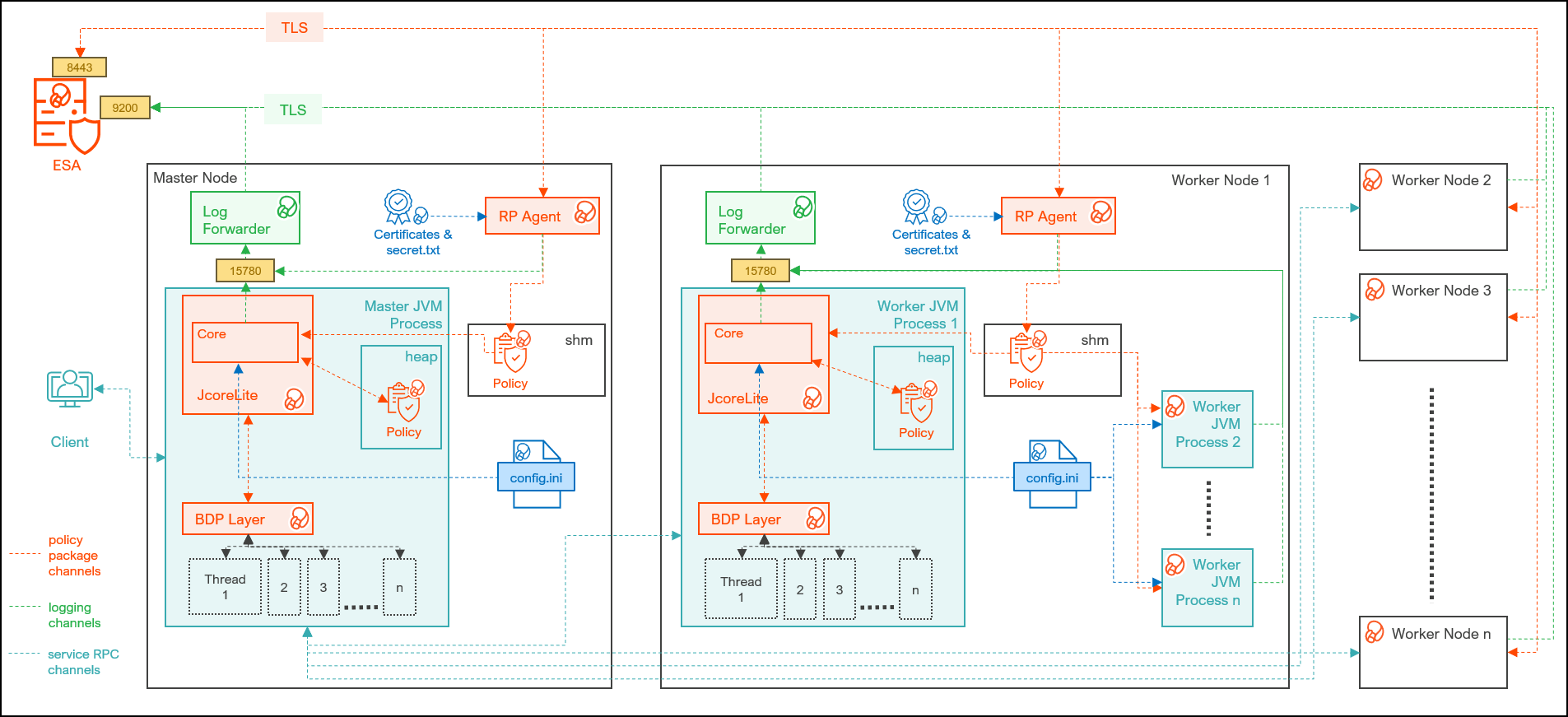

- 9.2.7.7: Multi-node Application Protector Architecture

- 9.3: Application Protector .Net

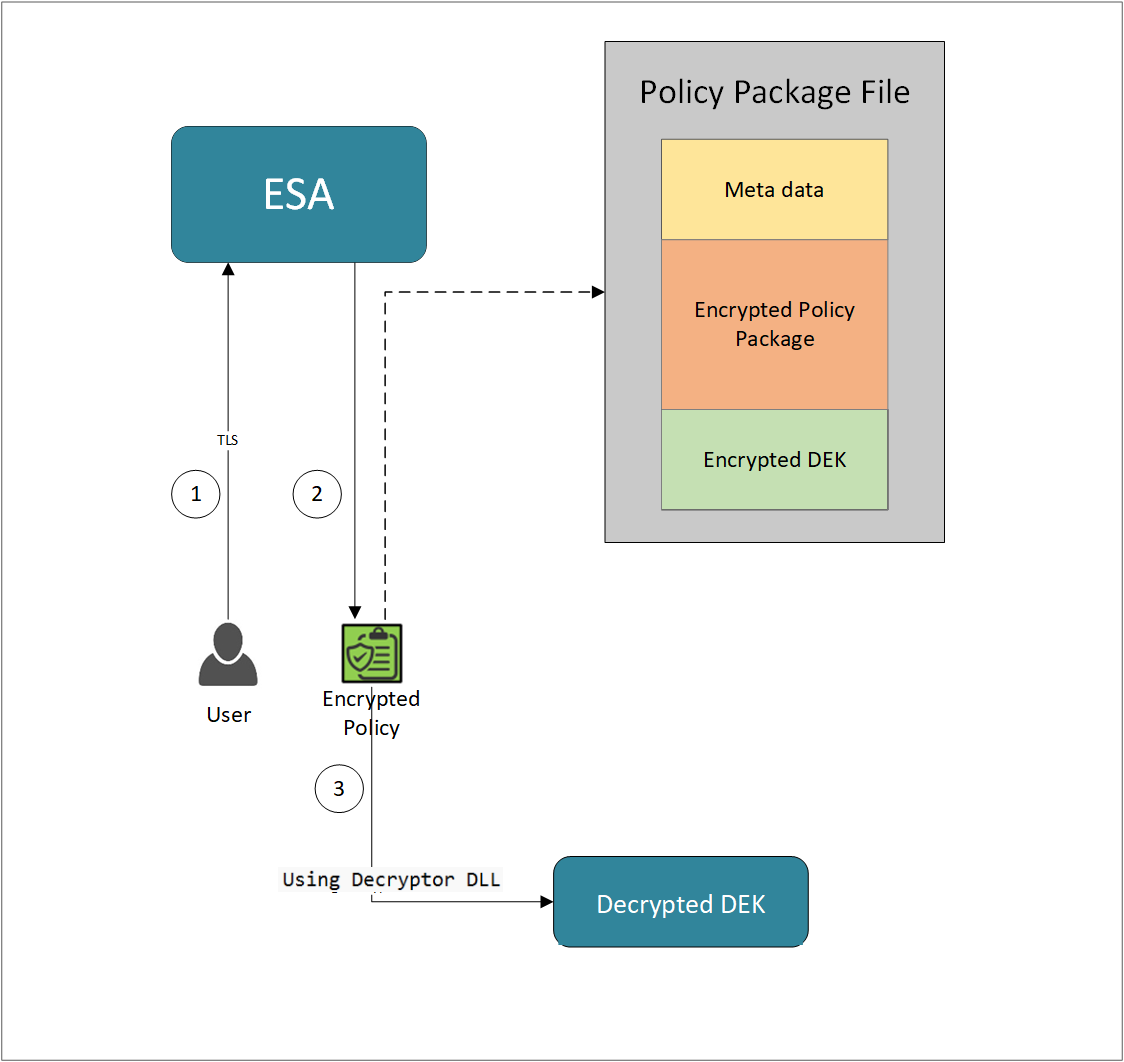

- 9.3.1: Architecture and Workflow

- 9.3.2: System Requirements

- 9.3.3: Preparing the Environment

- 9.3.4: Installing the AP .Net Protector

- 9.3.5: Configuring the Protector

- 9.3.6: Using the AP .Net APIs

- 9.3.6.1: Application Protector .Net APIs

- 9.3.7: Additional Topics

- 9.3.7.1: Uninstalling the Application Protector

- 9.3.7.2: Memory Usage of the AP .Net

- 9.3.7.3: Setting Up AP .Net Mock on Windows in a Development Environment

- 9.3.7.4: DevOps Approach for Application Protector

- 9.3.7.5: Application Protector API Return Codes

- 9.3.7.6: Config.ini file for Application Protector

- 9.3.7.7: Multi-node Application Protector Architecture

- 10: Big Data Protector

- 10.1: Amazon EMR

- 10.1.1: Understanding the architecture

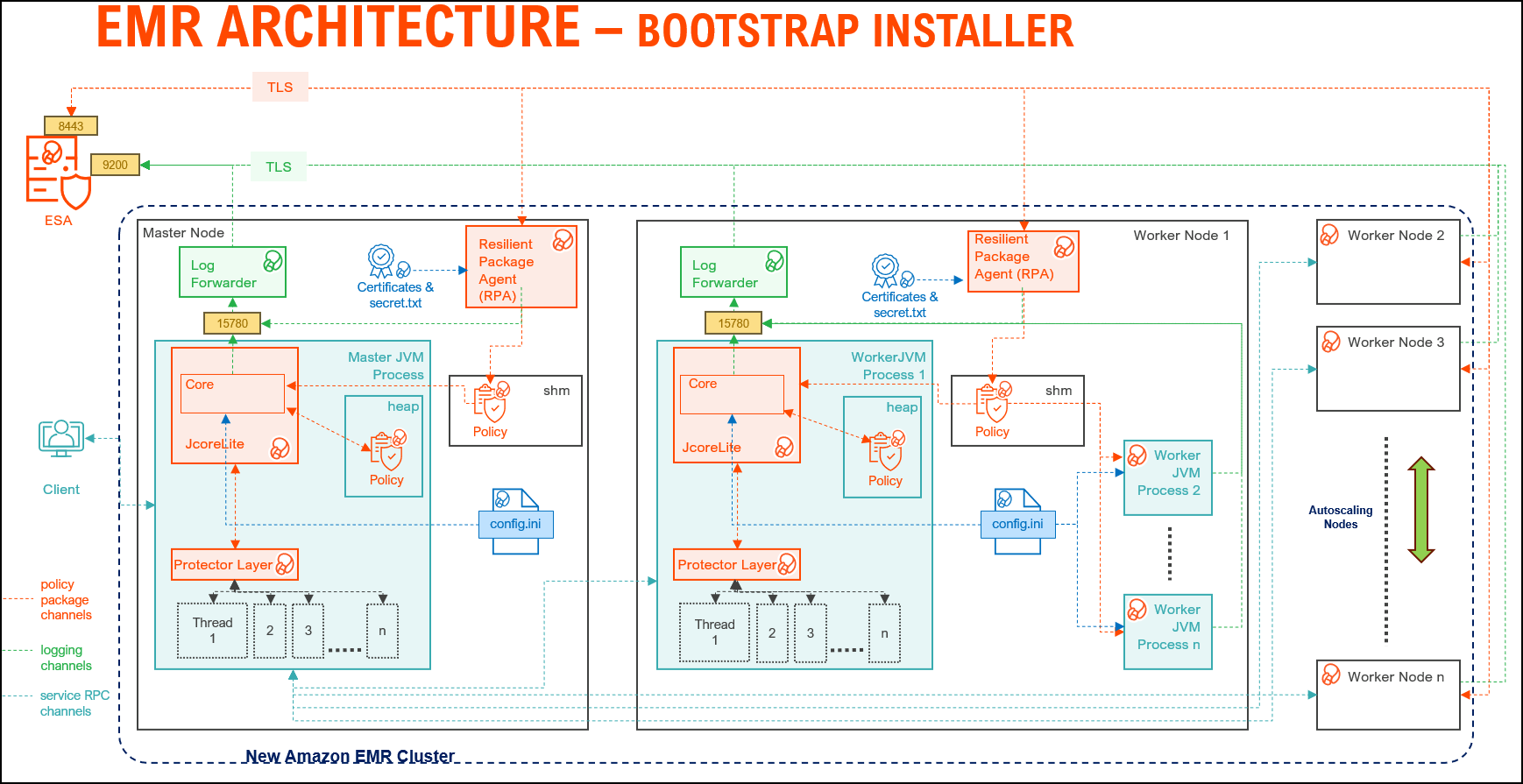

- 10.1.1.1: Bootstrap installer architecture

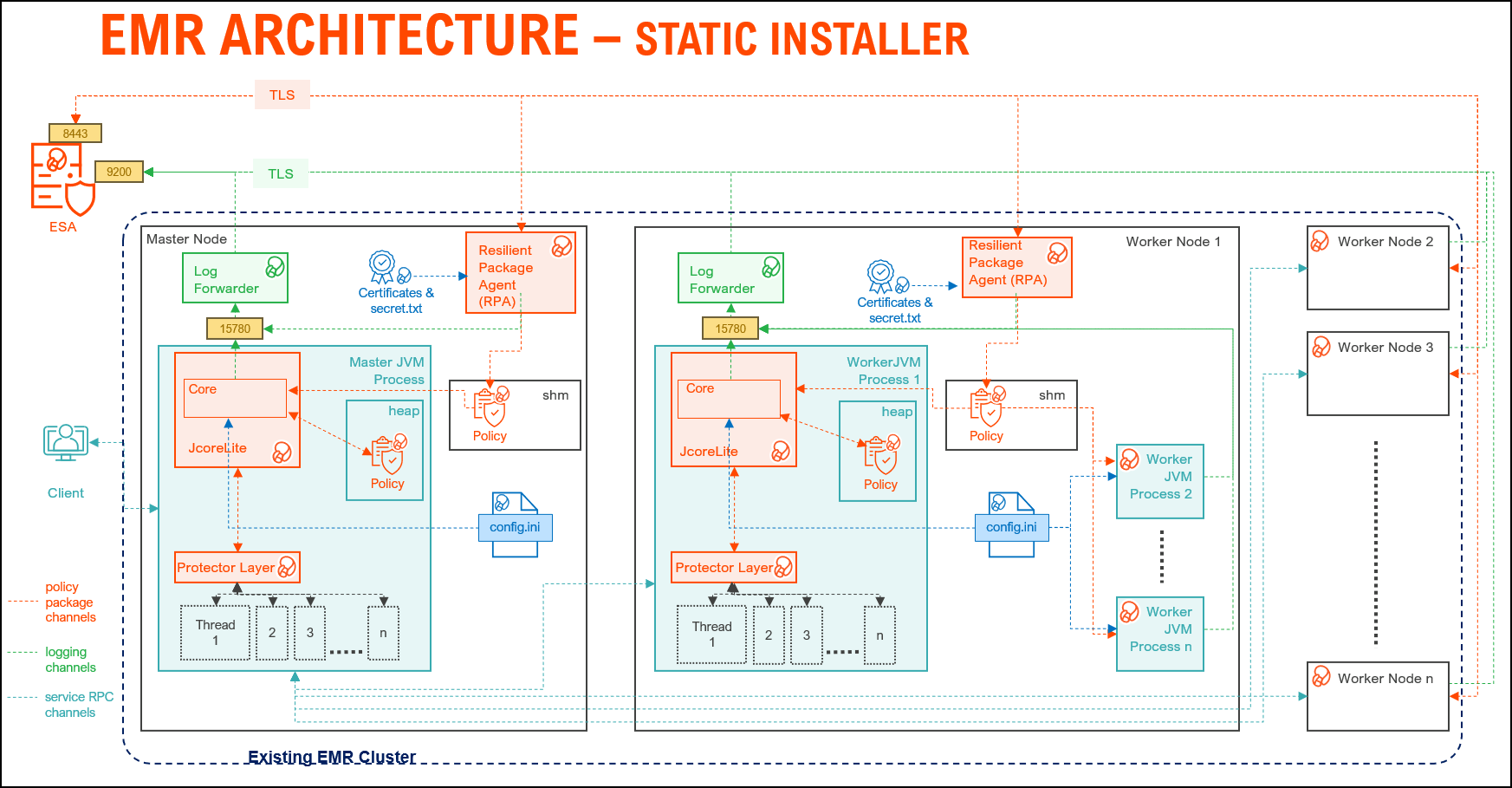

- 10.1.1.2: Static installer architecture

- 10.1.2: Preparing the environment

- 10.1.2.1: Setting up for the Bootstrap Installer

- 10.1.2.1.1: Verifying the prerequisites

- 10.1.2.1.2: Extracting the Big Data Protector Package

- 10.1.2.1.3: Executing the Configurator Script

- 10.1.2.2: Setting up for the Static Installer

- 10.1.2.2.1: Verifying the prerequisites for Static Installer

- 10.1.2.2.2: Extracting the Installation Package

- 10.1.2.2.3: Updating the BDP.Config File

- 10.1.3: Installing the protector

- 10.1.3.1: Using the Bootstrap Installer

- 10.1.3.1.1: Creating a Cluster

- 10.1.3.1.2: Managing the Cluster Nodes

- 10.1.3.1.3: Verifying the Parameters

- 10.1.3.2: Using the Static Installer

- 10.1.3.2.1: Installing the Protector on all the Nodes

- 10.1.3.2.2: Installing the Protector on Specific Nodes

- 10.1.3.2.3: Verifying the Parameters

- 10.1.4: Configuring the protector

- 10.1.5: Working with Cluster Utilities

- 10.1.5.1: RPAgent Control Script

- 10.1.5.2: Log Forwarder Control Script

- 10.1.5.3: Sync Config.ini

- 10.1.5.4: Sync Log Forwarder Configuration

- 10.1.5.5: Sync RPAgent Configuration

- 10.1.6: Uninstalling the protector

- 10.1.6.1: Uninstalling the Big Data Protector when Bootstrap is used

- 10.1.6.2: Uninstalling the Big Data Protector when Static installer is used

- 10.1.6.2.1: From all the Nodes

- 10.1.6.2.2: From Specific Nodes

- 10.2: AWS Databricks

- 10.2.1: Understanding the architecture

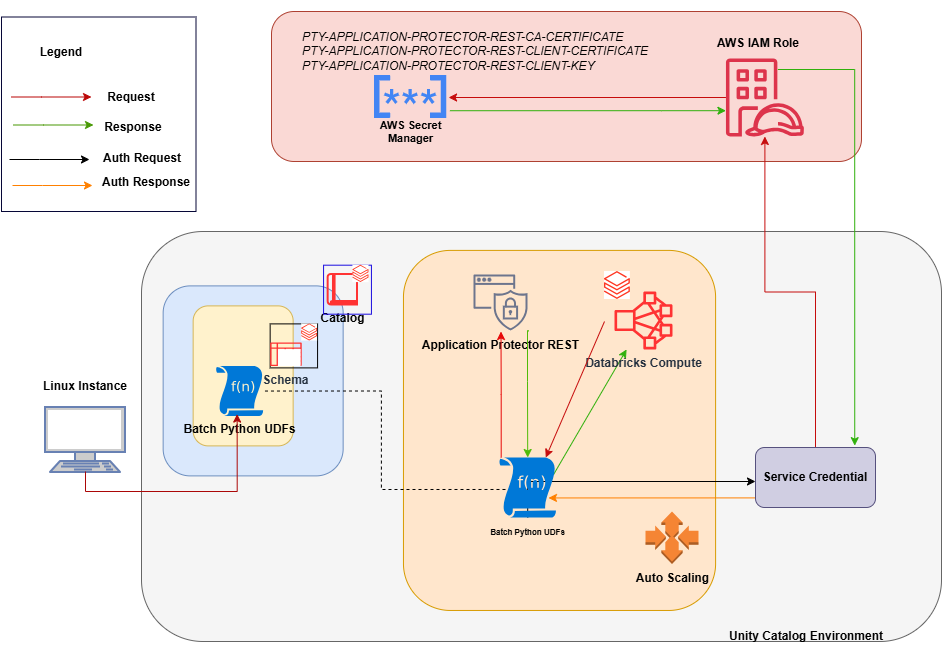

- 10.2.1.1: For the Application Protector REST Approach

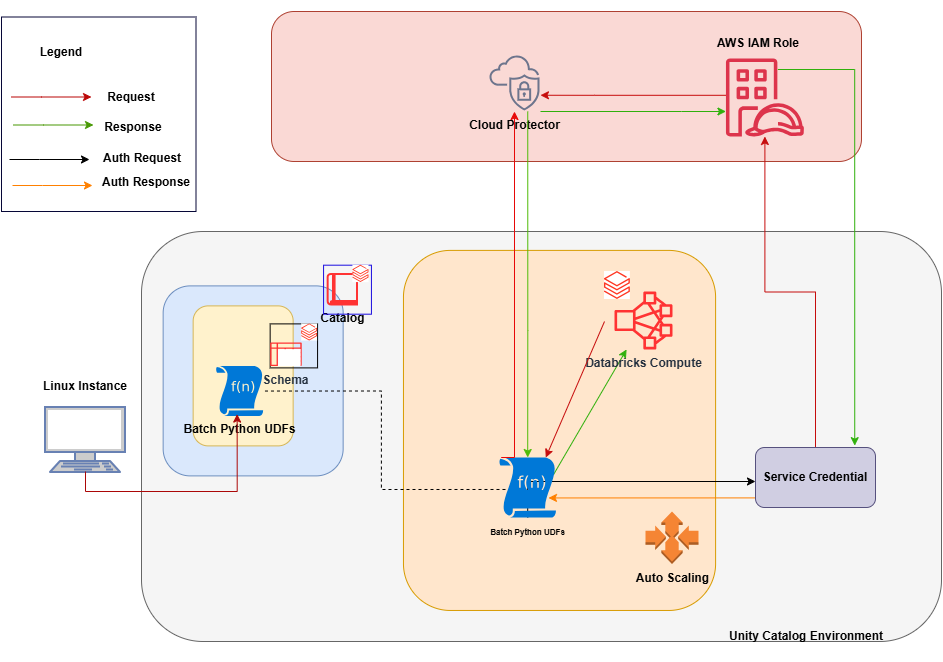

- 10.2.1.2: For the Cloud Protector Approach

- 10.2.2: System Requirements

- 10.2.2.1: For the Application Protector REST Approach

- 10.2.2.2: For the Cloud Protector Approach

- 10.2.3: Preparing the Environment

- 10.2.3.1: Extracting the Installation Package

- 10.2.3.2: Working with the Configurator Script

- 10.2.3.3: Retrieving the IP Address

- 10.2.3.4: Uploading the Secrets

- 10.2.4: Installing the Protector

- 10.2.4.1: Creating the User Defined Functions

- 10.2.5: Configuring the Protector

- 10.2.5.1: Editing the Cluster Configuration

- 10.2.6: Uninstalling the Protector

- 10.2.6.1: Dropping the User Defined Functions

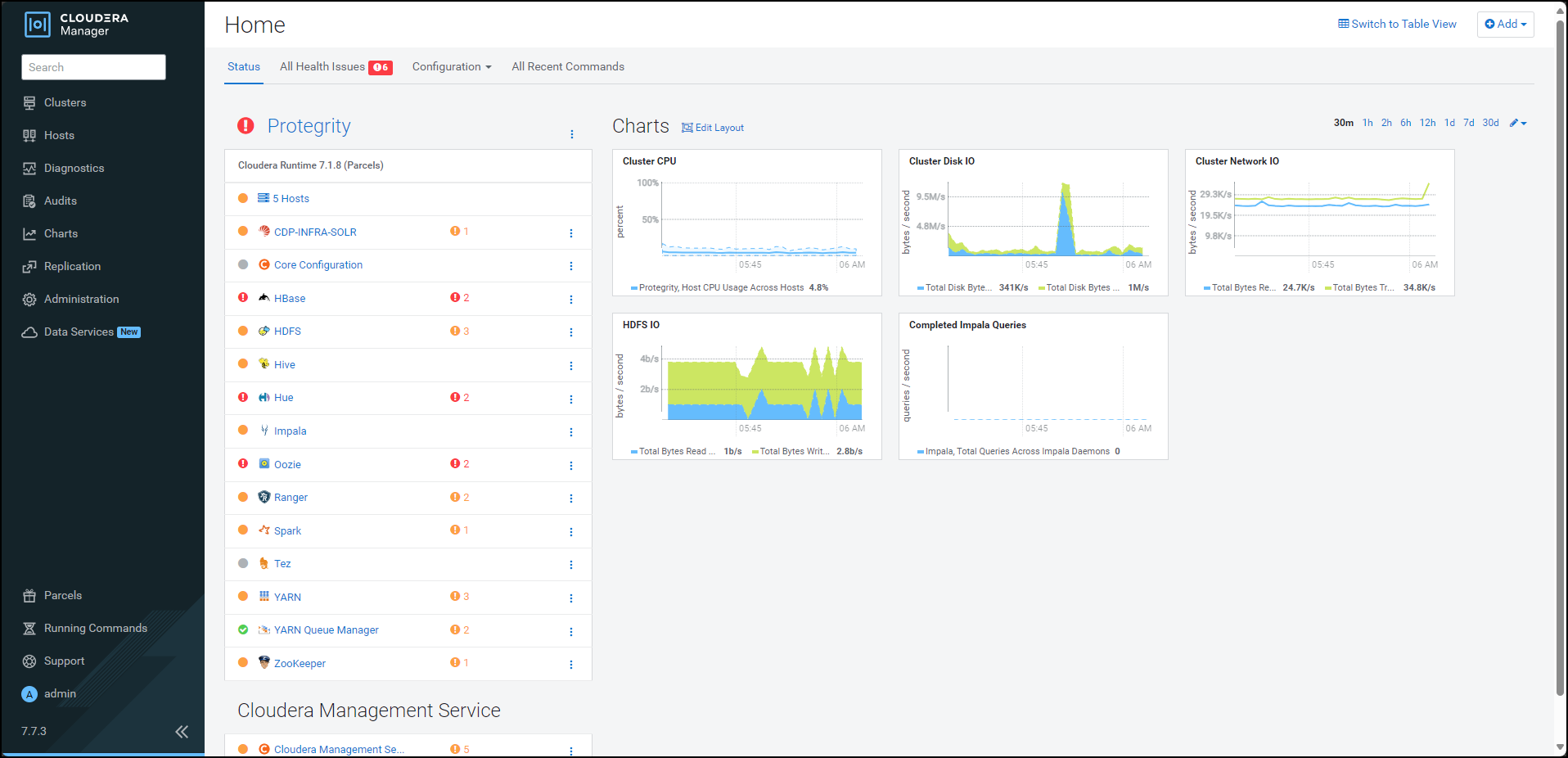

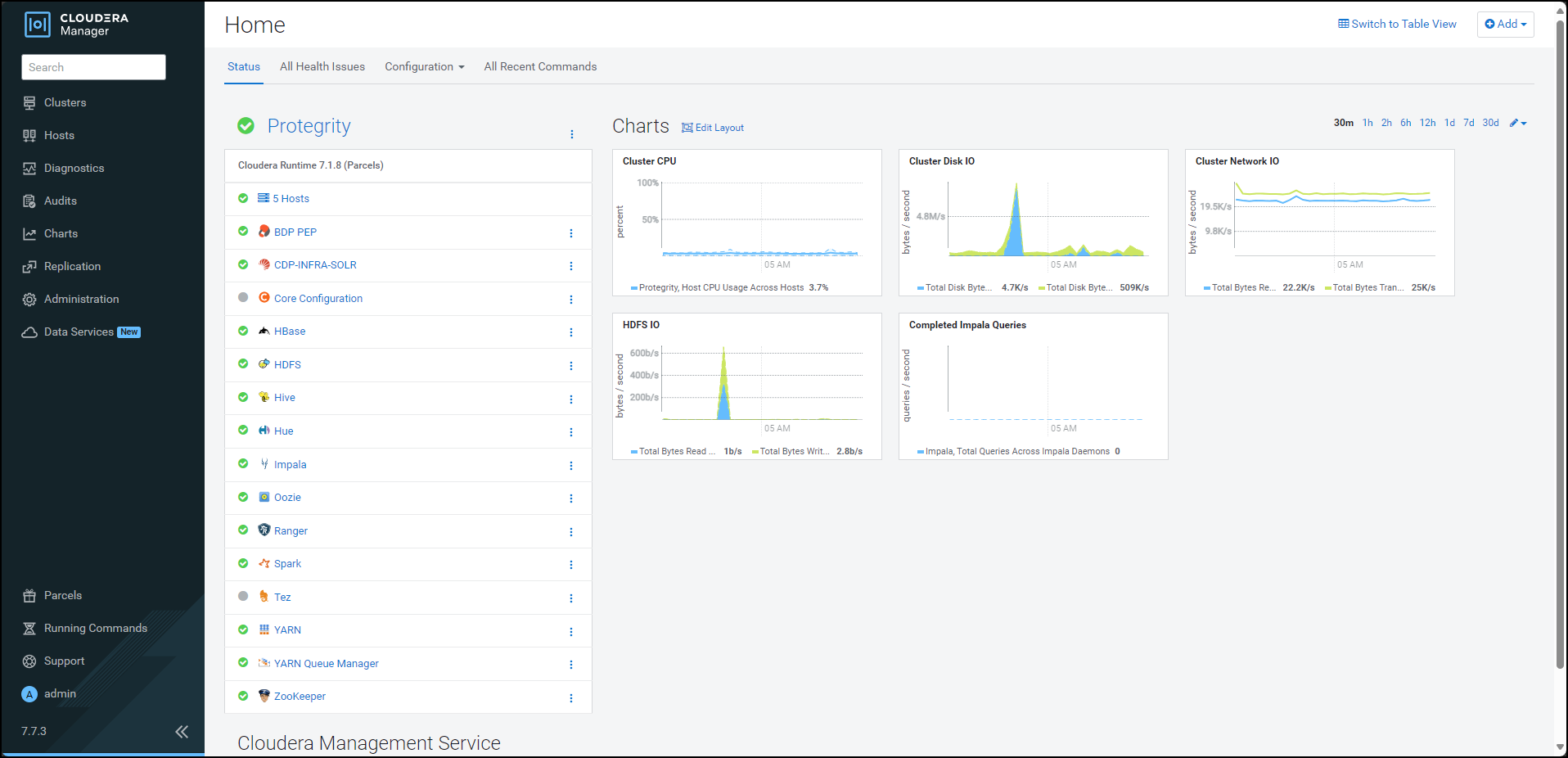

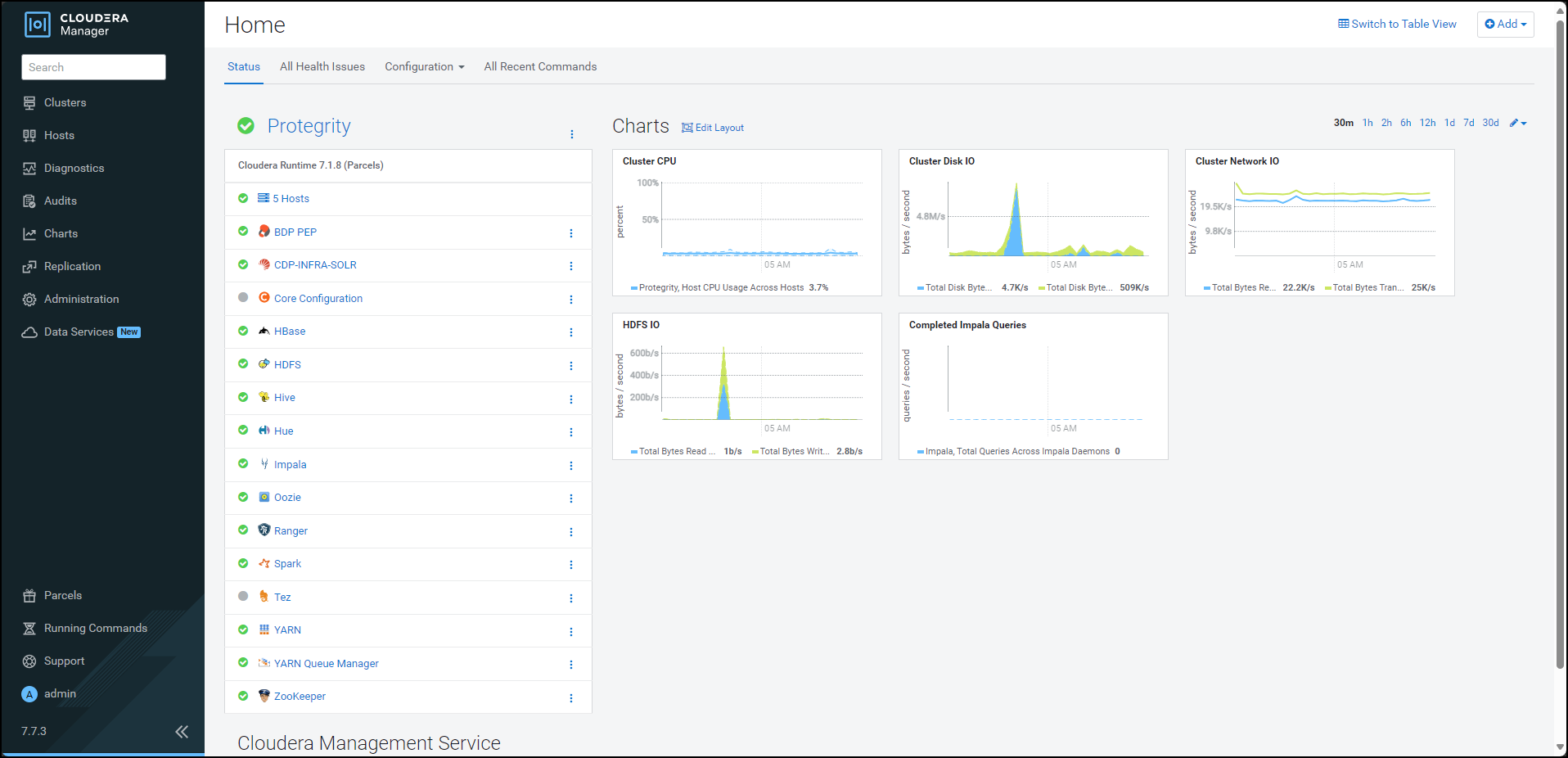

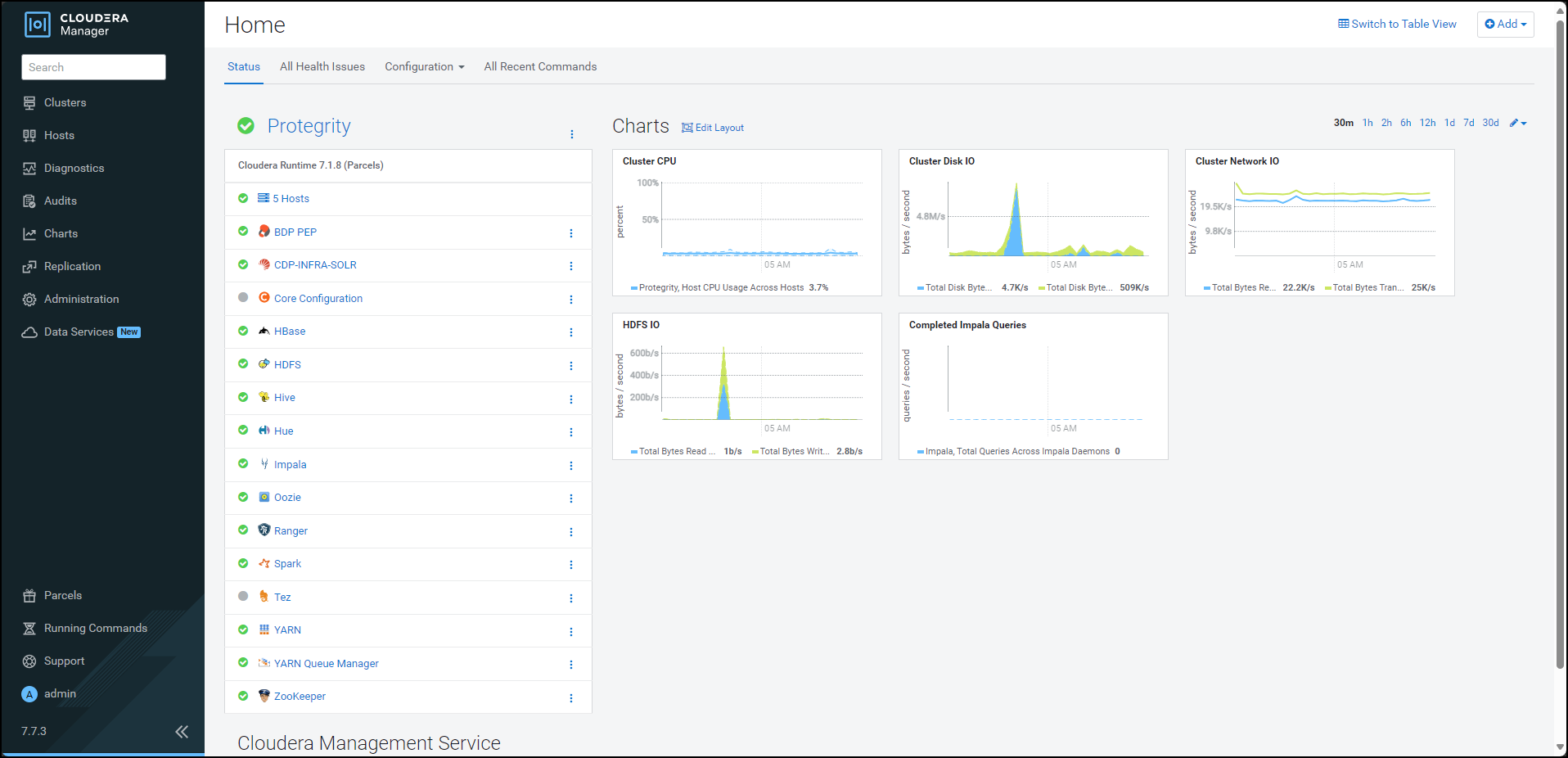

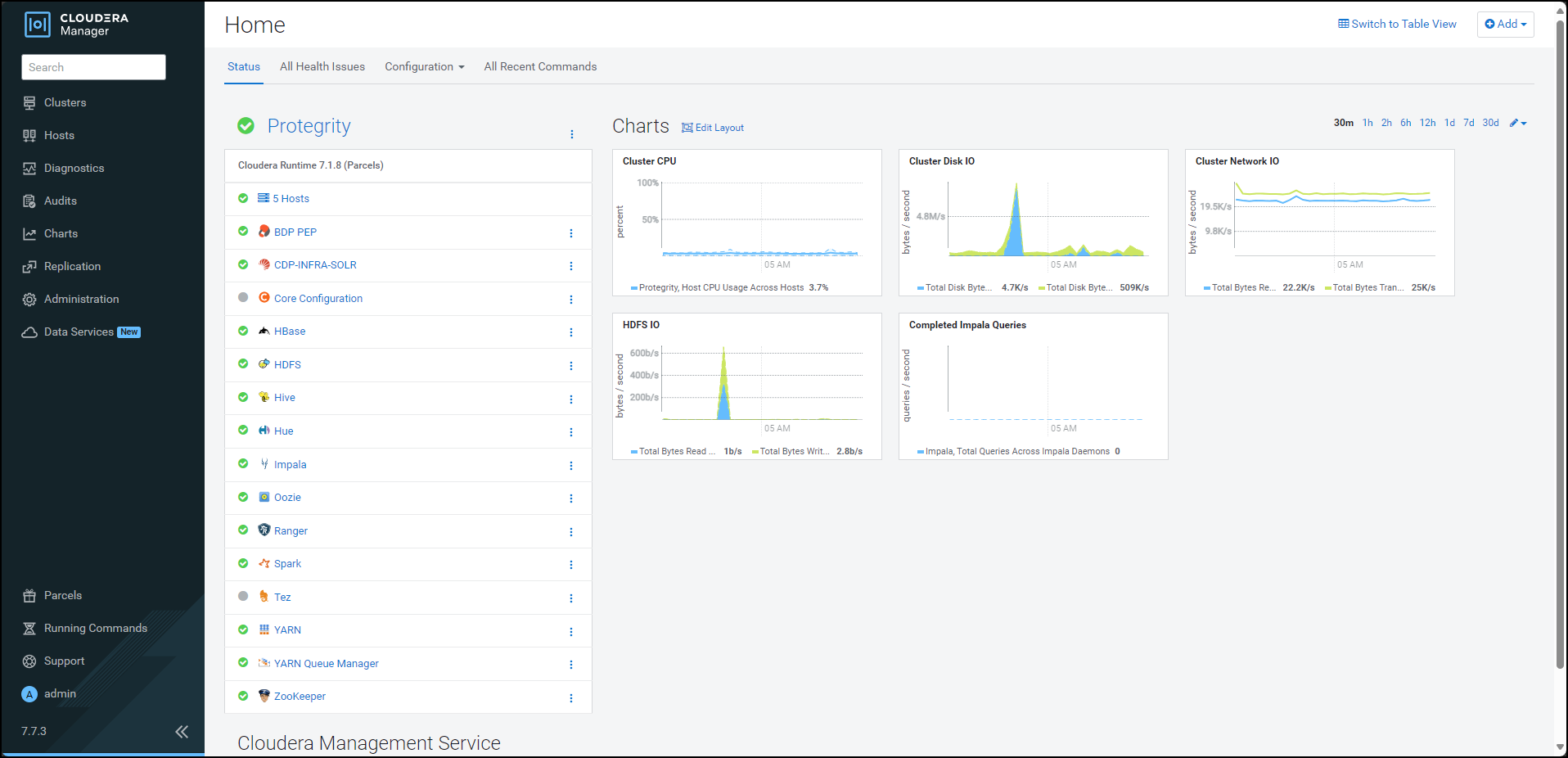

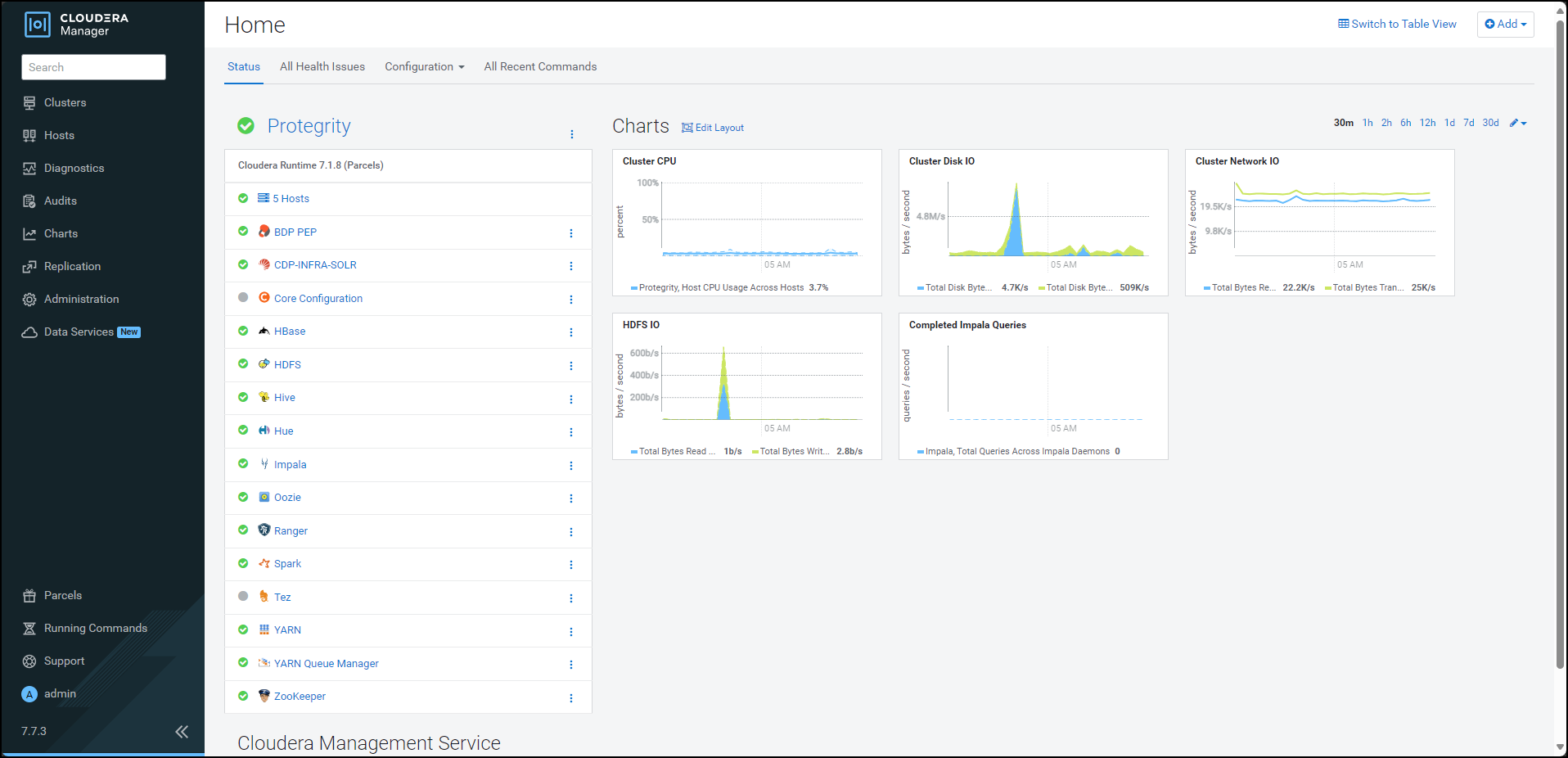

- 10.3: CDP-PVC-Base

- 10.3.1: Understanding the architecture

- 10.3.2: System Requirements

- 10.3.3: Preparing the Environment

- 10.3.3.1: Extracting the installation package

- 10.3.3.2: Running the configurator script

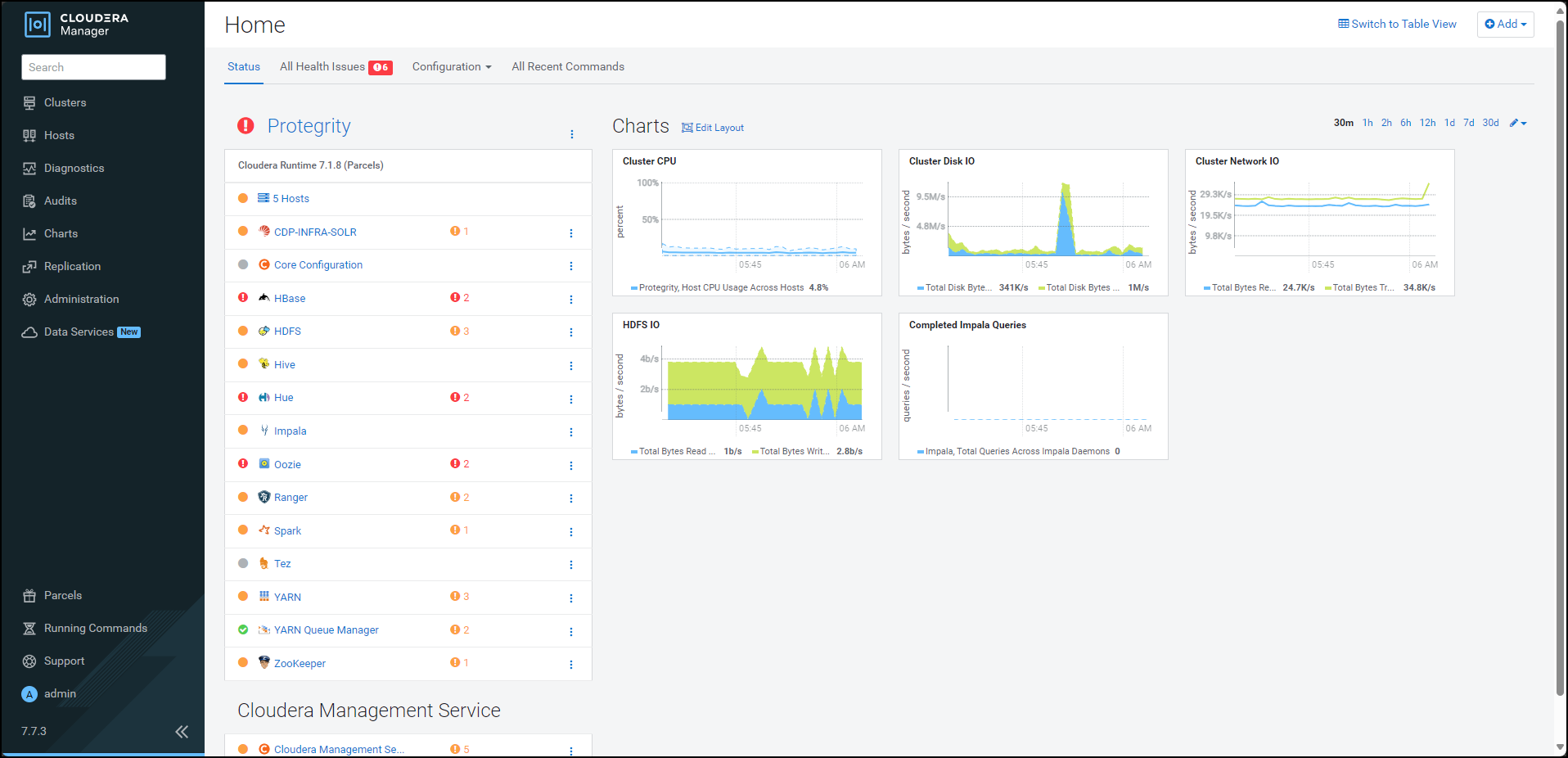

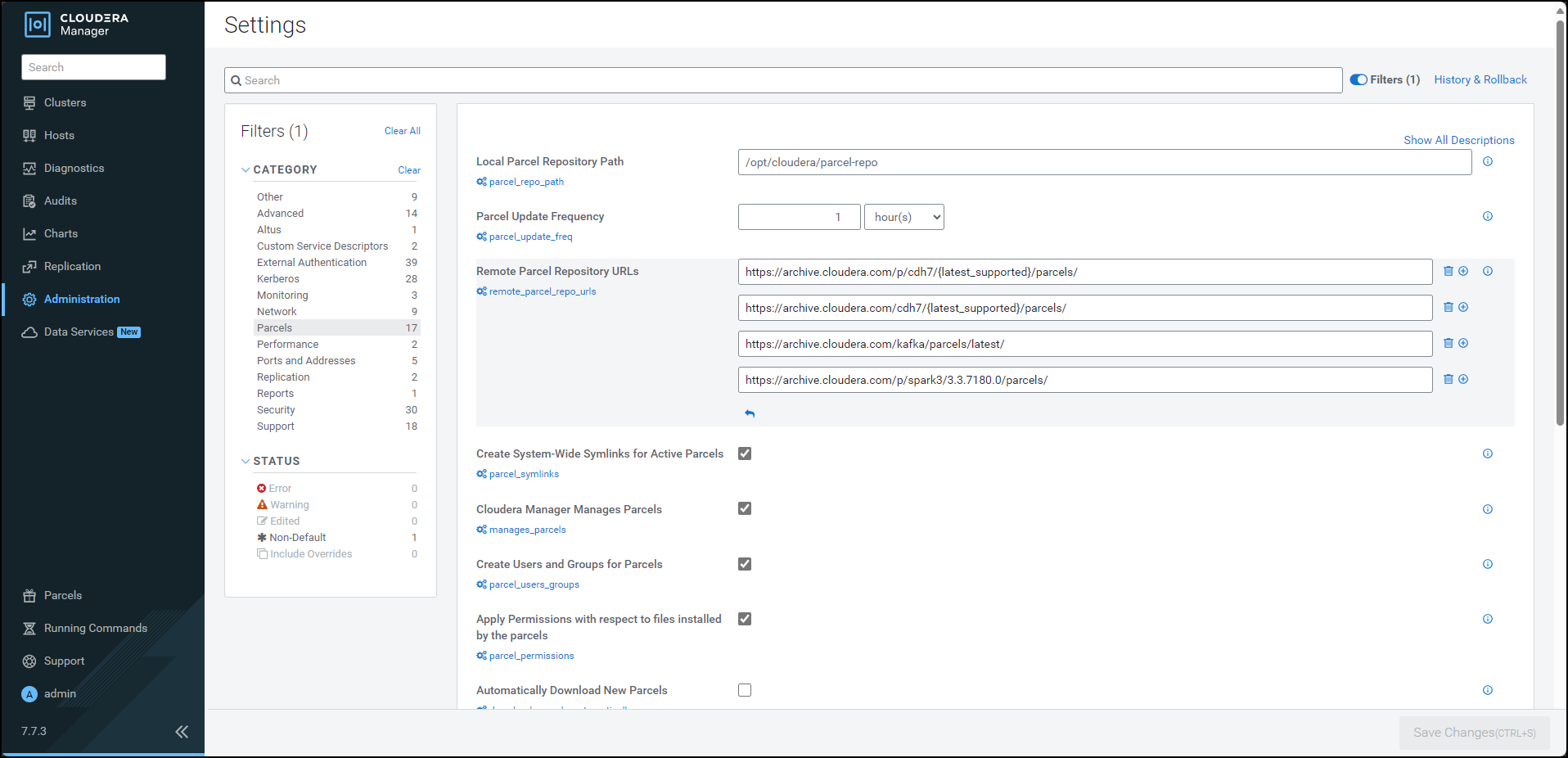

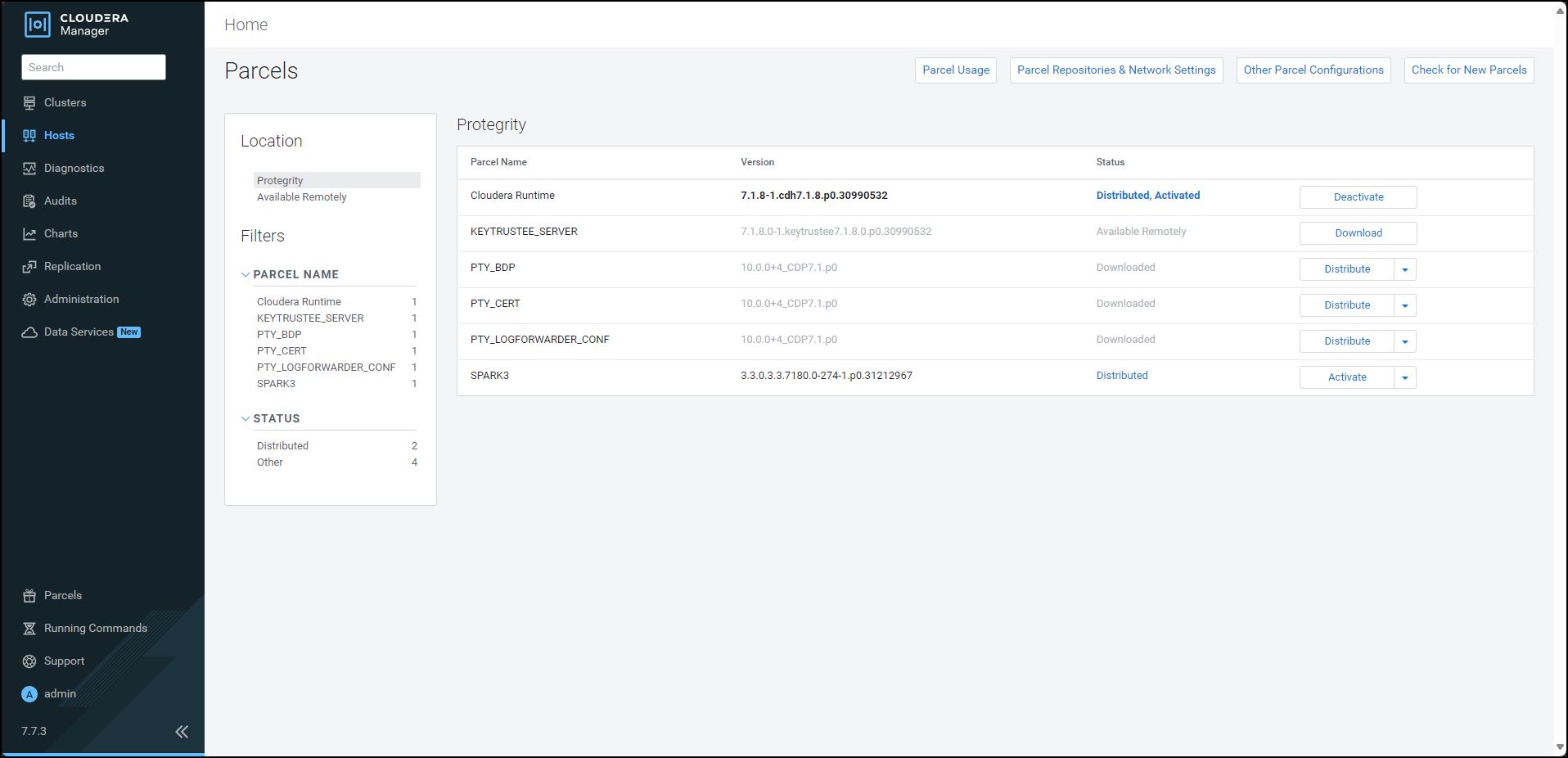

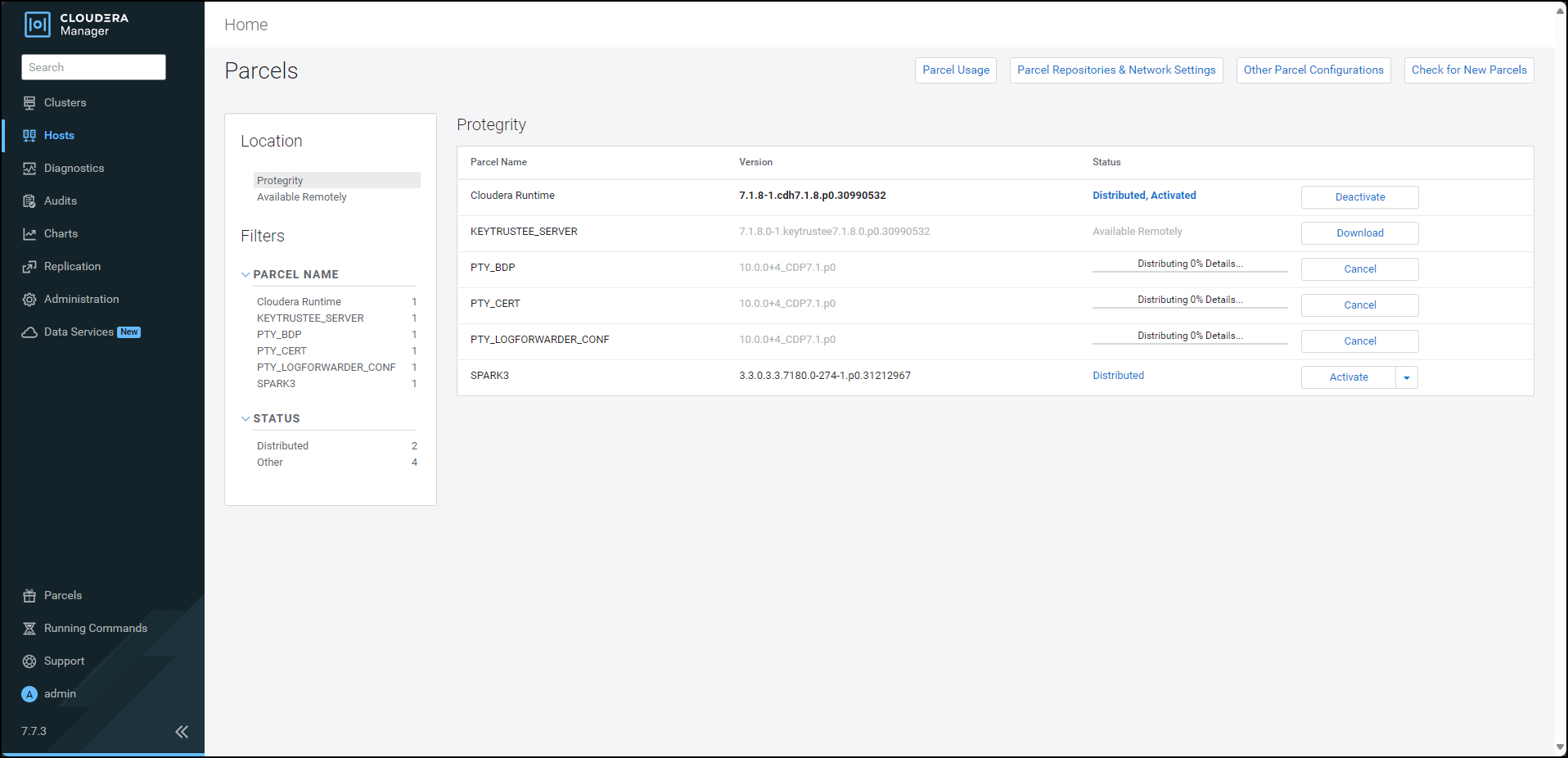

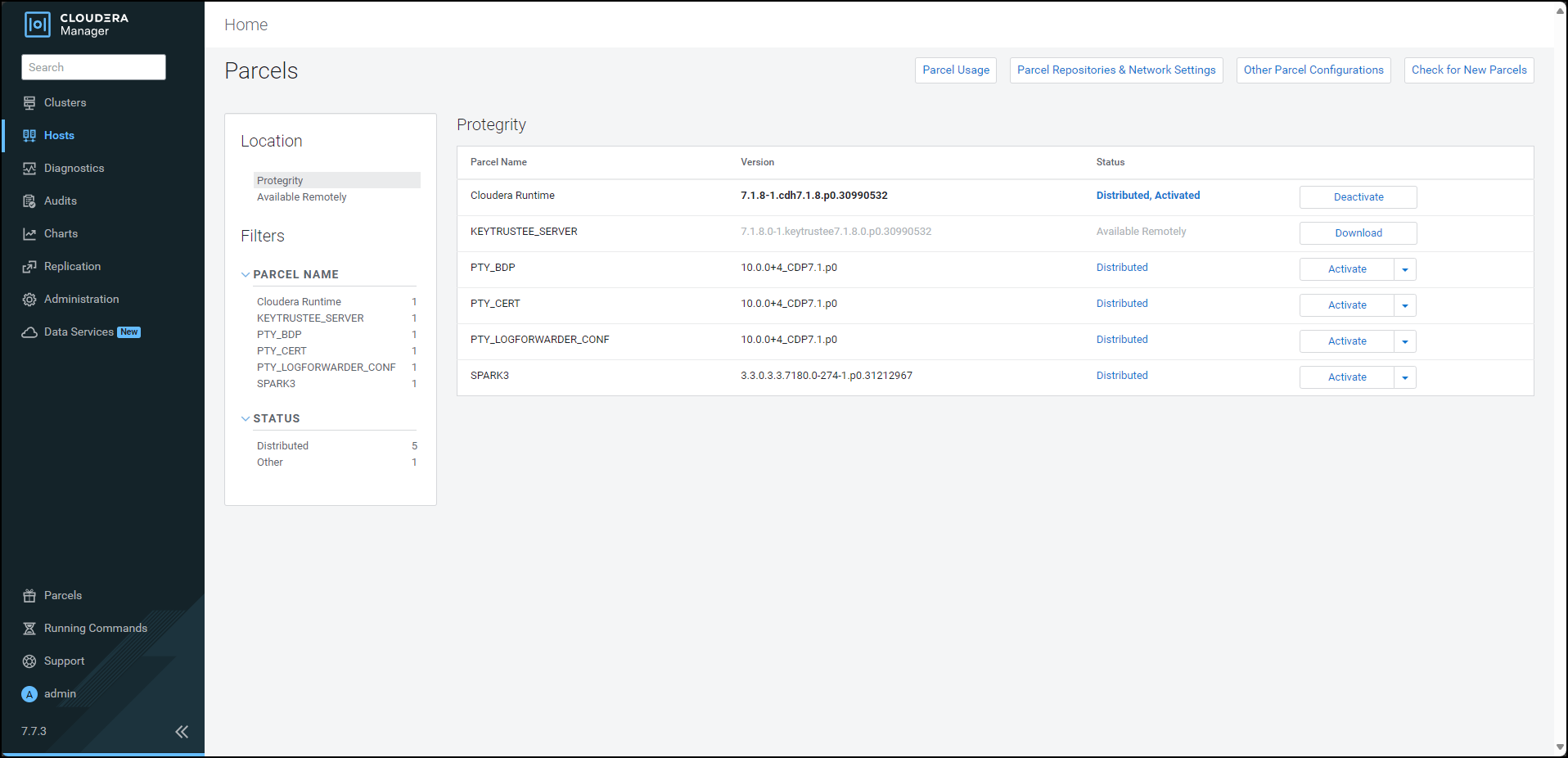

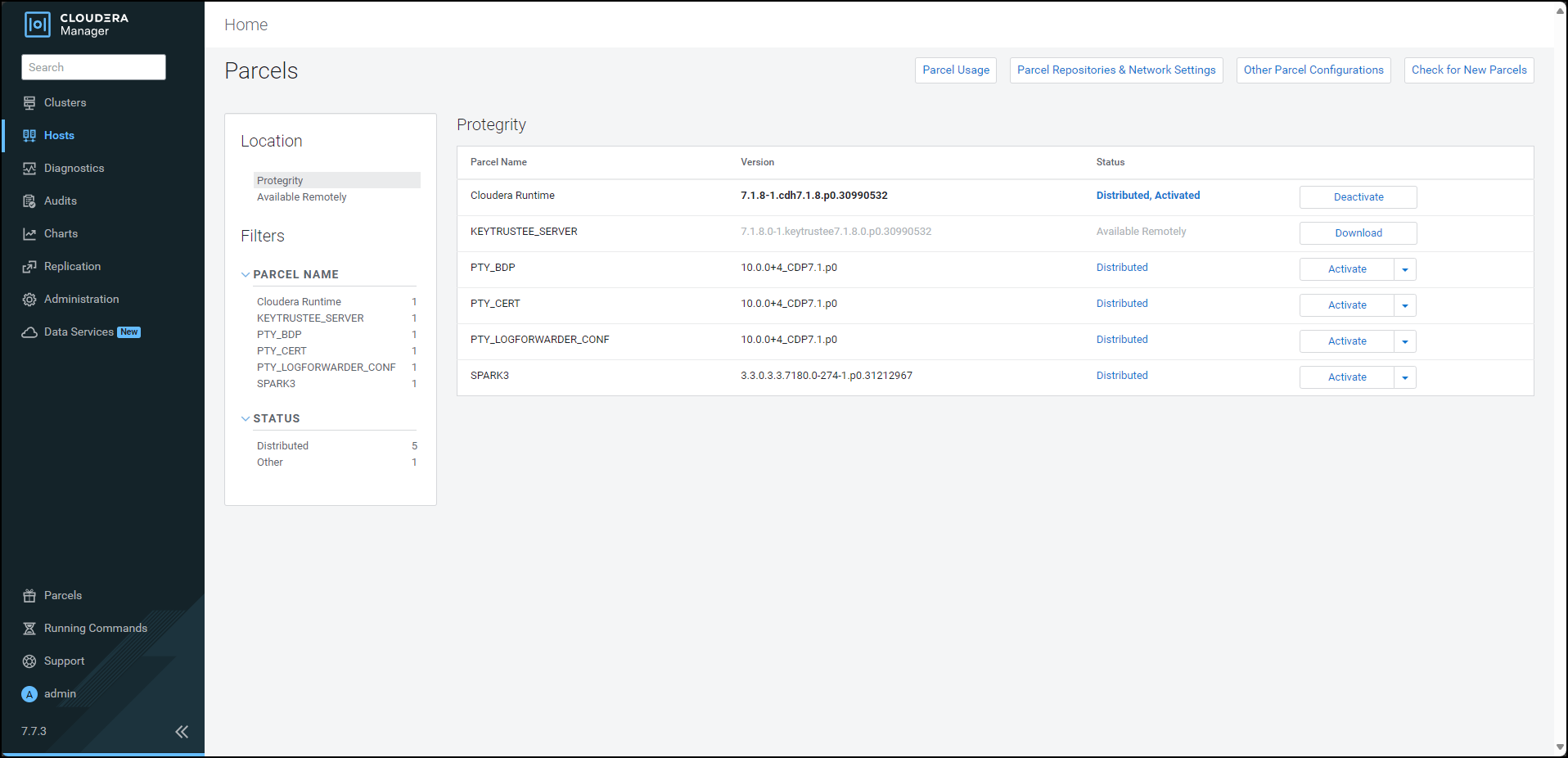

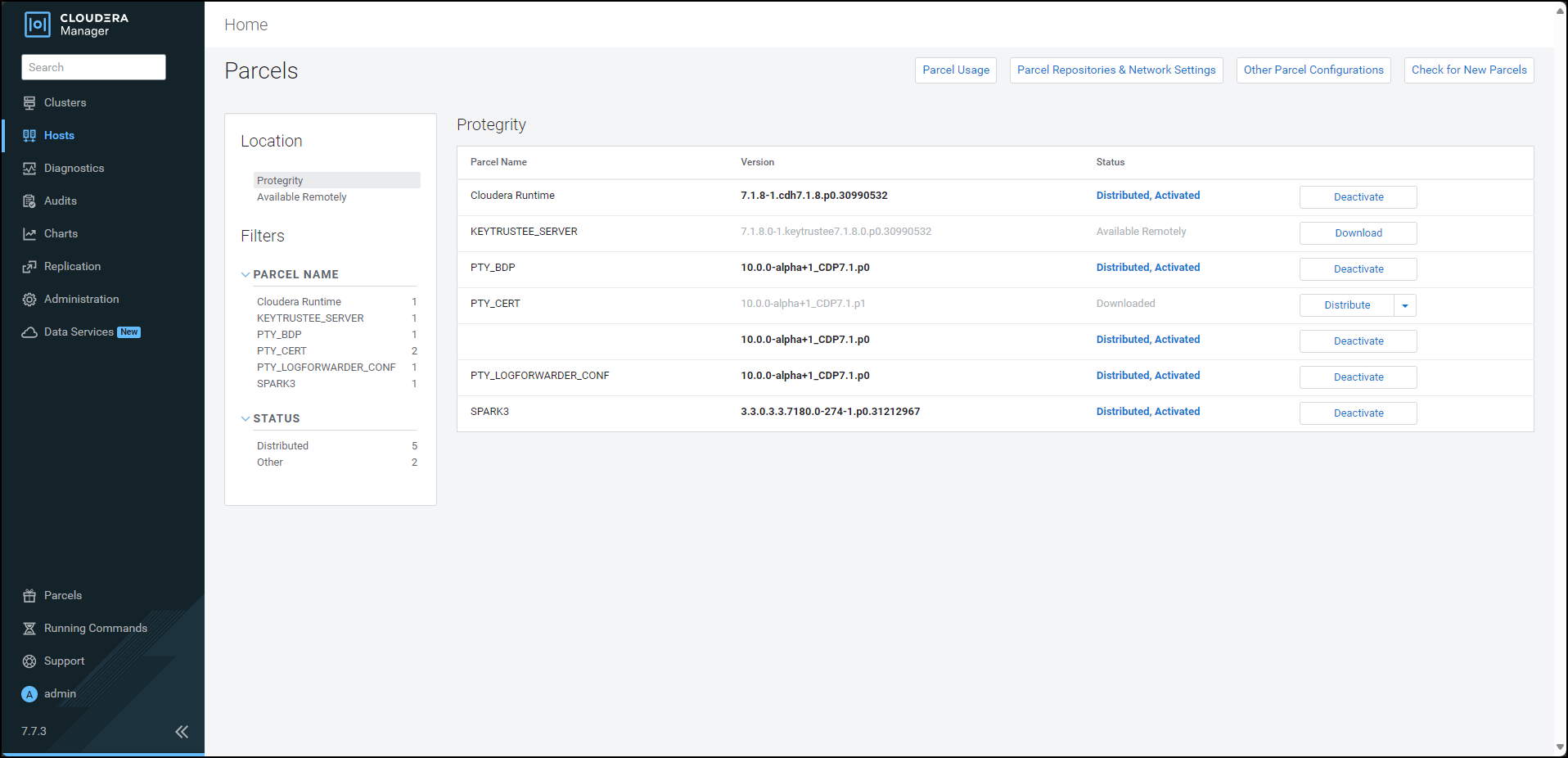

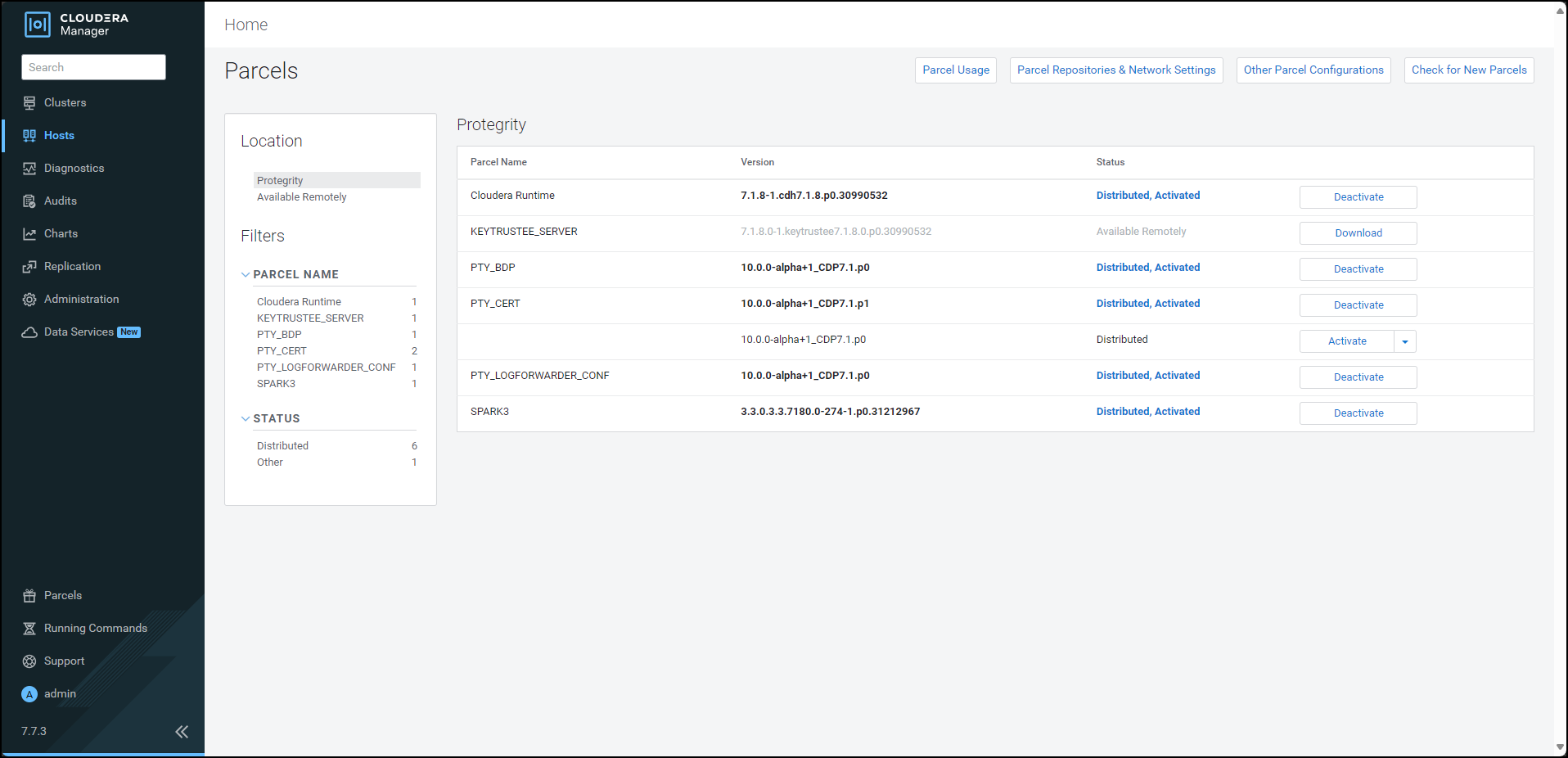

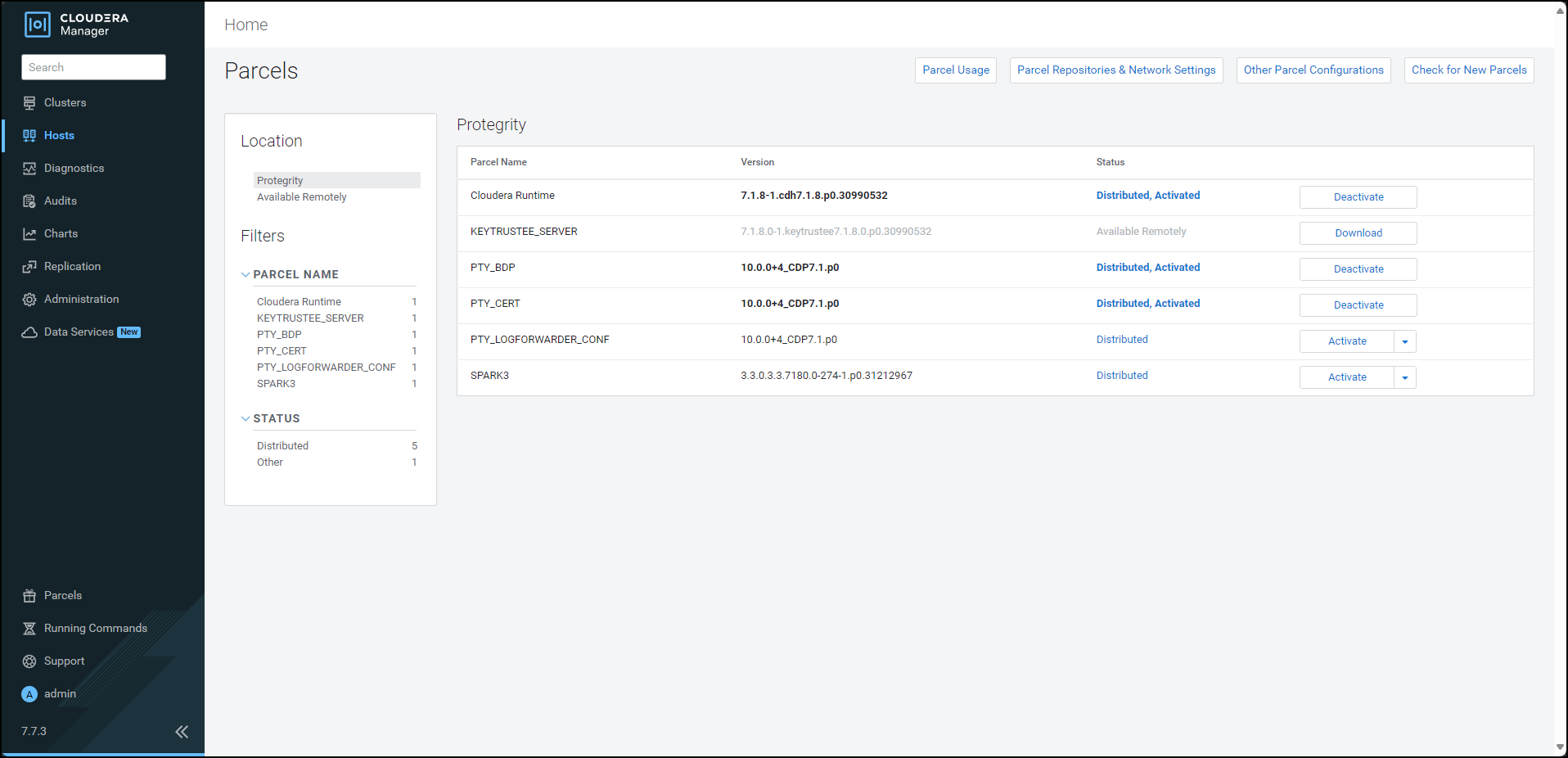

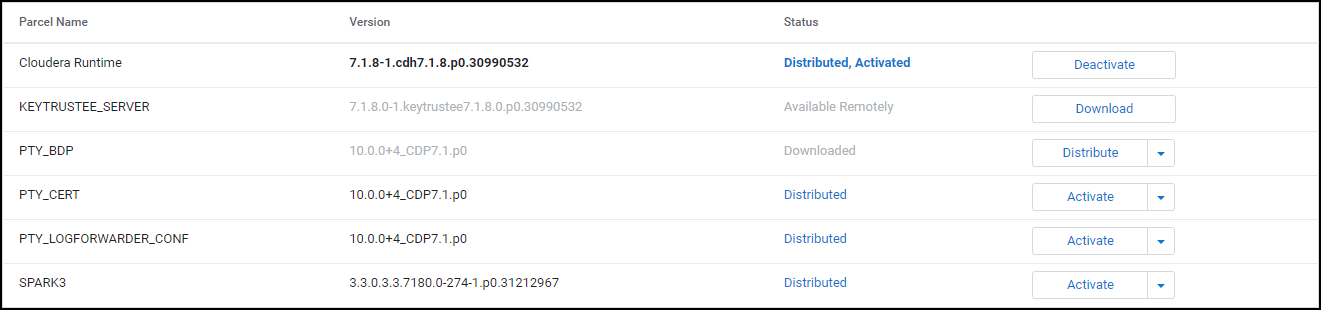

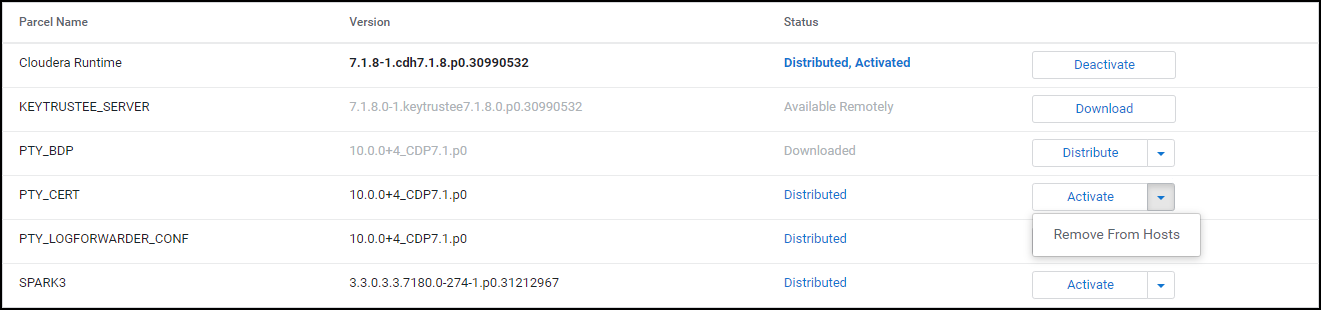

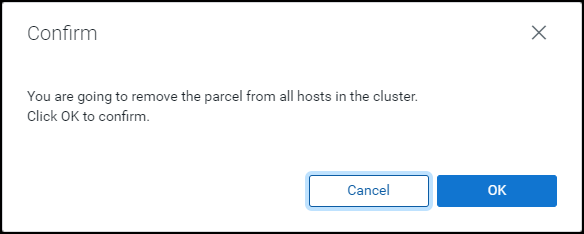

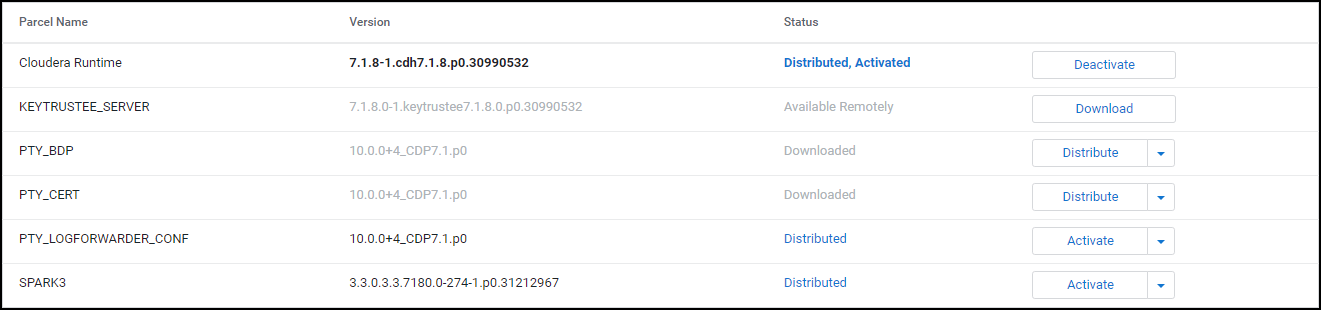

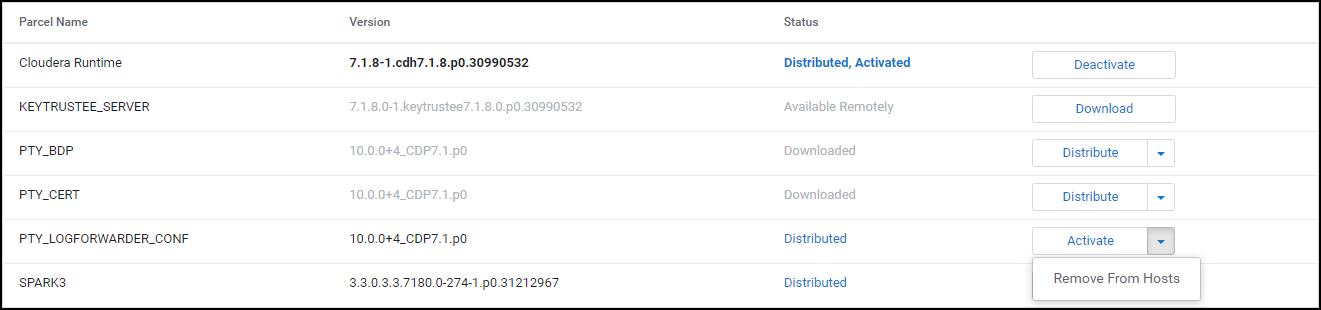

- 10.3.3.3: Distributing the parcels

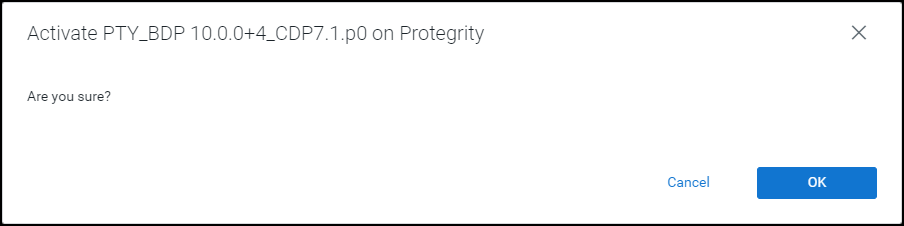

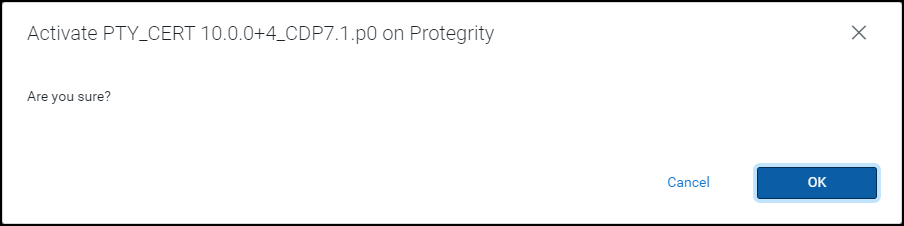

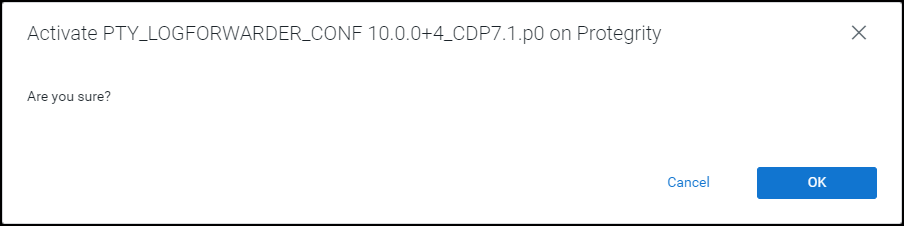

- 10.3.3.4: Activating the parcels

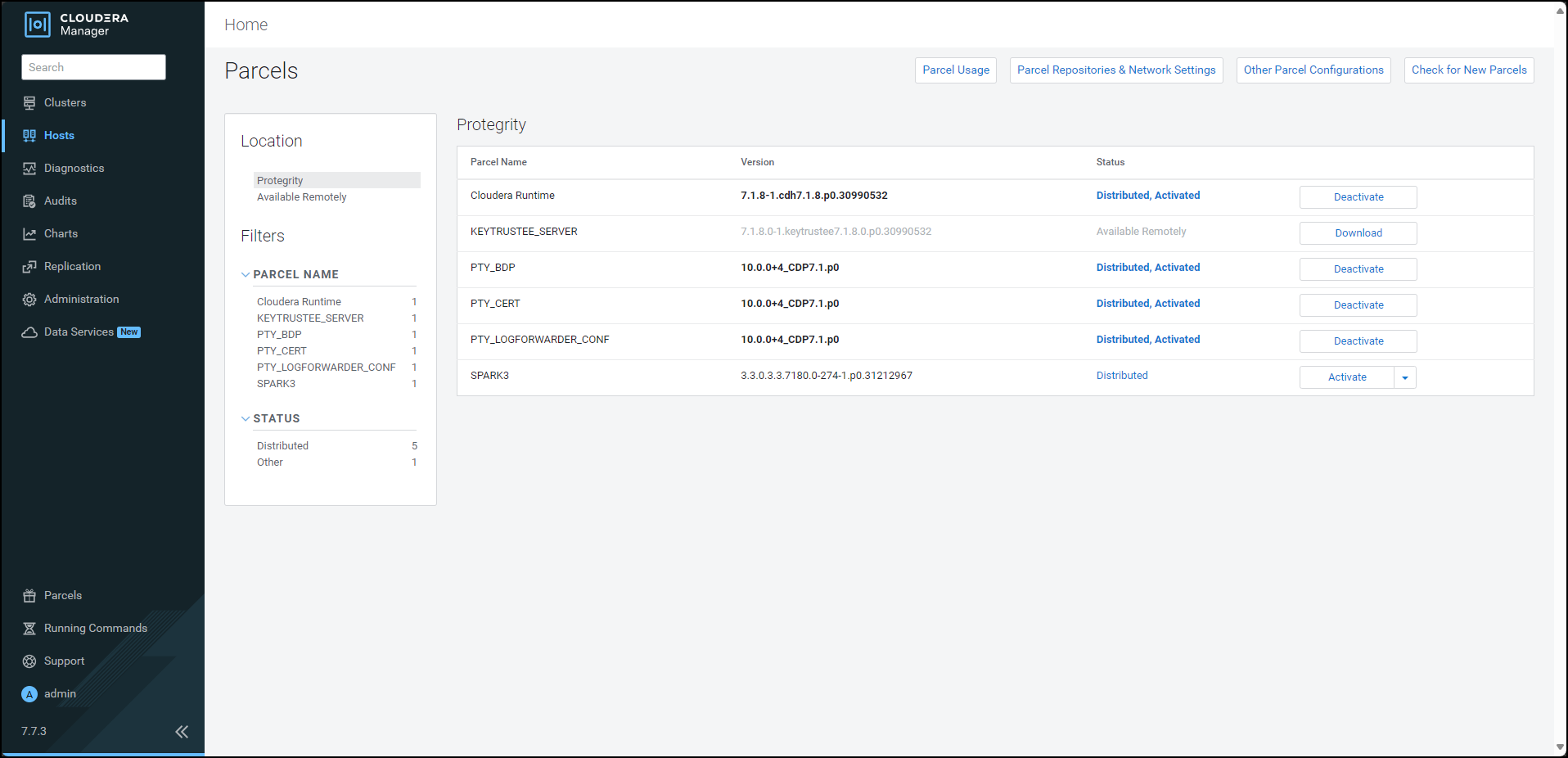

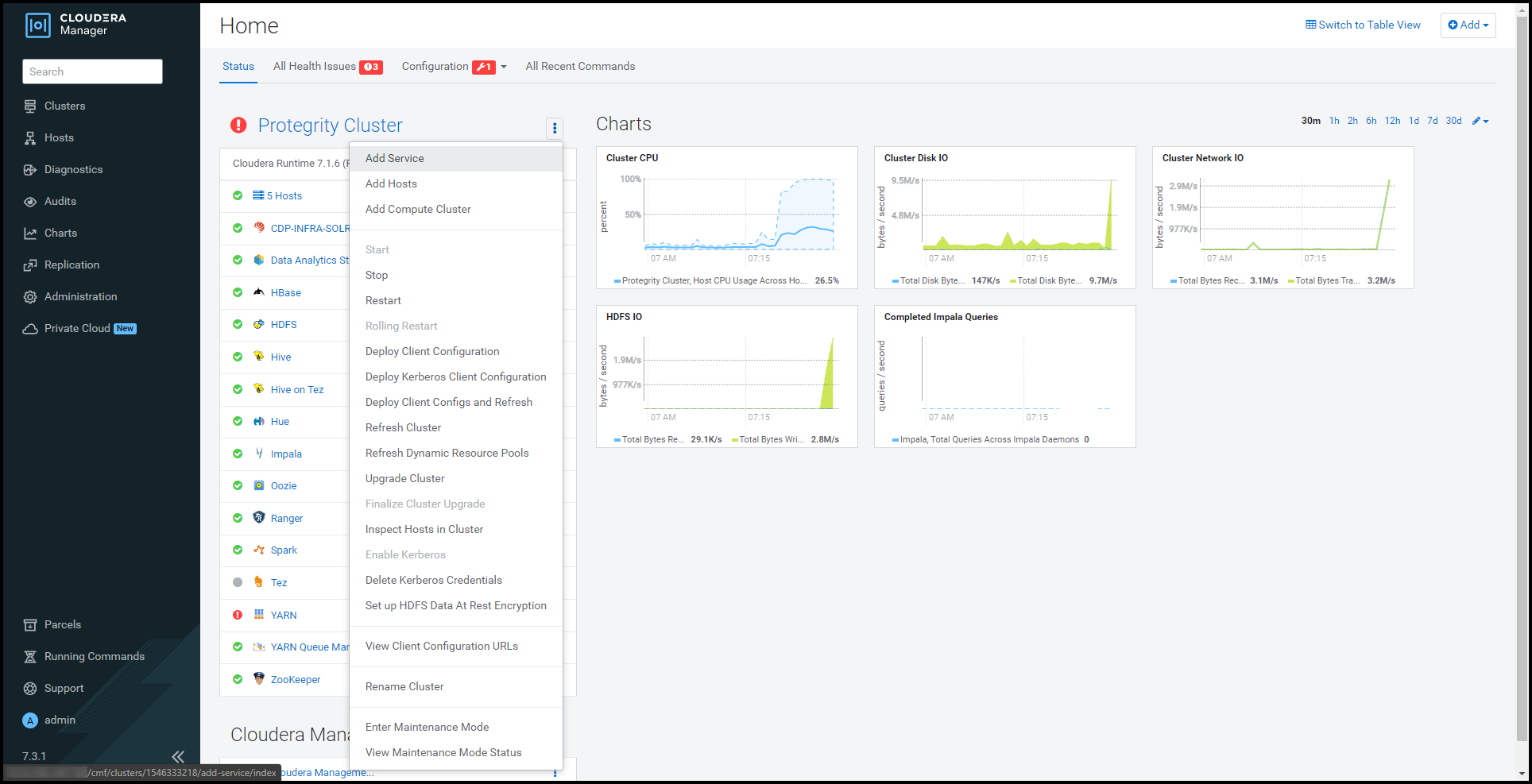

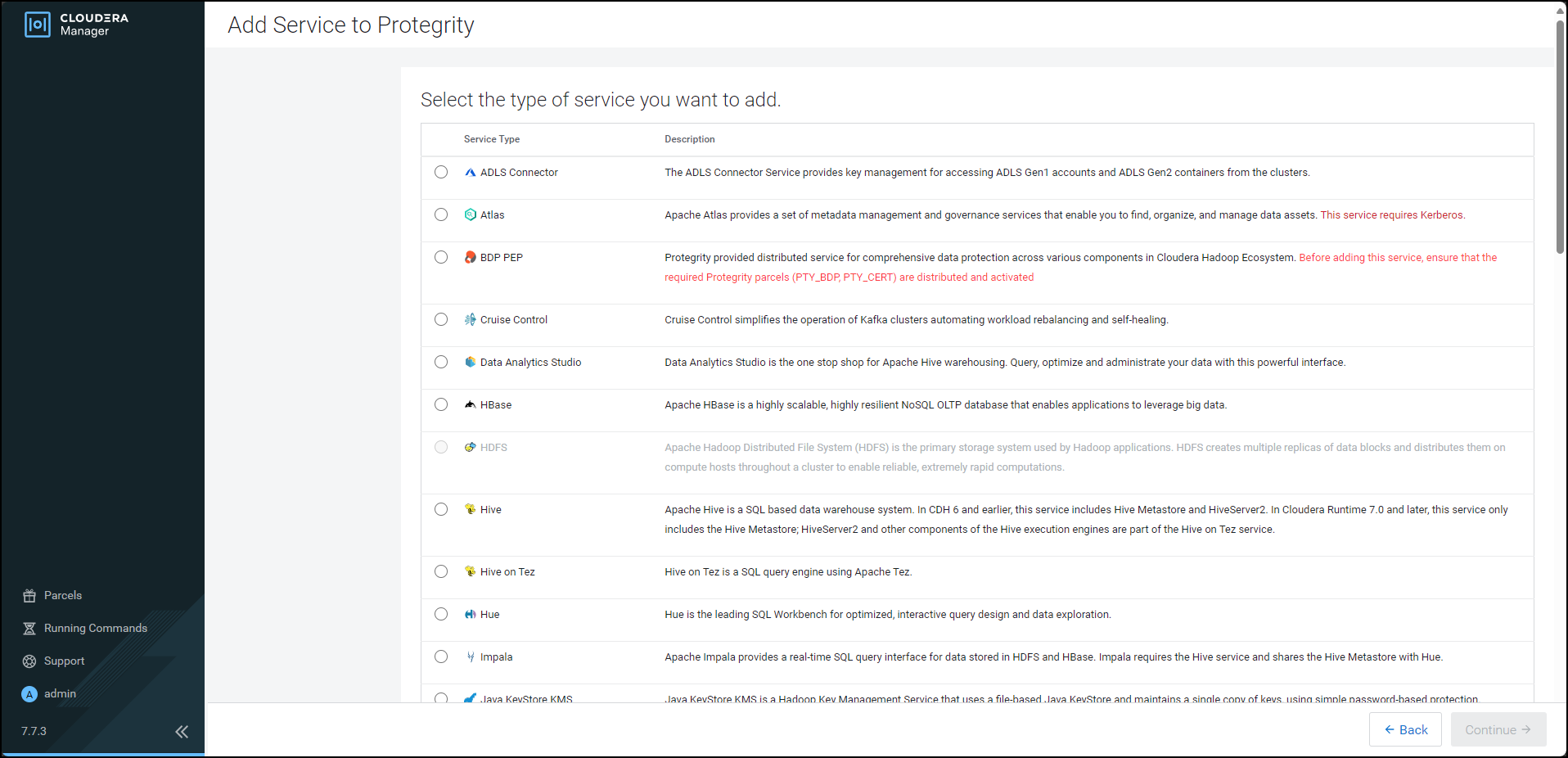

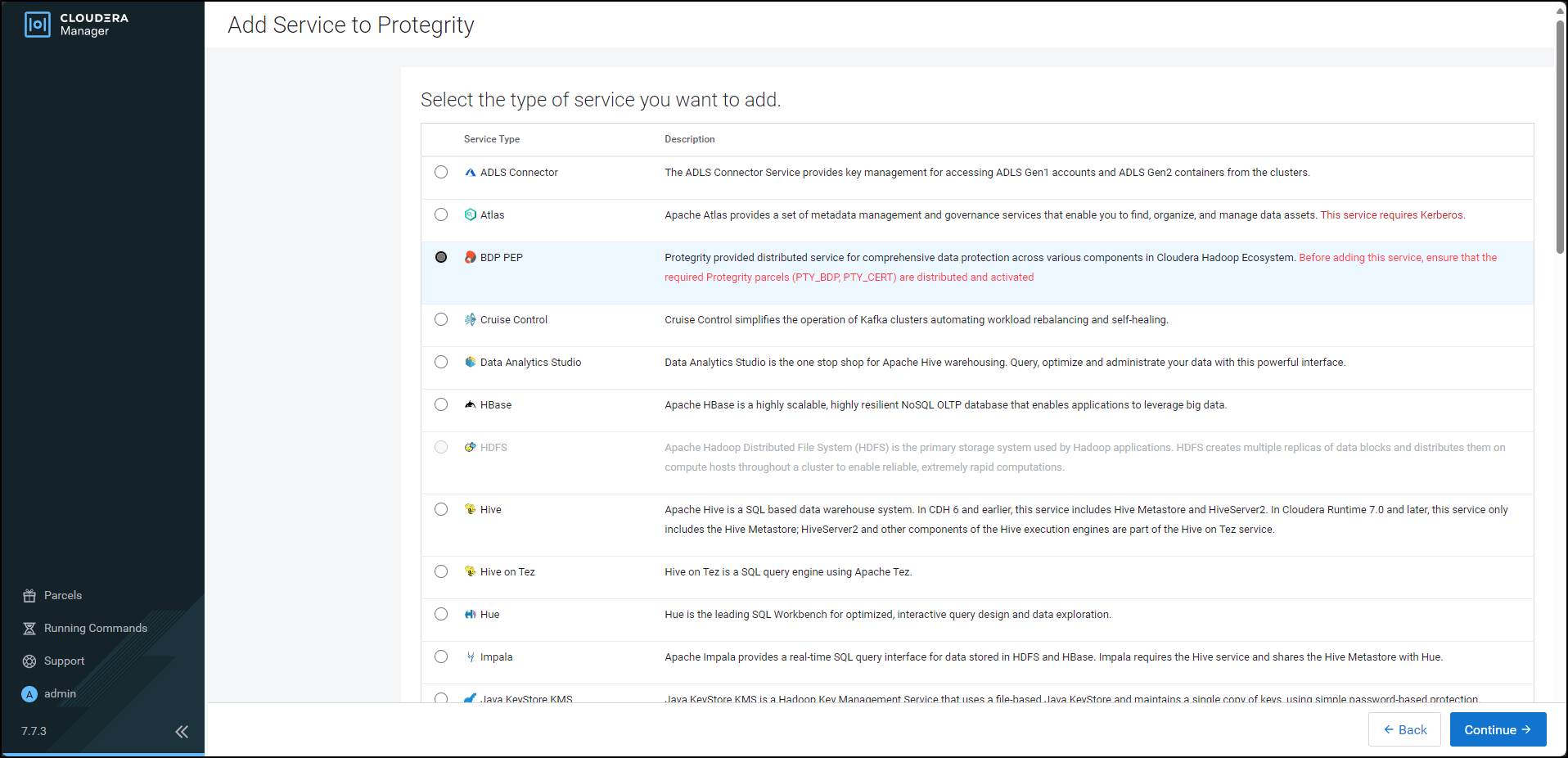

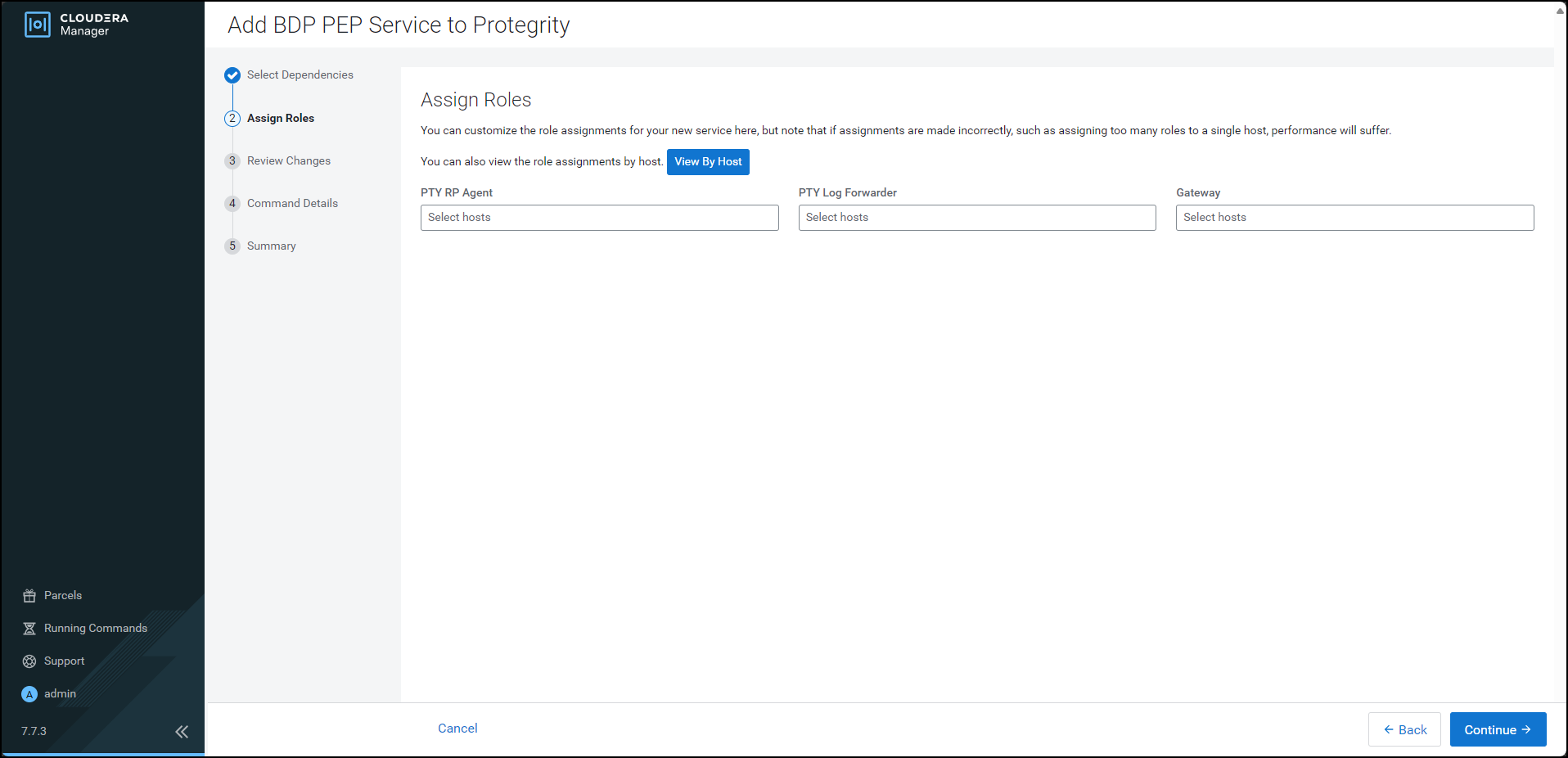

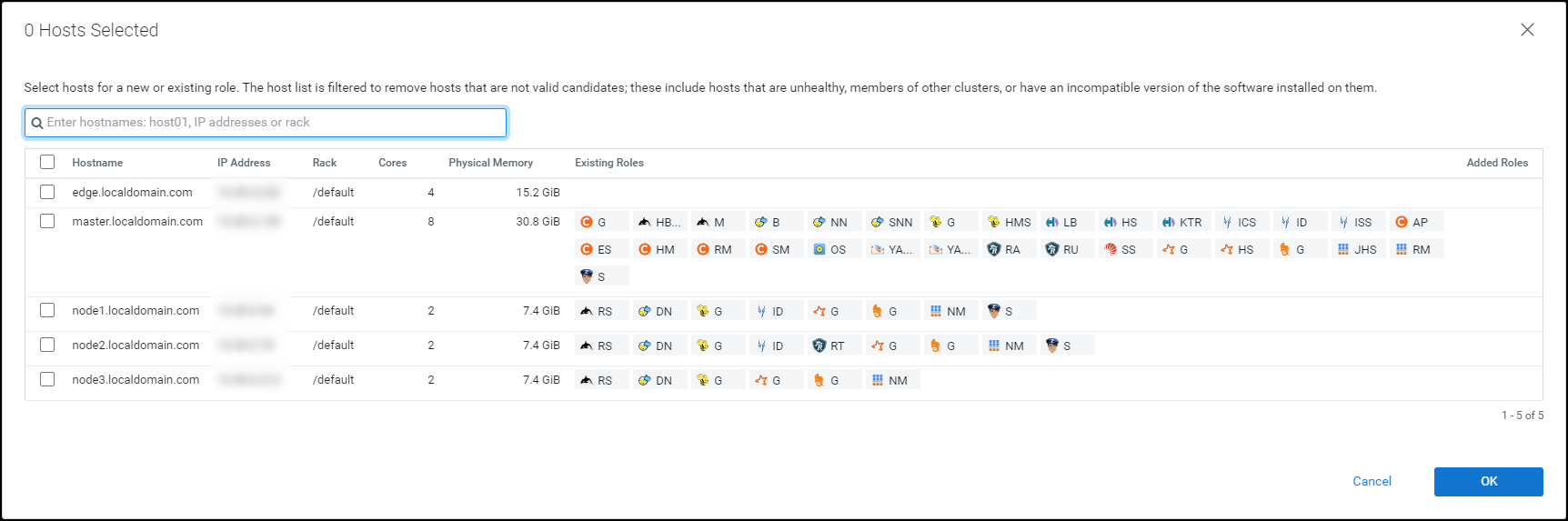

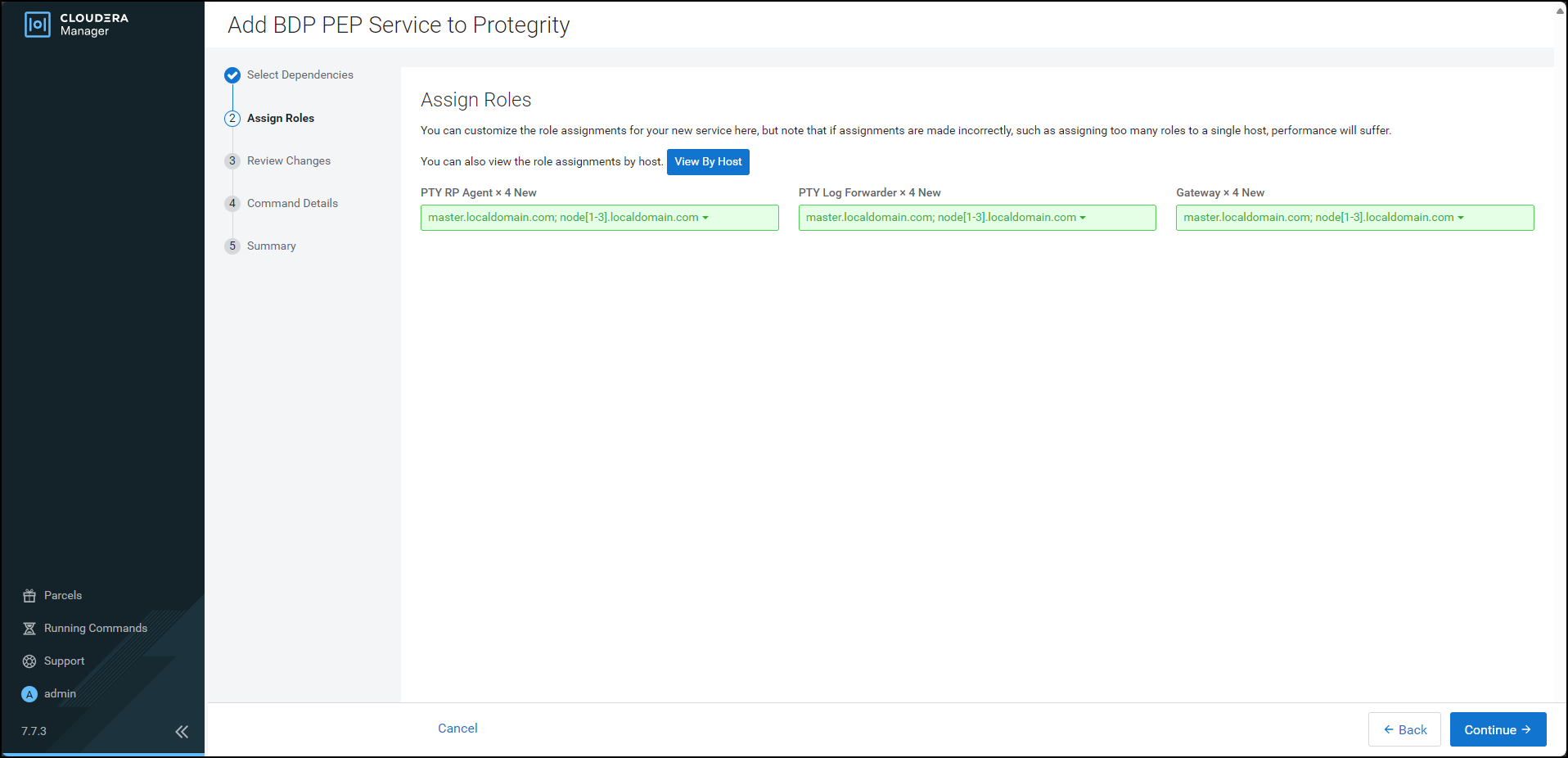

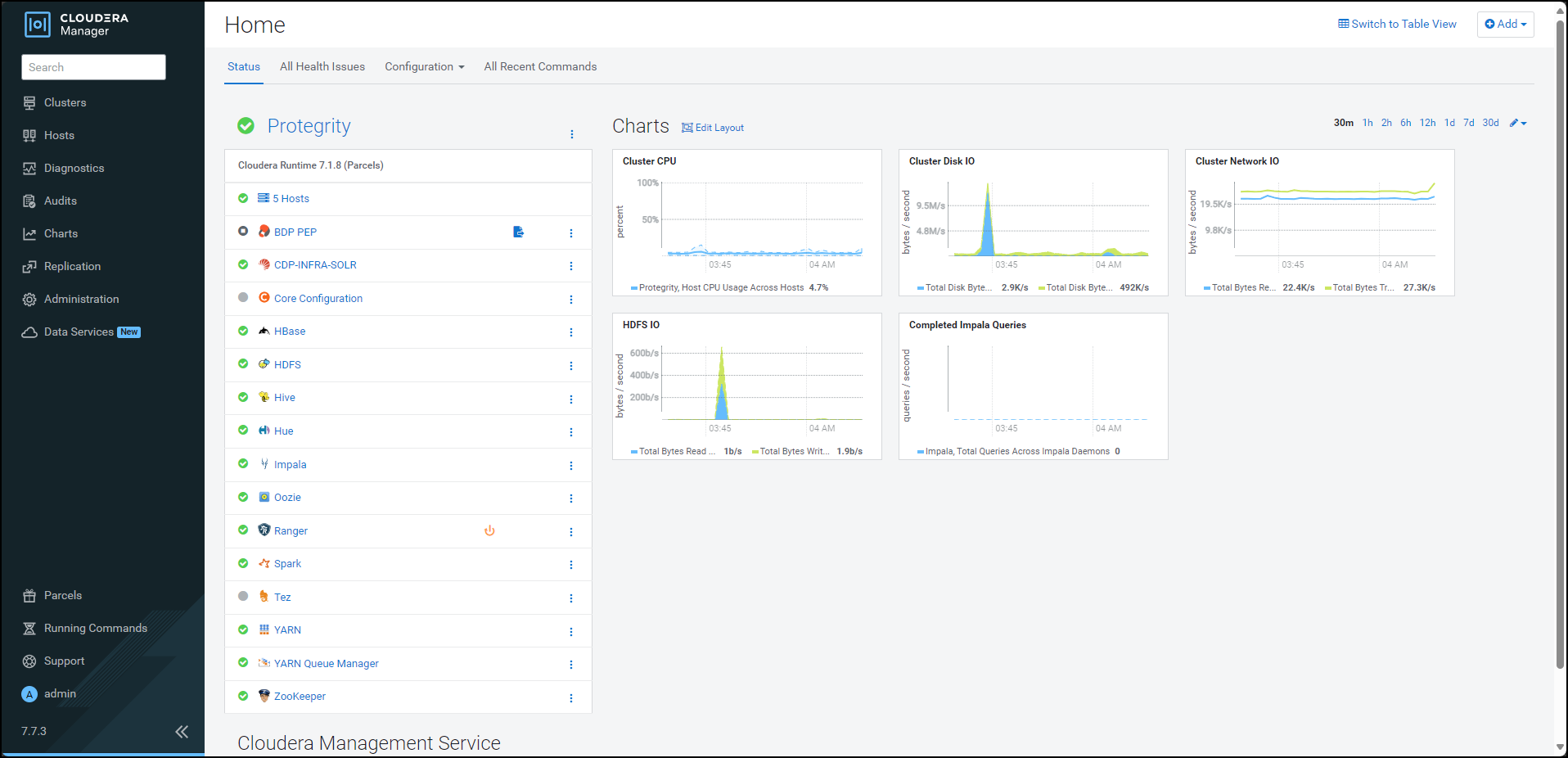

- 10.3.4: Installing the Protector

- 10.3.4.1: Installing the parcel on a new node

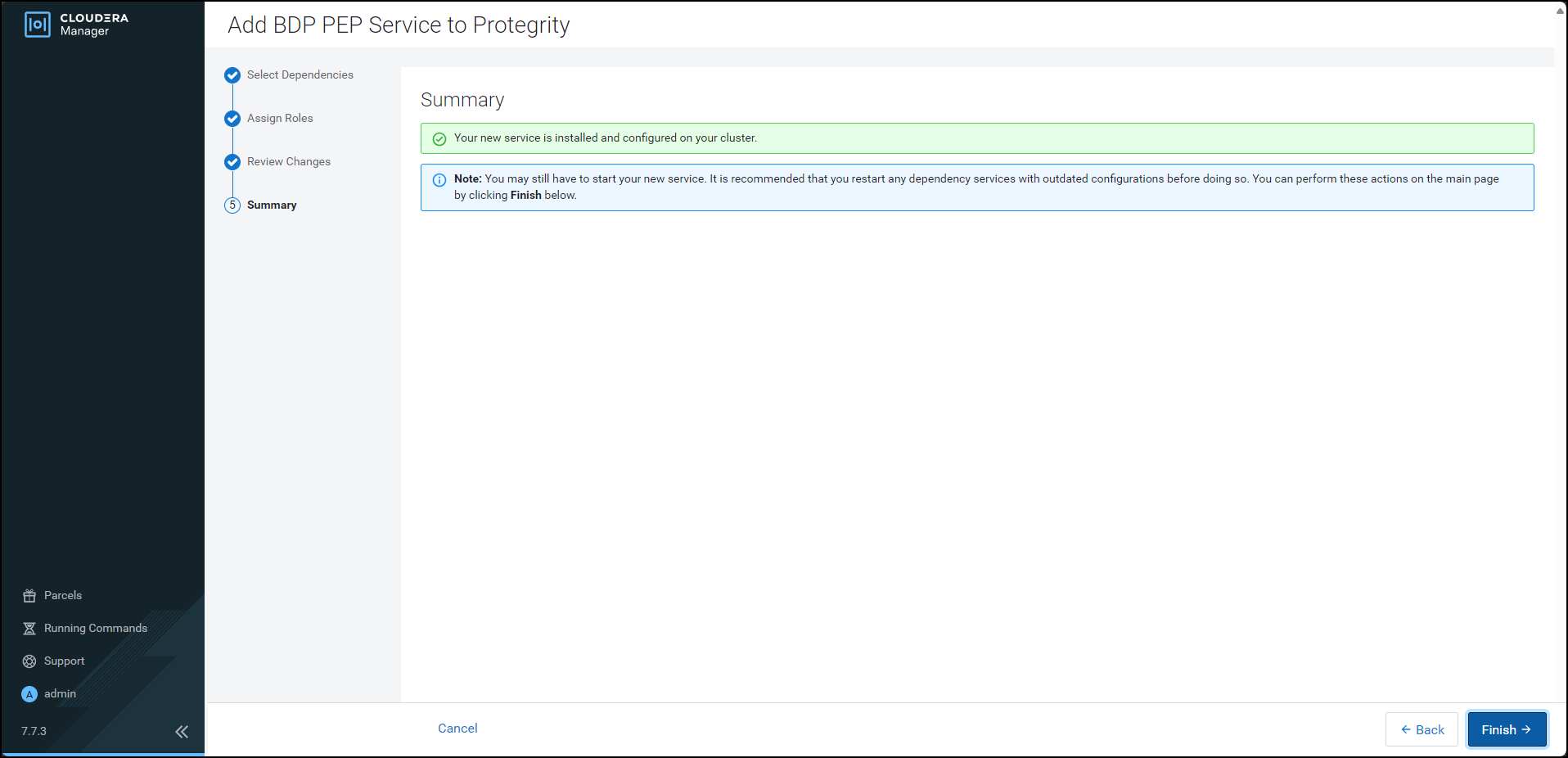

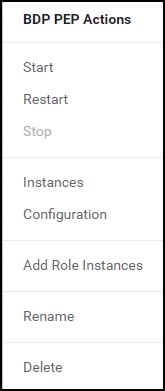

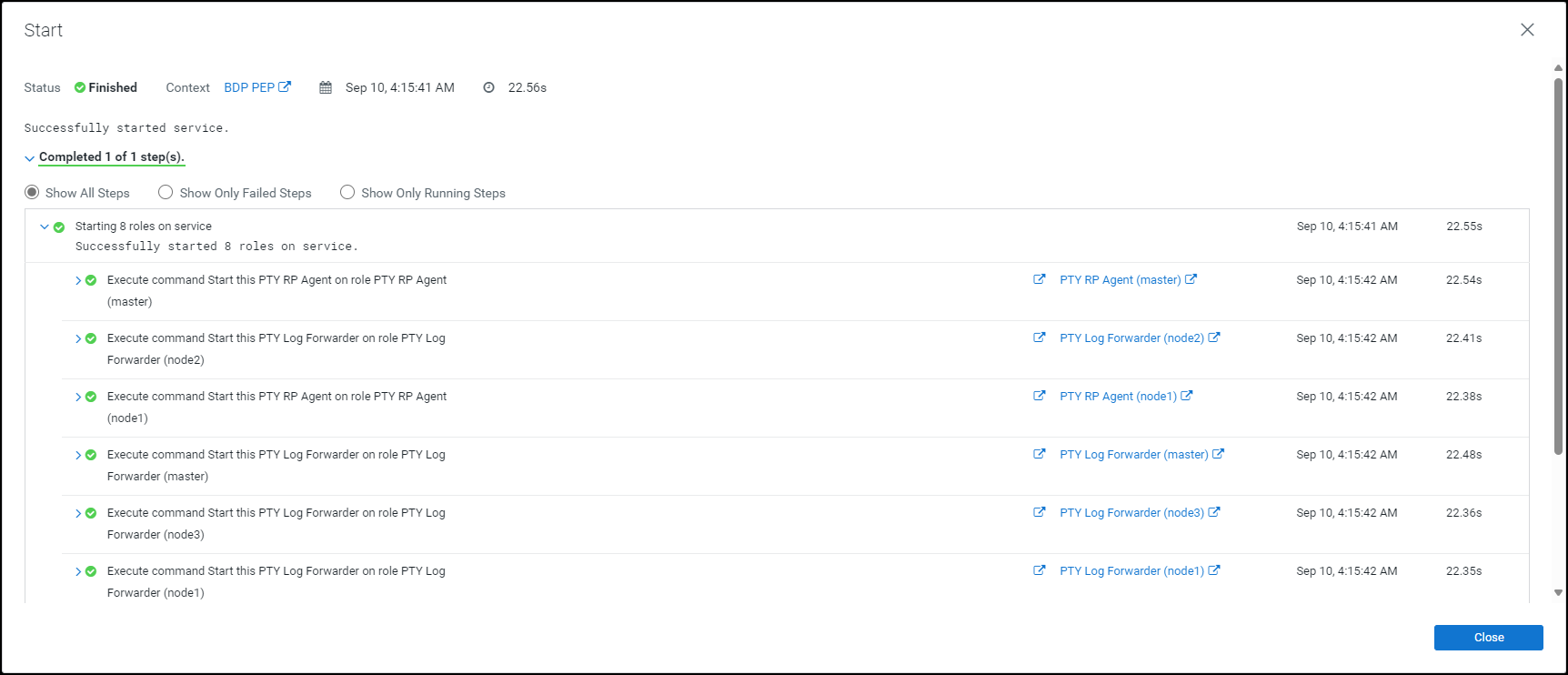

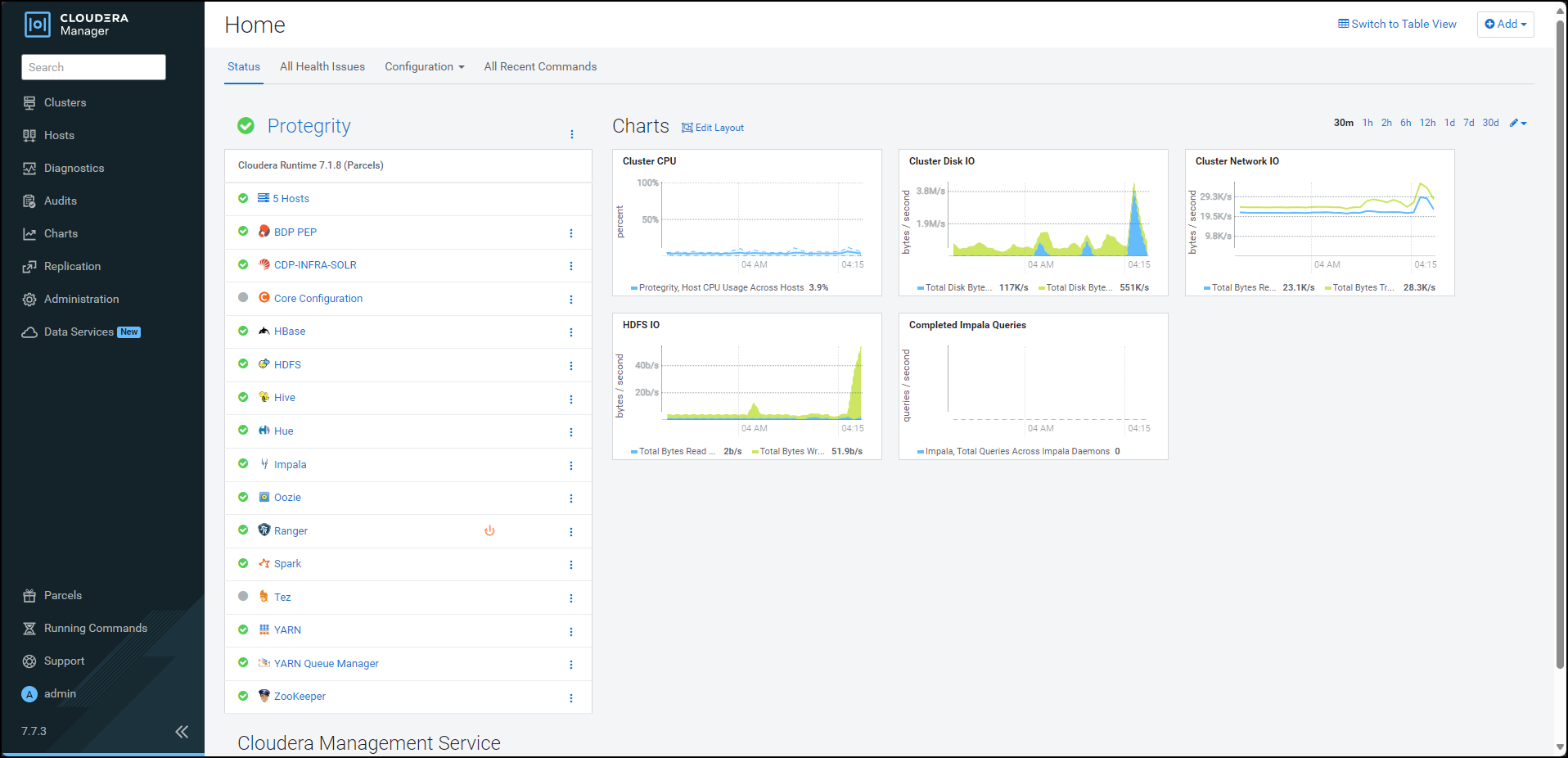

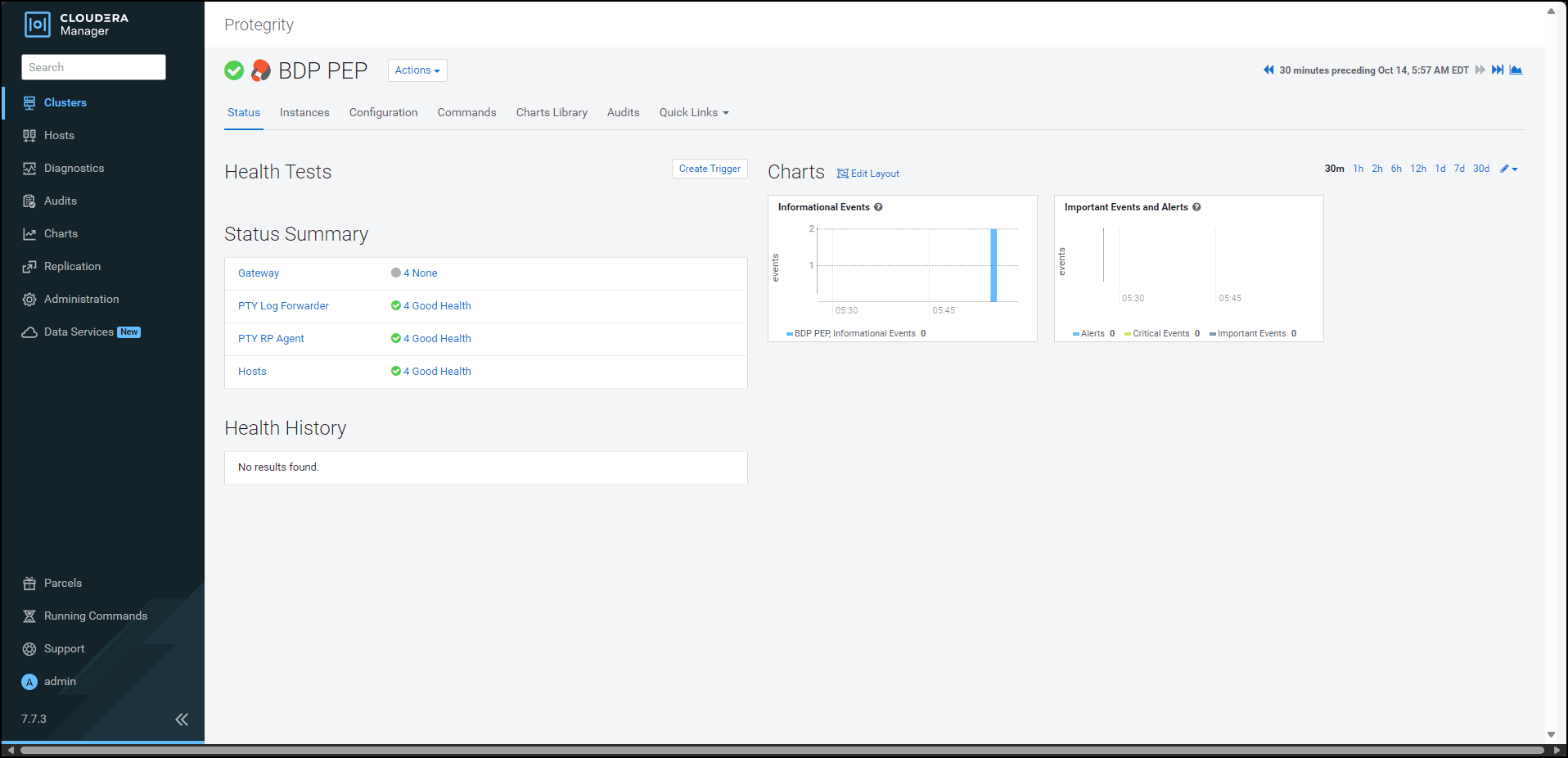

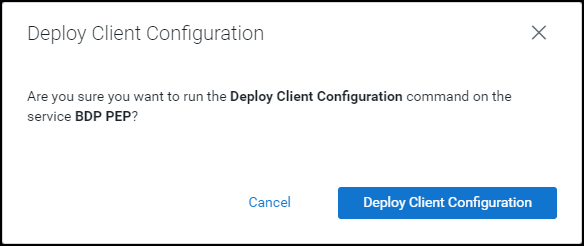

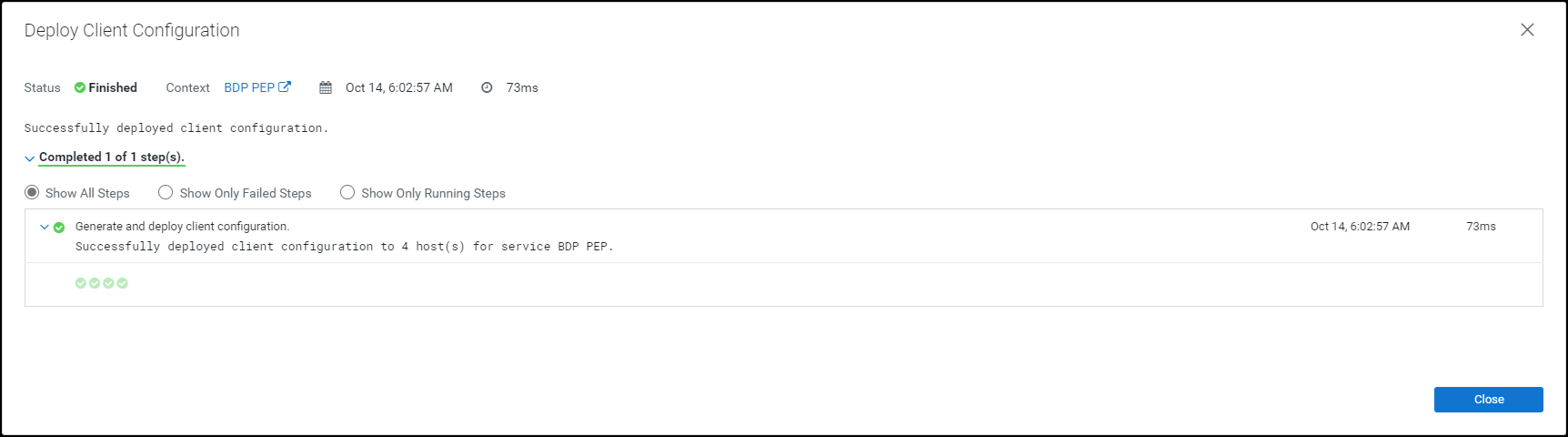

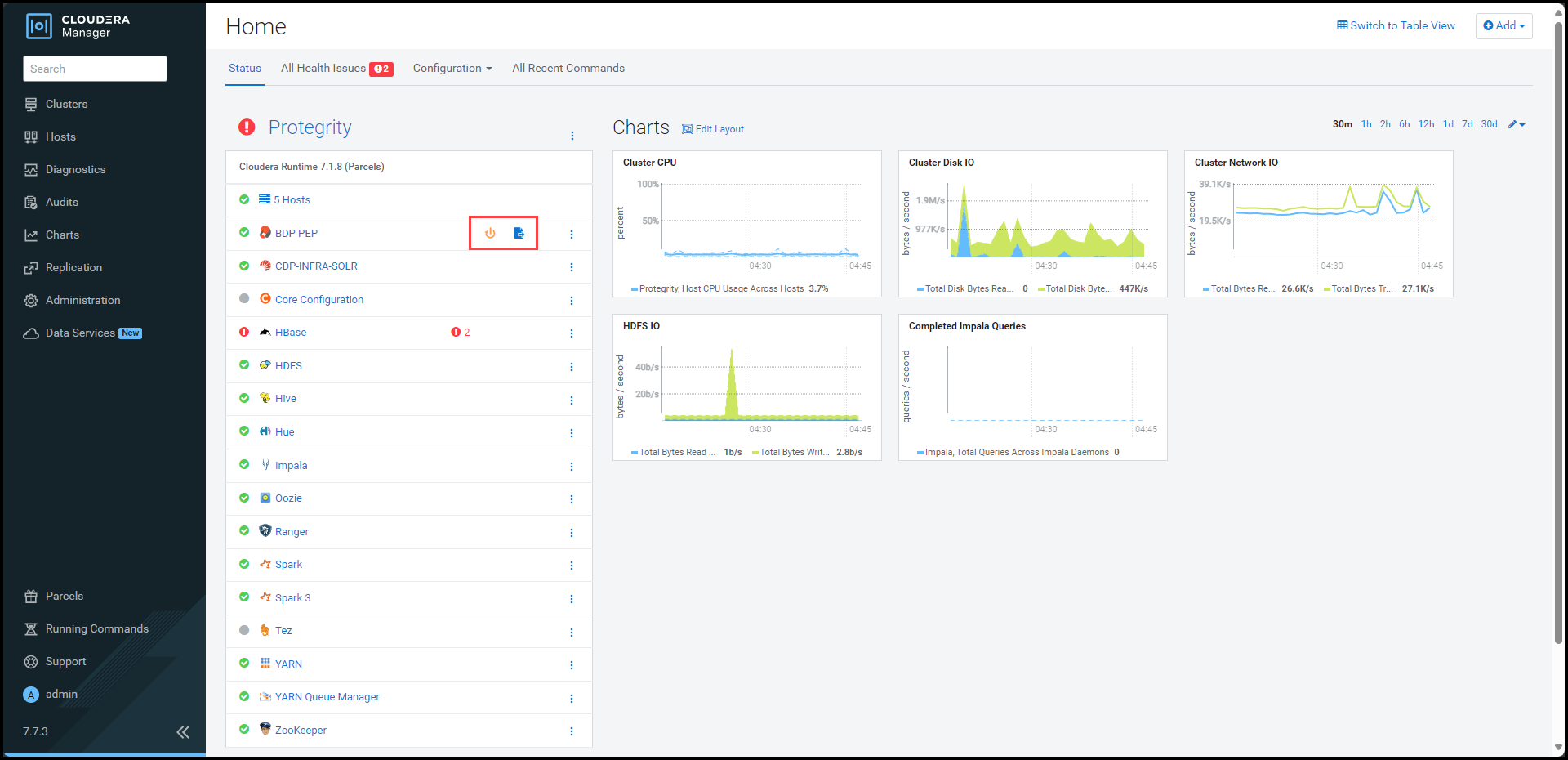

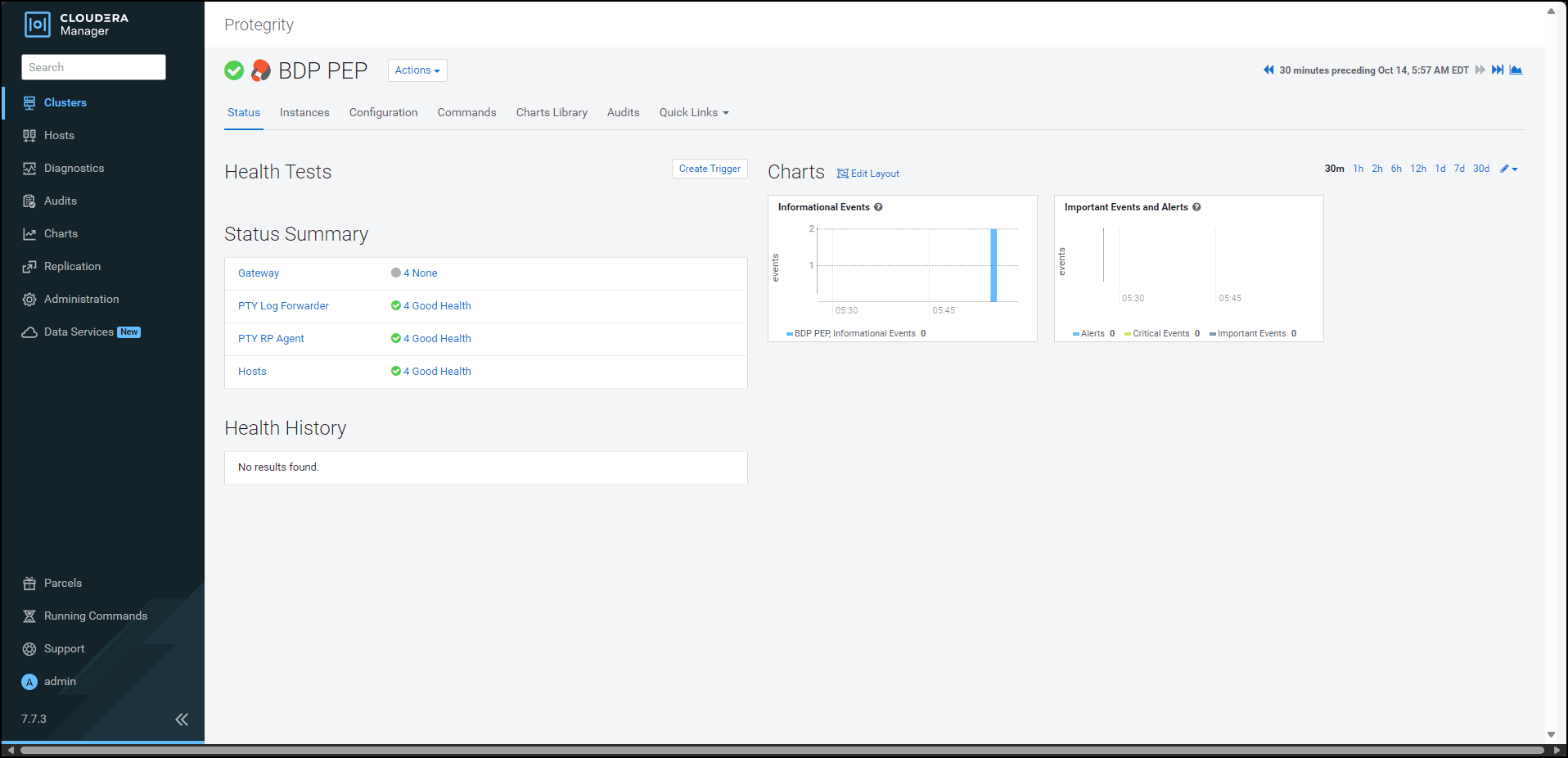

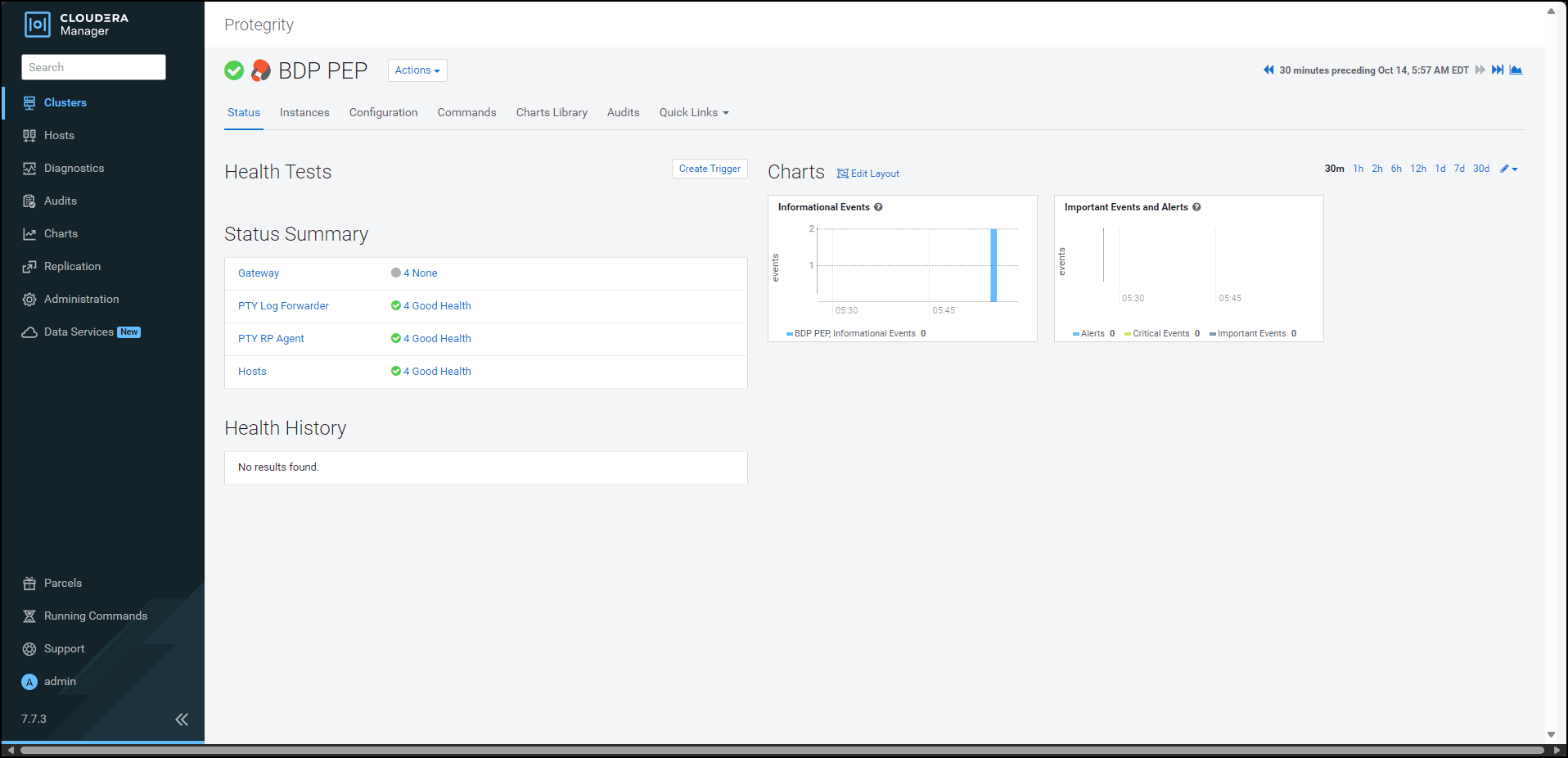

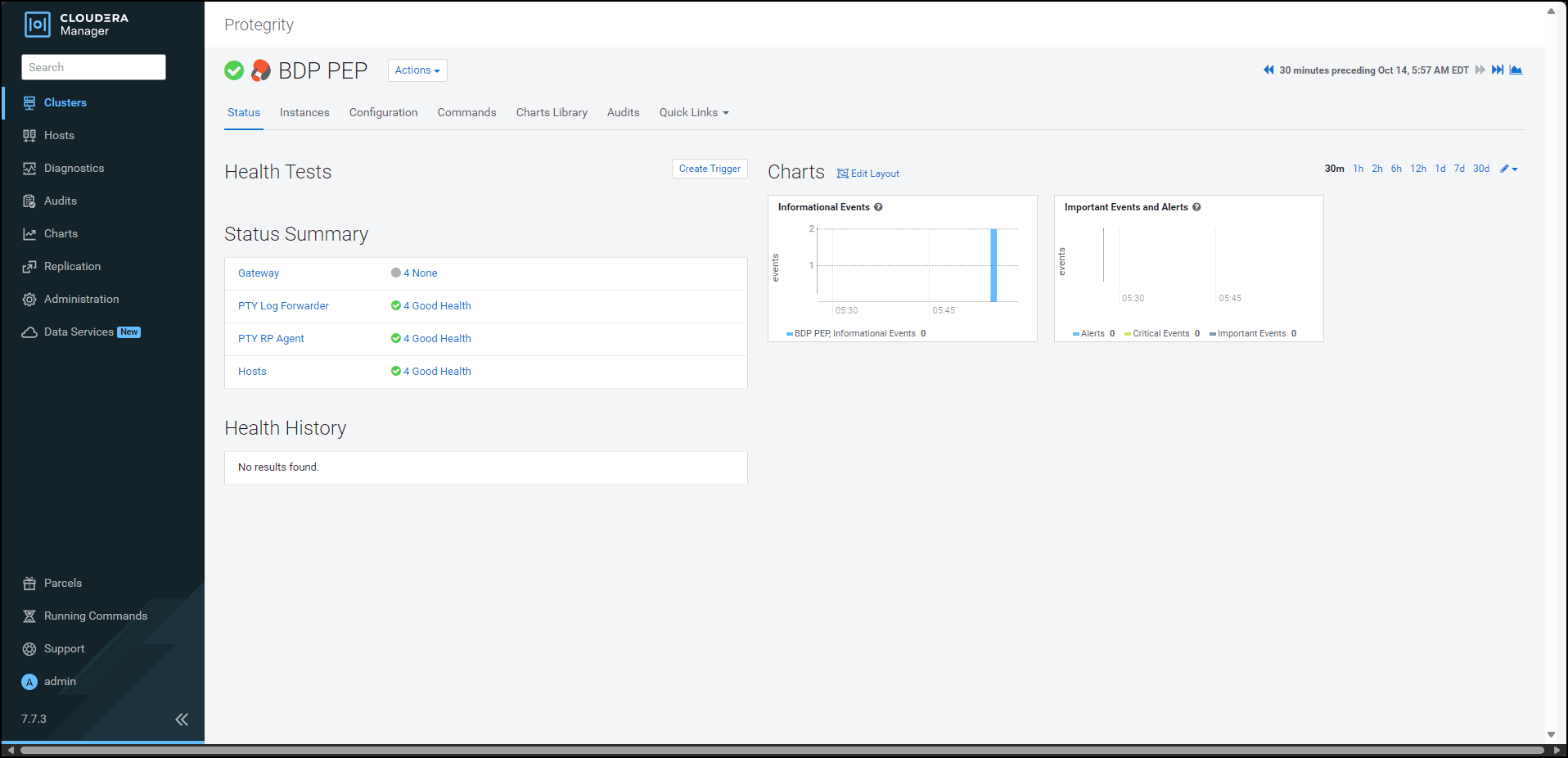

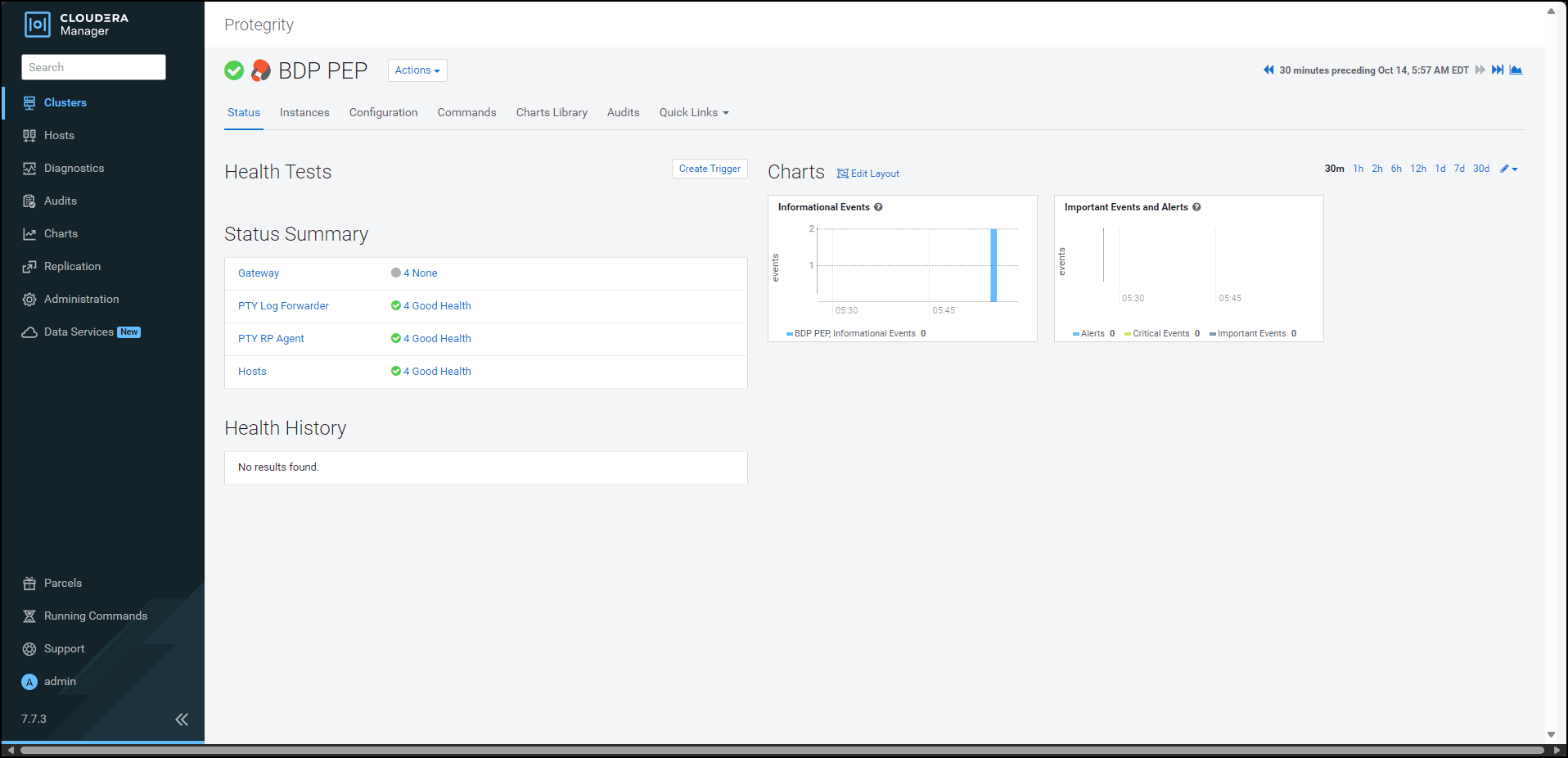

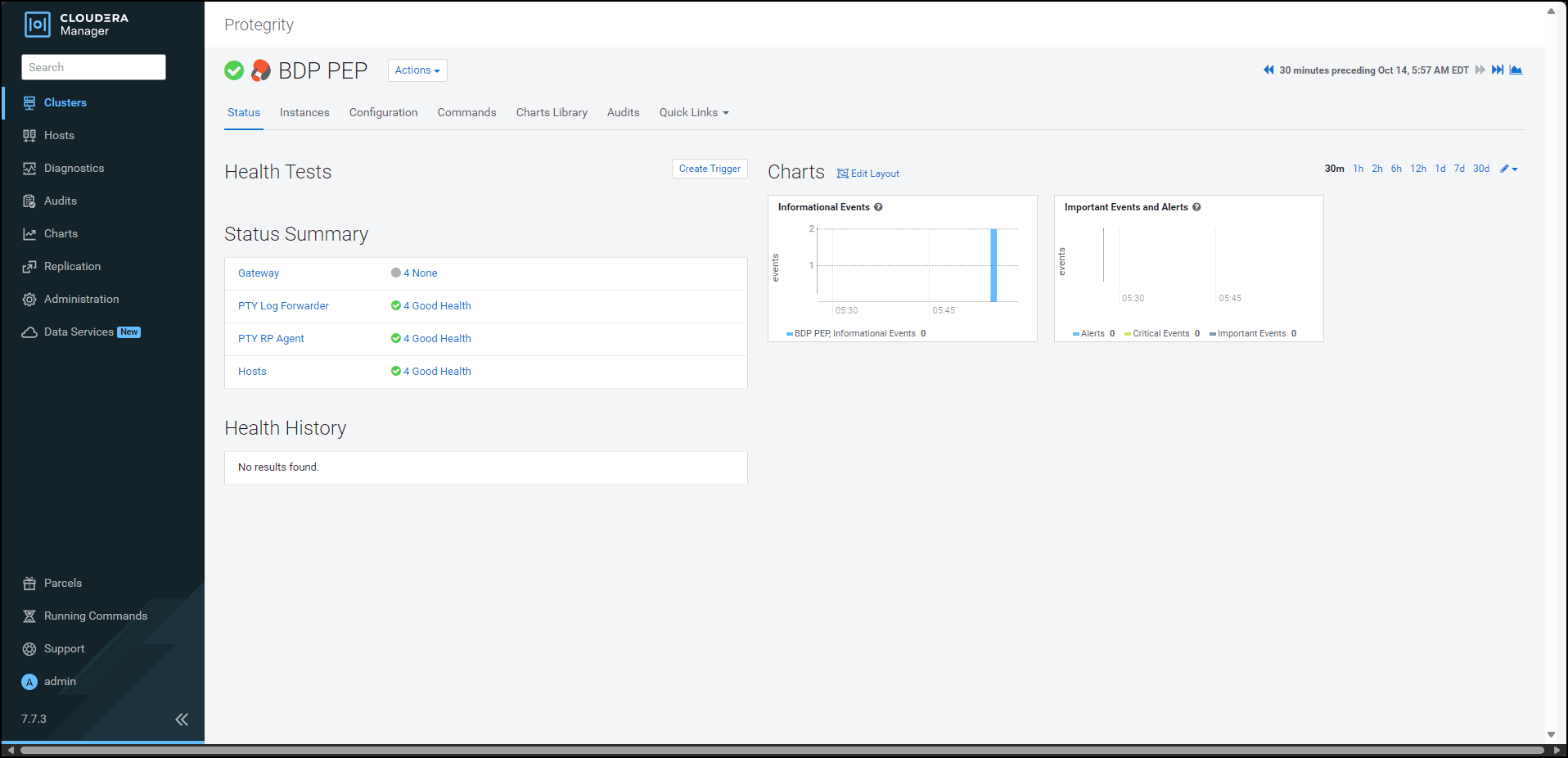

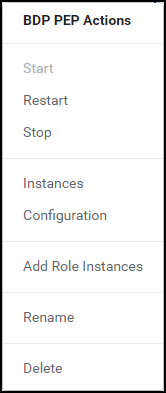

- 10.3.4.2: Starting the Big Data Protector service

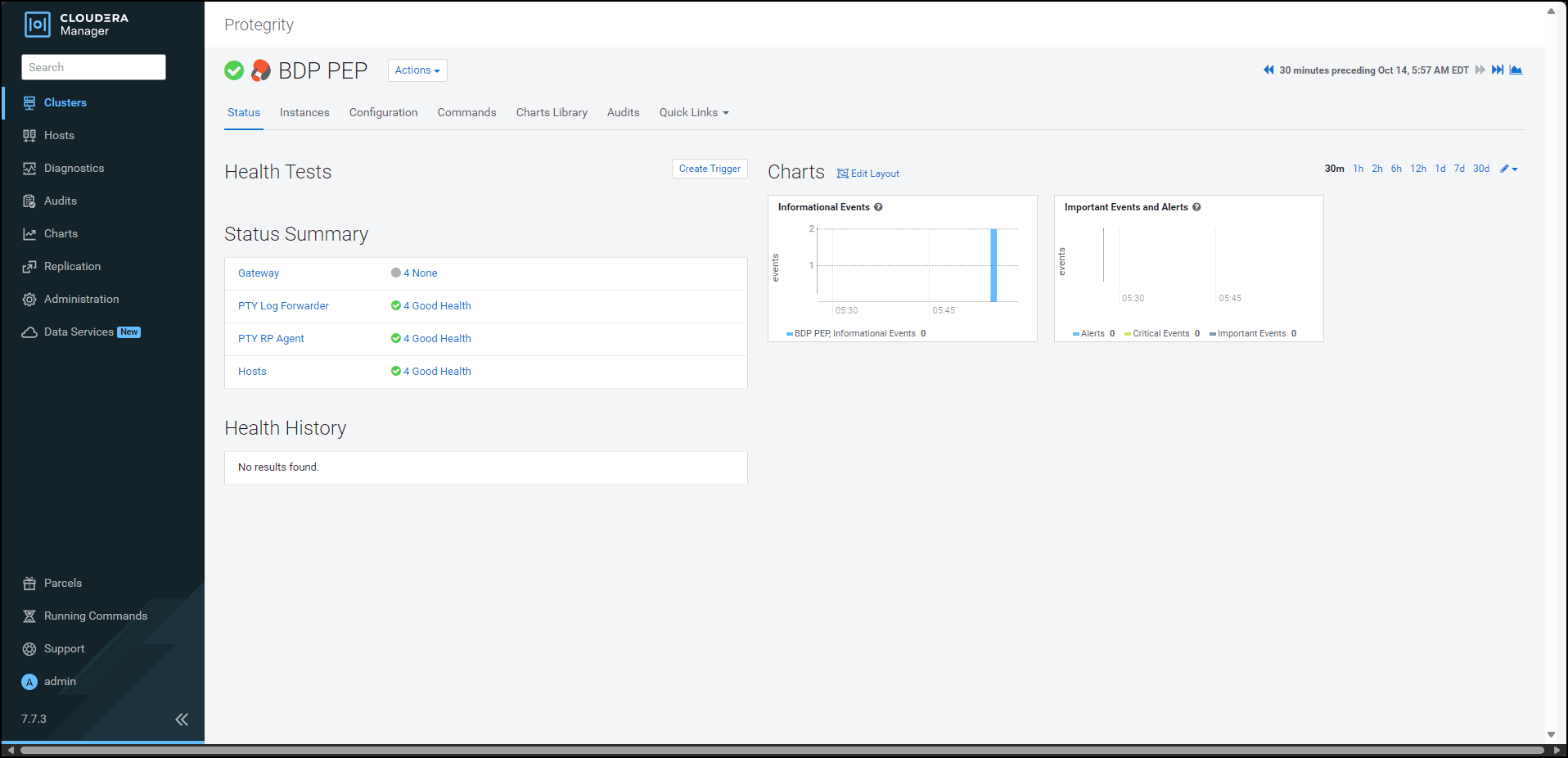

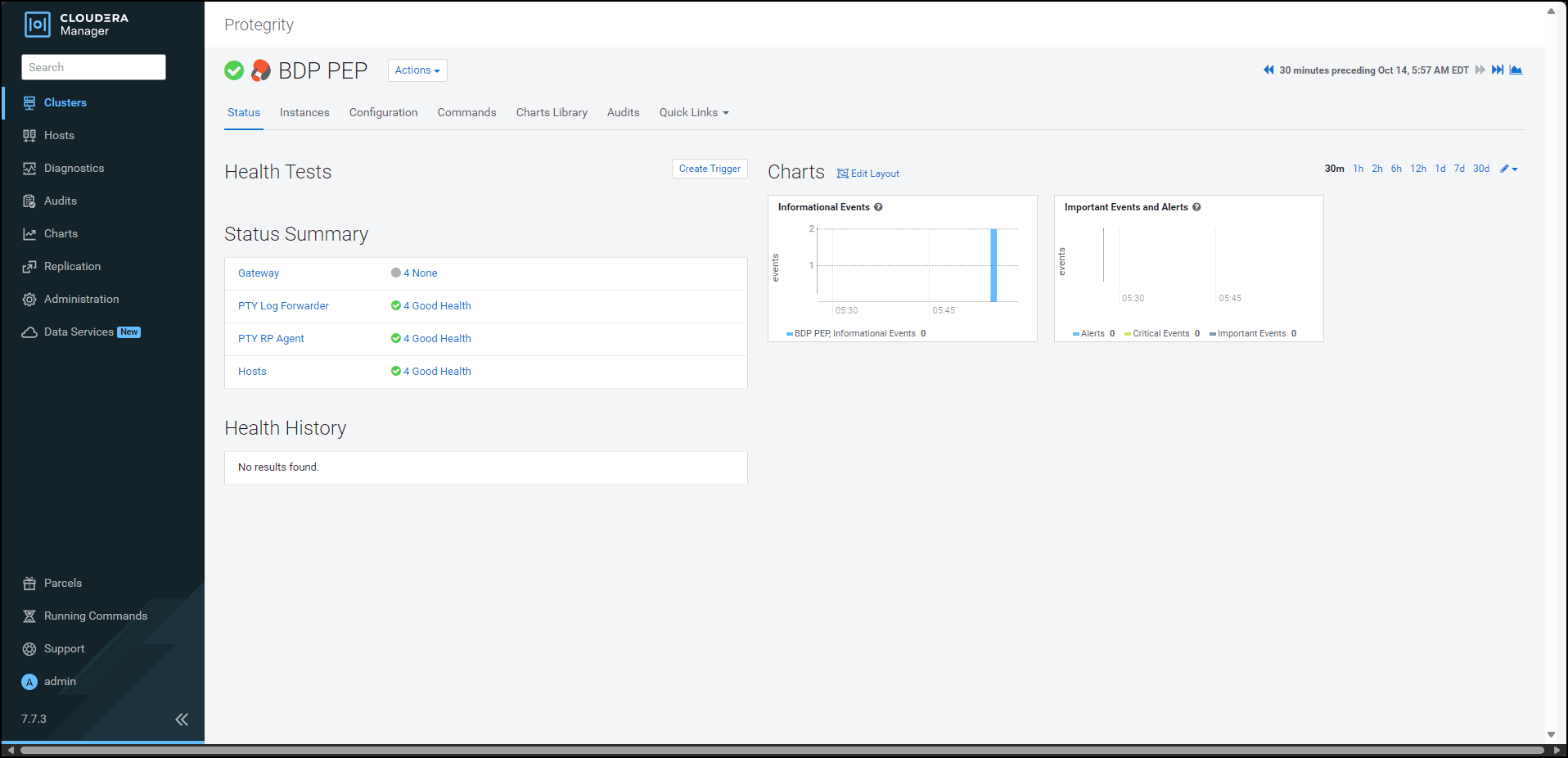

- 10.3.4.3:

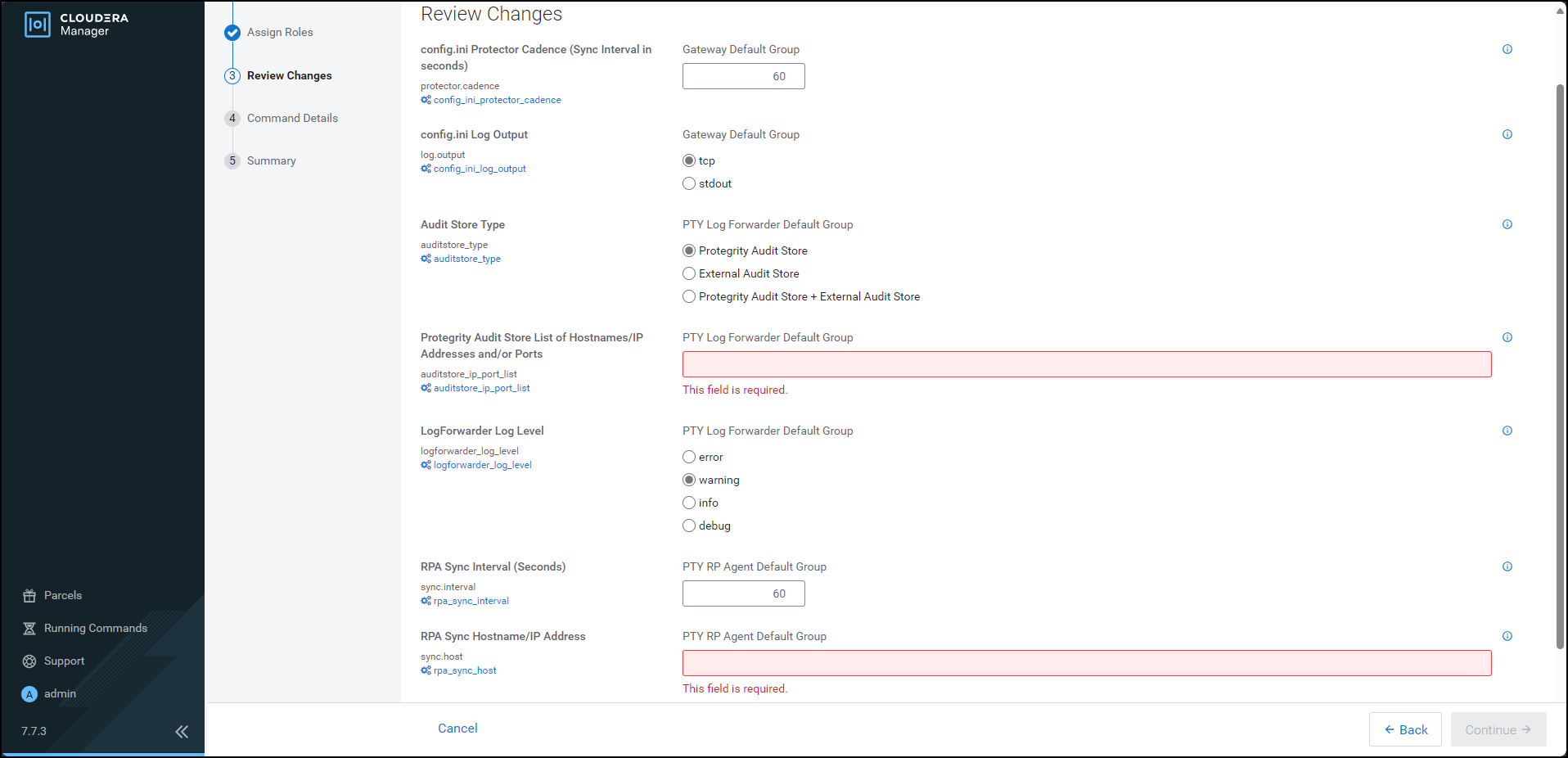

- 10.3.5: Configuring the Protector

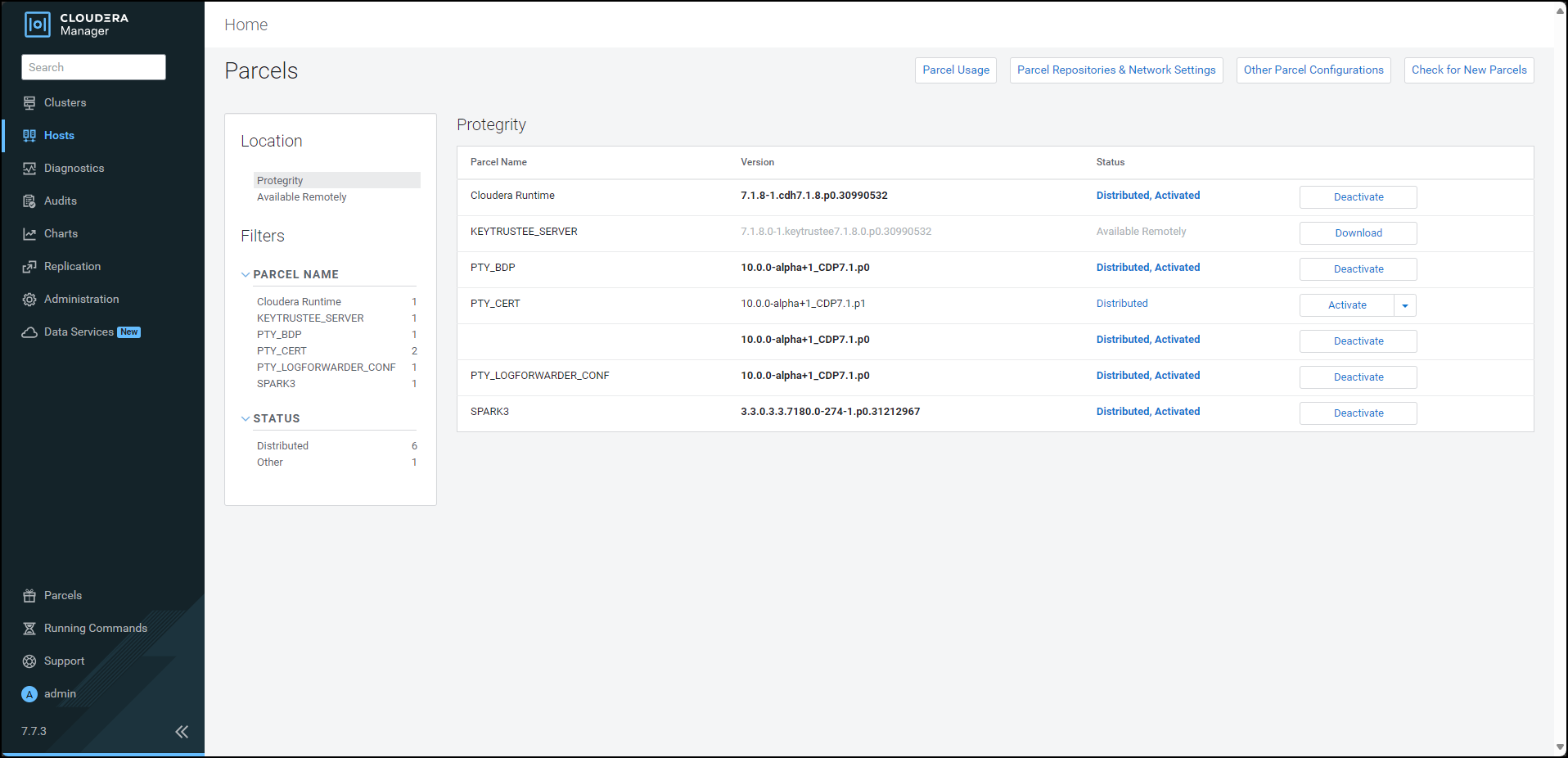

- 10.3.5.1: Updating the Parcels

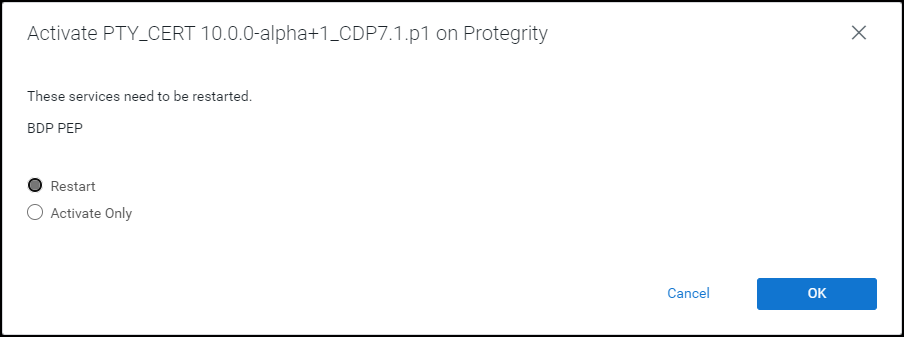

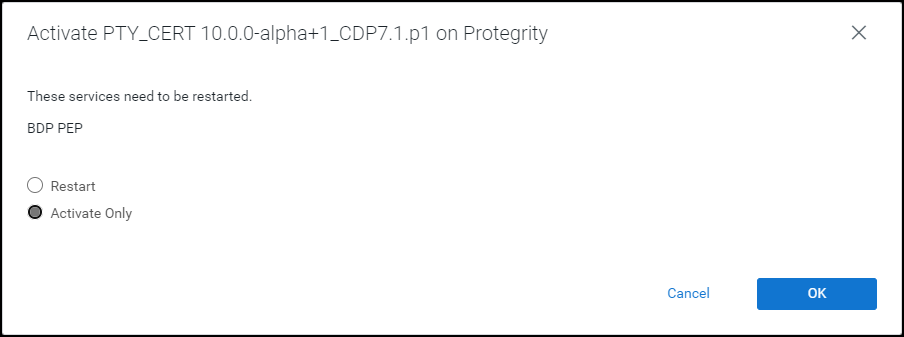

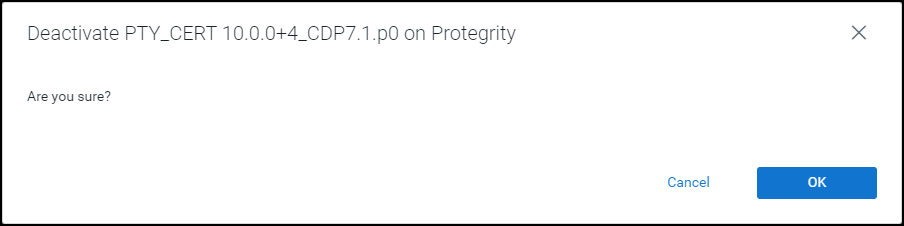

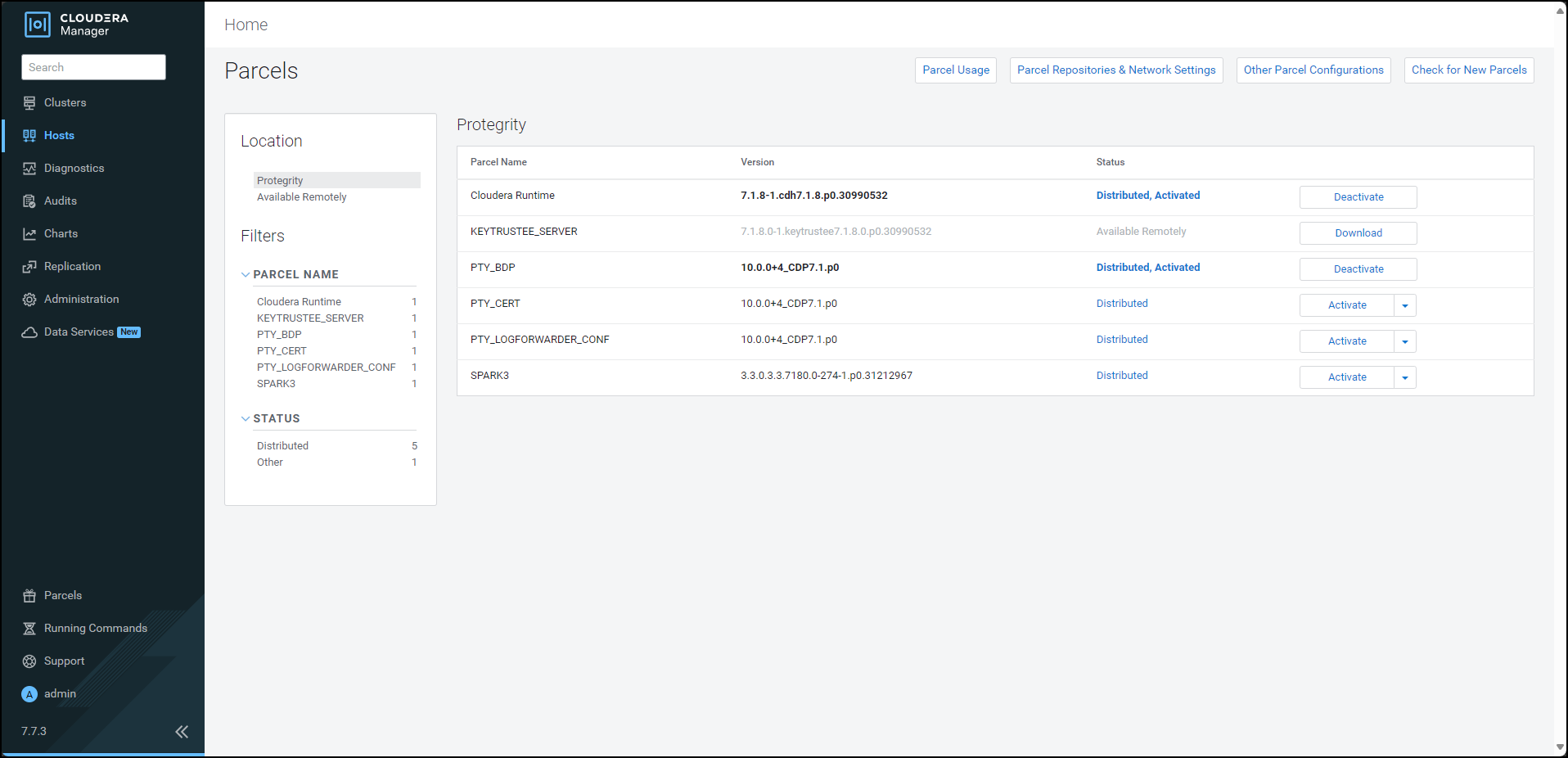

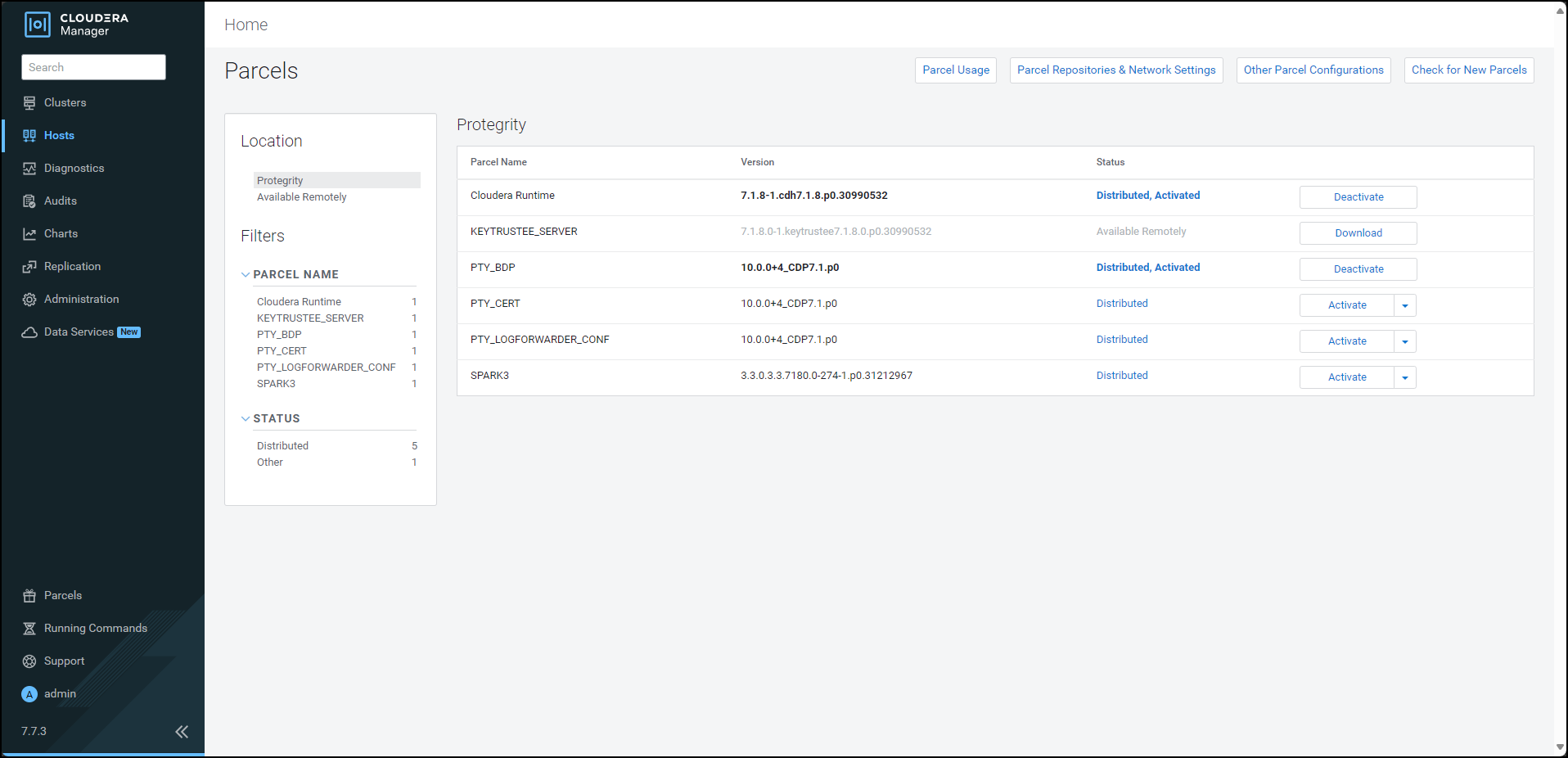

- 10.3.5.1.1: Updating the Certifcate Parcels with a Restart

- 10.3.5.1.2: Updating the Certificate Parcels without a Restart

- 10.3.5.1.3: Updating the Log Forwarder Parcel

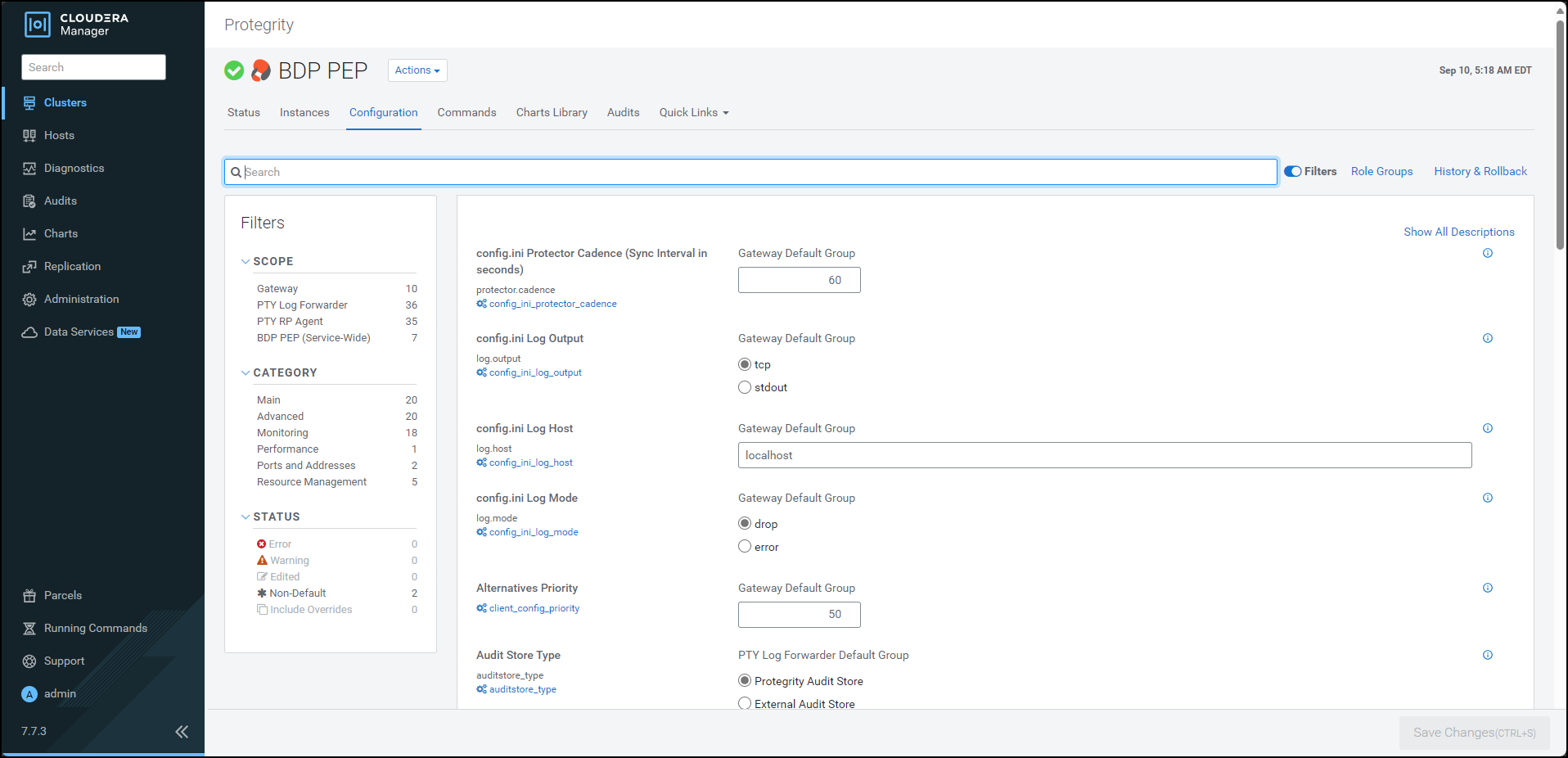

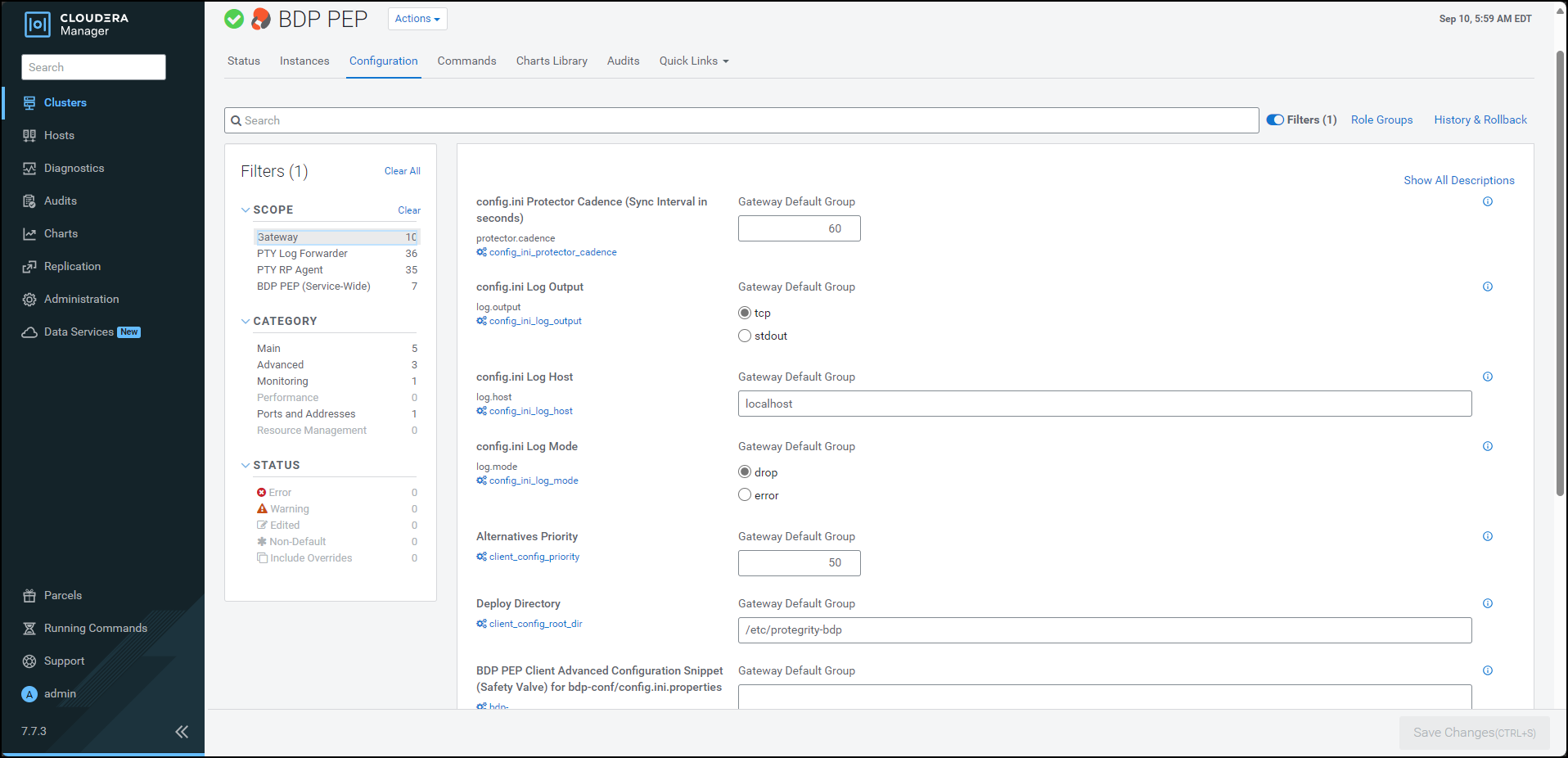

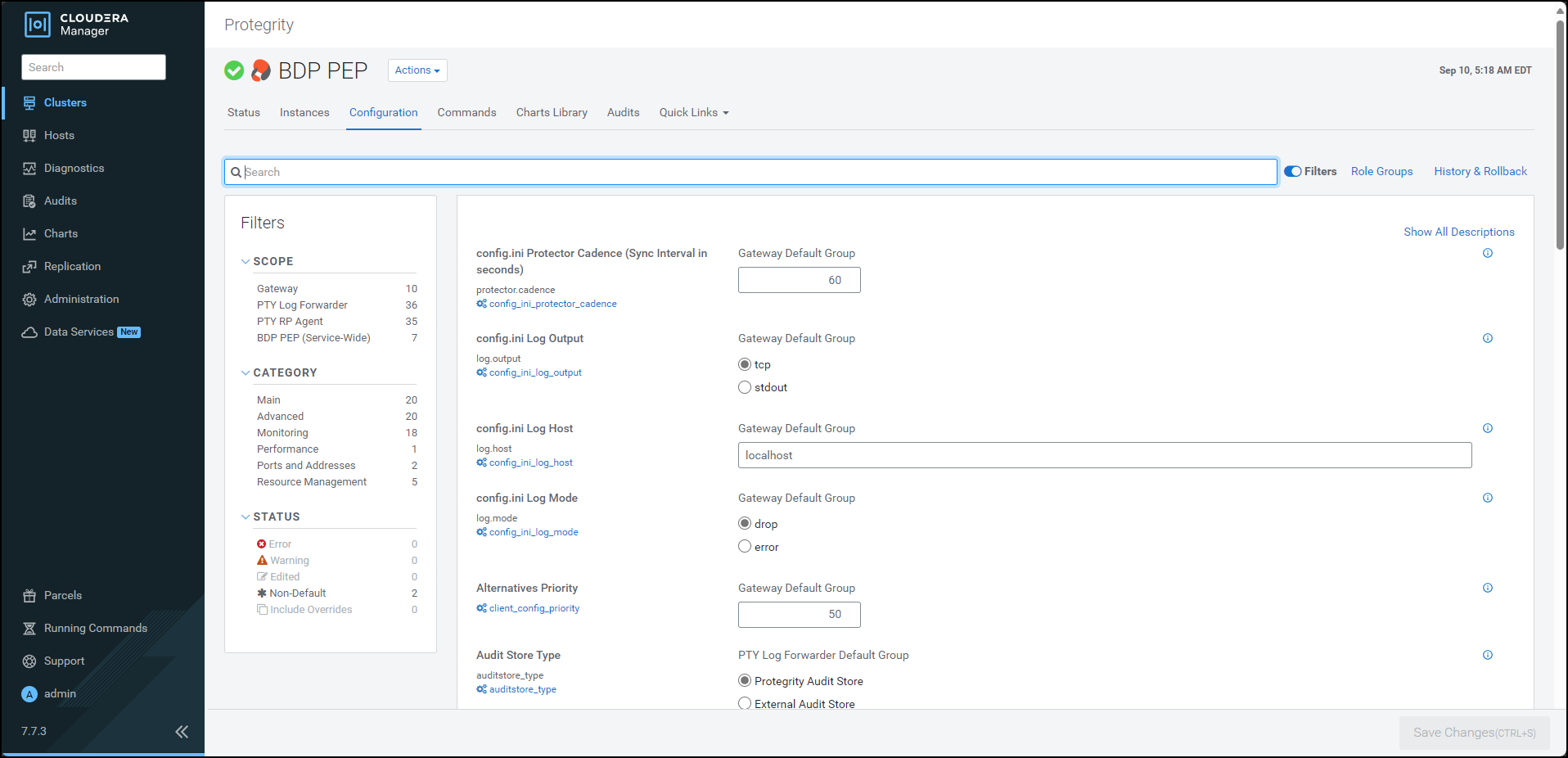

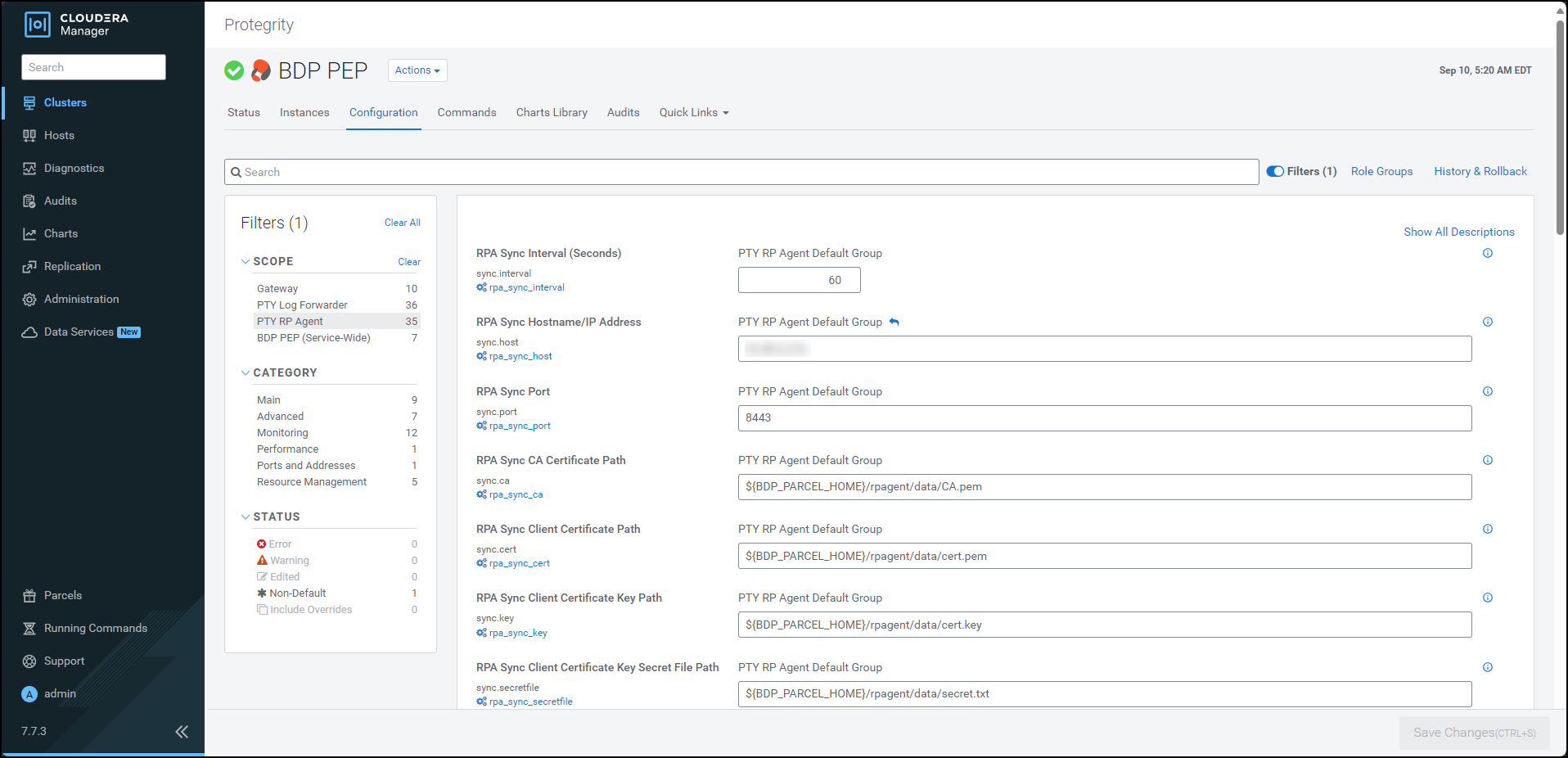

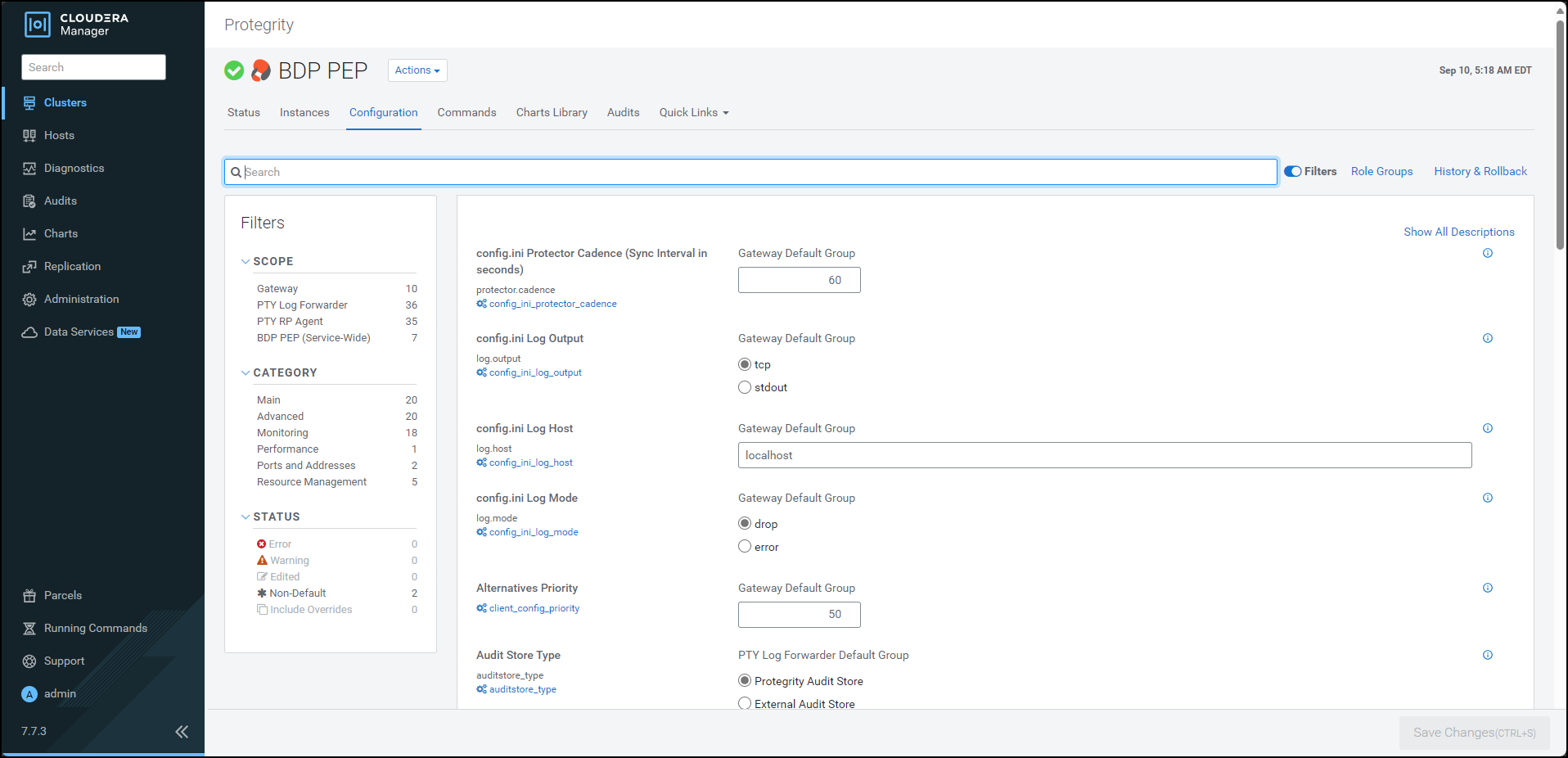

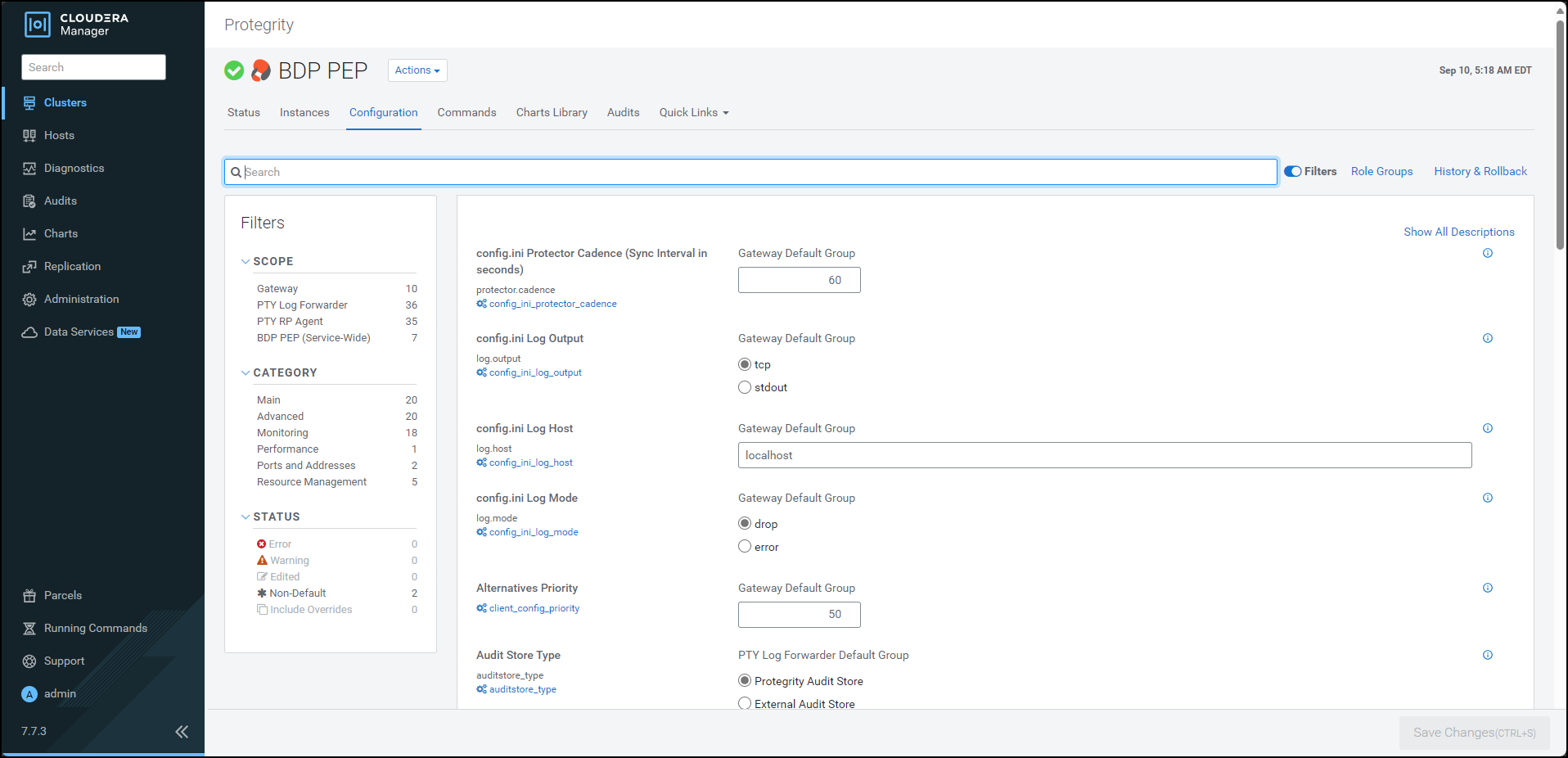

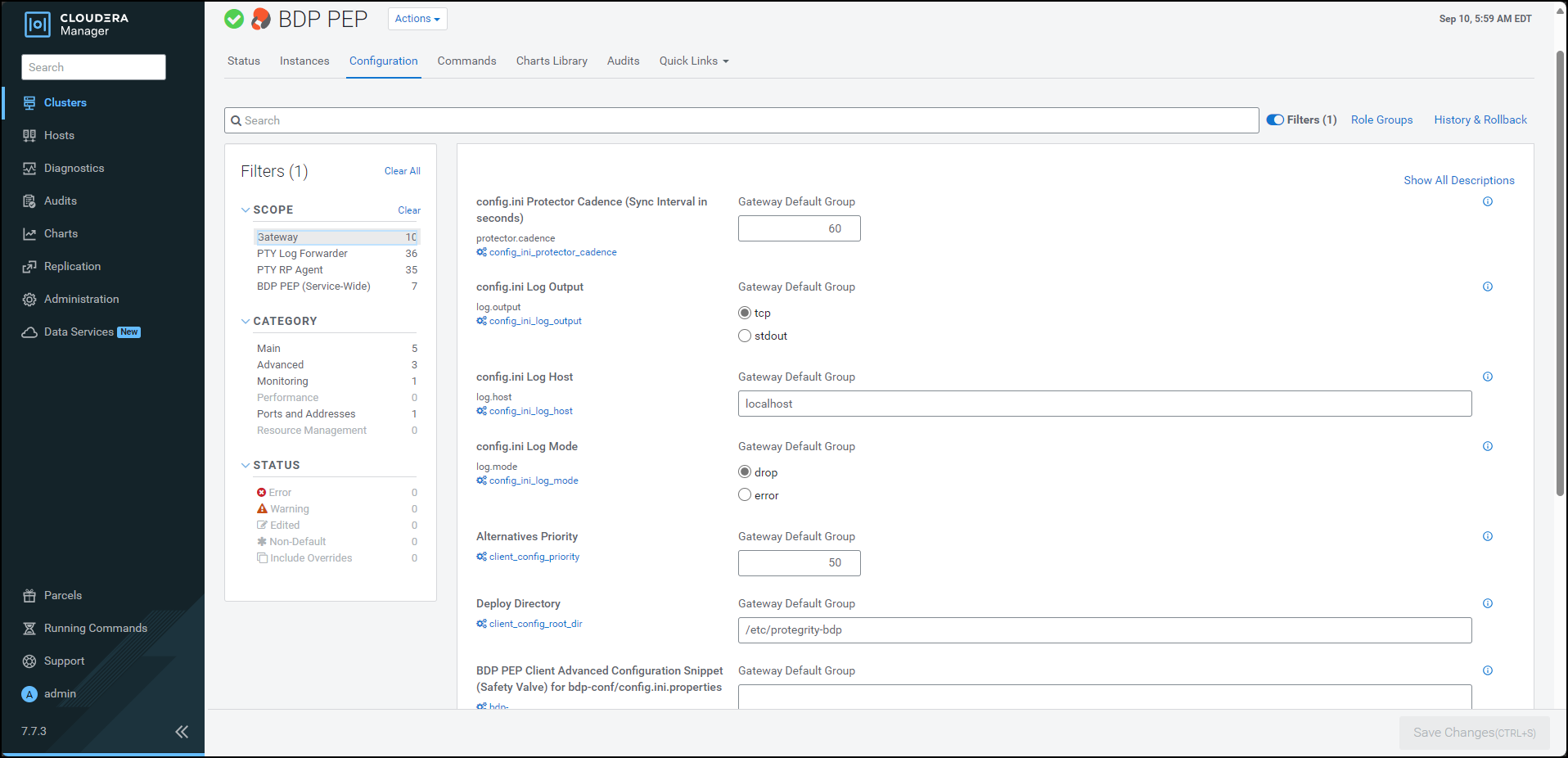

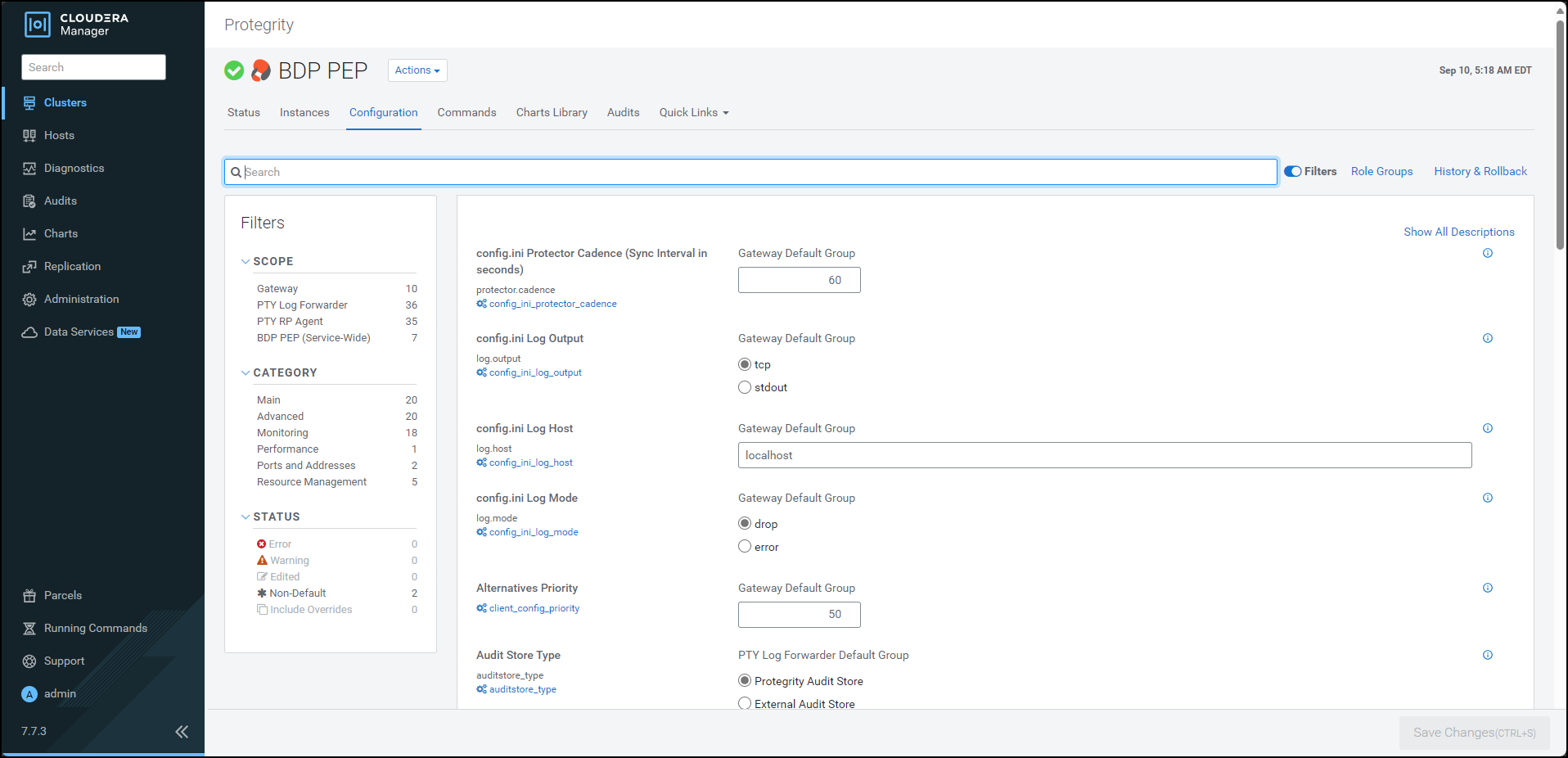

- 10.3.5.2: Setting the Configurations

- 10.3.5.2.1: Updating Parameters in the config.ini file

- 10.3.5.2.2: Updating Parameters for the RP Agent

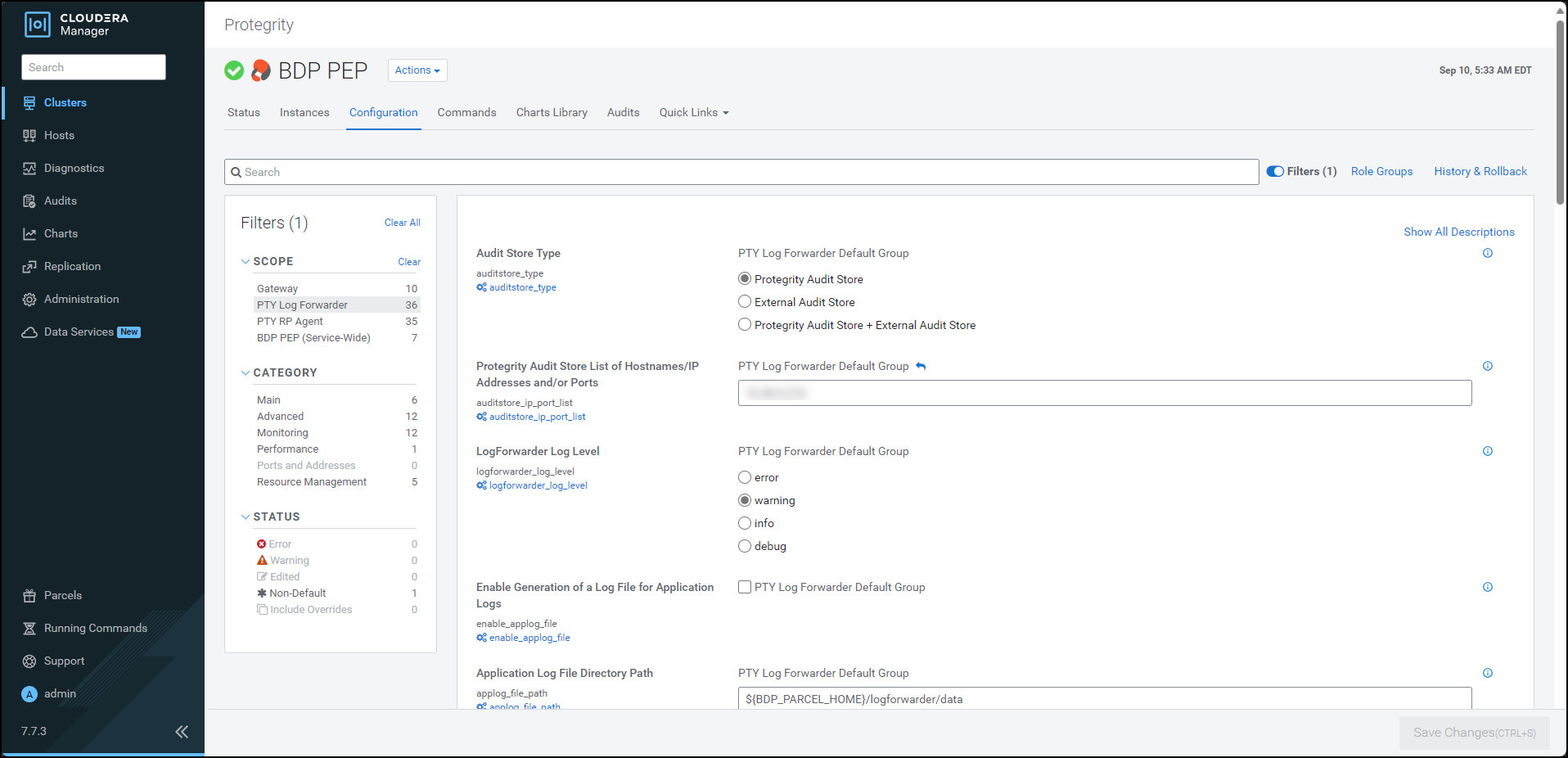

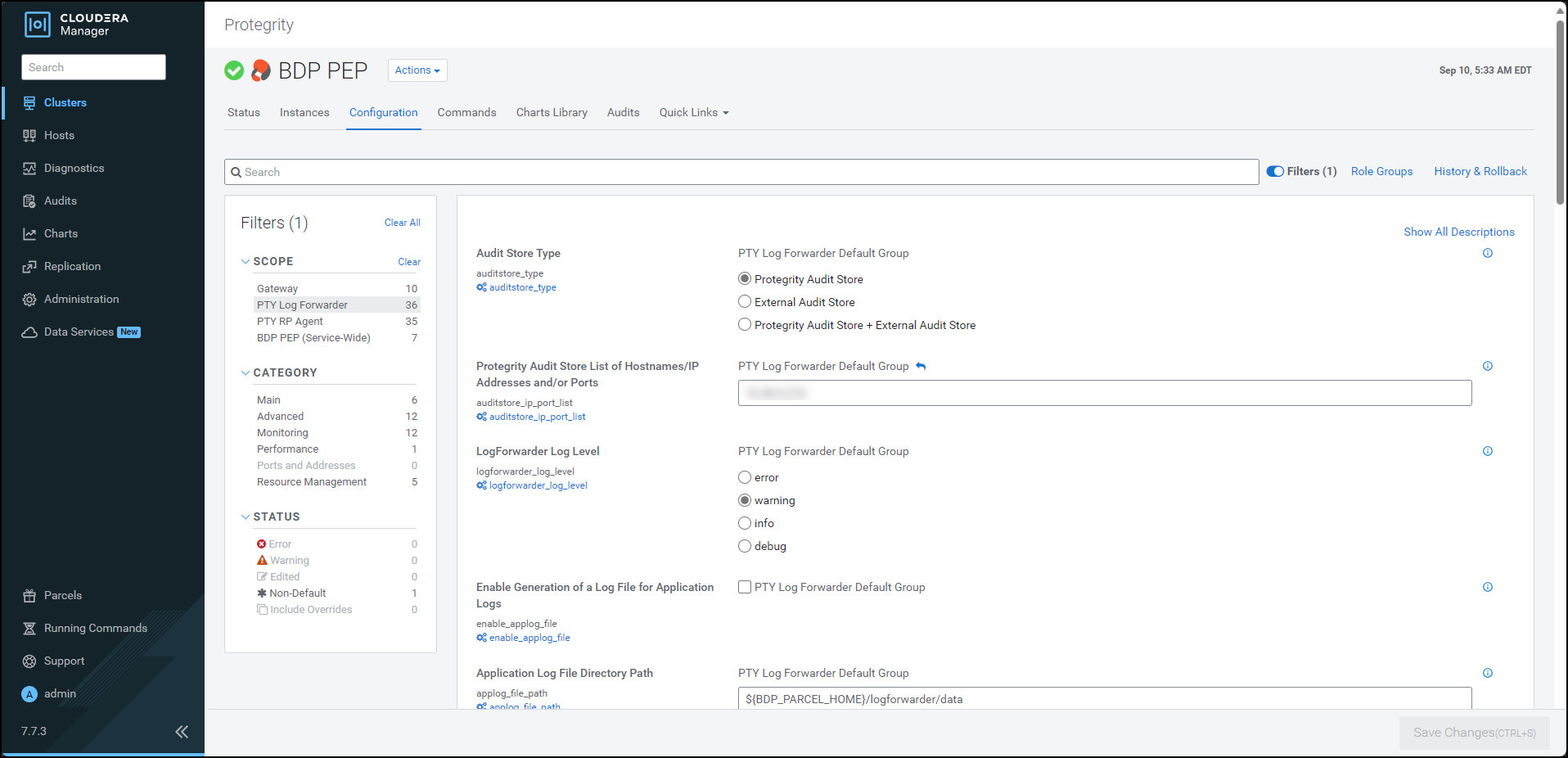

- 10.3.5.2.3: Updating Parameters for the Log Forwarder

- 10.3.5.2.4: Adding a new configuration parameter

- 10.3.5.2.5: Setting the Big Data Protector configuration

- 10.3.5.2.6: Enabling the application log file

- 10.3.5.3: Creating the User Defined Functions

- 10.3.5.3.1: Registering and dropping the Hive UDFs

- 10.3.5.3.2: Registering the Spark UDFs

- 10.3.5.3.3: Registering the Impala UDFs

- 10.3.5.3.4: Installing the Impala UDFs

- 10.3.6: Uninstalling the Protector

- 10.3.6.1: Dropping the User Defined Functions

- 10.3.6.2: Uninstalling the Impala UDFs

- 10.3.6.3: Restoring the Big Data Protector configuration

- 10.3.6.4: Removing the Big Data Protector Services

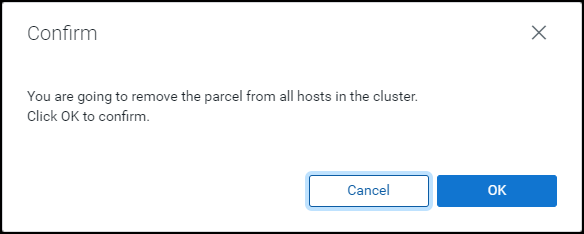

- 10.3.6.5: Deactivating the parcels

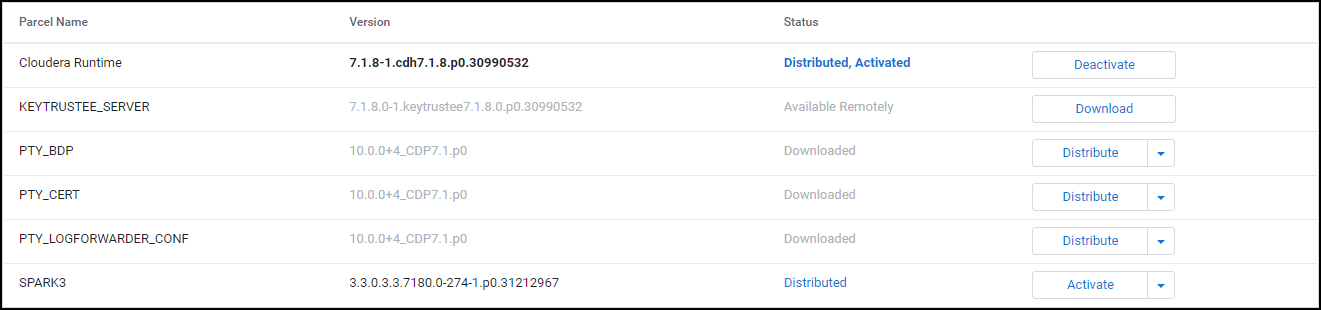

- 10.3.6.6: Removing the parcels

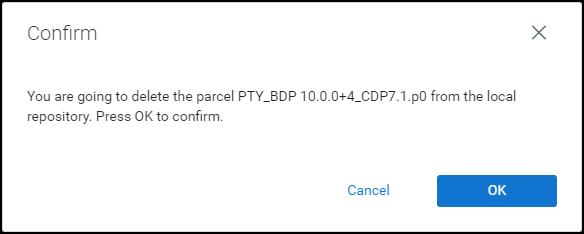

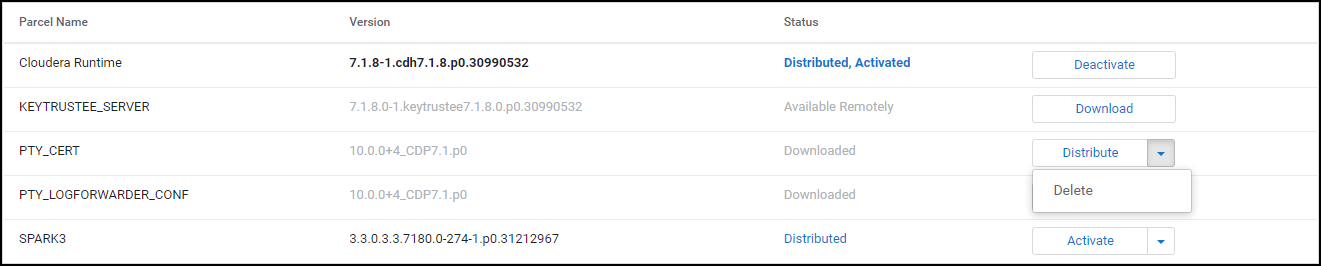

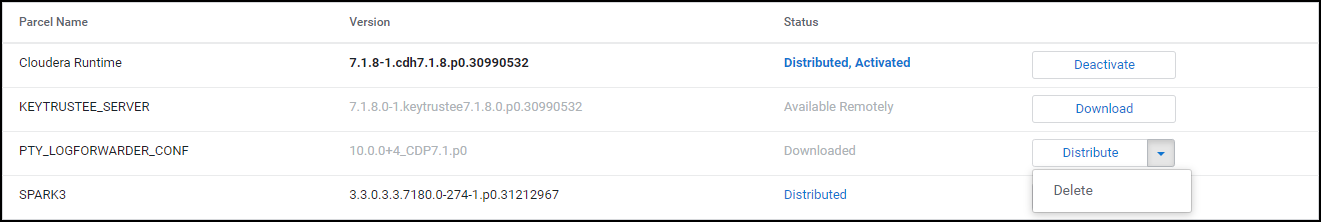

- 10.3.6.7: Deleting the parcels from the local repository

- 10.3.6.8: Deleting the CSD files

- 10.4: CDP AWS DataHub

- 10.4.1: Understanding the architecture

- 10.4.2: System Requirements

- 10.4.3: Preparing the Environment

- 10.4.3.1: Extracting the package

- 10.4.3.2: Executing the configurator script

- 10.4.3.3: Registering the Recipe Scripts

- 10.4.3.4: Creating and Registering the Custom Cluster Template

- 10.4.4: Installing the Big Data Protector

- 10.4.4.1: Creating the DataHub Cluster

- 10.4.5: Configuring the Big Data Protector

- 10.4.5.1: Setting the Parameters Manually

- 10.4.5.2: Setting the Parameters using the Helper Script

- 10.4.5.3: Installing the UDFs using the Helper Script

- 10.4.5.4: Updating the Configuration Parameters

- 10.4.5.5: Updating the Certificates Parcel

- 10.4.5.6: Updating the Log Forwarder Parcel

- 10.4.6: Uninstalling the Big Data Protector

- 10.5: User Defined Functions and APIs

- 10.5.1: MapReduce APIs

- 10.5.2: Hive UDFs

- 10.5.3: Pig UDFs

- 10.5.4: HBase Commands

- 10.5.5: Impala UDFs

- 10.5.6: Spark Java APIs

- 10.5.7: Spark SQL UDFs

- 10.5.8: PySpark - Scala Wrapper UDFs

- 10.5.9: Unity Catalog Batch Python UDFs

- 10.6: Additional Information

- 11: Data Warehouse Protectors

- 11.1: Deploying the Data Warehouse Protectors

- 11.2: Teradata Data Warehouse Protector

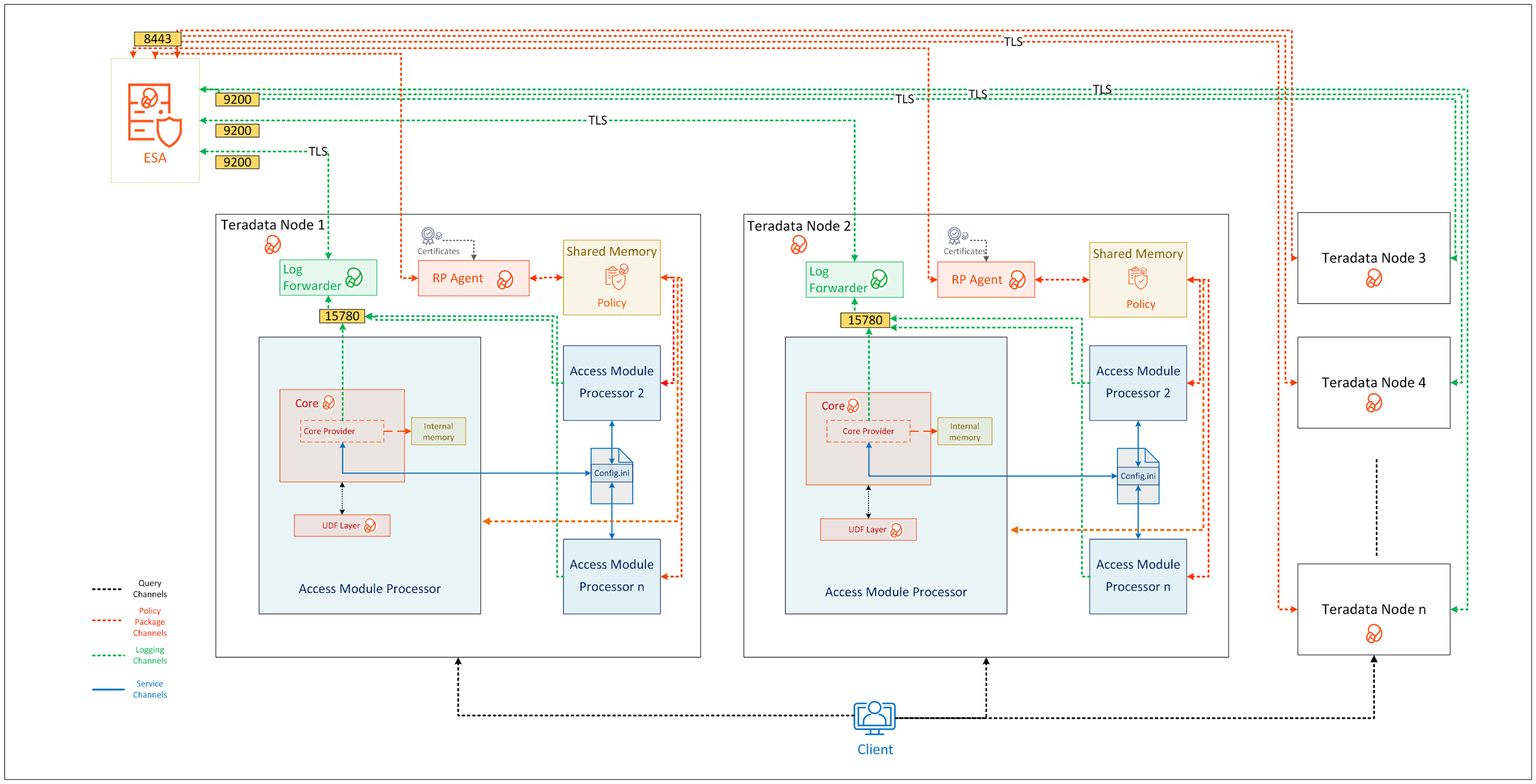

- 11.2.1: Understanding the Architecture

- 11.2.2: System Requirements

- 11.2.3: Preparing the Environment

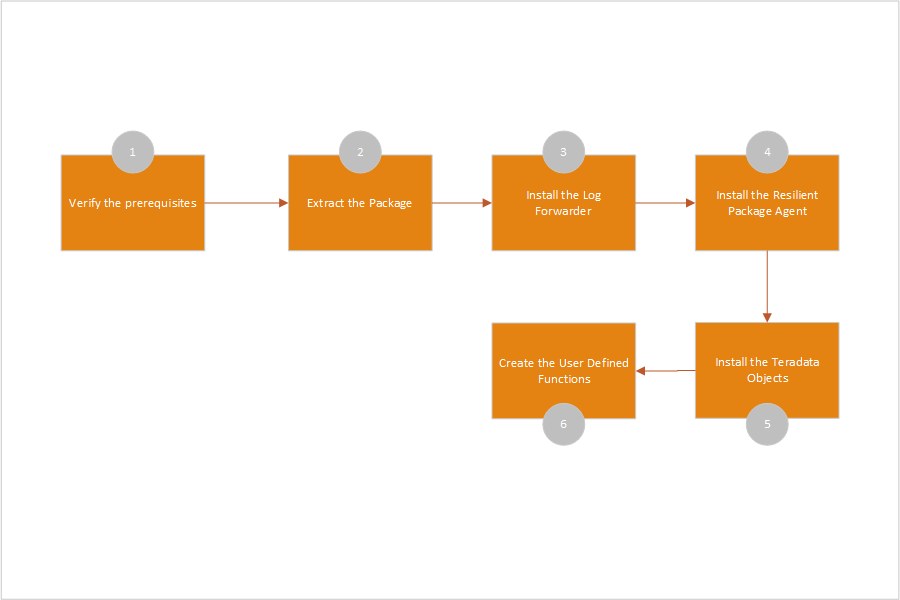

- 11.2.3.1: Extracting the Teradata Installation Package

- 11.2.3.2: Installing the Log Forwarder

- 11.2.3.3: Installing the Resilient Package Agent

- 11.2.4: Installing the Protector

- 11.2.4.1: Installing the Teradata Objects

- 11.2.4.2: Creating the Teradata User Defined Functions (UDFs)

- 11.2.4.3: Installing the Teradata User Defined Types (UDTs)

- 11.2.4.4: Creating the Teradata User Defined Types (UDTs)

- 11.2.5: Configuring the Protector

- 11.2.5.1: Working with the config.ini file

- 11.2.5.1.1: Accessing the config.ini File

- 11.2.5.1.2: Understanding the Parameters in the config.ini File

- 11.2.5.2: Updating the Output Buffer for the Unicode UDFs

- 11.2.5.2.1: Updating the dbpuserconf.ini file

- 11.2.5.2.2: Updating the createvarcharunicode.sql file

- 11.2.6: Uninstalling the Protector

- 11.2.6.1: Uninstalling the Log Forwarder

- 11.2.6.2: Uninstalling the RPAgent

- 11.2.6.3: Uninstalling the User Defined Functions (UDFs)

- 11.2.6.4: Removing the Installation Directory

- 11.3: User Defined Functions and APIs

- 11.3.1: Teradata UDFs

- 11.3.1.1: General UDFs

- 11.3.1.2: Access Check UDFs

- 11.3.1.3: Varchar Latin UDFs

- 11.3.1.4: Varchar Unicode UDFs

- 11.3.1.5: Float UDFs

- 11.3.1.6: Small Integer UDFs

- 11.3.1.7: Integer UDFs

- 11.3.1.8: Big Integer UDFs

- 11.3.1.9: Date UDFs

- 11.3.1.10: 8-Byte and 16-Byte Decimal UDFs

- 11.3.1.11: JSON UDFs

- 11.3.1.12: XML UDFs

- 11.3.1.13: Float UDFs for No Encryption

- 11.3.1.14: Date UDFs for No Encryption

- 11.3.1.15: 8-Byte AND 16-Byte Decimal UDFs for No Encryption

- 11.3.2: Trino User Defined Functions and Procedures

- 11.3.2.1: General UDFs

- 11.3.2.2: VarChar UDFs

- 11.3.2.3: BigInt UDFs

- 11.3.2.4: SmallInt UDFs

- 11.3.2.5: Integer UDFs

- 11.3.2.6: Date UDFs

- 11.3.2.7: DateTime UDFs

- 11.3.2.8: VarChar Encryption UDFs

- 11.3.2.9: Unicode UDFs

- 11.3.2.10: Decimal UDFs

- 11.3.2.11: Double UDFs

- 11.3.2.12: VarBinary Encryption UDFs

- 11.4: Appendix

- 11.4.1: Additional references for the Protectors

- 11.4.1.1: Additional references for the Teradata Protector

- 11.4.1.1.1: Configuring access to execute queries

- 11.4.1.1.2: Teradata Query Bands and Trusted Sessions

- 11.4.2: Data Warehouse Sample Scripts

- 11.4.3: Return Codes for Data Warehouse Protectors

- 11.4.4: Supported Data Warehouse Protectors Matrix

- 11.5: Trino Data Warehouse Protector

- 11.5.1: Understanding the Architecture

- 11.5.2: System Requirements

- 11.5.3: Preparing the Environment

- 11.5.3.1: Extracting the Files from the Installation Package

- 11.5.3.2: Executing the Configurator Script

- 11.5.4: Installing the Trino Protector

- 11.5.5: Configuring the Trino Protector

- 11.5.5.1: Working with Cluster Utilities

- 11.5.5.1.1: Log Forwarder Control Script

- 11.5.5.1.2: RPAgent Control Script

- 11.5.5.1.3: Sync Config.ini

- 11.5.5.1.4: Sync RPAgent

- 11.5.5.1.5: Sync Log Forwarder

- 11.5.6: Uninstalling the Trino Protector

- 12: Database Protector

- 12.1: Oracle Database Protector

- 12.1.1: Understanding the Architecture

- 12.1.2: System Requirements

- 12.1.3: Preparing the Environment

- 12.1.3.1: Extracting the Installation Package

- 12.1.3.2: Installing the Log Forwarder

- 12.1.3.3: Installing the RPAgent

- 12.1.4: Installing the Oracle Database Protector

- 12.1.4.1: Installing the Policy Enforcement Point (PEP)

- 12.1.4.2: Creating the User Defined Functions (UDFs)

- 12.1.5: Configuring the Oracle Database Protector

- 12.1.5.1: User Impersonation

- 12.1.5.2: Enterprise User Security (EUS) in the Oracle Database

- 12.1.6: Uninstalling the Oracle Database Protector

- 12.2: User Defined Functions and APIs

- 12.2.1: Oracle User Defined Functions and APIs

- 12.2.1.1: General UDFs

- 12.2.1.2: Access Check Procedures

- 12.2.1.3: Insert Encryption UDFs

- 12.2.1.4: Insert No-Encryption, Token, and FPE UDFs

- 12.2.1.5: Multiple Insert Encryption Procedures

- 12.2.1.6: Select Decryption UDFs

- 12.2.1.7: Select No-Encryption, Token, and FPE UDFs

- 12.2.1.8: Update Encryption UDFs

- 12.2.1.9: Update No-Encryption, Token, and FPE UDFs

- 12.2.1.10: Multiple Update Encryption Procedures

- 12.2.1.11: Hash UDFs

- 12.2.1.12: Blob UDFs

- 12.2.1.13: Clob UDFs

- 12.2.1.14: Bulk UDFs

- 12.2.1.15: Oracle Input Datatype to UDF Mapping

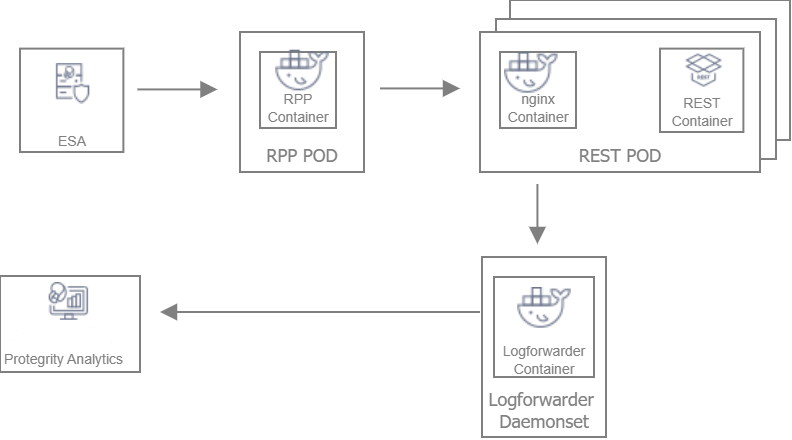

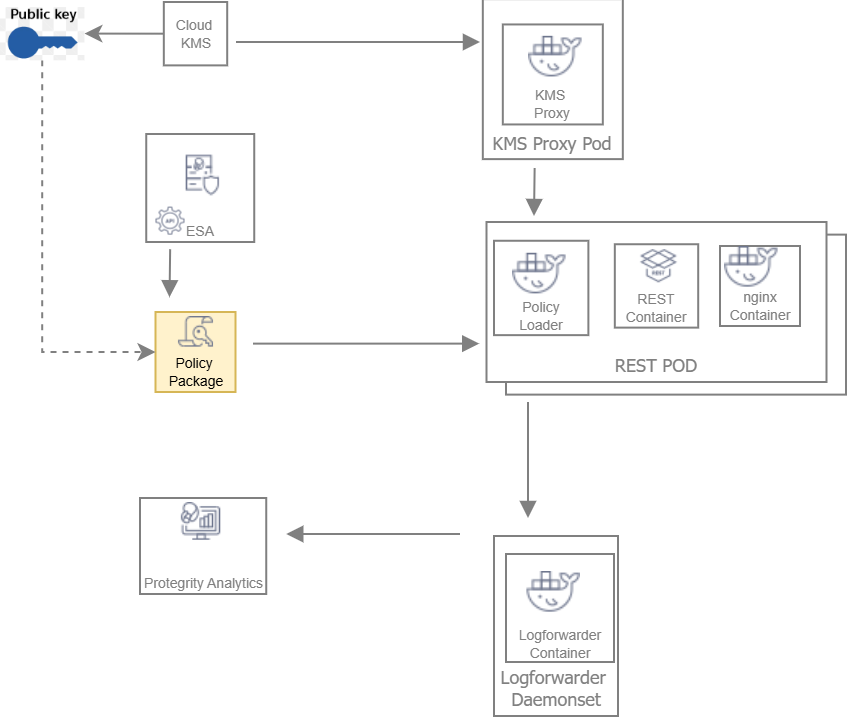

- 13: REST Container

- 13.1: Understanding the Architecture

- 13.1.1: Architecture and Components using Dynamic-based Deployment

- 13.1.2: Architecture and Components using Static Deployment

- 13.2: System Requirements

- 13.2.1: Software Requirements

- 13.2.2: Hardware Requirements

- 13.3: Preparing the Environment

- 13.3.1: Initializing the Jump Box

- 13.3.2: Extracting the Installation Package

- 13.3.3: Creating Certificates

- 13.3.4: Uploading the Images to the Container Repository

- 13.3.5: Creating the AWS Environment

- 13.3.5.1: Creating the AWS Setup for Static Mode

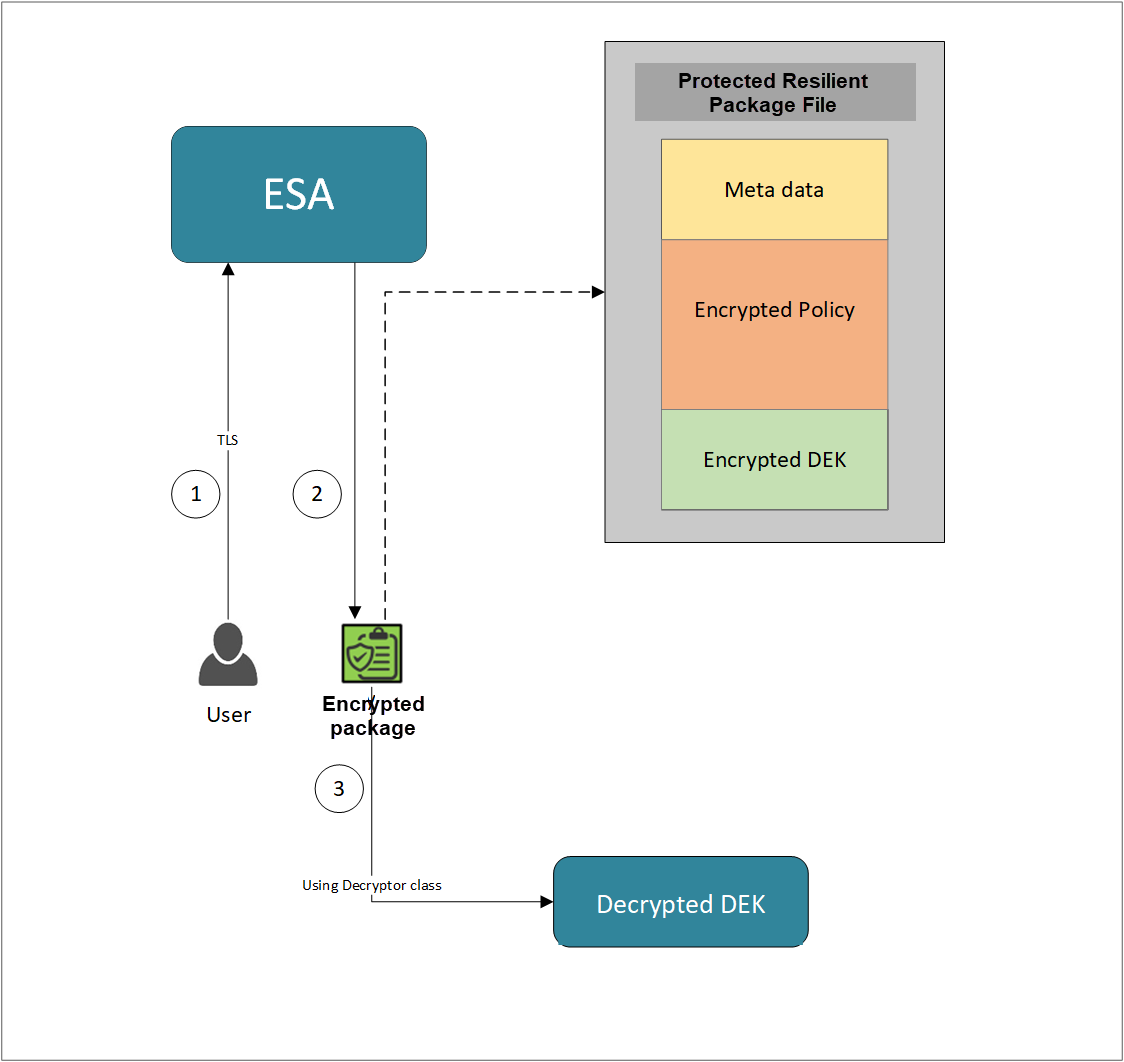

- 13.3.5.1.1: Creating an Data Encryption Key (DEK)

- 13.3.5.1.2: Creating an AWS S3 Bucket

- 13.3.5.1.3: Creating an AWS EFS

- 13.3.5.2: Creating a Kubernetes Cluster

- 13.4: Installing the Protector

- 13.4.1: Deploying REST Container for Dynamic Method

- 13.4.1.1: Deploying Log Forwarder

- 13.4.1.2: Deploying Resilient Package Proxy (RPP)

- 13.4.1.3: Deploying the REST Container

- 13.4.2: Deploying REST Product in Static Mode

- 13.4.2.1: Retrieving the Policy Package from the ESA

- 13.4.2.2: Deploying Log Forwarder

- 13.4.2.3: Deploying KMSProxy Container

- 13.4.2.4: Deploying REST Container Using Static Method

- 13.4.2.5: Updating the Policy Package

- 13.5: Application Protector API on REST

- 13.5.1: Version 4 (V4) Application Protector API on REST

- 13.5.1.1: List of REST APIs

- 13.5.1.1.1: HTTP GET version

- 13.5.1.1.2: HTTP POST protect

- 13.5.1.1.3: HTTP POST unprotect

- 13.5.1.1.4: HTTP POST reprotect

- 13.5.1.1.5: HTTP GET doc

- 13.5.1.1.6: HTTP Headers

- 13.5.1.2: V4 AP REST HTTP Response Codes

- 13.5.2: Version 1 (V1) Application Protector API on REST

- 13.5.2.1: List of REST APIs

- 13.5.2.1.1: HTTP GET version

- 13.5.2.1.2: HTTP POST protect

- 13.5.2.1.3: HTTP POST unprotect

- 13.5.2.1.4: HTTP POST reprotect

- 13.5.2.1.5: HTTP Headers

- 13.5.2.2: Error Handling for v1 API

- 13.5.2.3: V1 AP REST HTTP Response Codes

- 13.6: Using Samples

- 13.7: Running the Autoscaling Script

- 13.8: Using Dockerfiles to Build Custom Images

- 13.9: Appendix - Deploying the Helm Charts by Using the Set Argument

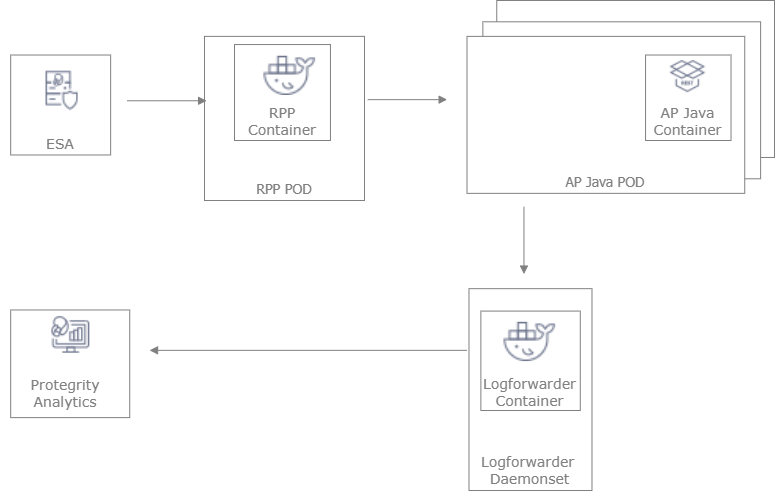

- 14: Application Protector Java Container

- 14.1: Understanding the Architecture

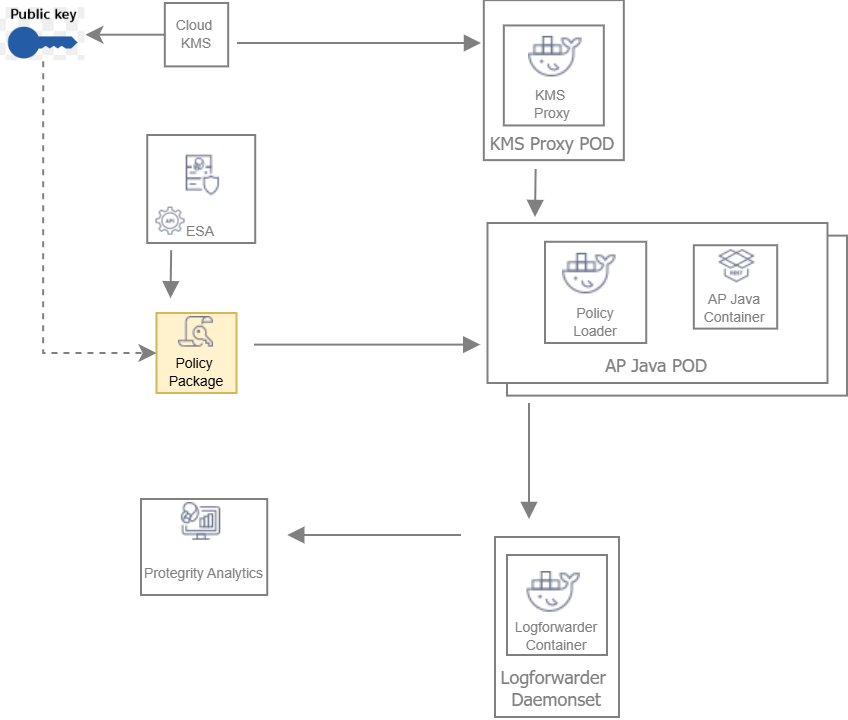

- 14.1.1: Architecture and Components using Dynamic-based Deployment

- 14.1.2: Architecture and Components using Static Deployment

- 14.2: System Requirements

- 14.2.1: Software Requirements

- 14.2.2: Hardware Requirements

- 14.3: Preparing the Environment

- 14.3.1: Initializing the Jump Box

- 14.3.2: Extracting the Installation Package

- 14.3.3: Creating Certificates

- 14.3.4: Uploading the Images to the Container Repository

- 14.3.5: Creating the AWS Environment

- 14.3.5.1: Creating the AWS Setup for Static Mode

- 14.3.5.1.1: Creating a Data Encryption Key (DEK)

- 14.3.5.1.2: Creating an AWS S3 Bucket

- 14.3.5.1.3: Creating an AWS EFS

- 14.3.5.2: Creating a Kubernetes Cluster

- 14.4: Installing the Protector

- 14.4.1: Deploying AP Java Container for Dynamic Method

- 14.4.1.1: Deploying Log Forwarder

- 14.4.1.2: Deploying Resilient Package Proxy (RPP)

- 14.4.1.3: Deploying the AP Java Container with Dynamic Method

- 14.4.2: Deploying AP Java Container in Static Mode

- 14.4.2.1: Retrieving the Policy Package from the ESA

- 14.4.2.2: Deploying Log Forwarder

- 14.4.2.3: Deploying KMSProxy Container

- 14.4.2.4: Deploying AP Java Container Using Static Method

- 14.4.2.5: Updating the Policy Package

- 14.5: Running Security Operations

- 14.6: Using Dockerfiles to Build Custom Images

- 14.7: Appendix - Deploying the Helm Charts by Using the Set Argument

- 15: Protegrity AWS EC2 Protector

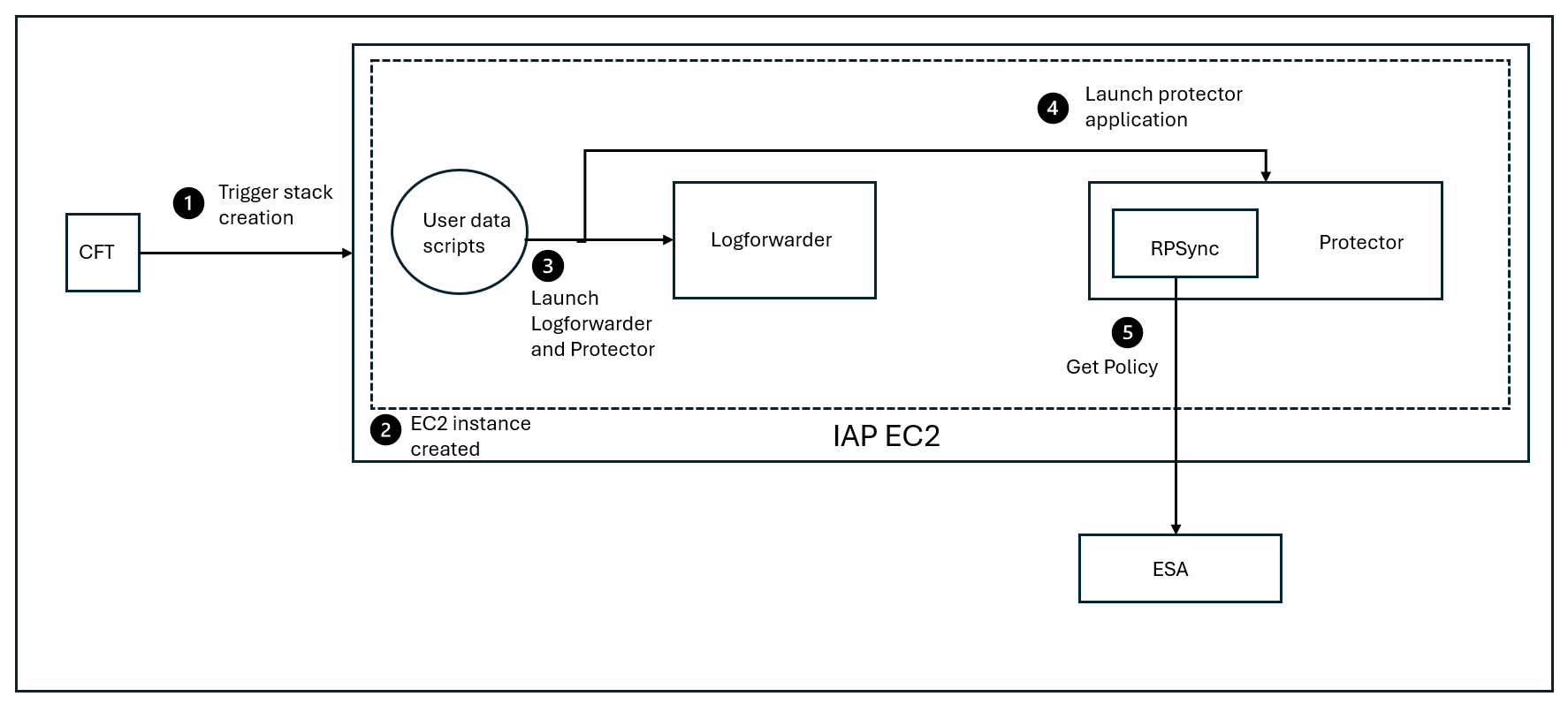

- 15.1: Understanding the Architecture

- 15.2: System Requirements

- 15.3: Preparing the Environment

- 15.3.1: Initializing the Jump Box

- 15.3.2: Extracting the Installation Package

- 15.3.3: Creating a JAR for the Sample Application

- 15.3.4: Creating a Linux AMI for the Sample Application

- 15.3.5: Creating Certificates and Keys for TLS Authentication

- 15.3.6: Uploading the Server Certificates to the AWS Identity and Access Management

- 15.3.7: Uploading the RPSyncConfig Package to the AWS S3 Bucket

- 15.3.8: Preparing the AWS Requirements

- 15.4: Installing the Protector

- 15.5: Running Security Operations

- 15.6: Upgrading the Protector from Version 9.x to 10.x

- 15.7: Upgrading the Protector from Version 10.x to 10.y

1 - Configuration Parameters for Protector

The protectors can be configured by specifying the parameters in a configuration file or by specifying the parameters as environment variables. The parameters specified as environment variables have a higher priority than the parameters specified in the configuration file. The value specified in the environment varibles overrides the value specified in the configuration file for the same parameter.

The configuration parameters can be categorized as follows:

- Core - Responsible for performing URP operations and generating the logs.

- Resilient Package Sync - Responsible for retrieving the package from the ESA, RPP, or the shared memory at regular intervals.

- Resilient Package Status - Responsible for sending the status of the resilient package to Insight at regular intervals.

- Forwarding Audits and Logs - Responsible for forwarding the audits and logs to the Log Forwarder or other outputs.

The configuration parameters can be mandatory and optional. Mandatory parameters are the ones where users are expected to modify the values as per their requirement. Optional parameters are the ones where users are recommended to retain the default values. Users should exercise extreme caution while updating the values for any optional parameter.

Core Configuration Parameter

The following is the Core configuration parameter.

| Parameter in Configuration File | Environment Variable | Description | Mandatory or Optional |

|---|---|---|---|

| flushinterval | PTY_CORE_FLUSHINTERVAL | Specify the time interval in seconds after which the audits are flushed from the cache. The default value is 1. The maximum value is 86400. 0 is an invalid value. Caution: If you change the default value, then the timestamp on the protection audits will not be accurate. For example, if you set this value to 60, then the flush audits will happen once a minute. Every audit that is flushed will have the timestamp of when the flushing occurred, even if the protection happened 59 seconds ago. This caution is not applicable to short-lived nodes and short-lived protectors. In these instances, the flushing always happens when the process is shut down correctly. | Optional |

In the configuration file, specify this parameter under the section [core].

For example:

[core]

flushinterval = <value>

Resilient Package Sync Configuration Parameters

The following are the configuration parameters for Resilient Package Sync.

| Parameter in Configuration File | Environment Variable | Description | Mandatory or Optional |

|---|---|---|---|

| interval | PTY_SYNC_INTERVAL | Specifies the time interval in seconds after which the protector retrieves a new package from the ESA, RPP, or shared memory. The default value is 60. The maximum value is 86400. 0 is an invalid value. | Optional |

| protocol | PTY_SYNC_PROTOCOL | Specify the protocol used by the protector to retrieve the resilient packages. Specify one of the following options: The default value is https. | Optional |

| host | PTY_SYNC_HOST | Specify the host name or IP address of the upstream server that is providing the resilient packages. | Mandatory if protocol is set to https. |

| port | PTY_SYNC_PORT | Specify the port number of the upstream server that is providing the resilient packages. The default value is 25400. | Mandatory if protocol is set to https. |

| ca | PTY_SYNC_CA | Specify the path of the CA certificate that is used to authenticate the ESA. For more information about certificates, refer to Certificate Management in ESA | Mandatory if protocol is set to https. |

| cert | PTY_SYNC_CERT | Specify the path of the client certificate for the protector. It is used for mutual TLS communication between the upstream server and the protector. | Mandatory if protocol is set to https. |

| key | PTY_SYNC_KEY | Specify the path to the client certificate key. This parameter is required if you are using a client certificate. | Mandatory if protocol is set to https. |

| secretfile | PTY_SYNC_SECRET_FILE | Specify the path to the file that contains the secret. This secret is used to decrypt the client certificate key. | Mandatory if protocol is set to https. |

| requesttimeout | PTY_SYNC_REQUESTTIMEOUT | Timeout of the RP sync request in seconds. The default value is 0, which means that the timeout is never triggered. The maximum value is 86400. Caution: If you specify a timeout value and the network is slow, then it is possible that the request continuously times out before retrieving any package. | Optional |

| verify | PTY_SYNC_VERIFY | Verify whether the common name mentioned on the server certificate is the same one as specified in the company DNS. The default value is false. This parameter is not applicable if you are using self-signed certificates. | Optional |

| setlocalip | PTY_SYNC_SET_LOCAL_IP | Automatically add the local IP address of the protector, from where the sync request has originated, to the X-Forwarded-For header. The default value is true. | Optional |

| channel | PTY_SYNC_CHANNEL | Specify a communication channel to the shared memory. This parameter is required if you want to run multiple RPAs with different package versions on the same node. The default value is 0. The range of the value is from 0 to 255. | Optional |

In the configuration file, specify this parameter under the section [sync].

For example:

[sync]

interval = <value>

protocol = <value>

host = <value>

port = <value>

ca = <value>

cert = <value>

key = <value>

secretfile = <value>

requesttimeout = <value>

verify = <value>

setlocalip = <value>

channel = <value>

Resilient Package Status Configuration Parameter

The following is the configuration parameter for Resilient Package Status.

| Parameter in Configuration File | Environment Variable | Description | Mandatory or Optional |

|---|---|---|---|

| interval | PTY_STATUS_INTERVAL | Specifies the time interval in seconds after which the protectors send the status of the resilient package to Insight. The default value is 60. The maximum value is 86400. 0 is an invalid value. Caution: If you change the default value, then it might result in a mismatch in the status appearing on the Protector Status Dashboard. For example, if you change the interval to 3800, that is more than one hour, then the Connectivity status will show the status as error. This caution is not applicable to short-lived nodes and short-lived protectors. | Optional |

In the configuration file, specify this parameter under the section [status].

For example:

[status]

interval = <value>

Configuration Parameters for Forwarding Audits and Logs

The following are the configuration parameters for forwarding the audits and logs.

| Parameter in Configuration File | Environment Variable | Description | Mandatory or Optional |

|---|---|---|---|

| output | PTY_LOG_OUTPUT | Specify the output type for protection logs. Specify one of the following values:

| Optional |

| mode | PTY_LOG_MODE | Specify how the logs are handled in case the connection to the Log Forwarder is lost. Specify one of the following options:

This setting is only applicable for the protector logs sent from the protector, and not for the application logs available in the ESA. | Optional |

| host | PTY_LOG_HOST | Specify the host name or IP address of the machine where the Log Forwarder is installed. The logs will be forwarded from the protector to this host name or IP address. The default value is set to localhost. | Optional |

| port | PTY_LOG_PORT | Specify the port number of the machine where the Log Forwarder is installed. The logs will be forwarded from the protector to this port. The default value is 15780. | Optional |

In the configuration file, specify this parameter under the section [log].

For example:

[log]

output = <value>

mode = <value>

host = <value>

port = <value>

2 - Installing Resilient Package (RP) Agent on Linux or Unix and Windows

The following section describes the steps to install the RP Agent on Linux or Unix and Windows.

Installing RP Agent on Linux or Unix

The following section describes the steps to install the RP Agent on a Linux or Unix platform using the Interactive or Silent mode of installation.

By default, the RP Agent is installed in the /opt/protegrity directory. You can choose to install the RP Agent in a different directory by specifying the -d or --dir argument in the installation command. If you change the base installation directory, then the installation path will also change accordingly.

Use the --help argument if you need any help with installing the RP Agent, as shown in the following command.

./RPAgentSetup_Linux_x64_<version>.sh --help

The following snippet displays the output.

Install:

./RPAgentSetup_Linux_x64_<version>.sh -e <host[:port]...> [-d <dir>]

Options:

-h, --host Host or IP of the upstream

-u, --user ESA username. Must be a user with the Export Certificates role.

-p, --password ESA password. Must be a user with the Export Certificates role.

-t, --token ESA token. Must be a token with the Export Certificates role.

--port Port to connect to on the upstream (default: 25400)

-d, --dir Path to base directory for installation (default: /opt/protegrity)

, --nocert Disable certificates

Important: The

-tor--tokenparameter is currently not applicable.

Installing RP Agent on Linux or Unix using Interactive Mode

To install the RP Agent on a Linux or Unix platform using the Interactive mode:

Run the RP Agent installer using the following command.

./RPAgentSetup_Linux_x64_<version>.shThe prompt to enter the upstream hostname or IP address appears.

Please enter upstream host name or IP address []:Enter the ESA Host Name or IP Address.

Press ENTER.

The prompt to enter the username for downloading certificates appears.

Please enter the user name for downloading certificates []:Enter the username for downloading the certificates.

Press ENTER.

The prompt to enter the password for downloading the certificates appears.

Please enter the password for downloading certificates []:Press ENTER to install into the destination directory.

Directories are created under /opt/protegrity/rpagent by default, and the required installation files are installed in these directories.

Ensure that the ESA is up and running with the HubController service in running status to enable automatic downloading of certificates.

If you have installed the RP Agent using the

--nocertparameter, then the ESA certificates are not downloaded during the installation. To manually install the certificates to the /opt/protegrity/rpagent/data directory of the RP Agent, perform the following steps.i. Navigate to the /opt/protegrity/rpagent/bin directory and run the following command.

./GetCertificates -u <ESA User with the Export Certificates role> [-h <ESA host name or IP address>] [-p portno] [-d directory]This initiates a secure communication between the RP Agent and the ESA.

ii. Enter the password for the ESA user.

iii. Verify that the following files have been copied to the /opt/protegrity/rpagent/data directory:

- CA.pem

- cert.key

- cert.pem

- rpagent.cfg

- secret.txt

Start the RP Agent by using the following command.

/opt/protegrity/rpagent/bin/rpagentctrl startThe RP Agent is successfully installed.

Installing RP Agent on Linux or Unix using Silent Mode

You can also execute the RP Agent installer without any manual intervention, which is also known as the Silent mode of installation. The following parameters must be provided to execute the installer in the Silent mode.

| Parameter | Description |

|---|---|

-h or --host | Host or IP address of the upstream server that is providing the resilient packages. |

-u or --user | The name of the ESA user with the Export Certificates role. |

-p or --password | The password of the ESA user with the Export Certificates role. |

-t or --token | The name of the ESA token with the Export Certificates role. |

--port | Port number of the upstream server that is providing the resilient packages. The default port number is 25400. |

-d or --dir | Specifies the installation directory, which is an optional parameter. If the installation directory is not specified, then the installation path is the default directory, which is /opt/protegrity. |

Important: The

-tor--tokenparameter is currently not applicable.

At the command prompt, type the following command from the installer directory.

./RPAgentSetup_Linux_x64_<version>.sh (-u <user> -p <password>) [-h <host>] [--port <port>]

If you want to install the RP Agent in a directory other than the default directory, then you can add the -d parameter to the command to specify the directory. The following command displays a sample snippet.

./RPAgentSetup_Linux_x64_<version>.sh (-u <user> -p <password>) [-h <host>] [--port <port>] [-d <dir>]

Uninstalling the RP Agent on Linux or Unix

Navigate to the /opt/protegrity/rpagent/bin directory.

Stop the RP Agent by using the following command.

./rpagentctrl stopDelete the rpagent directory.

The RP Agent and all its components are uninstalled.

Installing RP Agent on Windows

The following section describes the steps to install the RP Agent on a Windows platform using the Windows wizard or through silent installation.

When you install the RP Agent, the system automatically sets up a directory structure with the required files in the ..\Protegrity\rpagent directory.

Installing RP Agent on Windows using the Windows Wizard

To install the RP Agent on a Windows platform using the Windows wizard:

Double-click or run the RPAgentSetup_

<OS>_<version>.exe file.The Setup Wizard appears.

Click Next.

The Upstream Connectivity Information screen appears.

Specify the following details.

Parameter Description Address Host name or IP address of the upstream server that is providing the resilient packages. Port Port number of the upstream server that is providing the resilient packages.

The default value is 25400.Certificate download user Name of the ESA user for downloading the certificates from the ESA.

This is an optional parameter.Certificate download password Password of the ESA user required for downloading the certificates from the ESA.

This is an optional parameter.Note: Ensure that the ESA is up and running with the HubController service in running status to enable automatic downloading of certificates.

Click Next.

The screen to specify the RP Agent location appears.

Browse to the directory in which you want to install the RP Agent, or retain the default location.

It is recommended to retain the default location.

Click Next.

The Ready to Install screen appears.

Click Install.

The Windows wizard installs the RP Agent on your machine.

Click Finish to close the Resilient Package Agent Setup Wizard and complete the installation.

The directories are created under the installation directory that was defined and the installation files are installed in these directories.

If you have not specified the Certificate download user name and password in the Upstream Connectivity Information screen, then the ESA certificates are not downloaded during the installation. To manually install the certificates to the \Protegrity\rpagent\data directory of the RP Agent, perform the following steps.

i. Navigate to the \Protegrity\rpagent\bin directory and run the following command.

.\GetCertificates.bat -u <ESA User with the Export Certificates role> -p <Password of the ESA user> [-h <ESA host name or IP address>] [--port portno] [-d directory]This initiates a secure communication between the RP Agent and the ESA.

ii. Verify that the following files have been copied to the \Protegrity\rpagent\data directory:

- CA.pem

- cert.key

- cert.pem

- rpagent.cfg

- secret.txt

iii. Restart the rpagent service from the Windows Task Manager after editing the file.

Installing RP Agent on Windows using Silent Mode

You can also execute the RP Agent installer without any manual intervention, which is also known as the Silent mode of installation. The following parameters must be provided to execute the installer in the Silent Mode.

| Parameter | Description |

|---|---|

--host | Host name or IP address of the upstream server that is providing the resilient packages. |

--port | Port number of the upstream server that is providing the resilient packages. The default value is 25400. |

--user | Name of the ESA user for downloading the certificates from the ESA. |

--password | Password of the ESA user required for downloading the certificates from the ESA. |

--token | Name of the ESA token with the Export Certificates role. |

--dir | Installation directory of the RP Agent, which is optional. If the installation directory is not specified, then the installation path is the default directory, which is the ..\Protegrity\rpagent directory. |

Important: The

--tokenparameter is currently not applicable.

At the command prompt, type the following command from the installation directory.

.\RPAgentSetup_<OS>_<version>.exe --host <ip address> --port <port number> --user <ESA user name> --password <ESA user password>

To install the RP Agent in a directory other than the default directory, add the --dir parameter to the command to specify the RP Agent installation directory. The following snippet displays a sample command.

.\RPAgentSetup_<OS>_<version>.exe --host <ip address> --port <port number> --user <ESA user name> --password <ESA user password> --dir <RP Agent installation directory>

Uninstalling the RP Agent on Windows

Navigate to the \Protegrity\rpagent directory.

Double-click the unins000.exe file.

The Resilient Package Agent Uninstall dialog box appears. A message appears asking you to confirm whether you want to uninstall the RP Agent.

Click Yes. The RP Agent and all its components are uninstalled.

3 - Installing Resilient Package (RP) Proxy on Linux or Unix and Windows

The following section describes the steps to install the RP Proxy on Linux or Unix and Windows.

Installing RP Proxy on Linux or Unix

The following section describes the steps to install the RP Proxy on a Linux or Unix platform using the Interactive or Silent mode of installation.

By default, the RP Proxy is installed in the /opt/protegrity directory. You can choose to install the RP Proxy in a different directory by specifying the -d or --dir argument in the installation command. If you change the base installation directory, then the installation path will also change accordingly.

Use the --help argument if you need any help with installing the RP Proxy, as shown in the following command.

./pty-rpproxy-setup-linux64-<version>.sh --help

The following snippet displays the output.

Install:

./pty-rpproxy-setup-linux64-<version>.sh (-u <user> -p <password> | -t <token> | --nocert) [-h <hostname>] [--port <port>] [-d <dir>]

Options:

-h, --host Host or IP of the upstream

--port Port to connect to on the upstream (default: 25400)

-u, --user ESA user. Must be a user with the Export Certificates role.

-p, --password ESA user's password.

-t, --token ESA token. Must be a token with the Export Certificates role.

-d, --dir Path to base directory for installation (default: /opt/protegrity)

, --nocert Disable certificates

Important: The

-tor--tokenparameter is currently not applicable.

Installing RP Proxy on Linux or Unix using Interactive Mode

To install the RP Proxy on a Linux or Unix platform using the Interactive mode:

Run the RP Proxy installer using the following command.

./pty-rpproxy-setup-linux64-<version>.shThe prompt to enter the upstream hostname or IP address appears.

Please enter upstream host name or IP address []:Type the ESA Host Name or IP Address, and press ENTER.

The prompt to enter the username for downloading certificates appears.

Please enter the user name for downloading certificates []:Type the username for downloading the certificates, and press ENTER.

The prompt to enter the password for downloading the certificates appears.

Please enter the password for downloading certificates []:Type the password, and press ENTER to install into the destination directory.

Directories are created under /opt/protegrity/rpproxy by default, and the required installation files are installed in these directories.

Ensure that the ESA is up and running with the HubController service in running status to enable automatic downloading of certificates.

If you have installed the RP Proxy using the

--nocertparameter, then the ESA certificates are not downloaded during the installation. To manually install the certificates to the /opt/protegrity/rpproxy/data directory of the RP Proxy, perform the following steps.i. Navigate to the /opt/protegrity/rpproxy/bin directory and run the following command.

./GetCertificates -u <ESA User with the Export Certificates role> [-h <ESA host name or IP address>] [-p portno] [-d directory]This initiates a secure communication between the RP Proxy and the ESA.

ii. Enter the password for the ESA user.

iii. Verify that the following files have been copied to the /opt/protegrity/rpproxy/data directory:

- CA.pem

- cert.key

- cert.pem

- secret.txt

Start the RP Proxy by using the following command.

/opt/protegrity/rpproxy/bin/rpproxyctrl startThe RP Proxy is successfully installed.

Installing RP Proxy on Linux or Unix using Silent Mode

You can also execute the RP Proxy installer without any manual intervention, which is also known as the Silent mode of installation. The following parameters must be provided to execute the installer in the Silent mode.

| Parameter | Description |

|---|---|

-h or --host | Host or IP address of the upstream server that is providing the resilient packages. |

-u or --user | The name of the ESA user with the Export Certificates role. |

-p or --password | The password of the ESA user with the Export Certificates role. |

--port | Port number of the upstream server that is providing the resilient packages. The default port number is 25400. |

-d or --dir | Specifies the installation directory, which is an optional parameter. If the installation directory is not specified, then the installation path is the default directory, which is /opt/protegrity. |

At the command prompt, type the following command from the installer directory.

./pty-rpproxy-setup-linux64-<version>.sh (-u <user> -p <password>) [-h <host>] [--port <port>]

If you want to install the RP Proxy in a directory other than the default directory, then you can add the -d parameter to the command to specify the directory. The following command displays a sample snippet.

./pty-rpproxy-setup-linux64-<version>.sh (-u <user> -p <password>) [-h <host>] [--port <port>] [-d <dir>]

Installing RP Proxy on Windows

The following section describes the steps to install the RP Proxy on a Windows platform using the Windows wizard or through silent installation.

When you install the RP Proxy, the system automatically sets up a directory structure with the required files in the ..\Protegrity\rpproxy directory.

Installing RP Proxy on Windows using the Windows Wizard

To install the RP Proxy on a Windows platform using the Windows wizard:

Double-click or run the pty-rpproxy-setup-

<OS>-<version>.exe file. The Setup Wizard appears.Click Next. The screen to specify the RP Proxy location appears.

Browse to the directory in which you want to install the RP Proxy, or retain the default location. It is recommended to retain the default location.

Click Next.

The Proxy config screen appears.

Specify the following details.

Parameter Description IP/hostname Host name or IP address of the upstream server that is providing the resilient packages. Port Port number of the upstream server that is providing the resilient packages.

The default value is 25400.User Name of the ESA user for downloading the certificates from the ESA.

This is an optional parameter.Password Password of the ESA user required for downloading the certificates from the ESA.

This is an optional parameter.Note: Ensure that the ESA is up and running with the HubController service in running status to enable automatic downloading of certificates.

Click Next.

The Ready to Install screen appears.

Click Install.

The Windows wizard installs the RP Proxy on your machine.

Click Finish to close the rpproxy Setup Wizard and complete the installation.

The directories are created under the installation directory that was defined and the installation files are installed in these directories.

If you have not specified the Certificate download user name and password in the Proxy config screen, then the ESA certificates are not downloaded during the installation. To manually install the certificates to the \Protegrity\rpproxy\data directory of the RP Proxy, perform the following steps.

i. Navigate to the \Protegrity\rpproxy\bin directory and run the following command.

.\GetCertificates.bat -u <ESA User with the Export Certificates role> -p <Password of the ESA user> [-h <ESA host name or IP address>] [--port portno] [-d directory]This initiates a secure communication between the RP Proxy and the ESA.

ii. Verify that the following files have been copied to the \Protegrity\rpproxy\data directory:

- CA.pem

- cert.key

- cert.pem

- secret.txt

iii. Restart the rpproxy service from the Windows Task Manager after editing the file.

Installing RP Proxy on Windows using Silent Mode

You can also execute the RP Proxy installer without any manual intervention, which is also known as the Silent mode of installation. The following parameters must be provided to execute the installer in the Silent Mode.

| Parameter | Description |

|---|---|

--host | Host name or IP address of the upstream server that is providing the resilient packages. |

--port | Port number of the upstream server that is providing the resilient packages. The default value is 25400. |

--user | Name of the ESA user for downloading the certificates from the ESA. |

--password | Password of the ESA user required for downloading the certificates from the ESA. |

--dir | Installation directory of the RP Proxy, which is optional. If the installation directory is not specified, then the installation path is the default directory, which is the ..\Protegrity\rpproxy directory. |

At the command prompt, type the following command from the installation directory.

.\pty-rpproxy-setup-<OS>-<version>.exe --host <ip address> --port <port number> --user <ESA user name> --password <ESA user password>

To install the RP Proxy in a directory other than the default directory, add the --dir parameter to the command to specify the RP Proxy installation directory. The following snippet displays a sample command.

.\pty-rpproxy-setup-<OS>-<version>.exe --host <ip address> --port <port number> --user <ESA user name> --password <ESA user password> --dir <RP Proxy installation directory>

Uninstalling the RP Proxy

Navigate to the \Protegrity\rpproxy\bin directory.

Double-click the uninstall.exe file.

The Uninstall rpproxy dialog box appears. A message appears asking you to confirm whether you want to uninstall the RP Proxy.

Click Yes. The RP Proxy and all its components are uninstalled.

4 - Configuration Parameters for RPP outside of the ESA

The Resilient Package Proxy (RPP) is a HTTP cache and it enables Dynamic Resilient Package Deployment to scale. The RPP:

- Leads to faster response time on protectors.

- Reduces network traffic.

The configuration parameters for RPP include log level, host and port, and service configuration. These parameters are explained in detail in the following section.

Service Logging Configuration

Log Level

The following is the log level parameter.

| Parameter | Description |

|---|---|

| PTY_LOG_LEVEL | Specify the details about the application log level during run-time. The possible values are “ERROR”, “WARN”, “INFO”, “DEBUG”, and “TRACE”. |

Host and Port

The following is the host and port where the logs are forwarded.

| Parameter | Description |

|---|---|

| PTY_LOG_TCP_HOST | Specify the Fluentbit hostname or IP address where the logs are forwarded. The default value is 127.0.0.1. |

| PTY_LOG_TCP_PORT | Specify the Fluentbit port where the logs are forwarded. The default value is 15780. |

Service Configuration

Limit Request

The following is the limit request parameter.

| Parameter | Description |

|---|---|

| PTY_LIMIT_REQUEST | Specify the limit for simultaneous requests. The number of requests determine the throughput of packages to be downloaded. The default value is 20. |

Cache TTL

The following is the cache Time to live (TTL) parameter.

| Parameter | Description |

|---|---|

| PTY_CACHE_TTL | Specify the duration to refresh the cache. When a cached TTL expires, the cache has to be revalidated or updated. The default value is 60s. |

Listener Configuration

The following are the listener configuration parameters.

| Parameter | Description |

|---|---|

| PTY_LISTENER_SSL_ENABLED | Specify whether the listener SSL is enabled. The default value is true. |

| PTY_LISTENER_SSL_CA | Specify the path of the CA certificate that is used to authenticate the ESA. For more information about certificates, refer to Certificate Management in ESA. |

| PTY_LISTENER_SSL_CERT | Specify the path of the client certificate for the protector. It is used for mutual TLS communication between the listener and the protector. |

| PTY_LISTENER_SSL_KEY | Specify the path to the client certificate key. This parameter is required if you are using a client certificate. |

| PTY_LISTENER_SSL_SECRET_FILE | Specify the path to the file that contains the secret. This secret is used to decrypt the client certificate key. |

Authentication Server Configuration

The authentication (auth) server can be another RPP or ESA. The following are the auth server configuration parameters.

| Parameter | Description |

|---|---|

| PTY_AUTH_HOST | Specify the host name or IP address of the auth server that is providing the resilient packages. |

| PTY_AUTH_PORT | Specify the port number of the auth server that is providing the resilient packages. The default value is 25400. |

| PTY_AUTH_SSL_ENABLED | Specify whether the auth SSL is enabled. The default value is true. |

| PTY_AUTH_SSL_VERIFY_HOST | Verify the auth SSL host. The default value is false. |

| PTY_AUTH_SSL_CA | Specify the path of the CA certificate that is used to authenticate the ESA. For more information about certificates, refer to Certificate Management in ESA. |

| PTY_AUTH_SSL_CERT | Specify the path of the client certificate for the protector. It is used for mutual TLS communication between the auth server and the protector. |

| PTY_AUTH_SSL_KEY | Specify the path to the client certificate key. This parameter is required if you are using a client certificate. |

| PTY_AUTH_SSL_SECRET_FILE | Specify the path to the file that contains the secret. This secret is used to decrypt the client certificate key. |

Upstream Server Configuration

The upstream server can be another RPP or ESA. The following are the auth server configuration parameters.

| Parameter | Description |

|---|---|

| PTY_UPSTREAM_HOST | Specify the host name or IP address of the upstream server that is providing the resilient packages. |

| PTY_UPSTREAM_PORT | Specify the port number of the upstream server that is providing the resilient packages. The default value is 25400. |

| PTY_UPSTREAM_SSL_ENABLED | Specify whether the upstream SSL is enabled. The default value is true. |

| PTY_UPSTREAM_SSL_VERIFY_HOST | Verify the upstream SSL host. The default value is false. |

| PTY_UPSTREAM_SSL_CA | Specify the path of the CA certificate that is used to authenticate the ESA. For more information about certificates, refer to Certificate Management in ESA. |

| PTY_UPSTREAM_SSL_CERT | Specify the path of the client certificate for the protector. It is used for mutual TLS communication between the upstream server and the protector. |

| PTY_UPSTREAM_SSL_KEY | Specify the path to the client certificate key. This parameter is required if you are using a client certificate. |

| PTY_UPSTREAM_SSL_SECRET_FILE | Specify the path to the file that contains the secret. This secret is used to decrypt the client certificate key. |

Note: The parameters listed here are for configuring RPP outside of the ESA. RPP running on the ESA does not require configuration changes and any modifications would not be maintained during ESA upgrades.

5 - Installing Log Forwarder on Linux and Windows

The following section describes the steps to install the Log Forwarder on Linux or Windows.

Installing Log Forwarder on Linux

The following section describes the steps to install the Log Forwarder on a Linux platform using the Interactive or Silent mode of installation.

By default, the Log Forwarder is installed in the /opt/protegrity directory. You can choose to install the Log Forwarder in a different directory by specifying the -d or --dir argument in the installation command. If you change the base installation directory, then the installation path will also change accordingly.

Use the --help argument if you need any help with installing the Log Forwader, as shown in the following command.

./LogforwarderSetup_Linux_x64_<version>.sh --help

The following snippet displays the output.

Install:

./LogforwarderSetup_Linux_x64_<version>.sh -e <host[:port]...> [-d <dir>]

Options:

-e, --endpoint Host of the target audit store endpoint(s).

Repeat this option to specify multiple endpoints to balance the load on audit store endpoints.

Each endpoint may specify a port. When no port is specified, 9200 is used.

-d, --dir Path to base directory for installation (default: /opt/protegrity)

Installing Log Forwarder on Linux using Interactive Mode

To preserve all the configurations while upgrading the Log Forwarder, ensure that you backup all the files present under the /opt/protegrity/logforwarder/data/config.d directory.

To install the Log Forwarder on a Linux platform using the Interactive mode:

Run the Log Forwarder installer using the following command.

./LogforwarderSetup_Linux_x64_<version>.shThe prompt to enter the Audit Store endpoint appears.

Enter the audit store endpoint (host), alternative (host:port) to use another port than the default port 9200 :Enter the Audit Store endpoint that is the Audit Store IP address and the Audit Store port number where the Log Forwarder sends the logs. The default port number is 9200. If you are using the default port, then do not specify the port number.

Press ENTER.

The added Audit Store endpoint appears on the screen.

The prompt to enter an additional Audit Store appears.

Do you want to add another audit store endpoint? [y/n]:If you want to add more than one Audit Store endpoint, then type y otherwise type n. If you need to add additional Audit Store endpoints, then repeat both Step 2 and Step 3 for each additional endpoint to add.

Type the y key to install into the destination directory.

The Log Forwarder is installed in the /opt/protegrity/logforwarder/ directory.

Start the

Protegrity Log Forwarderservice by using the following command./opt/protegrity/logforwarder/bin/logforwarderctrl startThe Log Forwarder is successfully installed.

If you want to modify the number of Audit Stores, then perform the following steps after the installation completes.

i. Edit the upstream.cfg file to add the audit stores.

ii. Navigate to the /opt/protegrity/logforwarder/data/config.d directory, and edit the upstream.cfg file as follows. The

[Node]block must be added for each new Audit Store.[NODE] Name node-1 Host 10.37.4.150 Port 9200 tls on tls.verify off Pipeline logs_pipeline [NODE] Name node-2 Host 10.37.4.158 Port 9200 tls on tls.verify off Pipeline logs_pipelineThe following parameters need to be added for a new node.

Parameter Description Name Set a name for the Audit Store. Host IP address or host name of the Audit Store. Port Set the port number.

The default port number is 9200.tls Enable or disable the TLS support. Set this parameter to on to enable the TLS support and off to disable the TLS support.

The default tls setting is on.tls.verify Force certificate validation. Set this parameter to on to enforce certificate validation and off to disable certificate verification.

The default tls.verify setting is off.Pipeline Set a filter for the Audit Store.

The default pipeline setting is logs_pipeline.iii. Use the following command to restart the

Protegrity Log Forwarderservice after editing the file./opt/protegrity/logforwarder/bin/logforwarderctrl start

Installing Log Forwarder on Linux using Silent Mode

To preserve all the configurations while upgrading the Log Forwarder, ensure that you backup all the files present under the /opt/protegrity/logforwarder/data/config.d directory.

You can also execute the Log Forwarder installer without any manual intervention, which is also known as the Silent mode of installation. The following parameters must be provided to execute the installer in the Silent mode.

| Parameter | Description |

|---|---|

-e or --endpoint | The IP address and port number of the Audit Store instance. You can add multiple Audit Store endpoints. If you add multiple Audit Store points, then you need to provide the -e or --endpoint argument for each endpoint.The default port number is 9200. If you are using the default port, then do not specify the port number. |

-d or --dir | Installation directory of the Log Forwarder, which is optional. If the installation directory is not specified, then the installation path is the default directory, which is the /opt/protegrity directory. |

At the command prompt, type the following command from the installer directory.

./LogforwarderSetup_Linux_x64_<version>.sh -e <ip address:port number> [-e <ip address:port number>]

If you want to install the Log Forwarder in a directory other than the default directory, add the -d or --dir argument to the command to specify the Log Forwarder installation directory

The following snippet displays a sample command.

./LogforwarderSetup_Linux_x64_<version>.sh -e <ip address:port number> [-e <ip address:port number>] -d <Log Forwarder installation directory>

Uninstalling the Log Forwarder on Linux

Navigate to the /opt/protegrity/logforwarder/bin directory.

Stop the Log Forwarder by using the following command.

./logforwarderctrl stopDelete the logforwarder directory.

The Log Forwarder and all its components are uninstalled.

Installing Log Forwarder on Windows

The following section describes the steps to install the Log Forwarder on a Windows platform using the Windows wizard or through silent installation.

When you install the Log Forwarder, the system automatically sets up a directory structure with the required files in the ..\Protegrity\logforwarder directory.

Installing Log Forwarder on Windows using the Windows Wizard

To install the Log Forwarder on a Windows platform using the Windows wizard:

Double-click or run the LogforwarderSetup_

<OS>_<version>.exe file.The Setup Wizard appears.

Click Next.

The Audit Store Connectivity Information screen appears.

Select the number of audit stores that are needed, and then click Next.

The screen to specify the Audit Store location appears.

Enter the Audit Store endpoint (IP address:port number).

The default port number is 9200.

Click Next.

The Select Destination Location screen appears.

Browse to the directory in which you want to install the Log Forwarder, or retain the default location.

It is recommended to retain the default location.

Click Next.

The Ready to Install screen appears.

Click Install.

The Windows wizard installs the Log Forwarder on your machine.

Click Finish to close the Log Forwarder Setup Wizard and complete the installation. The directories are created under the installation directory that was defined and the installation files are installed in these directories.

If you want to modify the number of Audit Stores or if you have selected an incorrect number of Audit Stores in step 3, then perform the following steps after the installation completes.

i. Edit the upstream.cfg file to add the audit stores.

ii. Navigate to the ..\Protegrity\logforwarder\data\config.d directory, and edit the upstream.cfg file as follows. The

[Node]block must be added for each new Audit Store.[NODE] Name node-1 Host 10.37.4.150 Port 9200 tls on tls.verify off Pipeline logs_pipeline [NODE] Name node-2 Host 10.37.4.158 Port 9200 tls on tls.verify off Pipeline logs_pipelineThe following parameters need to be added for a new node.

Parameter Description Name Set a name for the Audit Store. Host IP address or host name of the Audit Store. Port Set the port number.

The default port number is 9200.tls Enable or disable the TLS support. Set this parameter to on to enable the TLS support and off to disable the TLS support.

The default tls setting is on.tls.verify Force certificate validation. Set this parameter to on to enforce certificate validation and off to disable certificate verification.

The default tls.verify setting is off.Pipeline Set a filter for the Audit Store.

The default pipeline setting is logs_pipeline.iii. Restart the Log Forwarder service from the Windows Task Manager after editing the file.

Installing Log Forwarder on Windows using Silent Mode

You can also execute the Log Forwarder installer without any manual intervention, which is also known as the Silent mode of installation. The following parameters must be provided to execute the installer in the Silent Mode.

| Parameter | Description |

|---|---|

-endpoint1, -endpoint2, -endpoint3 | Audit Store IP address and the Port number where the Log Forwarder sends the logs. The default port number is 9200. The parameters -endpoint2 and -endpoint3 are optional. |

-dir | Installation directory of the Log Forwarder, which is optional. If the installation directory is not specified, then the installation path is the default directory, which is the ..\Protegrity\logforwarder directory. |

At the command prompt, type the following command from the installation directory.

.\LogforwarderSetup_<OS>_<version>.exe -endpoint1 <ip address:port number> [-endpoint2 <ip address:port number>] [-endpoint3 <ip address and port number>]

To install the Log Forwarder in a directory other than the default directory, add the -dir parameter to the command to specify the Log Forwarder installation directory. The following snippet displays a sample command.

.\LogforwarderSetup_<OS>_<version>.exe -endpoint1 <ip address:port number> [-endpoint2 <ip address:port number>] [-endpoint3 <ip address and port number>] -dir <Log Forwarder installation directory>

Uninstalling the Log Forwarder

Navigate to the \Protegrity\logforwarder directory.

Double-click the unins000.exe file.

The Log Forwarder Uninstall dialog box appears. A message appears asking you to confirm whether you want to uninstall the Log Forwarder.

Click Yes.

The Log Forwarder and all its components are uninstalled.

5.1 - Configuring the disk space on the Log Forwarder

If the incoming logs are cached faster than they are sent to Insight, then a back pressure arises.

The following formula can be used to calculate the disk space on the Log Forwarder. The formula requires the estimated audit rate and time to sustain the audit rate, without logs being sent to Insight. Modify the values in this example as required. The default value of the disk space is 256 MB.

Disk Space in Mega bytes = (Audit Rate X Time in Seconds X 5.9 ) / 1024.

- Audit Rate = Number of policy audits generated per second

- Time in Seconds = Time duration for which the disk can sustain the audit rate without the logs being sent to Insight.

If the default or the configured value of the storage.total_limit_size setting is reached, then the Log Forwarder discards the oldest audits to create disk space for new audits.

Perform the following steps to configure the storage.total_limit_size setting in the out.conf file on the protector machine.

Log in and open a CLI on the protector machine.

Navigate to the config.d directory using the following command.

cd /opt/protegrity/logforwarder/data/config.dProtectors v9.2.0.0 and later use the /opt/protegrity/logforwarder/data/config.d path. Use the /opt/protegrity/fluent-bit/data/config.d path for protectors v9.1.0.0 and earlier.

Back up the existing out.conf file using the following command.

cp out.conf out.conf_backupOpen the out.conf file using a text editor.

Update the value of storage.total_limit_size setting in the output blocks. The default value of the storage.total_limit_size is 256 MB. The following snippet shows the extract of the code.

[OUTPUT] Name opensearch Match logdata Retry_Limit False Index pty_insight_audit Type _doc Time_Key ingest_time_utc Upstream /opt/protegrity/logforwarder/data/config.d/upstream.cfg storage.total\_limit\_size 256M [OUTPUT] Name opensearch Match flulog Retry_Limit 1 Index pty_insight_audit Type _doc Time_Key ingest_time_utc Upstream /opt/protegrity/logforwarder/data/config.d/upstream.cfg storage.total\_limit\_size 256M [OUTPUT] Name opensearch Match errorlog Retry_Limit 1 Index pty_insight_audit Type _doc Time_Key ingest_time_utc Upstream /opt/protegrity/logforwarder/data/config.d/upstream.cfg storage.total\_limit\_size 256MProtectors v9.2.0.0 and later use the /opt/protegrity/logforwarder/data/config.d path. Use the /opt/protegrity/fluent-bit/data/config.d path for protectors v9.1.0.0 and earlier.

Save and close the file.

Restart the Log Forwarder on the protector using the following commands.

/opt/protegrity/logforwarder/bin/logforwarderctrl stop /opt/protegrity/logforwarder/bin/logforwarderctrl startProtectors v9.2.0.0 and later use the /opt/protegrity/logforwarder/bin path. Use the /opt/protegrity/fluent-bit/bin path for protectors v9.1.0.0 and earlier.

If required, complete the configurations on the remaining protector machines.

6 - Memory Utilization in Resilient Protectors

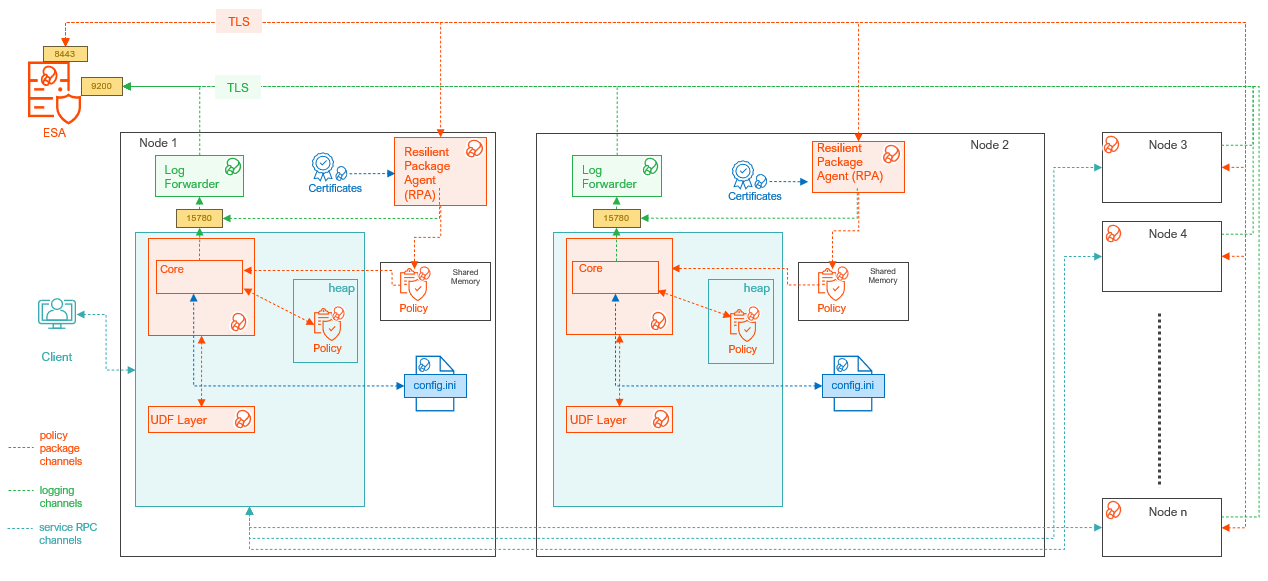

The 10.0.x Protectors support a Resilient Deployment architecture, which uses dynamic memory allocation to store the resilient packages downloaded from the ESA or the upstream server. In comparison, the protectors prior to version 10.0.x support a PEP Server based architecture, which uses fixed memory allocation to store the policies downloaded from the ESA. As a result, the Resilient Deployment architecture offers a memory efficient solution as compared to the PEP Server architecture.

The new Resilient Deployment architecture offers the following advantages over the PEP Server architecture:

- Scalability:

- Easy to scale the protectors by increasing the hardware.

- No limits on the policy size.

- Lower costs: Less network and memory requirements thereby reducing the hardware costs.

- Secure: Enables configuration to only store policy in process memory.

- Improved performance: Takes less time in distributing the resilient packages.

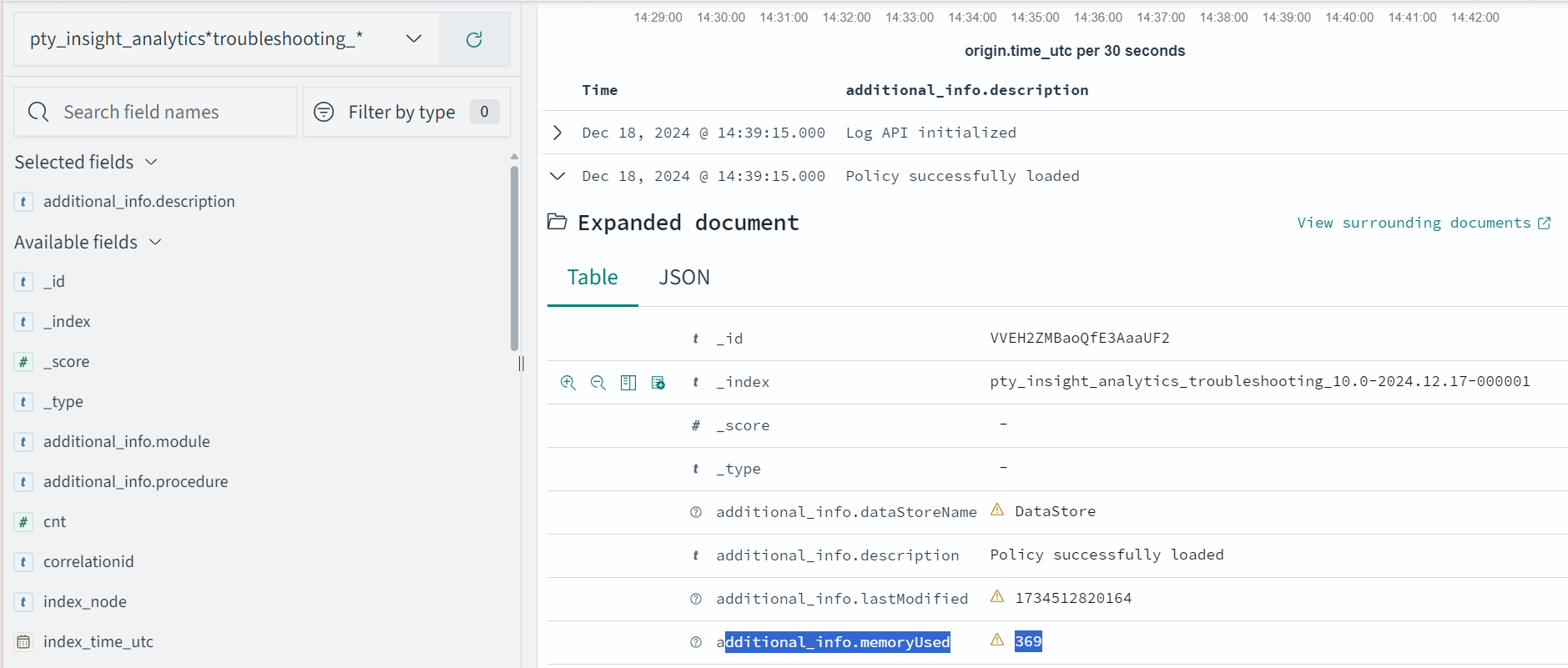

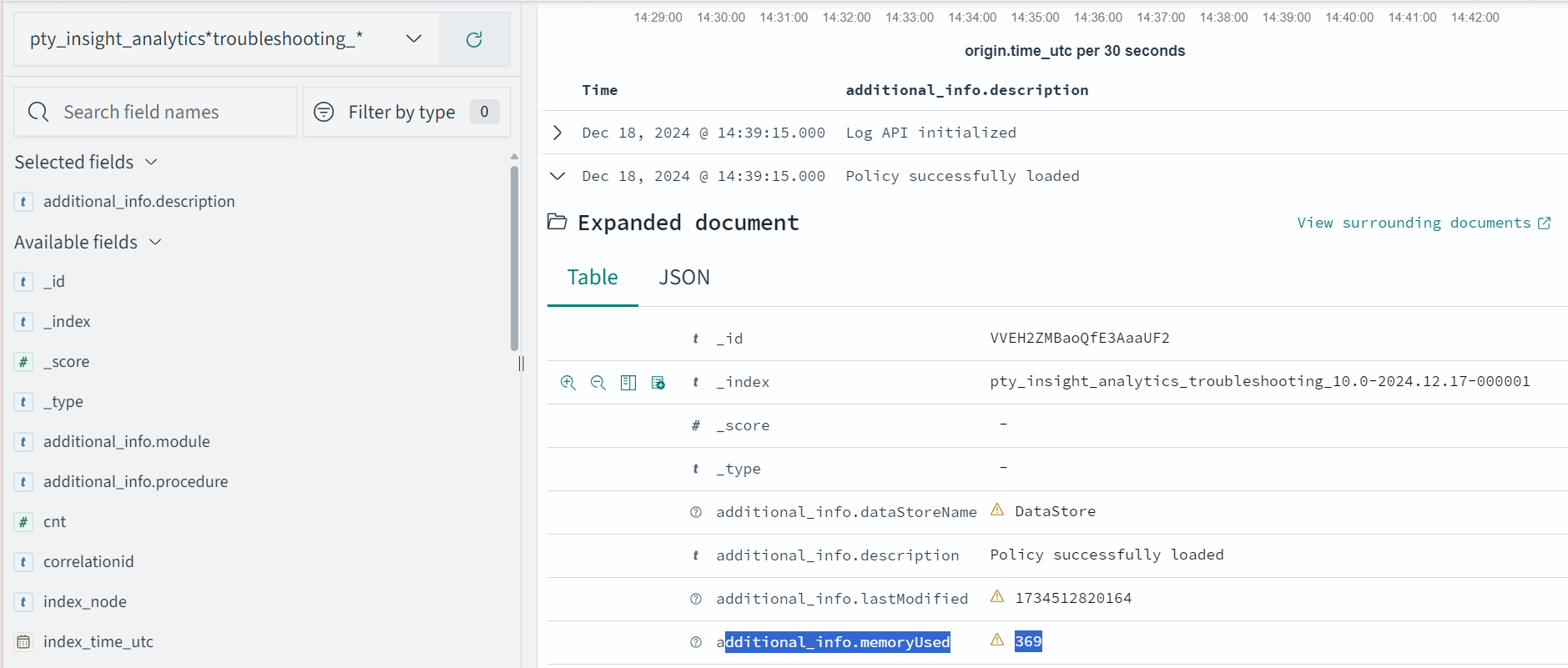

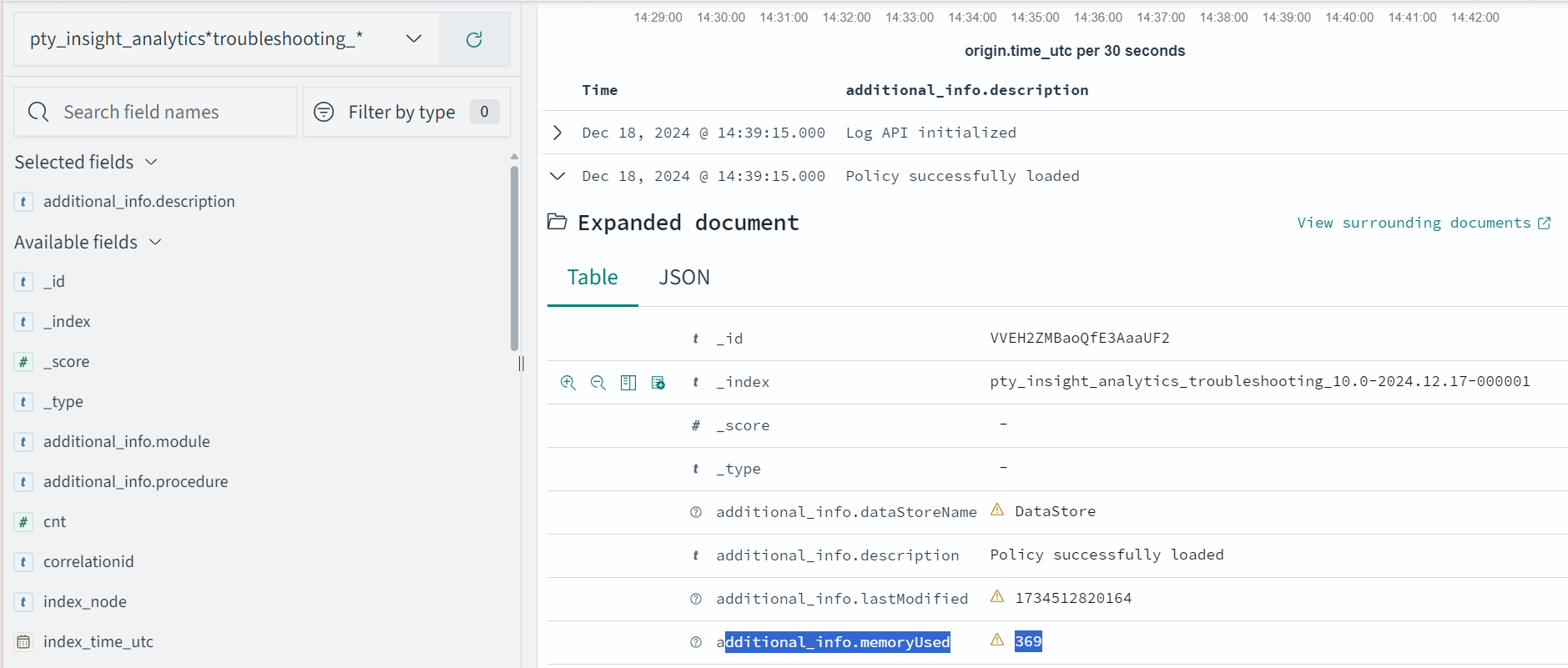

Memory Consumption during Policy Enforcement

The following example shows how much memory is consumed by an 10.0.x Protector when a policy is successfully loaded in heap memory. An application log is generated specifying the memory used.

{"additional_info":{"description":"Policy successfully loaded","memoryUsed":"13"}}

In this example, the memory used is 13 MB. This application log is then sent to Insight, where you can view the memory utilization for all the protectors.

For more information about Protegrity Dashboards, refer to the section Viewing the dashboards.

For more information about application logs, refer to the section Application Logs.

The following table shows how the number of data elements in combination with number of users affect the memory consumption.

| Policy Size | Sample Number of Users | Sample Number of Data Elements | Resilient Deployment | PEP Server Deployment |

|---|---|---|---|---|

| Small | 100 | 5 data elements. For example:

|

|

|

| Medium | 1000 | 15 data elements. For example:

|

|

|

| Large | 70,000 | 30 data elements. For example:

|

|

|

The average length of user names is 16 characters.

For more information about capacity planning, contact Protegrity Support.

7 - Verification of Signed Protector Build

Verifying the signed protector build on the Linux platform

The digital signature of the signed protector build on the Linux platform, using the GNU Privacy Guard (GPG) encryption key, must be verified.

To verify the digital signature on the Linux platform:

Download the protector to any location on the machine where you want to install the protector.

To get the GPG encryption key from the ESA, which is located in the /opt/verification_keys/ directory, run the following command on the protector machine.

sshpass -p <ESA root password> scp -r root@<ESA ip>:/opt/verification_keys/10.0.gpg <location of 10.0.gpg file>Import the key to the GPG utility using the following command.

gpg --import <location for 10.0.gpg file>Extract the protector build using the following command.

tar -xvf <protector build (.tgz)>Verify the signature using the following command.

gpg --verify signatures/<signature of protector build (.sig)> <protector build (.tgz)>Extract the protector build again using the following command to obtain the various components to install the protector.

tar -xvf <protector build (.tgz)>

Verifying the signed protector build on the Windows platform

The digital signature of the signed protector build on the Windows platform, using the GNU Privacy Guard (GPG), must be verified.

To verify the digital signature on the Windows platform:

Download the protector to any location on the machine where you want to install the protector.

From 10.0 ESA, get the gpg encryption key which is located in

/opt/verification_keysfolder to the protector machine.Run the following command on the protector machine to transfer the file in the current directory.

scp -r root@<ESA_IP>:/opt/verification_keys/10.0.gpg .Note: Alternatively, login to the ESA using ssh. Navigate to the

/opt/verification_keys/folder and download the10.0.gpgfile manually. Then upload it to the protector machine.Install

gpg4winon your Windows protector machine.

a. Navigate to https://files.gpg4win.org/.

b Download the latest https://files.gpg4win.org/gpg4win-4.4.1.exe installer.

c. Run the downloaded installer.

d. Verify the installed version using the following command.gpg --version

The gpg version gets displayed. For example; gpg (GnuPG) 2.4.8.Import the key to the GPG utility using the following command.

gpg --import <location for 10.0.gpg file>Extract the protector build file.

a. Right-click the protector build file and click Extract All.

b. Click Extract.Verify the signature using the following command.

gpg --verify <full path of signature file (.sig)> <full path of protector build (.zip)>The signature file “.sig” is present in the signatures folder.

Extract the protector build file to get other components.

a. Navigate to the protector build folder.

b. Right-cick the protector build file and click Extract All.

c. Click Extract.

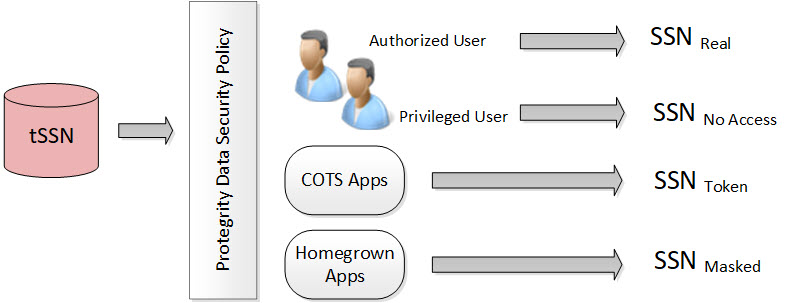

8 - Protection Method Reference

Protegrity products can protect sensitive data with the following protection methods:

The following table describes the protection methods for structured and unstructured data security policy types.

Table: Protection Methods by Data Security Policy Type

| Protection Method | Description | Structured | Unstructured |

|---|---|---|---|

| Tokenization (all types) | Tokenization is the process of replacing sensitive data with tokens that has no worth to someone who gains unauthorized access to the data. | √ | |

| Format Preserving Encryption (FPE) | A data encryption technique that preserves the ciphertext format using FF1 mode of operation for AES-256 block cipher algorithm. | √ | |

| AES-128 | A block cipher with 128 bit encryption keys. | √ | √ |

| AES-256 | A block cipher with 256 bit encryption keys. | √ | √ |

| CUSP AES-128, CUSP AES-256 | A modified block algorithm mainly used in environments where an IBM mainframe is present. | √ | |

| No Encryption | It does not protect data but lets the sensitive data be stored in clear. Protection comes from access control, monitoring, and masking. | √ | |

| Monitoring | It does not protect data but is used for monitoring and auditing. | √ | |

| Masking | It does not protect the data but applies masking to the sensitive data. | √ | |

| Hashing (HMAC-SHA256) | A Keyed-Hash Message Authentication Code. It is used only for protection of data using hashing. Since hashing is a one-way function, the original data cannot be restored. | √ |

The following table describes the deprecated protection methods for structured and unstructured data security policy types.

Table: Deprecated Protection Methods by Data Security Policy Type

| Protection Method | Description | Structured | Unstructured |

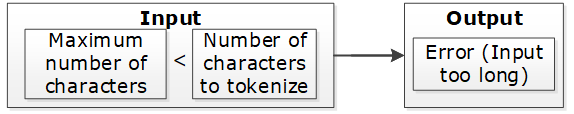

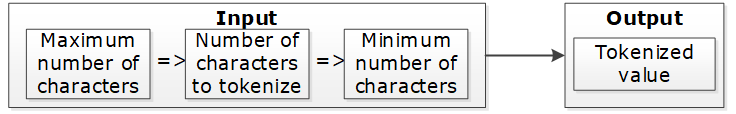

|---|---|---|---|

| 3DES | A block cipher with 168 bit encryption keys. | √ | √ |

| CUSP 3DES | A modified block algorithm mainly used in environments where an IBM mainframe is present. | √ | |

| Hashing (HMAC-SHA1) | A Keyed-Hash Message Authentication Code. It is used only for protection of data using hashing. Since hashing is a one-way function, the original data cannot be restored. | √ |

Protegrity protection methods, including tokenization, encryption, monitoring, masking, and hashing, support various input formats. This enables you to protect sensitive data using these methods. Some examples of input formats are as follows:

- Social Security Numbers (SSNs)

- Credit Card Numbers (CCNs)

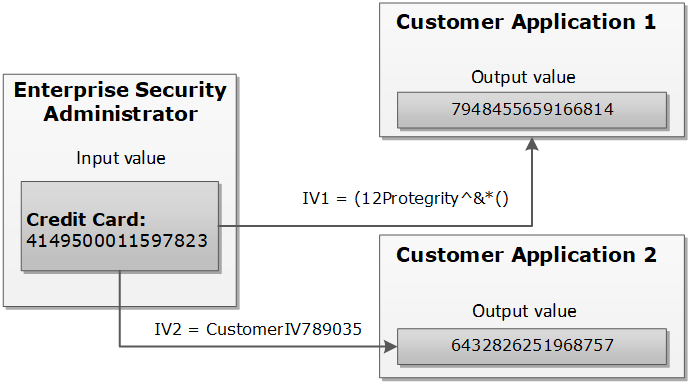

- Electronic Personal Health Information (ePHI), which is controlled by Health Insurance Portability and Accountability Act (HIPPA) and Health Information Technology for Economic and Clinical Health (HITECH)

- Personally identifiable information (PII)

The following table shows different types of sensitive data that can be protected using different protection methods. It demonstrates input values and their corresponding protected values.

Table: Examples of Protected Data

| # | Type of Data | Input | Protected Value | Comments on Protected Value |

|---|---|---|---|---|

| 1 | SSN delimiters | 075-67-2278 | 287-38-2567 | Numeric token, delimiters in input |

| 2 | Credit Card | 5511 3092 3993 4975 | 8278 2789 2990 2789 | Numeric token |

| 3 | Credit Card | 5511 3092 3993 4975 | 8278 2789 2990 4975 | Numeric token, last 4 digits in clear |

| 4 | Credit Card | 5511309239934975 | 551130########## | No Encryption with mask exposing the first 6 digits. A mask is applied by the data security policy when a user tries to unprotect the protected value. |

| 5 | Credit Card | 5511309239934975 | 1437623387940746 | Credit Card token with invalid Luhn digit property. Tokenized value has invalid Luhn checksum. |

| 6 | Credit Card | 5511309239934975 | 8313123036143103 | Credit Card token with invalid card type identification. The first digit in tokenized value is not a valid card type. |

| 7 | Credit Card | 5511309239934975 | 1854817J97347370 | Credit Card token with alphabetic indicator on the 8th position. |

| 8 | Phone/Fax number | 1 888 397 8192 | 9 853 888 8435 | Numeric token |

| 9 | Medical ID | 29M2009ID | iA6wx0Mw1 | Alpha-Numeric token |

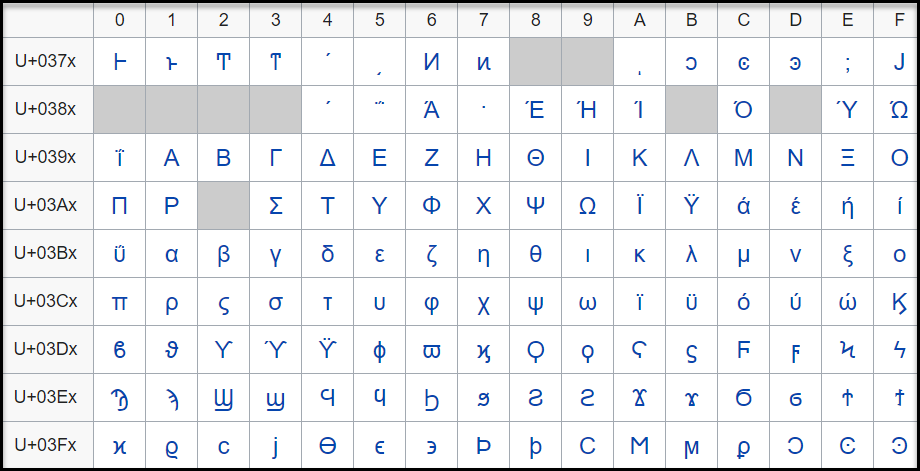

| 10 | Date and Time | 2012.12.31 12:23:34 | 1816.07.22 14:31:51 | Datetime token, date and time parts are tokenized |

| 11 | Proper names | Alfred Hitchcock | uRLzbg cvofdBFJh | Alpha token |

| 12 | Short names | Al | kKX | Alpha token non-length preserving |

| 13 | Abbreviations | CXR | GTP | Upper-case Alpha token |

| 14 | License plates | 583-LBE | 44J-KLT | Upper Alpha-Numeric token |

| 15 | Addresses | 5 High Ridge Park, Stamford | 5 hcY2 k9rLp Z0uA, KunZYNEM | Alpha-Numeric token. Punctuation marks and spaces are treated as delimiters. |

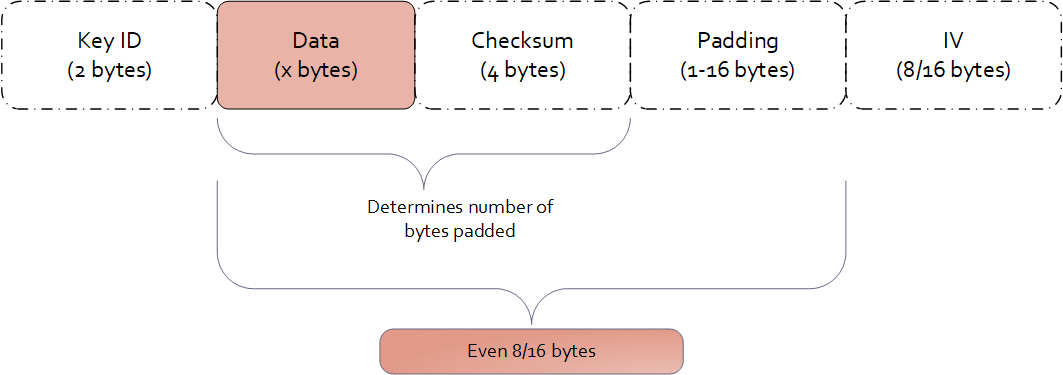

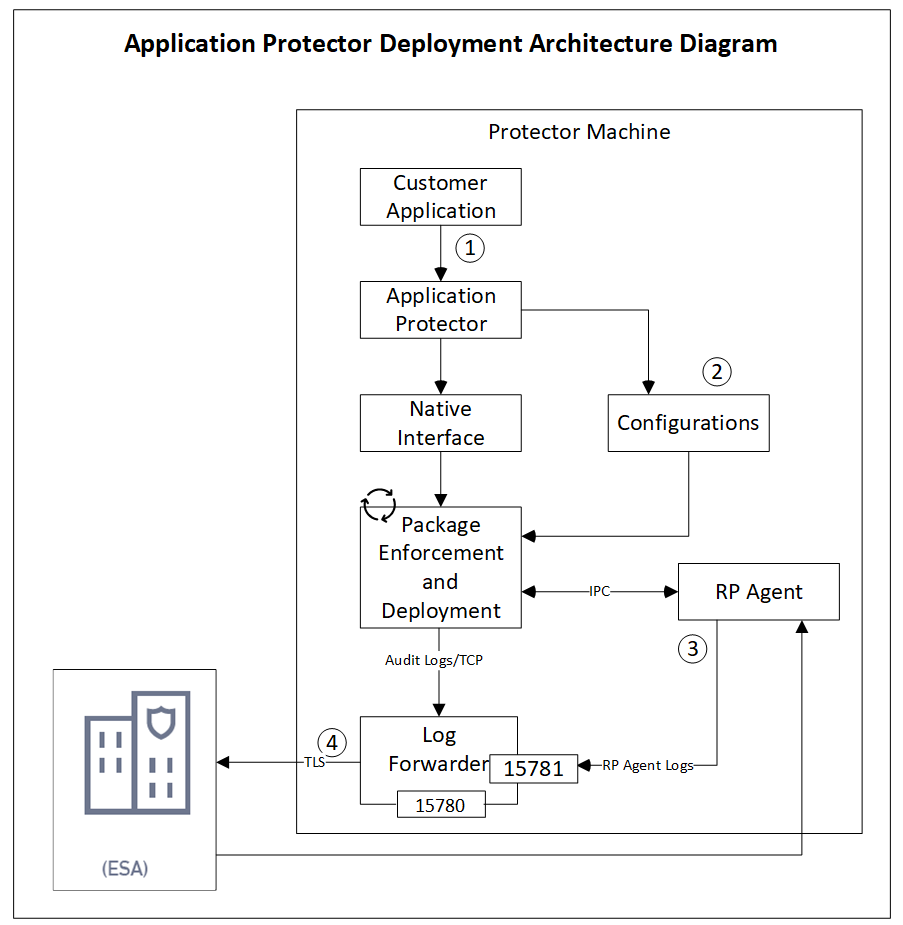

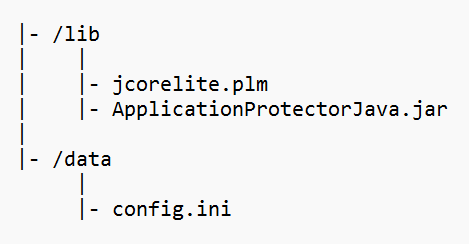

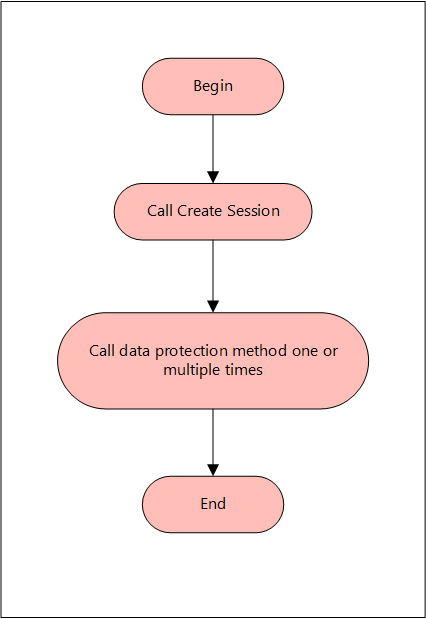

| 16 | E-mail Address | Protegrity1234@gmail.com | tzJkXJDRwjcNLU@02ici.com | Alpha-Numeric token, delimiters in input, last 3 characters in clear |